Testing Nvidia's RTX Mega Geometry tech — VRAM-reducing tech a leap forward for path-traced rendering

Reducing VRAM and eliminating visual artifacts? What's not to like?

We took Nvidia's RTX Mega Geometry technology through a series of tests in Alan Wake 2 and the RTX Bonsai Diorama Demo to see how this tech reduces VRAM consumption and eliminates visual artifacts, thus helping pave the way to photorealistic real-time graphics.

In 2018, NVIDIA announced its GeForce RTX line of graphics cards based on the Turing architecture, which would allow for hardware-accelerated real-time ray tracing. In November of that year, Battlefield V became the first title to support real-time ray tracing using the Microsoft DirectX Raytracing API (DXR). The game only supported one ray-traced effect – ray-traced reflections.

In 2019, Control launched with support for multiple ray-traced effects – RT reflections, RT transparent reflections, indirect diffuse lighting, RT contact shadows, and RT debris.

Later, we would see full ray tracing – or path tracing – in games like Quake II RTX, Cyberpunk 2077, and more. In contrast to the hybrid ray tracing solution used in some games, path tracing accurately simulates light in a scene. It does this by sampling a wide range of potential light paths a single ray can follow. This method is also used by the film industry to generate photorealistic visuals in movies.
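To make the idea of sampling light paths concrete, here is a minimal Monte Carlo sketch in Python. It is not code from any game or from NVIDIA. It only estimates how much light a flat surface receives from a uniformly bright sky by averaging randomly chosen directions, which is the same kind of estimate a path tracer makes at each bounce. The function name `estimate_sky_irradiance`, the uniform sky, and the sample counts are assumptions made for illustration.

```python
import math
import random


def estimate_sky_irradiance(num_samples, sky_radiance=1.0, seed=0):
    """Monte Carlo estimate of the light arriving at an upward-facing surface.

    Hypothetical toy scene: a sky of constant brightness and nothing else.
    """
    rng = random.Random(seed)
    # Directions are picked uniformly over the hemisphere, so every
    # direction has probability density 1 / (2 * pi).
    pdf = 1.0 / (2.0 * math.pi)
    total = 0.0
    for _ in range(num_samples):
        # For uniformly distributed hemisphere directions, the cosine of
        # the angle to the surface normal is uniform in [0, 1).
        cos_theta = rng.random()
        # Each sample contributes radiance * cosine, divided by its density.
        total += sky_radiance * cos_theta / pdf
    return total / num_samples


if __name__ == "__main__":
    for n in (16, 1024, 65536):
        print(n, estimate_sky_irradiance(n))
```

The exact answer for this toy setup is π times the sky radiance, and the estimate settles toward it as the sample count grows. The noise at low sample counts is why real-time path tracers pair a small number of samples per pixel with a denoiser.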

Path tracing is present in a number of modern games, where it can significantly enhance lighting realism depending on the implementation. In addition to this enhanced lighting, there have also been technological advancements in geometric complexity and density for games. Unreal Engine 5’s Nanite is a virtualized geometry system that can render high-quality and high-density assets in real time with pixel-scale detail and high object counts. Other game engines have similar technologies, such as Micropolygon Geometry in the Anvil Engine.

These advancements in geometric detail require new techniques to ray trace full-quality geometry. That’s where Nvidia's RTX Mega Geometry technology comes in.

What is RTX Mega Geometry?

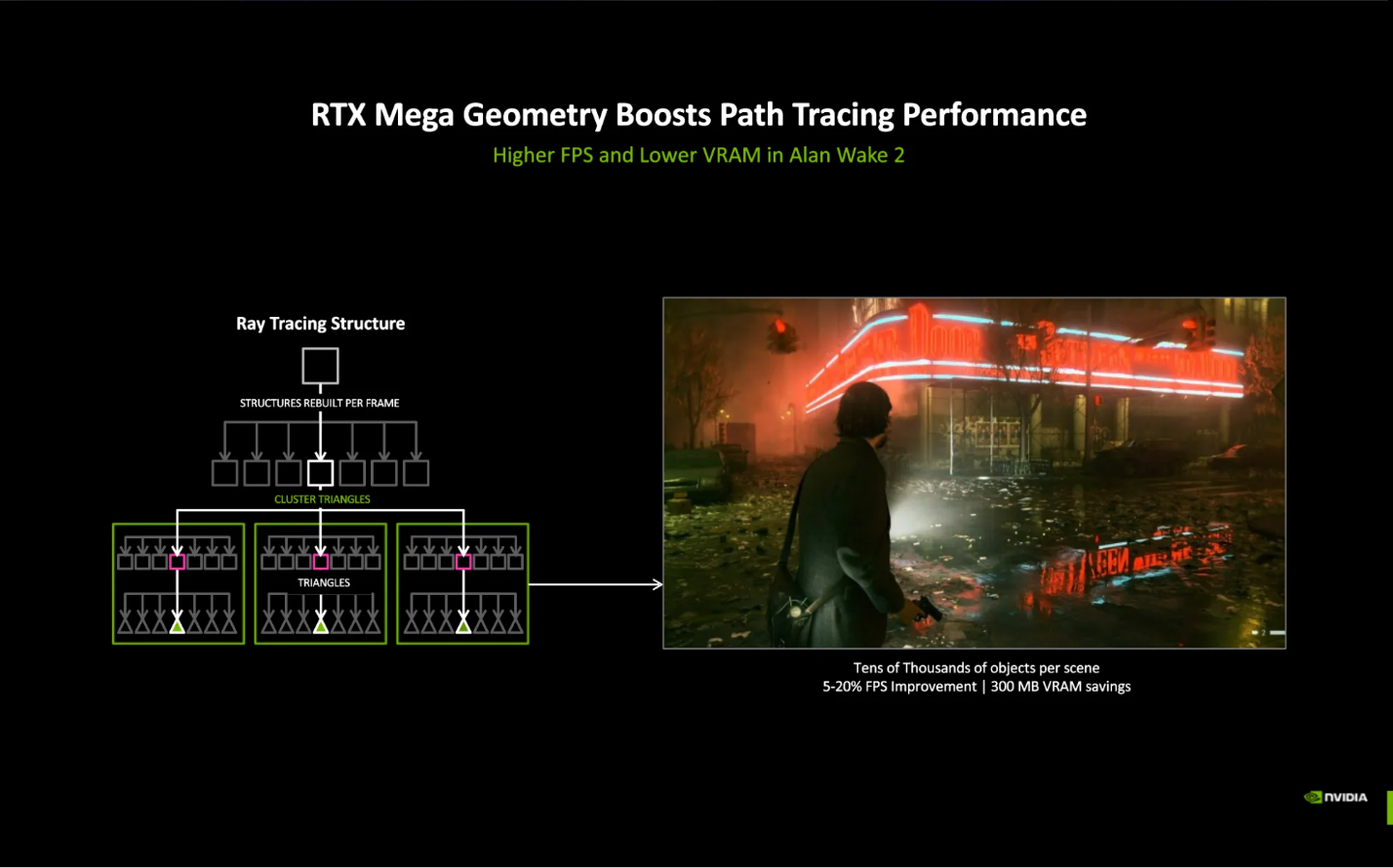

The Microsoft DXR API was designed years ago, before games reached the level of geometric complexity they have today. In DXR, geometry is represented using a Bounding Volume Hierarchy (BVH), which can become expensive to rebuild in dynamic scenes with dense geometry and countless complex animated objects. Fully ray tracing such geometry may require frequent BVH updates, placing significant strain on performance. As a result, developers often rely on lower-quality proxy meshes for ray-traced effects. These simplified representations contain only a fraction of the original detail, leading to artifacts such as incorrect self-occlusion and low-fidelity shadows and reflections.
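To show why rebuild cost grows with triangle count, here is a minimal sketch of a BVH built by splitting triangles at the median along the longest axis. It is an illustration only, not how DXR drivers or GPUs actually build acceleration structures. The names `Node`, `build_bvh`, and `count_nodes`, and the leaf size of 4, are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]
Triangle = Tuple[Vec3, Vec3, Vec3]


@dataclass
class Node:
    lo: Vec3                                    # minimum corner of the box
    hi: Vec3                                    # maximum corner of the box
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    triangles: Optional[List[Triangle]] = None  # only set on leaf nodes


def bounds(tris: List[Triangle]) -> Tuple[Vec3, Vec3]:
    """Axis-aligned box enclosing every vertex of every triangle."""
    pts = [p for tri in tris for p in tri]
    lo = tuple(min(p[i] for p in pts) for i in range(3))
    hi = tuple(max(p[i] for p in pts) for i in range(3))
    return lo, hi


def centroid(tri: Triangle, axis: int) -> float:
    return sum(p[axis] for p in tri) / 3.0


def build_bvh(tris: List[Triangle], leaf_size: int = 4) -> Node:
    """Recursively split the triangle list until leaves are small enough."""
    lo, hi = bounds(tris)
    if len(tris) <= leaf_size:
        return Node(lo, hi, triangles=list(tris))
    # Split along the axis where the box is longest.
    axis = max(range(3), key=lambda i: hi[i] - lo[i])
    ordered = sorted(tris, key=lambda t: centroid(t, axis))
    mid = len(ordered) // 2
    return Node(lo, hi,
                left=build_bvh(ordered[:mid], leaf_size),
                right=build_bvh(ordered[mid:], leaf_size))


def count_nodes(node: Optional[Node]) -> int:
    if node is None:
        return 0
    return 1 + count_nodes(node.left) + count_nodes(node.right)
```

Every triangle is visited and sorted at each level of the tree, so when vertices move the boxes go stale and that work has to be redone or patched up. With millions of animated triangles, this is the cost that pushes developers toward proxy meshes.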


RTX Mega Geometry is a rendering technology that can significantly increase geometric detail in ray-traced games, allowing full-fidelity geometry to be traced without traditional trade-offs. RTX Mega Geometry adds an optional Cluster Acceleration Structure (CLAS), which generates batches of up to 256 triangles and is designed to be GPU-driven. This not only dramatically speeds up the process of rebuilding the BVH, but it also reduces the CPU overhead associated with BVH management.
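The batching idea can be pictured with a few lines of Python. This sketch only shows the arithmetic of splitting a mesh into groups of at most 256 triangles. The real CLAS build runs on the GPU through NVIDIA's APIs and groups triangles that sit close together, whereas this example simply takes them in index order. `make_clusters` and `MAX_CLUSTER_TRIANGLES` are names invented for the example.

```python
MAX_CLUSTER_TRIANGLES = 256  # per-cluster upper bound quoted in the article


def make_clusters(triangle_indices, max_size=MAX_CLUSTER_TRIANGLES):
    """Split a list of triangle indices into consecutive batches.

    Hypothetical helper: a real clusterizer would group spatially
    adjacent triangles rather than slicing in index order.
    """
    if max_size <= 0:
        raise ValueError("max_size must be positive")
    return [triangle_indices[i:i + max_size]
            for i in range(0, len(triangle_indices), max_size)]


if __name__ == "__main__":
    clusters = make_clusters(list(range(1000)))
    print(len(clusters), [len(c) for c in clusters])
```

Because each cluster is a self-contained unit, an animated or streamed mesh only needs the affected clusters rebuilt, rather than one large structure covering the whole object.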

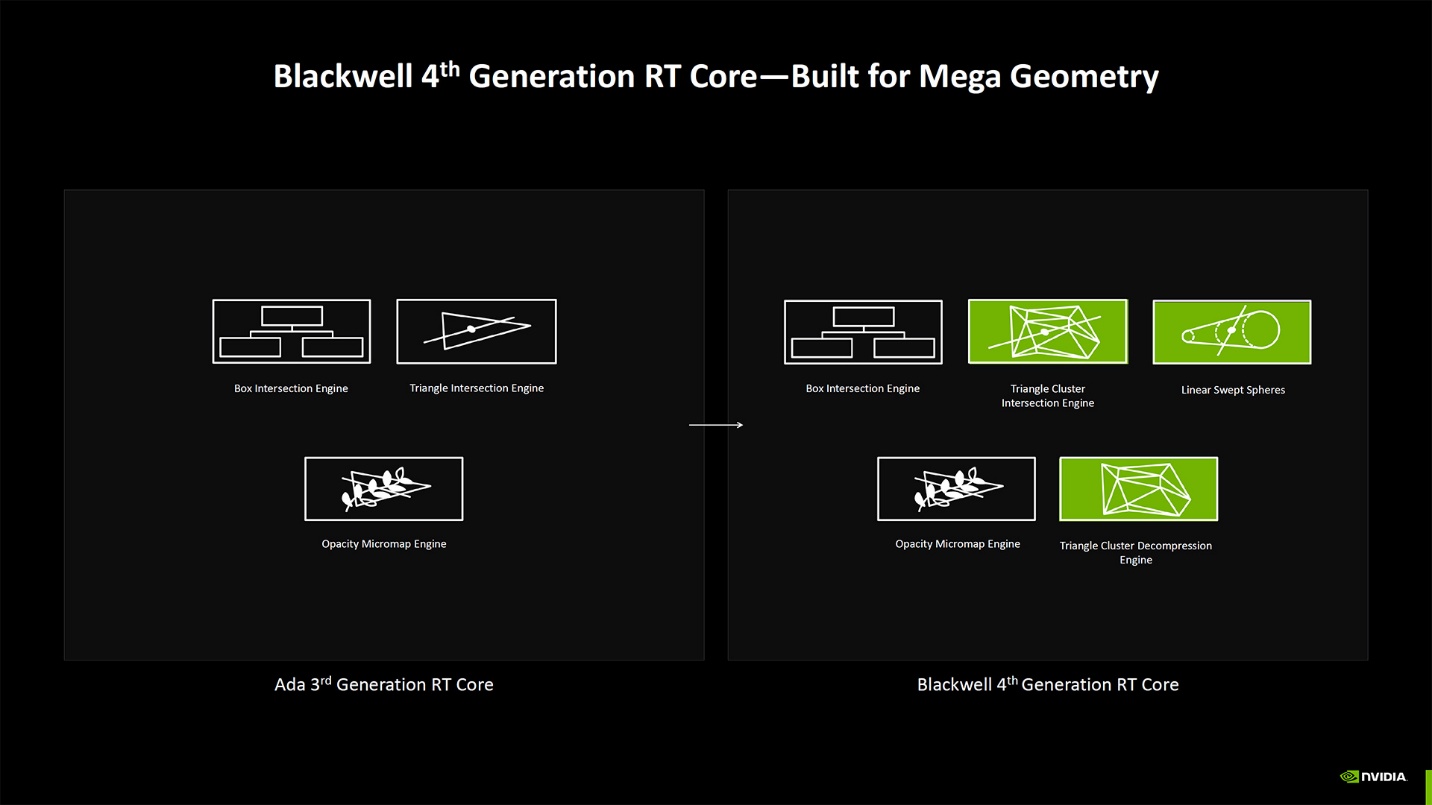

While Mega Geometry is supported on GPUs as far back as the RTX 20-series, the RTX 50-series has purpose-built hardware for the technology. The fourth-generation RT Cores in Blackwell GPUs add two new engines: a triangle cluster intersection engine and a triangle cluster compression engine. Together, they double the ray-triangle intersection rate compared to third-generation RT Cores, while also reducing VRAM usage by several hundred megabytes when ray tracing dense geometry.

The benefits of this technology vary depending on how it’s used. When applied to existing assets, it can improve performance and reduce VRAM consumption. Alternatively, it can eliminate the need for the compromises mentioned earlier – such as proxy meshes – enabling significantly higher-fidelity results without the associated visual artifacts. The latter use case comes at the cost of performance.

RTX Mega Geometry in Alan Wake 2

Alan Wake 2, released in October 2023, showcases a range of modern rendering technologies. It uses mesh shading to handle highly detailed, high-density geometry and supports path tracing on PC, making it an ideal candidate for Mega Geometry.

Mega Geometry was added to the game in title update 1.2.8 in early 2025, and Remedy opted to use it on existing assets to optimize performance and VRAM usage, as opposed to further increasing geometric complexity. Testing on an RTX 4090 back in early 2025, I compared build 1.2.8 to the previous 1.2.7 release, which did not use Mega Geometry, and observed VRAM savings of about 1 GB along with a 13% performance increase at both native 4K and 4K with DLSS Quality, with max settings and path tracing enabled. These numbers are roughly in line with the figures shared by NVIDIA at GDC 2026.

RTX Bonsai Diorama Demo

The RTX Bonsai Diorama Demo released by NVIDIA was developed in the NVIDIA RTX Branch of Unreal Engine (NvRTX) 5.6, and showcases RTX Mega Geometry, path tracing, RTX Dynamic Illumination (RTXDI), DLSS Super Resolution, DLSS Ray Reconstruction, and DLSS Frame Generation. The demo's main focus is path tracing full-detail Nanite meshes in real time.

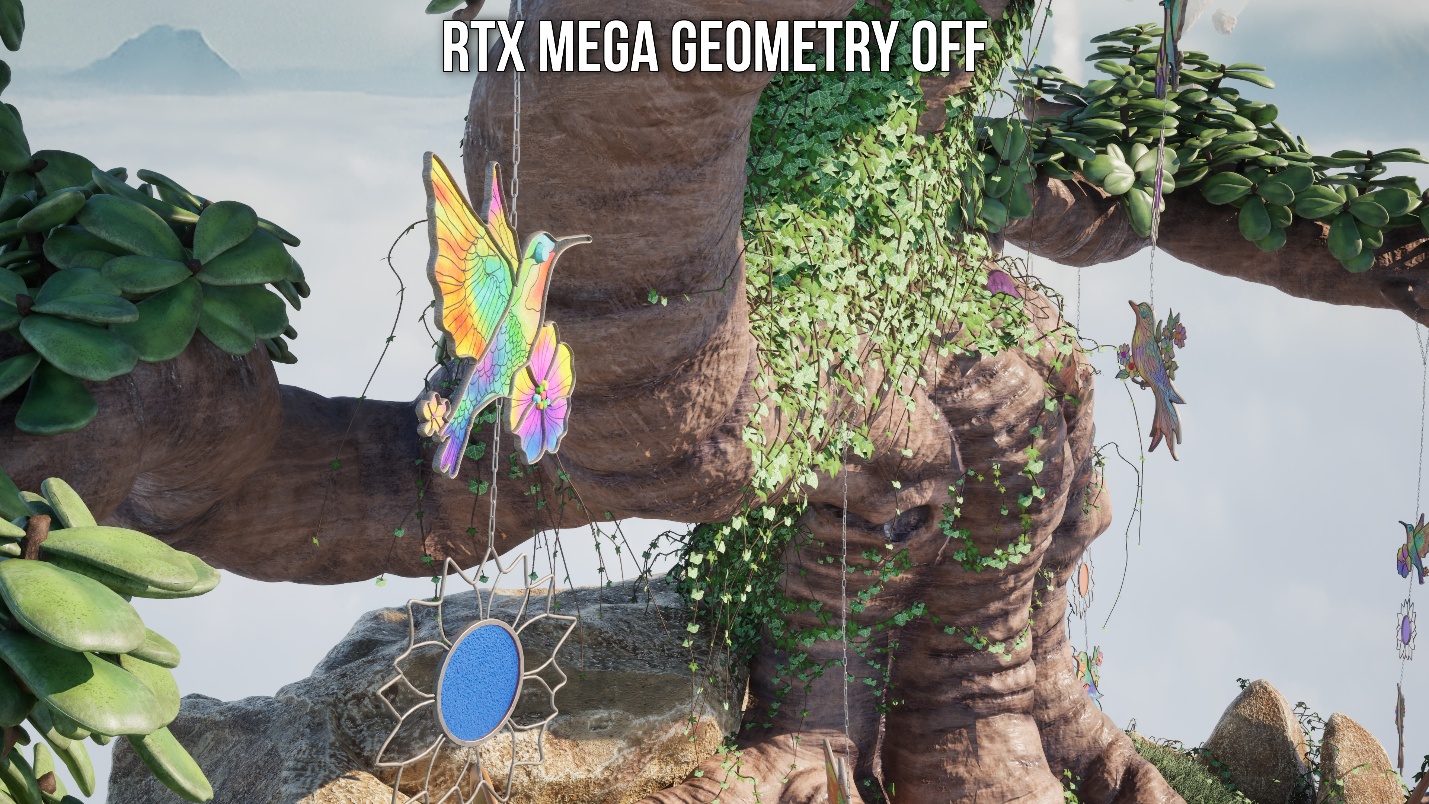

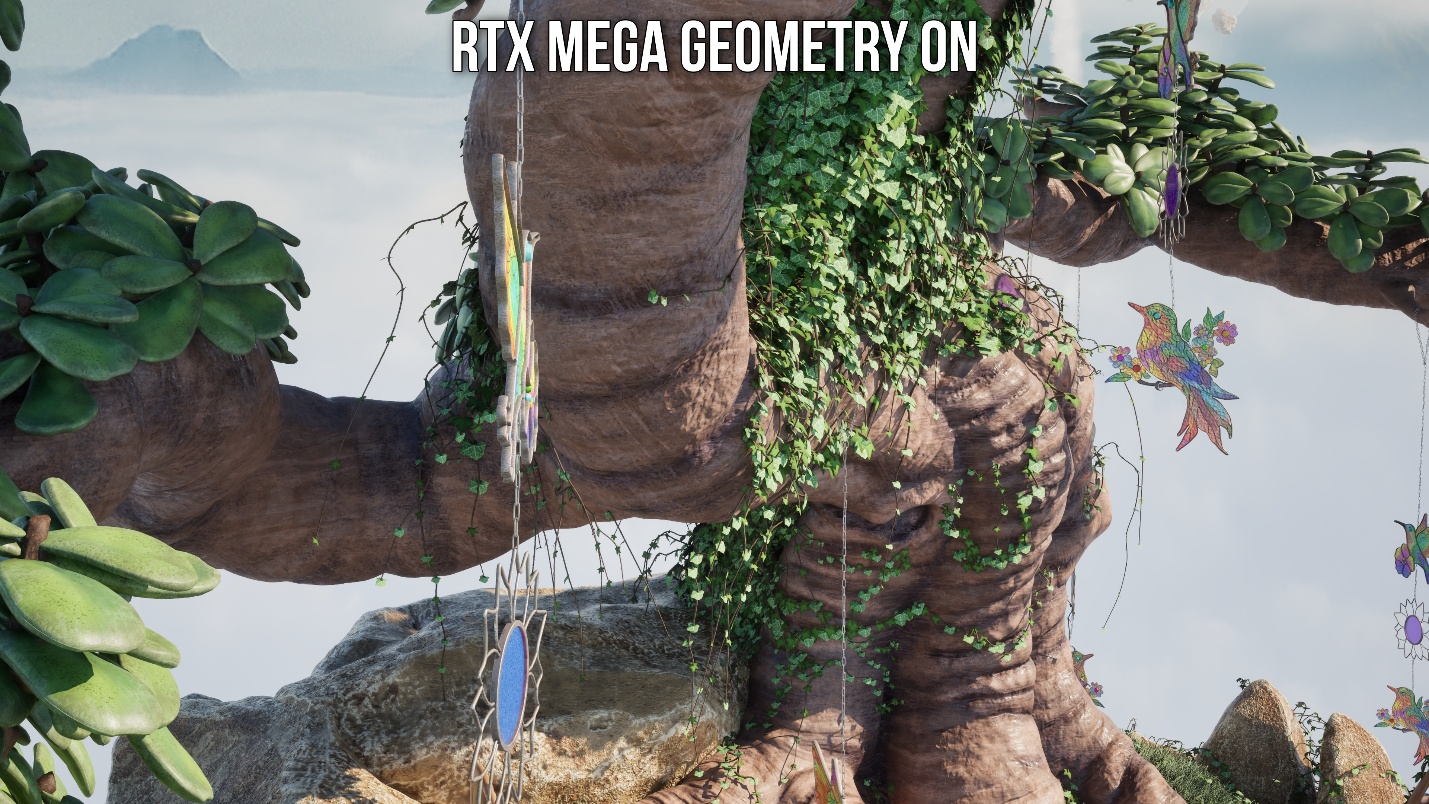

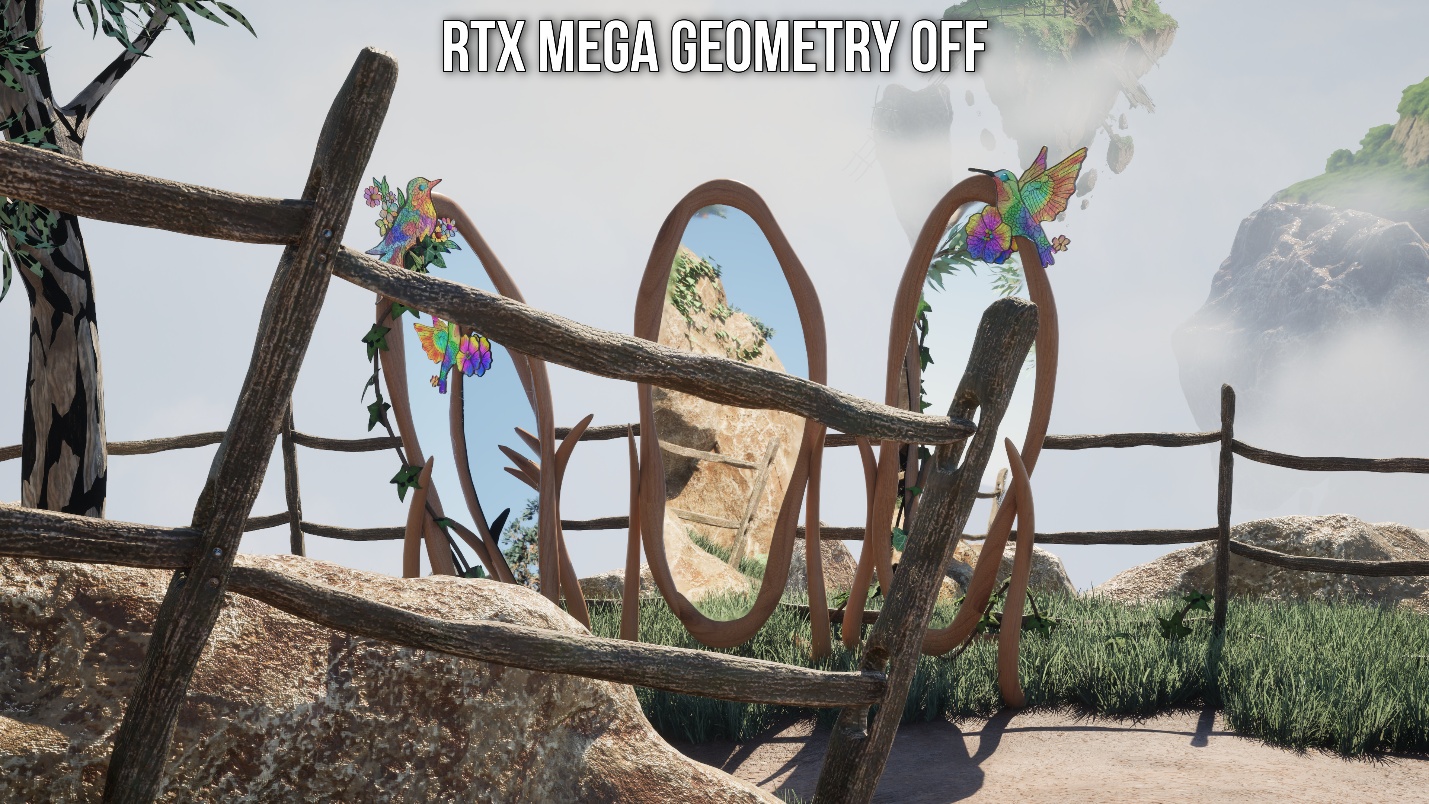

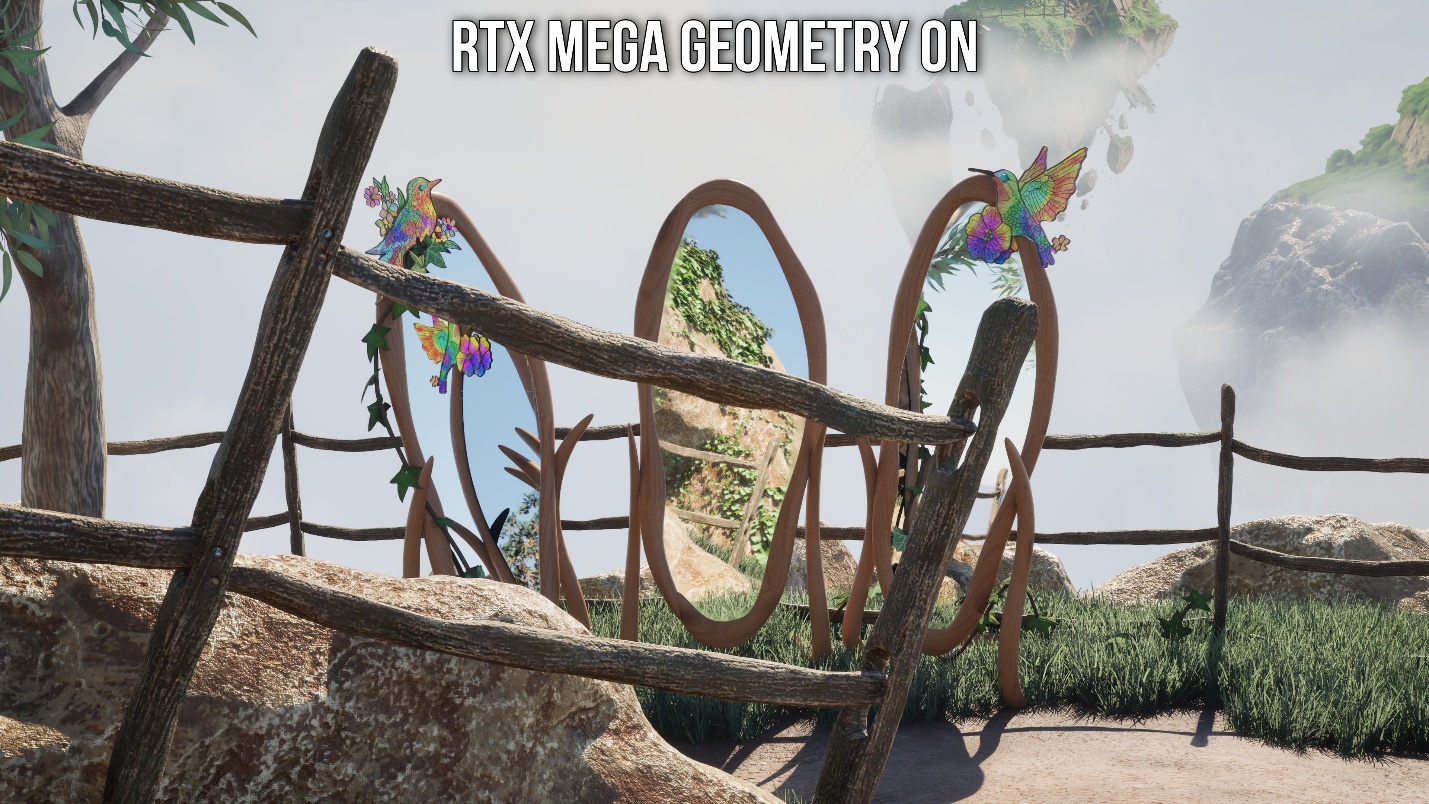

The great thing about the demo is that you can turn Mega Geometry on and off to compare image quality and performance. So that’s exactly what we will do.

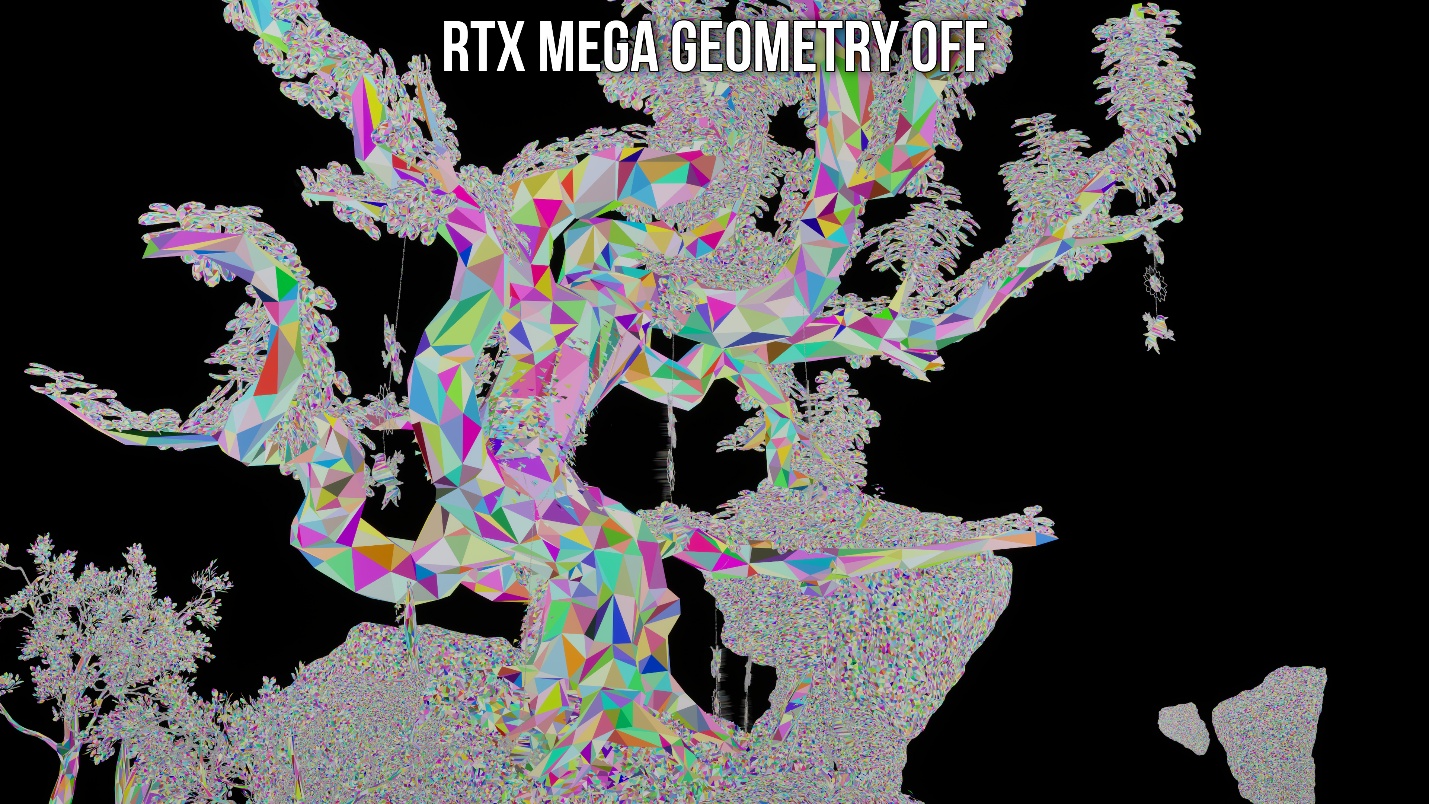

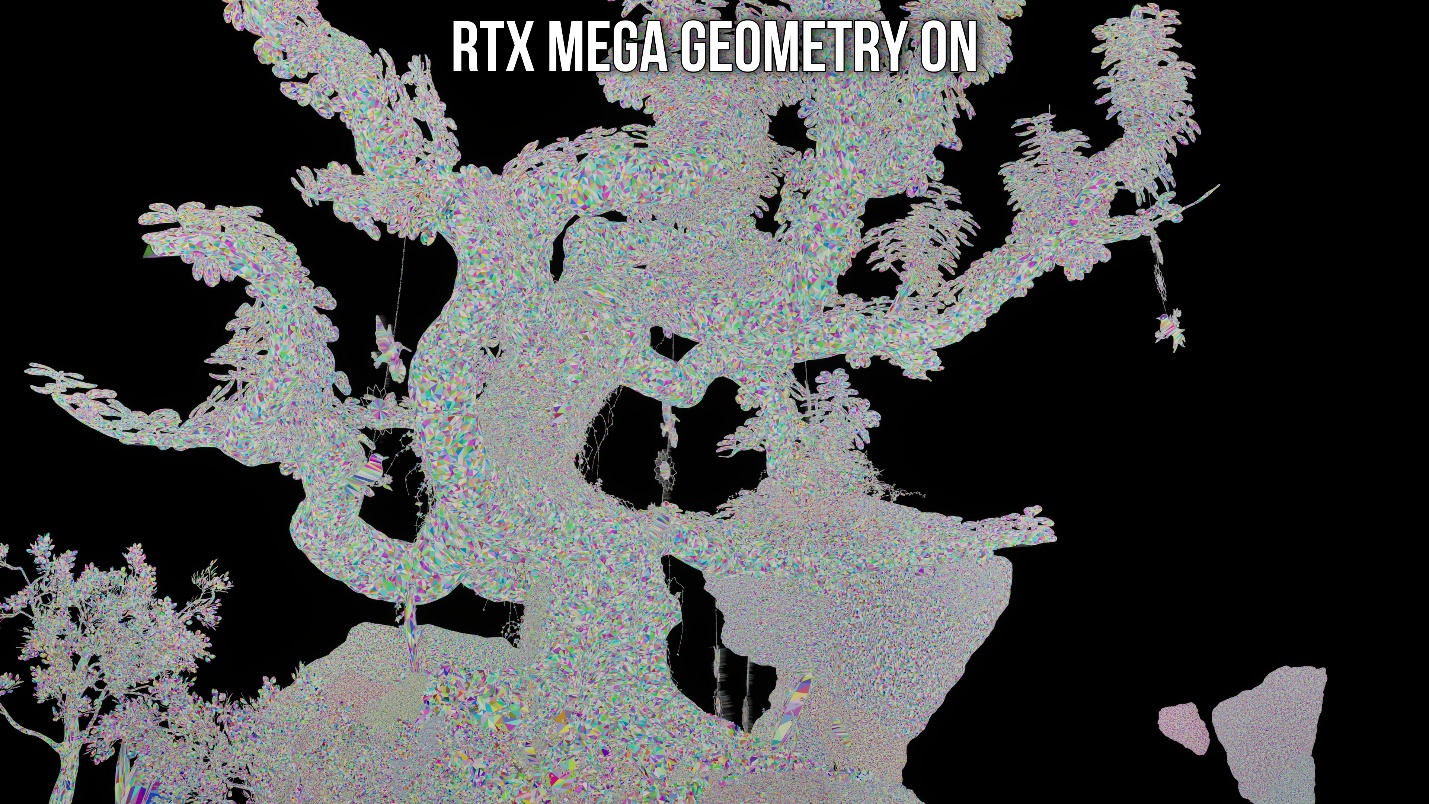

The debug visualization in the screenshots below illustrates the precision of Mega Geometry when path tracing Nanite meshes and the substantial increase in triangle count when it is enabled.

Because Mega Geometry adds full-quality Nanite geometry to the BVH, ray-traced shadows no longer exhibit the visual artifacts that are present in Lumen, such as missing or incorrect shadows. In the comparison below, you can see that every object in view has accurate, pixel-perfect shadows with Mega Geometry enabled.

Similarly, reflections are no longer plagued by the issues that result from using low-quality proxy meshes. In the comparison below, you can see missing leaves from the reflection within the center mirror when Mega Geometry is disabled. With Mega Geometry enabled, you get the full Nanite mesh.

Additionally, pay attention to the tree on the far left. With Mega Geometry off, you get incorrect self-occlusion. This is entirely resolved with Mega Geometry.

The technology clearly improves image quality, but how does it perform?

It is important to note that the level of geometric detail and density observed above with Mega Geometry enabled was simply not feasible in Unreal Engine 5 prior to Mega Geometry, even on high-end GPUs like the RTX 5090, because this level of fidelity could not be attained at an acceptable level of performance. That said, while Mega Geometry makes this possible at playable framerates on high-end GPUs, path tracing such high-fidelity meshes is still extremely demanding.

Test system

- AMD Ryzen 7 9800X3D

- 64GB (2x 32GB) G.Skill Flare X5 DDR5-6200 CL30

- Crucial T700 Gen5 SSD

- Asus ROG STRIX B850-F Gaming WiFi

- Corsair Nautilus 360 RS AIO Cooler

- HAGS enabled

- Windows 11 25H2 (Build 26200.8246)

- Nvidia Driver 596.21

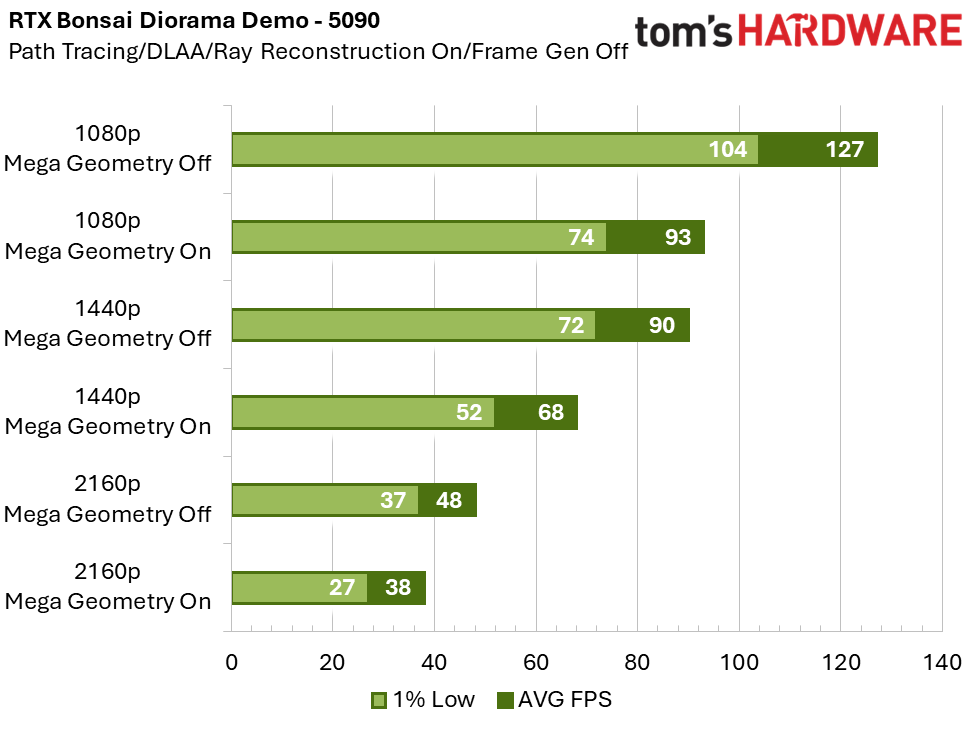

On the 5090, the performance cost of enabling RTX Mega Geometry is 23% at 1080p, 24% at 1440p, and 21% at 4K. At a rendering resolution of 1440p or below, performance is above 60 FPS. This gives you the option of using frame generation to further increase motion clarity without significantly impacting perceived latency. Typically, you want to use frame generation with a base framerate of at least 60 FPS – ideally higher – so that the game still feels responsive.
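The percentages above are simple ratios of average frame rates, and the same arithmetic shows how much headroom a GPU needs before Mega Geometry is switched on. The sketch below assumes the cost is computed as the drop relative to the Mega Geometry-off result. The helper names and the FPS values passed to them are hypothetical and are not our measured figures.

```python
def performance_cost_pct(fps_off, fps_on):
    """Percentage of frame rate lost when the feature is enabled."""
    return (fps_off - fps_on) / fps_off * 100.0


def baseline_needed(target_fps, cost_pct):
    """Frame rate required with the feature off to still hit target_fps with it on."""
    return target_fps / (1.0 - cost_pct / 100.0)


if __name__ == "__main__":
    # Hypothetical example: 100 FPS off, 77 FPS on.
    print(round(performance_cost_pct(100.0, 77.0), 1))   # 23.0
    # With a 24% cost, staying at 60 FPS needs roughly 79 FPS beforehand.
    print(round(baseline_needed(60.0, 24.0), 1))          # 78.9
```

Put another way, a card needs to run the demo at close to 80 FPS without Mega Geometry to hold the 60 FPS base frame rate that frame generation works best with once it is enabled.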

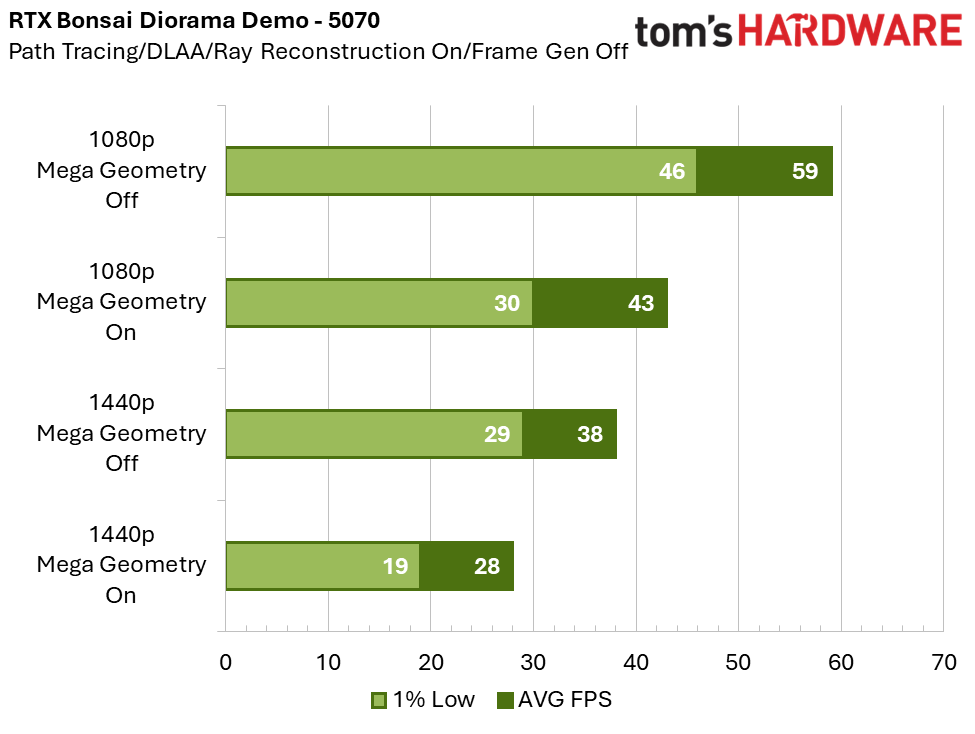

On the RTX 5070, the performance cost is 27% at 1080p and 26% at 1440p. Unfortunately, 60 FPS with Mega Geometry is not possible in this demo on the 5070 unless you use frame generation or DLSS with an internal rendering resolution below 1080p. This result is not entirely surprising, as standard path tracing is already extremely taxing on this GPU.

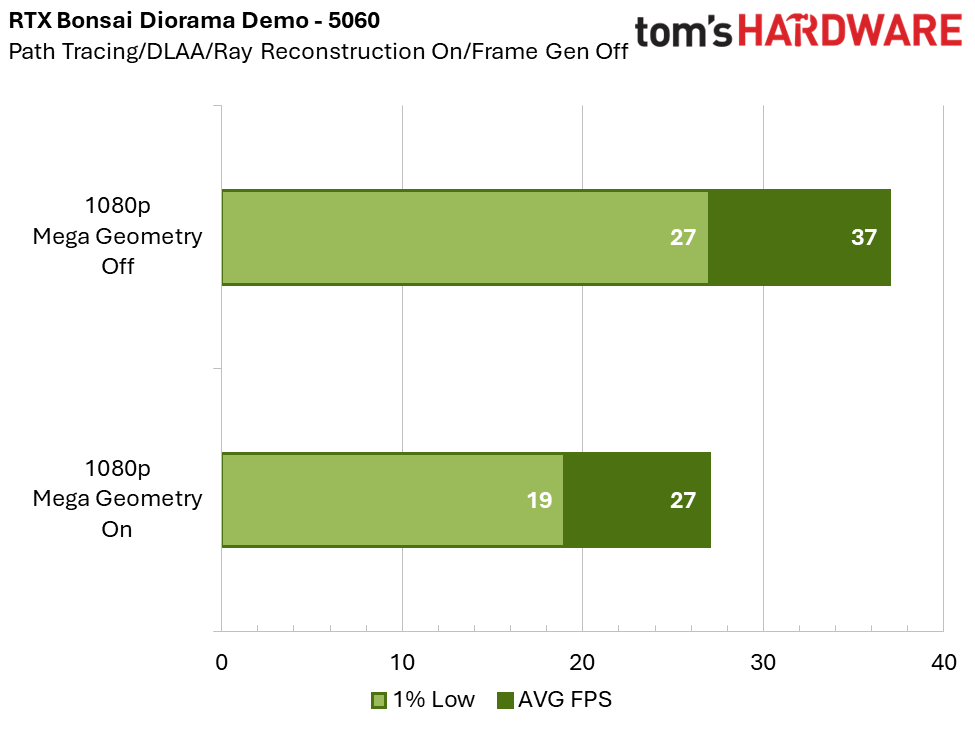

We included the 5060 here because the RTX Bonsai Diorama Demo developer guide listed this as the “recommended GPU.” Clearly, that must include the use of DLSS Super Resolution and Frame Generation, because without them, the 5060 averages below 30 FPS in this demo with Mega Geometry enabled. Indeed, with DLSS Super Resolution and Frame Generation at 1080p, the 5060 can exceed 100 FPS, but the resulting experience suffers in both image quality and input latency.

The Future of RTX Mega Geometry

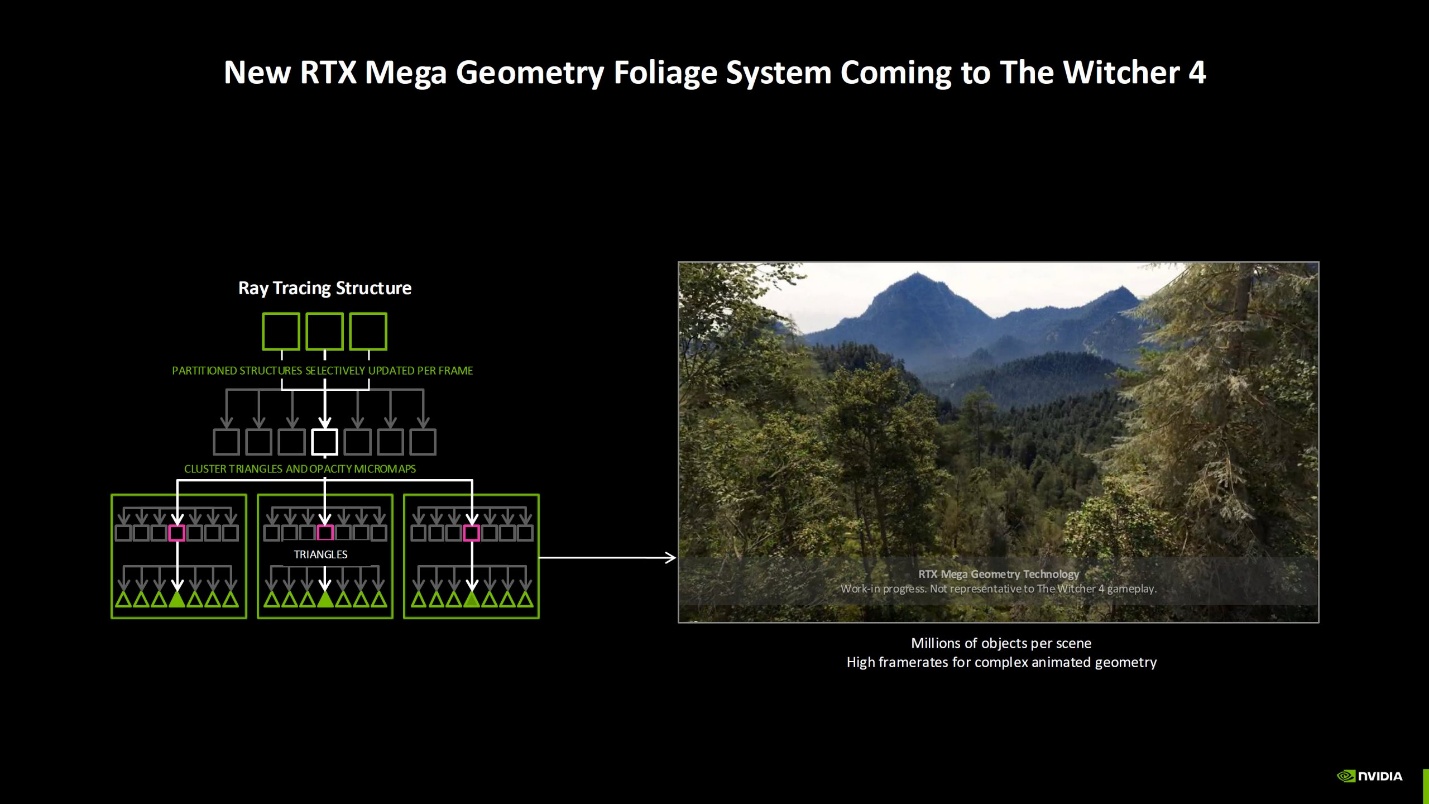

At GDC 2026, NVIDIA announced that Mega Geometry would be implemented in the upcoming 2026 titles Control Resonant and The Witcher 4.

During its Future of Path Tracing presentation at GDC 2026, NVIDIA highlighted the RTX Mega Geometry foliage system coming to The Witcher 4.

The new level-of-detail (LOD) system for foliage selectively updates only the relevant parts of the scene based on camera movement, and represents each level of detail in a form that is efficient to ray trace. This is done without exhibiting traditional LOD pop-in.

The system leverages Opacity Micromaps (OMMs) for distant LODs, which are used as a simplified representation of the geometry that is lightweight in memory. On the RTX 40-series and 50-series, OMMs are hardware-accelerated.

Later in the presentation, NVIDIA showcased the system in a demo featuring a vast 5x5 km forest composed of roughly 60 million plants spanning over 200 species, including around 1 million trees. Notably, the entire scene runs without streaming, with all assets resident in memory. Every element is represented as full geometry – down to individual pine needles – with some of the largest trees reaching approximately 10 million polygons each. The scene is rendered with fully dynamic, path-traced lighting, and the tree assets were provided by CD PROJEKT RED.

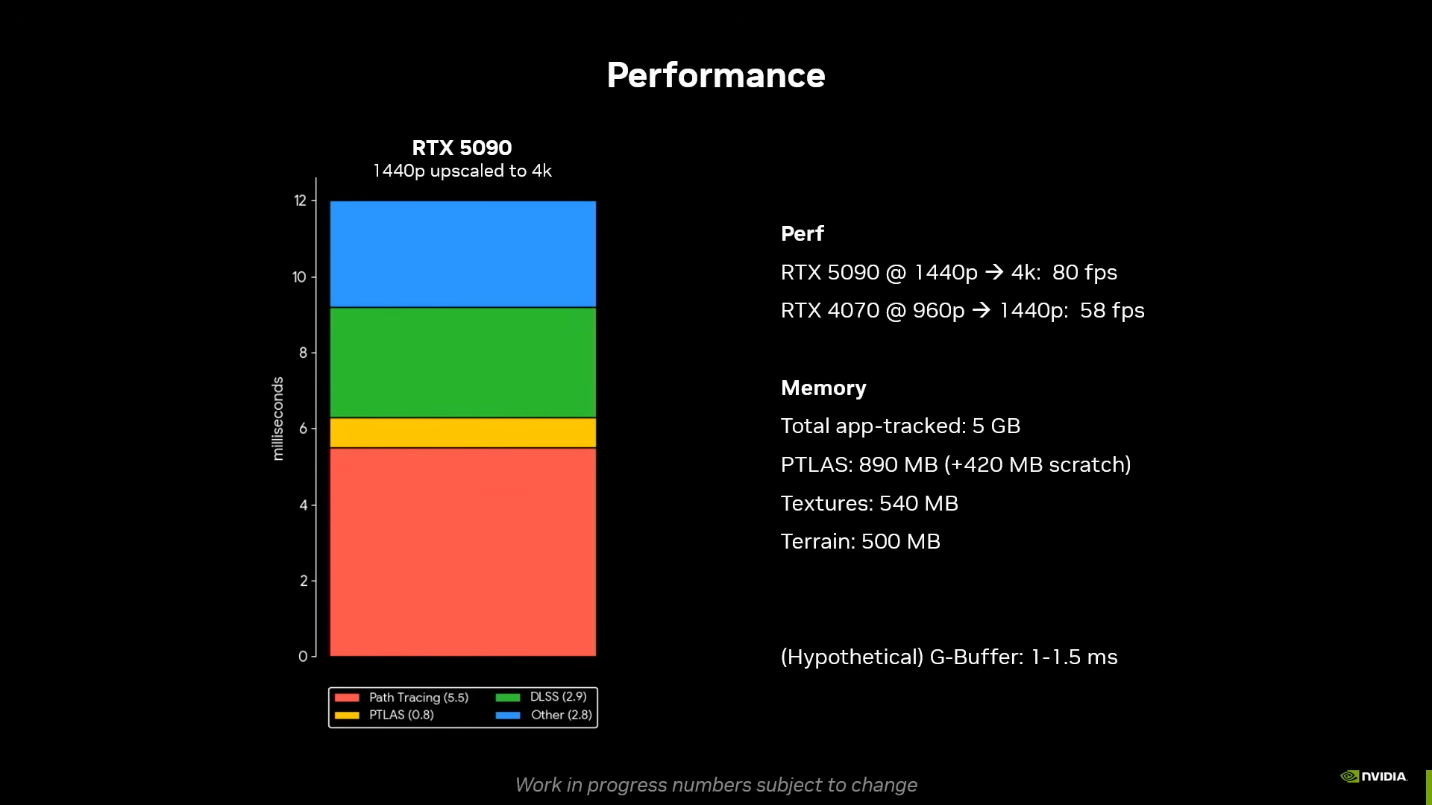

What was surprising was the level of performance achieved in this demo. On the RTX 5090 at 4K using DLSS Quality mode (internal rendering resolution of 1440p), the demo ran at 80 FPS, nearly 18% faster than the Bonsai Diorama demo on the 5090 at 1440p.

On the RTX 4070 at 1440p using DLSS Quality mode (internal rendering resolution of 960p), it ran at about 58 FPS.
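The internal resolutions quoted for the two cards follow from DLSS Quality mode rendering at two-thirds of the output resolution on each axis. The short sketch below reproduces that arithmetic and also backs out the Bonsai frame rate implied by the "nearly 18% faster" comparison. The helper name `internal_resolution` is ours, and the implied figure is derived from the rounded percentage, so treat it as approximate rather than as a measured result.

```python
from fractions import Fraction

QUALITY_SCALE = Fraction(2, 3)  # DLSS Quality renders at 2/3 of output per axis


def internal_resolution(width, height, scale=QUALITY_SCALE):
    """Internal render size for a given output size, rounded to whole pixels."""
    return round(width * scale), round(height * scale)


if __name__ == "__main__":
    print(internal_resolution(3840, 2160))  # (2560, 1440)
    print(internal_resolution(2560, 1440))  # (1707, 960)

    # 80 FPS being "nearly 18% faster" implies a comparison point of about:
    forest_fps = 80.0
    implied_bonsai_fps = forest_fps / 1.18
    print(round(implied_bonsai_fps, 1))     # 67.8
```

This is why 4K with DLSS Quality on the RTX 5090 can be compared against the Bonsai demo at 1440p: both render the same number of pixels internally before upscaling.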

RTX Mega Geometry represents a meaningful step toward truly photorealistic real-time graphics. With advancements like the new LOD system for foliage and ongoing performance optimizations, it has the potential to enable scenes of unprecedented geometric complexity that can be rendered with fully dynamic, path-traced lighting at playable framerates on modern hardware.

Dan Mateescu is a PC enthusiast with many years of experience benchmarking PC hardware. In 2021, he started his own YouTube channel called 'Compusemble' where he benchmarks hardware in video games and the latest tech demos.

-

hotaru251

reminder that majority of RTX features are not used in the intended way by devs because it takes time (and money) to do so and we get to buy brute forcing poorly optimized games.

Would love to believe this would help but I won't trust it will based on history.

edit: also we really need to stop chasing photorealism in games outside cinematic games...its a waste of $/time & has too much requirements at all points (hardware & dev time)

-

razor512

But don't you want a lego game to be rendered slightly more accurately and all at the low, low cost of needing frame gen to hit 30FPS? :)

https://www.digitalfoundry.net/features/frame-gen-to-30fps-lego-batmans-bizarre-pc-specs-sheet-is-a-case-study-in-how-not-to-market-a-game

Anyway it seems like when we get a combination of game devs not wanting optimization, while seeking even slightly better visuals, requirements skyrocket to an astronomical degree.

-

-Fran-

"What is there not to like?"

Well, I don't see any red or blue graphs in there. Does anyone else see any other colour? I don't.

Screw praising nVidia for doing their best to corner the market and strangle consumers into a non-choice. Do not forget: you're praising an effective monopoly; one that is screwing you over on many fronts already.

Regards.

-

derekullo

How are they screwing us over on many fronts?

They single handily were responsible for all of the graphics cards I used growing up, powering games from the original Deus Ex in 2000 to Morrowind to Guild Wars to WoW to Cyberpunk.

When I turned 18 back in 2004, my parents seeing how I liked gaming, bought me 10 shares of Nvidia stock for my birthday ... was like $15 each back then.

Those 10 shares, by themself, have since split into 1200 shares worth over $240,000 today.

How are they a monopoly?

AMD and now Intel are both highly capable companies that are trying to create a better GPU.

If you don't want an Nvidia GPU then you don't have to buy one.

Working for a library, my boss had asked me to test out an Arc B580 to see if it was a suitable for use in the upcoming refresh of our teen gaming computers which currently use aging Geforce 2080s.

Arc B580 is perfectly capable of maintaining 60 fps in games like Cyberpunk at 1080p on high to ultra settings.

Obviously Cyberpunk isn't one of the Steam games for the teens, due to its M rating, but was chosen for its GPU stress testing abilities.

-

-Fran-

You can thank, among many others, nVidia for the current AI bubble; I would even dare say they're a big factor at this point as well.

Also, most of the technological advancements of the last decade have been not thanks to nVidia, but Microsoft and AMD with DX12; sure nVidia has had its hand in the pot, but only so the spec adapts to their needs.

The only thing nVidia has tried to contribute to anything has been selling you proprietary solutions that can't be used by any other hardware and strangling their AIBs so they can absolutely squeeze the market dry. Look at the prices of their current cards. AMD got a lot of flak for trying to keep the MSRP via cashbacks, but nVidia has been doing it for over a decade with no one batting an eye and now that they stopped, because they don't care about you or the rest of the people "growing up" with nVidia, their cards are absurdly expensive. Even more so than normally, as they're not even trying to compete anymore.

That only shows your bias over the years and how you never got out of your comfort zone until recently (or so I understand your phrasing).

Ah, you're an "investor". No wonder you have this view.

Have you been living under a rock for the last few years?

Right... Because nVidia "allows" you to gracefully move to AMD or Intel and enjoy the same experiences, like with PhysX or DLSS, right? At the very least, as of late, AMD is definitely better at their drivers, even if there's other grievances with AMD. Intel... I doubt they'll get another discrete GPU for consumers, not with nVidia now owning 5% of it and ARC just fumbling hard.

No need to justify anything else, "investor". We know where you stand.

Regards.

-

derekullo

I praised Intel's Arc and I'm still just the Nvidia investor? ... I didn't even buy the shares lol

Very few games still use PhysX and you don't need DLSS to get 60 fps with an Intel Arc B580 in Cyberpunk at near max settings ... perfectly suitable for 1080p gaming.