John Carmack muses about using a long fiber line as an L2 cache for streaming AI data: programmer imagines fiber as alternative to DRAM

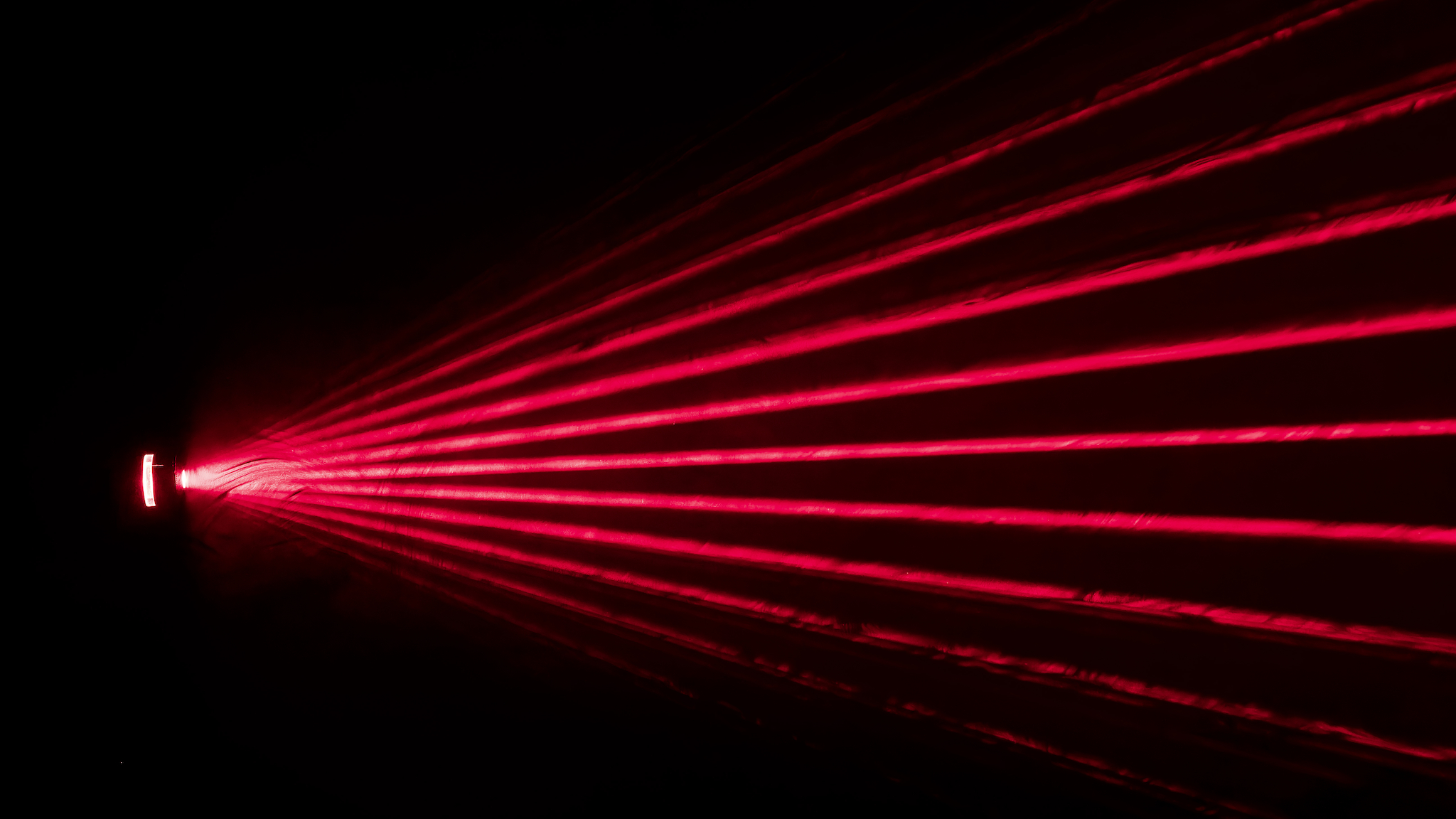

Yes, it's delay-line memory, but with lasers!

Anybody can create an X account and speak their mind, but not all minds are worth listening to. When John Carmack tweets, though, folks tend to listen. His latest musings pertain to using a protracted fiber loop as an L2 cache of sorts, to hold AI model weights for near-zero latency and gigantic bandwidth.

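Carmack came upon the idea after considering that single-mode fiber speeds have reached 256 Tb/s over a distance of 200 km. With some back-of-the-Doom-box math, he worked out that about 32 GB of data is inside the fiber itself at any given moment: light needs roughly a millisecond to cross 200 km of glass, and a millisecond's worth of a 256 Tb/s (32 TB/s) stream is about 32 GB.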
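For readers who want to check the arithmetic, here is a minimal sketch of the calculation. The link rate and fiber length come from the figures above; the group index of 1.468 is an assumption (a typical value for silica single-mode fiber near 1550 nm) and is not stated in Carmack's post.

```python
# Back-of-the-envelope check of the "32 GB in flight" figure.
LINK_RATE_BITS_PER_S = 256e12          # 256 Tb/s, as quoted in the article
FIBER_LENGTH_M = 200e3                 # 200 km, as quoted in the article
SPEED_OF_LIGHT_M_PER_S = 299_792_458   # speed of light in vacuum
GROUP_INDEX = 1.468                    # ASSUMPTION: typical silica fiber near 1550 nm


def transit_time_s(length_m, group_index=GROUP_INDEX):
    """Time for a pulse to travel the length of the fiber."""
    return length_m * group_index / SPEED_OF_LIGHT_M_PER_S


def bytes_in_flight(rate_bits_per_s, length_m, group_index=GROUP_INDEX):
    """Data 'stored' in the fiber = data rate x transit time."""
    return rate_bits_per_s * transit_time_s(length_m, group_index) / 8


bandwidth_bytes_per_s = LINK_RATE_BITS_PER_S / 8
delay_s = transit_time_s(FIBER_LENGTH_M)
capacity_bytes = bytes_in_flight(LINK_RATE_BITS_PER_S, FIBER_LENGTH_M)

print(f"bandwidth: {bandwidth_bytes_per_s / 1e12:.0f} TB/s")
print(f"one-way delay: {delay_s * 1e3:.2f} ms")
print(f"data in flight: {capacity_bytes / 1e9:.1f} GB")
```

With this group index the delay comes out slightly under a millisecond, so the capacity lands a little below 32 GB; the round figure corresponds to assuming a flat 1 ms transit time.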

AI model weights can be accessed sequentially for inference, and almost so for training. Carmack's next logical step, then, is using the fiber loop as a data cache to keep the AI accelerator always fed. Think of conventional RAM as merely a buffer between SSDs and the data processor, and then ask how to improve it or eliminate it outright.
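To see why sequential access matters so much for a recirculating loop, consider this toy model. It is only an illustration, not anything Carmack described in detail: the `DelayLineLoop` class and its `tick` and `fetch` methods are hypothetical names, and one "tick" stands for the time it takes one word to pass the read tap.

```python
from collections import deque


class DelayLineLoop:
    """Toy recirculating loop: only the word currently at the tap is readable."""

    def __init__(self, words):
        # Each entry is (address, value); the left end of the deque is the tap.
        self._loop = deque(enumerate(words))

    def tick(self):
        """Advance one word time; the word at the tap is read and re-enters the loop."""
        item = self._loop[0]
        self._loop.rotate(-1)
        return item

    def fetch(self, address):
        """Wait until `address` passes the tap; return (value, ticks waited)."""
        if not 0 <= address < len(self._loop):
            raise IndexError(address)
        waited = 0
        while True:
            addr, value = self.tick()
            waited += 1
            if addr == address:
                return value, waited


loop = DelayLineLoop([f"w{i}" for i in range(8)])

# Reading in the order the data circulates costs one tick per word.
sequential = [loop.fetch(a)[1] for a in range(8)]

# Reading against that order costs close to a full trip around the loop each time.
out_of_order = [loop.fetch(a)[1] for a in (7, 6, 5)]

print(sequential)
print(out_of_order)
```

In this model, a consumer that walks the weights in loop order is fed every tick, while one that jumps around waits for most of a revolution per read, which is why the deterministic access patterns mentioned in the post are central to the idea.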

256 Tb/s data rates over 200 km distance have been demonstrated on single mode fiber optic, which works out to 32 GB of data in flight, “stored” in the fiber, with 32 TB/s bandwidth. Neural network inference and training can have deterministic weight reference patterns, so it is…

February 6, 2026

The discussion spawned a substantial number of replies, many from folks in high pay grades. Several pointed out that the concept itself is akin to delay-line memory, harkening back to the middle of the 20th century, when mercury was used as the medium and sound waves carried the data. Mercury's mercurialness proved hard to work with, though, and Alan Turing himself proposed using a gin mix as a medium.

The main real-world benefit of using a fiber line would actually be in power savings, as it takes a substantial amount of power to keep DRAM going, whereas managing light requires very little. Plus, light is predictable and easy to work with. Carmack notes that "fiber transmission may have a better growth trajectory than DRAM," but even disregarding plain logistics, 200 km of fiber is still likely to be pretty pricey.

Some commenters pointed out other limitations beyond the fact that Carmack's proposal would require a lot of fiber. Optical amplifiers and DSPs could eat into energy savings, and DRAM prices will have to come down at some point anyway. Some, like Elon Musk, even suggested vacuum as the medium (space lasers!), though the practicality of such a design would be iffy.

Carmack's tweet alluded to the more practical approach of using existing flash memory chips, wiring enough of them together directly to the accelerators, with careful consideration for timing. That would naturally require a standard interface agreed upon by flash and AI accelerator makers, but given the insane investment in AI, that prospect doesn't seem far-fetched at all.

Variations on that idea have actually been explored by several groups of scientists. Approaches include Behemoth from 2021, FlashGNN and FlashNeuron from 2021, and more recently, the Augmented Memory Grid. It's not hard to imagine that one or several of these will be put into practice, assuming they aren't already.

Bruno Ferreira is a contributing writer for Tom's Hardware. He has decades of experience with PC hardware and assorted sundries, alongside a career as a developer. He's obsessed with detail and has a tendency to ramble on the topics he loves. When not doing that, he's usually playing games, or at live music shows and festivals.

Gururu Assuming current solid state media for L2 cache provide means for verification, I would be curious to know how data in a fiber might be managed free from corruption.

Scott_Tx The same way any data transmitted over fiber is checked. Some kind of CRC code and if there's an error you read it from the storage again.

Gururu Scott_Tx said: "The same way any data transmitted over fiber is checked. Some kind of CRC code and if there's an error you read it from the storage again."

Sounds great!

Moores_Ghost Carmack? Jim Keller came up with a similar idea back in 2024. Nice that John is adding to it, but we need to give credit where credit is due.

mrlaich So what's stopping this from being a backbone like structure for nervous system linings throughout a robot for "muscle memory" storage?

bit_user The article said: "AI model weights can be accessed sequentially for inference"

I think this isn't true, for transformer-type models.

bit_user Gururu said: "Assuming current solid state media for L2 cache provide means for verification,"

Yeah, L2 caches usually include some ECC.

Gururu said: "I would be curious to know how data in a fiber might be managed free from corruption."

Well, PCIe 6.0 added a forward-error-correction (FEC) header. This avoids the need to retransmit, in the event of small numbers of errors. It's conceptually similar to ECC, I think.

For all I know, protocols used over long-haul fiber might already employ such a mechanism.

JohnyFin I think that next logical step to store temp data are limits of speed of light. Correction error management is a challenge.

abufrejoval It's one of the reasons I like looking at old computing stuff so much, right from the start in the 1940's. There is a video out there where JP Eckert discusses the ENIAC design (he might also be dissing John von Neumann), that's just so full of insights into those early stages. Likewise I consider Grace Hopper's lectures a must-see.

I keep thinking that by the late 1960's pretty near everything in computing had already been invented, because those guys were really looking in every direction. And the various technologies around RAM were incredibly diverse, they really tried anything that somehow could hold state, expand capacity or relieve the memory bottleneck.

Whether it was media (those delay lines, magnetic core, and then DRAM), virtual memory including fixed head magnetic drum drives, or the Harvard vs. the Princeton architecture or even content addressable memory, it had all been tried and tested. Also architecture things like >60 bit designs, single level store, or capability based computing went further than most manage even today.

Even in terms of the math theory around computing, most things were already settled by Turing, Post, Shannon etc.

Lithography has given the industry incredible growth, but also extreme tunnel vision, Unix has also been a huge throwback compared to Multics.

Spuwho Bell Labs has already built an optical storage device. They switch in laser light using a high speed micromirror and then laser light just reflects indefinitely between mirrors until the micromirror switches the light back out. Some people consider it a time machine since the state of the light never changes as long as it is inside the apparatus.

But it seems using a fiber is an overkill, when light switching seems more practical.