Part 4: Avivo HD vs. PureVideo HD


CPU Benchmarks: Dual-Core Athlon 4800+ vs. Single-Core Sempron 3200+

We know these HTPCs can do the job with the faster dual-core Athlon 64 X2 4800+ at 2,400 MHz, but can a single-core Sempron 3200+ at 1,800 MHz handle playback as well?

The short answer seems to be no. This CPU utilization chart looks empty, but the results are there. You can’t see them because they are solid lines at the 100% mark.

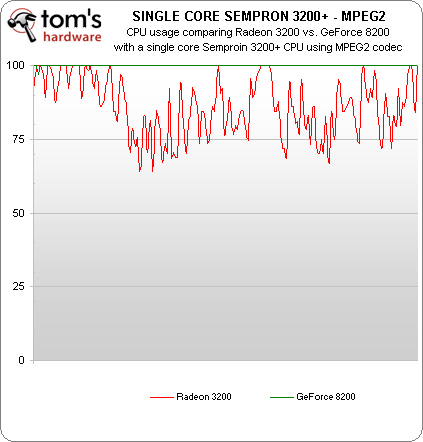

What the chart doesn’t show is that although the Radeon 3200 skipped frames, its frame rate was much higher and more consistent than anything the GeForce 8200 could muster. That gives us a little hope that playback may be possible with some of the less demanding codecs.

Aha! The CPU usage is high on the Radeon 3200, but it’s not at 100%, and playback appears smooth. The GeForce 8200’s CPU utilization remains at 100%, however, and playback is a slide show. Let’s see if the VC-1 title shows similar results:

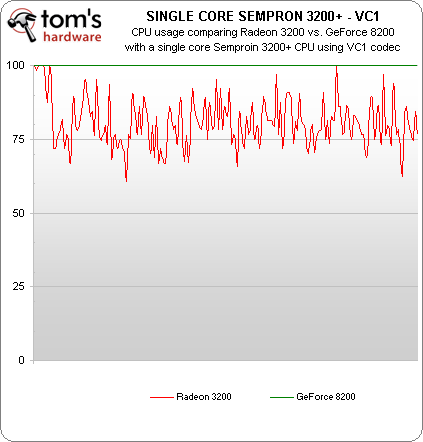

It does! Once again, the lowly Sempron 3200+ paired with the Radeon 3200 provides viewable 1080p playback, while the GeForce 8200 is stuttering heavily.

While H.264 playback was a no-go on the Sempron 3200+, a quick overclocking test told a different story: at 2.3 GHz, the single-core Sempron played back all of the Blu-ray discs in our test suite, including the H.264 title, stutter-free on the 780G chipset. On a side note, overclocking the Sempron on the GeForce motherboard didn’t provide stutter-free performance.
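As a rough sanity check on the overclocking result, the arithmetic below compares the clock speeds quoted in this section. It is only a back-of-the-envelope sketch: it assumes nothing beyond the nominal clocks (1,800 MHz stock Sempron, 2,300 MHz overclocked, 2,400 MHz Athlon 64 X2 4800+) and deliberately ignores the X2’s second core, cache sizes, and memory differences.

```python
# Back-of-the-envelope clock comparison using only the clocks quoted in the article.
# Assumption: raw clock speed is compared; core count, cache and IPC are ignored.

SEMPRON_STOCK_MHZ = 1800
SEMPRON_OC_MHZ = 2300
ATHLON_X2_4800_MHZ = 2400  # per core; the X2 also has a second core


def percent_increase(old: float, new: float) -> float:
    """Return the percentage increase going from old to new."""
    return (new - old) / old * 100.0


oc_gain = percent_increase(SEMPRON_STOCK_MHZ, SEMPRON_OC_MHZ)
gap_to_x2 = percent_increase(SEMPRON_OC_MHZ, ATHLON_X2_4800_MHZ)

print(f"Sempron overclock: +{oc_gain:.1f}% clock")
print(f"Athlon X2 4800+ per-core clock vs. overclocked Sempron: +{gap_to_x2:.1f}%")
```

On these assumptions the overclock adds roughly 28% clock speed, leaving the Sempron only about 4% short of a single X2 4800+ core. The GeForce 8200 still stuttered at that clock, though, so clock speed alone clearly doesn’t tell the whole story.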

We’d consider it likely that a cheap Sempron LE-1300 CPU at 2.3 GHz would be able to play back all Blu-ray titles at 1080p resolution with the 780G. However, we don’t think we’d recommend it, and here’s why: on Newegg, the single-core Sempron LE is $40, but the dual-core Athlon X2 BE-2400 is a mere $10 more and will provide smoother performance all-around. For $60, a home theater enthusiast can get an Athlon X2 5200+ at 2.7 GHz that will provide even better performance.


In our opinion, this is one case where the extra $20 buys a worthwhile margin of comfort. Of course, if you are building your HTPC around a low-power CPU to keep heat output down, the Athlon X2 4450e at 2.3 GHz is probably a good choice at $60, as it’s rated at only 45 watts. The point is, this is one area where we don’t think it makes sense to skimp on processing power.
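The pricing argument above boils down to simple arithmetic on the Newegg prices quoted in this section. The sketch below tabulates each option’s premium over the Sempron LE; the dictionary name and layout are illustrative only, and the prices are the ones quoted here at the time of writing, not current figures.

```python
# Premium of each CPU over the cheapest option, using only the prices quoted in the article.
PRICES_USD = {
    "Sempron LE-1300 (single-core)": 40,
    "Athlon X2 BE-2400 (dual-core)": 50,
    "Athlon X2 5200+ (dual-core, 2.7 GHz)": 60,
    "Athlon X2 4450e (dual-core, 2.3 GHz, 45 W)": 60,
}


def premiums(prices: dict[str, int]) -> dict[str, int]:
    """Return each CPU's extra cost relative to the cheapest entry."""
    baseline = min(prices.values())
    return {name: price - baseline for name, price in prices.items()}


for name, extra in premiums(PRICES_USD).items():
    print(f"{name}: ${PRICES_USD[name]} (+${extra})")
```

The largest premium works out to $20, which is the extra margin of comfort the recommendation refers to.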


Don Woligroski is a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

-

abzillah Don't the 780G chips have hybrid technology? It would have been great to see what kind of performance difference adding a discrete card to a 780G would make. Motherboards with integrated graphics cost about the same as those without, so I would choose integrated graphics plus a discrete graphics card for hybrid performance.

-

liemfukliang Wow, you should update this article with a Part 5 on Tuesday, when the NDA on the 9300 lifts: 9300 vs. 790GX. Is this Nvidia graphics chip also defective?

-

TheGreatGrapeApe Nice job, Don!

Interesting seeing the theoretical HQV difference being a realistic nil due to playability (does image enhancement of a skipping image matter?)

I'll be linking to this one again.

Next round: HD4K vs. GTX vs. GF9 integrated, complete with dual-view decoding. >B~)

-

kingraven Great article. I especially liked the decrypted video benchmarks, as I was indeed expecting a much bigger difference.

I was also expecting the single core to handle it better, as I use an old laptop with a 1,500 MHz Pentium M and an ATI 9600 as an HTPC, and it smoothly plays nearly all the HD media I throw at it (including 1080p) through ffdshow. Note that the files are usually Matroska or AVI, and the codecs vary but are usually H.264.

I admit that since it's an old PC without Blu-ray or HD DVD, I have no idea how the "real deal" would perform, probably as badly as or worse than the article says :P

-

I have a Gigabyte GA-MA78GM-S2H motherboard (780G). I just bought a Samsung LE46A656 TV and I have the following problem:

When I connect the TV with a standard VGA (D-SUB) cable, I can use Full HD (1920 x 1080) correctly.

If I use HDMI or DVI (with a DVI-to-HDMI adapter), I cannot use 1920 x 1080 correctly. The screen has black borders on all sides (about 3 cm) and the picture looks weird, as if the TV were not being driven at its native resolution and the 1920 x 1080 image had been shrunk to fit inside the visible area of the screen.

I also tried my old laptop (also ATI, X700) and had the same problem.

I thought my TV was defective, but then I tried an old NVIDIA card I had and everything worked perfectly!!! Full 1920 x 1080 on my HDMI input (with the DVI-to-HDMI adapter).

I don't know if this is an ATI driver problem or a general ATI hardware limitation, but I WILL NEVER BUY ATI AGAIN.

They claim HDMI with full HD support. Well, they are lying!

-

That's funny, bit-tech had some rather different numbers in its HQV tests of the 780G board.

http://www.bit-tech.net/hardware/2008/03/04/amd_780g_integrated_graphics_chipset/10

What's going on here? I assume bit-tech tweaked player settings to improve results, and you guys left everything at default?

-

puet What about the image enhancements in the HQV test that are possible with a 780G and a Phenom processor? Would this combination stand up against the discrete solution chosen?

This could be an interesting Part V in the article series.

-

genored (quoting azrael's comment above):

LEARN TO DOWNLOAD DRIVERS

-

Guys... I own this Gigabyte board. HDCP works over DVI, because that's what I use at home. Admittedly, I go from DVI on the motherboard to HDMI on the TV (don't ask why, it's just the cable I had). I don't have AnyDVD, so I know that it works.

As for the guy having issues with HDMI on the onboard Radeon 3200: dude, there were some problems with the initial BIOS. Update it, update your drivers, and you won't have a problem. My brother has the same board too, and he uses HDMI and it works just fine. Noob...