OCZ Vertex 4 128 GB: Testing Write Performance With Firmware 1.4

Back in April, we published one of the first reviews of OCZ's Vertex 4. In the three months since, the company has issued three firmware updates, with a fourth reportedly in the pipeline. We take another look at the drive using its latest firmware.

Benchmark Results: Anvil’s Storage Utility

Anvil’s Storage Utility (ASU) allows us to configure the size of our test file. We start off with a 1 GB file after secure-erasing the drive, which returns it to a fresh-out-of-the-box state. We then refill the drive with data copied over from another repository. Specifically, we moved the Windows folder, the Program Files folders, and a number of folders composed of user data, leaving 50.4% free space.

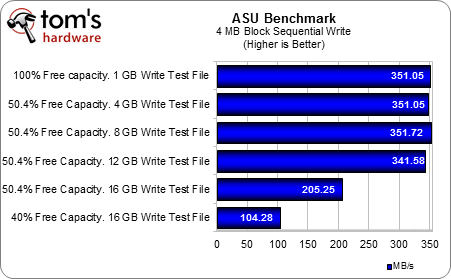

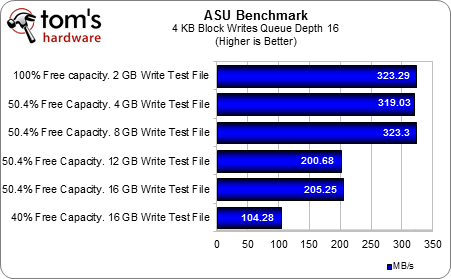

Then, we ran a series of benchmarks from ASU, incrementally increasing the test file from 4 to 16 GB. Sequential write performance remained steady until we subjected the Vertex 4 to our 16 GB test file, at which point it dropped to 205 MB/s. Although the file itself was 16 GB, the benchmark's write operations left 32.1 GB of test files on the drive by the time it finished. Consequently, the 16 GB run left the drive with less than 50% free space, and that appears to be the tipping point at which performance begins tailing off.

After secure-erasing the drive and filling it to 60%, we repeated the test with a 16 GB file and saw write speeds drop to 104.28 MB/s. The Vertex 4 128 GB appears to need free space for optimal performance.
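The free-space arithmetic behind that tipping point can be sketched in a few lines of Python. This is only a rough illustration: the 50.4% starting free space and the 32.1 GB benchmark footprint come from the text above, while the 128 GB capacity is the drive's nominal figure (the usable capacity reported by the operating system is an assumption we do not make here and would be somewhat lower). The function name is hypothetical.

```python
def free_fraction_after_write(capacity_gb, free_fraction_before, written_gb):
    """Return the fraction of the drive still free after writing written_gb."""
    free_before_gb = capacity_gb * free_fraction_before
    free_after_gb = free_before_gb - written_gb
    if free_after_gb < 0:
        raise ValueError("write does not fit in the available free space")
    return free_after_gb / capacity_gb


# Assumption: nominal capacity, not the formatted capacity seen by Windows.
CAPACITY_GB = 128.0
# From the article: 50.4% free after refilling the drive, and 32.1 GB of
# test files left on the drive by the 16 GB ASU run.
FREE_BEFORE = 0.504
BENCHMARK_FOOTPRINT_GB = 32.1

after = free_fraction_after_write(CAPACITY_GB, FREE_BEFORE, BENCHMARK_FOOTPRINT_GB)
print(f"Free space after the 16 GB run: {after:.1%}")
print("Below the 50% mark:", after < 0.5)
```

Under these assumptions, the drive ends the 16 GB run well under the 50% mark, which is consistent with the slowdown we measured.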

danielkr This is unfortunate. I purchased four of these drives and configured them in RAID 10. I wanted the read performance and the security of knowing I would not have to reinstall everything if a drive failed. I understood I would only have double write performance. But now that I have about 100GB of free space left, I am realizing only single drive write performance. Now I will have to rebuild into a RAID 0 and do regular image backups. :(

edlivian What is with these games these vendors are playing with firmware? SandForce has a trick with compressible data, and Indilinx controllers now expect you to have half your drive empty to get the performance boost?!?

Why can't you just get consistent performance like you do on Samsung 830s and Crucial M4s? There is nothing wrong with consistency.

mayankleoboy1 That's too bad. :(

I was almost on the point of buying a 128GB Vertex 4.

NOT NOW. Will wait for the next 1.5 firmware.

It's strange that this type of behavior was documented on Tom's only, while multiple other sites have already reviewed this drive with 1.4 firmware, giving it a very good rating.

+1 to Tom's review team

kikiking So let me get this.. just like the Vertex III Max IOPS and regular edition, there is a performance drop? I could have sworn this drive had no garbage collection? Either way, I may buy one and wait on 1.5. Might as well, or wait till I see the 1.5 firmware.

g-unit1111 Man, I was really interested in seeing what Indilinx could do, and I've been recommending this drive on all high end builds. I was even thinking of replacing my Intel 320 with one. Guess I'll be sticking with the Crucial M4 and Plextor M3 from now on.

Todd Sauve According to OCZ this is the way the firmware for the Vertex 4 128GB is designed to work and part of the reason is because of the way MS made the NTFS file system. They say the SSD will only slow down for a short time and then go back up to near normal speeds.

They also tell me that Tom's Hardware is actually aware of this.

Read about it here: http://www.ocztechnologyforum.com/forum/showthread.php?102254-Anormal-128GB-Vertex-4-Performance

waxdart, quoting danielkr: "This is unfortunate."

I read that RAID doesn't support TRIM (never checked beyond that) so I've not bothered with it. Have you done any tests with this?

edlivian, quoting Todd Sauve: "According to OCZ this is the way the firmware for the Vertex4 128GB is designed to work and part of the reason is because of the way MS made the NTFS file system. They say the SSD will only slow down for a short time and then go back up to near normal speeds."

I am sorry, but there should never be a slowdown. This is an SSD; people expect top speed all the time from their drives.

Kurz, quoting edlivian: "What is with these games these vendors are playing with firmware. Sandforce has a trick with compressible data, indelix controllers now expects you to have half your drive empty to get the performance boost?!? Why can't you just get the consistent performance like you do on samsung 830's ad crucial m4's, there is nothing wrong with consistency."

Reading Comprehension Fail... Let's say you have 20 gigabytes of free space (the SSD has 512GB total).

If you try to write a file that is more than 10 GB, you'll experience less than optimum performance.

Note we are talking about sequential writing.