Google Is Building A New Foveation Pipeline For Future XR Hardware

Google’s R&D arm, Google Research, recently dedicated some time and resources to discovering ways to improve the performance of foveated rendering. Foveated rendering already promises vast performance improvements compared to full-resolution rendering. However, Google believes that it can do even better. The company identified three elements that could be improved, and it proposed three solutions that could potentially solve the problems, including two new foveation techniques and a reworked rendering pipeline.

Foveated rendering

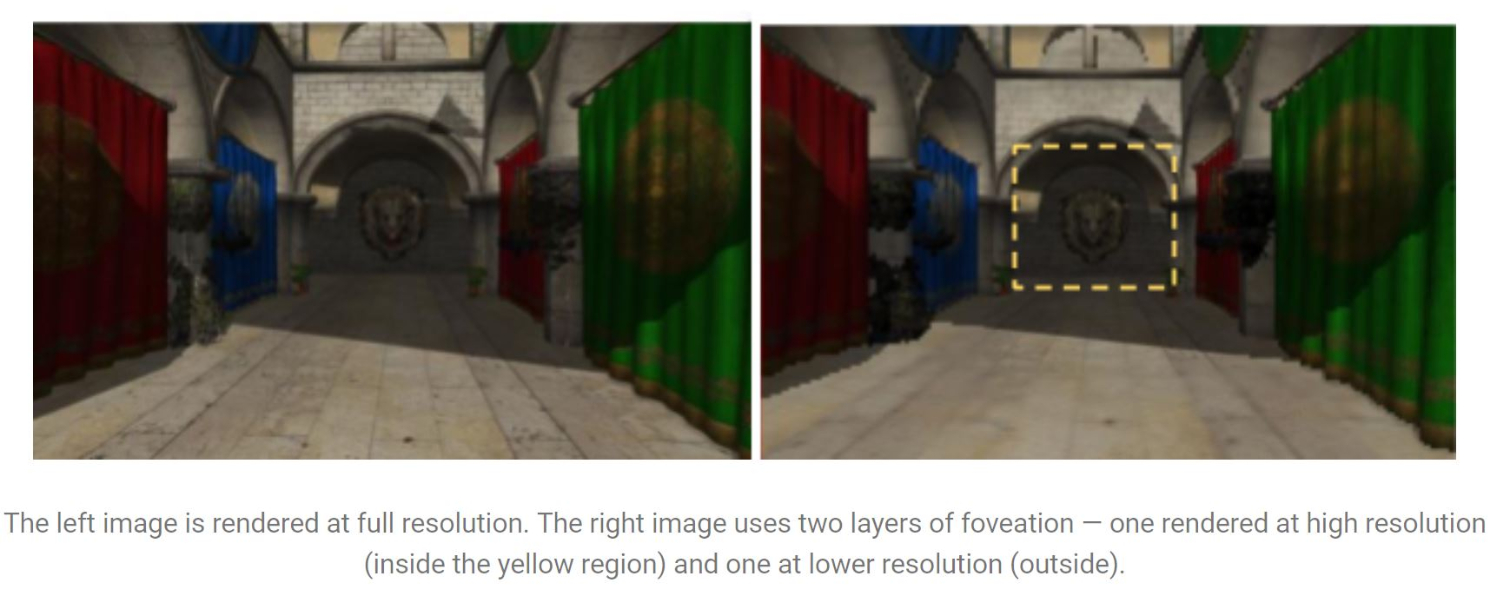

The human retina has a region called the fovea centralis, which lets us see at higher fidelity in the center of our vision than in our peripheral vision. Your vision is clearest in the center and gradually degrades towards the edge of your visual field. Foveated rendering works similarly, with the idea being that XR experiences would require less compute power if the graphics on the screen were rendered at varying fidelity levels depending on your focal point. Foveated rendering breaks the scene into a high-acuity region that you focus on and a low-acuity region, which is rendered at lower fidelity.
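To get a feel for the potential savings, here is a minimal sketch of the pixel arithmetic for a two-layer scheme (a small high-acuity inset plus a downscaled low-acuity layer covering the whole view). The resolution, inset size, and scale factor are assumed values chosen for illustration, and `foveated_pixel_count` is a hypothetical helper, not something taken from Google's work.

```python
def foveated_pixel_count(width, height, inset_fraction, low_scale):
    """Count pixels rendered by a simple two-layer foveation scheme.

    width, height:  full per-eye resolution (hypothetical values below)
    inset_fraction: side of the high-acuity inset as a fraction of each axis
    low_scale:      linear downscale factor of the low-acuity layer
    """
    # High-acuity inset: native pixel density, but only over a small area.
    high = round(width * inset_fraction) * round(height * inset_fraction)
    # Low-acuity layer: covers the whole view at reduced resolution.
    low = round(width * low_scale) * round(height * low_scale)
    return high + low


full = 1440 * 1600  # assumed per-eye panel resolution
foveated = foveated_pixel_count(1440, 1600, inset_fraction=0.25, low_scale=0.25)
print(full, foveated, foveated / full)  # 2304000 288000 0.125
```

Under these assumptions the foveated frame contains one eighth of the pixels of the full-resolution frame. Real savings depend on the headset, the eye tracker's accuracy, and how aggressively the periphery is downscaled.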

Foveated rendering in VR isn’t a new concept. In fact, we already witnessed it working on Fove’s eye-tracking VR headset prototype at CES two years ago. Fove’s hardware includes eye-tracking modules, which are the key element that allows foveated rendering to function. Because the Fove 0 headset can determine where your focus lies, it can define a perimeter that separates the high-acuity region from the low-acuity region. The process works well, and we couldn’t “beat” the system in our short demo with the Fove HMD. However, traditional foveation methods can introduce artifacts in the low-acuity region. Google’s research team identified two methods that improve the foveation process to reduce or eliminate the rendering distortions.

Phase-Aligned Rendering

Foveation techniques ensure that the graphics in your direct line of sight are well polished. However, the image beyond the high-acuity region is subject to artifacts that can be distracting to the player. The low-acuity region does not receive an anti-aliasing treatment, so aliasing there can show up as flicker when the rendering frustum moves with your head.

Google created a process called Phase-Aligned Rendering to address the flickering artifact problem. It works somewhat like asynchronous reprojection, in that it discards the head rotation data when rendering and compensates for it afterward by reprojecting the rendered image. Where asynchronous reprojection relies on the most recent rendered frame, Phase-Aligned Rendering forces north, west, south, or east alignment on the background while your head rotates.
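The world-alignment idea can be illustrated with a small sketch. It assumes the low-acuity view is snapped to the nearest of four compass directions and that the leftover rotation is corrected later by reprojection. The function name `phase_align` and the 90-degree snapping rule are illustrative assumptions, not Google's published implementation.

```python
def phase_align(head_yaw_deg):
    """Split a head yaw into a world-aligned render direction and a residual.

    Returns (aligned_yaw, residual):
      aligned_yaw: nearest compass direction (0, 90, 180 or 270 degrees)
                   used to render the low-acuity layer
      residual:    rotation in [-180, 180) still to be applied by reprojection
    """
    yaw = head_yaw_deg % 360.0
    # Snap to the nearest multiple of 90 degrees; 360 wraps back to 0.
    aligned = (round(yaw / 90.0) % 4) * 90.0
    # Signed shortest-path difference between the real yaw and the snapped one.
    residual = (yaw - aligned + 180.0) % 360.0 - 180.0
    return aligned, residual


print(phase_align(100.0))  # (90.0, 10.0)
print(phase_align(350.0))  # (0.0, -10.0)
```

Because the low-acuity grid stays fixed relative to the world in this sketch, small head rotations change only the residual, which is the property that is meant to keep aliasing patterns from crawling across the scene.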

Google said that the Phase-Aligned Rendering process adds some overhead to the rendering pipeline, but you can compensate for the performance loss by reducing the acuity level further than you could with traditional foveated rendering techniques.

Conformal Rendering

Google also introduced a second new foveation process called Conformal Rendering, which offers a smoothly varying transition between the high-acuity region and the low-acuity region. Google said that Conformal Rendering is more efficient than traditional foveated rendering because it requires fewer rendered pixels than other techniques. The smooth visual fall-off also eliminates the possibility of perceiving the transition line between the two acuity regions.

Google said that Conformal Rendering offers additional performance benefits over Phase-Aligned Rendering because the Conformal Rendering process requires one rasterization pass, whereas Phase-Aligned Rendering requires two passes. The downside of Conformal Rendering is that it does not reduce the flicker effect as Phase-Aligned Rendering does.

New Foveation Pipeline Process Order

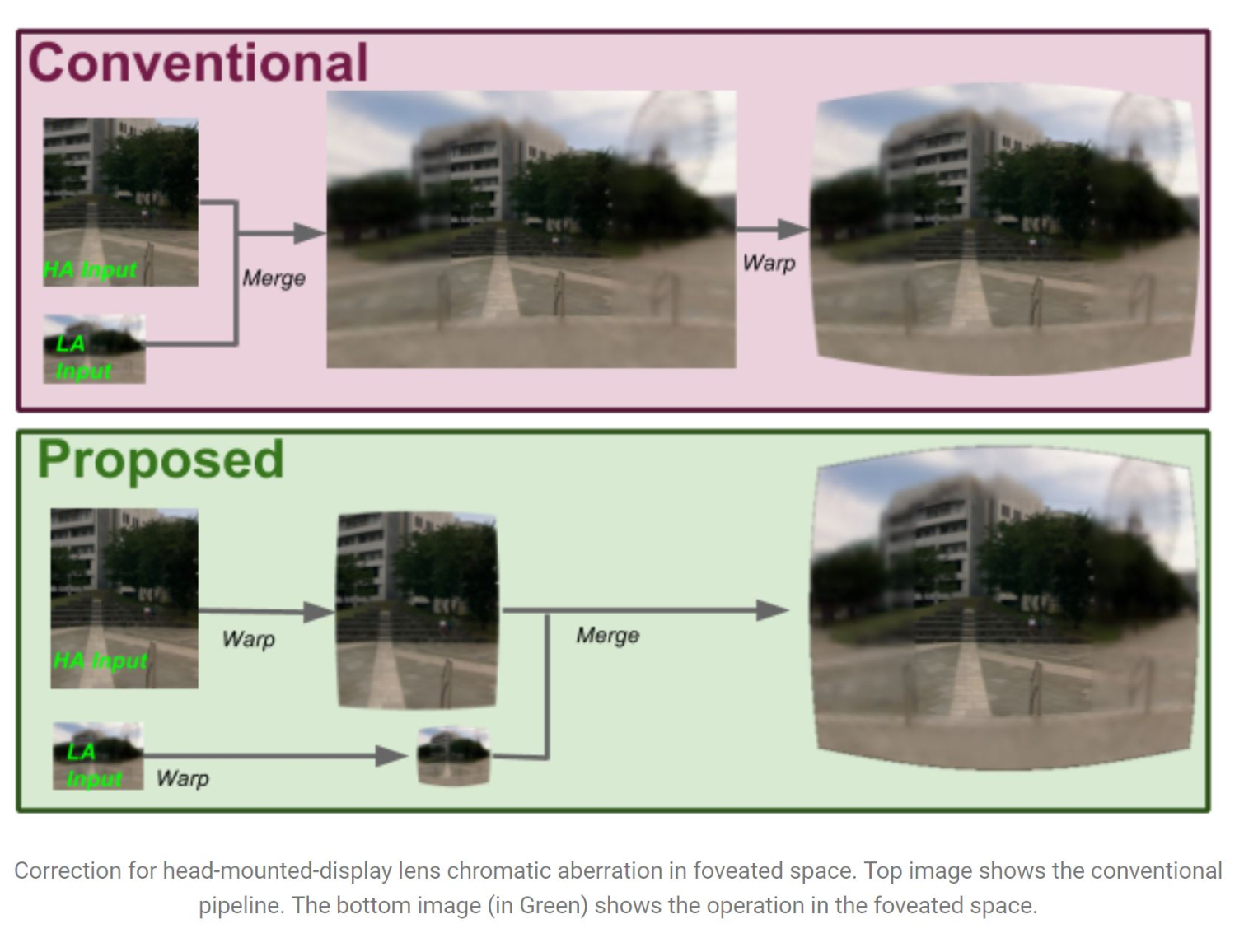

Google also proposed a new foveation pipeline order, which could help improve rendering performance in addition to the new foveated rendering techniques. With the current foveation pipeline, the graphics card renders the high-acuity image of the focus point alongside the low-acuity image of the entire scene. The low-acuity image is then upsampled to match the output resolution and combined with the high-acuity focus point. The full-size image then goes through a warping process to shape the image for output to an HMD.

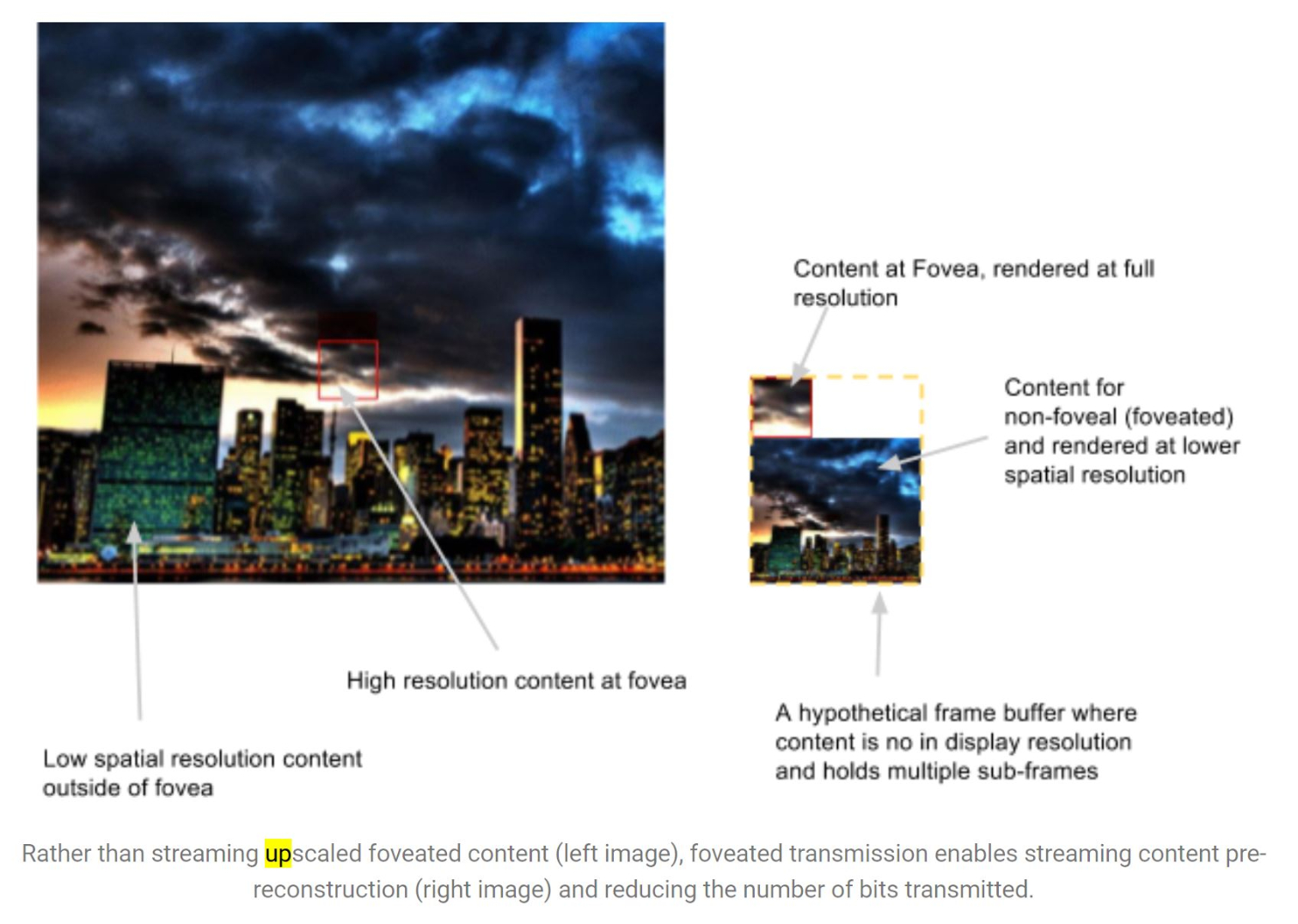

Google’s proposed pipeline order would warp the images before upsampling and merging them, which would dramatically reduce the number of pixels that must be warped.
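A rough way to see why the order matters is to count the pixels that pass through the warp stage in each pipeline. The sketch below uses made-up layer sizes (a 360x400 high-acuity inset and a 360x400 low-acuity layer on a 1440x1600 display), and `pixels_warped` is a hypothetical helper for illustration only.

```python
def pixels_warped(display_px, high_px, low_px, warp_first):
    """Pixels processed by the lens-distortion warp stage.

    warp_first=False: current order (upsample and merge, then warp the
                      full display-resolution image)
    warp_first=True:  proposed order (warp the two small layers, then
                      upsample and merge)
    """
    return high_px + low_px if warp_first else display_px


display_px = 1440 * 1600  # assumed output resolution per eye
high_px = 360 * 400       # assumed high-acuity inset size
low_px = 360 * 400        # assumed low-acuity layer size

current = pixels_warped(display_px, high_px, low_px, warp_first=False)
proposed = pixels_warped(display_px, high_px, low_px, warp_first=True)
print(current, proposed, current // proposed)  # 2304000 288000 8
```

With these assumed sizes, the warp stage touches eight times fewer pixels when it runs before upsampling. The actual ratio depends on how small the two layers are relative to the display.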

Google also proposed a process called Foveated Transmission, which applies the separation of the high-acuity image and the low-acuity image to the data transmission process between the display module and the system-on-chip of a mobile device or standalone HMD. With Foveated Transmission, the SoC would transmit the separated, low-resolution images, and the display side of the foveation pipeline would handle the upscaling and image blending processes.
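The bandwidth argument can be sketched with back-of-the-envelope arithmetic. The figures below (24 bits per pixel, 90 Hz refresh, and the layer sizes) are assumptions for illustration and ignore blanking intervals, compression, and protocol overhead. `link_bits_per_second` is a hypothetical helper.

```python
def link_bits_per_second(pixels_per_frame, bits_per_pixel=24, refresh_hz=90):
    """Raw, uncompressed pixel data rate over the SoC-to-display link."""
    return pixels_per_frame * bits_per_pixel * refresh_hz


# Conventional transmission: the merged, full-resolution frame crosses the link.
full_rate = link_bits_per_second(1440 * 1600)
# Foveated transmission: only the two small layers cross the link.
foveated_rate = link_bits_per_second(360 * 400 + 360 * 400)

print(full_rate / 1e9, foveated_rate / 1e9)  # Gbit/s per eye
```

Under these assumptions the link carries about 0.62 Gbit/s per eye instead of roughly 4.98 Gbit/s, at the cost of moving the upscaling and blending work to the display side.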

These Developments Are For The Future, Not For Today

The foveated rendering techniques proposed above could vastly improve the performance of VR experiences on mobile hardware, and they could also be applied to desktop-level VR hardware. But for now, they will remain research experiments. Without headsets that support eye tracking, foveated rendering remains a promising technology that is “just on the horizon.” It is possible to use foveated rendering without eye-tracking hardware, but it is widely accepted that eye tracking is necessary to make the process efficient and viable.

We may not see the fruits of Google Research’s labor for a while yet, but it’s good to see that the company is dedicating resources for the long haul. It’s only a matter of time before we see HMDs with eye-tracking technology built into them, and it would be better if we could take advantage of that technology as it lands, instead of waiting for software to catch up with the hardware.

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.