Nvidia Announces OptiX 5.0 With AI-Based Denoising

At SIGGRAPH 2017, Nvidia announced OptiX 5.0, the latest revision of its GPU-based ray-tracing API.

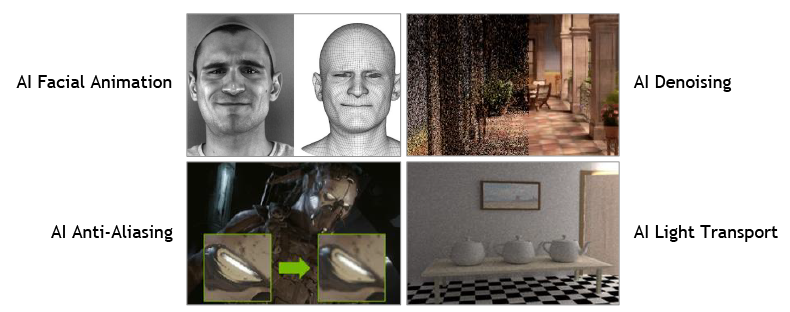

Nvidia has been working to bring its AI and machine learning research to graphics. It has researched how to use AI for facial animation, anti-aliasing, denoising, and light transport, for example, and with OptiX 5.0 it has focused on AI denoising.

When using most models for global illumination, it takes dozens, hundreds, or even thousands of light rays per pixel to produce a clean image. The fewer rays per pixel the image uses, the noisier or grainier it looks, especially in still images. In animation, motion blur often helps hide this, especially since the viewer doesn't get the chance to inspect each frame, but even then an image will look better with more samples.
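To make the link between ray count and grain concrete, here is a minimal sketch. It is not OptiX code and not a real renderer: it assumes a toy scene in which every ray either finds the light (brightness 1.0) or misses it (brightness 0.0), and the names `render_pixel` and `image_noise` are made up for illustration. It measures how much identical pixels disagree with each other at different sample counts.

```python
import random
import statistics


def render_pixel(samples_per_pixel, rng):
    """Estimate one pixel's brightness by averaging random ray samples.

    Toy assumption: each ray returns 1.0 with probability 0.25 and 0.0
    otherwise, so the true brightness of every pixel is 0.25.
    """
    hits = sum(1.0 for _ in range(samples_per_pixel) if rng.random() < 0.25)
    return hits / samples_per_pixel


def image_noise(samples_per_pixel, pixels=2000, seed=1):
    """Standard deviation across pixels that should all look identical.

    A larger value means a grainier image.
    """
    rng = random.Random(seed)
    values = [render_pixel(samples_per_pixel, rng) for _ in range(pixels)]
    return statistics.pstdev(values)


if __name__ == "__main__":
    for spp in (1, 16, 256):
        print(f"{spp:4d} samples per pixel -> noise {image_noise(spp):.3f}")
```

In this toy model the grain shrinks roughly with the square root of the sample count, so halving the noise costs about four times as many rays. That cost is why a denoiser that works from very few samples is attractive.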

Various techniques can be used to scatter the ray samples (Monte Carlo sampling, for example) and interpolate the samples you have, but these can result in less-than-optimal effects of their own, such as flickering or a subtle blurred look. You can see these blobby, smeared samples in many games that use some type of in-engine global illumination.

Nvidia trained the denoiser on tens of thousands of image pairs. Each pair consisted of a render with one sample per pixel and a companion image of the same render with 4,000 rays per pixel. From these pairs, the AI learned to predict what a denoised image looks like, which lets it produce an image that appears to have had a very large number of samples without the accompanying render time.
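The idea of learning from noisy/reference pairs can be sketched at a very small scale. The following is an illustration only, not Nvidia's network or training procedure: it assumes one-dimensional "images" (rows of pixels showing a smooth gradient), a toy renderer where each sample is 0 or 1, and a "denoiser" with a single learned weight that blends each noisy pixel with the average of its neighbors. All function names are hypothetical.

```python
import random


def render_row(true_row, spp, rng):
    """Toy render: each sample is 1.0 with probability equal to the true brightness."""
    return [sum(1.0 for _ in range(spp) if rng.random() < p) / spp for p in true_row]


def local_mean(row, i, radius=3):
    """Average of the pixels within `radius` of position i (clamped at the edges)."""
    lo, hi = max(0, i - radius), min(len(row), i + radius + 1)
    return sum(row[lo:hi]) / (hi - lo)


def make_pair(rng, width=32, reference_spp=4000):
    """Return (1-sample noisy row, high-sample reference row) of a random gradient."""
    start, end = rng.random(), rng.random()
    true_row = [start + (end - start) * i / (width - 1) for i in range(width)]
    return render_row(true_row, 1, rng), render_row(true_row, reference_spp, rng)


def train(pairs):
    """Fit w in: denoised = w * noisy + (1 - w) * local_mean, by least squares."""
    num = den = 0.0
    for noisy, ref in pairs:
        for i in range(len(noisy)):
            m = local_mean(noisy, i)
            num += (ref[i] - m) * (noisy[i] - m)
            den += (noisy[i] - m) ** 2
    return num / den if den else 0.0


def denoise(noisy, w):
    """Apply the learned blend to a noisy row."""
    return [w * noisy[i] + (1 - w) * local_mean(noisy, i) for i in range(len(noisy))]


def mse(a, b):
    """Mean squared error between two rows."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    rng = random.Random(0)
    w = train([make_pair(rng) for _ in range(20)])
    noisy, ref = make_pair(rng)
    print("error before:", mse(noisy, ref), "after:", mse(denoise(noisy, w), ref))
```

A real denoiser replaces the single weight with a neural network that has many parameters and can preserve edges and texture, but the training signal is the same kind of pairing the article describes: a cheap noisy render as input and an expensive clean render as the target.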

The OptiX 5.0 framework also includes provisions for GPU-accelerated motion blur, presumably using some technique other than simply rendering a frame multiple times and blurring them together.

Though Nvidia had no specific announcement regarding when some of its other research may become available to consumers or professionals, it did say OptiX 5.0 will be available in November.


Lucky_SLS presumably this would be released only for the quadro cards? poor us who use our laptops with nvidia gpu for after effects and vegas pro :(

Draven35 This is the SDK that Octane, Redshift, Maxwell and Vray use for their GPU rendering, so you will be able to get it from multiple sources.

bit_user "The OptiX 5.0 framework also includes provisions for GPU-accelerated motion blur, presumably using some technique other than simply rendering a frame multiple times and blurring them together."

The standard way to do motion blur in global-illumination ray tracers is to use stochastic sampling in both space and time. Even with AI denoising, you'll need more than one ray per pixel for this.

Draven35 GPU-accelerated motion blur is also added in OptiX 5.

I wasn't making an attempt to explain everything about how 3D rendering works.