FreeSync: AMD's Approach To Variable Refresh Rates

AMD's FreeSync technology is gaining momentum, but is the buzz warranted? We did our own research, worked with Acer and spoke with AMD to find out.

Introduction

Human ingenuity works in incredible but mysterious ways. We somehow managed to put a man on the moon (1969) before realizing that adding wheels to luggage was a good idea (Sadow's patent, 1970). In similar (although maybe not as spectacular) fashion, it took more than a decade after the introduction of PC LCD displays for people to realize that there really was no reason for them to operate using a fixed refresh rate. This first page is dedicated to answering why fixed refresh rates on LCDs are even a thing. First, we need to explain how contemporary video signaling works. Feel free to skip ahead if you're not interested in a bit of PC history.

Back in the '80s, cathode ray tubes (CRTs) used in TVs needed a fixed refresh rate because they physically had to sweep an electron beam pixel by pixel, then line by line and, once the beam reached the end of the screen, re-position it at the beginning. Varying the refresh rate on the fly was impractical, at best. All of the supporting technology standards that emerged in the '80s, '90s and early '00s revolved around that necessity.

Note: For reference, the new Maxwell-class Nvidia GTX 980s support a pixel clock of up to 1045MHz (not to be confused with core or memory frequencies), allowing for a theoretical max resolution or refresh for each connector of 5120x3200 at 60Hz. We could not confirm the maximum pixel clock of AMD's Fury X, but we would expect it to be similar and, in both cases, it's likely more than you'll need for several years to come.
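As a rough sanity check on the figures in the note, the short Python sketch below compares the visible pixel rate of 5120x3200 at 60Hz against the quoted 1045MHz limit. It deliberately ignores blanking, so it gives only a lower bound on the pixel clock the mode needs; the function and constant names are ours, chosen for illustration.

```python
# Back-of-the-envelope check, not a CVT calculation: blanking is ignored,
# so this is only the minimum pixel clock a mode could possibly need.

def active_pixel_rate_mhz(width: int, height: int, refresh_hz: float) -> float:
    """Visible pixels drawn per second, in millions (i.e. MHz)."""
    return width * height * refresh_hz / 1e6

# Maximum pixel clock quoted in the note for the GTX 980.
MAX_PIXEL_CLOCK_MHZ = 1045.0

needed = active_pixel_rate_mhz(5120, 3200, 60)
headroom = MAX_PIXEL_CLOCK_MHZ / needed - 1.0

print(f"active pixel rate: {needed:.2f} MHz")           # 983.04 MHz
print(f"headroom left for blanking: {headroom:.1%}")     # about 6.3%
```

The visible pixels alone use roughly 983MHz of the 1045MHz budget, which leaves only a few percent for blanking. That is consistent with the quoted mode depending on one of the reduced-blanking timing variants.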

The most notable standard involved in controlling signaling from graphics processing units (GPUs) to displays is VESA's Coordinated Video Timings ("CVT," and also its "Reduced Blanking" cousins, "CVT-R" and "CVT-R2"), which, in 2002-2003, displaced the analog-oriented Generalized Timing Formula that had been the standard since 1999. CVT became the de facto signaling standard for both the older DVI and newer DisplayPort interfaces.

Like its predecessor, Generalized Timing Formula ("GTF"), CVT operates on a fixed "pixel clock" basis. The signal includes horizontal blanking and vertical blanking intervals, and horizontal frequency and vertical frequency. The pixel clock itself (which, together with some other factors, determines the interface bandwidth) is negotiated once and cannot easily be varied on the fly. It can be changed, though that typically makes the GPU and display go out of sync. Think about when you change your display's resolution in your OS, or if you've ever tried EVGA's "pixel clock overclocker."
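To make the relationship between these quantities concrete, here is a minimal Python sketch. The `Timing` class and its field names are hypothetical, and the sample numbers are the common 1080p60 timing (a CEA-861 mode rather than a CVT-generated one, used here only because its figures are round). The last two lines are pure arithmetic and are not a description of how any vendor implements variable refresh.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Timing:
    """Hypothetical container for one fixed video timing."""
    pixel_clock_hz: float
    h_active: int   # visible pixels per line
    h_blank: int    # horizontal blanking, in pixel periods
    v_active: int   # visible lines per frame
    v_blank: int    # vertical blanking, in lines

    @property
    def h_total(self) -> int:
        return self.h_active + self.h_blank

    @property
    def v_total(self) -> int:
        return self.v_active + self.v_blank

    @property
    def h_freq_hz(self) -> float:
        # Lines per second: pixel clock divided by pixel periods per line.
        return self.pixel_clock_hz / self.h_total

    @property
    def v_freq_hz(self) -> float:
        # Frames per second (the refresh rate): lines per second over lines per frame.
        return self.h_freq_hz / self.v_total


# 1920x1080 at 60Hz: 2200 x 1125 total at a 148.5MHz pixel clock.
mode = Timing(148_500_000, 1920, 280, 1080, 45)
print(mode.h_freq_hz, mode.v_freq_hz)   # 67500.0 60.0

# With the pixel clock held fixed, the refresh rate only changes if the
# totals change; a longer vertical blanking interval lowers it.
stretched = replace(mode, v_blank=270)
print(stretched.v_freq_hz)              # 50.0
```

Once the pixel clock and the blanking intervals are agreed on, the horizontal and vertical frequencies follow directly, which is why a fixed timing implies a fixed refresh rate.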

Now, in the case of DisplayPort, the video stream attributes (together with other information used to regenerate the clock between the GPU and display) are sent as so-called "main stream attributes" every VBlank, that is, during each interval between frames.

LCDs were built around this technology ecosystem, and thus naturally adopted many related approaches: fixed refresh rates, pixel-by-pixel and line-by-line refreshing of the screen (as opposed to a single-pass global refresh) and so on. Also, for simplicity, LCDs historically had a fixed backlight to control brightness.

Fixed refresh rates offered other benefits for LCDs that have only more recently started to be exploited. Because the timing between each frame is known in advance, so-called overdrive techniques can be implemented easily, thus reducing the effective response time of the display (minimizing ghosting). Furthermore, LCD backlights could be strobed rather than set to always-on, resulting in reduced pixel persistence at a set level of brightness. Both technologies are known by various vendor-specific terms, but "pixel transition overdrive" and "LCD backlight strobing" can be considered the generic versions.

Why Are Fixed Display Refresh Rates An Issue?

GPUs inherently render frames at variable rates. Historically, LCDs have refreshed at a fixed rate. So, until recently, only two options were available to frustrated PC gamers:

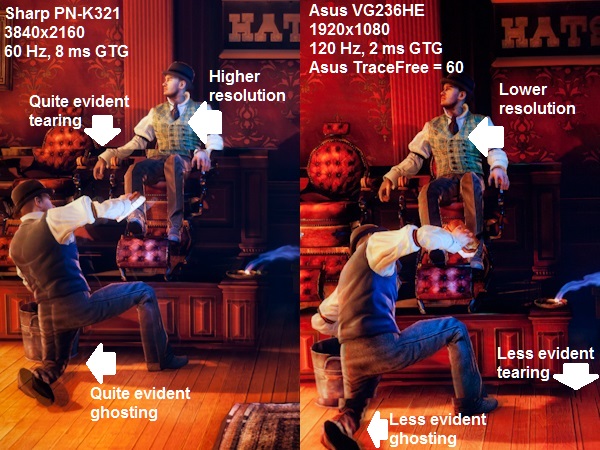

- Sync the GPU rate to the LCD rate and duplicate frames when necessary (the "v-sync on" option), which results in stuttering and lag.

- Do not sync the GPU rate to the LCD rate, and send updated frames mid-refresh (the "v-sync off" option), which results in screen tearing.

Without G-Sync or FreeSync, there simply was no solution to the above trade-off, and gamers were forced to choose between the two.
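The trade-off can be illustrated with a toy model in Python. It assumes a 60Hz display, simple double buffering (the GPU waits for the swap before starting the next frame when v-sync is on) and made-up render times; the function names are ours, and blanking intervals are ignored. It is a simplification for intuition, not a model of any particular driver.

```python
import math

REFRESH_HZ = 60.0
PERIOD_MS = 1000.0 / REFRESH_HZ   # about 16.7 ms between refreshes


def vsync_on_ticks(render_ms):
    """Refresh tick at which each frame first appears with v-sync on.

    A finished frame waits for the next refresh, and the GPU only starts
    the following frame after that swap (double buffering).
    """
    t = 0.0
    ticks = []
    for r in render_ms:
        finished = t + r
        tick = math.ceil(finished / PERIOD_MS)   # wait for the next refresh
        ticks.append(tick)
        t = tick * PERIOD_MS                      # GPU resumes after the swap
    return ticks


def vsync_off_tear_positions(render_ms):
    """Fraction of the way through the scan-out where each swap lands.

    The GPU renders back to back and swaps the moment a frame is done,
    so the display switches frames partway down the screen.
    """
    t = 0.0
    positions = []
    for r in render_ms:
        t += r
        positions.append((t % PERIOD_MS) / PERIOD_MS)
    return positions


render_ms = [10, 25, 12, 30, 14]   # hypothetical per-frame render times

ticks = vsync_on_ticks(render_ms)
holds = [b - a for a, b in zip(ticks, ticks[1:])]
print("v-sync on, first shown at tick:", ticks)   # [1, 3, 4, 6, 7]
print("refreshes each frame is held:", holds)     # [2, 1, 2, 1]

tears = vsync_off_tear_positions(render_ms)
print("v-sync off, tear positions:", [round(p, 2) for p in tears])
```

With v-sync on, frames are held for an uneven number of refreshes (the duplicated frames show up as stutter, and the wait for the next refresh adds lag). With v-sync off, every swap lands somewhere in the middle of a scan-out, which is where the tear line appears.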

DbD2: Imo freesync has 2 advantages over gsync:

1) price. No additional hardware required makes it relatively cheap. Gsync does cost substantially more.

2) ease of implementation. It is very easy for a monitor maker to do the basics and slap a freesync sticker on a monitor. Gsync is obviously harder to add.

However it also has 2 major disadvantages:

1) Quality. There is no required level of quality for freesync other than it can do some variable refresh. No min/max range, no anti-ghosting. No guarantees of behaviour outside of variable refresh range. It's very much buyer beware - most freesync displays have problems. This is very different to gsync which has a requirement for a high level of quality - you can buy any gsync display and know it will work well.

2) Market share. There are a lot fewer freesync enabled machines out there than gsync. Not only does nvidia have most of the market but most nvidia graphics cards support gsync. Only a few of the newest radeon cards support freesync, and sales of those cards have been weak. In addition the high end where you are most likely to get people spending extra on fancy monitors is dominated by nvidia, as is the whole gaming laptop market. Basically there are too few potential sales for freesync for it to really take off, unless nvidia or perhaps Intel decide to support it.

InvalidError: It sounds hilarious to me how some companies and representatives refuse to disclose certain details "for competitive reasons" when said details are either part of a standard that anyone interested in for whatever reason can get a copy of if they are willing to pay the ticket price, or can easily be determined by simply popping the cover on the physical product.

xenol: I still think the concept of V-Sync must die because there's no real reason for it to exist any more. There are no displays that require precise timing that need V-Syncing to begin with. The only timing that should exist is the limit of the display itself to transition to another pixel.

InvalidError said: "It sounds hilarious to me how some companies and representatives refuse to disclose certain details "for competitive reasons" when said details are either part of a standard that anyone interested in for whatever reason can get a copy of if they are willing to pay the ticket price, or can easily be determined by simply popping the cover on the physical product."

Especially if it's supposedly an "open" standard.

nukemaster:

InvalidError said: "It sounds hilarious to me how some companies and representatives refuse to disclose certain details "for competitive reasons" when said details are either part of a standard that anyone interested in for whatever reason can get a copy of if they are willing to pay the ticket price, or can easily be determined by simply popping the cover on the physical product."

It was kind of the highlight of the article.

(Ed.: Next time, I'll make a mental note to open up the display and look before sending it back. Unfortunately, the display had been shipped back at the time we received this answer.)

It is a shame that AMD is not pushing for some more standardization on these freesync enabled displays. A competitor to ULMB would also be nice to see for games that already have steady frame rates.

jkhoward: Of course you think NVIDIA's solution will win. You always do. This forum is becoming more and more biased.

InvalidError:

xenol said: "I still think the concept of V-Sync must die because there's no real reason for it to exist any more."

As stated in the article, modern LCDs still require some timing guarantees to drive pixels since the panel parameters to switch pixels from one brightness to another change depending on the time between refreshes. If you refresh the display at completely random intervals, you get random color errors due to fade, over-drive, under-drive, etc.

While LCDs may not technically require vsync in the traditional CRT sense where it was directly related to an internal electromagnetic process, they still have operational limits on how quickly, slowly or regularly they need to be refreshed to produce predictable results.

It would have been more technically correct to call those limits min/max frame times instead of variable refresh rates but at the end of the day, the relationship between the two is simply f=1/t, which makes them effectively interchangeable. Explaining things in terms of refresh rates is simply more intuitive for gamers since it is almost directly comparable to frames per second.

Freesync will clearly win, as a $200 price difference isn't trivial for most of us.

Even if my card was nVidia, I'd get a freesync monitor. I'd rather have the money and not have the variable refresh rate technology.

dwatterworth: A suggestion for the high end frame rate issue with FreeSync: turn on FRTC and set its maximum rate to the top end of the monitor's sync range.

TechyInAZ: Very interesting read. I never knew that variable refresh rates had effects on light strobing.

I wonder how adaptive sync and G sync will work when the new OLED monitors start hitting the market?