Intel's Knights Corner: 50+ Core 22nm Co-processor

1 Teraflop. 1 Chip. Many integrated cores.

Today's desktop CPUs have core counts we can tally on our fingers. Intel, however, has just presented at SC'11 the first silicon of its "Knights Corner" co-processor, which is capable of delivering more than 1 TFLOPS of double-precision floating-point performance.

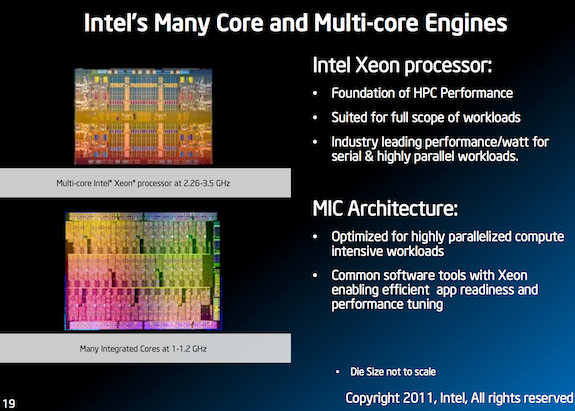

The power of Intel's MIC (Many Integrated Core) architecture won't be used to play Crysis. Instead, it will be put toward highly parallel applications such as weather modelling, tomography, protein folding and advanced materials simulation.

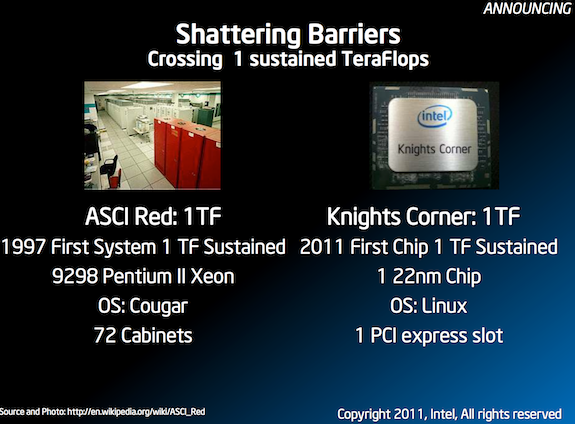

"Intel first demonstrated a Teraflop supercomputer utilizing 9,680 Intel Pentium Pro Processors in 1997 as part of Sandia Lab's 'ASCI RED' system," said Rajeeb Hazra, general manager of Technical Computing, Intel Datacenter and Connected Systems Group. "Having this performance now in a single chip based on Intel MIC architecture is a milestone that will once again be etched into HPC history."

Knights Corner, the first commercial Intel MIC architecture product, will be manufactured using Intel’s latest 3-D Tri-Gate 22nm transistor process and will feature more than 50 cores. Furthermore, Intel promises compatibility with the existing x86 programming model and tools.
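To put the quoted figures in perspective, here is a rough back-of-the-envelope sketch. It assumes exactly 1 TFLOPS and exactly 50 cores, although Intel only says "more than" for both, and it ignores that the 1997 and 2011 figures may not have been measured the same way. The function and variable names are purely illustrative.

```python
# Rough arithmetic on the figures quoted in the article.
# Assumptions: exactly 1 TFLOPS and exactly 50 cores ("more than" in both cases).
TERAFLOP = 1e12               # floating-point operations per second
ASCI_RED_PROCESSORS = 9_680   # Pentium Pro count cited by Hazra
KNIGHTS_CORNER_CORES = 50     # lower bound; exact core count not stated


def flops_per_unit(total_flops: float, units: int) -> float:
    """Evenly divide an aggregate throughput across identical units."""
    return total_flops / units


per_pentium_pro = flops_per_unit(TERAFLOP, ASCI_RED_PROCESSORS)
per_knc_core = flops_per_unit(TERAFLOP, KNIGHTS_CORNER_CORES)

print(f"Per Pentium Pro (1997): {per_pentium_pro / 1e6:.1f} MFLOPS")
print(f"Per Knights Corner core: {per_knc_core / 1e9:.1f} GFLOPS")
print(f"Per-unit ratio: about {per_knc_core / per_pentium_pro:.0f}x")
```

Under these assumptions, each Knights Corner core does the work of roughly two hundred of the 1997 processors.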

Hazra boasted that the Knights Corner co-processor is unlike traditional accelerators in that "it is fully accessible and programmable like fully functional HPC compute node, visible to applications as though it was a computer that runs its own Linux-based operating system independent of the host OS."

Intel says that its MIC architecture benefits from the ability to run existing applications without the need to port the code to a new programming environment. Intel believes this will allow scientists to use both CPU and co-processor performance simultaneously with existing x86-based applications, without needing to rewrite them in alternative proprietary languages.


oparadoxical_ Makes me wonder just what we will have in ten years from now... Especially for personal computers.

Camikazi gmcizzle wrote: "Lol wow 50 cores. Guess that makes AMD's 16-core reveal another flop." It's a Co-Processor, an accelerator, not a main CPU; they are not the same thing.

darthvidor I wonder when will "Co" get separated from the Processor and get into motherboard sockets other than pci-express cards.

Stardude82 What about GPU processing? Isn't that what CUDA is for? After all, my 460 GTX has 336 cores.

dragonsqrrl gmcizzle wrote: "Lol wow 50 cores. Guess that makes AMD's 16-core reveal another flop." Not an absolute flop (it does provide a good price/performance ratio) but not good either. There's just no getting around the inherent flaws in the current revision of the Bulldozer architecture, even in the highly parallel workloads found in the server/workstation market:

http://www.anandtech.com/show/5058/amds-opteron-interlagos-6200

And Knights Corner isn't serving the same market as Interlagos, so they're not really directly comparable.

LuckyDucky7 Hmm.

You know what this is?

I think this is Intel's answer to ARM's server bids.

Think about it.

50+ cores at 1.2 GHz? That sounds a lot like what ARM will be promising in the near future.

Except that everyone who wants to go the low-power route needs to re-write their programs for the ARM instruction set. With this they don't have to. The tools for Xeon optimization are also the same.

So you can have a powerful 4/6/8/10-core Xeon processor (that you probably already own), and bolting this on, combined with Intel's advancements in power consumption (Sandy Bridge is already very good on idle battery life in notebooks), should make a changeover to ARM technology a hard sell.

sinfulpotato This isn't a CPU, it is more like the function of a GPU used in parallel processing.

upgrade_1977 So, "it won't be used to play Crysis", what about Battlefield 4? Patiently waiting.....