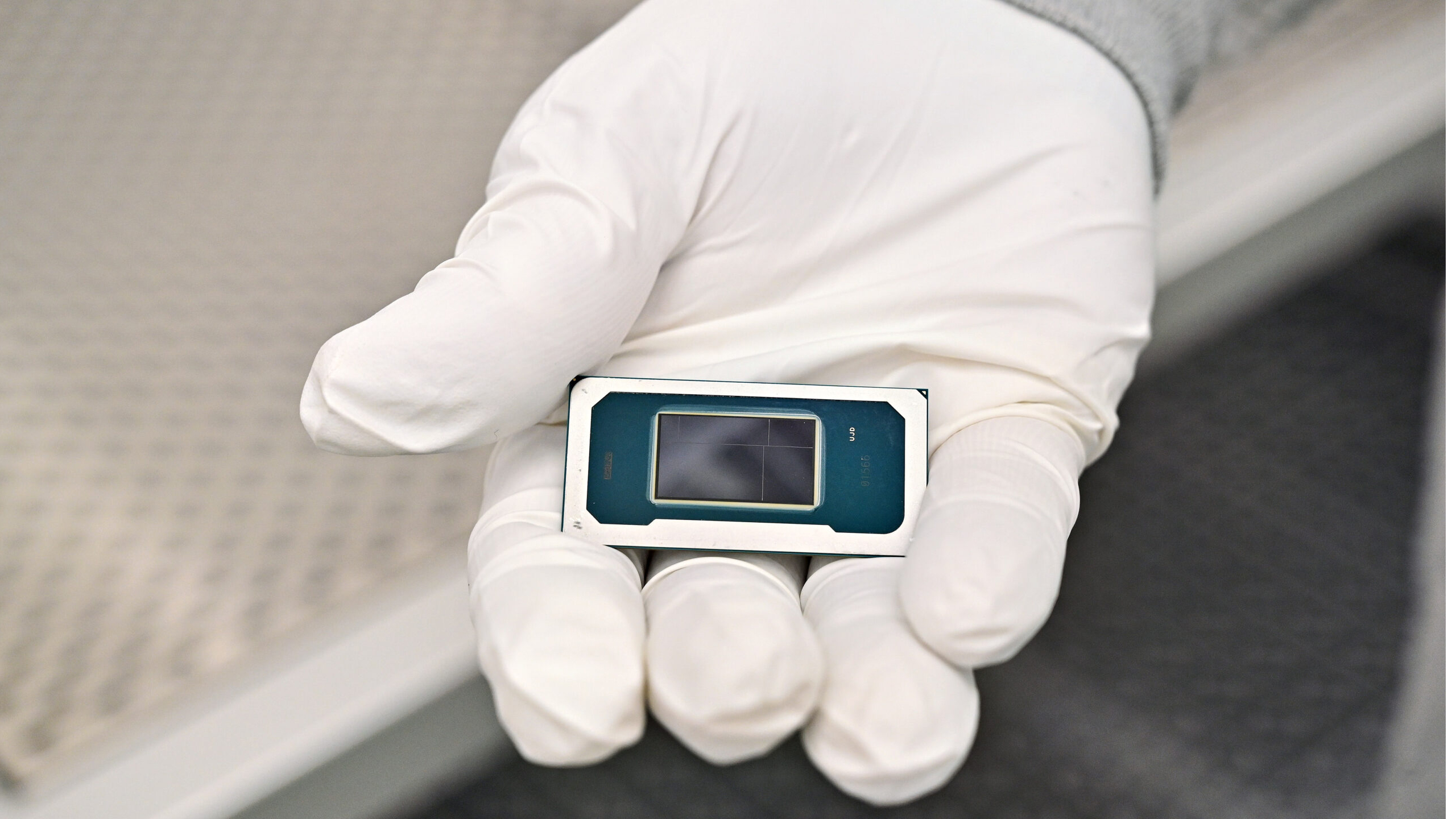

Intel preps CPUs with 'Unified Core' architecture — job listing hints at evolution beyond Intel's hybrid design

Senior CPU verification engineer needed for Intel's Unified Cores products.

Intel is working on processors featuring so-called 'Unified Cores,' and, based on a new job listing that the company posted on LinkedIn, these CPUs are at least three to four years away, possibly more. The company is looking for a senior CPU verification engineer who will verify the silicon design of processors featuring Unified Cores while working with architects and RTL designers, which gives us an idea of what stage the project is at.

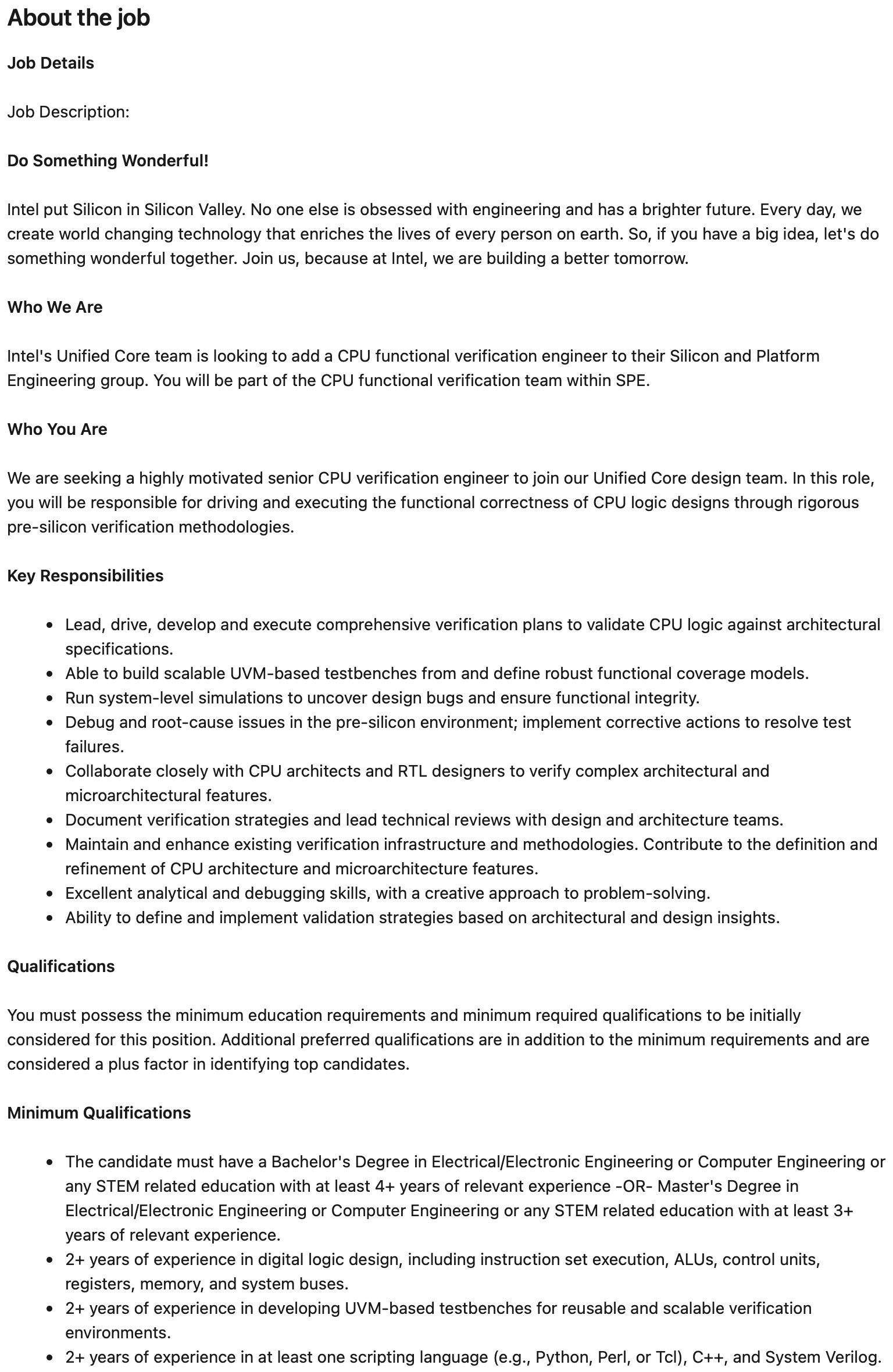

"Intel's Unified Core team is looking to add a CPU functional verification engineer to their Silicon and Platform Engineering group," a statement at LinkedIn reads. "You will be part of the CPU functional verification team within SPE."

Intel is looking for "a highly motivated senior CPU verification engineer" for its Unified Core design team, who will be responsible for ensuring the functional correctness of CPU logic designs using rigorous pre-silicon verification procedures. The verification engineer's responsibilities will also include close collaboration with CPU architects and RTL designers to "verify complex architectural and microarchitectural features."

Among the preferred qualifications, Intel lists knowledge of and experience with x86 (which indicates that this is an x86 project), experience with Synopsys simulators, and assembly skills.

In very active development

Traditionally, verification engineers began their work right after the microarchitecture design was completed and before register transfer level (RTL) implementation started. However, given how complex modern processors are, functional verification of CPU logic blocks is no longer an isolated stage that happens after the architecture is finished and before RTL begins. In modern processor development, verification is tightly linked with both architectural definition and RTL implementation. By the time RTL is written, verification engineers are already hard at work building models, defining coverage, and stress-testing the assumptions embedded in the specification.

In practice, verification does not wait for RTL to be 'complete': it ramps up as soon as the design intent is stable enough to be encoded into checks and coverage targets. Once RTL blocks start coming in, verification proceeds alongside implementation. Engineers run block-level simulations, use constrained-random testing, and perform system-level integration testing to continuously check that the RTL behaves exactly as defined by the architectural and microarchitectural specifications, which is why verification engineers must collaborate with both architects and RTL designers.
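To illustrate what constrained-random testing against a reference model looks like, here is a deliberately tiny Python sketch. Real CPU verification is done in hardware verification languages on simulators such as the Synopsys tools the listing mentions, against vastly more complex designs; the 8-bit saturating adder, the function names, and the 30% bias toward the overflow boundary are all hypothetical choices made for illustration.

```python
import random

def spec_sat_add8(a: int, b: int) -> int:
    """Reference model written from the 'spec': 8-bit unsigned add, saturating at 255."""
    return min(a + b, 255)

def dut_sat_add8(a: int, b: int) -> int:
    """Hypothetical stand-in for the RTL under test: a bit-level implementation."""
    s = (a + b) & 0x1FF              # 9-bit sum; bit 8 is the carry-out
    return 0xFF if s & 0x100 else s  # on carry-out, clamp to 255

def buggy_sat_add8(a: int, b: int) -> int:
    """A deliberately broken design that wraps around instead of saturating."""
    return (a + b) & 0xFF

def random_operands(rng: random.Random) -> tuple[int, int]:
    """Constrained-random stimulus: about 30% of tests are steered toward overflow."""
    if rng.random() < 0.3:
        a = rng.randint(200, 255)
        return a, rng.randint(255 - a, 255)  # guarantees a + b >= 255
    return rng.randint(0, 255), rng.randint(0, 255)

def coverage_bin(a: int, b: int) -> str:
    """Functional coverage: which corner of the spec did this test exercise?"""
    total = a + b
    if total < 255:
        return "no_overflow"
    return "exact_255" if total == 255 else "saturated"

def run(dut=dut_sat_add8, n_tests: int = 10_000, seed: int = 1):
    """Drive random stimulus, compare the DUT to the reference model, count coverage."""
    rng = random.Random(seed)
    coverage = {"no_overflow": 0, "exact_255": 0, "saturated": 0}
    mismatches = []
    for _ in range(n_tests):
        a, b = random_operands(rng)
        expected, actual = spec_sat_add8(a, b), dut(a, b)
        if expected != actual:
            mismatches.append((a, b, expected, actual))
        coverage[coverage_bin(a, b)] += 1
    return mismatches, coverage

if __name__ == "__main__":
    bad, cov = run()
    print(len(bad), cov)                     # a correct DUT produces no mismatches
    print(len(run(buggy_sat_add8)[0]) > 0)   # the checker catches the wrapping bug
```

Swapping in `buggy_sat_add8` as the design under test makes the checker report mismatches, while the coverage counts show whether the random stimulus actually reached the corner cases (here, the exact-255 and saturating sums) rather than just the easy middle of the input space.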

In any case, functional correctness is a continuous discipline that spans architecture modeling, RTL development, integration, and ultimately post-silicon validation, not a narrow checkpoint between two phases. Nonetheless, the fact that the engineer will have to work with both architects and RTL designers suggests that the architecture is not fully frozen and the RTL is still evolving, so the whole project is somewhere in the middle of its development cycle.

In a mature program approaching tape-out, verification engineers mostly interact with RTL designers to close coverage gaps and fix bugs, while architecture changes at that point are rare and extremely expensive (largely because they require new RTL along with new debugging and verification). By contrast, when verification is expected to work closely with architects, it suggests that microarchitectural features are still being refined, clarified, or adjusted as implementation progresses, which means the project is still relatively early in its development.


If our assumptions are correct and Intel is in the middle of the CPU development cycle, with RTL implementation not yet complete, then the company is at least 18 to 24 months away from tape-out. Once Intel tapes out the first silicon featuring Unified Cores, it will take another 18 to 24 months to reach mass production of the product. As a result, the most optimistic assumption is that Intel will have its first product featuring Unified Cores ready in 2029, whereas a more realistic estimate is 2030. Keep in mind that since Intel does not say clearly where the project stands, our estimate could be off by three to six months, depending on various factors.
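The timeline arithmetic above can be sketched in a few lines of Python. The starting point of January 2026 is an assumption made purely for illustration, since neither the listing's exact posting date nor the project's precise stage is public; the two 18-to-24-month ranges are the ones given above, and the three-to-six-month margin of error is not modeled.

```python
from datetime import date

def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, pinning the day to the 1st of the month."""
    total = d.year * 12 + (d.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)

def mass_production_window(start: date,
                           to_tapeout: tuple[int, int] = (18, 24),
                           tapeout_to_volume: tuple[int, int] = (18, 24)) -> tuple[date, date]:
    """Earliest and latest mass-production dates implied by the two month ranges."""
    earliest = add_months(start, to_tapeout[0] + tapeout_to_volume[0])
    latest = add_months(start, to_tapeout[1] + tapeout_to_volume[1])
    return earliest, latest

# Hypothetical starting point, assumed for illustration only.
start = date(2026, 1, 1)
earliest, latest = mass_production_window(start)
print(earliest, latest)  # 2029-01-01 2030-01-01
```

Shifting the assumed start date moves the whole window by the same amount, which is why an uncertain starting point translates directly into the margin of error mentioned above.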

What is Unified Core?

It goes without saying that we still have little information about the nature of the Unified Core architecture or microarchitecture. While we can make educated guesses about the stage of Intel's Unified Core project based on the job listing, we cannot do the same for the architecture itself.

The first leak about Intel's Unified Core emerged in mid-July 2025. At the time, @Silicon_Fly speculated that Intel's Titan Lake processors, due in 2028, would feature Unified Cores rather than separate high-performance and energy-efficient cores like today's Arrow Lake (Lion Cove P-cores and Skymont E-cores) and Panther Lake (Cougar Cove P-cores and Darkmont E-cores). Back then, the Unified Core was said to be an evolution of Intel's E-cores rather than its P-cores. To launch a product in late 2028, Intel would need to tape it out by mid-2026 at the latest, so hiring verification engineers now would be rather late. If, however, our estimate that the first Unified Core-based products will arrive in 2029 to 2030 is correct, then it is still too early to make assumptions about their architectural decisions.

Given the lack of information, Intel's Unified Core could be anything: an architecture containing many 'small' cores supporting the company's 'Software Defined Supercore' capability (this is our speculation, as the technology could possibly be 'attached' to a variety of microarchitectures); something like AMD's modern approach to building hybrid CPUs, where full-speed and compact Zen cores share the same microarchitecture but differ in performance and power consumption; AMD's Hammer-like 'unification'; or something completely different.

Given that Intel calls the project 'Unified Core,' the name probably carries significant meaning for the company, suggesting a core design that scales from entry-level client PC CPUs all the way to heavy-duty datacenter processors. How such scalability would be implemented remains an open question, as there are numerous architectural approaches beyond those outlined above.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

-

alan.campbell99

(Quoting the article's paragraph that begins "Given the lack of information, Intel's Unified Core could be anything...")

I've often wondered how Intel's P/E cores work in practice compared with AMD's approach in terms of coding, and whether Intel's hybrid CPUs involve a bit more work.

TerryLaze

alan.campbell99 said: I've often wondered how Intel's P/E core works in practice compared with AMD's approach, in terms of coding. Whether it's a bit more work involved for Intel's hybrid CPUs.

Nobody codes for a specific CPU anymore, not since last century...

They code for the instruction set architectures (ISAs) they have, which work like APIs such as DirectX: they code for/with SSE, AVX, AES, and so on.

It's then up to Intel to implement those APIs in a way that uses as much of the CPU as possible. For example, Intel is working on AVX10, which will just work as AVX for the coder but will share the workload across E- and P-cores; the coder will not have to worry about which cores can only do 256-bit or 512-bit vectors, as it will all be handled by the API/ISA.

Intel also made the task scheduler in Windows work better with hybrid CPUs.

https://www.intel.com/content/www/us/en/content-details/828965/intel-advanced-vector-extensions-10-2-intel-avx10-2-architecture-specification.html
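TerryLaze's point that developers target instruction set extensions rather than specific CPUs usually comes down to runtime feature detection plus dispatch. Below is a minimal Python sketch, assuming Linux on x86, where the kernel lists supported extensions on the `flags` line of `/proc/cpuinfo`; the kernel names it returns are hypothetical placeholders for SSE4.2, AVX2, and AVX-512 code paths. Because Intel exposes the same ISA on the P-cores and E-cores of its hybrid chips (which is why AVX-512 is not available on Alder Lake's hybrid parts), a check like this should give the same answer whichever core type it runs on.

```python
from pathlib import Path

def cpu_flags(cpuinfo_text: str) -> set[str]:
    """Collect ISA extension flags from Linux /proc/cpuinfo-style text (x86)."""
    flags: set[str] = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return flags

def pick_kernel(flags: set[str]) -> str:
    """Choose the widest code path the CPU reports (kernel names are hypothetical)."""
    if "avx512f" in flags:
        return "kernel_avx512"
    if "avx2" in flags:
        return "kernel_avx2"
    if "sse4_2" in flags:
        return "kernel_sse42"
    return "kernel_scalar"  # portable fallback

if __name__ == "__main__":
    path = Path("/proc/cpuinfo")
    if path.exists():
        print(pick_kernel(cpu_flags(path.read_text())))
```

Production code typically does this check once at startup (or lets a library do it) and then calls the chosen path, so the application itself never needs to know which CPU model it is running on.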

alan.campbell99

Ah, I see.

I'm not a coder; for some reason, Motorola microcontroller assembler is the only thing I ever managed to understand during my coursework a few decades ago. Struggling with Pascal put me off.

twin_savage

alan.campbell99 said: I've often wondered how Intel's P/E core works in practice compared with AMD's approach, in terms of coding. Whether it's a bit more work involved for Intel's hybrid CPUs.

Yes, it is. However, oftentimes the easiest way to deal with heterogeneous architectures is to have the runtime completely ignore the E-cores, because the extra thread-orchestration overhead they add drags down the P-cores' performance by more than the meager performance the E-cores contribute. This is coming from an HPC perspective.

TerryLaze

twin_savage said: Yes it is, however often times the easiest way to deal with the heterogeneous architectures is to have the runtime just completely ignore the e-cores because they drag down the performance of the p-cores with the extra overhead thread orchestration takes overcoming the meager performance they provide. This is coming from an HPC perspective.

The overhead is just issuing a thread, the exact same overhead you would have with any type of core, and the meager E-core performance is about 70% of a P-core's. Ignoring the E-cores will always leave you with a huge performance deficit.

twin_savage

TerryLaze said: The overhead is just issuing a thread, the exact same overhead you would have with any type of core, and the meager e-core performance is like 70% of an p-core. Ignoring the e-cores will always net you a huge deficit in performance.

This is not true. For example, when solving a system with FGMRES, even though the BLAS routines it calls are highly optimized with inline assembly, thread-dependent order-of-operation stalls are much more severe on heterogeneous architectures.

On a "reasonably" sized problem, including the E-cores in the calculation of a solution will net you roughly 25% slower performance and higher energy usage than using the P-cores alone on Intel's 12th/13th/14th-gen processors. And we're not talking about unoptimized code here: the cream of the crop at Apple and Intel wrote the respective BLAS libraries.

This performance penalty has been definitively observed on both Intel's 12th/13th/14th/15th-gen consumer heterogeneous architectures and Apple's M1/M2/M3/M4/M5 architectures.
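To make the barrier argument in this exchange concrete, here is a toy Python model, assuming a static, equal split of work and a barrier after every step (a common pattern in iterative solvers). The relative speeds (1.0 for a P-core and 0.7 for an E-core, per TerryLaze's figure) and the 8+8 core count are hypothetical. It only shows the mechanism, faster cores idling while they wait for slower ones; it ignores synchronization cost, cache and memory-bandwidth effects, and the order-of-operation stalls twin_savage describes, so it cannot settle which commenter is right.

```python
def step_time(work: list[float], speeds: list[float]) -> float:
    """Wall time of one barrier-synchronized step: the slowest thread sets the pace."""
    return max(w / s for w, s in zip(work, speeds))

def idle_fraction(work: list[float], speeds: list[float]) -> float:
    """Average share of the step that threads spend waiting at the barrier."""
    t = step_time(work, speeds)
    busy = [(w / s) / t for w, s in zip(work, speeds)]
    return 1.0 - sum(busy) / len(busy)

# Hypothetical machine: 8 P-cores at relative speed 1.0, 8 E-cores at 0.7.
speeds = [1.0] * 8 + [0.7] * 8
equal_split = [1.0 / 16] * 16  # one unit of work, divided evenly and statically

print(round(step_time(equal_split, speeds), 4))      # 0.0893
print(round(idle_fraction(equal_split, speeds), 2))  # 0.15: P-cores wait 30% of every step
```

In this model, splitting the work in proportion to core speed would remove the wait; how well that works in a real solver with dependent operations is another matter.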

rluker5

TerryLaze said: Or intel also made task scheduler for windows to work better with hybrid CPUs.

I believe this is the case. The Windows controls are also more thorough nowadays. You can even specify scheduling preferences for E- and P-cores in Windows power plans by using this tool to expose the option: https://forums.guru3d.com/threads/windows-power-plan-settings-explorer-utility.416058/ But it doesn't help much, as Windows has gotten really good at scheduling, so there is less and less benefit to disabling anything. Still, if you pull up all threads in AB in a game, you can see the use, or lack thereof, of certain core types.

Windows has also been scheduling SMT for quite a while, and from strongest to weakest thread the order is P-cores > AMD main threads > E-cores > low-power E-cores > AMD SMT > Intel HT. I don't see people complaining about SMT or HT bogging down their systems with weaker threads.
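rluker5's ranking can be turned into a toy placement policy in which threads fill logical processors in rank order, best first. This Python sketch only illustrates that ordering; it is not Windows' actual scheduling algorithm, which also takes hints from Intel's Thread Director and reacts to load, power, and priority. The class labels and the small example topology are hypothetical.

```python
# Thread-strength ranking as listed in the comment, strongest first.
# This ordering is the commenter's, not a documented Windows policy.
PREFERENCE = ["P-core", "AMD main thread", "E-core", "LP E-core",
              "AMD SMT sibling", "Intel HT sibling"]

def place_threads(n_threads: int, logical_cpus: dict[int, str]) -> list[int]:
    """Greedy placement: threads fill logical CPUs in rank order, best first."""
    rank = {name: i for i, name in enumerate(PREFERENCE)}
    # Sort by class rank first, then by CPU id so ties resolve deterministically.
    order = sorted(logical_cpus, key=lambda cpu: (rank[logical_cpus[cpu]], cpu))
    return order[:n_threads]

# Hypothetical hybrid chip: 2 P-cores with Hyper-Threading plus 4 E-cores.
topology = {0: "P-core", 1: "P-core",
            2: "Intel HT sibling", 3: "Intel HT sibling",
            4: "E-core", 5: "E-core", 6: "E-core", 7: "E-core"}

print(place_threads(4, topology))  # [0, 1, 4, 5]
print(place_threads(7, topology))  # [0, 1, 4, 5, 6, 7, 2]
```

Under this ordering, HT siblings only receive work once every physical core is busy, which matches the commenter's point that weaker threads are used last rather than bogging down the stronger ones.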

derekullo

alan.campbell99 said: I've often wondered how Intel's P/E core works in practice compared with AMD's approach, in terms of coding. Whether it's a bit more work involved for Intel's hybrid CPUs.

Depends on the program.

When I upscale using only the CPU (an i7-12700) with Stable Diffusion's LDSR, I have to use Process Lasso to force the program to use all cores; otherwise, it runs the E-cores at 100% and ignores the P-cores, which leaves most of the CPU doing nothing.
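Tools like Process Lasso work by changing a process's CPU affinity. On Linux, the same idea is available from Python's standard library through `os.sched_setaffinity`; the sketch below builds either an all-cores set (what derekullo forces) or a P-cores-only set (the approach twin_savage describes). The logical-CPU numbering, 16 P-core threads followed by 4 E-cores to mirror the i7-12700's 8P+4E layout, is an assumption: real numbering depends on the OS and firmware, and the core type of each CPU has to be looked up separately.

```python
import os

# Hypothetical logical-CPU map mirroring an 8P+4E chip such as the i7-12700:
# CPUs 0-15 are P-core threads (with Hyper-Threading), CPUs 16-19 are E-cores.
CORE_TYPES = {cpu: ("P" if cpu < 16 else "E") for cpu in range(20)}

def cpus_of_type(core_types: dict[int, str], wanted: set[str]) -> set[int]:
    """Logical CPU ids whose core type is in `wanted`."""
    return {cpu for cpu, kind in core_types.items() if kind in wanted}

def restrict_to(cpus: set[int]) -> bool:
    """Pin the calling process to `cpus` where the OS supports it (Linux)."""
    if not hasattr(os, "sched_setaffinity"):
        return False  # e.g. macOS or Windows: use a platform tool instead
    os.sched_setaffinity(0, cpus)  # pid 0 means the calling process
    return True

if __name__ == "__main__":
    all_cores = cpus_of_type(CORE_TYPES, {"P", "E"})  # derekullo's fix: use everything
    p_only = cpus_of_type(CORE_TYPES, {"P"})          # skip the E-cores entirely
    print(len(all_cores), len(p_only))                 # 20 16
    # restrict_to(p_only) would apply the mask on a Linux machine with this layout.
```

Keeping the mask calculation separate from the call that applies it makes it easy to try both policies on the same workload and measure which one is faster.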

thestryker

twin_savage said: This is coming from an HPC perspective.

A completely irrelevant perspective, given that nobody selling non-custom CPUs uses heterogeneous designs for enterprise.