The 990FX Chipset Arrives: AMD And SLI Rise Again

AMD is laying the foundation for its Socket AM3+, Bulldozer-based Zambezi processors with the 990FX chipset, functionally identical to 890FX. The big news is that motherboard vendors are licensing SLI again, and we want to compare performance to Intel.

Benchmark Results: World Of Warcraft: Cataclysm (DX11)

Our relationship with Blizzard’s World of Warcraft goes back a ways. Back in December of last year, I took a first look at the Cataclysm expansion pack with experimental support for DirectX 11 in World Of Warcraft: Cataclysm—Tom’s Performance Guide. Recently, that support was integrated into patch 4.1, under the game options menu. It's now official, and if you have a DX11-enabled card, I recommend you use it. If you need to ask why, check out the performance guide. Frame rate boosts can be quite surprising.

In our initial testing, we discovered that AMD’s processors were definitely limiting performance in this game. The average frame rate of the Phenom II X6 at 3.7 GHz was 60 frames per second.

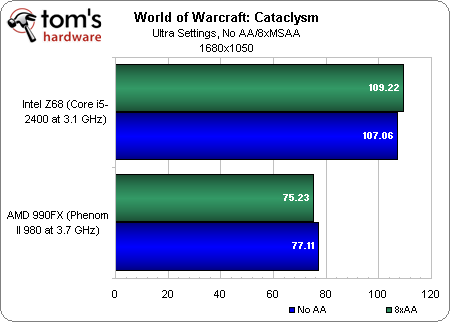

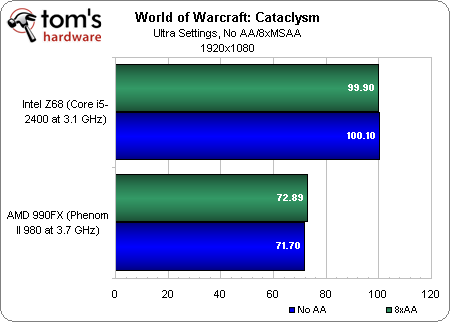

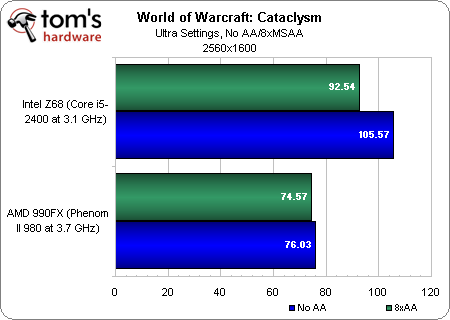

Today we see that, with proper support for SLI in place, AMD’s platforms hit 75 frames per second or so. But it doesn’t matter whether you run at 1680x1050 or 2560x1600, or whether you use 1x multisampling or 8x: the frame rate simply doesn’t change. AMD’s processors are still the “problem,” inasmuch as 75 FPS can be considered problematic.

We’re really only concerned because Intel’s CPUs do so much better, exceeding 100 FPS at 1680x1050 and 1920x1080 and dipping under that mark only at 2560x1600 with 8x MSAA turned on.
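The reasoning here (a frame rate that stays flat across resolutions and anti-aliasing levels points at the CPU, not the graphics cards) can be sketched as a quick check. This is only an illustration: the function name `is_cpu_bound`, the 5% tolerance, and the sample figures are assumptions, not part of our test suite. The "flat" values approximate the roughly 75 FPS described above; the "scaling" values are made up to show the contrast and are not measured results.

```python
def is_cpu_bound(fps_by_setting, tolerance=0.05):
    """Return True if every frame rate lies within `tolerance` (as a
    fraction) of the mean, i.e. graphics settings barely move the result."""
    values = list(fps_by_setting.values())
    mean = sum(values) / len(values)
    return all(abs(v - mean) / mean <= tolerance for v in values)


# Approximation of the flat ~75 FPS behavior described in the text.
flat = {"1680x1050 1x": 75, "1920x1080 1x": 75, "2560x1600 8x": 75}

# Hypothetical numbers (not measured) for a platform that scales with load.
scaling = {"1680x1050 1x": 110, "1920x1080 1x": 105, "2560x1600 8x": 90}

print(is_cpu_bound(flat))
print(is_cpu_bound(scaling))
```

A flat profile suggests the processor is the limiting factor; a profile that falls as resolution and MSAA rise suggests the graphics subsystem is doing the limiting.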


nice to see support for both videocard producers. especially for nvidia. now you can do amd+nvidia not only amd+ati(amd)

stingstang Tom's, what the hell is this? "At the end of the day, it's the graphics cards which are the bottleneck."

Did you go about benchmarking graphics cards, or was this a motherboard/cpu comparison? I'm tired of hearing this excuse all the time. We know you have a pair of 6990s and 590s in your shop. Get rid of that stupid bottleneck and DO IT RIGHT!

saint19 I'd keep in mind that this performance review was made with an AM3 CPU and not with the new generation.

-Fran- Thanks for the review, but at lower resolutions we all know that the CPU differences will become clear. So you just proved that if a game is taxing on the GPUs, both solutions are equal and when the graphics card ain't being taxed, CPU differences become apparent... Ok, thanks for proving what we already know once more (not being sarcastic here >_

-Fran- Uhm... that last comment of mine was cut in half with the missing char of that face... I guess that's an escape char; oh well.

What is missing said something like:

...here "face"), but you said you wanted to test AMD's SLI on their 990FX vs Intel's SLI. So, IMO, you need less graphics horse power: like 2 GTS250's or 2 GTX460's or 2 GTX560's (not ti's) to tax the graphics subsystem and really show the differences. Maybe up the resolution also to really show if there is a difference between AMD's or Intel's SLI.

Thanks again for the Article, Mr Chris.

Cheers!