Pro Graphics: Seven Cards Compared


By Uwe Scheffel


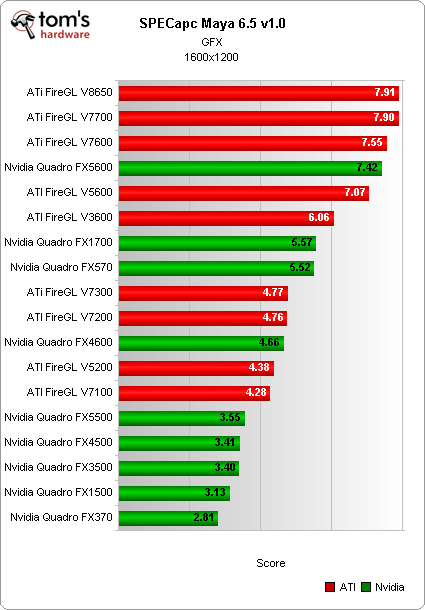

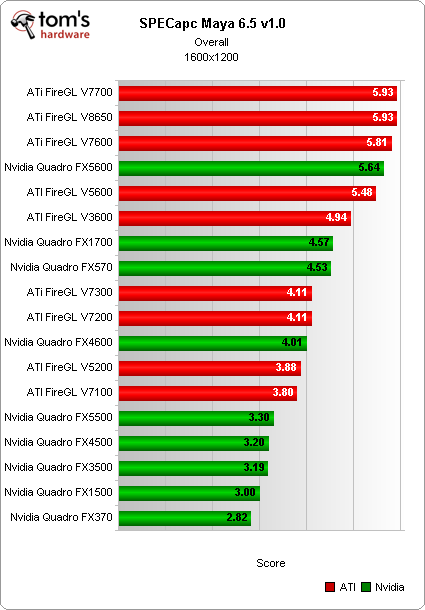

Maya 6.5: Test Results


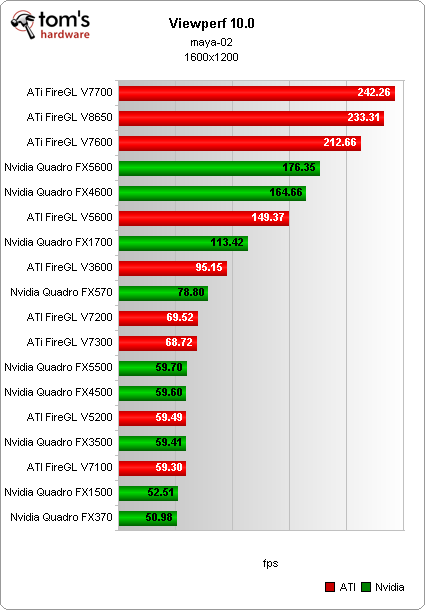


54 Comments

Comment from the forums

-

zajooo Hi guys,

I was always wondering how these cards would compare with gamer cards, and whether the gamer ones would not give us, as we say, "a lot of music for little money". Would we get similar results in geometry transformation, without using any anti-aliasing or any of the features that are not really necessary?

Thanks -

Evendon I would also like to know how these cards handle games. I'm especially interested in how mobile versions of the Quadro FX work with games.

I would also like to play games on my work laptop with its Quadro FX chip (FX 3600M) -

neiroatopelcc I was wondering how a GeForce 8800 GT or ATI 4750 would stack up. Given that the lowest prices in Denmark for a V3600 or FX 570 start at 1150 kr and an 8800 GT 256 costs only 680 kr (4750 @ 1070 kr), they're comparable in the budget department.

We're mostly doing only basic grid stuff at work, and we're using Intel 945 chipsets with onboard graphics for that. The Pentium Dual-Core seems to handle it okay, but it isn't happy about textures at all. For those things we use old P4s with GeForce 6600 GT cards, not Quadro anything, and I can't help but wonder whether using gamer cards is the right or wrong choice (it has worked great for us so far).

PS: we use Inventor, Mechanical Desktop and Revit from Autodesk (newest and second-newest versions only), so only the 3D Studio results are interesting for me. -

venteras Where are the Quadro FX 3700 and 4700?

I don't see how you can do a review dated 13 August that seemingly covers all current mainstream cards and not include two of Nvidia's primary cards.

Was this paid for by ATI? -

sma8 zajooo wrote: "Hi guys, I was always wondering how these cards would compare with gamer cards, and whether the gamer ones would not give us, as we say, 'a lot of music for little money'. Would we get similar results in geometry transformation, without using any anti-aliasing or any of the features that are not really necessary? Thanks"

Pro graphics cards are different from gamer/consumer cards. Pro graphics cards are designed to handle workstation applications such as AutoCAD or 3D Studio MAX, whereas gamer/consumer cards are designed for desktop PC apps or games. You can see the differences between the two kinds of card here:

http://www.nvidia.com/object/builtforprofessionals.html

That's a good example of how both cards work in workstation applications. That's why pro graphics cards are so expensive -

anonymous1000 Hello. Thank you for the interesting article. What is also interesting is the huge gap between the SPEC results and my day-to-day experience with ATI pro cards. When the first SPEC results showed up, I started recommending that my clients buy FireGL 3600-8600 cards, but unfortunately they were VERY poor performers in 3DSMAX work. Apart from the fact that you couldn't even use half of their strength with the first drivers, even now, comparing the FPS count of the V5600 card with that of a 9600GT in 3DSMAX shows that it is much more comfortable to work with the latter. If you want to move a large project in your viewport, it moves a lot faster if you have a 9600GT card installed. THIS is a benchmark I would like to see here because IT REALLY MATTERS to animators. Thank you -

bydesign sma8 wrote: "Pro graphic cards are different from gamers/consumer cards. Pro graphic cards are designed to be capable of handling workstations applications such as AutoCAD or 3D Studio MAX whereas gamers/consumer cards are designed for desktop pc apps or games. You can see the differences of both cards here: http://www.nvidia.com/object/built onals.html That's a good example how both cards work in workstation application. That's why pro graphic cards cost very expensive"

Not true. I can flash the BIOS on my 8800 GTX and it will run just like its workstation cousin. They are using the same hardware but handicapping the consumer card. -

theLaminator Wish this article had been published about three days ago; it would've made my decision on which new laptop to get for school easier (I'm an engineering major, so I will actually use this). I finally decided on an HP with the FireGL V5600, and it looks like I made the right choice based on these benchmarks. Guess we'll see when I actually get it and try it in real applications.

-

"I would also like to know how these cards handle games. I'm especially interested in how mobile versions of the Quadro FX work with games.

I would also like to play games on my work laptop with its Quadro FX chip (FX 3600M)"

I also have a Quadro 3600M in my laptop. In 3DMark06, I get a score of 8800. COD4 and UT3 run smoothly with all settings maxed out at 1900x1200. Quadro cards are as good as or better than their GeForce equivalents at gaming.