Here's Why There's Little Concrete Data on the RTX-40 Series (Report)

Testing and validation aren't easy.

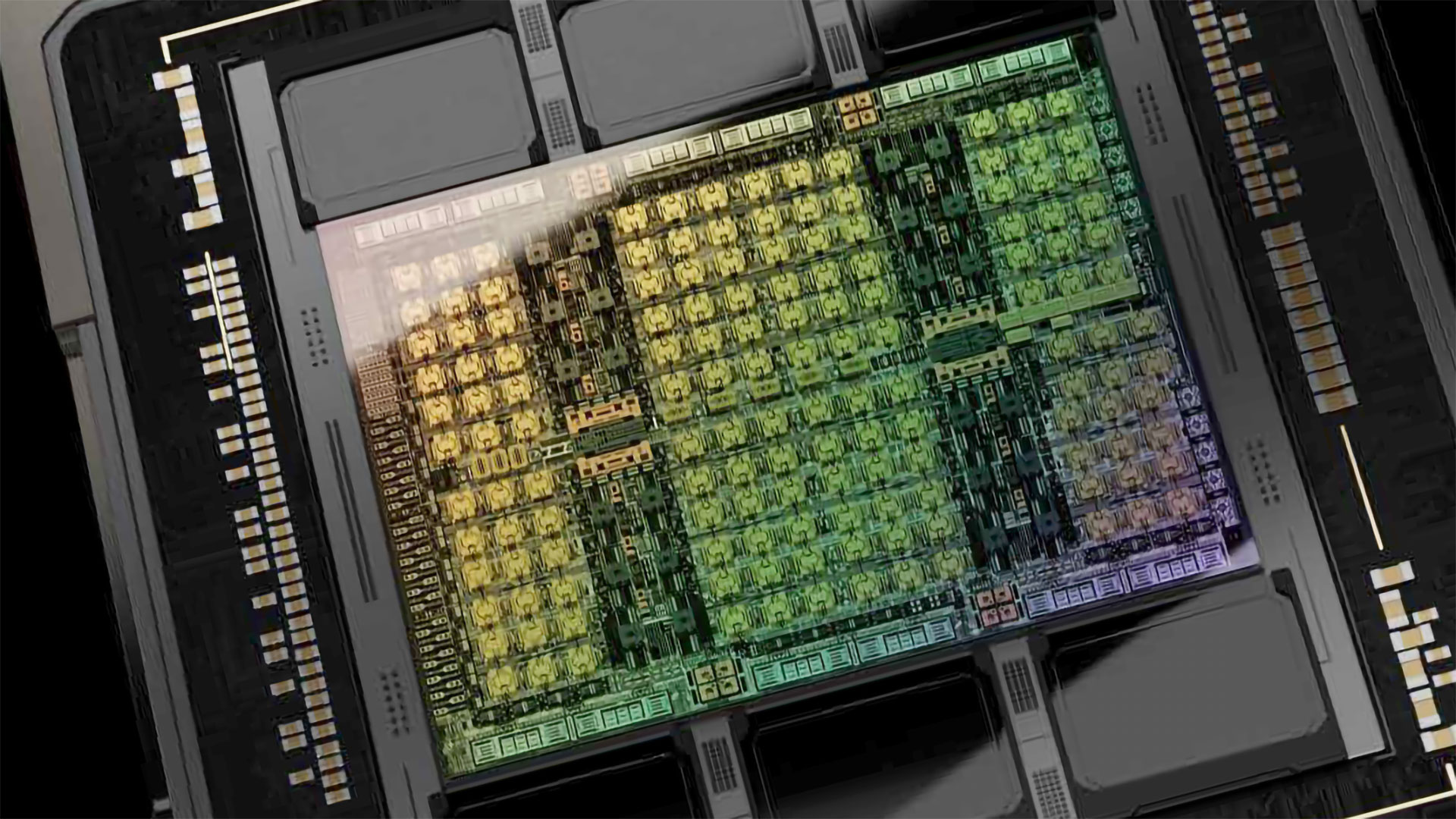

Igor's Lab recently posted an in-depth overview of Nvidia's estimated production and development schedule for its new RTX 40-series GPUs. In it, Igor throws some cold water on the hot rumors of an impending Nvidia launch, suggesting the new cards won't be joining the best graphics cards as soon as we might like. The current rumors surrounding the RTX 40-series are incredibly vague and rest on very little concrete data, other than the information that was leaked via the LAPSUS$ hack earlier this year.

Igor's take, based on his anonymous "sources," is that Ada is not out of the engineering and/or design validation phase just yet. That means neither Nvidia nor its AIB partners have working engineering samples of RTX 40-series cards. The only GPUs available right now are Ampere-based test products, so it's impossible for anyone to have anything approaching accurate performance data for Ada's gaming or compute prowess. All we have are highly speculative rumors based solely on potential core counts and memory configurations.

If you've been following the GPU industry, this isn't particularly surprising. GPU prices are in freefall from their lofty highs of last year, and current indications are that we could be entering an oversupply phase. Nvidia and AMD will want to clear as much current-generation inventory as possible before launching next-generation parts, so there's no need to rush. And of course Chinese Covid-19 lockdowns aren't entirely a thing of the past. Take that and then try to build a brand new GPU architecture, complete with months of testing, and you can start to get an idea of the difficulty of launching a new GPU.

Based on all of the above, and the lack of reliable 'sources' in the industry suggesting otherwise, Igor states that the rumored RTX 40-series launch window of July to early September is highly doubtful. Again, he claims there are no working prototype Ada units right now, which means getting cards finalized and released within three months would be incredibly ambitious.

Nvidia and its partners still need to get working samples, and then perform electromagnetic interference testing, production validation testing, driver and BIOS verification, and mass production. Given all of those factors, we're probably looking more at a mid-Q4 launch window (around October or November) rather than a Q3 release. That, or Nvidia has managed to seriously crack down on leaks for a change.

Nvidia likely isn't alone. Intel has repeatedly pushed back the launch of its Arc GPUs, which are now slated to arrive on desktops perhaps in Q3. Intel might be new to the dedicated GPU market, but it's likely also dealing with some similar issues, and AMD will be doing the same with RDNA 3. Hopefully things can stay on track for a 2022 launch of the next-generation GPUs, but we wouldn't be shocked to see relatively limited supply for whatever remains of 2022 when or if they launch.


Aaron Klotz is a contributing writer for Tom’s Hardware, covering news related to computer hardware such as CPUs and graphics cards.


hotaru.hino Meh, the only concrete data anyone should care about are:

- Performance in applications
- How much power the thing actually consumes

bigdragon I recall reading that Nvidia rushed the 30-series launch. AIBs threw together whatever they could to hit the launch window. Igor's Lab has a lot of good information, but I wouldn't discount Nvidia throwing something together quickly again. Yes, they want to sell out of 30-series stock. They also want to replace those crypto sales with gamer sales. Investors don't like companies that aren't constantly growing.

Alvar "Miles" Udell Last thing AIBs want is this all over again:

Poor Soldering, Not Amazon, Killed 24 EVGA RTX 3090 GPUs | Tom's Hardware (tomshardware.com)

How to Check if Your GPU's Thermal Pads Were Incorrectly Installed | Tom's Hardware (tomshardware.com)

But I'm not getting excited at all about any new GPU until they're on shelves in quantities assured to prevent shortages and price gouging, with prices that aren't $1000+ for a mid range card...

russell_john I generally pay the Leakers little mind because they are for the most part unethical hacks looking for clicks and attention for their overinflated egos .... Even a blind pig can find an acorn every once in a while so just because they got lucky once and predicted something correctly doesn't mean they are magically a "reliable source" ......

_dawn_chorus_

> russell_john said: I generally pay the Leakers little mind because they are for the most part unethical hacks looking for clicks and attention for their overinflated egos .... Even a blind pig can find an acorn every once in a while so just because they got lucky once and predicted something correctly doesn't mean they are magically a "reliable source" ......

This is basically the youtuber Moores Law Is Dead in a nutshell... lol.

RodroX I am somehow more interested in what the new Radeon line will be and can do, than nvidia this time around. With nvidia GPUs needing more and more power, AMD start to look better for me.

For instance, in my country I can get RX 6700 XT 12Gb at a considerable lower price than the RTX 3070 8GB, been both Asus Dual models, and having the RX 6700 XT only 1x8 + 1x6 power cables, vs the RTX needing 2x8.

hotaru.hino

> RodroX said: I am somehow more interested in what the new Radeon line will be and can do, than nvidia this time around. With nvidia GPUs needing more and more power, AMD start to look better for me.
>
> For instance, in my country I can get RX 6700 XT 12Gb at a considerable lower price than the RTX 3070 8GB, been both Asus Dual models, and having the RX 6700 XT only 1x8 + 1x6 power cables, vs the RTX needing 2x8.

I wouldn't hold your breath that AMD will be leagues better on this front. For instance, the power specs for AM5 don't bode well in this regard when 16C is the top-end for this platform.

As I've probably said elsewhere, this thing called Dennard Scaling, where like Moore's Law, was an observation that as transistors get smaller, their power density remains constant (meaning more transistors for the same power). And like Moore's Law (at least in its original form), it broke down in the 2010s. I wouldn't be surprised if AMD's next flagship GPU gets a 50W TBP bump.

There's also the issue that most cards are shipped with efficiency thrown out the window anyway in the name of good reviews and stroking e-peens.

RodroX

> hotaru.hino said: I wouldn't hold your breath that AMD will be leagues better on this front. For instance, the power specs for AM5 don't bode well in this regard when 16C is the top-end for this platform.
>
> As I've probably said elsewhere, this thing called Dennard Scaling, where like Moore's Law, was an observation that as transistors get smaller, their power density remains constant (meaning more transistors for the same power). And like Moore's Law (at least in its original form), it broke down in the 2010s. I wouldn't be surprised if AMD's next flagship GPU gets a 50W TBP bump.
>
> There's also the issue that most cards are shipped with efficiency thrown out the window anyway in the name of good reviews and stroking e-peens.

True, but still when you don't give a crap about Ray Tracing and considering the amount of performance you can get from a RX 6700XT at 1440p at a lower price point (and compared to my old RTX 2070) then it does look good.

I would not worry too much (yet) about the AM5 power specs, we do not know what products will even require such high power, is better to wait for the final product line and the reviews. We only know the socket will have a PPT 230 watts and a TDP of 170watts. Who knows perhaps AMD have something else hide on its sleeve for the AM5 platform. And also I don't think intel will do a lot better with its 13th gen, vs Alder Lake.

Cheers!

hotaru.hino

> RodroX said: True, but still when you don't give a crap about Ray Tracing and considering the amount of performance you can get from a RX 6700XT at 1440p at a lower price point (and compared to my old RTX 2070) then it does look good.

And sure, the market is definitely in AMD's court, but I'd argue this is due to a combination of scalpers trying to sell off their stock and trying to recoup some of their losses, while at the same time the market seems to hold NVIDIA at a higher value than AMD.

Otherwise if you want to argue from the standpoint of MSRP, the RTX 3070 is only $20 more than the RX 6700XT. When you consider the entire feature set of what NVIDIA GPUs have to offer, the RTX 3070 has a lot more value. Even if you don't care about ray tracing, NVIDIA cards still do everything Radeon cards can do from a feature set stand point and more.

RodroX

> hotaru.hino said: And sure, the market is definitely in AMD's court, but I'd argue this is due to a combination of scalpers trying to sell off their stock and trying to recoup some of their losses, while at the same time the market seems to hold NVIDIA at a higher value than AMD.
>
> Otherwise if you want to argue from the standpoint of MSRP, the RTX 3070 is only $20 more than the RX 6700XT. When you consider the entire feature set of what NVIDIA GPUs have to offer, the RTX 3070 has a lot more value. Even if you don't care about ray tracing, NVIDIA cards still do everything Radeon cards can do from a feature set stand point and more.

Yes taking the MSRP then thats a no brainer. But in this case, in my country, the difference in money is around $150 * , and thats a lot of money, I can get a very awesome PSU with that much of a difference.

* yes $150 its insane, and Im not talking about some shady website, Im talking a very old respected retail street shop (with average prices compared to other shops in my country) where you can go see the package, and touch the GPU before paying it.