Talking Heads: Motherboard Manager Edition, Q4'10, Part 1

We've already talked to product managers representing the graphics industry. But what about the motherboard folks? We are back with ten more unidentified R&D insiders. The platform-oriented industry weighs in on Intel's, AMD's, and Nvidia's prospects.


GPGPU Programming, Where Is It?

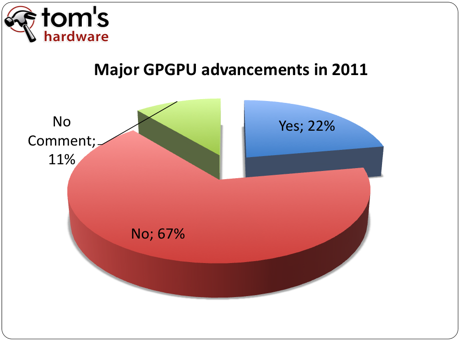

Question: The success of hybrid CPU/GPU designs like Sandy Bridge and Llano is closely tied to GPGPU programming. In the last major tech cycle, system integrators and consumers successfully adopted x86-64 processors and operating systems. Yet, potential benefits have been delayed because programmers, even today, are slow to adopt 64-bit programming. Do you think Intel and AMD can cause a major shift towards general purpose GPU programming within a year of their product launches?

- AMD will introduce its integrated graphics-equipped CPU in 2011. Intel will do so even earlier than AMD. But users still need more time to be educated about GPUs; only then can they really demand them. Consumers still think discrete graphics provide more performance and functionality.

- The 64-bit transition has been very slow and gradual. Software is always behind hardware, so we don't believe GPGPU will see any quantum leaps in the next year.

- Honestly, we have no clue. We are at the mercy of the big three: Intel, AMD, and Nvidia.

- Typically, our collaboration focuses mainly on implementing compatible hardware designs. We help drive demand through marketing, but driving the direction of demand is not within our scope.

- Hardware is always faster than software. I think that is what we are seeing with GPGPU [programming].

- I'm not really sure that it is necessarily slow. We are seeing more 64-bit programming about two years after full x86-64 adoption. If GPGPU [programming] follows suit, we should see more in 2012 or perhaps 2013.

The rise of hybrid processors brings new possibilities. Even on a system armed with integrated graphics, it is possible to see enhanced performance through the addition of some GPGPU programming. Specific tasks can be optimized on the graphics core, and even though systems with the most to gain will be those with powerful discrete graphic solutions, additional processing power can be a boon in environments that benefit most pointedly from parallelism.
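To make "tasks that benefit from parallelism" concrete, here is a minimal sketch of our own (not taken from any vendor SDK) of the classic `a*x + y` operation split into independent slices. The function names `saxpy_chunk` and `saxpy_parallel` and the `workers` parameter are hypothetical, chosen only for illustration. Python threads will not make this arithmetic faster; the point is the shape of the work. No element depends on any other, which is the property a GPU exploits by handing each element to its own hardware thread.

```python
from concurrent.futures import ThreadPoolExecutor


def saxpy_chunk(a, xs, ys):
    """Compute a*x + y for one slice; no element depends on another."""
    return [a * x + y for x, y in zip(xs, ys)]


def saxpy_parallel(a, xs, ys, workers=4):
    """Split the inputs into contiguous slices and process each independently."""
    if len(xs) != len(ys):
        raise ValueError("xs and ys must have the same length")
    # Ceiling division so every element lands in exactly one slice.
    size = max(1, -(-len(xs) // workers))
    starts = range(0, len(xs), size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so slices are reassembled in order.
        parts = pool.map(
            lambda s: saxpy_chunk(a, xs[s:s + size], ys[s:s + size]),
            starts,
        )
        return [value for part in parts for value in part]


xs = [float(i) for i in range(10)]
ys = [1.0] * 10
result = saxpy_parallel(2.0, xs, ys)
```

Work that splits this cleanly is the easy case. Code with dependencies between elements needs restructuring before a graphics core can help, which is part of why gains vary so much by application.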

By design, our question was meant to solicit opinions on the pace of GPGPU programming adoption. Lately, progress seems to have ground to a halt (or at least, we're not seeing as much momentum behind apps optimized for CUDA and DirectCompute). Frankly, it is frustrating to see this occur. Reading through the comments from our last survey, readers seem to agree. We are at a point where we have a lot of compute power, but much of the time, we aren't using it.

We also mentioned in the last survey how frustrating it was to see the slow pick-up of 64-bit programming. If you recall the emergence of 64-bit as a feature, both Intel and AMD were actively leveraging that capability as a differentiating feature. Fast forward to today. We are still lacking a concerted effort by the software development community to adopt 64-bit programming--perhaps due to a perceived lack of benefit. We still don't have a 64-bit version of Firefox, and there is no ETA on a 64-bit Flash plug-in. While the benefits of 64-bit in these two scenarios may in fact be negligible, it shows how slow the software community has been in contrast to what today’s hardware provides. Only recently did Adobe update its suite of apps to support a 64-bit architecture, and we’ve already shown the effect of that decision to be massive.

One of the key problems has been the lack of a standardized programming layer. Nvidia went with Compute Unified Device Architecture (CUDA). AMD went with Stream. And Microsoft is in the middle with DirectCompute--an attempt to standardize general purpose GPU computing across dissimilar architectures. Similar to the 64-bit extension war, this fragmentation has delayed GPGPU programming adoption. CUDA was a fairly robust interface from the get-go. If you wanted to do any sort of scientific computational work, Nvidia's CUDA was the library to use. It set the standard. Unfortunately, as with many technologies in the PC industry kept proprietary, this has also limited CUDA's appeal beyond specialized scientific applications, where the software is so niche that it can demand a certain piece of hardware. That's not the case with a transcoding app or a playback utility. Even Adobe seems to have made a brave move by limiting its Mercury Playback Engine to a handful of CUDA-based GeForce and Quadro cards.

Nvidia no doubt wants to keep stressing the GPGPU capabilities rolled up into its Fermi architecture. It even hired the guy (Dr. Mark Harris) who coined the term GPGPU, which stands for "General Purpose computation on Graphics Processing Units." Unfortunately, mainstream adoption isn't going to happen without support from Intel and AMD, whose large development budgets probably give them the greatest ability to bolster support for DirectCompute and OpenCL.

We have been playing with some of the CUDA framework and would love to see more mainstream adoption, but we understand the lack of progress. Looking at the big picture, a software developer would have to justify months (maybe even years) of extra programming in CUDA to get some of the GPGPU enhancements. And even then, gains are going to depend on the application.


A single GPGPU coding framework does a lot for adoption, since it allows developers to target any properly-enabled graphics card, and not just one from Nvidia. Again, this makes much more sense in the context of broad adoption. For the moment, CUDA remains the best solution if you have a lot of money, a very specific task able to benefit from parallelism, and the resources to develop with GPGPU in mind. Personally, we are enjoying Jacket for MATLAB. OpenCL and DirectCompute come close, but both give up lower-level hooks into the architecture in favor of compatibility.

Intel and AMD both need to get with the program--particularly AMD. Its much-hyped APUs are right around the corner, and it unquestionably has the advantage with regard to graphics. Intel's solution, at first blush, looks more like an evolutionary afterthought than anything that'll be capable of augmenting its processors. And to be frank, Intel's CPUs are its first priority.


dannyboy3210 I seem to have this nagging feeling that discrete graphics options will probably be around for another 10-15 years, at the least.

If you factor the fact that getting a fusion of cpu/gpu will cost a bit more than a simple cpu, if you plan on doing any gaming at all, why not invest an extra 30$ or so (over the cost of cpu/gpu fusion, not just cpu) and get something that will game like twice as well and likely have support for more monitors to boot?

Edit: Although after the slow release of Fermi, I bet everyone's wondering what exactly is in store for Nvidia in the near future; like this article says, there seems to be a lot of ambivalence on the subject.

sudeshc I would rather see improvements in chipsets than in CPUs and GPUs; they are already doing a wow job, but we need chipsets with fewer limitations and bottlenecks.

ta152h I'm kind of confused why you guys are jumping on 64-bit code not being common. There's no point for most applications, unless you like taking more memory and running slower. 32-bit code is denser, and therefore improves cache hit rates, and helps other apps have higher cache hit rates.

Unless you need more memory, or are adding numbers over 2 billion, there's absolutely no point in it. 8-bit to 16-bit was huge, since adding over 128 is pretty common. 16-bit to 32-bit was huge, because segments were a pain in the neck, and 32-bit mode essentially removed that. Plus, adding over 32K isn't that uncommon. 64-bit mode adds some registers, and things like that, but even with that, it is often slower than 32-bit code.

SSE and SSE2 would be better comparisons. Four years after they were introduced, they had pretty good support.

It's hard to imagine discrete graphic cards lasting indefinitely. They will more likely go the way of the math co-processor, but not in the near future. Low latency should make a big difference, but I would guess it might not happen unless Intel introduces a uniform instruction set, or basically adds it to the processor/GPU complex, for graphics cards, which would allow for greater compiler efficiency, and stronger integration. I'm a little surprised they haven't attempted to, but that would leave NVIDIA out in the cold, and maybe there are non-technical reasons they haven't done that yet.

Draven35 (quoting the article: "CUDA was a fairly robust interface from the get-go. If you wanted to do any sort of scientific computational work, Nvidia's CUDA was the library to use. It set the standard. Unfortunately, as with many technologies in the PC industry kept proprietary, this has also limited CUDA's appeal beyond specialized scientific applications, where the software is so niche that it can demand a certain piece of hardware.")

A lot of scientific software vendors I have communicated with about this sort of thing actually have been hesitant to code for CUDA because until the release of the Fermi cards, the floating-point support in CUDA was only single-precision floating point. They were *very* excited about the hardware releases at SIGGRAPH...

enzo matrix Odd how everyone ignored workstation graphics, even when asked about them in the last question.

K2N hater That will only replace discrete video cards once motherboards ship with dedicated RAM for video and the CPU allows a dedicated bus for that.

Until then the performance of the processors with integrated GPU will be pretty much the same as platforms with integrated graphics as the bottleneck will still be RAM latency and bandwidth.

elbert The death of discrete will never occur because the hybrids are limited like consoles. Even if the CPU makers could place large amounts of resources on the hybrid GPU they will be stripped away by refreshes. The margin of error being estimating how many thought motherboard integrated graphics would kill discrete kind of kills the percentages.

From what I have read AMD's Llano hybrid gpu is about the equal to a 5570. Llano by next year has no chance of killing sales of $50+ discrete solutions. I think the hybrids will have little effect on discrete solutions and your $150+ is off. The only thing hybrid means is potentially more CPU performance when a discrete is used. Another difference will be unlike motherboard integrated GPU's going to waste the hybrids will use the integrated GPU for other tasks.

Onus (replying to sohaib_96: "cant we get an integrated gpu as powerful as a discrete one??") No. There are two reasons that come to my mind. The first is heat. It is hard to dissipate that much heat in such a small area. Look at how huge both graphics card and CPU coolers already are, even the stock ones.

The second is defect rate in manufacturing. As the die gets bigger, the chances of a defect grow, and it's either a geometric or exponential growth. The yields would be so low as to make the "good" dies prohibitively expensive.

If you scale either of those down enough to overcome these problems, you end up with something too weak to be useful.

Onus (replying to elbert: "...From what I have read AMD's Llano hybrid gpu is about the equal to a 5570. Llano by next year has no chance of killing sales of $50+ discrete solutions...") Although the reasoning around this is mostly sound, I'd say your price point is off. Make that $100+ discrete solutions. A typical home user will be quite satisfied with HD5570-level performance, even able to play many games using lowered settings and/or resolution. As economic realities cause people to choose to do more with less, they will realize that this level of performance will do quite nicely for them. A $50 discrete card doesn't add a whole lot, but $100 very definitely does, and might be the jump that becomes worth taking.