Adding AI to a sinus surgery system saw malfunctions rocket from eight to at least 100, according to a new investigation: skull-puncturing errors are the stuff of nightmares

FDA-approved AI integration in fetal image analyzers and cardiac monitors also draws criticism.


The introduction of AI to the operating theater doesn’t always work out as expected. Reuters has published a detailed report, with examples taken from lawsuits involving three ‘AI-enhanced’ medical machines. In the most excruciating example, the ‘enhanced’ system is blamed for major medical emergencies, including blood spraying around the operating room and patients suffering strokes.

Before going into detail, it should be noted that the FDA reports seen by Reuters are incomplete, so they don't definitively show that the introduction of AI was the root cause of the increase in mishaps. However, lawsuits argue that AI contributed to injuries that occurred after the ‘enhancements’ arrived.

Reuters also noted that FDA-authorized medical AI devices have a recall rate twice the baseline. Meanwhile, the FDA is struggling to keep pace with the AI-enhanced medical devices headed to market. Sadly, the agency has also been hit by cuts under the DOGE initiative. According to insiders, 15 of the 40 AI scientists in its Division of Imaging, Diagnostics and Software Reliability (DIDSR) have been laid off or have left.

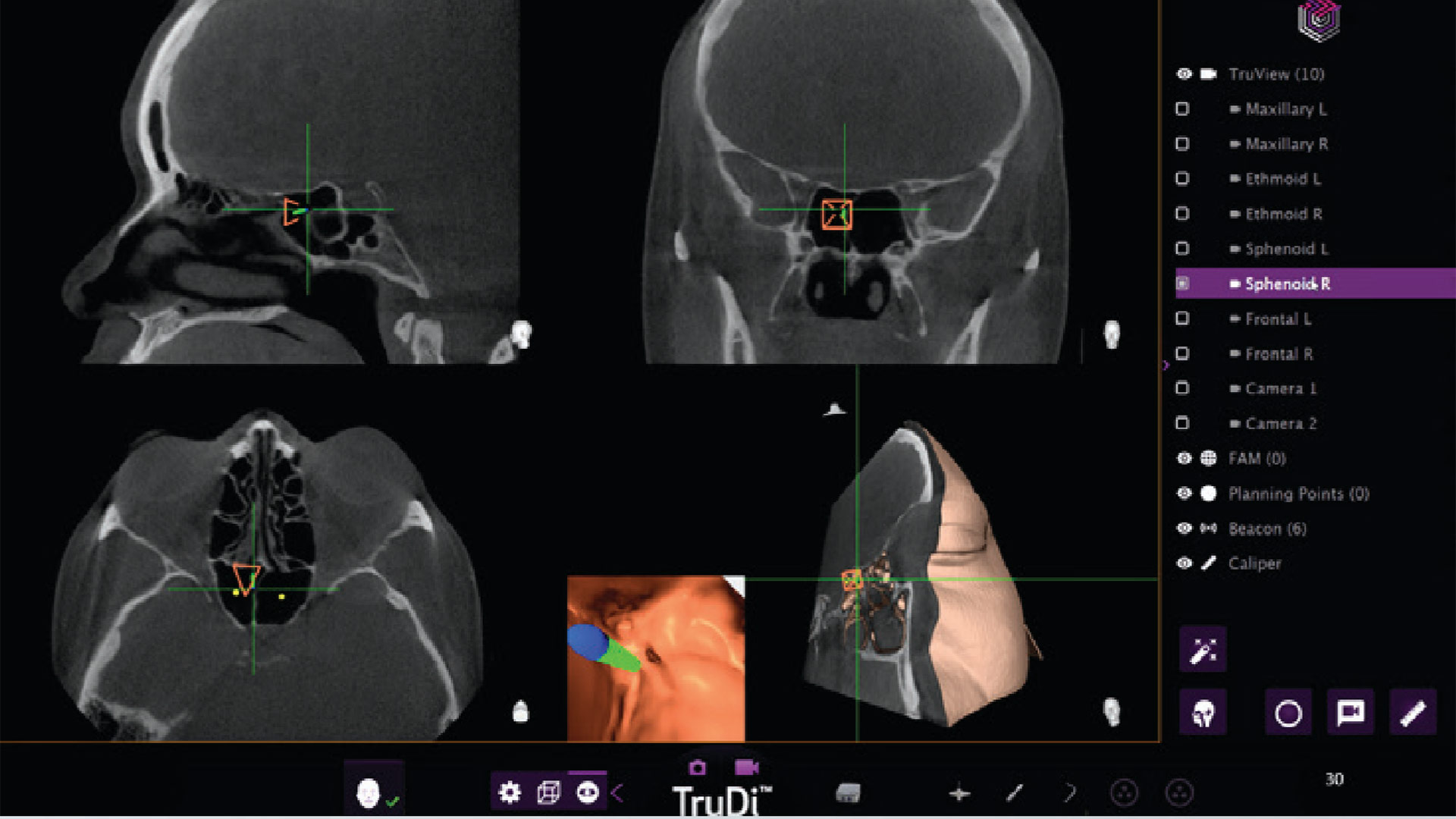

TruDi Navigation System from Acclarent

TruDi from Acclarent is used by clinicians to treat chronic sinusitis. It is designed to simplify surgical planning and provide real-time feedback during delicate procedures such as sinus operations.

After three years on the market and eight reported malfunctions, the device was ‘enhanced’ with AI algorithms. Since then, it has been involved in “at least 100 malfunctions and adverse events,” according to the Reuters report.

Problems attributed to TruDi AI have included cerebrospinal fluid leaks, puncture of the base of the skull, major arterial damage, and strokes.

Two specific (and horrific) cases are detailed in the source story. The first involves Erin Ralph, who is currently taking legal action. The lawsuit alleges that the TruDi system misdirected the doctor to place surgical instruments near the carotid artery, causing a blood clot and a stroke.


Ralph subsequently spent five days in intensive care, experienced brain swelling, and required removal of part of the skull as remedial treatment. A year later, Ralph is still in physical therapy for ongoing physical issues.

Donna Fernihough also suffered carotid artery damage. According to the source report, the artery “blew” during her operation, with “blood spraying all over” the operating room. She, too, had a stroke. Apparently, an Acclarent representative for TruDi was observing the procedure.

Fernihough’s lawyers describe the AI-enhanced system as “inconsistent, inaccurate, and unreliable.” The plaintiffs also allege that Acclarent “lowered its safety standards to rush the new technology to market,” setting “as a goal only 80% accuracy for some of this new technology before integrating it into the TruDi Navigation System.”

Issues with the Sonio Detect system and the Medtronic LINQ implantable cardiac monitor

The maker of the Sonio Detect fetal image analyzer is accused of using a faulty algorithm which, according to the report, misidentifies fetal structures and body parts. No patient harm has been reported from the analyzer’s use.

Medtronic’s AI-assisted LINQ implantable cardiac monitors allegedly failed to recognize abnormal rhythms or pauses in patients. Again, no incidents of patient harm are known.

Beyond the strain on FDA resources noted above, the agency’s screening process for AI devices may need reworking. Reuters indicates that the FDA currently seems to lean heavily on a device’s prior reputation, with makers effectively “positioning new devices as updates on existing ones,” the source suggests. This might help device makers push their AI-enhanced machines through more quickly, but it doesn’t seem thorough enough when human health is at stake.


Mark Tyson is a news editor at Tom's Hardware. He enjoys covering the full breadth of PC tech; from business and semiconductor design to products approaching the edge of reason.


SomeoneElse23: Someone is seriously brain dead to think AI is accurate enough to assist in surgery.

Notton: Reason #1trillion why you don't cut funding to a regulatory organization in charge of food and medical safety in the name of "government efficiency".

The only thing underfunding does is increase corruption from bribes and errors from overwork.

SomeoneElse23, replying to Notton: I'm not sure this would have been stopped by a "fully staffed FDA".

It's currently politically correct to find ways to use AI.

And I can think of all sorts of things that got approved by the FDA that shouldn't have been, because the FDA has been a revolving door for large corporate interests.

Shiznizzle: Your rights will be signed away before you are on the table. Forced arbitration will be the norm. No rights, since you signed on the dotted line.

Mindstab Thrull: First, AI should always be considered an item in a user's (in this case, surgeon's) toolkit. It should NEVER be a replacement for common sense and training.

Second, "80% accuracy" is way too low a bar for a lot of things in the health industry, especially surgery. Take the number of people who would rather wait for a game to be properly finished before being released compared to dealing with Day 1 buggy messes - and now think about these being patients instead of gamers. 99% I would say starts to approach acceptable. 80 is unforgivable - that's one fail out of every five people.

* breathes deeply and tries withholding political comments... *

Ugh. America can do better. Give America the ability to BE better!

Signed,

A Canadian

BFG-9000: They've long had remote-controlled surgical robots for telesurgery, and appear to have merely removed the remote surgeon and replaced them with AI to "assist" the workload of an actual, present local surgeon here. What could go wrong? https://static.wikia.nocookie.net/avp/images/b/b9/Prometheus_medpod720i.jpg/revision/latest/scale-to-width-down/1200?cb=20120414170750

pclaughton: Don't worry, they'll work out the bugs on us poors before rolling it out to the people that actually matter.

palladin9479: OMG, what insane MBA thought this was a good idea? Talk about ridiculous levels of legal liability due to malpractice.