Intel Optane SSD 900P Review: 3D XPoint Unleashed (Update)


Conclusion

Unfortunately, like most new technology, the Optane 900P is here well before you can use it to the fullest. Hard disk drives continue to stifle progress because they are still used in high-volume OEM systems. Like it or not, your shiny new operating system was designed to run on an old hard disk drive.

That doesn't mean you aren't missing out. The Intel Optane SSD 900P delivered the best user experience we've ever had. We built a new system to test the drive in BAPCo's SYSmark 2014, and that required a fresh install of the operating system, drivers, and a few pieces of software. We'd say the installation process was truly magical, but that sounds too clichéd.

You will see and feel a performance benefit just by using the Optane SSD 900P as your operating system drive. The feel of the system changes even if you’re replacing a high-performance NVMe SSD. You will notice the increased responsiveness immediately and then gradually become accustomed to it. In our experience, you will take the performance for granted until you work on a slower PC. Then you'll wish it had an Optane SSD.

There is a difference between seeing a performance improvement and actually using the drive to its full capabilities. We will never use the Optane 900P to its fullest in a desktop PC. We can say the same about NAND-based NVMe SSDs like the Intel 750, Samsung 960 Pro, and even the 960 EVO. Your initial reaction to this is rational: if I can't use a 960 EVO to its fullest, why should I pay more for an Optane SSD 900P?

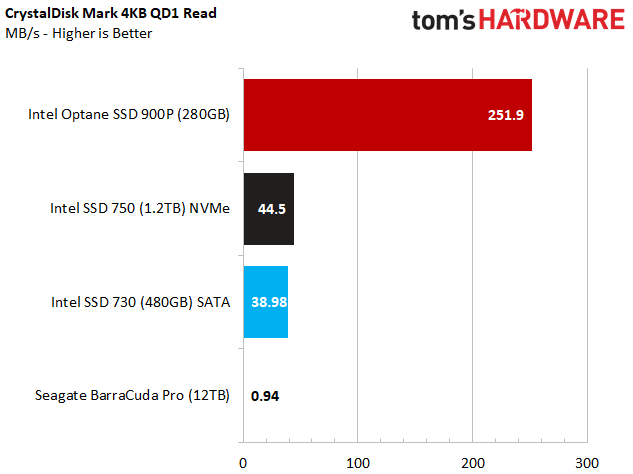

With Optane, the difference is the type of performance you gain. The Intel Optane 900P is fast at low queue depths, and that is where you need the performance the most.

Many users complain that the latest NVMe SSDs perform similarly to the SATA SSDs they replaced. If you don't push a complex workload to the drive, you will likely only see a small performance boost when you step up to a faster NAND-based SSD. The chart above shows why many users feel this way. The move from disk was a leap, but the improvements have been relatively small between SSDs.

Since SSDs debuted, companies have used hero numbers to market them. For instance, Intel touts Optane's 550,000 random read IOPS even though you will never tap that level of performance in a normal desktop environment. Samsung is the only company that routinely markets queue depth 1 random performance, which is one of the most critical factors that impact the user experience.


We usually see a 2,000 to 3,000 random read IOPS improvement when a new best-in-class NVMe SSD comes to market, but those gains come at unrealistic queue depths. In fact, over the last three years, 2,500 IOPS increases have driven the upgrade market.

In comparison, the Intel Optane SSD 900P beats the next best drive by nearly 44,000 IOPS at queue depth 1, and that's performance that matters.
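Queue depth 1 results track responsiveness so closely because of simple arithmetic: with only one request in flight, IOPS is just the reciprocal of per-request latency (Little's Law). The sketch below illustrates that relationship. The latency figures and names such as `ASSUMED_LATENCY_US` are round, hypothetical values chosen for illustration, not measurements from this review.

```python
def iops_from_latency(latency_us: float, queue_depth: int = 1) -> float:
    """Little's Law: throughput = requests in flight / time per request."""
    if latency_us <= 0:
        raise ValueError("latency must be positive")
    # 1,000,000 microseconds per second.
    return queue_depth * 1_000_000 / latency_us


# Assumed per-request read latencies in microseconds (illustrative only).
ASSUMED_LATENCY_US = {
    "hard disk drive": 10_000.0,
    "NAND NVMe SSD": 80.0,
    "3D XPoint SSD": 20.0,
}


def qd1_table(latencies: dict) -> dict:
    """Map each device name to its modeled IOPS at queue depth 1."""
    return {name: round(iops_from_latency(us)) for name, us in latencies.items()}


if __name__ == "__main__":
    for name, iops in qd1_table(ASSUMED_LATENCY_US).items():
        print(f"{name:>16}: {iops:>7,} IOPS at QD1")
```

Under this model, the only way to raise queue depth 1 IOPS is to cut latency, which is why a lower-latency memory shows its advantage exactly where desktop workloads live.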

Can The Competition Catch Up?

This question should be on everyone's mind. Competition is good for the consumer because it helps reduce prices and spurs innovation. Thankfully, several other companies are also working on unique beyond-flash technologies. ReRAM, spin-transfer torque MRAM (STT-MRAM), and carbon nanotube-based NRAM are all potential competitors. Some are already shipping in limited quantities for data center products.

Intel managed to bring a new memory to market first because the company has all of the pieces in place. We think of Intel as a technology company, but Intel sees itself as a manufacturing company. It has the R&D, the manufacturing capabilities, a proven partner in Micron, and most importantly, the capital to execute a plan. Just imagine being a startup with an idea and trying to get funding. Then you have to find a manufacturing partner to make it real. Intel doesn't have those problems.

In time, 3D XPoint will have to compete with new innovations from competitors. Until that time comes, Intel is in a strong position to dominate the beyond-flash market. We can all survive Intel's lead. We lived with Samsung having the only 3D NAND on the market for three years. It's Intel's turn to lead and for the other companies to play catch-up.

Our Recommendation

3D XPoint is still in its infancy. If the small capacity or high price puts the Optane SSD 900P out of your reach, just give it a few years.

At one point we suspected the Optane SSD 900P 280GB would cost around $600, and we were fine with that price based on our experience with the drive.

If you are tired of buying a new SSD every year or two only to gain a small performance increase, the Optane 900P is a product you can invest in to slow your upgrade cycle. In the long run, even priced at $599, the 480GB model is more than a worthy upgrade. The 280GB model has an obvious capacity limitation, but many buyers can look past it to access the large performance gains. If we had to choose between the two, we would pick the 480GB model for its increased capacity.

As for the technology, the release of a new memory class in our lifetime is the storage world's equivalent of living through the moon landing. This is an exciting time.


Chris Ramseyer was a senior contributing editor for Tom's Hardware. He tested and reviewed consumer storage.


Aspiring techie: If Intel had properly implemented NVMe RAID, imagine what would happen if you put eight of these in RAID 0.

hdmark: Can someone comment on the statement "your shiny new operating system was designed to run on an old hard disk drive"? I somewhat understand it, but what does that mean in this case? If Microsoft wanted to, could they rewrite Windows to perform better on an SSD or Optane? Obviously they won't until there are literally no HDDs on the market anymore, due to compatibility issues, but I just wasn't sure what changes could be made to improve performance for these faster drives.

AgentLozen: Thanks for the review. I have a couple of questions, if you don't mind answering them.

1. Is PCIe 3.0 a bottleneck for Optane drives (or even flash in general)? If solid-state drive developers built PCIe 4.0 drives today, would they scale to 2x the performance of modern 3.0 drives, assuming there were compatible motherboards?

2. Can someone explain what queue depth is and under what circumstances it's most important? The benchmarks show WILD differences in performance at various queue depths. Does it matter that flash-based drives catch up at greater queue depths? Is QD1 the most important measurement for desktop users?

3. In the service time benchmarks, it seems like there is no difference between drives in World of Warcraft, Battlefield 3, Adobe Photoshop, InDesign, and After Effects. The sequential and random read and write tests conclude "Optane is WAAAAY better," but the application service time tests conclude "there's no difference." Is it even worth investing in an Intel Optane drive if you won't see a difference in real-world performance?

AndrewJacksonZA: This...

I mean...

This is just...

.

.

.

I want one. I want ten!!!

Also, "Where Is VROC?" listed as a con? Hehe, I like how you're thinking. :-)

dudmont (in reply to hdmark): The simplest way to answer is the term "lowest common denominator." Microsoft designed Windows 10 to run as smoothly and quickly as a standard old platter hard drive allows, not for a RAID array (an SSD is basically a NAND RAID array) or, even better, an XPoint RAID array. Windows is designed for short queue depths (think of data movement as people standing in line), because platter drives can't perform more data movements at once than the number of heads on all the platters combined without the queue (the people in line) growing and slowing things down. In short, old platter drives can slowly handle something like 8 to 20 lines of people before they start to clog up, while NAND can quickly handle many lines of people (how many depends on the controller and the number of NAND packages). Admittedly, it may not be the best answer, but it's how I visualize it in my head.

hdmark (in reply to dudmont): That helps a lot, actually! Thank you!

WyomingKnott: Queue depth can be simplified to how many operations have been started but not yet completed at a given moment. It tends to stay low for consumer applications and gets higher if you are running many virtualized servers or heavy database access.

With spinning metal disks, the main advantage of higher queue depths was that the drive could reorder the queued requests to reduce total seek time and latency. With NVMe, the possible queue size increased many-fold (weasel words for "I don't know how much"), and I have no idea why deeper queues provide a benefit for what is effectively random-access memory.
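One rough way to picture why deeper queues still help solid-state drives is to model the drive as a set of internal units (dies or channels) that can each service one request at a time. The sketch below is a toy model under that assumption. The device parameters `FLASH_LIKE` and `XPOINT_LIKE` are hypothetical round numbers, not specifications of any real product, and the model ignores controller and software overhead.

```python
def modeled_iops(queue_depth: int, parallel_units: int, service_time_us: float) -> float:
    """Throughput when each request occupies one internal unit for service_time_us."""
    if queue_depth < 1 or parallel_units < 1 or service_time_us <= 0:
        raise ValueError("arguments must be positive")
    # Requests beyond the number of units simply wait, so they add no throughput.
    in_service = min(queue_depth, parallel_units)
    return in_service * 1_000_000 / service_time_us


# Hypothetical devices: (parallel units, service time in microseconds).
FLASH_LIKE = (32, 80.0)   # many units, slower per request
XPOINT_LIKE = (8, 20.0)   # fewer units, faster per request


def sweep(device, depths=(1, 2, 4, 8, 16, 32)):
    """Return modeled IOPS for one device at each queue depth."""
    units, service_us = device
    return {qd: modeled_iops(qd, units, service_us) for qd in depths}


if __name__ == "__main__":
    flash, xpoint = sweep(FLASH_LIKE), sweep(XPOINT_LIKE)
    print(f"{'QD':>3} {'flash-like':>12} {'XPoint-like':>12}")
    for qd in flash:
        print(f"{qd:>3} {flash[qd]:>12,.0f} {xpoint[qd]:>12,.0f}")
```

In this model the slower, more parallel device only catches up once the queue is deep enough to keep all of its units busy, while the faster device is already well ahead at queue depth 1.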

TMTOWTSAC (in reply to hdmark): Off the top of my head, the entire caching structure and methodology. Right now, the number one job of any cache is to avoid accessing the hard drive during computation. As soon as that happens, your billions-of-cycles-per-second CPU is stuck waiting on a hard drive access that takes hundredths of a second, plus a transfer that can take multiple seconds. This penalty is many orders of magnitude greater than anything else: branch misprediction, in-order stalls, and so on. So any time you have a choice between coding your cache for greater speed (like filling it completely to speed up just one program) and avoiding a cache miss (reserving space for other programs that might be accessed), you have to weigh it against that enormous penalty.
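To put rough numbers on that penalty, the sketch below converts a few latencies into CPU cycles spent waiting. Every figure here is an assumption chosen for illustration (a 3 GHz clock, a 5 ns branch misprediction, a 10 ms hard disk access, and so on), not a measurement from this review.

```python
import math


def stall_cycles(clock_hz: int, latency_ns: int) -> int:
    """CPU cycles that elapse while waiting latency_ns at the given clock rate."""
    return clock_hz * latency_ns // 1_000_000_000


# Assumed clock speed and latencies in nanoseconds (illustrative only).
ASSUMED_CLOCK_HZ = 3_000_000_000
ASSUMED_LATENCY_NS = {
    "branch misprediction": 5,
    "DRAM access": 100,
    "NAND SSD read": 80_000,
    "hard disk access": 10_000_000,
}


def orders_of_magnitude(larger: float, smaller: float) -> float:
    """Base-10 logarithm of the ratio between two quantities."""
    return math.log10(larger / smaller)


if __name__ == "__main__":
    cycles = {k: stall_cycles(ASSUMED_CLOCK_HZ, ns) for k, ns in ASSUMED_LATENCY_NS.items()}
    for name, count in cycles.items():
        print(f"{name:>22}: {count:>12,} cycles")
    gap = orders_of_magnitude(cycles["hard disk access"], cycles["branch misprediction"])
    print(f"disk vs. misprediction: about {gap:.1f} orders of magnitude")
```

With these assumed values, a single hard disk access costs about 30 million cycles, roughly six orders of magnitude more than a branch misprediction, which is why caches are tuned so heavily to avoid touching the disk.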

samer.forums: Where is the power usage test? I can see a HUGE heatsink on that monster, and I want the wattage of this card compared to the others, PLEASE.

This SSD can't be made as an M.2 card, so the Samsung 960 Pro has a huge advantage over it.

dbrees: A lot of the charts that show these massive performance gains are captioned to say that this is theoretical bandwidth, which is not seen in actual usage due to the limitations of the OS. I get it, it's very cool, but if the OS is the bottleneck, no one in the consumer space would see a benefit, especially since NVMe RAID is still not fully developed. If I am wrong, please correct me.