Plextor M5S 256 GB Review: Marvell Inside, With A Twist

Plextor's optical drives were always known for their quality. Now, the company is trying to carry that reputation over to SSDs. Its M5S is actually a fourth-generation offering based on Marvell's controller technology. But Plextor adds its own spin, too.

The Impact Of A DRAM Buffer

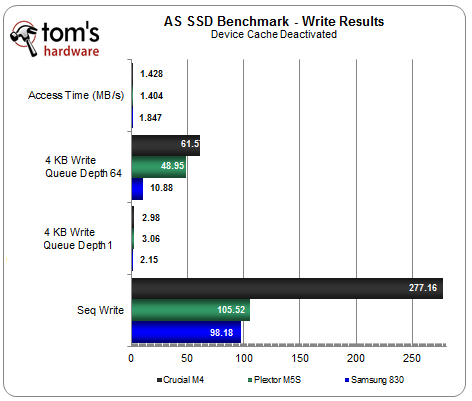

You might not have noticed, but each drive in today's review carries a different amount of DRAM buffer. Crucial's m4 has only 128 MB. Samsung's 830 sports 256 MB. And Plextor's M5S has 512 MB. When we disable the device cache, we can pinpoint the contribution that the DRAM buffer makes to improving write performance.

Write performance on both the M5S and Samsung's 830 drops badly across the board when we disable the DRAM buffer. The m4's sequential write speeds are not affected, but its 4 KB random write performance is. Clearly, the on-board cache helps write speeds by consolidating written data before moving it over to the NAND. Not only does this help performance, but it aids wear leveling, too.
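To make the consolidation idea concrete, here is a minimal sketch of a toy write buffer in Python. It is not a model of any of these drives' firmware; the class name `CoalescingWriteBuffer`, the block-count capacity, and the flush policy are all assumptions chosen for illustration. It only shows why absorbing repeated writes to the same logical block in DRAM can mean fewer NAND program operations.

```python
class CoalescingWriteBuffer:
    """Toy model of a DRAM write buffer in front of NAND (hypothetical)."""

    def __init__(self, capacity_blocks):
        self.capacity_blocks = capacity_blocks  # assumed size, in blocks
        self.pending = {}                       # LBA -> latest data for that block
        self.nand_programs = 0                  # blocks actually written to NAND

    def write(self, lba, data):
        # Overwriting a block that is still buffered replaces the old copy,
        # so the earlier version never reaches the NAND.
        self.pending[lba] = data
        if len(self.pending) > self.capacity_blocks:
            self.flush()

    def flush(self):
        # Commit every buffered block once, then empty the buffer.
        self.nand_programs += len(self.pending)
        self.pending.clear()


def count_nand_programs(lbas, capacity_blocks):
    """Replay a sequence of block writes and count the NAND programs."""
    buf = CoalescingWriteBuffer(capacity_blocks)
    for lba in lbas:
        buf.write(lba, b"x")
    buf.flush()  # commit whatever is still buffered at the end
    return buf.nand_programs
```

With the six-write pattern `[1, 2, 1, 3, 2, 1]`, a buffer of 8 blocks ends up programming 3 blocks, whereas a capacity of 0 (a stand-in for a disabled cache) programs all 6.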

By default, the cache in your SSD is enabled by Windows. It's accompanied by the following warning, though:

"Improves system performance by enabling write caching on the device, but a power outage or equipment failure might result in data loss or corruption."

That sounds like it could be pretty scary, right? What's the risk of leaving it enabled? The interaction between the volatile device cache and volatile file system cache is complex, and we're working on a separate article to fully explore this. But we can provide some insight using the graphs below, which are based on a large file transfer.

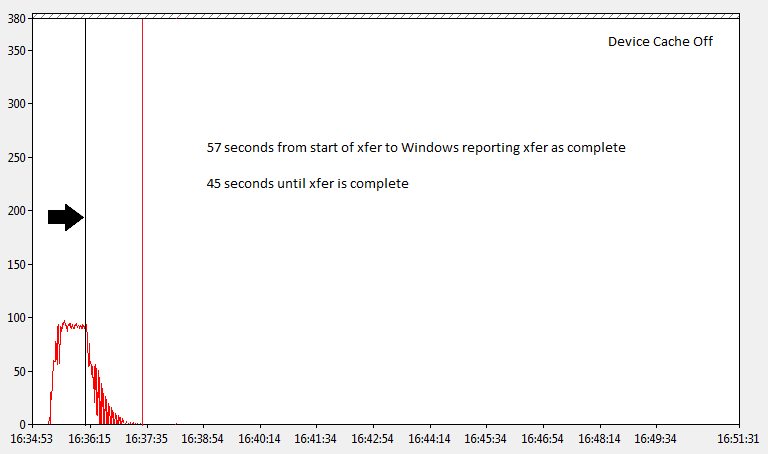

First, we transfer our file to a drive with its cache disabled. Where you see the arrow and black vertical line, Windows is reporting that the transfer is complete. All of the data has been transferred into the file system cache at this point. However, not all of the data has been moved to the device media. At the physical volume level, the transfer isn't complete for another 45 seconds. So, if the power goes out within that window, data loss or corruption could occur.

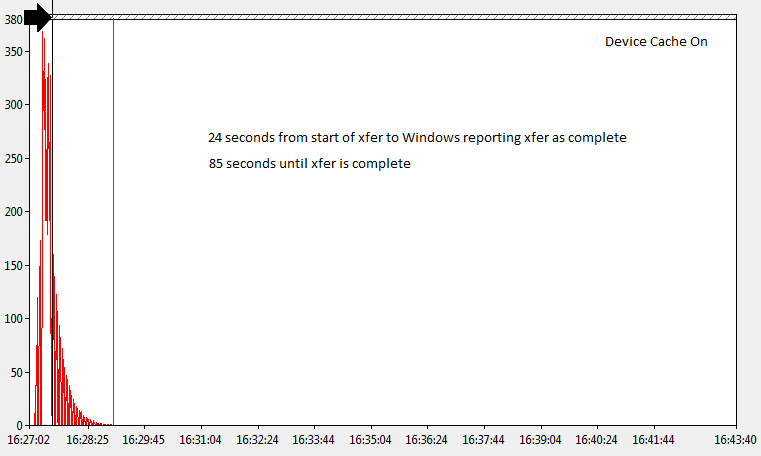

In the next chart, we transfer the same file with the drive's cache enabled. This time, there's an 85-second window after the operating system reports that the transfer is complete. There is also a time after that when data might still be stored in the device cache. We can't say exactly how much, but we know that there are various mechanisms that typically force the buffer to commit data to non-volatile media quite quickly.

As far as risk goes, the main threat to data integrity is the time between when the transfer completes within the file system and when it's committed to an SSD's NAND. In our example, we see that period lasts longer when the device cache is enabled.

We saw in our first graph that the file system cache was still a risk to data integrity in the event of an unplanned power loss, even with the DRAM cache disabled. This is because the file system still caches information before writing it to the drive itself. File system cache can be avoided by applications that use write-through flags, forcing data to bypass the file system cache, thereby minimizing the risk of data loss.
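As a rough illustration of how an application can shorten that window, the sketch below writes a file and then explicitly asks the operating system to commit it. Note that this uses an explicit flush (`os.fsync`) rather than the write-through flags mentioned above; it is a related but different mechanism, picked here because it is portable in Python. The function name `durable_write` is hypothetical. Whether the data also leaves the drive's own DRAM buffer depends on the operating system and the drive honoring the flush request.

```python
import os


def durable_write(path, payload):
    """Write payload, then ask the OS to push it past the file system cache."""
    with open(path, "wb") as f:
        f.write(payload)
        f.flush()             # drain Python's own user-space buffer
        os.fsync(f.fileno())  # request that the OS commit the file to the device
    return len(payload)
```

The trade-off is the one the benchmarks show: each forced commit gives up some of the speed that caching buys.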

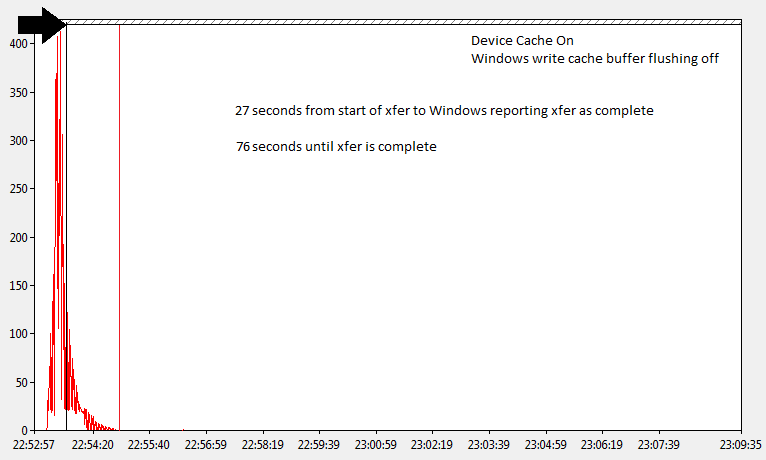

In our last graph, we enable the device cache and use the "Turn off Windows write-cache buffer flushing on the device" option. This strips away write-through flags from disk requests and removes flush-cache commands. This is potentially the highest-risk strategy. But, in our file transfer example, it also yields the fastest completion time at the physical volume level.

As mentioned, the interaction between the DRAM cache and the file system cache is complex. Clearly, though, disabling the device cache alone does not eliminate the risk of data loss in the event of a power loss. The extent of that danger is subject to a number of variables. However, it has to be considered against the benefits of improved write performance and less wear.

lutel Why don't your reviews mention anything about FDE and its support in modern mainboards based on chipsets for Ivy Bridge? It is a much more crucial feature to some people than small differences in performance.

JackNaylorPE I'd be more worried about matching Mushkin's price / performance ... and same 3 year warranty.

Samsung 230 - $227 ($0.89 / GB)

http://www.newegg.com/Product/Product.aspx?Item=N82E16820147164

Mushkin Chronos Deluxe - $180 ($0.75 / GB)

http://www.newegg.com/Product/Product.aspx?Item=N82E16820226225

NuclearShadow Good, I hope the market continues to get flooded with SSDs. The recent price drops are no doubt hugely influenced by competition.

JackNaylorPE: I'd be more worried about matching Mushkin's price / performance ... and same 3 year warranty. Samsung 230 - $227 ($0.89 / GB) http://www.newegg.com/Product/Prod 6820147164 Mushkin Chronos Deluxe - $180 ($0.75 / GB) http://www.newegg.com/Product/Prod 6820226225

Hate to break it to you buddy, but the Mushkin link leads to a 128GB for $179.99. That is well above $1 per GB.

blazorthon NuclearShadow: Good, I hope the market continues to get flooded with SSDs. The recent price drops are no doubt hugely influenced by competition. Hate to break it to you buddy, but the Mushkin link leads to a 128GB for $179.99. That is well above $1 per GB.

http://www.newegg.com/Product/Product.aspx?Item=N82E16820226237

He gave the wrong link and mistook the Deluxe for the non-Deluxe. The above link is the non-Deluxe 256GB for $179.99. The Deluxe is another $10 at $190:

http://www.newegg.com/Product/Product.aspx?Item=N82E16820226226

Still, I'd go with the Vertex 4 256GB at $190 instead of any of these at their prices.

http://www.newegg.com/Product/Product.aspx?Item=N82E16820227792&Tpk=Vertex%204%20256GB

uriah A small inexpensive (25 cent) capacitor could provide enough power to complete writing what remained in the DRAM if it is a major problem.

hypermole It would be nice to know if this new SSD is worth more overall than the M3 Pro and why? Warranty?