AMD A10-4600M "Trinity" 3DMark 11 Performance Leaked

AMD has released slides to the Korean market detailing the graphics performance and specifications of its next-generation "Trinity" APU.

The slides give us a small glimpse of what to expect from “Trinity”-based APUs in the near future, specifically the upcoming A10-4600M mobile APU. On the CPU side, the A10-4600M packs a quad-core “Piledriver” CPU clocked at 2.3 GHz with 4MB of L2 cache and a 35W TDP. It also supports Turbo Core technology, which can raise the clock speed to 3.0 GHz. On the GPU side, you'll find an integrated Radeon HD 7660G clocked at 685 MHz with 384 Radeon cores, which can be paired with a discrete Radeon HD 7670M in CrossFire.

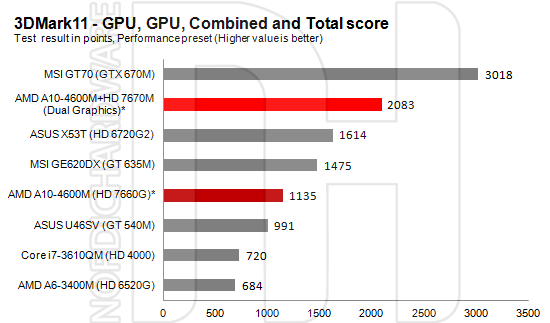

So how well does the APU perform? The folks at Nordichardware extracted the 3DMark 11 scores from the leaked Korean slide and combined them with scores from other notebooks for comparison:

Although the test focuses strictly on graphics performance, the integrated GPU alone scores 1135 points in 3DMark 11, a 58 percent increase over Intel's HD 4000 Graphics. But why stop there? Pair it with a Radeon HD 7670M to take advantage of the Dual-GPU feature and the score climbs to 2083 3DMark 11 points. Not bad for an APU. Unfortunately, the slides lack CPU performance details and real-world (non-synthetic) benchmarks, but we'll see all that soon enough. Check out the Korean slides below for all the leaked goodies.
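To put the quoted numbers in perspective, the short Python sketch below works through the arithmetic. The slides do not state an HD 4000 score, so the "implied" figure is only derived from the 58 percent claim, assuming that percentage is measured relative to the HD 4000 result. The function names are hypothetical helpers written for this illustration.

```python
def percent_uplift(new_score: float, old_score: float) -> float:
    """Return how much higher new_score is than old_score, in percent."""
    return (new_score / old_score - 1.0) * 100.0


def implied_baseline(score: float, uplift_percent: float) -> float:
    """Back out the baseline score implied by a quoted percentage uplift.

    Assumes the uplift is expressed relative to the baseline score.
    """
    return score / (1.0 + uplift_percent / 100.0)


# 3DMark 11 scores quoted from the leaked slides.
APU_ALONE = 1135      # A10-4600M with its integrated Radeon HD 7660G
APU_DUAL_GPU = 2083   # HD 7660G paired with a discrete Radeon HD 7670M

print(f"Implied HD 4000 score: {implied_baseline(APU_ALONE, 58):.0f}")
print(f"Dual-GPU gain over APU alone: {percent_uplift(APU_DUAL_GPU, APU_ALONE):.1f}%")
```

Run as written, this prints an implied HD 4000 score of roughly 718 points and shows that adding the HD 7670M raises the score by about 83.5 percent over the APU alone.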

A Bad Day I wonder when integrated GPUs will come with their own VRAM. A mid-range GPU with mid-latency 1600 MHz RAM can stand toe-to-toe with a high-end GPU armed with high-latency 1066 MHz RAM. An integrated GPU with 256-512MB of GDDR5 could easily dominate other integrated GPUs.

danwat1234 The 7660 is probably just a hair slower than the Nvidia 9800M GS in my Asus G50VT. Pretty cool. We'll see how it turns out.

Does anyone know if the Trinity GPU can overclock itself like the cores can?

jacobdrj Darn... Just got an A8 lappy... Waiting for it in the mail... But it was so darned cheap: $400!!!

JAYDEEJOHN When they start using interposers with stacked memory, it will open up the bandwidth.

Next gen, next year hopefully.

dheadley That isn't what he said at all. What I got from it is that an integrated APU would do more with integrated, dedicated GDDR5 graphics memory of higher quality, instead of using shared system memory or lower-quality memory as they do now. Since that has been proven repeatedly with dedicated graphics cards, I would say he is dead on with his comment and you kinda look like the noob.

jaber2 FYI, nothing is leaked unless the company thinks it's a good idea to gauge the reaction. When we have our meetings with Intel reps, they show us slides and such but only tell us verbally about the new things they are working on, and I would usually see those slides on websites marked "leaked" :)

el painto @frozonic

Bad Day was talking about VRAM. It's video RAM, usually built onto the graphics board, and it is usually much faster and higher performing than the RAM you put on the motherboard. He seems to be wondering whether companies might one day build something like that right on the die (as part of the chip) rather than use the computer's standard RAM. It would probably be a small amount, but it could run really fast with low latency. I think it's a fine concept.

The issue right now seems to be that an integrated graphics chip has a less direct connection to its associated memory (which makes for higher latency) than a discrete board does, since a discrete board has everything built together with high-end parts.