Intel, AMD, Nvidia Claim Greenest Supercomputer Technology

The world's most prestigious supercomputers are usually spotlighted in the Top500 list of the world's fastest systems.

But there is also an equally interesting, albeit lesser-known, list that shows tremendous progress in the power efficiency of some supercomputers. Technology from Intel, AMD and Nvidia powers the systems at the top of it.

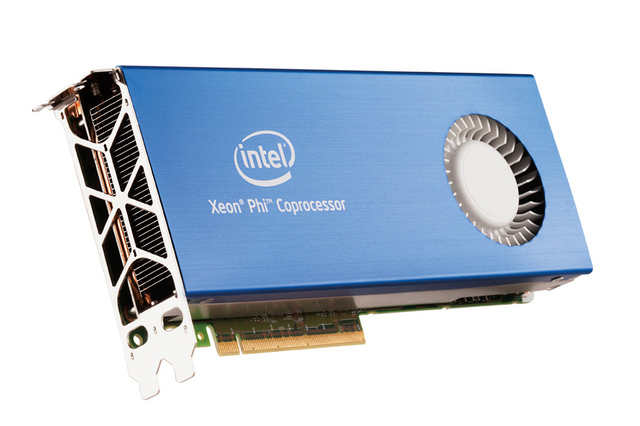

ORNL's Titan may be the world's fastest supercomputer, but it is only the third most efficient, according to the Green500 list. The honor of being the greenest supercomputer system goes to the University of Tennessee and its Beacon system, which is based on Xeon E5-2670 and Xeon Phi 5110P processors. The computer delivers 2,499.44 Mflops per watt.

In second place is King Abdulaziz City for Science and Technology's SANAM supercomputer, based on Xeon E5-2650 processors and 420 dual-GPU AMD FirePro S10000 server graphics cards, with 2,351.10 Mflops per watt.

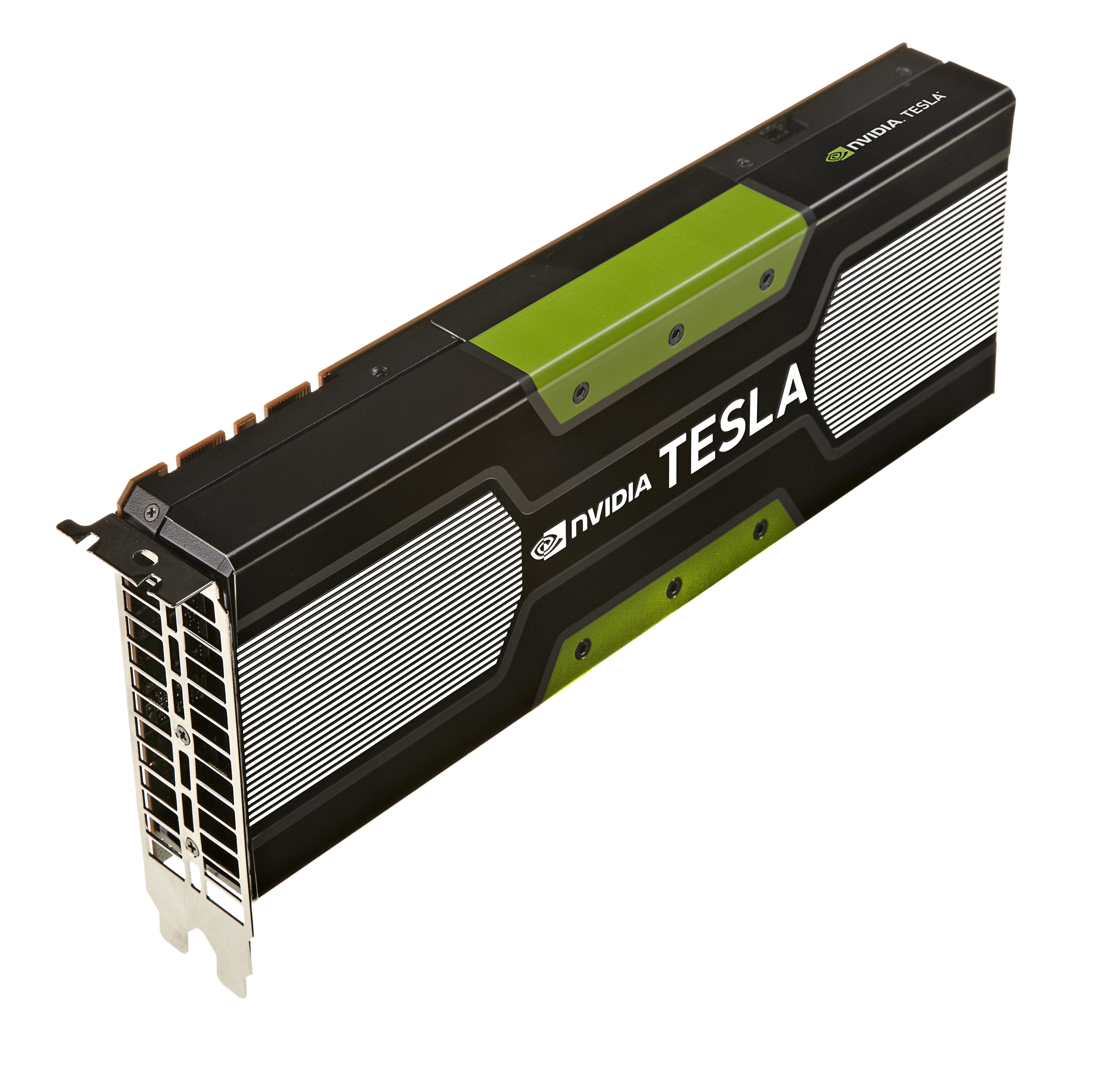

The ORNL Titan, which integrates AMD Opteron 6274 processors and Nvidia Tesla K20x graphics cards, posted 2,142.77 Mflops per watt.

The three systems cannot be compared in their absolute performance. Titan holds position #1 on the Top500 list; SANAM can be found at #52 and Beacon at #253.

Titan (560,640 CPU cores, 46,6 million Nvidia CUDA processors) delivers a sustained performance of 17.6 Pflops, while SANAM (38,400 CPU cores, 1.5 million AMD stream processors) is rated at 421 Tflops, and Beacon (9,216 CPU cores, undisclosed number of Xeon Phi 5110P cards with 60 cores each) at 110.5 Tflops.
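The efficiency and performance figures quoted above can be combined into a rough estimate of how much power each system draws. The sketch below simply divides sustained performance by Mflops per watt. It assumes both numbers refer to the same benchmark run, which the article does not state, so treat the results as ballpark values. The `SYSTEMS` table and the `implied_power_kw` helper are illustrative names, not part of any list's methodology.

```python
# Rough estimate: implied power draw = sustained performance / efficiency.
# All figures are the ones quoted in the article; the names are illustrative.

SYSTEMS = {
    # name: (sustained performance in flops, efficiency in Mflops per watt)
    "Beacon": (110.5e12, 2499.44),
    "SANAM": (421e12, 2351.10),
    "Titan": (17.6e15, 2142.77),
}


def implied_power_kw(sustained_flops, mflops_per_watt):
    """Return the power draw in kilowatts implied by the two ratings."""
    watts = sustained_flops / (mflops_per_watt * 1e6)  # Mflops/W -> flops/W
    return watts / 1e3


for name, (flops, efficiency) in SYSTEMS.items():
    print(f"{name}: roughly {implied_power_kw(flops, efficiency):,.0f} kW")
```

Under that assumption, Titan lands in the megawatt range while Beacon and SANAM stay in the tens to hundreds of kilowatts, which illustrates why the three systems are comparable in efficiency but not in absolute scale.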


Wolfgang Gruener is an experienced professional in digital strategy and content, specializing in web strategy, content architecture, user experience, and applying AI in content operations within the insurtech industry. His previous roles include Director, Digital Strategy and Content Experience at American Eagle, Managing Editor at TG Daily, and contributing to publications like Tom's Guide and Tom's Hardware.


adgjlsfhk: Really, the three biggest chip makers claim the best supercomputers. How shocking. How does the Raspberry Pi one measure up?

bystander, quoting adgjlsfhk: "Really, the three biggest chip makers claim the best supercomputers. How shocking. How does the Raspberry Pi one measure up?"

I'm not sure you read it, but they listed the top 3 most efficient systems. Not what claims to be, but what actually are the most efficient, and within that top 3, all 3 of those brands were present.

bystander, quoting abbadon_34: "eh who cares, supercomputer are about power, or at least power/cost"

Exactly. Power per cost, which is what efficiency is. And the most powerful was #3 on efficiency. Therefore, you may start seeing the most efficient processor setups in the newer supercomputers being built.

A Bad Day, quoting bystander: "Exactly, Power per cost, which is what efficiency is. And the most powerful was #3 on efficiency. Therefore you may start seeing the most efficient processor setups in the newer supercomputers being built."

There's a certain point where the annual electricity cost for powering and cooling the supercomputer exceeds the setup cost of the supercomputer.

And I'm fairly sure we're already past that point.

cjl, quoting adgjlsfhk: "Really, the three biggest chip makers claim the best supercomputers. How shocking. How does the Raspberry Pi one measure up?"

Actually, IBM's absence on this list is rather notable, since their Blue Gene/Q system was the best in efficiency until just recently.

dragonsqrrl, quoting the article: "46,6 million Nvidia CUDA processors"

Titan has 50,233,344 CUDA cores, Gruener, not 46 million. You've already published this number before, and I've already pointed it out to be inaccurate. Where are you getting this number from? It's in neither of the articles you've sourced.

It makes me question the accuracy of other news articles I don't know as much about, because I might not know any better. I think everyone would appreciate it if you and the rest of the news team would make an attempt to correct some of these mistakes from time to time, or at least acknowledge that you've made them.

x3style, quoting dragonsqrrl: "'46,6 million Nvidia CUDA processors' Titan has 50,233,344 CUDA cores Gruener, not 46 million. You've already published this number before, and I've already pointed it out to be inaccurate. Where are you getting this number from? It's in neither of the articles you've sourced. It makes me question the accuracy of other news articles I don't know as much about, because I might not know any better. I think everyone would appreciate it if you and the rest of the news team would make an attempt to correct some of these mistakes from time to time, or at least acknowledge that you've made them."

Where do you think you are? Engadget? THIS IS THG, they are never wrong, the industry is basically not sticking with THG's designs. If THG says it's 46,6 million then it's NVIDIA's fault for putting 50,233,344 in them. ;)