Tom's Hardware's 2007 CPU Charts

Status Quo, Continued

During this time, Intel's manufacturing advantage saved the company from losing substantial ground to AMD. Intel shrank its power-hungry and vastly inefficient NetBurst processors, the Pentium 4 and Pentium D, down to the 65 nm process, while AMD, which has always trailed Intel by at least 12 months in process technology, had to stay with 90 nm at that point. The upside of AMD's lag was its (forced) opportunity to fine-tune the existing process, which resulted in 90 nm AMD processors that exhibited equal or even better idle and low- to medium-load power consumption than even the latest Core 2 processors from Intel. As a consequence, an AMD system can still be more energy efficient than an Intel machine, although Intel usually wins the performance race rather clearly.

A dual-core processor has two significant advantages over a single-core chip: it keeps your system more responsive even when the processor load is high, and it offers up to twice the performance thanks to the second processing unit. However, both the operating system and the applications need to be designed for multi-core processors, which means that tasks (applications) have to be broken down into threads. Not all program code is suitable for thread-level optimization, and threading hides pitfalls: for example, if more than one processing unit attempts to work on the same data, data consistency and race conditions become issues. Also, thread-level optimization doesn't scale well to many cores unless you introduce more complex logic (nodes). Windows Vista doesn't make things any easier, as it was not designed for more than four cores.
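The idea of breaking a task into threads, and the race-condition pitfall that comes with it, can be illustrated with a minimal Python sketch. The function name `parallel_sum` and its chunking scheme are illustrative assumptions, not taken from any particular application. Each thread sums its own chunk privately and only then updates the shared total while holding a lock. Without the lock, the read-modify-write `total += partial` could lose updates when two threads interleave. Note that in CPython the global interpreter lock keeps pure-Python threads from executing in parallel, so this shows the structure of thread-level decomposition rather than an actual dual-core speedup.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


def parallel_sum(data, workers=2):
    """Hypothetical example: split a list into chunks and sum them on separate threads."""
    total = 0
    lock = threading.Lock()

    def worker(chunk):
        nonlocal total
        partial = sum(chunk)  # private work on this thread's own chunk, no sharing
        with lock:            # the shared update must be serialized to avoid a data race
            total += partial

    size = max(1, -(-len(data) // workers))  # ceiling division, at least 1 element per chunk
    chunks = [data[i:i + size] for i in range(0, len(data), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        list(pool.map(worker, chunks))  # consume results so worker exceptions surface here
    return total


if __name__ == "__main__":
    print(parallel_sum(list(range(1_000_001)), workers=2))
```

The design choice worth noting is that the lock protects only the final merge step, so the threads spend almost all of their time on independent data and contend for the shared value only once each.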

Parallel processing is clearly the future, and we're close to using general-purpose ATI or Nvidia graphics processors with programmable units (shaders) to assist the system processor with computationally intensive floating-point calculations, a technology sometimes called GPGPU (general-purpose computing on graphics processing units). Even so, increasing the core count per processor is an evolutionary process, not something that has to be done right away. Four cores have to be fed with data, which is the main reason Intel is increasing the FSB clock from 266 MHz to 333 MHz (FSB1066 to FSB1333). AMD will accelerate its HyperTransport link to provide sufficient bandwidth to multi-core processors, and Intel plans to replace the Front Side Bus with the Common System Interface (CSI), a serial high-bandwidth interconnect. Also remember that four or more cores need to be managed smartly if you want to maintain low idle power consumption and still deliver high performance.
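The FSB ratings translate into peak bandwidth with simple arithmetic. Intel's front side bus is quad-pumped (four transfers per clock) and 64 bits (8 bytes) wide, so FSB1066 peaks at roughly 8.5 GB/s and FSB1333 at roughly 10.7 GB/s. The Python sketch below does this calculation. The function name is hypothetical, and splitting the total evenly across four cores is a simplifying assumption for illustration, since real cores share the bus dynamically.

```python
def fsb_peak_bandwidth_gbs(base_clock_mhz: float,
                           transfers_per_clock: int = 4,
                           bus_width_bytes: int = 8) -> float:
    """Peak bus bandwidth in GB/s (decimal); defaults describe a quad-pumped, 64-bit FSB."""
    transfers_mt_s = base_clock_mhz * transfers_per_clock  # e.g. ~1066 MT/s for FSB1066
    return transfers_mt_s * bus_width_bytes / 1000          # MB/s -> GB/s


if __name__ == "__main__":
    # Nominal base clocks are 266.67 MHz and 333.33 MHz.
    for label, base in (("FSB1066", 800 / 3), ("FSB1333", 1000 / 3)):
        total = fsb_peak_bandwidth_gbs(base)
        per_core = total / 4  # naive even split across a quad core (assumption)
        print(f"{label}: {total:.2f} GB/s peak, ~{per_core:.2f} GB/s per core")
```

Under this naive split, each core of a quad-core chip gets roughly the bandwidth share that a single core would see on a much slower bus, which is why faster links matter as core counts rise.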

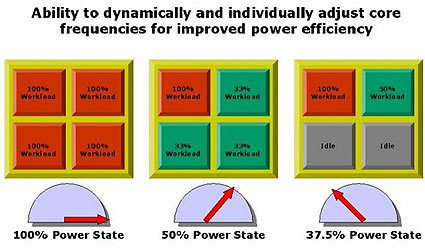

Future quad-core processors, whether AMD's Barcelona or Intel's Nehalem-based Bloomfield, will be capable of throttling and accelerating individual cores according to workload requirements. It will be possible to completely shut down unused units, and individual cores may run faster than the clock speed they would sustain with all four cores busy, so the quad core can run a single-threaded application as quickly as possible. All that counts is staying within the given thermal envelopes, which will settle into three segments: low-power (~35 W), mainstream (50-65 W) and high-end (70-95 W).
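The per-core clocking idea can be illustrated with a deliberately simplified toy model in Python. Everything here is a hypothetical assumption, not AMD's or Intel's actual algorithm. The envelope is fixed, all cores at `base_ghz` exactly fill it, shut-down cores draw no power, and per-core power is assumed to scale roughly with the cube of frequency (voltage tends to rise with frequency, and dynamic power goes with f·V²). The clock values of 2.0 GHz and 2.6 GHz are made up for the example.

```python
def core_clock_ghz(active_cores: int, total_cores: int = 4,
                   base_ghz: float = 2.0, max_ghz: float = 2.6) -> float:
    """Toy model (hypothetical): clock of each active core under a fixed power envelope."""
    if not 1 <= active_cores <= total_cores:
        raise ValueError("active_cores must be between 1 and total_cores")
    # Assumption: all cores at base_ghz exactly fill the envelope and gated
    # cores draw nothing, so each active core may use this many times its
    # nominal power share. The envelope's wattage cancels out of the ratio.
    power_ratio = total_cores / active_cores
    # Assumption: power ~ f^3, so frequency scales with the cube root of power.
    clock = base_ghz * power_ratio ** (1 / 3)
    # Electrical and timing limits cap the boost regardless of spare power.
    return min(clock, max_ghz)


if __name__ == "__main__":
    for n in range(1, 5):
        print(f"{n} active core(s): {core_clock_ghz(n):.2f} GHz")
```

With these assumptions, one active core hits the 2.6 GHz cap, two active cores land around 2.52 GHz, and four active cores stay at the 2.0 GHz base clock, which is the behavior described above for single-threaded workloads.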

AMD's quad-core Barcelona will presumably be the first multi-core processor to introduce flexible management that speeds up or stops individual cores to find the best operating mode for a given workload.


Patrick Schmid was the editor-in-chief for Tom's Hardware from 2005 to 2006. He wrote numerous articles on a wide range of hardware topics, including storage, CPUs, and system builds.