Far Cry 3 Performance, Benchmarked

The third installment of the Far Cry series is more impressive as a game (to us, at least) than its predecessor. But do you need really powerful hardware to enjoy it? We benchmark 15 different graphics cards and eight different CPUs to find out.

High-Detail Benchmarks

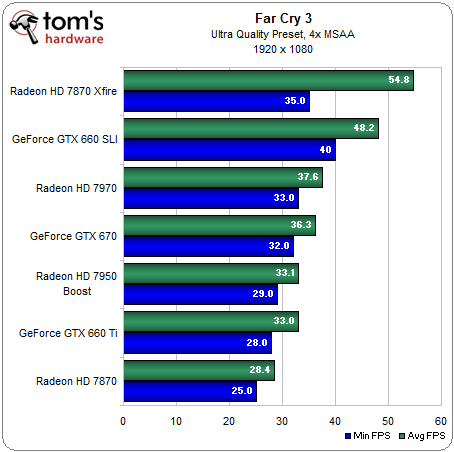

We run our high-detail benchmarks using Far Cry 3's Ultra quality preset, with the addition of 4x MSAA.

AMD's Radeon HD 7870 generally hovers under 30 FPS, below our rough target for playability. Meanwhile, the GeForce GTX 660 Ti and Radeon HD 7950 with Boost are only a little bit quicker. Even the powerful Radeon HD 7970 and GeForce GTX 670 are humbled by average frame rates just above 35 FPS. Only the two Radeon HD 7870s in CrossFire and GeForce GTX 660 cards in SLI manage to generate averages in excess of 45 FPS.

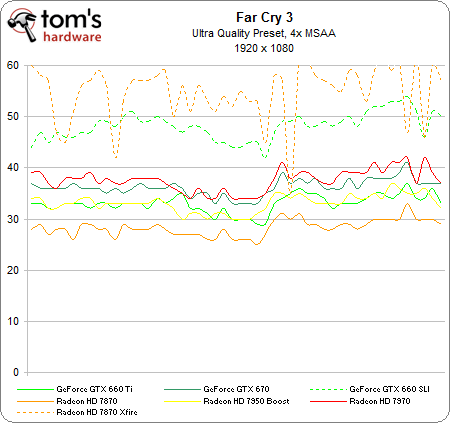

Speaking of multi-card solutions, notice that the Radeons achieve higher average results, but suffer lower minimum frame rates. In the frame rate-over-time chart, you can see that the GeForce boards in SLI yield smoother numbers than AMD's cards, which are not as consistent.
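To make the average-versus-minimum distinction concrete, here is a minimal Python sketch of how the two figures can be pulled from a frame rate-over-time log. The function name `summarize_fps` and both sample lists are hypothetical, invented purely for illustration; they are not measurements from these benchmarks.

```python
from statistics import mean, pstdev


def summarize_fps(samples):
    """Summarize a list of per-second FPS readings.

    Returns the average, the minimum, and the population standard
    deviation (a rough stand-in for how consistent the run felt).
    """
    if not samples:
        raise ValueError("need at least one FPS sample")
    return {
        "average": mean(samples),
        "minimum": min(samples),
        "stdev": pstdev(samples),
    }


# Hypothetical per-second FPS logs, made up for this example only.
spiky = [60, 58, 30, 62, 60]    # higher peaks, one deep dip
steady = [50, 51, 49, 50, 50]   # lower peaks, very consistent

spiky_stats = summarize_fps(spiky)
steady_stats = summarize_fps(steady)

print(spiky_stats["average"], spiky_stats["minimum"])
print(steady_stats["average"], steady_stats["minimum"])
```

In this made-up data, the spiky run wins on average frame rate but loses on minimum frame rate and on consistency, which is the same pattern the paragraph above describes for the multi-card setups.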

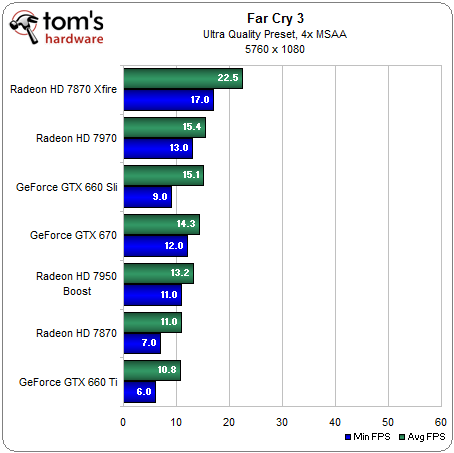

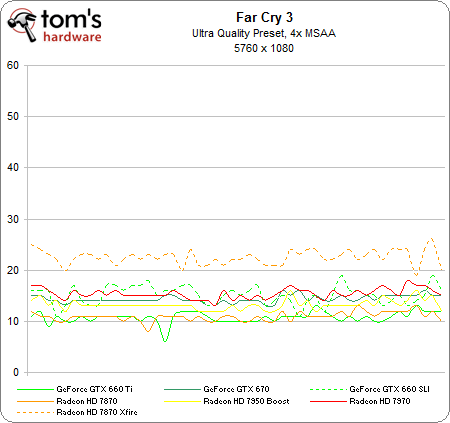

None of these cards are able to handle 5760x1080 using Far Cry 3's most demanding settings.

Although the Radeon HD 7970 and GeForce GTX 670 manage playable results at lower detail presets, I don't think we'll see a GPU able to handle this title at its Ultra detail settings using three screens until the next generation of hardware shows up.

We should also mention that we experienced some texture anomalies on the GeForce cards at this detail level. None of the results are playable, so the issue isn't particularly significant. But we did see something similar when Battlefield 3 debuted, requiring a driver revision from Nvidia to fix.


Don Woligroski is a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

-

sugetsu "The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i3-2100 (never mind the fact that the Core i3 costs $90 less)."

My God... Are the reviewers of this website paid to make AMD look bad? Any person with a minimum hint of common sense can clearly see that there is virtually no difference between FX 8350, the i3, the i5 and i7. This is a big disservice to the community.

-

rdc85 :D

I think it should read like this:

"The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i7-3960X (never mind the fact that the Core i7 costs more than $500)."

hehe....

Anyways, good review...

-

Tom Burnqest

sugetsu wrote: "The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i3-2100 (never mind the fact that the Core i3 costs $90 less)." My God... Are the reviewers of this website paid to make AMD look bad? Any person with a minimum hint of common sense can clearly see that there is virtually no difference between FX 8350, the i3, the i5 and i7. This is a big disservice to the community.

LOL truthed! I bet that 8350 when OCed can even close the tiny gap between it and the Intel processors. Can the i3 OC? I don't think so.

-

echondo Why did the benchmark go from Medium straight to Ultra? Why not High settings? Now I don't know how well my 7870 will do on High at 1080p. It does pretty well at Medium, but then gets destroyed with everything else on Ultra/high resolution.

Why no middle ground? And why no 7970/680 tests in Crossfire/SLI? Why use single flagship cards, but then only use SLI/Crossfire for the medium bunch?

I'm very glad to see that this game uses Crossfire/SLI effectively: a ~50% increase in performance for dual-GPU configurations.

-

EzioAs I've heard that FC3 was a demanding game, but I never realized that the Ultra settings were SUPER demanding. Anyways, I've heard a lot of good things about this game, so maybe I'll give it a try.

Thanks Don for the great review as always.

-

Heironious 2 x 2GB Galaxy GTX 560s in SLI with everything maxed in game and control panel gives around 35 FPS average (4x MSAA only, though). Ran the cards to 78, which is fine. Turned it down in the NVIDIA control panel to get steadier frames. Not the best looking game you've seen? I think it looks better than even BF 3.

Edit: These still screenshots don't do it justice.

-

sayantan This game can be really demanding on the CPU depending upon the environment. In a firefight that involves flamethrowers and explosions along with some AIs, you can see the frame rate drop from 60 to 40 in no time. Also, I would like to mention that the game stutters like hell with anything below 60 FPS. Even 57-58 FPS is unplayable and gives me a headache. So it is essential to tweak the settings such that the FPS is above 60 most of the time. The good thing is that if you have a decent system, you can maintain 60 FPS without losing too much visual fidelity. I can run the game at 0x AA @1080p with all other details maxed out using an OCed 7970 (1060, 1575) and 2500K (4.0 GHz).

-

sayantan

sugetsu wrote: "The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i3-2100 (never mind the fact that the Core i3 costs $90 less)." My God... Are the reviewers of this website paid to make AMD look bad? Any person with a minimum hint of common sense can clearly see that there is virtually no difference between FX 8350, the i3, the i5 and i7. This is a big disservice to the community.

rdc85 wrote: I think it should read like this: "The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i7-3960X (never mind the fact that the Core i7 costs more than $500)." hehe.... Anyways, good review...

The good thing is the game doesn't scale up with Intel CPUs, making the 8350 look really good in comparison.

-

sharpies

sugetsu wrote: "The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i3-2100 (never mind the fact that the Core i3 costs $90 less)." My God... Are the reviewers of this website paid to make AMD look bad? Any person with a minimum hint of common sense can clearly see that there is virtually no difference between FX 8350, the i3, the i5 and i7. This is a big disservice to the community.

Dude, the writer is only trying to point out that using a dual-core i3 is more meaningful than using the 8-core FX-8350. And BTW, it's common sense that the latest games don't even benefit from so many cores. Stop moaning about whether or not the writer is an Intel fanboy, because AMD performed well in the GPU section.