Web Browser Grand Prix: Firefox 15, Safari 6, OS X Mountain Lion

Today we're breaking out the Hackintosh for our first-ever Web Browser Grand Prix on Apple OS X 10.8 (Mountain Lion). How will Chrome 21, Firefox 15, Opera 12.02, and Safari 6 stack up against each other, and against IE9 and the rest of the Windows 7 browsers?

JavaScript Performance


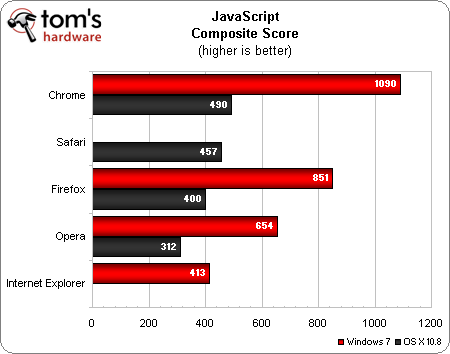

Composite Scoring

The JavaScript composite is the geometric mean of the four JavaScript performance test results (RIABench, Peacekeeper, Kraken, and SunSpider), multiplied by one thousand (to create nice, whole number scores).
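As a worked sketch of that formula: with four results r1 through r4, the composite is 1000 × (r1 × r2 × r3 × r4)^(1/4). The Python below assumes each result has already been normalized so that higher is better; the article does not say how the time-based tests (Kraken and SunSpider report milliseconds, where lower is better) are converted, and rounding to a whole number is also our assumption. The `composite_score` name is hypothetical.

```python
import math


def composite_score(results):
    """Geometric mean of positive benchmark results, scaled by 1000.

    Assumes every result is already normalized so that higher is better.
    """
    if not results:
        raise ValueError("need at least one result")
    if any(r <= 0 for r in results):
        raise ValueError("geometric mean requires positive values")
    # Mean of the logarithms, then exponentiate: this equals the n-th root
    # of the product but cannot overflow when the product gets large.
    log_mean = sum(math.log(r) for r in results) / len(results)
    # Scale by 1000 and round (rounding is an assumption) for a whole-number score.
    return round(math.exp(log_mean) * 1000)
```

A geometric mean also keeps one test with large raw numbers from dominating: doubling any single result raises a four-test composite by the same factor, 2^(1/4) or roughly 19 percent, regardless of which test it is.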

Predictably, Chrome takes the lead in JavaScript performance on OS X, with Safari a close second. Firefox grabs third place, and Opera finishes last.

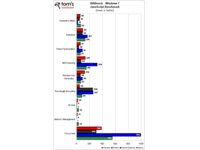

Once again, the Windows 7 scores all dwarf the OS X scores. Chrome remains the leader on Windows, followed by Firefox. About 200 points behind Firefox is Opera in third place, followed by last-place finisher IE9.

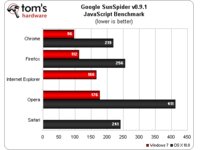

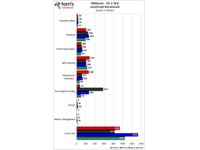

Drill Down

The charts below show the individual JavaScript benchmarks (Peacekeeper, Kraken, and SunSpider), followed by RIABench JavaScript, for both OS X and Windows 7.

Chrome leads every JavaScript performance test except Peacekeeper, where Safari wins by a hair. On OS X, Firefox has a poor showing in Run-length Encoding, while Opera lags in the Focus test. IE9 scores considerably lower than the other browsers in the Focus and MD5 Hashing tests.

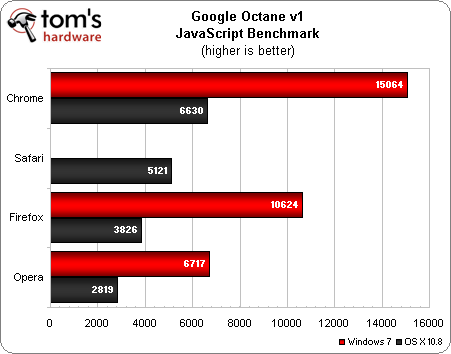

Google Octane

About a week ago Google introduced its new JavaScript performance benchmark, dubbed Octane. This new benchmark contains the eight tests that make up the older V8 JavaScript benchmark, along with five new tests. Unfortunately, IE9 cannot run Octane, and the IE10 RTM build cannot finish the test. Therefore, Octane will not be included in the JavaScript composite until it functions with the current version of Internet Explorer.
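For the composites to be comparable, every browser has to be scored on the same set of tests, so a benchmark that one browser cannot run or finish has to be left out. A minimal sketch of that inclusion rule follows, assuming results are stored per browser as a dict of benchmark name to score; the data layout, the `shared_benchmarks` name, and the placeholder scores are hypothetical, not Tom's Hardware's actual tooling or measurements.

```python
def shared_benchmarks(results):
    """Return, sorted, the benchmarks that every browser completed.

    results maps browser name -> {benchmark name: score}. A benchmark a
    browser could not run or finish is simply absent from its dict.
    """
    if not results:
        return []
    # Keep only the benchmark names present in every browser's results.
    common = set.intersection(*(set(scores) for scores in results.values()))
    return sorted(common)


# Hypothetical placeholder scores, not measured results.
results = {
    "Chrome 21": {"Kraken": 1.0, "SunSpider": 1.0, "Octane": 1.0},
    "IE9": {"Kraken": 1.0, "SunSpider": 1.0},  # IE9 cannot run Octane
}
print(shared_benchmarks(results))  # ['Kraken', 'SunSpider']
```

Once IE can complete Octane, its entry gains an "Octane" key and the test passes the filter automatically.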

Chrome takes the lead on both operating systems, followed by Safari on OS X and Firefox on Windows 7. Firefox snatches third place on OS X, while Opera takes third on Windows and fourth on OS X.

While Chrome dominates the Windows 7 results, the OS X results are much more in line with the other JavaScript performance benchmarks.


Eggrenade: It would be nice if I could view the additional charts with only one click, and not in a separate window.

lahawzel: It's nice to see Chrome performing so well, but I'm still waiting on the Chrome equivalents of all the plugins I use in FF before I think about switching. The web just doesn't feel the same without them.

(The nice popular ones like ABP, Lazarus, Greasemonkey all have equivalents; some lesser-used plugins like Rikaichan also have ports by now. Only a matter of time!)

bennaye: chrome is absolutely deserving of the award. say what you will about the frequent patch releases touted as upgrades, chrome is a very good browser, as shown by this month's article. even on OSX there is only a small margin separating chrome and safari. but the one qualm i do have with chrome is the lack of add-ons compared to firefox. and i a lot of people share this concern. the add-ons do make the experience that much better.

as always, a great read.

adamovera, replying to bennaye: All versions of Chrome hold up incredibly well cross-platform; if you look back at the two Linux WBGPs, it won there, too. Thanks for reading!

adamovera, quoting AdamsTaiwan ("Would like to see this again after IE10 is released."): Absolutely, a Windows 8-based WBGP is already in the cards for October.

adamovera, quoting JOSHSKORN ("How about 64-bit Internet Explorer 9 vs Waterfox 15.0?"): When we have more stable 64-bit browsers, I'll definitely do a 64-bit WBGP, including versus their 32-bit counterparts.

I wish Tom's would fiddle around with the settings of these browsers for these tests. In every System Builder Marathon you overclock the builds, why not try and crank the most speed while ensuring better memory management out of the browser as well?

Testing these browsers at stock doesn't reveal even an eighth of the picture.