Game Developers Conference 2011: In The Trenches

Biofeedback In Game Play

Early attempts at biofeedback in games have met with mixed results. Perhaps the best-known effort comes from NeuroSky, though its headset sensor hasn’t gained traction in mainstream gaming.

At least one major game developer is diving deeper into the topic. Mike Ambinder works as a research psychologist for Valve, looking into the potential for biofeedback in games. We’re not talking about games where you relax and concentrate on moving a little ball around the screen, either.

Why would you want biofeedback in a game like Left 4 Dead 2? Games engage our emotions on a moment-by-moment basis, and using those emotions as an input gives games the potential for more dynamic and immersive environments. Done right, biofeedback can also help calibrate the game. Automatic difficulty adjustment has historically been very crude, and biofeedback could substantially improve it.
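As a rough illustration of biofeedback-driven difficulty adjustment, here is a minimal sketch. It assumes a normalized arousal reading between 0 and 1. The function name `adjust_difficulty`, the target arousal of 0.6, and the gain are hypothetical values chosen for illustration, not anything Valve presented. The idea is a simple proportional step: raise difficulty when the player seems under-stimulated, lower it when they seem overwhelmed.

```python
def adjust_difficulty(difficulty, arousal, target=0.6, gain=0.5,
                      lowest=0.0, highest=1.0):
    """Hypothetical proportional difficulty controller (illustrative only).

    difficulty, arousal and target are assumed to be normalized to 0..1.
    If the player is calmer than the target, difficulty goes up; if they
    are more aroused than the target, it comes down.
    """
    error = target - arousal          # > 0 means the player is under-stimulated
    adjusted = difficulty + gain * error
    # Keep the result inside the allowed difficulty range.
    return max(lowest, min(highest, adjusted))
```

Calling it once per evaluation window with a smoothed arousal estimate would steer the game gradually toward the target instead of reacting to every noisy reading; the gain sets how aggressively it does so.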

Psychologists commonly describe emotions along two axes: arousal (the magnitude, or intensity, of the feeling) and valence (its direction, positive or negative). High positive valence corresponds to pleasant emotions, such as happiness. A player high in both arousal and valence would be energetic, jubilant, and engaged, while one low on both (down and to the left when arousal is plotted against valence) is bored or passive.
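The two-axis model lends itself to a simple lookup. In this sketch, both values are assumed to be centered on zero. The "engaged" and "bored" labels follow the description above, while "stressed" and "relaxed" for the other two quadrants are assumptions based on the usual layout of an arousal-valence chart. The function name is hypothetical.

```python
def classify_emotion(arousal, valence):
    """Map an (arousal, valence) reading to a rough quadrant label.

    Both axes are assumed to be centered on 0: positive arousal means more
    energized, positive valence means more pleasant.
    """
    if arousal >= 0 and valence >= 0:
        return "engaged"   # upper right: energetic, jubilant
    if arousal >= 0:
        return "stressed"  # upper left (assumed label): tense, frustrated
    if valence >= 0:
        return "relaxed"   # lower right (assumed label): calm, content
    return "bored"         # lower left: bored, passive
```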

Physiological signals useful for biofeedback include heart rate, skin conductance level (SCL), facial expressions, eye movements, and EEG (electroencephalography, the recording of electrical activity along the scalp produced by neurons firing within the brain). Ambinder went into detail on the pros and cons of a number of these methods, but the key point is that collecting the data isn’t easy, is generally expensive, and, depending on the technique, can be subject to bias. After all, knowing that your emotional state is being tracked can alter that very state.

The coolest part of the whole talk, though, was the demos. Ambinder demonstrated eye tracking in a Portal 2 level. This technique might be more useful to level designers than to players themselves: by tracking where the player looked as she moved through the level, designers could see which areas and objects she considered important.
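One way a level-design tool might summarize eye-tracking data is to total how long the player's gaze rested on each object. This sketch assumes the tracker output has already been paired with a ray cast into the level, producing one object name (or None) per sample at an assumed 60 Hz. `dwell_times` and the object names are hypothetical; this is not Valve's tooling.

```python
from collections import defaultdict


def dwell_times(gaze_hits, sample_interval=1 / 60):
    """Total gaze time per object, most-viewed first.

    gaze_hits: one entry per eye-tracker sample, naming the object the
    gaze ray hit (None if it hit nothing of interest).
    sample_interval: seconds between samples (60 Hz assumed by default).
    """
    totals = defaultdict(float)
    for obj in gaze_hits:
        if obj is not None:
            totals[obj] += sample_interval
    # Sort by accumulated time, largest first.
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)
```

Ranking by dwell time puts the objects players actually studied at the top, which a designer could compare against what the level was meant to draw attention to.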

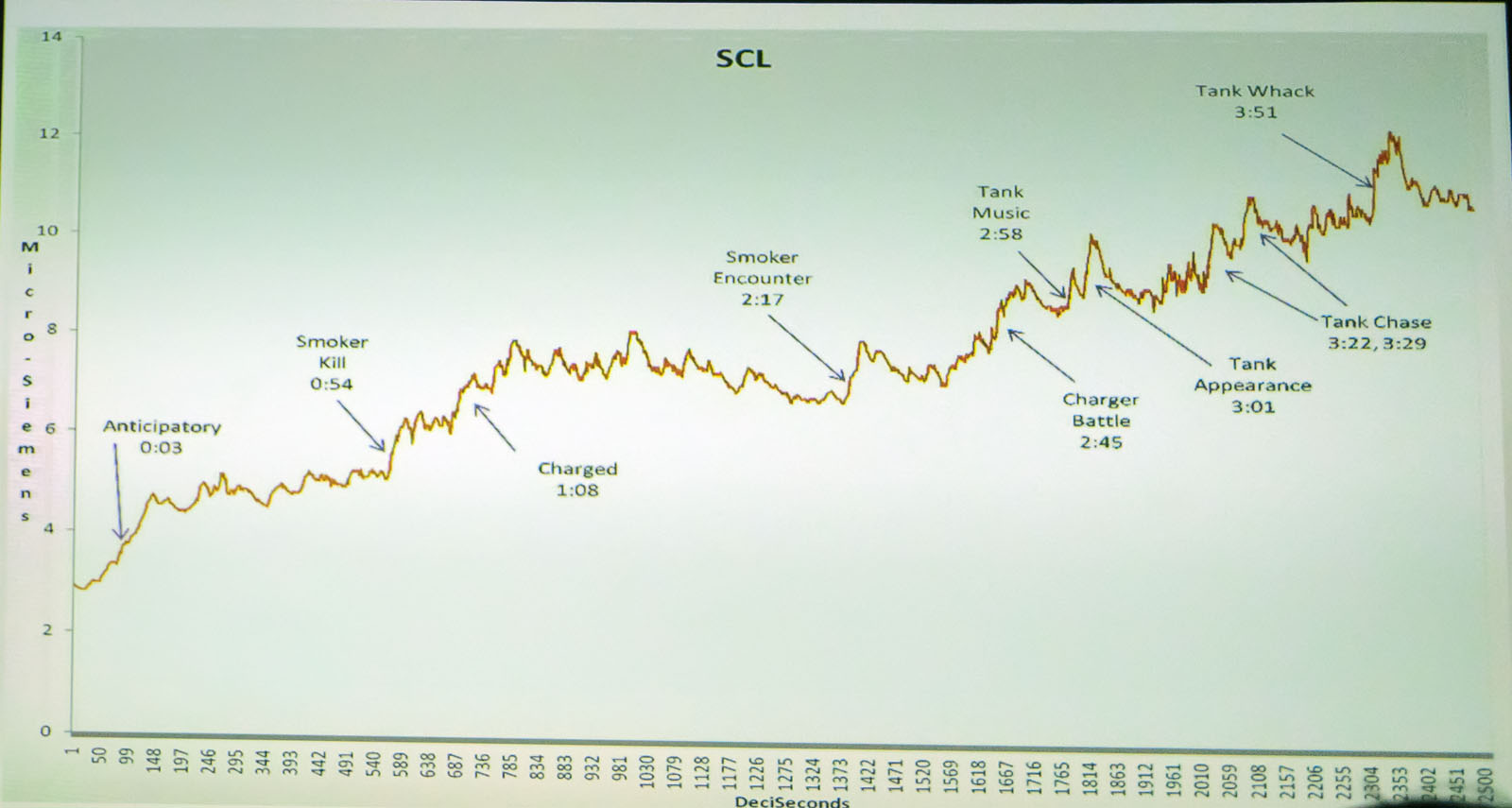

In another demo, more directly applicable to gamers, Ambinder conducted an experiment in which the AI Director in Left 4 Dead 2 was modified to respond to signals from an SCL sensor. The AI Director already tries to respond to player arousal, but only indirectly, through controller inputs and idle time. In the modified version, actual SCL data affected when mobs showed up and even which type of boss zombie the players might encounter.
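A hypothetical SCL-aware pacing rule might look like the following. It is not Valve's AI Director code: the class name, the z-score threshold of 0.5, and the idea of capturing a resting baseline before play are all assumptions. Normalizing each reading against the player's own baseline lets a single threshold work for players whose resting skin conductance differs. It covers only mob timing, not boss selection.

```python
from statistics import mean, stdev


class SclPacer:
    """Hypothetical SCL-based pacing rule (not Valve's AI Director).

    Readings are converted to z-scores against the player's own resting
    baseline, so a single threshold can work across players.
    """

    def __init__(self, baseline_readings, calm_z=0.5):
        # stdev() needs at least two baseline readings.
        self.mu = mean(baseline_readings)
        # Fall back to 1.0 if the baseline is perfectly flat (stdev of 0).
        self.sigma = stdev(baseline_readings) or 1.0
        self.calm_z = calm_z

    def z(self, scl):
        """How many baseline standard deviations this reading sits above rest."""
        return (scl - self.mu) / self.sigma

    def should_spawn_mob(self, scl):
        """Spawn only when arousal is near or below the resting baseline."""
        # A low z-score suggests the player has calmed down, so it may be
        # time to raise the tension again.
        return self.z(scl) < self.calm_z
```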


The graph tracks SCL over the course of one level. You can see sharp rises in the data as mobs or key bosses appear.
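Spotting the sharp rises in a trace like that can be done with a simple windowed threshold. In this sketch, the readings are assumed to be evenly spaced SCL samples, and the window size and minimum rise are arbitrary illustrative values. The function name `sharp_rises` is hypothetical, and this is not how Valve produced its graph.

```python
def sharp_rises(scl, window=5, min_rise=0.3):
    """Indices where SCL climbs at least `min_rise` above the lowest reading
    in the preceding `window` samples.

    A new event is only recorded if it falls more than `window` samples after
    the previous one, so a single surge is not reported many times over.
    """
    events = []
    for i in range(window, len(scl)):
        rise = scl[i] - min(scl[i - window:i])
        if rise >= min_rise and (not events or i - events[-1] > window):
            events.append(i)
    return events
```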

Whether biofeedback will ever go mainstream is open to debate, but Valve’s research certainly demonstrates that building biofeedback into certain types of games may pay off in a better gaming experience.

-

madjimms "Today, games are both highly integrated into the culture, yet apart from it. We clearly see conflicts between the growing gaming culture and those who consider gaming a waste of time, or in the case of some news networks, even dangerous."

I know what "news" network he's talking about. *cough* Fox *cough*

-

radiumburn Still don't understand how Fox can be considered a news network when it seems that its main goal is to push out false information and fear.

-

davewolfgang Wow, you two, this is SUPPOSED to be about gaming. Save your bias against cable channels you don't agree with, or whose show hosts you don't like, for a political website, not Tom's.

-

I don't think biofeedback can help. Every person is different, so you can't measure a game like that; it could be the greatest game for some people and the worst game ever for others...

-

dennisburke FOX is playing the biggest game of all: "Battle For Your Brain". Spending hours watching FOX is more dangerous to society than spending time playing games.

Playing a strategy game is no different than playing chess, other than being treated to a great visual spectacle... is watching a sunset art?

I like playing FPS games... maybe because they remind me of playing 'Hide and Seek' or 'Cowboys and Indians' as a kid... the thrill of the chase, etc.

Art will always be in the eye of the beholder, and it cannot be defined.

-

Khimera2000 This is all cool, but to be honest, what's really going to happen is that once the new consoles are released, everything spoken at these conferences about quality, new technology, and integration will be lost (unless it's integrated into the consoles).

As it looks from my side, these companies will be tooooo busy trying to program for technology that they all but ignored since the release of the current-generation consoles.

The games coming out will be full of leaks, bugs, and problems, and then when they get ported to PC it will be made worse.

There is nothing impressive happening in the game industry. They can talk all they want, but like Da Vinci, if all you do is talk and draw pictures, that's all it will ever be: talk and fluff.

Once they use what's out there, then I'll start listening. Till then, I would be terrified to try anything these companies pump out, just because of the chance of bugs destroying the experience.

-

sudeshc In my opinion, games are art: it all starts with a good concept/story and takes it to another level. Games are inspired by culture, but more or less by global culture. We can or cannot say they are a waste of time or dangerous; as all of us know, "too much of anything is not good," and there are always exceptions.