Benchmarked: How Well Does Watch Dogs Run On Your PC?

Watch Dogs is one of the most anticipated games of 2014. So, we're testing it across a range of CPUs and graphics cards. By the end of today's story, you'll know what you need for playable performance. Spoiler: this game is surprisingly demanding!

Low Settings Are Playable; High Details Are Demanding

Watch Dogs is a surprisingly demanding game, particularly when you consider the console hardware it's also running on. But at its most entry-level detail settings and a 1280x720 resolution, you can get by with a Radeon R7 240 or GeForce GT 630 GDDR5. At 1920x1080, you want a GeForce GTX 650 or Radeon R7 250X to keep your nose above 30 FPS. But a Radeon R7 260X or GeForce GTX 750 Ti is going to save you from a lot of the stuttering we observed.

Step up to the highest detail levels, though, and you'll want a Radeon R9 270 or GeForce GTX 760 to run at 1080p. At any resolution higher than that, shoot for high-end hardware like the Radeon R9 290 or GeForce GTX 780. Indeed, the best experience we had was with Nvidia's GeForce GTX Titan overclocked to achieve performance similar to a GeForce GTX 780 Ti.

Even with the Ultra detail preset enabled, which you'd think would shift the workload toward graphics, a strong host processor is surprisingly critical. While the Core i3-3220 and FX-4170 only mustered a 24 FPS minimum, the FX-6300 almost hit 30. The FX-8350 and Core i5-3550 managed a more tolerable 37-38 FPS, and Intel's Core i7-3960X led by not dropping under 51 FPS.

Of course, you can mitigate the performance hit by lowering your detail settings, bringing frame rates back up, but that somewhat defeats the purpose of gaming on a PC. Make sure you have an FX-6000-series CPU at minimum to enjoy Watch Dogs at higher graphics quality settings, but a Core i5 or FX-8000 would be much better. The publisher recommends a Core i7 for the best possible performance, and we have to agree.

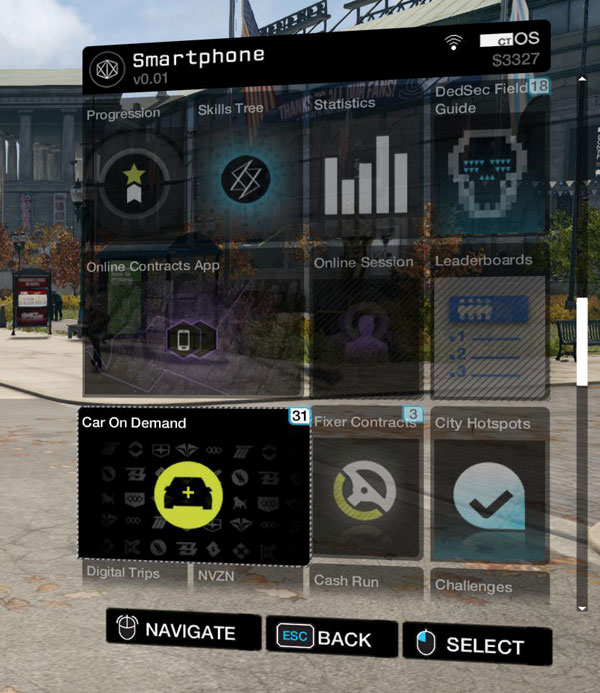

As for the game itself, Watch Dogs is a little bit of GTA with a hearty helping of Deus Ex and a dash of Far Cry 3. I wouldn't go so far as to say it's any better than those titles, but if you're into the sandbox genre, I'm sure you'll find something to enjoy. The PC build sells for $60 on Amazon, and Nvidia bundles it with certain GeForce cards.


Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


coolcole01 Running on my system with ultra and highest settings and FXAA, it is pretty steady at 60-70 fps with weird random drops almost perfectly to 30 and then back up to 60, almost like adaptive sync is on. Currently playing it with the textures at high, HBAO+, and SMAA, and it's a pretty rock-steady 60 fps with vsync, still with the random drops.

edwinjr why no core i5 3570k in the cpu benchmark section?

the most popular gaming cpu in the world.

chimera201 So a Core i5 is enough compared to Ubisoft's recommended system requirement of i7 3770

jonnyapps What speed is that 8350 tested at? Seems silly not to test OC'd as anyone on here with an 8350 will have it at at least 4.6

Patrick Tobin Most 780Ti cards come with 3GB of ram, the Titan has 6GB. This is an unfair comparison as the Titan has more than ample VRAM. Get a real 780Ti or do not label it as such. HardOCP just did the same tests and the 290X destroyed the 780 since the FSAA + Ultra textures started causing swapping since it was pushing past 3GB.

tomfreak If u dont have 780ti, 780, just show us stock Titan speed. Why would u rather show us Titan OCed speed than showing Titan stock speed & all that without showing 290X OCed speed? In fact an OCed Titan does not represent a 780Ti, because it has 6GB VRAM. Vram is a big deal in Watch Dogs. So ur OCed Titan does not look like a 780ti nor a real Titan.

AndrewJacksonZA Hi Don

Please could you include tests at 4K resolution, and also please use a real 780Ti and also a 295X2? Can you not ask another lab to do it, or get one shipped to you please?

+1 also on what @Patrick Tobin said.

I can appreciate that you might've spent a lot of time on this review, and we'd really appreciate you doing the final bit of this review. I know that not a lot of gamers currently game at 4K, but I am definitely interested in it please.

Thank you!

Lee Yong Quan why don't you have the high detail setting? and would a 7790 1gb perform the same as 260x 2gb in medium texture? if not which is better