Testing EVGA's GeForce GTX 1080 FTW2 With New iCX Cooler

Does a new heat sink translate to new success? EVGA complements its existing technology with some on-board sensors and gives enthusiasts access to asynchronous fan control.

Power Consumption And Cooling Performance

The table below shows the quick and dirty summary of our measurements:

| Scenario | Power Consumption |
|---|---|
| Idle | 12W |
| Idle (Multi-Monitor) | 15W |
| Blu-ray Playback | 14W |
| Browser-Based Games | 115 to 135W |
| Gaming (Metro Last Light, 4K) | 216W |
| Torture (FurMark) | 238W |

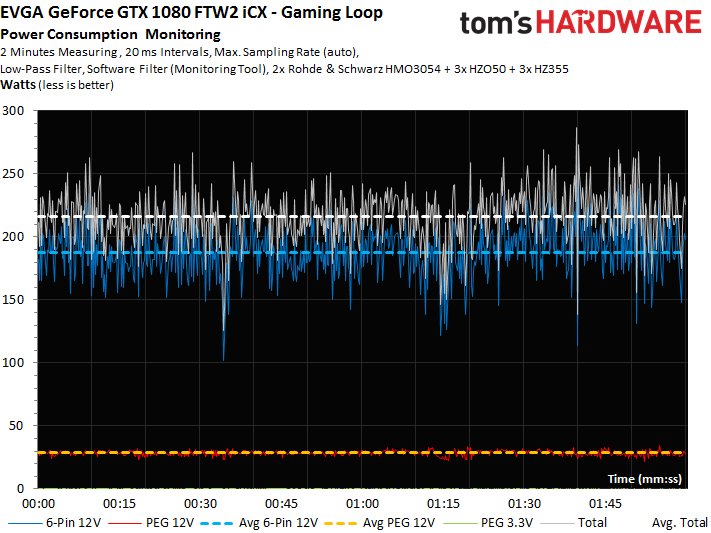

The following chart details power consumption during our Metro Last Light gaming loop:

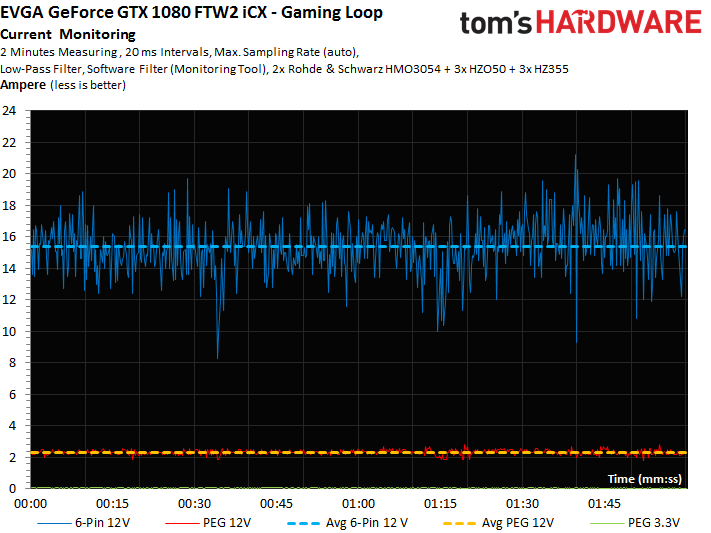

The measured current comes almost exclusively from the external power connectors, and not the motherboard slot.

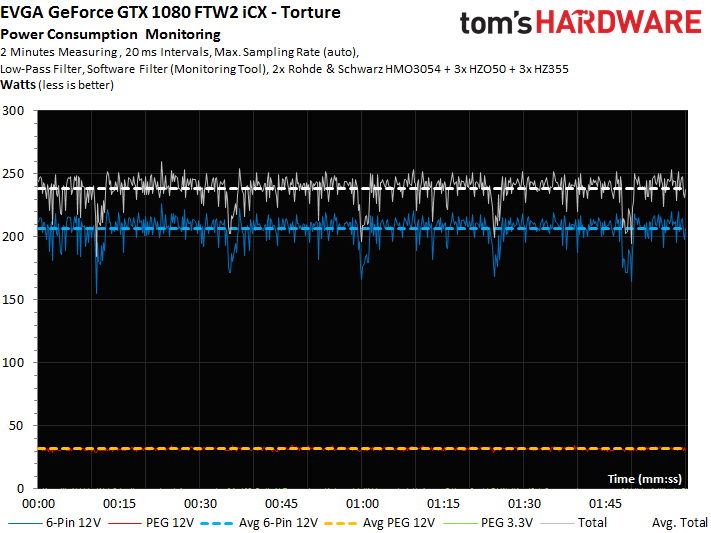

In our stress test, EVGA’s card is held to a maximum of almost 240W by its power limit. If the power target is raised using the right tools, it’s possible to hit 250W. EVGA could have delivered this with one eight-pin and one six-pin connector as well; it just wouldn’t look as good.
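A rough way to check that claim is to add up the nominal PCI-SIG ratings: 75W for the x16 slot, 75W per six-pin and 150W per eight-pin connector. The Python sketch below compares those budgets with the 238W we measured under FurMark and the roughly 250W reachable with a raised power target. It is a back-of-the-envelope tally of spec ratings, not a model of how this particular board distributes its load; the eight-plus-eight layout is included only for comparison, and the function name `board_power_budget` is illustrative.

```python
# Nominal PCIe power ratings in watts (PCI-SIG values).
SLOT_W = 75
CONNECTOR_W = {"6-pin": 75, "8-pin": 150}


def board_power_budget(connectors):
    """Nominal board budget: the x16 slot plus every auxiliary connector."""
    return SLOT_W + sum(CONNECTOR_W[c] for c in connectors)


# Measured figures from this page (FurMark with stock limit, raised power target).
measured_w = {"stress test (stock limit)": 238, "raised power target": 250}

if __name__ == "__main__":
    for layout in (["8-pin", "6-pin"], ["8-pin", "8-pin"]):
        budget = board_power_budget(layout)
        for label, watts in measured_w.items():
            print(f"{' + '.join(layout)}: {budget}W budget, {label} {watts}W, "
                  f"{budget - watts}W headroom")
```

Even the smaller layout leaves headroom on paper, which is why the choice comes down more to appearance than to necessity.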

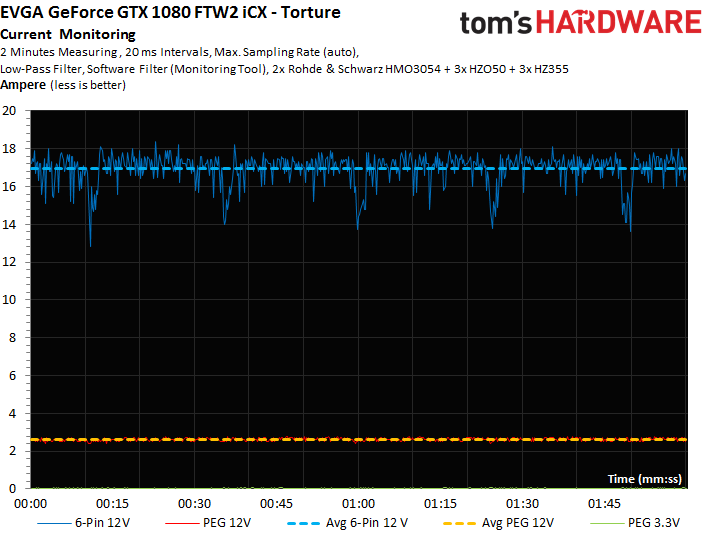

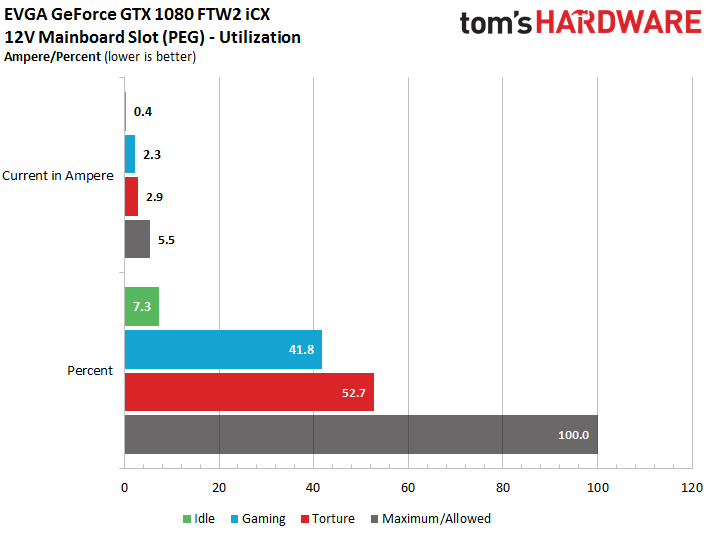

Naturally, we also measured the current flow in our stress test:

This is the motherboard slot’s utilization. 100% represents 5.5A, the maximum load on the slot’s 12V rail according to the PCI-SIG’s specification; at a stable 12V, that works out to 66W (5.5A × 12V). The 75W often quoted as the ceiling is the slot’s total rating, which also includes its 3.3V rail (up to 3A, or roughly 10W).
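The percentage in the chart is simply the measured 12V slot current divided by the 5.5A limit. A minimal Python sketch of that conversion, assuming a perfectly stable 12V rail; the function names and sample currents are illustrative, not taken from our measurements:

```python
SLOT_12V_LIMIT_A = 5.5   # PCI-SIG limit for the x16 slot's 12V rail
NOMINAL_12V = 12.0       # assumes a perfectly stable 12V supply


def slot_utilization_pct(current_a: float) -> float:
    """Share of the 5.5A limit drawn by a measured 12V slot current, in percent."""
    return 100.0 * current_a / SLOT_12V_LIMIT_A


def slot_power_w(current_a: float, volts: float = NOMINAL_12V) -> float:
    """Power delivered through the slot's 12V rail at the given voltage."""
    return current_a * volts


if __name__ == "__main__":
    print(f"Limit: {slot_power_w(SLOT_12V_LIMIT_A):.0f}W at {NOMINAL_12V:.0f}V")
    for amps in (1.0, 2.75, 5.5):  # hypothetical sample currents, not measured data
        print(f"{amps:.2f}A -> {slot_utilization_pct(amps):.0f}% "
              f"({slot_power_w(amps):.1f}W)")
```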

Cooling Performance

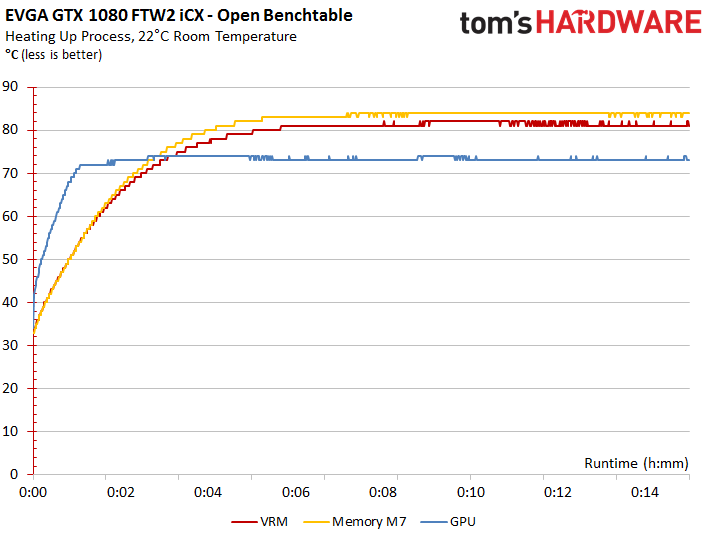

After a long technical introduction, we finally come to what EVGA sees as the main feature of its slightly more expensive iCX model. After exercising the card on an open test bench, we plot the temperature curve using EVGA’s software.


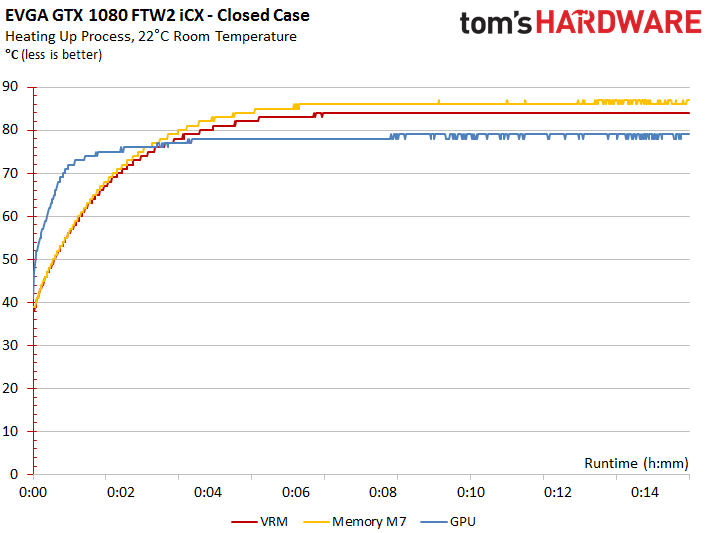

The chart below includes what we consider to be the most important readings: the GPU, the hottest voltage regulator, and the hottest memory module (M7).

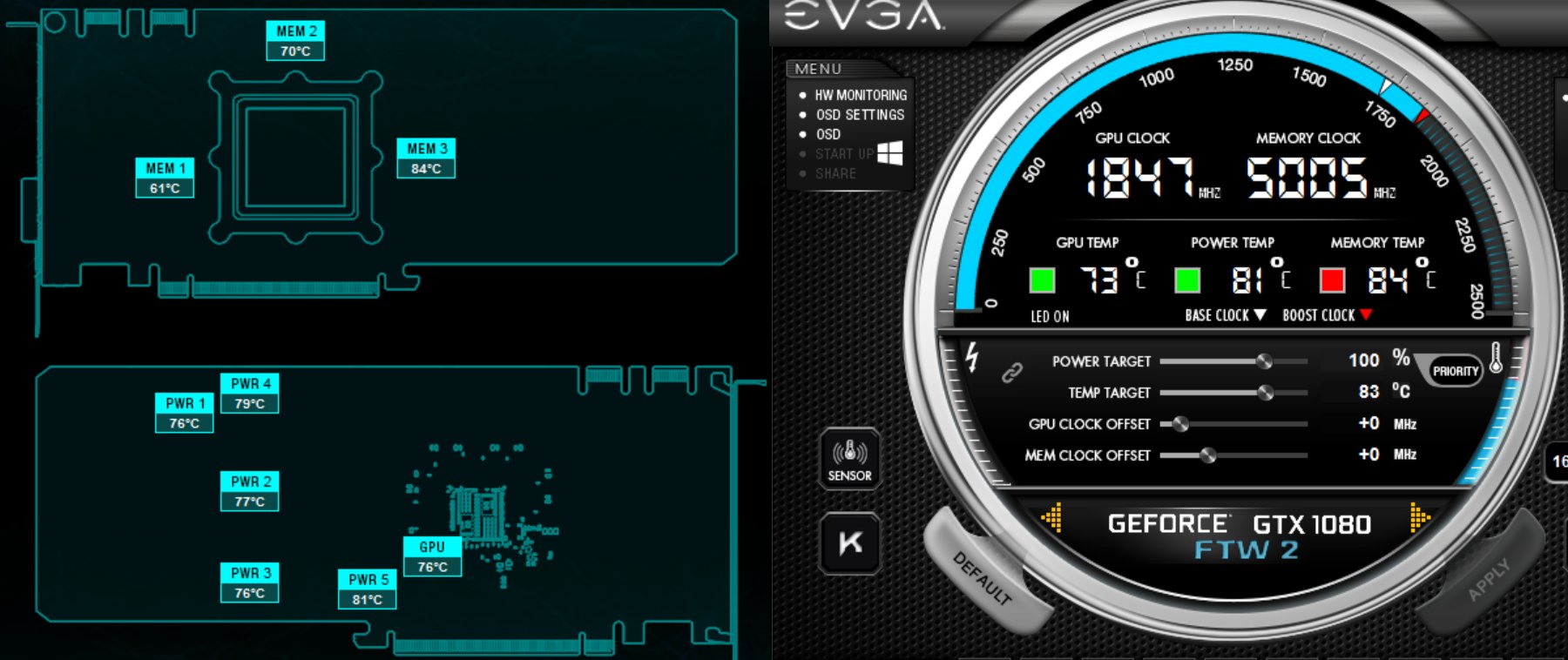

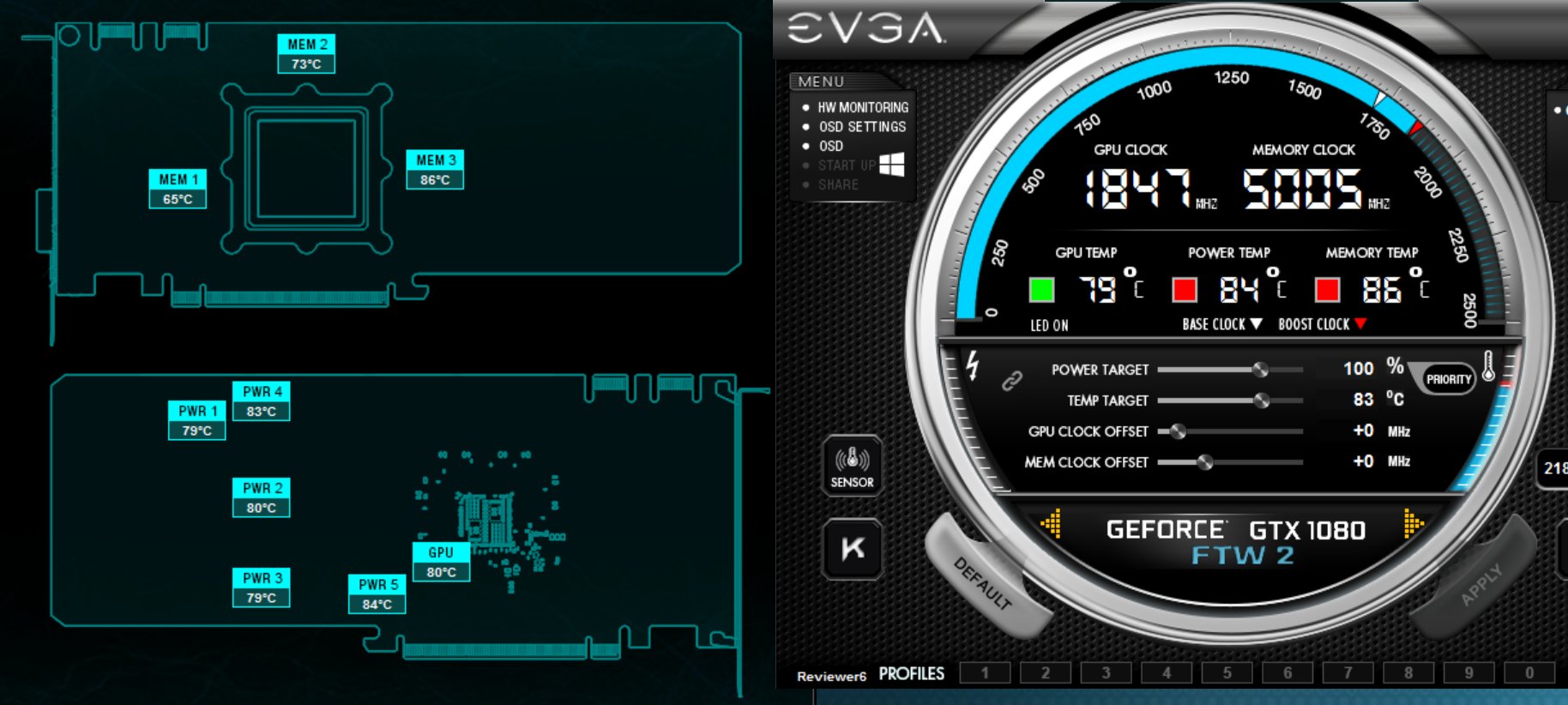

EVGA’s Precision tool also shows us the relevant sensor locations on the board, along with their readouts. Our next question is whether the readings from this interesting new technology agree with real-world measurements.

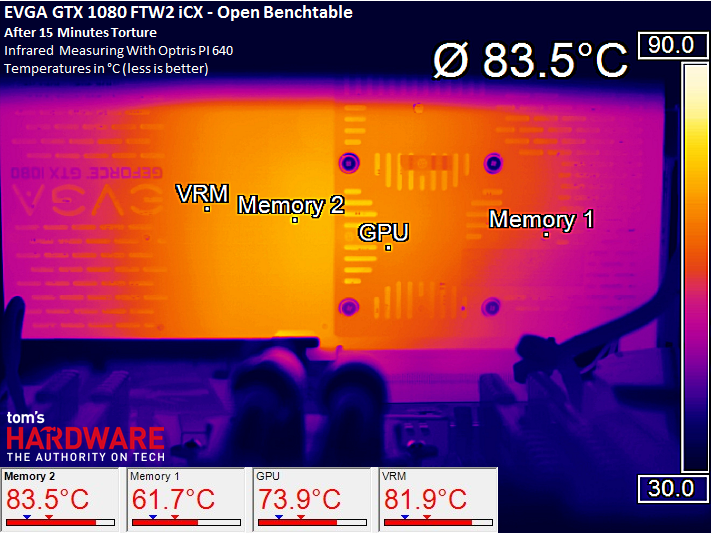

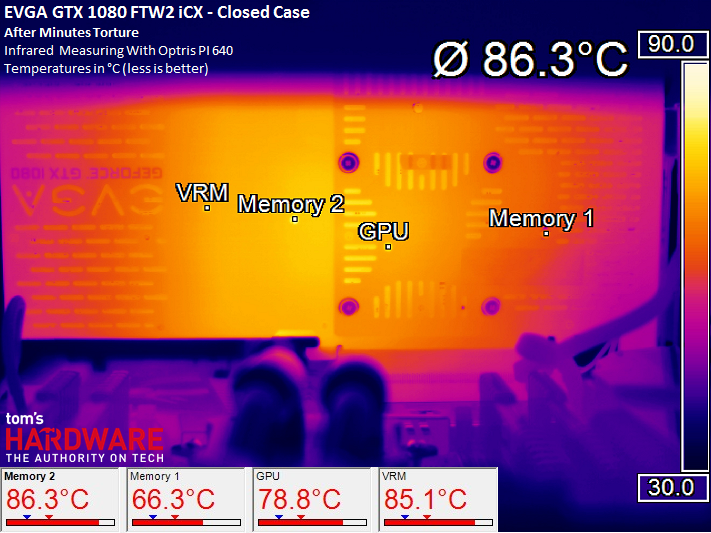

We drilled holes in the three places corresponding to our temperature curves, and then cut the thermal pads where necessary for direct access to the circuit board. Measurements generated with our high-res thermal imaging camera come strikingly close to the sensor data.

Our new test setup, which you’ll read about in the days to come, allows us to collect accurate readings in a closed case. First, consider the temperature curves compiled from the sensors’ log file:

The EVGA tool reliably shows the corresponding values:

And our thermal camera proves that the numbers agree:

Remember that the memory’s thermal ceiling should be 85°C. It’s not surprising, then, that the status LED on top of the card is lit up red.

Granted, this is a worst-case scenario. In games, the memory temperatures were up to six Kelvin lower, while the GPU barely crested 70°C. You can live with this, even if it requires pushing a little more air through the cooler.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.


FormatC Very similar to the ACX after the thermal mod and BIOS flash. And as I wrote: generally a little bit better :)

No idea where all the previous posts went. Horrible tech...

redgarl My first GTX 1080 FTW exploded. These cards had huge issues due to the cooler.

FormatC I don't think any card can explode if you use it in a normal case with a good PSU. Normally ;)

The main problem is always the cooler philosophy. You can see three main solutions on the market:

Most used cooler types:

(1) Sandwich (like EVGA or MSI) with a large cooling plate/frame between PCB and cooler with tons of thick thermal pads

(2) Cooler only, with a separate VRM cooler below the main cooler; memory is mostly cooled via the heatsink

(3) Integrated real heatsink for VRM/coils and a larger GPU heatsink/frame for direct memory cooling (Gigabyte, Palit, Zotac, Galax etc.)

I've been investigating these things for years and have visited a lot of factories in Asia and the HQs of the bigger manufacturers. I'm in contact with a lot of R&D guys at these companies, and we have exchanged and discussed my data over a long time. I remember sitting with the PM and R&D from Brand G in Taipei in 2013 to discuss the first Type 3 coolers, and it was good to see how R&D followed my suggestions:

These were the first coolers with integrated heatsinks for VRM and memory. Later, the concept was improved to include the coils. At first, the problem was the stability of these heavy cards, so they moved to backplates. I also discussed with a few companies using these backplates not only for marketing or stabilization but also for cooling. One of the first cards with thermal pads between PCB and backplate was Sapphire's R9 380X Nitro. Other companies copied this, and the cards with the most thermal pads are now the FTW with the thermal mod and the FTW2. I reported the issues to EVGA in early August 2016, and we had to wait over three months to see the suggested solution on the market.

One of the problems stems from the split development/production process. The PCBs are mostly designed and produced by, or together with, a few big, specialized OEMs. But nobody runs a simulation first to detect possible (design-dependent) thermal hotspots. The cooler industry also works completely separately, and the data exchange is simply poor. Mostly they use (or get) only the main info about the PCB's dimensions, holes and component positions (especially height) and nothing else. This may work if you're lucky, but the chance is 50:50. Other things, like strict cost-down and useless discussions about a few washers or screws (yes, it's not a joke!), create even more potential issues. Companies like EVGA are totally fabless, and it is a very hard job to keep all these OEMs and third-party vendors on the same page. Especially the communication between the different OEMs is mostly too poor or nonexistent.

Another problem is the equipment and how it's used in the R&D departments. When I see pseudo thermal cams (in truth mostly cheap pyrometers with a fake graphical output, not real bolometers) and how the guys use them (wrong angle and distance, wrong or no emissivity factor, no calibrated paint), I'm not surprised by what happens every day. Heat is a real bitch and density its terrible sister. :D
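To illustrate why a wrong emissivity setting matters, here is a simplified radiometric correction in Python. It assumes a grey body, uses the broadband Stefan-Boltzmann relation, and ignores atmospheric absorption and the camera's spectral band, so real instruments with band-specific calibration will differ; the function name `true_temperature_c` is hypothetical. A camera left at an emissivity of 1.0 reports an apparent temperature, and the sketch backs out the object temperature from the actual emissivity and the temperature of the surroundings reflected by the surface.

```python
KELVIN = 273.15


def true_temperature_c(apparent_c: float, emissivity: float, reflected_c: float) -> float:
    """Estimate object temperature (deg C) from a reading taken at emissivity 1.0.

    Simplified grey-body, broadband (Stefan-Boltzmann) model:
        T_app^4 = e * T_obj^4 + (1 - e) * T_refl^4
    Atmospheric absorption and the camera's spectral band are ignored.
    """
    if not 0.0 < emissivity <= 1.0:
        raise ValueError("emissivity must be in (0, 1]")
    t_app = apparent_c + KELVIN
    t_refl = reflected_c + KELVIN
    t_obj4 = (t_app ** 4 - (1.0 - emissivity) * t_refl ** 4) / emissivity
    return t_obj4 ** 0.25 - KELVIN


if __name__ == "__main__":
    # Hypothetical reading: 70 deg C apparent, 25 deg C surroundings.
    for e in (1.0, 0.95, 0.9, 0.8):
        print(f"emissivity {e:.2f}: {true_temperature_c(70.0, e, 25.0):.1f} deg C")
```

With an emissivity below 1.0 and cooler surroundings, the corrected value comes out higher than the apparent reading, which is why an unset or wrong emissivity factor tends to under-report hotspots on low-emissivity surfaces.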

For everyone interested in the development and production of VGA cards:

Over the years I've collected a lot of material, pictures and videos from inside the factories, and I'm now writing, step by step, an article about this industry, its projects, prototypes and biggest failures. But I have to wait for all the permissions, because a few things are (or were) secret, or I wasn't allowed to publish them. But I think it will be worth publishing at the right moment. :)


FormatC The MX-2 is totally outdated, and its performance is much worse in direct comparison with current mid-class products. Its long-term stability (on a VGA card) is also nothing to rely on. The problem with using overly thick thermal grease to bridge bigger gaps (instead of pads) is dry-out: the paste gets thinner and loses contact with the component or heatsink. The point of such products is to form a very thin film between heatsink and heatspreader/die, combined with higher mounting pressure.

I tested the OC stability of the memory modules over the weekend. With the original ACX 3.0 or the iCX, I get no more than 100-150 MHz stable (tested with heavy scientific workloads). With a water block, I was able to overclock the same modules by up to 300 MHz more without errors, and with plenty of headroom. I'm not talking about gaming; some games run with much higher memory clocks. But that isn't really stable, it only seems so, and it's nothing you can work with. :)

FormatC Gelid GC Extreme or Thermal Grizzly Kryonaut. A lot better and not bad for long-term projects. The Gelid must be warmed up a little bit; it's more comfortable if you lack experience. :)

FormatC Too dangerous without experience and not that much better. And it's nearly impossible to clean off later without issues.

Martell1977 I've used Arctic MX-4 on several CPUs and GPUs, and in laptops. In fact, I just used some last night in a laptop that was hitting 100°C under load. I put some of the MX-4 on the CPU and on both sides of the thermal pad on the GPU, and the system now runs at 58°C under load.

FormatC The MX-2 is entry level and the MX-4 mid-class, but both were developed years ago. The best bang for the buck is the Gelid GC Extreme (a lot of overclockers like it), but its handling is not so easy. The Kryonaut is a fresh high-performance product and easier to use (more liquid). It needs only a short burn-in time and performs perfectly from the start. The older Arctic products are simply outdated, though good enough for cheaper CPUs. Nothing for VGA.

I tested it a few weeks ago, also here (how to improve VGA cooling):