P67 Motherboard Roundup: Nine $150-200 Boards

Improved per-clock performance and higher achievable frequencies are sure to put Intel’s latest K-Series CPUs on top of many builders’ wish lists, but they’ll still need a new socket to put them in. We test nine enthusiast-oriented LGA 1155 motherboards.

Test Settings

| Test System Configuration | |

|---|---|

| CPU | Intel Core i7-2600K LGA 1155 3.40-3.80 GHz, 8 MB L3 Cache |

| RAM | Kingston KHX2133C9D3T1K2/4GX (4 GB) DDR3-2133 at DDR3-1600 CAS 7-7-7-21, 1.60 V |

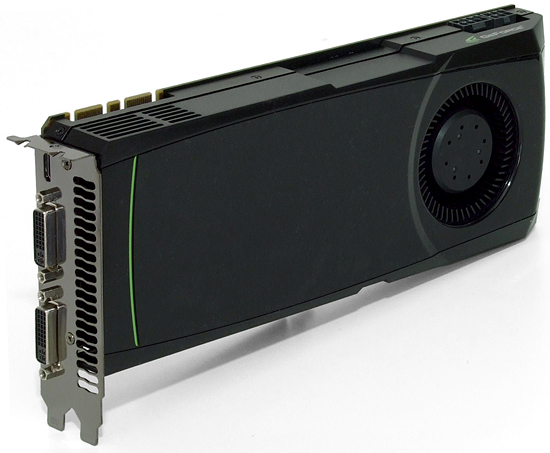

| Graphics | Nvidia GeForce GTX 580 1.5 GB 772MHz GPU, GDDR5-4008 |

| Hard Drive | Western Digital WD1002FBYS 1 TB 7200 RPM, SATA 3Gb/s, 32 MB cache |

| Sound | Integrated HD Audio |

| Network | Integrated Gigabit Networking |

| Power | OCZ-Z1000M 1000 W Modular, ATX12V v2.2, EPS12V, 80 PLUS Gold |

| Software | |

| OS | Microsoft Windows 7 Ultimate x64 |

| Graphics | Nvidia 263.09 |

| Chipset | Intel INF 9.2.0.1019 |

Kingston’s HyperX 2133 allows us to evaluate each motherboard’s memory overclocking potential, at least within the limits of our CPU. For normal benchmarks, we used less aggressive DDR3-1600 CAS 7-7-7-21 settings.
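
To put the two memory settings in perspective, the short sketch below converts a data rate and CAS value into first-word latency and theoretical peak bandwidth. It is an illustration only: the function names are ours, dual-channel operation with a 64-bit bus per channel is assumed, and the CAS 9 figure for DDR3-2133 is inferred from the "C9" in the module's part number rather than stated in the test table.

```python
def cas_latency_ns(data_rate_mts: float, cas_cycles: int) -> float:
    """Return CAS latency in nanoseconds.

    DDR memory transfers twice per clock, so the memory clock in MHz
    is half the data rate in MT/s.
    """
    clock_mhz = data_rate_mts / 2
    return cas_cycles / clock_mhz * 1000


def peak_bandwidth_gbs(data_rate_mts: float, channels: int = 2, bus_bytes: int = 8) -> float:
    """Return theoretical peak bandwidth in GB/s (1 GB = 1000 MB).

    Assumes a 64-bit (8-byte) bus per channel and dual-channel mode.
    """
    return data_rate_mts * bus_bytes * channels / 1000


# Benchmark setting from the table, plus the module's assumed rated setting.
settings = [
    ("DDR3-1600 CAS 7", 1600, 7),
    ("DDR3-2133 CAS 9", 2133, 9),  # CAS 9 is an assumption from the part number
]

for label, rate, cas in settings:
    print(f"{label}: {cas_latency_ns(rate, cas):.2f} ns, "
          f"{peak_bandwidth_gbs(rate):.1f} GB/s peak")
```

Under these assumptions, DDR3-1600 at CAS 7 works out to 8.75 ns, which is close to the rated DDR3-2133 setting in latency terms, while the higher data rate mainly adds theoretical bandwidth.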

Nvidia’s GeForce GTX 580 helps to shift the performance bottleneck away from graphics, putting more benchmark focus on each motherboard’s ability to manage Intel Turbo Boost and memory timings.

With efficiency approaching 90% through a wide range of loads, OCZ’s Z1000M optimized our power consumption tests.
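
Power supply efficiency matters here because it sits between whatever is measured at the wall outlet and what the components actually draw. The sketch below shows that relationship, assuming wall-side readings and a flat 90% efficiency; the wattage values are hypothetical examples, not measurements from this roundup, and the function names are ours.

```python
def dc_load_watts(wall_watts: float, efficiency: float = 0.90) -> float:
    """Estimate the DC power delivered to components from a wall reading."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in the range (0, 1]")
    return wall_watts * efficiency


def conversion_loss_watts(wall_watts: float, efficiency: float = 0.90) -> float:
    """Estimate the power lost as heat inside the power supply."""
    return wall_watts - dc_load_watts(wall_watts, efficiency)


# Hypothetical wall readings in watts, for illustration only.
for wall in (100.0, 200.0, 300.0):
    print(f"{wall:.0f} W at the wall: "
          f"{dc_load_watts(wall):.0f} W to components, "
          f"{conversion_loss_watts(wall):.0f} W lost in conversion")
```

A supply whose efficiency stays nearly constant across loads keeps that conversion loss proportional, so differences between boards at the wall more closely track differences in what the boards themselves consume.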

| Benchmark Configuration | |
|---|---|
| 3D Games | |
| Crysis | Patch 1.2.1, DirectX 10, 64-bit executable, benchmark tool; Test Set 1: High Quality, No AA; Test Set 2: Very High Quality, 4x AA |
| F1 2010 | V1.01, Run with -benchmark example_benchmark.xml; Test Set 1: High Quality Preset, No AA; Test Set 2: Ultra Quality Preset, 8x AA |
| Just Cause 2 | Version 1.0.0.2, Built-In Benchmark "Concrete Jungle"; Test Set 1: Medium Details, No AA, 8x AF; Test Set 2: Highest Details, 8x AA, 16x AF |
| S.T.A.L.K.E.R.: Call Of Pripyat | Call Of Pripyat Benchmark version, all options, HDAO; Test Set 1: High Preset, DX11 EFDL, High SSAO, No AA; Test Set 2: Ultra Preset, DX11 EFDL, High SSAO, 4x MSAA |
| Audio/Video Encoding | |
| iTunes | Version: 9.0.2.25 x64; Audio CD (Terminator II SE), 53 min; Default format AAC |
| HandBrake 0.9.4 | Version 0.9.4, convert first .vob file from The Last Samurai (1 GB) to .mp4, High Profile |
| TMPGEnc 4.0 XPress | Version: 4.7.3.292; Import File: Terminator 2 SE DVD (5 Minutes); Resolution: 720x576 (PAL) 16:9 |
| DivX Codec 6.9.1 | Encoding mode: Insane Quality; Enhanced multithreading enabled using SSE4; Quarter-pixel search |
| Xvid 1.2.2 | Display encoding status = off |
| MainConcept Reference 1.6.1 | MPEG-2 to H.264, MainConcept H.264/AVC Codec, 28 sec HDTV 1920x1080 (MPEG-2), Audio: MPEG-2 (44.1 kHz, 2 Channel, 16-Bit, 224 Kb/s), Mode: PAL (25 FPS) |
| Productivity | |
| Adobe Photoshop CS4 | Version: 11.0 x64, Filter 15.7 MB TIF Image; Radial Blur, Shape Blur, Median, Polar Coordinates |
| Autodesk 3ds Max 2010 | Version: 11.0 x64, Rendering Dragon Image at 1920x1080 (HDTV) |
| WinRAR 3.90 | Version x64 3.90, Dictionary = 4,096 KB, Benchmark: THG-Workload (334 MB) |
| 7-Zip | Version 9.20: Format=Zip, Compression=Ultra, Method=Deflate, Dictionary Size=32 KB, Word Size=128, Threads=8; Benchmark: THG-Workload (334 MB) |
| Synthetic Benchmarks and Settings | |
| 3DMark 11 | Version: 1.0.1.0, Benchmark Only |
| PCMark Vantage | Version: 1.0.1.0 x64, System, Productivity, Hard Disk Drive benchmarks |
| SiSoftware Sandra 2011 | Version 2011.1.17.25, CPU Test = CPU Arithmetic / MultiMedia, Memory Test = Bandwidth Benchmark |


reprotected I thought that the ECS looked pretty sick, and it did perform alright. But unfortunately, it wasn't the best.

rantsky You guys rock! Thanks for the review! I'm just missing benchmarks like SATA/USB speeds etc. Please Tom's get those numbers for us!

rmse17 Thanks for the prompt review of the boards! I would like to see any differences in quality of audio and networking components. For example, what chipsets are used for Audio in each board, how that affects sound quality. Same thing for network, which chipset is used for networking, and bandwidth benchmarks. If you guys make part 2 to the review, it would be nice to see those features, as I think that would be one more way these boards would differentiate themselves.

VVV850 Would have been good to know the bios version for the tested motherboards. Sorry if I double posted.

flabbergasted I'm going for the ASrock because I can use my socket 775 aftermarket cooler with it.

stasdm Do not see any board worth spending money on.

1. SLI "support". Do not understand why the end user has to pay for a mythical SLI "certification" (all latest Intel chips support SLI by definition) and an SLI bridge coming with the board (at least 75% of end users would never need one). The bridge should come with NVIDIA cards (same as with AMD ones). Also, in an x8/x8 PCIe configuration nearly all NVIDIA cards (except for low-end ones) will lose at least 12% productivity - with top cards that is about $100 spent for nothing (AMD cards would not see that difference). So, if those cards are coming as SLI-"certified" they have to be, in the worst case, equipped with an NVIDIA NF200 chip (though I would not recommend buying cards with this PCIe v.1.1 bridge). As even NVIDIA GF110 cards really need less than 1GB/s bandwidth (all other NVIDIA and AMD - less than 0.8GB/s) and secondary cards in SLI/CrossFire use no more than 1/4 of that, a normal PCIe v.2.0 switch (costing less than the money thrown away with x8/x8 SLI) will nicely support three "Graphics only" x16 slots, a fully functional x8 slot, and will provide enough bandwidth to support one PCIe v.2.0 x4 (or 4 x x1) slot(s)/device(s).

2. Do not understand the author's euphoria over the mass use of Marvell "SATA 6G" chips. The PCIe x1 chip might not be "SATA 6G" by definition, as it would never be able to provide more than 470MB/s (which is far from the standard 600MB/s) - so, I'd recommend denoting them as 3G+ or 6G-. As shown in the section above, there is enough bandwidth for a real 6G solution (PCIe x8 LSISAS 2008 or x4 LSISAS 2004). Yes, it will be a bit more expensive, but do not see the reason to have palliative solutions on $200+ mobos.

I was hoping that the new Asus Sabertooth P67 would be included. Its new design really is leaving people wondering if the change is as good as they claim.