P67 Motherboard Roundup: Nine $150-200 Boards

Improved per-clock performance and higher achievable frequencies are sure to put Intel’s latest K-Series CPUs on top of many builders’ wish lists, but they’ll still need a new socket to put them in. We test nine enthusiast-oriented LGA-1155 motherboards.

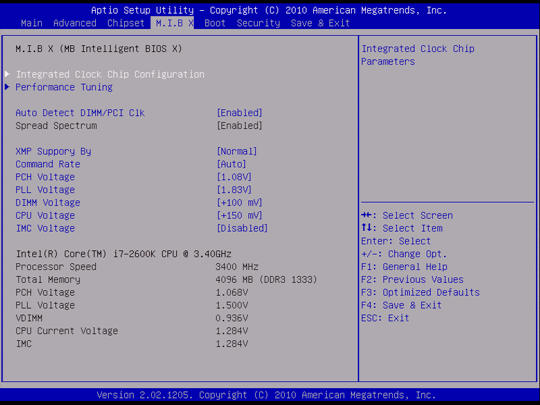

P67H2-A2 UEFI

ECS' UEFI gave us the most competitive overclocking experience we’ve ever had with one of the company's products, though a few teething problems are still present in this version. We hope to see a fully-patched version very soon.

The first problem we found was that some of the settings were mislabeled. DIMM voltage controls the CPU core, and we believe that CPU core voltage controls the DIMMs. This mix-up is also reflected in a DIMM voltage reading that doesn’t correspond to anything realistic. Overclocking adjustments were easy after we got to the root of this problem.

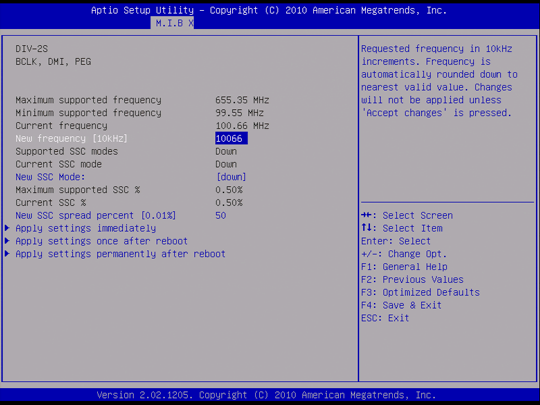

Our next problem was again one of naming, but only because it forces you to convert numbers in your head. Rather than write its base clock in MHz with a decimal point, ECS chooses a 10 kHz scale.

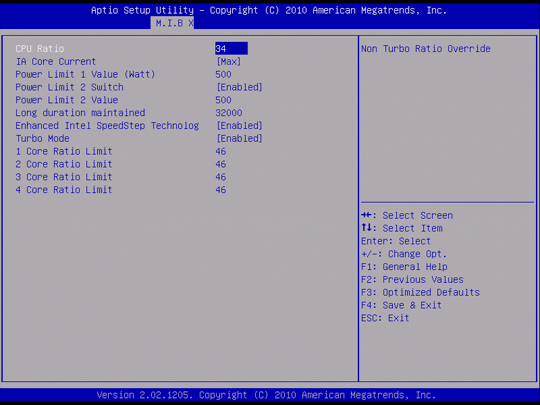
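The mental conversion is just a shift of two decimal places, since one 10 kHz step equals 0.01 MHz. A minimal sketch of that arithmetic follows; the function names and sample values are our own illustration and do not come from the ECS firmware.

```python
# Convert between a base-clock field expressed in 10 kHz steps and MHz.
# Function names and sample values are hypothetical, for illustration only.

def bclk_setting_to_mhz(setting: int) -> float:
    """One step is 10 kHz, i.e. 0.01 MHz."""
    return setting / 100.0


def mhz_to_bclk_setting(mhz: float) -> int:
    """Inverse conversion, rounded to the nearest 10 kHz step."""
    return round(mhz * 100)


if __name__ == "__main__":
    for setting in (10000, 10350):
        print(f"{setting} x 10 kHz = {bclk_setting_to_mhz(setting):.2f} MHz")
```

Under this scale, the platform's stock 100 MHz base clock would be entered as 10000.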

Most P67 motherboard firmware has a hold-up time for turbo multipliers, but ECS’ appears to go up to nearly nine hours. Perhaps this is a millisecond scale?

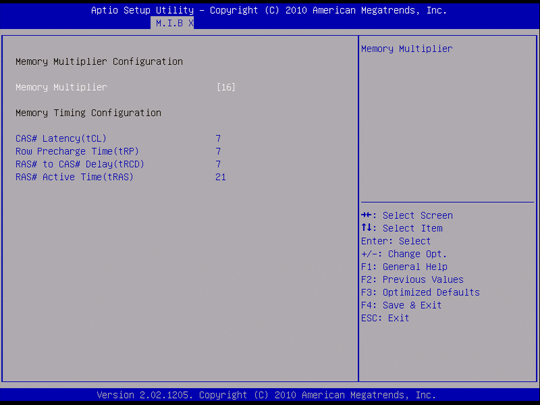
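To see why a millisecond scale is the more plausible reading, compare the two interpretations of the same number. The value below is a hypothetical stand-in chosen only because it works out to nearly nine hours when read as seconds; it is not a figure taken from the firmware.

```python
# Compare two readings of a turbo hold-up limit.
# HYPOTHETICAL_LIMIT is an assumed example value, not the actual ECS maximum.
HYPOTHETICAL_LIMIT = 32_000


def hours_if_seconds(value: int) -> float:
    """Duration in hours if the field counts seconds."""
    return value / 3600


def seconds_if_milliseconds(value: int) -> float:
    """Duration in seconds if the field counts milliseconds."""
    return value / 1000


if __name__ == "__main__":
    print(f"As seconds:      {hours_if_seconds(HYPOTHETICAL_LIMIT):.2f} hours")
    print(f"As milliseconds: {seconds_if_milliseconds(HYPOTHETICAL_LIMIT):.1f} seconds")
```

Read as milliseconds, a limit of this size comes to roughly half a minute rather than most of a working day.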

Timing controls are extremely limited, but do work. Setting the main M.I.B. X menu to an XMP profile overrides these, and there are no individual or global controls to revert to “automatic” mode.


reprotected I thought that the ECS looked pretty sick, and it did perform alright. But unfortunately, it wasn't the best.

rantsky You guys rock! Thanks for the review!

I'm just missing benchmarks like SATA/USB speeds etc. Please Tom's get those numbers for us!

rmse17 Thanks for the prompt review of the boards! I would like to see any differences in quality of audio and networking components. For example, what chipsets are used for Audio in each board, how that affects sound quality. Same thing for network, which chipset is used for networking, and bandwidth benchmarks. If you guys make part 2 to the review, it would be nice to see those features, as I think that would be one more way these boards would differentiate themselves.

VVV850 Would have been good to know the bios version for the tested motherboards. Sorry if I double posted.

flabbergasted I'm going for the ASrock because I can use my socket 775 aftermarket cooler with it.

stasdm Do not see any board worth spending money on.

1. SLI "support". Do not understand why the end user has to pay for mythical SLI "certification" (all latest Intel chips support SLI by definition) and an SLI bridge coming with the board (at least 75% of end users would never need one). The bridge should come with NVIDIA cards (same as with AMD ones). Also, in x8/x8 PCIe configuration nearly all NVIDIA cards (except for low-end ones) will lose at least 12% productivity - with top cards that is about $100 spent for nothing (AMD cards would not see that difference). So, if those cards are coming as SLI-"certified" they have to be, in the worst case, equipped with the NVIDIA NF200 chip (though I would not recommend buying cards with this PCIe v.1.1 bridge). As even NVIDIA GF110 cards really need less than 1GB/s bandwidth (all other NVIDIA and AMD - less than 0.8GB/s) and secondary cards in SLI/CrossFire use no more than 1/4 of that, a normal PCIe v.2.0 switch (costing less than the money thrown away with x8/x8 SLI) will nicely support three "Graphics only" x16 slots, a fully-functional x8 slot and will provide bandwidth enough to support one PCIe v.2.0 x4 (or 4 x x1) slot(s)/device(s).

2. Do not understand the author's euphoria over mass use of Marvell "SATA 6G" chips. The PCIe x1 chip might not be "SATA 6G" by definition, as it would never be able to provide more than 470MB/s (which is far from the standard 600MB/s) - so, I'd recommend to denote them as 3G+ or 6G-. As it is shown in the upper section, there is enough bandwidth for a real 6G solution (PCIe x8 LSISAS 2008 or x4 LSISAS 2004). Yes, will be a bit more expensive, but do not see the reason to have palliative solutions on $200+ mobos.


I was hoping that the new Asus Sabertooth P67 would be included. Its new design really is leaving people wondering if the change is as good as they claim.