AMD Carrizo APU's Excavator Cores Significantly Improve Efficiency

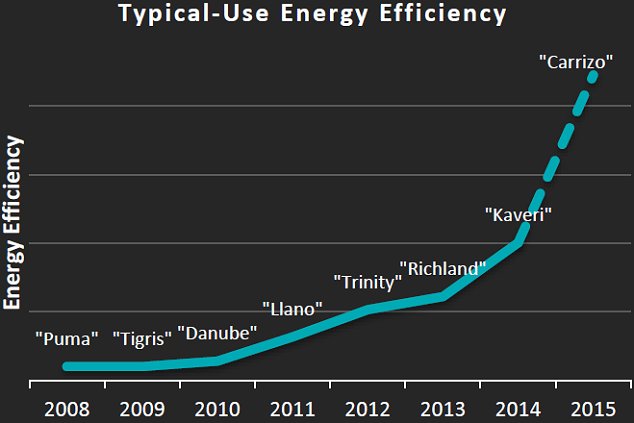

AMD significantly boosts efficiency with its new "Carrizo" APUs. The mobile version, first presented at ISSCC 2015, offers either higher performance at the same power consumption or significantly lower power consumption at the same performance.

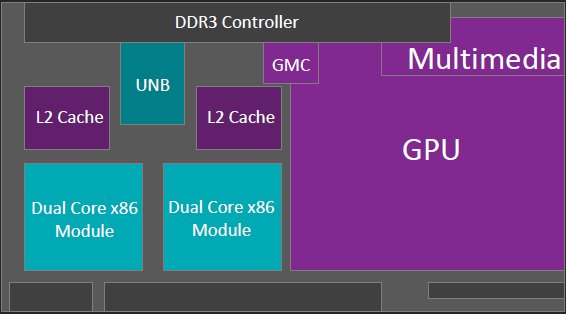

Made especially for notebooks, convertibles and similar devices, the new mobile "Carrizo" model APU utilizes new energy-efficient "Excavator" cores and combines the APU and Southbridge on a single die for the first time.

The advantages of combining the CPU, GPU, VPE and memory controller on a single chip are obvious. On one hand, it cuts the long transmission times between conventional discrete chips; on the other, it allows for much finer power management tuning that takes the interactions between the individual components into account.

The shared memory controller helps decrease power consumption further, but more on the improved power management and new "telemetry" later. The GPU component is equally state-of-the-art, with Carrizo supporting DX12, Mantle and, of course, HSA.

New IC Layout Sports Considerably Increased Component Density

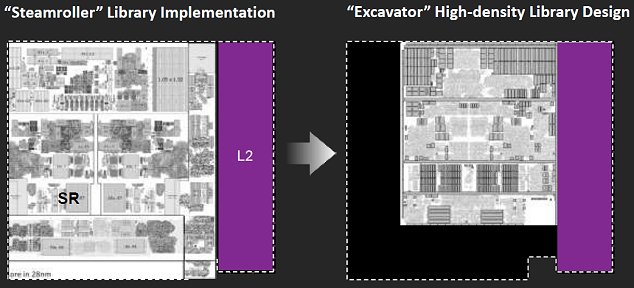

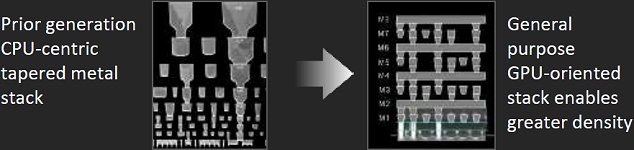

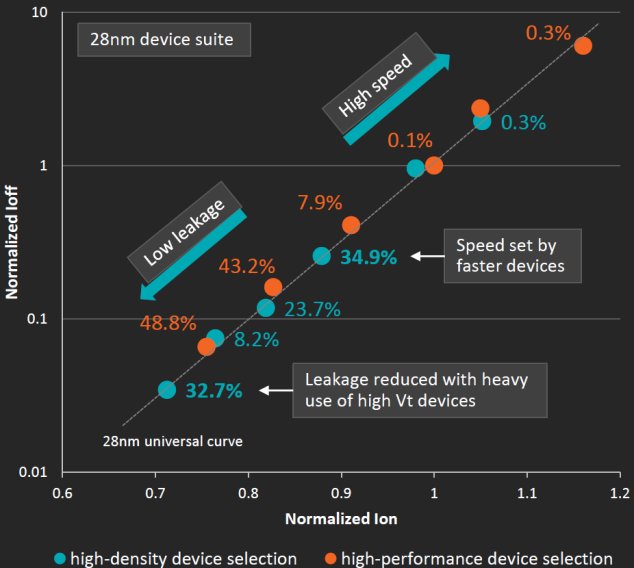

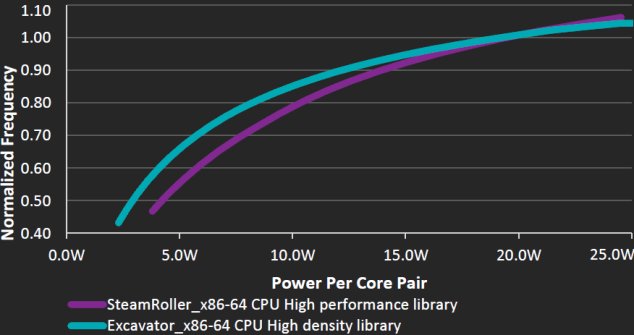

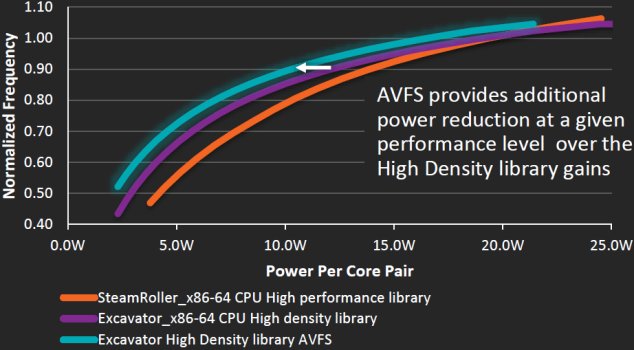

Of course, optimal implementation of all this on one chip requires a new approach to layout and new configuration standards in general. Because AMD is still on 28nm process technology, the Excavator core relies on a high-density library design, which in the end amounts to much higher component density within the same structural dimensions.

This frees up 23 percent of the die's surface area, which also results in lower power consumption and less loss due to leakage, without having to completely redesign the manufacturing process.

The deciding factor is the choice between a CPU-centric design, as with Steamroller, and a more GPU-oriented configuration, which is what Carrizo has. Bear in mind that 3.1 billion transistors on the same surface area as Kaveri is an increase of approximately 29 percent!

For AMD, the future is increasingly about distributing operations between CPU and GPU and about parallel processing with the aid of the GPU part of the APU. These ideas of course hold much more potential down the road, but considering that HSA support has already been completely implemented, a significant performance boost is to be expected even at the present stage of development.
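As a quick sanity check, the roughly 29 percent figure can be reproduced with a few lines of Python. Note that the Kaveri transistor count of 2.41 billion is not stated in this article; it is AMD's published figure for Kaveri and is treated here as an assumption.

```python
# Carrizo's transistor count as quoted in the article.
CARRIZO_TRANSISTORS = 3.1e9
# Assumption (not from this article): AMD's published figure for Kaveri.
KAVERI_TRANSISTORS = 2.41e9


def relative_increase(new: float, old: float) -> float:
    """Return the fractional increase of `new` over `old`."""
    return new / old - 1.0


increase = relative_increase(CARRIZO_TRANSISTORS, KAVERI_TRANSISTORS)
print(f"Transistor count increase: {increase:.1%}")
```

Since the die area is described as unchanged, the same ratio also approximates the gain in average transistor density.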


Clock Speed To Power Consumption Ratio

The advantages become obvious when we compare the two approaches, at least in theory. When it comes to efficiency, the new Excavator cores surpass the Steamroller cores at power levels of 18 W to 20 W, offering a higher clock frequency at equal power consumption and lower power consumption at equal clock speed.

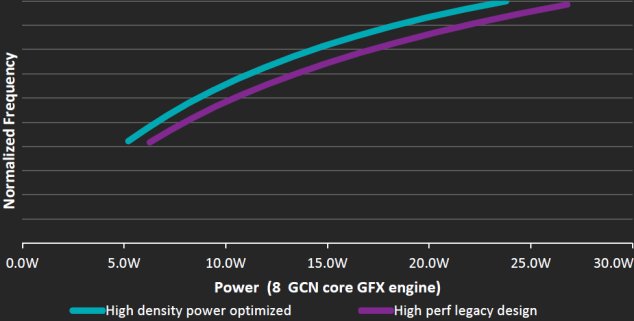

The benefits become increasingly evident when comparing the GPU's GCN cores, which have been optimized to consume less power at the same clock speed.

The die's configuration, however, is only half of the story. The gains come not just from the optimized layout but in large part from the optimized telemetry, which monitors the individual components and strongly influences CPU and GPU clock speeds and voltages.

Is It Merely A Question Of Communication And Regulation?

As we all know, simply lowering power consumption isn't that easy; a more in-depth look at the problem is in order here. It requires more than a few protocol changes and technical tricks, and it shows that AMD has worked on this quite intensively, in part out of necessity to stay current.

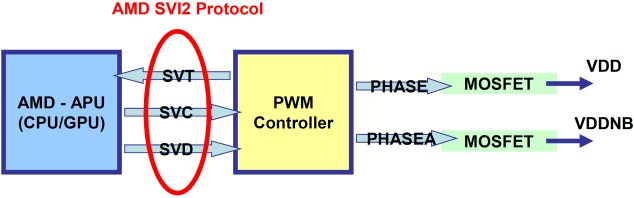

Trying to improve power management and supply efficiency based on the widely known SVI2 (Serial Voltage Identification Interface 2.0), which connects the APU to the power supply, has been done before. This issue is touched upon in The Math Behind GPU Power Consumption And PSUs, with the difference that it now applies to both the CPU and GPU at the same time.

This can definitely be achieved with a more stable and clear-cut power supply, allowing for much finer calibration of the interactions between clock frequency and applied voltage.

Lowering Power Consumption By Reducing Clock Speed And Voltage

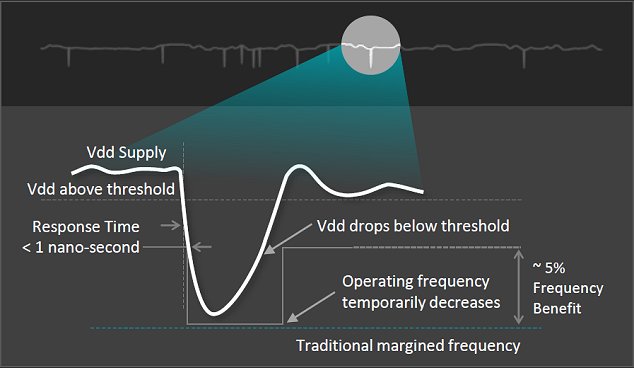

When the arbiter acts to achieve compliance with maximum temperature and power consumption parameters or to adjust clock speed to actual processing load (power estimation), clock frequency and necessary power provision are simultaneously regulated, which is what AVFS (Adaptive Voltage and Frequency Scaling) is all about.

Assuming the adaptive (adjusted) clock rate is lower than the global clock rate, the current clock rate can be derived with a simple frequency divider while a lower voltage (Vdd) is set at the same time. This divider is really just a counter that counts global clock cycles before triggering an event, and that state change can be used to scale the voltage accordingly. This approach quickly becomes much less efficient, however, in the event of large load changes and the very large differences in voltage that come with them.
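The counter-based divider described above can be sketched in a few lines of Python. This is only a software illustration of the principle, not AMD's circuit; the class name `ClockDivider`, the helper `divided_frequency` and the example values are hypothetical.

```python
class ClockDivider:
    """Counts global clock cycles and fires once every `ratio` cycles."""

    def __init__(self, ratio: int) -> None:
        if ratio < 1:
            raise ValueError("ratio must be at least 1")
        self.ratio = ratio
        self._count = 0

    def tick(self) -> bool:
        """Advance one global clock cycle; return True when the divided clock fires."""
        self._count += 1
        if self._count == self.ratio:
            self._count = 0  # restart the count for the next output cycle
            return True
        return False


def divided_frequency(global_mhz: float, ratio: int) -> float:
    """Effective clock rate after dividing the global clock by `ratio`."""
    return global_mhz / ratio


# Hypothetical example: divide the global clock by 4 and watch 8 cycles.
divider = ClockDivider(ratio=4)
ticks = [divider.tick() for _ in range(8)]
```

In this sketch the divided clock fires on every fourth global cycle, so the effective rate is a quarter of the global clock. The event returned by `tick()` is the point at which a real design could also request a lower voltage.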

Here's where AMD's new approach comes into play. Instead of completely linear regulation, different thresholds can be used to adjust clock speed and voltage.

In the diagram below, both clock speed and voltage are lowered almost simultaneously to a clearly defined value. Following the load spike, the modified threshold produces a much smoother clock behavior, thereby increasing the available clock speed on average.
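To make the idea of threshold-based regulation more concrete, here is a minimal sketch. The article does not disclose AMD's actual thresholds or algorithm, so the operating points, the load metric and the function name `select_operating_point` are all assumptions chosen purely for illustration.

```python
from typing import List, Tuple

# Hypothetical operating points: (minimum load fraction, clock in MHz, voltage in V).
# These values are illustrative assumptions, not AMD specifications.
OPERATING_POINTS: List[Tuple[float, int, float]] = [
    (0.00, 800, 0.80),
    (0.30, 1400, 0.95),
    (0.70, 2100, 1.10),
]


def select_operating_point(load: float) -> Tuple[int, float]:
    """Return the (MHz, V) pair of the highest threshold that `load` reaches."""
    if not 0.0 <= load <= 1.0:
        raise ValueError("load must be between 0.0 and 1.0")
    chosen = OPERATING_POINTS[0]
    for point in OPERATING_POINTS:
        if load >= point[0]:
            chosen = point  # thresholds are sorted, so the last match wins
    return chosen[1], chosen[2]


# Clock and voltage move together in steps rather than tracking load linearly.
low = select_operating_point(0.10)
mid = select_operating_point(0.50)
high = select_operating_point(0.95)
```

Because clock and voltage are always picked as a pair from the same table entry, they change together at each threshold, which is the behavior the text describes.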

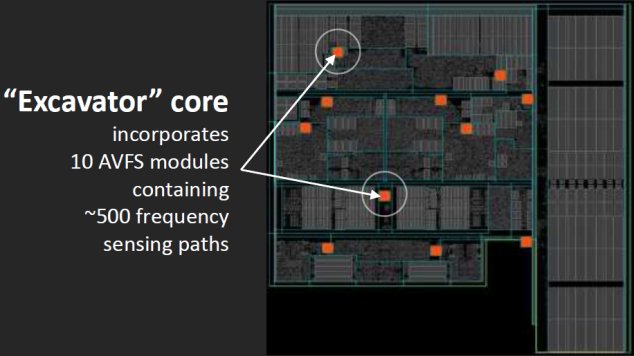

This "smoother" performance is only one part of the equation, though. Regulation requires data, and that has to come from somewhere – especially if the GPU and CPU are to be managed the same way. Aside from the usual temperature and voltage sensors, a total of 10 AVFS modules with a total of around 500 frequency-sensing paths serve to monitor their respective sections.

This in turn allows for considerably finer and, more importantly, localized frequency and voltage manipulation and management in the APU, and this is exactly where the consolidation of all of these components onto a single die really pays off.
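How such localized telemetry might feed regulation can be sketched as follows. The figures of 10 modules and roughly 500 paths come from the text; everything else is an assumption, including the even split of 50 paths per module, the simulated readings, and the rule that the slowest sensed path bounds each region's safe clock.

```python
import random
from typing import Dict, List

NUM_MODULES = 10          # from the article
PATHS_PER_MODULE = 50     # assumption: ~500 paths split evenly across modules


def simulate_readings(seed: int = 0) -> Dict[int, List[float]]:
    """Generate made-up per-path frequency readings (MHz) for each module."""
    rng = random.Random(seed)
    return {
        module: [rng.uniform(2000.0, 2400.0) for _ in range(PATHS_PER_MODULE)]
        for module in range(NUM_MODULES)
    }


def local_frequency_limits(readings: Dict[int, List[float]]) -> Dict[int, float]:
    """Assumed rule: the slowest sensed path in a module bounds that region's clock."""
    return {module: min(paths) for module, paths in readings.items()}


readings = simulate_readings()
limits = local_frequency_limits(readings)
```

The point of the sketch is the shape of the data: one limit per region instead of a single chip-wide value, which is what makes localized frequency and voltage management possible.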

Whether and how these improvements will be implemented in the coming generation of graphics cards will be quite interesting to see, considering that Carrizo's eight GCN cores are only a precursor to what comes next.

Faster And More Precise Activation Of Various States

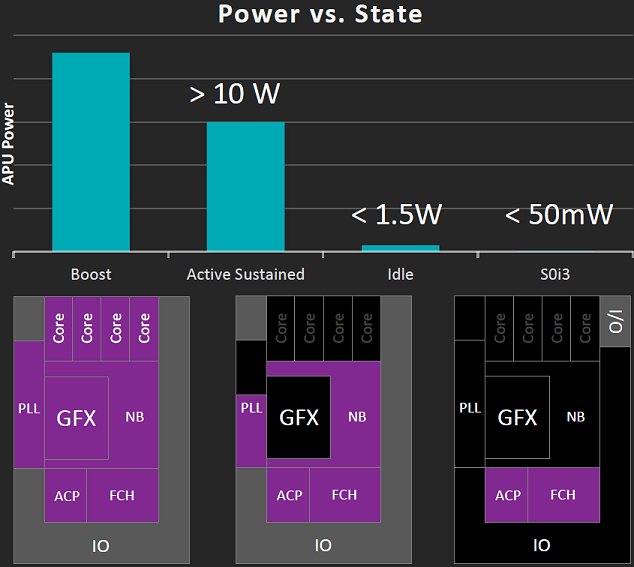

When it comes to building an efficient system, an issue that should not be underestimated is individual performance states and their regulation. Selective activation and deactivation of specific functionality is an important factor for longer battery life in mobile devices.

The S3, or "standby," state was especially relevant to AMD's considerations. The S0i3, or "idle," mode enables Carrizo to shut down all functionality deemed unnecessary without having to waste time interacting with the operating system.

Increased Efficiency And Expectations

Efficiency-wise, Carrizo does represent a sort of milestone when compared to Kaveri – presuming the data and results provided by AMD hold up to scrutiny under real-world operating conditions. Besides increasing IPC by around 5 percent, AMD's assurances are that the Excavator cores have 23 percent less die surface area and 40 percent less power consumption than Steamroller cores.
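As a back-of-the-envelope illustration of what AMD's core-level figures would imply if combined, the sketch below computes a performance-per-watt ratio. It assumes that the 5 percent IPC gain and the 40 percent power reduction apply at the same clock speed and workload, which the article does not state, so the result is an estimate and not an AMD claim.

```python
IPC_GAIN = 0.05          # from the article: around 5 percent higher IPC
POWER_REDUCTION = 0.40   # from the article: 40 percent less power than Steamroller


def perf_per_watt_ratio(ipc_gain: float, power_reduction: float) -> float:
    """Relative performance per watt, assuming equal clock speed and workload."""
    if not 0.0 <= power_reduction < 1.0:
        raise ValueError("power_reduction must be in [0.0, 1.0)")
    return (1.0 + ipc_gain) / (1.0 - power_reduction)


ratio = perf_per_watt_ratio(IPC_GAIN, POWER_REDUCTION)
print(f"Estimated core performance per watt vs. Steamroller: {ratio:.2f}x")
```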

Unlike Kaveri, Carrizo will also support the H.265 codec, although no exact details on implementation have been issued by AMD yet. In any case, conversion speed is supposed to be 3.5 times faster than Kaveri. With regard to its APU's eight GCN cores, AMD has mentioned an approximately 20 percent decrease in power consumption compared to its predecessor.

The bottom line is that AMD promises to deliver double-digit boosts to performance and battery life. How well this assertion actually holds up, how OEMs will react, and what this new generation of APUs actually gets us are, of course, things we will have to examine further at a later date.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

TechyInAZ: Nice! And if they offer the most powerful A10 desktop for only $150, that will defiantly be faster than a i3.

TechyInAZ (in reply to "I can't even focus enough to read the entire article. :("): Yeah a little complicated, but once you do some research on the basics of CPUs, then you'll get the hang of it. :)

turkey3_scratch: Good for AMD, this seems like it'll be a success in laptops. Well-written article, very thorough. However IPC only increased 5% I was hoping for more.

dragonsqrrl (in reply to TechyInAZ: "Nice! And if they offer the most powerful A10 desktop for only $150, that will defiantly be faster than a i3."): Apparently Carrizo is mobile BGA only. Desktop FM2+ is getting a refresh of Kaveri this year called Godavari.

digitaldoc: AMD often has good theoreticals, but I will wait to see some benchmarks to see how this thing can really perform.

TechyInAZ (in reply to dragonsqrrl: "Apparently Carrizo is mobile BGA only. Desktop FM2+ is getting a refresh of Kaveri this year called Godavari."): Lol, the names that intel and AMD come up with (Devils canyon, Godavari) are so goofy sometimes.