Intel Tapes Out First Xe-HPG 'DG2' GPU for Gamers

Intel's first Xe-HPG powers on, tested in the labs

Intel this week announced that it had taped out and powered on its first graphics processor based on the Xe-HPG architecture. The company also reaffirmed that it is working on a stack of discrete Xe-HPG GPUs that will be used in mid-range and enthusiast-class gaming PCs sometime next year. In the meantime, Intel has started to ship its DG1 discrete GPU based on the Xe-LP architecture for entry-level gaming PCs (via SeekingAlpha / Intel Earnings Presentation).

"We powered on our next-generation GPU for client DG2," said Bob Swan, CEO of Intel, during the company's earnings call with analysts and investors. "Based on our Xe high-performance gaming architecture, this product will take our discrete graphics capability up the stack into the enthusiast segment."

Intel's first discrete GPU in two decades, the DG1, relies on the same Xe-LP architecture that is used for the company's latest integrated GPUs, found in processors codenamed Tiger Lake. Intel is currently shipping its DG1 GPUs for revenue and expects the first PCs with its discrete graphics inside to hit the market later this quarter.

"Our first discrete GPU DG1 is shipping now and will be in systems from multiple OEMs later in Q4," said Swan.

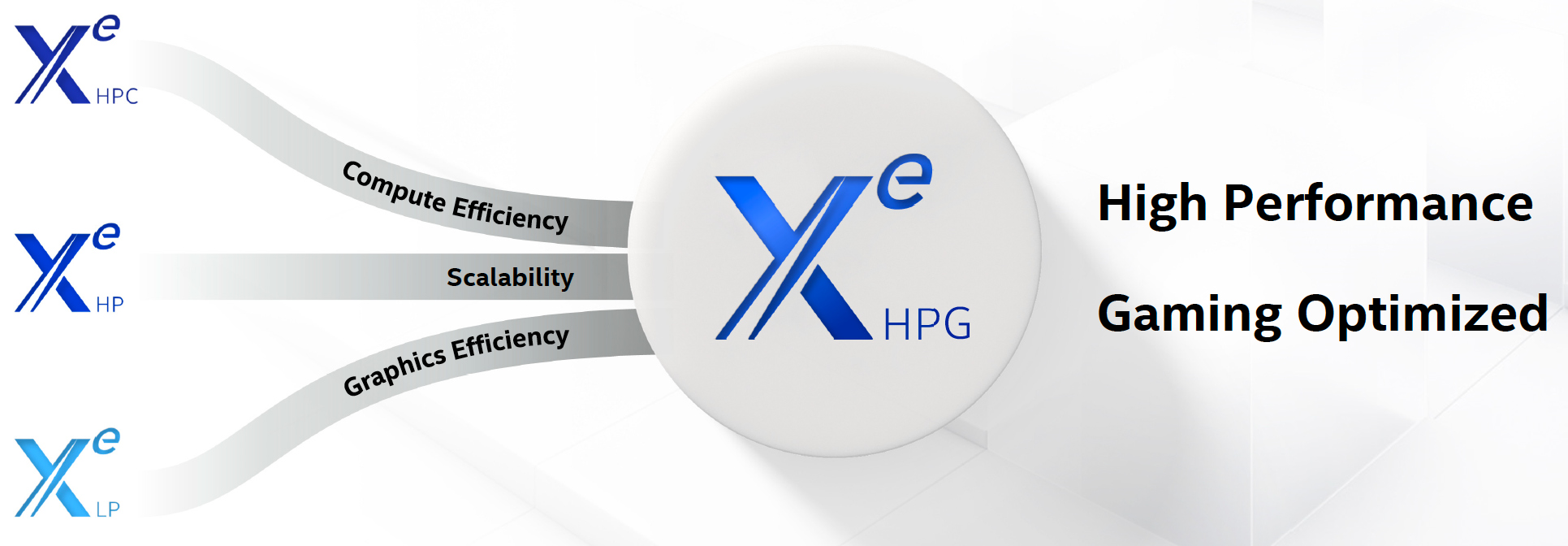

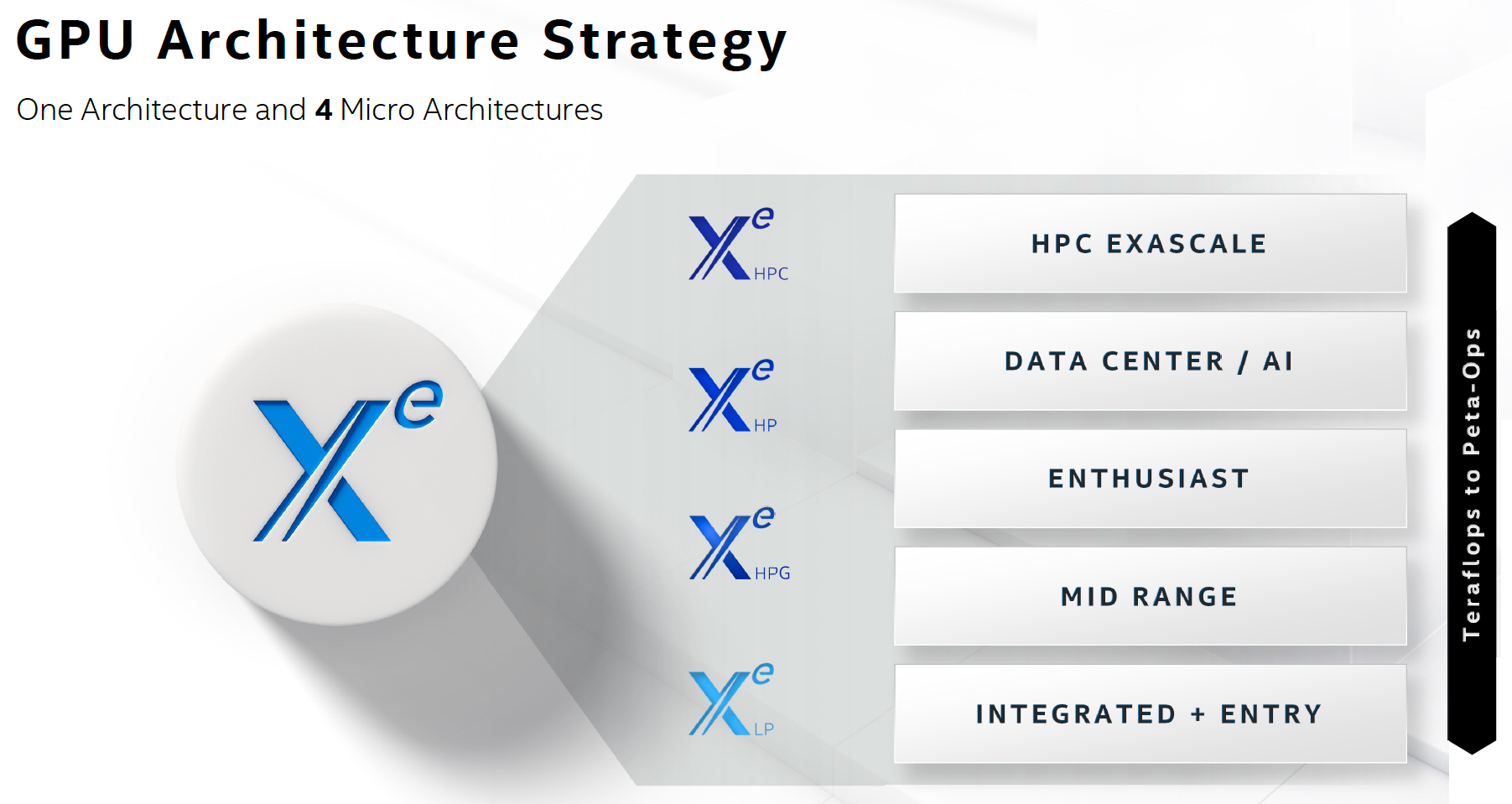

Intel's Xe-LP GPUs offer significantly higher performance than Intel's previous-generation graphics solutions, but since Xe-LP GPUs have to be integrated into CPUs, they are tailored primarily for low power consumption and efficient use of transistors. This is not the case for the other Intel Xe architectures, namely Xe-HP for datacenters, Xe-HPC for supercomputers, and Xe-HPG for gaming PCs. In fact, Xe-HPG combines numerous features of the other three architectures.

"We have been working since 2018 on another optimization of Xe-HP targeted gaming," said Raja Koduri, chief architect of Intel, at the company's Architecture Day in August. "That microarchitecture variant is called Xe-HPG. […] We had to leverage the best aspects of the three designs we've had in progress to build a gaming optimized GPU. We had a good perf-per-watt building block to start with, Xe-LP. We leveraged the scale from Xe-HP to get a much bigger config and we leveraged the compute frequency optimizations from Xe-HPC."

Intel's Xe-HPG GPUs will support hardware-accelerated ray tracing along with other features, which will have an impact on the architecture of its execution units and/or sub-slices. Furthermore, since Xe-HPG GPUs will be made at an external foundry (i.e., at TSMC), Intel used a lot of third-party IP (e.g., memory controller and interface, display interfaces, etc.) to optimize design costs.

Intel got its first Xe-HPG silicon from its foundry partner back in mid-August and has tested it internally since then. So far, the company has only confirmed that the GPU could be powered on, but this is a good sign in general.

Intel's family of Xe-HPG graphics processors will consist of multiple GPUs targeting market segments that span from mid-range all the way to the enthusiast level. So far, Intel has not disclosed how many discrete Xe-HPG graphics chips it plans to launch next year. Intel also did not reveal whether the chip it had taped out and powered on was its big flagship Xe-HPG GPU or something smaller and cheaper. Typically, GPU companies like AMD and Nvidia introduce their big GPUs first and then follow up with smaller processors. However, every rule has an exception. For example, Nvidia began rolling out its highly successful Maxwell architecture with mainstream offerings.

In any case, Intel is currently bringing up two designs: its Xe-HP graphics processors for datacenters, which are made using its 10nm Enhanced SuperFin process technology and demonstrate performance of around 40 FP32 TFLOPS, and an as-yet-unidentified Xe-HPG graphics processor.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

- DookieDraws: Hope you don't mind me pointing this out. Your headline is using the wrong word, it should be taps out, not tapes out. And you use it again later in the article. :)

- hotaru.hino:

  > DookieDraws said: Hope you don't mind me pointing this out. Your headline is using the wrong word, it should be taps out, not tapes out. And you use it again later in the article. :)

  Are you suggesting Intel should give up?

  Because the tape-out is an actual term: https://en.wikipedia.org/wiki/Tape-out

- DookieDraws:

  > hotaru.hino said: Are you suggesting Intel should give up?
  >
  > Because the tape-out is an actual term: https://en.wikipedia.org/wiki/Tape-out

  No way would I suggest Intel give up competing against AMD and NVIDIA in the GPU department! No way! :p

- hotaru.hino:

  > DookieDraws said: No way would I suggest Intel give up competing against AMD and NVIDIA in the GPU department! No way! :p

  Well "tap-out" means to quit, at least in most English speaking places. :P

- DookieDraws:

  > hotaru.hino said: Well "tap-out" means to quit, at least in most English speaking places. :p

  Okay. I was joking that Intel should give up (tap out)! That's why I added the smiley faces at the end. But all kidding aside, I do wish Intel nothing but the best. If they can produce a quality desktop GPU in the near future, I would love to see it.

- bobn9lvu: Well, I would say tape-out in the context that the author used it is indeed correct.

  Tap-out is to concede.

- DookieDraws:

  > bobn9lvu said: Well, I would say tape-out in the context that the author used it is indeed correct.
  >
  > Tap-out is to concede.

  Yes, he is correct. Again, I was just poking fun at Intel making GPUs.

- Bamda: With AMD 6000 series and Nvidia 3000 series being released soon, Intel will not be getting any of my GPU money this round, they are just too late to the party.

- jkflipflop98:

  > right now Intel is bringing up its Xe-HP graphics processors for datacenters that are made using its 10nm Enhanced SuperFin process technology and demonstrate performance of around 40 FP32 TFLOPS as well as an unknown Xe-HPG graphics processor.

  RTX3080 is around 30 TFLOPS, innit?

- itzmec:

  > DookieDraws said: Yes, he is correct. Again, I was just poking fun at Intel making GPUs.

  Tough crowd.