AMD Bulldozer Review: FX-8150 Gets Tested

Perhaps the most hotly anticipated launch of 2011, AMD’s FX processor lineup is finally ready for prime time. Does the company’s new Bulldozer architecture have what it takes to face Intel’s Sandy Bridge and usher in a new era of competition?

Per-Core Performance

There’s a good reason why a processor’s results in real-world applications often look very different from its results in other tests. Explaining why requires breaking performance down into more understandable pieces. A processor’s per-core potential is defined by two factors: the number of instructions it can execute per clock cycle (IPC) and its clock rate.
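As a rough illustration, per-core throughput can be modeled as instructions per cycle (IPC) multiplied by clock rate. The sketch below assumes a single, fixed IPC for a given workload, which real processors never quite deliver. The function name and sample values are hypothetical and are not measurements from this review.

```python
def per_core_throughput(ipc: float, clock_ghz: float) -> float:
    """Return billions of instructions retired per second on one core.

    Simplified model: throughput = instructions per cycle x cycles per second.
    Real IPC varies with the workload, cache behavior, and branch prediction.
    """
    if ipc <= 0 or clock_ghz <= 0:
        raise ValueError("ipc and clock_ghz must be positive")
    return ipc * clock_ghz


# Hypothetical values: a core with 10% lower IPC needs roughly 11% more
# clock rate just to break even with the faster-per-cycle design.
fast_ipc_core = per_core_throughput(ipc=1.0, clock_ghz=3.3)
slow_ipc_core = per_core_throughput(ipc=0.9, clock_ghz=3.3)
# Clock rate the 0.9-IPC core would need to match the 1.0-IPC core.
break_even_clock_ghz = fast_ipc_core / 0.9
print(f"{fast_ipc_core:.2f} vs {slow_ipc_core:.2f} GIPS; "
      f"break-even clock {break_even_clock_ghz:.2f} GHz")
```

This is why a lower-IPC design has to make up the difference with frequency: every point of IPC lost must be recovered as clock rate.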

We can isolate IPC, to a certain extent, by comparing various architectures at the same clock rate using applications designed to run in a single thread. That’s exactly what I did in Intel’s Second-Gen Core CPUs: The Sandy Bridge Review to determine just how much Intel improved Sandy Bridge's IPC.
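When the clock rate is held constant and each chip runs the same single-threaded job, the ratio of completion times approximates the ratio of IPC. The helper below is a hypothetical sketch of that normalization. It assumes each architecture retires roughly the same number of instructions for the workload, which is not strictly true when compilers or code paths differ by CPU, and the run times are placeholders rather than results.

```python
def relative_ipc(runtimes_s: dict[str, float], baseline: str) -> dict[str, float]:
    """Express each CPU's IPC as a fraction of the baseline CPU's IPC.

    Assumes every CPU ran the same workload at the same clock rate, so
    IPC is inversely proportional to completion time:
        IPC_x / IPC_base = runtime_base / runtime_x
    """
    base_time = runtimes_s[baseline]
    return {cpu: base_time / t for cpu, t in runtimes_s.items()}


# Placeholder run times in seconds, NOT measured results.
example = {"cpu_a": 100.0, "cpu_b": 125.0, "cpu_c": 140.0}
for cpu, ratio in relative_ipc(example, baseline="cpu_a").items():
    print(f"{cpu}: {ratio:.0%} of baseline IPC")
```

Here a CPU that needs 25% longer at the same clock is doing only 80% as much work per cycle as the baseline.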

With Bulldozer, AMD's architects say their goal was to “hold the line” on IPC while creating hardware that scales to much higher frequencies. Judging by what we already know about the FX-8150's specifications, significantly higher frequencies aren’t being realized today. So, before we even run any benchmarks, we have to assume similar IPC and fairly comparable clock rates, and then cross our fingers for better scaling across multiple cores. Without that scaling, Bulldozer has little hope of outperforming the 3.7 GHz Phenom II X4 980 or the Turbo Core-equipped Phenom II X6 1100T.
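One standard way to reason about whether extra cores can offset weaker per-core performance is Amdahl's law. This is a textbook model, not anything AMD or this review uses, and it ignores effects such as resources shared between cores. The chips, per-core speeds, and parallel fractions below are hypothetical.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Speedup over one core per Amdahl's law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / cores)


def chip_throughput(per_core: float, parallel_fraction: float, cores: int) -> float:
    """Whole-chip throughput: single-core speed times multi-core speedup."""
    return per_core * amdahl_speedup(parallel_fraction, cores)


# Hypothetical chips: an eight-core part whose cores are 15% slower than
# those of a four-core part. Which one wins depends on how parallel the job is.
for p in (0.0, 0.5, 0.9):
    eight = chip_throughput(per_core=0.85, parallel_fraction=p, cores=8)
    four = chip_throughput(per_core=1.00, parallel_fraction=p, cores=4)
    print(f"parallel fraction {p:.0%}: 8-core {eight:.2f}, 4-core {four:.2f}")
```

Under these assumptions, the eight-core chip loses on purely serial work and still trails at a 50% parallel fraction; it only pulls ahead once the workload is highly parallel.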

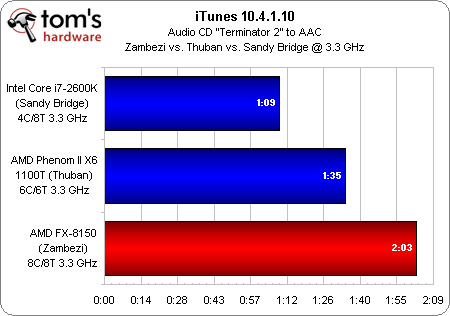

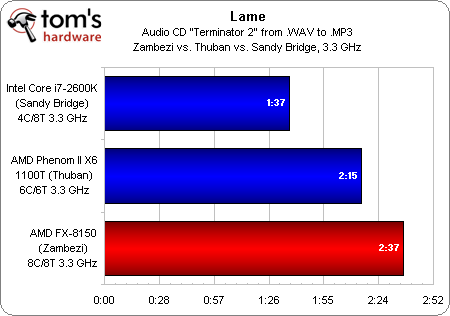

With a Core i7-2600K (Hyper-Threading, SpeedStep, and Turbo Boost all disabled), Phenom II X6 (Cool’n’Quiet and Turbo Core disabled), and FX-8150 (Cool’n’Quiet and Turbo Core disabled) all running our single-threaded iTunes test at an even 3.3 GHz, we see that Intel gets significantly more work done per cycle than the Phenom II X6 1100T, which in turn outperforms the FX. Lame, another single-threaded test, shows the same outcome.

John Fruehe, director of product marketing for server products at AMD, says he doesn’t like the per-core performance comparison on the server side because it favors Intel. In the server world, I’d absolutely agree with John: performance per watt and performance per dollar are both more pressing metrics in that space. On the desktop, however, enough workloads are still single- or lightly threaded that per-core performance matters, even more so when that measurement takes a step backward from one generation to the next.
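The two server-side metrics mentioned above are simple ratios of a benchmark score to power draw and to price. A minimal sketch, assuming any higher-is-better score; the class, field names, and inputs are hypothetical, not data from this review.

```python
from dataclasses import dataclass


@dataclass
class CpuResult:
    name: str
    score: float      # any higher-is-better benchmark score
    watts: float      # average power draw during the run
    price_usd: float  # purchase price

    def perf_per_watt(self) -> float:
        return self.score / self.watts

    def perf_per_dollar(self) -> float:
        return self.score / self.price_usd


# Hypothetical inputs, not measurements.
chips = [
    CpuResult("chip_x", score=100.0, watts=125.0, price_usd=250.0),
    CpuResult("chip_y", score=110.0, watts=95.0, price_usd=320.0),
]
for c in chips:
    print(f"{c.name}: {c.perf_per_watt():.3f} pts/W, {c.perf_per_dollar():.3f} pts/$")
```

With these made-up numbers, chip_y wins on performance per watt while chip_x wins on performance per dollar, which is why neither metric tells the whole story alone.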

Early on, then, we already have an idea of where the Bulldozer architecture might trip up...
