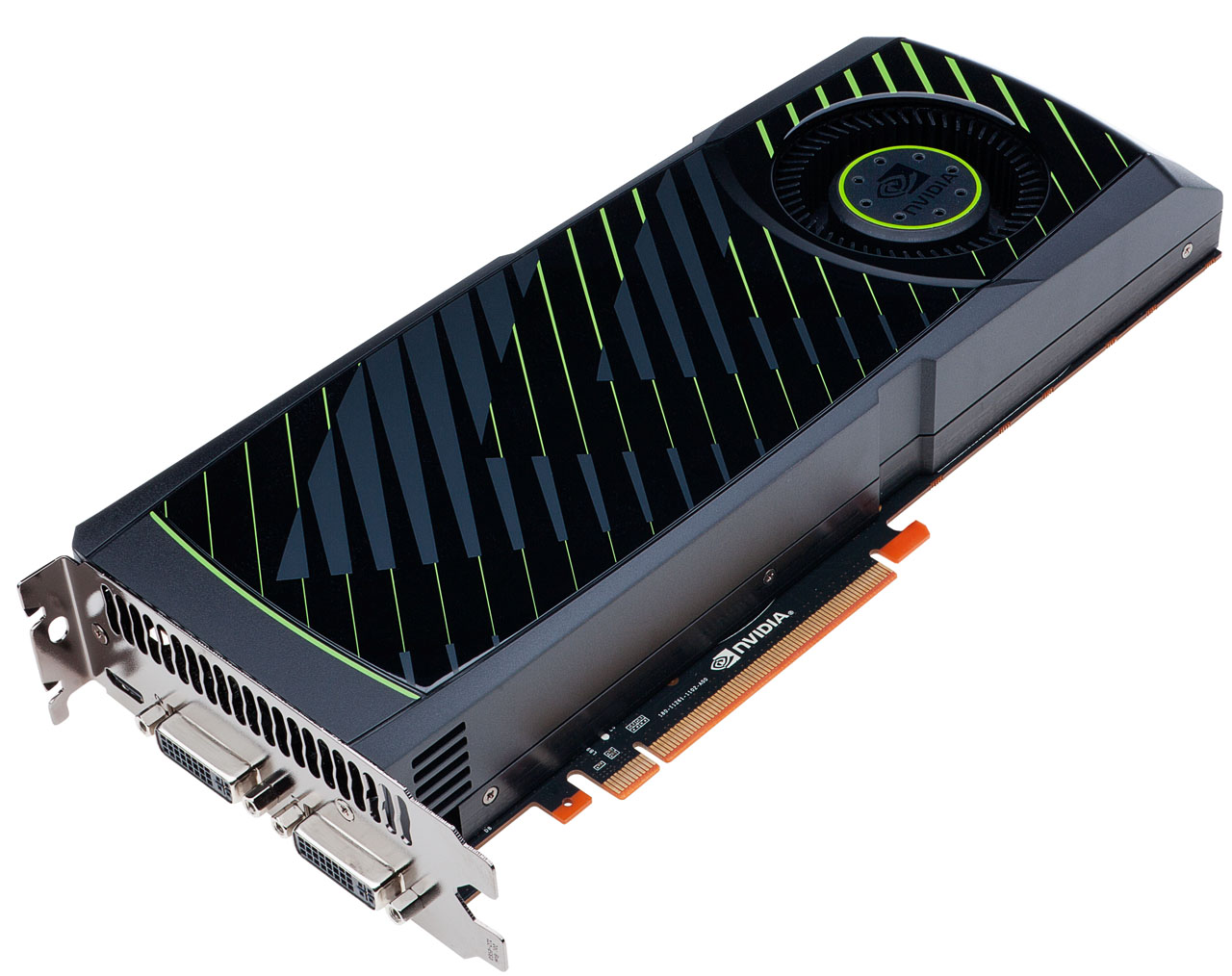

GeForce GTX 570 Review: Hitting $349 With Nvidia's GF110

A month ago, Nvidia launched its GeForce GTX 580, and it was everything we wanted back in March. Now the company is introducing the GeForce GTX 570, also based on its GF110. Is it fast enough to make us forget the GF100-based 400-series ever existed?

GeForce GTX 570: Now That's More Like It

Tom’s Hardware reader nevertell, responding to my review of Nvidia’s GeForce GTX 580:

“So it's basically what the 480 should have been. Fair enough, I'll wait for the 470 version of the GF110 and buy that.”

If nevertell stuck to his guns, then he’s probably pretty happy right about now. After all, I’ve been playing with “the 470 version of the GF110” for three days now, and have to say I’m genuinely embarrassed for the GeForce GTX 480 cards selling for $450 online. Why? Well, here’s a bit of a spoiler alert: the GeForce GTX 570 is every bit as fast, and in some cases faster. Moreover, Nvidia’s pricing the thing at $349. As soon as I found out about this board, I tried to warn those of you following me on Twitter to abandon any plans to scoop up a discounted GTX 480 ahead of the holidays. The GTX 570 is far more attractive.

Then, before sitting down to write this piece up, I went back to read all 10 pages of feedback on the GeForce GTX 580 launch. The one theme that came up over and over was 6800-series cards in CrossFire. I didn’t have the boards to make that happen at the time (they were hanging out over at Don’s place in Canada), but I do now. And so this time around, you’ll see Nvidia’s GeForce GTX 570 compared to a pair of Radeon HD 6850s yoked together. The AMD cards are $30 more expensive, but if they can also manage to put down better performance, perhaps that’ll make for an even better value.

Not Every Flower Can Be A Rose

With GeForce GTX 580, Nvidia showed us what its Fermi architecture was intended to look like nine months ago.

All 16 Shader Multiprocessors are enabled, yielding 512 CUDA cores, 64 texture units, and 16 PolyMorph engines. And while the back-end still consists of six ROP partitions associated with six 64-bit memory controllers (384-bit aggregate), it runs at a slightly higher data rate, improving memory bandwidth. The graphics and shader clocks are also a fair bit quicker. Lo and behold, a more efficient heatsink and better fan design keep the thermals and noise in check too, even as the GeForce GTX 580 uses just about as much power as its predecessor.


Unfortunately, not every GF110 GPU cut from TSMC’s silicon can grow up to be a princess (that’s not to say the rest of them have to be GeForce GTX 465 toads either, though).

The GeForce GTX 570 is what you’d get if GeForce GTX 480 slept with GeForce GTX 470, and the offspring somehow ended up with GF110 DNA. That is to say, the card’s GPU features 15 Shader Multiprocessors, yielding 480 CUDA cores and 60 texture units. That’s GTX 480’s bosom. It also wields five ROP partitions, a 320-bit memory bus, and 1.25 GB of GDDR5 memory. That’s GTX 470’s derriere.
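The unit counts quoted for both cards fall out of simple per-block arithmetic. The sketch below assumes 32 CUDA cores and four texture units per Shader Multiprocessor, plus one 64-bit memory controller and 256 MB of GDDR5 per ROP partition; those per-block figures are inferred from the totals given here (512/16, 64/16, 384/6, and 1.25 GB/5), and the class and constant names are made up for illustration.

```python
from dataclasses import dataclass

# Per-block figures inferred from the totals quoted in the text (assumptions).
CORES_PER_SM = 32            # 512 CUDA cores / 16 SMs
TEX_UNITS_PER_SM = 4         # 64 texture units / 16 SMs
BUS_BITS_PER_PARTITION = 64  # one 64-bit controller per ROP partition
MEM_MB_PER_PARTITION = 256   # 1.25 GB / 5 partitions


@dataclass(frozen=True)
class GpuConfig:
    """Hypothetical container for the enabled blocks on a GF110 board."""
    name: str
    sms: int
    rop_partitions: int

    @property
    def cuda_cores(self) -> int:
        return self.sms * CORES_PER_SM

    @property
    def texture_units(self) -> int:
        return self.sms * TEX_UNITS_PER_SM

    @property
    def bus_width_bits(self) -> int:
        return self.rop_partitions * BUS_BITS_PER_PARTITION

    @property
    def memory_mb(self) -> int:
        return self.rop_partitions * MEM_MB_PER_PARTITION


GTX_580 = GpuConfig("GeForce GTX 580", sms=16, rop_partitions=6)
GTX_570 = GpuConfig("GeForce GTX 570", sms=15, rop_partitions=5)

if __name__ == "__main__":
    for card in (GTX_580, GTX_570):
        print(
            f"{card.name}: {card.cuda_cores} cores, "
            f"{card.texture_units} texture units, "
            f"{card.bus_width_bits}-bit bus, {card.memory_mb} MB"
        )
```

Disabling one SM and one ROP partition is all it takes to get from the GTX 580's numbers to the GTX 570's.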

And of course you end up with the architectural enhancements made to GF110. Mainly, FP16 texture filtering happens in one clock cycle, just as it does on the GF104 found in GeForce GTX 460, and not the two cycles endured by GF100. Nvidia also made improvements to GF110’s Z-culling efficiency. This means the GPU is smarter about discarding pixels that don’t need to be rendered in a scene (because they’re behind other objects), conserving memory bandwidth and compute muscle.
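To make the Z-culling idea concrete, here is a toy depth-test sketch. It is not Nvidia's hardware algorithm, which works on blocks of pixels ahead of shading; it only illustrates the principle that a fragment lying behind something already drawn can be thrown away before any shading work is spent on it. The function name and the fragment format are invented for the example.

```python
def render(fragments, width, height):
    """Count fragments that get shaded versus discarded by a depth test.

    fragments: iterable of (x, y, z) tuples, smaller z meaning closer
    to the viewer. Hypothetical format, chosen for illustration only.
    """
    # Start with every pixel infinitely far away.
    depth = [[float("inf")] * width for _ in range(height)]
    shaded = 0
    culled = 0
    for x, y, z in fragments:
        if z >= depth[y][x]:
            # Hidden behind an earlier fragment: skip the shading cost.
            culled += 1
            continue
        depth[y][x] = z
        shaded += 1
    return shaded, culled


if __name__ == "__main__":
    frags = [(0, 0, 0.2), (0, 0, 0.8), (1, 0, 0.5), (0, 0, 0.1)]
    print(render(frags, width=2, height=1))
```

In this run the second fragment sits behind the first at the same pixel, so it is the only one discarded; the more fragments a GPU can reject this way, the less bandwidth and shader time it wastes.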


thearm Grrrr... Every time I see these benchmarks, I'm hoping Nvidia has taken the lead. They'll come back. It's alllll a cycle.

xurwin at $350 beating the 6850 in xfire? i COULD say this would be a pretty good deal, but why no 6870 in xfire? but with a narrow margin and if you need cuda. this would be a pretty sweet deal, but i'd also wait for 6900's but for now. we have a winner?

sstym Quoting thearm: “Grrrr... Every time I see these benchmarks, I'm hoping Nvidia has taken the lead. They'll come back. It's alllll a cycle.”

There is no need to root for either one. What you really want is a healthy and competitive Nvidia to drive prices down. With Intel shutting them off the chipset market and AMD beating them on their turf with the 5XXX cards, the future looked grim for NVidia.

It looks like they still got it, and that's what counts for consumers. Let's leave fanboyism to 12 year old console owners.

nevertell It's disappointing to see the freaky power/temperature parameters of the card when using two different displays. I was planing on using a display setup similar to that of the test, now I am in doubt.

reggieray I always wonder why they use the overpriced Ultimate edition of Windows? I understand the 64 bit because of memory, that is what I bought but purchased the OEM home premium and saved some cash. For games the Ultimate does no extra value to them.

Or am I missing something?

theholylancer hmmm more sexual innuendo today than usual, new GF there chris? :D

EDIT:

Love this gem:

Before we shift away from HAWX 2 and onto another bit of laboratory drama, let me just say that Ubisoft’s mechanism for playing this game is perhaps the most invasive I’ve ever seen. If you’re going to require your customers to log in to a service every time they play a game, at least make that service somewhat responsive. Waiting a minute to authenticate over a 24 Mb/s connection is ridiculous, as is waiting another 45 seconds once the game shuts down for a sync. Ubi’s own version of Steam, this is not.

When a reviewer of not your game, but of some hardware using your game comments on how bad it is for the DRM, you know it's time to not do that, or get your game else where warning.