Does Your Storage Controller Affect The Performance Of An SSD?

Twelve SATA Controllers, Benchmarked

Is your SSD turning in lower benchmark numbers than what its spec sheet promises? It's possible that your motherboard's chipset or an add-in storage controller is to blame. But do those results really mean anything in an enthusiast's desktop PC?

The real-world performance of an SSD doesn’t depend only on the drive, but also on the computer you're dropping it into. Which chipset does your motherboard employ? Is it older, and limited to 3 Gb/s transfers, or does it sport 6 Gb/s connectivity? More specifically, even if your storage controller supports the very latest standards, is it as fast as controllers with similar specifications from competing vendors?

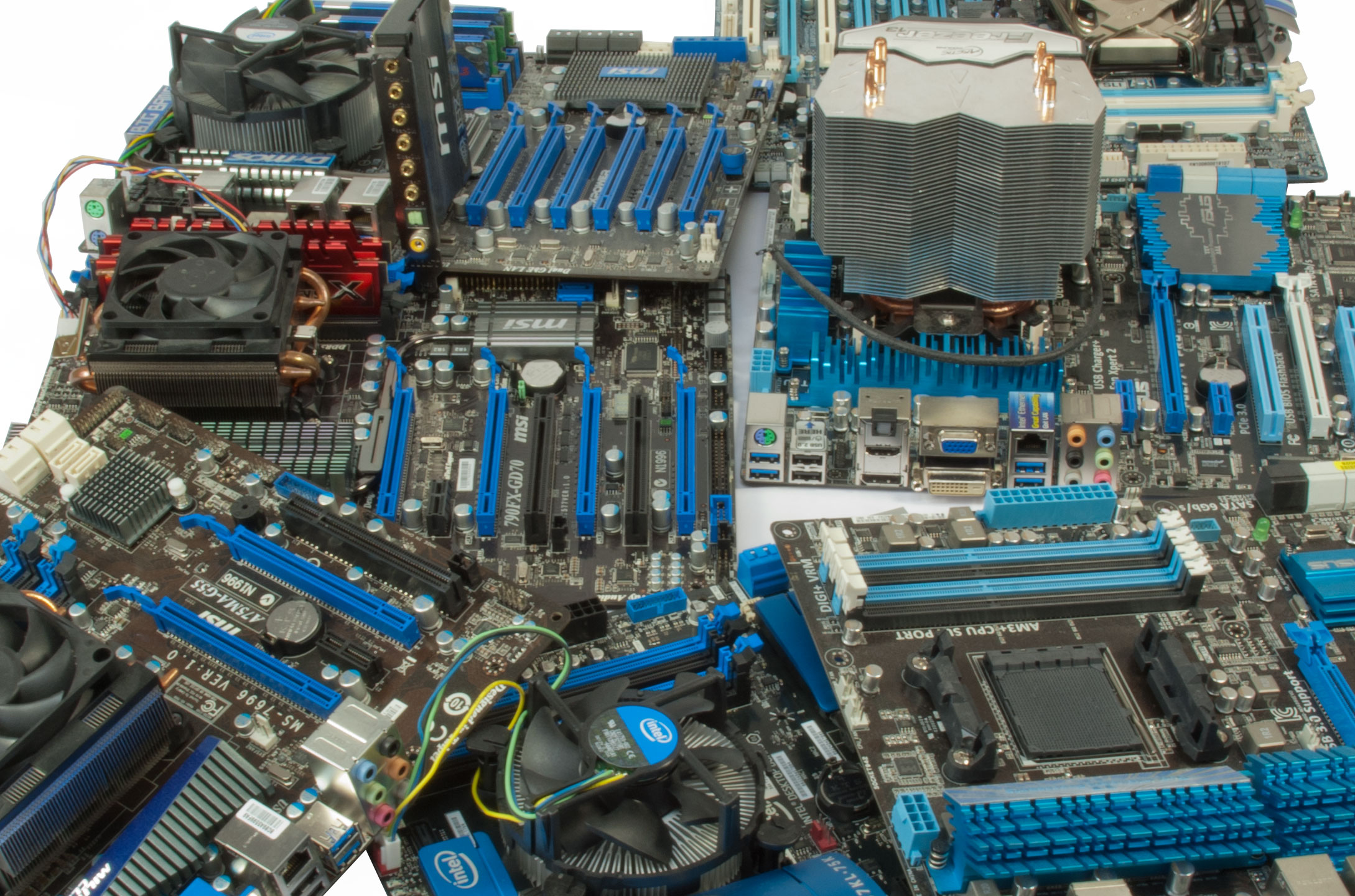

We've spent plenty of time digging into the performance attributes of SSDs. Now, we want to have a look at how different south bridges and standalone controllers affect storage performance. We gathered an impressive array of motherboards and add-in cards from around the lab. The boards represent a veritable who's who of chipsets from 2008 to 2013, including AMD SB750, AMD A75, AMD SB950, Intel Z87, Intel P55, Intel ICH10R, and Intel Z77. The cards include ASMedia's ASM1061, Marvell's 88SE9123-NAA2, Marvell's 88SE9125-NAA2, Marvell's 88SE9128-NAA2, and Marvell's 88SE9130-NAA2 controllers.

In an SSD review, you'd see us use a bunch of different drives as comparison points. Here, though, we want to stick with one and have that serve as a stake in the ground, facilitating consistent throughput to our various controllers. Samsung's 256 GB 840 Pro is one of the highest-end SSDs we have in the lab, and the company sent out one to each of our editors for use as Tom's Hardware's reference through 2013.

If you want more information on the 840 Pro, check out our launch coverage: Samsung 840 Pro SSD: More Speed, Less Power, And Toggle-Mode 2.0, along with our last two experiments with these things: Is A SATA 3Gb/s Platform Still Worth Upgrading With An SSD? and One SSD Vs. Two In RAID: Which Is Better?


Madn3ss795 It's clear that Intel won over AMD in this, because 4K read/write and access time are what we care most about nowadays. It's a shame that AMD went for quantity over quality.

As a side note, when can we see a USB 3.0 controller comparison with those new AMD and Intel chipsets?

SteelCity1981 I was surprised to see Intel's 3 Gb/s controllers outpace Marvell's 6 Gb/s controllers in many benchmarks. Just goes to show you that not all SATA controllers are created equal.

mapesdhs The one thing the article didn't say, which it should, is that Marvell controllers are garbage. Notice how often the P55 matches or beats one of the Marvell 6 Gb/s controllers. The PCIe x1 link issue is bad enough, but sometimes even having a proper connection doesn't help their performance.

Also not mentioned is SSD reliability. The only time I've ever had problems with an SSD was when it was connected to a Marvell controller (e.g. failed fw update; move the SSD to an Intel port, the update then works ok).

Ian.

slomo4sho I would like to see if there is any performance difference when each of the third-party controllers is tested on the available platforms.

jg11 There is one very significant piece of information that is not included in this article. Which particular ports are used on the controller makes a big difference.

Most of the embedded chipsets (or external chipsets) carry a multiplexer between SATA and PCI Express. The CPUs accept PCI Express connections, not SATA, so there is a conversion that must be made, which is done by the SATA chipset. Each lane on PCI Express 2.0 supports approximately 5 Gb/s, and each lane on PCI Express 3.0 supports approximately 8 Gb/s.

Here's the problem I have seen in external expansion cards. They connect 4 SATA ports to a single PCI Express 2.0 lane. So potentially, four connected SATA 6 Gb/s drives, or 24 Gb/s total I/O throughput, are being funneled into a single 5 Gb/s connection to the CPU. I don't care how good the SATA chipset is at processing and prioritizing I/O data, you are going to have an I/O bottleneck. Even four SATA 3 Gb/s drives create a total of 12 Gb/s throughput, more than a single PCI Express 2.0 lane can handle. SSDs can approach speeds greater than 3 Gb/s, so it is not a theoretical bottleneck, it is a very real limitation.

So going back to the article. At most, I have seen 4 SATA ports connected to a single PCI Express 2.0 lane. I have seen 6 or 8 connected to either 2 discrete lanes or a 2x lane (or 4x lane when talking about SAS), which carries approximately 10 Gb/s of total throughput. So depending on the implementation of the embedded chipset on the motherboard, it may be the PCI Express lanes giving you the throughput limitation and not the SATA chipset. Different ports may be connected to different 1x PCI Express lanes or to a 2x lane, giving you either two discrete paths to the CPU, maximizing throughput, or a larger pipeline to the CPU, which is better than a 1x lane but not nearly as good as discrete pathways.

I have an external PCI Express controller with a few drives on my main system, and when transferring files from drives on the internal (motherboard) chipset to drives on the connected card, there is a noticeable throughput difference.
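The lane-sharing argument in the comment above is easy to sanity-check with a little arithmetic. What follows is only a rough sketch, not something taken from the article or its test setup. It assumes nominal line rates (3 and 6 Gb/s for SATA, 5 GT/s per PCIe 2.0 lane, 8 GT/s per PCIe 3.0 lane), 8b/10b encoding on SATA and PCIe 2.0, 128b/130b encoding on PCIe 3.0, and it ignores all protocol overhead above the line encoding. The function names are made up for illustration.

```python
# Back-of-the-envelope link budget for SATA ports behind shared PCIe lanes.
# Assumptions: nominal line rates, 8b/10b encoding for SATA and PCIe 2.0,
# 128b/130b for PCIe 3.0, no packet/protocol overhead. Real throughput is lower.

# name -> (line rate in Mb/s, payload bits, total bits per encoded symbol group)
SATA_LINKS = {
    "SATA 3Gb/s": (3000, 8, 10),
    "SATA 6Gb/s": (6000, 8, 10),
}
PCIE_LANES = {
    "PCIe 2.0": (5000, 8, 10),
    "PCIe 3.0": (8000, 128, 130),
}


def payload_mb_per_s(line_rate_mbps, payload_bits, total_bits):
    """Usable megabytes per second after line encoding."""
    return line_rate_mbps * payload_bits / total_bits / 8


def sata_payload(link):
    """Payload ceiling of one SATA port, in MB/s."""
    return payload_mb_per_s(*SATA_LINKS[link])


def pcie_payload(gen, lanes=1):
    """Payload ceiling of a PCIe link with the given lane count, in MB/s."""
    return payload_mb_per_s(*PCIE_LANES[gen]) * lanes


def oversubscription(sata_link, ports, pcie_gen, lanes=1):
    """Ratio of combined SATA port bandwidth to the PCIe uplink bandwidth.

    A value above 1.0 means the uplink cannot feed every port at full speed.
    """
    return ports * sata_payload(sata_link) / pcie_payload(pcie_gen, lanes)


if __name__ == "__main__":
    for ports, lanes in [(1, 1), (4, 1), (4, 2)]:
        ratio = oversubscription("SATA 6Gb/s", ports, "PCIe 2.0", lanes)
        print(f"{ports} x SATA 6Gb/s on PCIe 2.0 x{lanes}: {ratio:.1f}x")
```

Under these assumptions, even a single SATA 6Gb/s port behind one PCIe 2.0 lane is slightly oversubscribed (about 600 MB/s of port bandwidth against about 500 MB/s of uplink), and four ports on that same lane are oversubscribed by nearly a factor of five. Whether a given board or card is actually wired this way is something only its documentation can confirm.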

Onus I would like to have seen how CPU speed affects these measurements, if at all. As it is, other than to get off a Marvell controller or upgrade from 3Gb/s to 6Gb/s, there doesn't appear to be a whole lot of difference; some, but not enough to write home about (i.e. to suggest an upgrade).

Shneiky I am disappointed that there was no 67 chipset. After all, most people are still on Sandy Bridge.

Lefturn Great article, guys. I own an 840 Pro myself, and I was wondering why the built-in benchmark numbers weren't as high as what was advertised. Now I know.

ArmedandDangerous Looking at the testbed, I see the Intel X25-M G ONE. How the heck did that achieve 300+ MB/s doing anything at all? It's a SATA 2.0 device, which is a 3 Gb/s interface. Your benchmarks are showing 6 Gb/s scores.

ronch79 Sorry guys, I just need to put in some 'constructive criticism'. This article's last paragraph just sounds so stupid and OBVIOUS that it's like reading an old issue of PC Magazine where the authors are a bunch of old fuddy-duddies who say things that are just too obvious. ALL motherboards today come with built-in SATA ports and nobody who has half a brain will buy a separate PCIe SATA controller to run his SSD or mech HDD. NOBODY! Unless that person has (1) run out of southbridge-provided SATA ports, or (2) he has an old board with old SATA 3Gbps ports and thinks a fancy new SATA 6 PCIe card will be a nice upgrade, or (3) he does have less than half a brain and thinks that a separate SATA controller somehow has some secret sauce that's faster than the motherboard SATA ports, or, lastly, (4) he thinks that the ASMedia controller that also came extra with his board is better than what Intel or AMD came up with. Of these four possibilities, 1 and 2 are probably acceptable, 3 and 4 are stupid scenarios.

No, OF COURSE and OBVIOUSLY you plug devices into the built-in southbridge-connected SATA ports. Anyone who even thinks about installing his own SSD will AUTOMATICALLY do that, not go out and buy a separate SATA controller!