
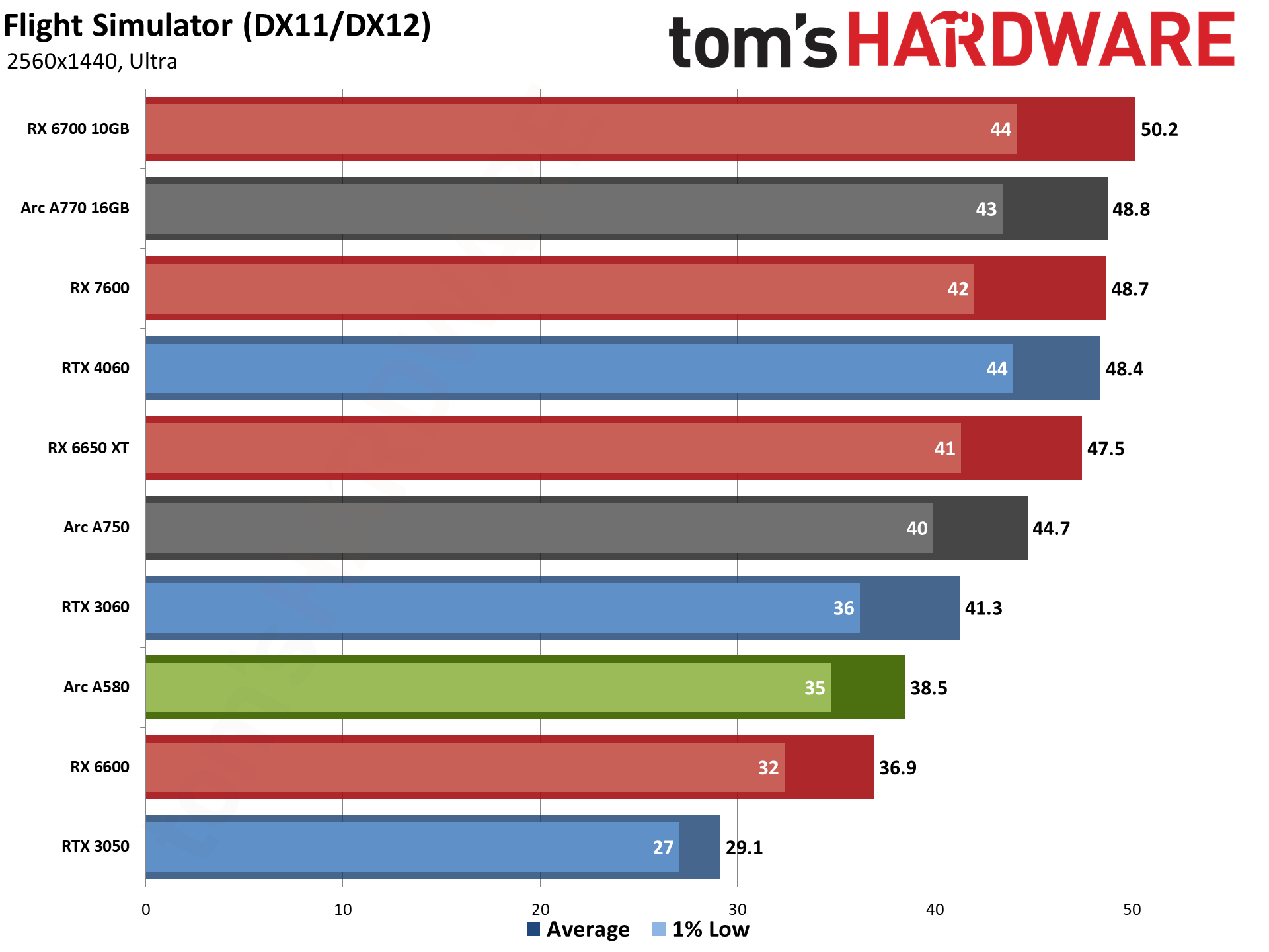

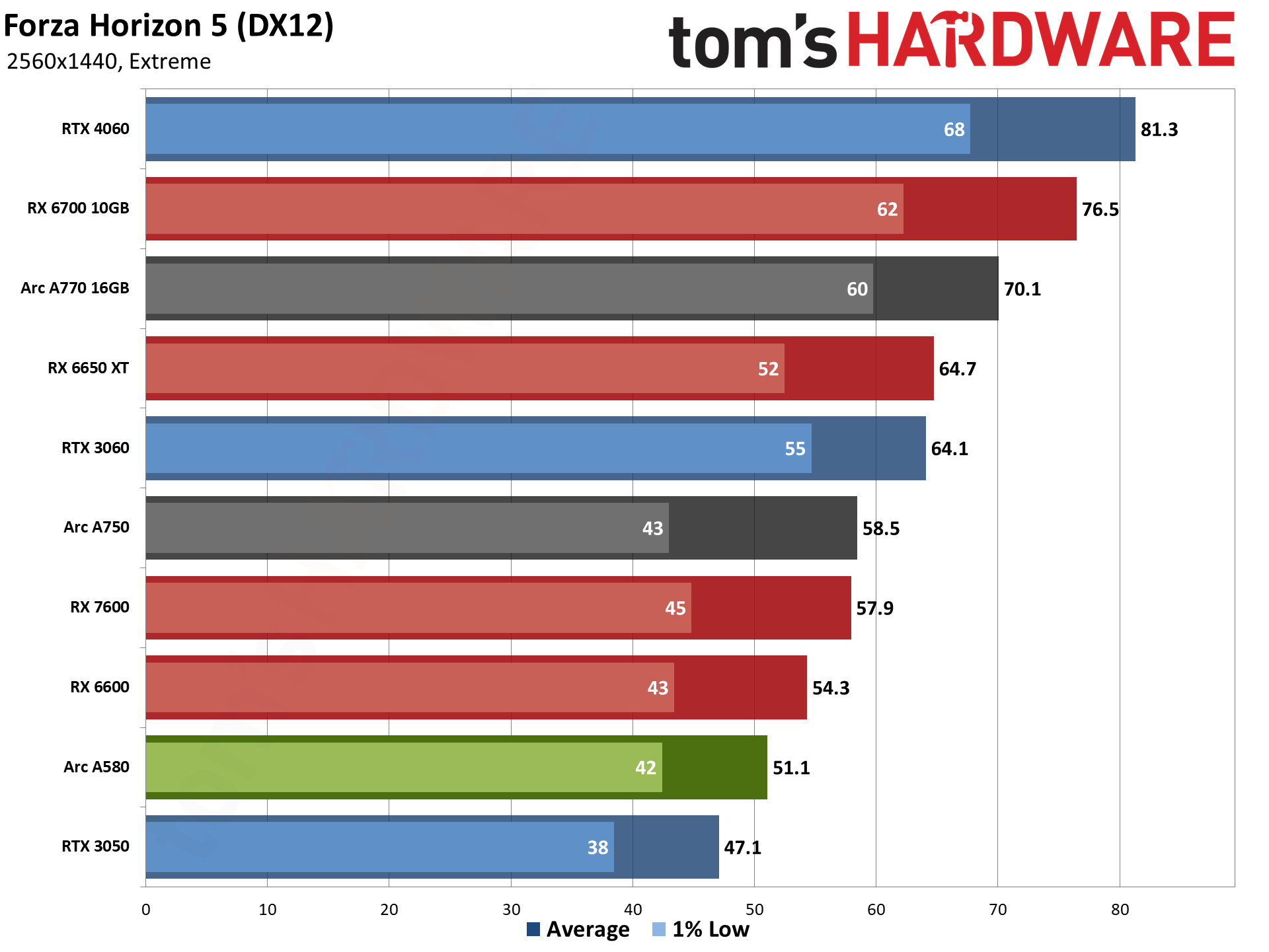

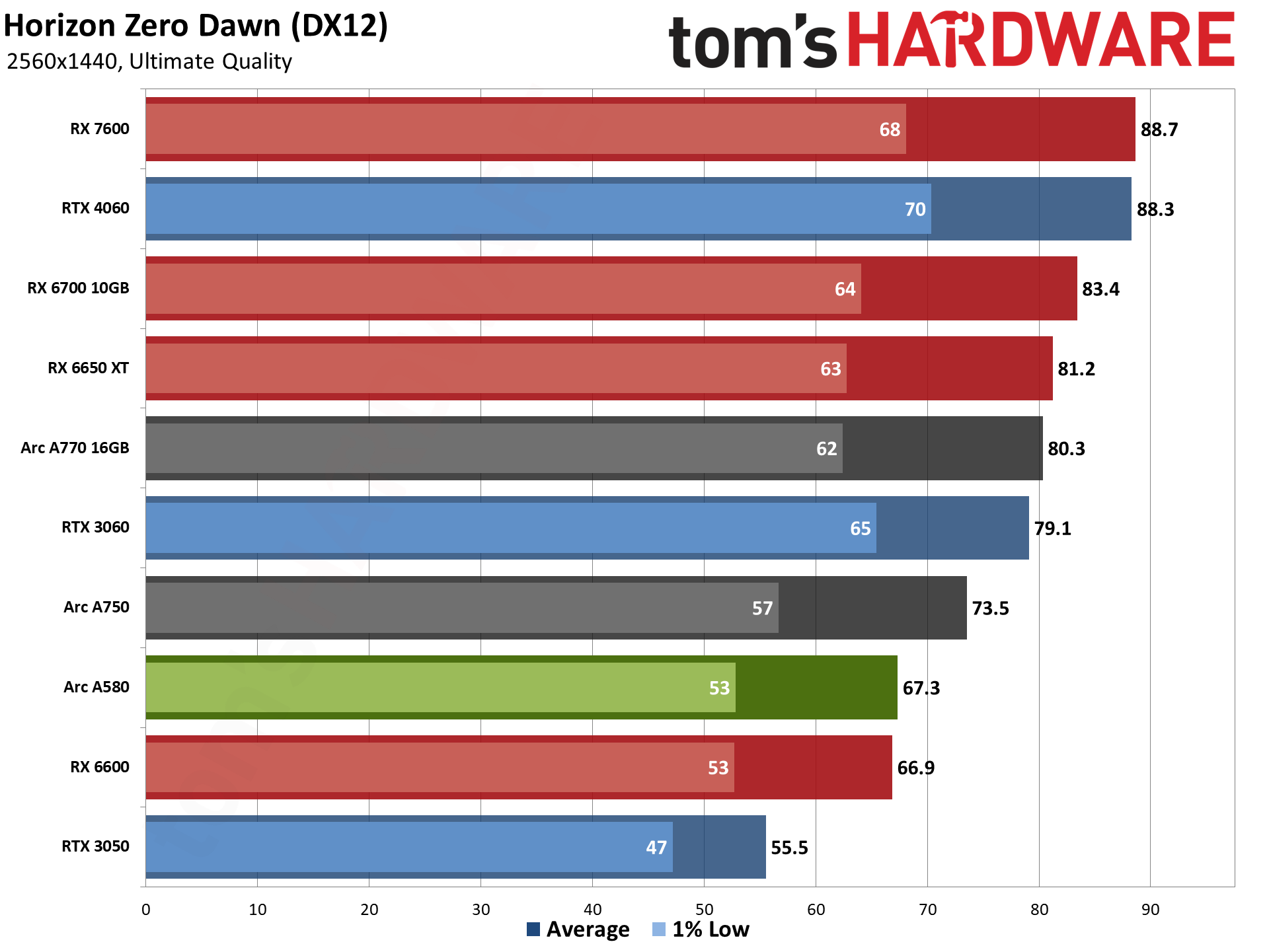

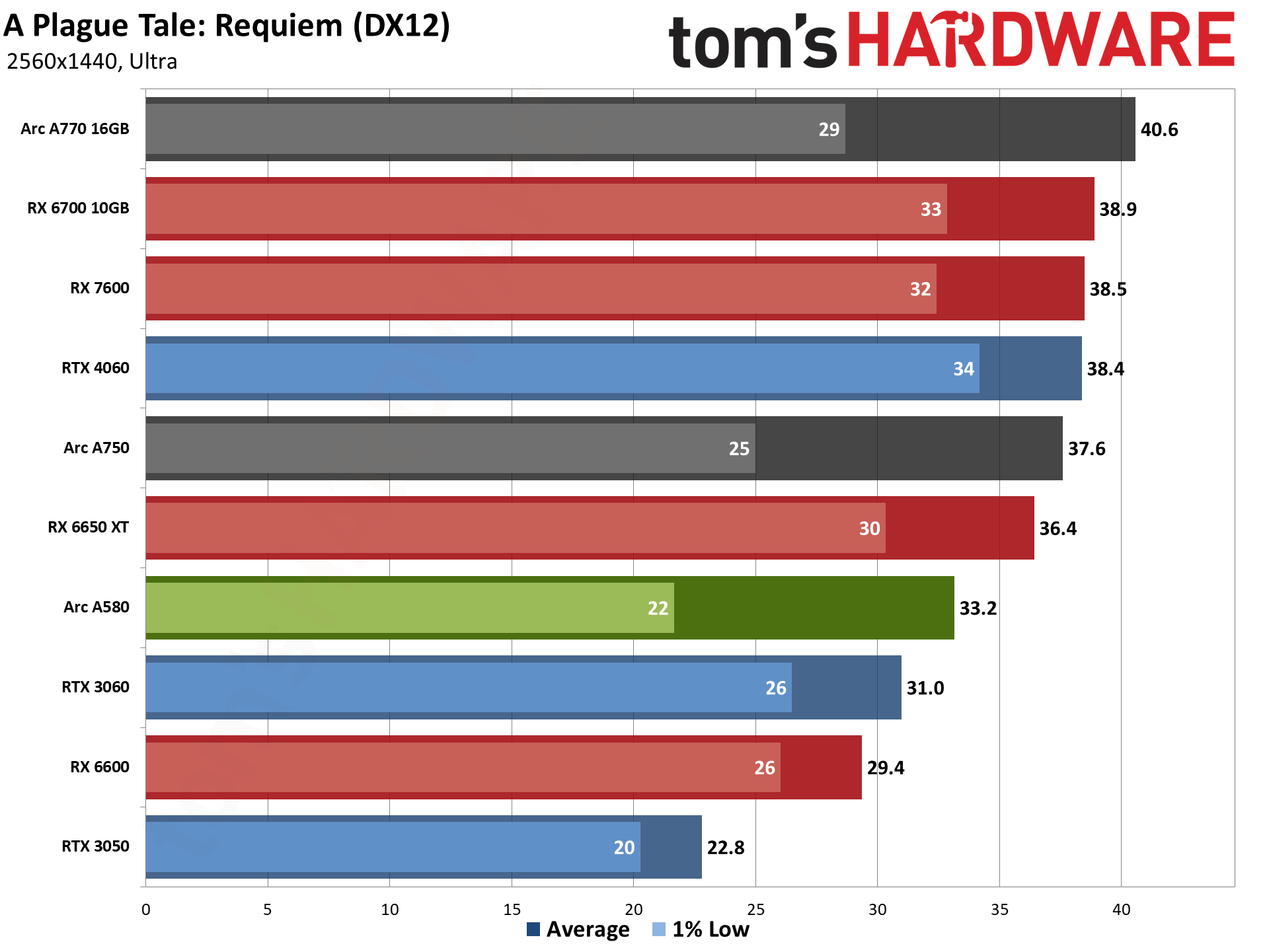

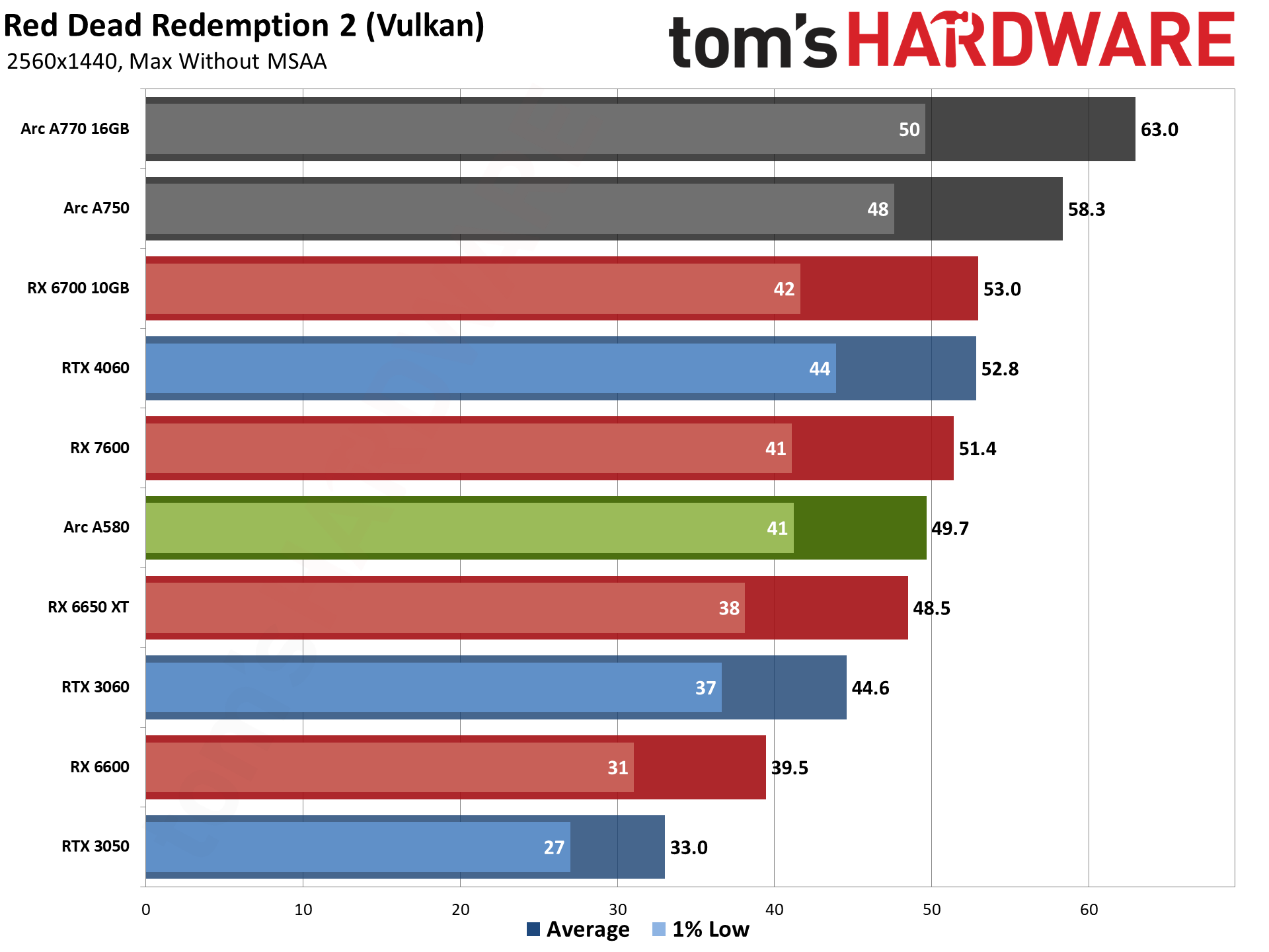

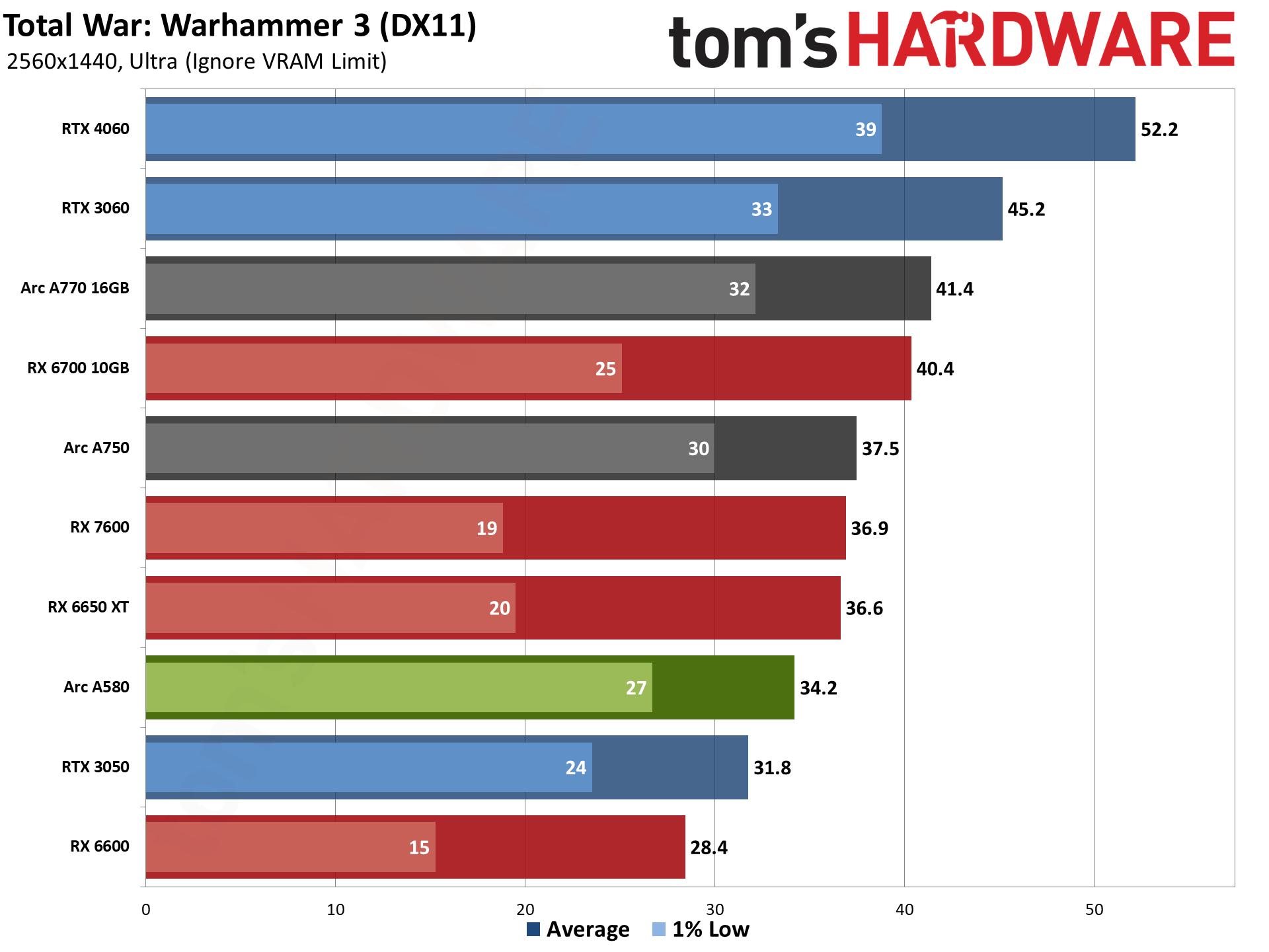

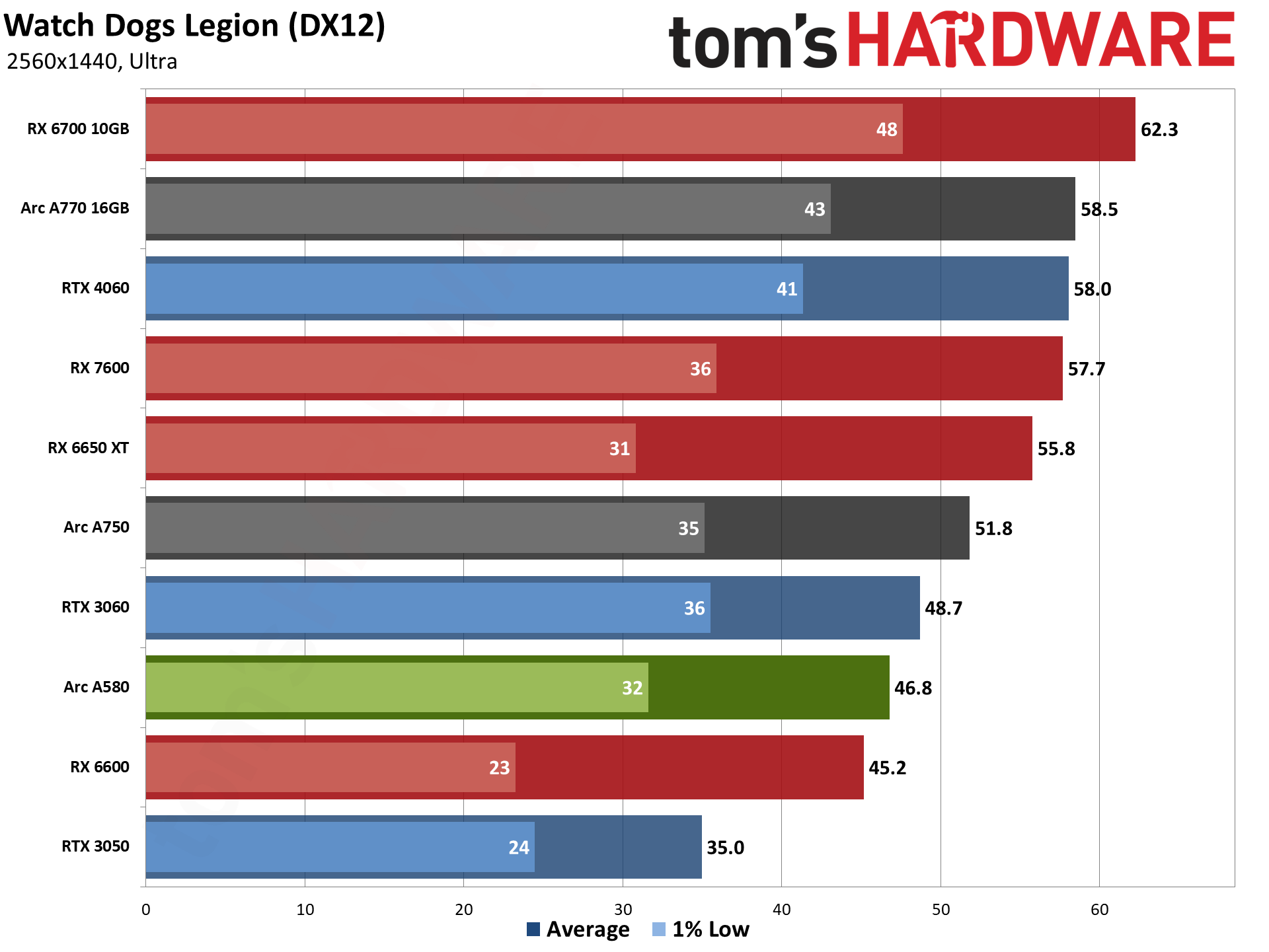

We also ran every game in our test suite at 1440p ultra settings, where performance drops quite a bit compared to 1080p ultra. We even tested 4K ultra, but not with the ray tracing games, as that's simply too much. The 4K rasterization charts are further down if you're interested, but only a few of the games managed to break 30 fps. Let's start with 1440p.

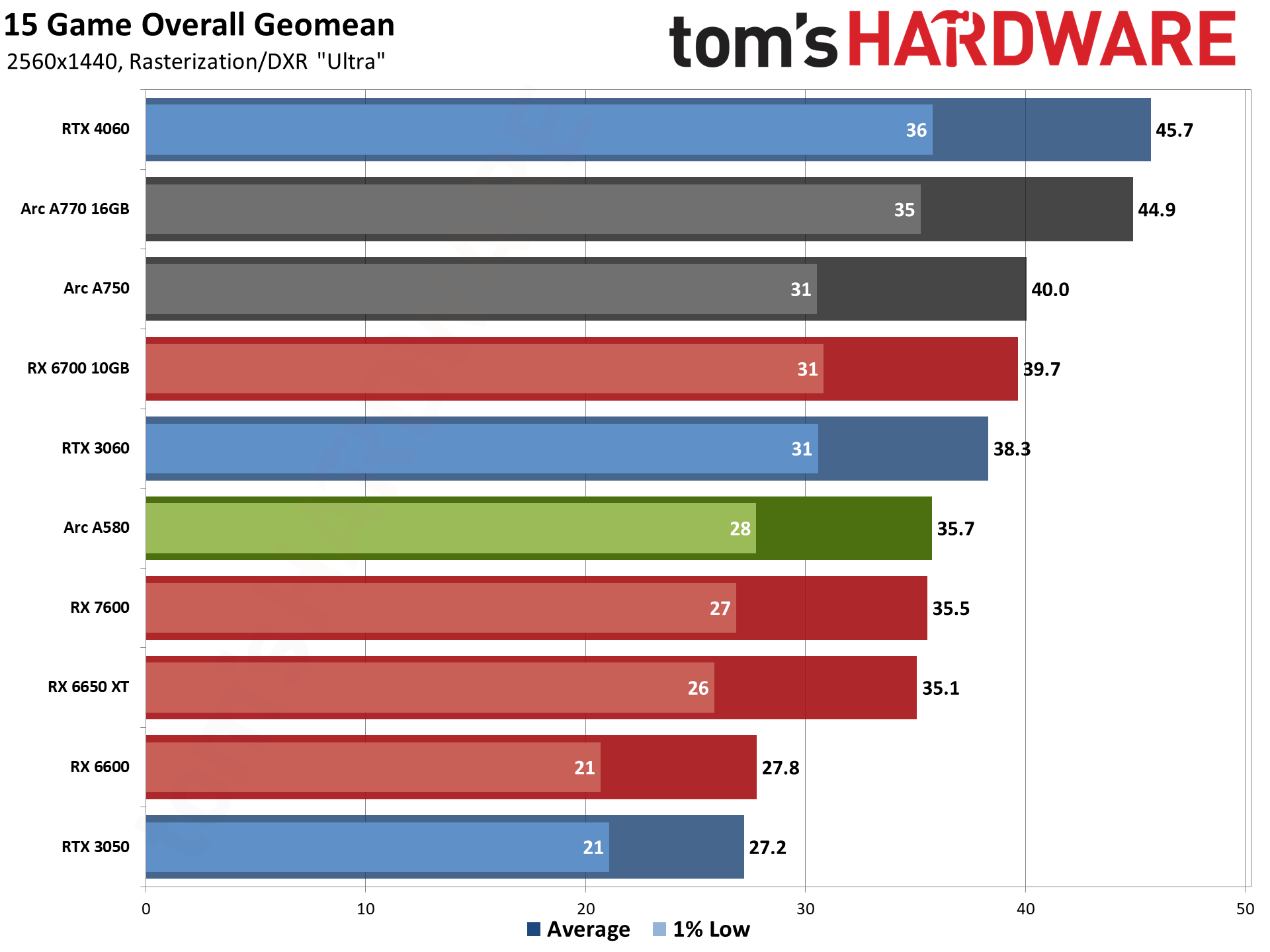

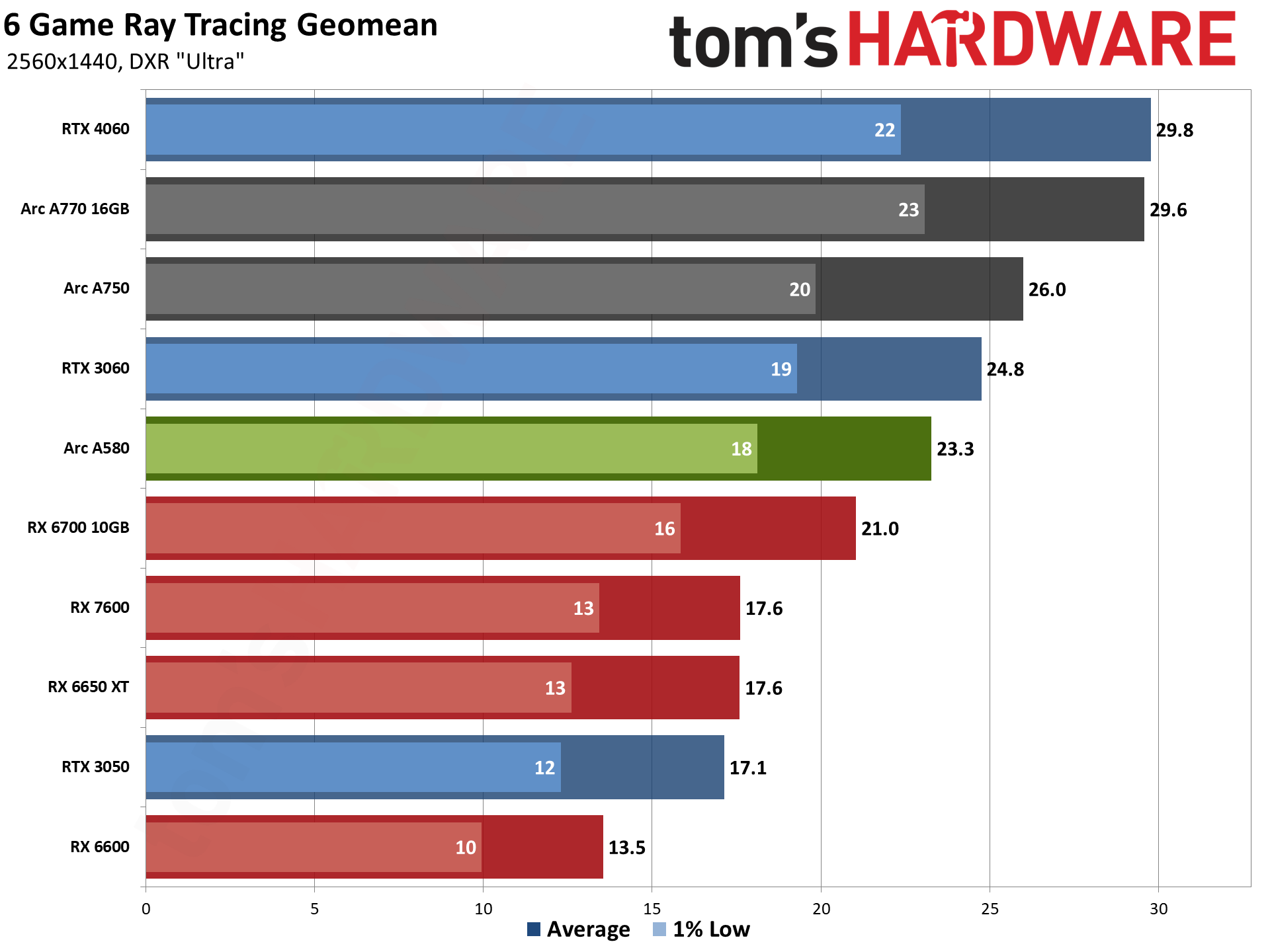

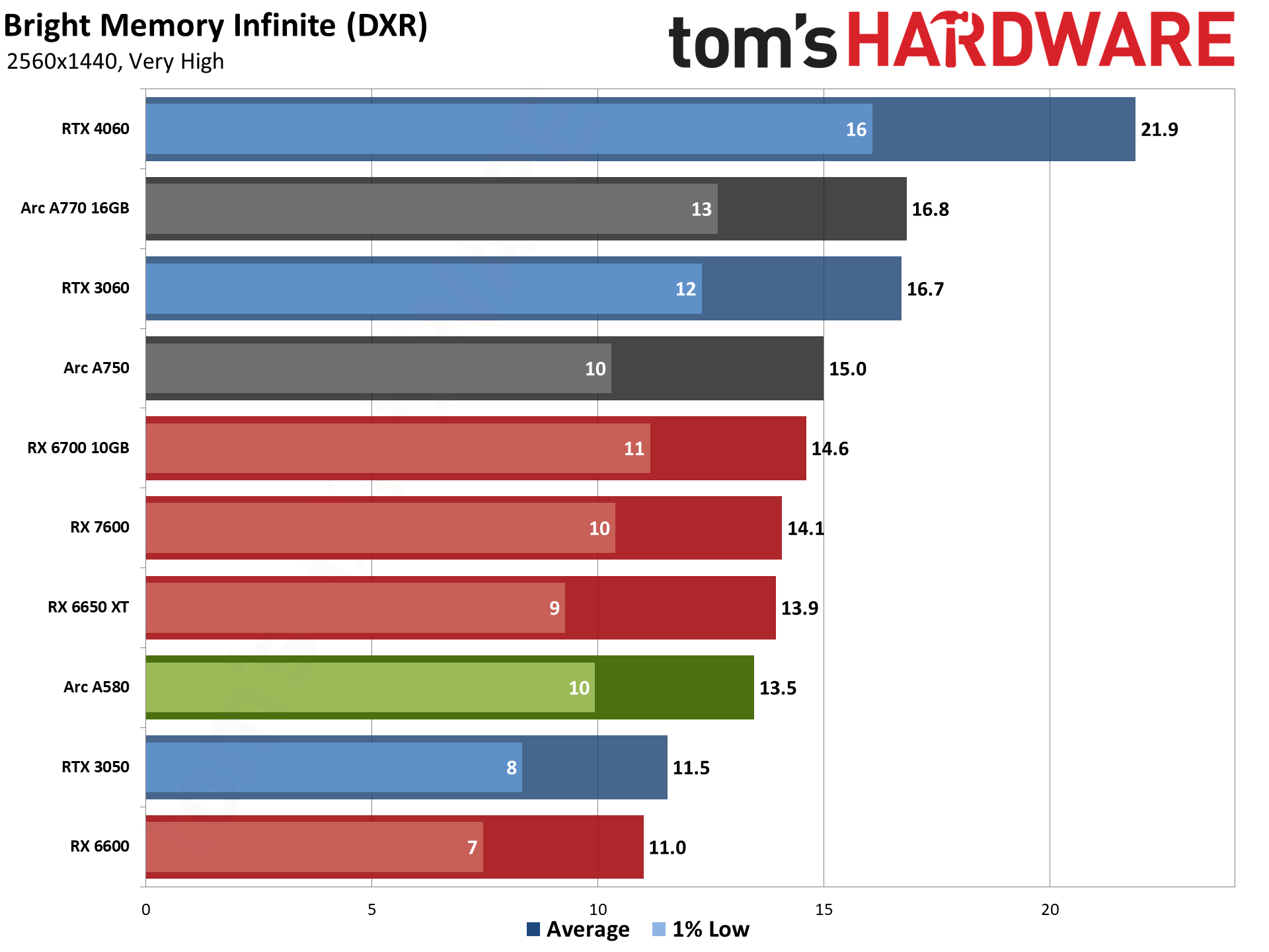

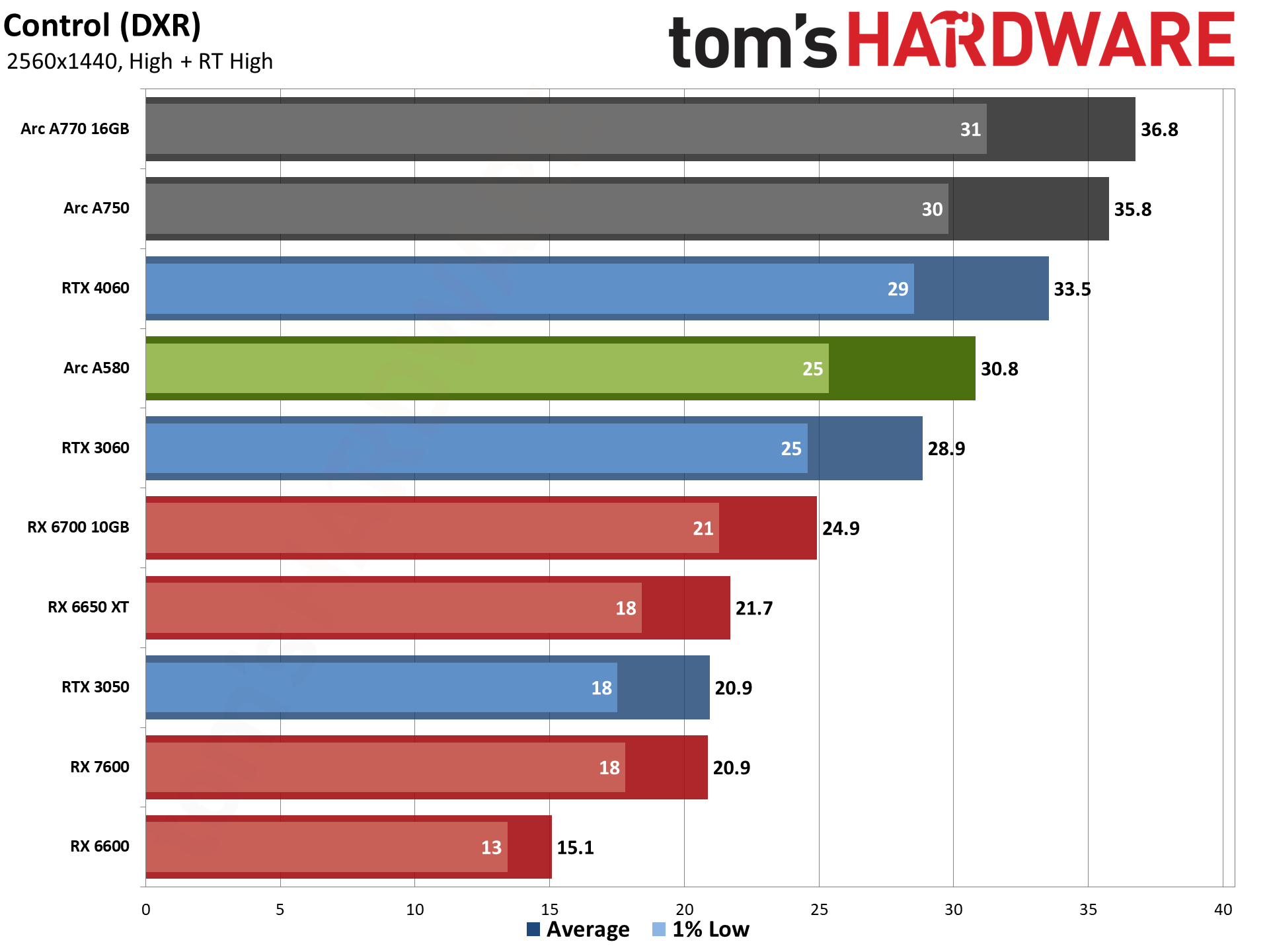

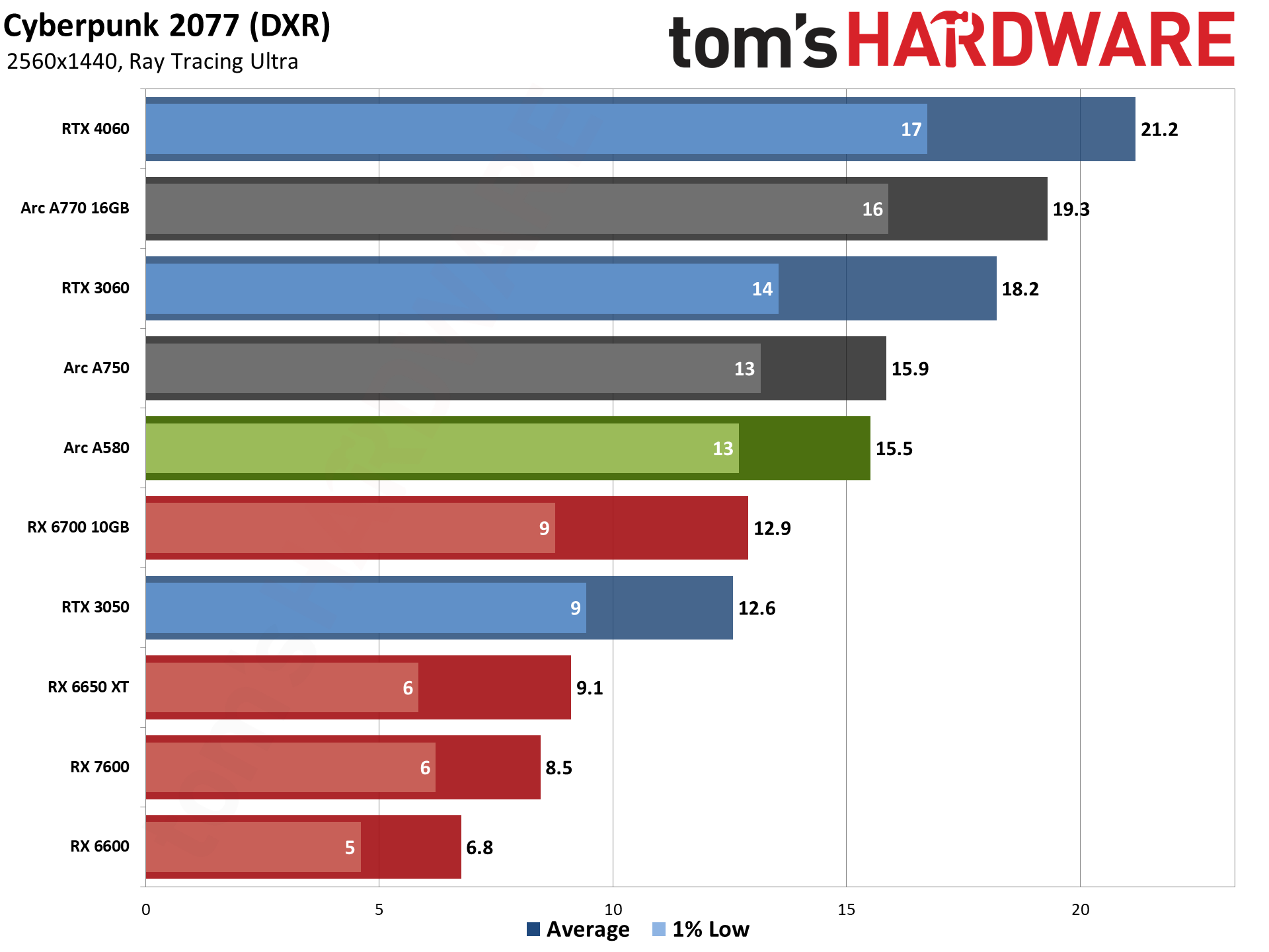

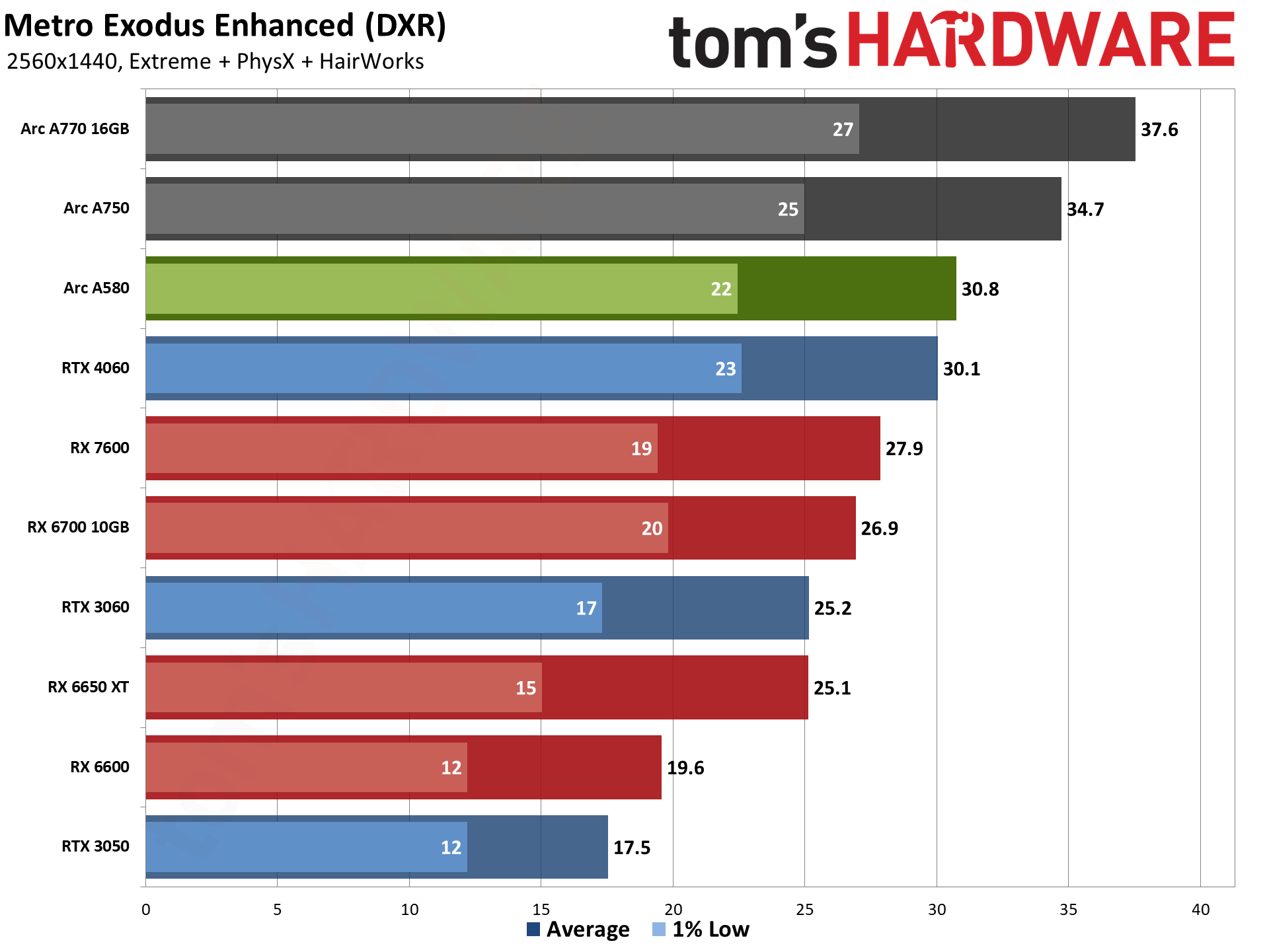

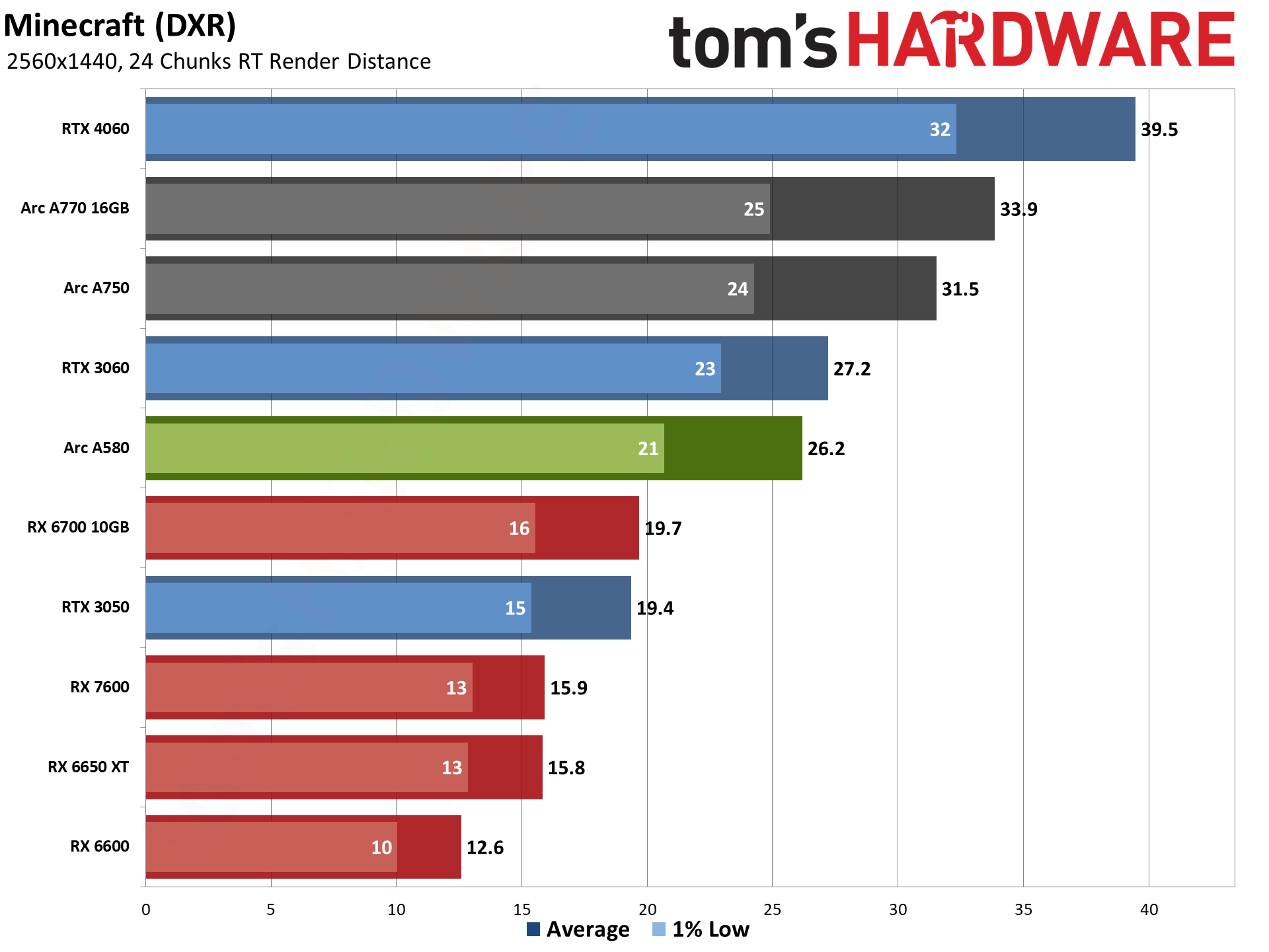

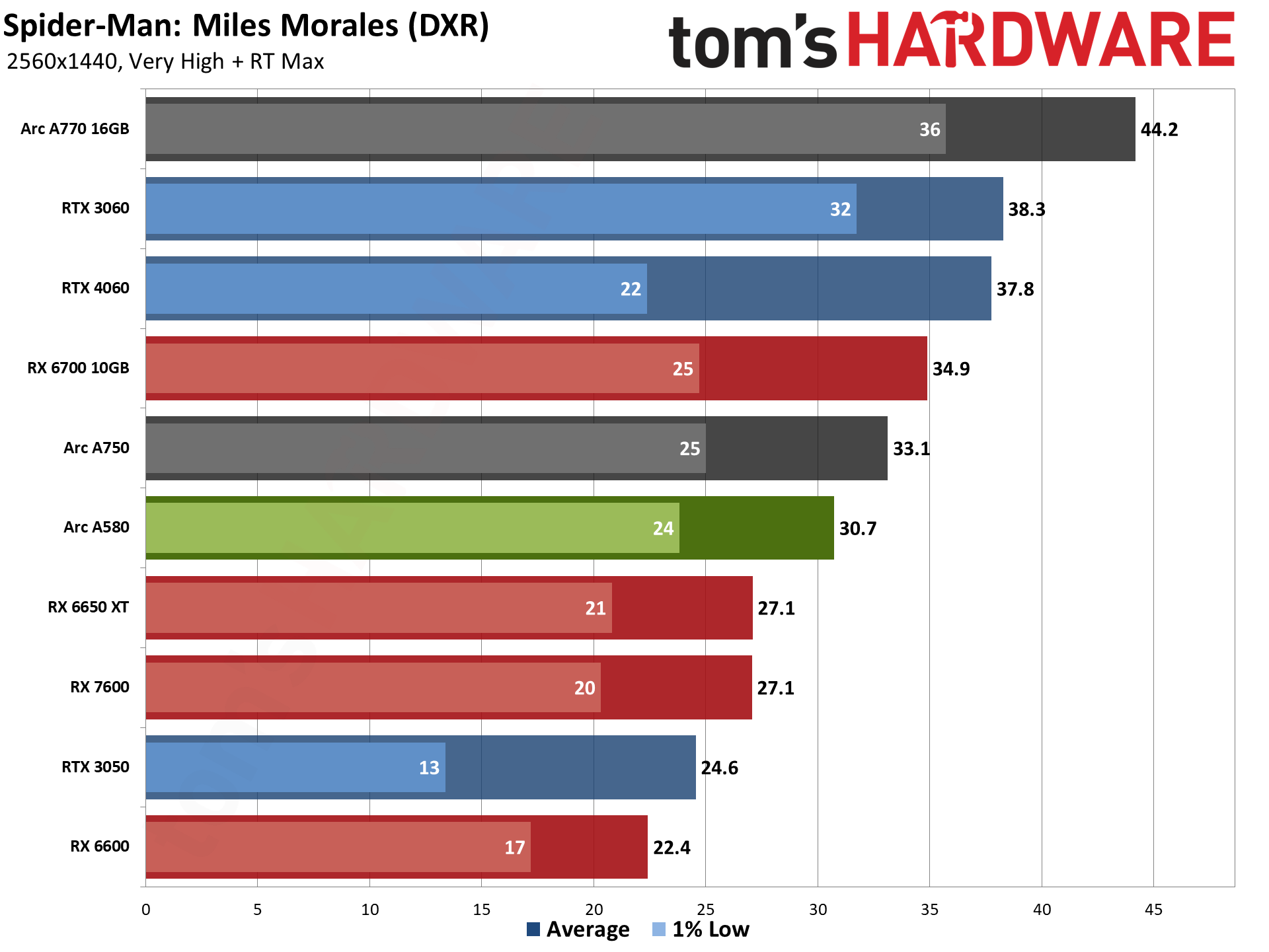

The 256-bit memory interface starts to become a much bigger factor at 1440p, allowing the Arc A580 to pull ahead of the RX 7600 and RX 6650 XT now. That's still mostly thanks to the DXR performance, however, and the A580 really doesn't have enough muscle to do 1440p with ray tracing.

You can certainly make the argument that 1440p is too demanding for most of the GPUs in our charts. If you're gunning for 60 fps or higher, the A580 only hits that mark in two games. It barely squeaks past 30 fps in five other games, with five more in the 39 to 53 fps range. And again, some form of upscaling (XeSS or FSR2) would make some of the games far more playable, even at 1440p.
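The tally above comes down to bucketing each game's average frame rate against the 30 fps and 60 fps thresholds. Here's a minimal Python sketch of that bookkeeping. The function names (`fps_tier`, `tally_tiers`) and the sample numbers are hypothetical placeholders for illustration, not values taken from our charts.

```python
from collections import Counter


def fps_tier(avg_fps: float) -> str:
    """Classify an average frame rate against the 30 and 60 fps marks."""
    if avg_fps >= 60:
        return "60+ fps"
    if avg_fps >= 30:
        return "30-59 fps"
    return "under 30 fps"


def tally_tiers(results: dict[str, float]) -> Counter:
    """Count how many games land in each tier."""
    return Counter(fps_tier(fps) for fps in results.values())


# Hypothetical placeholder results, NOT measured data from the review.
sample = {"Game A": 72.4, "Game B": 44.0, "Game C": 31.2, "Game D": 24.8}

for tier, count in tally_tiers(sample).items():
    print(f"{tier}: {count} game(s)")
```

Swapping in the real per-game averages from a chart would reproduce the kind of breakdown described above.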

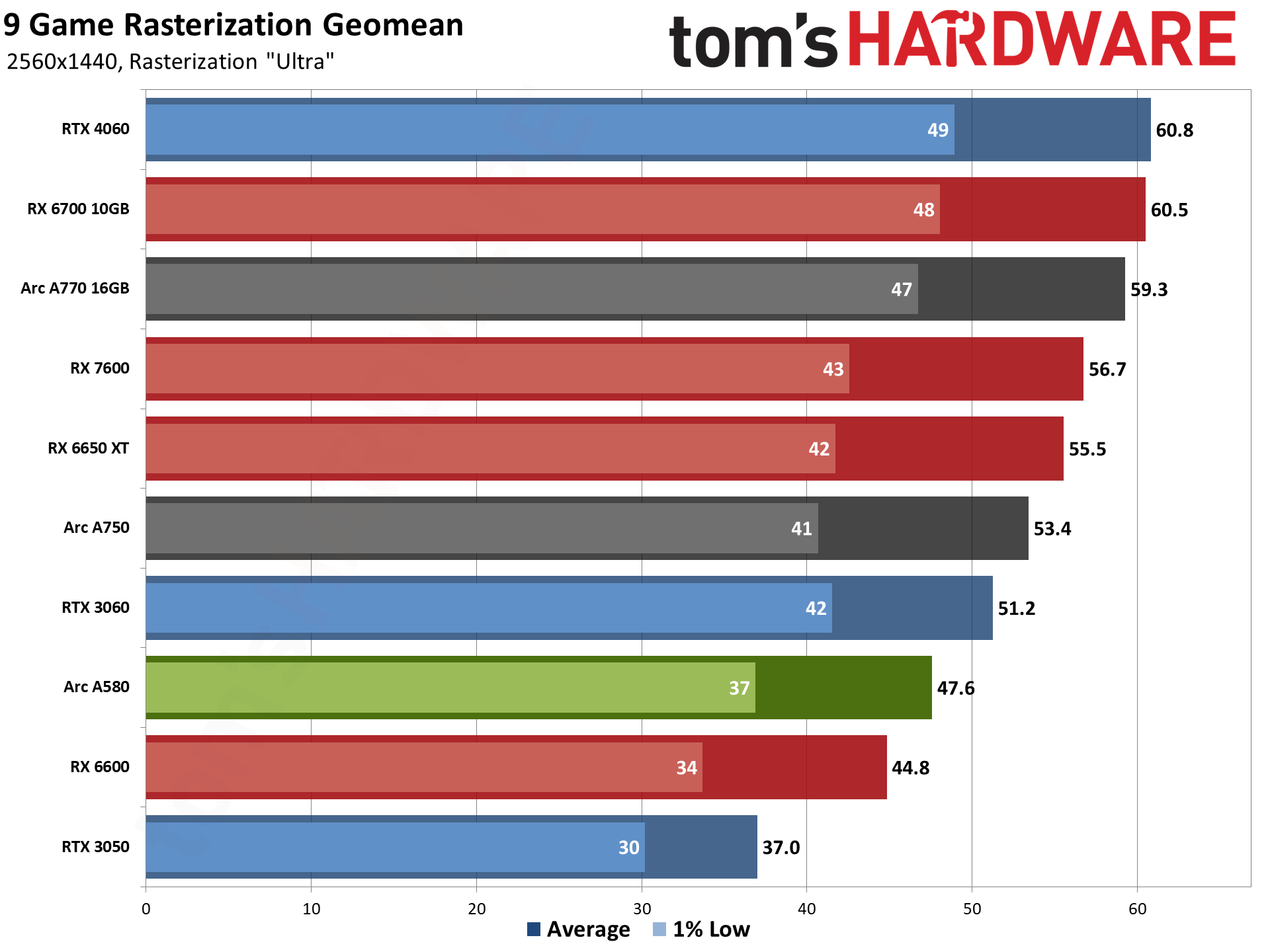

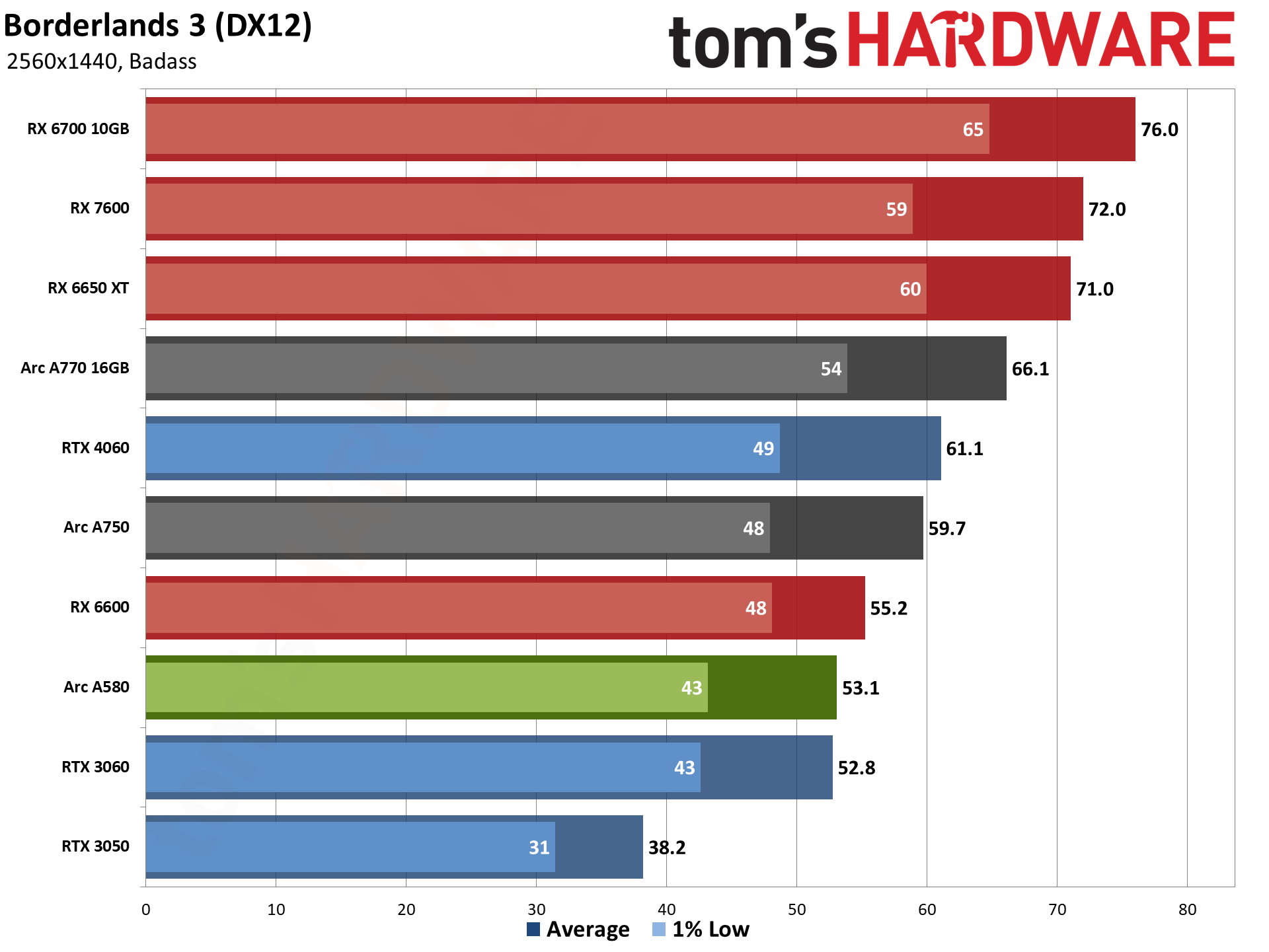

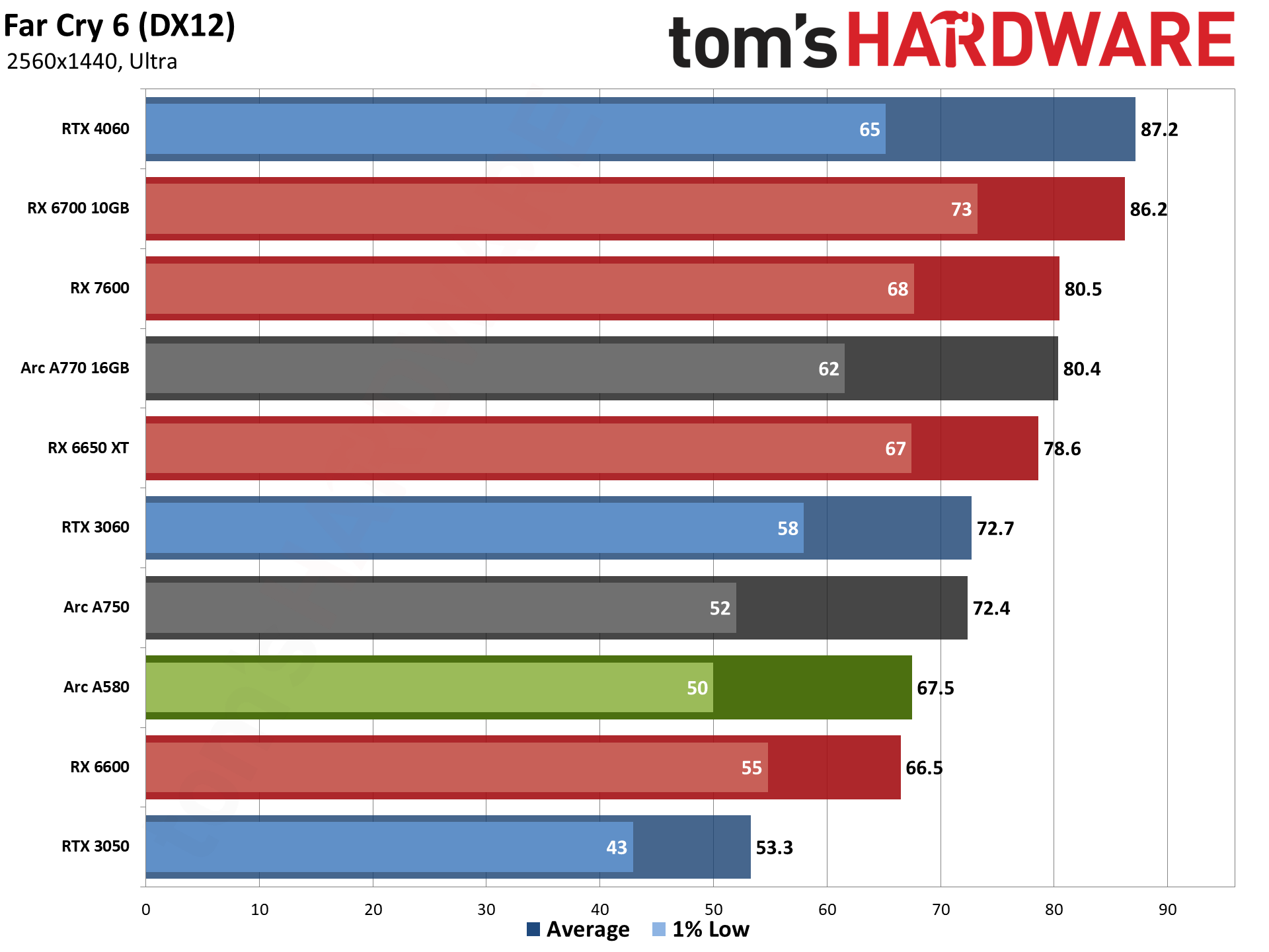

1440p ultra with rasterization remains mostly playable on the Arc A580, with performance again landing between the RX 6600 and RTX 3060. If we ignore the closely priced A750, it's easily the best value. But don't forget that its power requirements also tend to be higher than many of the other GPUs shown here.

Every game in our rasterization suite still breaks 30 fps, and Far Cry 6 and Horizon Zero Dawn even break the 60 fps mark. You could also boost performance by a moderate ~20% in most of the games by dropping to the high preset, which would give more breathing room for 1440p gaming.
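For a rough sense of what a ~20% uplift buys you, the arithmetic can be sketched in a few lines of Python. This assumes the gain applies uniformly, which is a simplification since the real uplift varies by game. The function names and the 45 fps baseline are hypothetical and not measured results.

```python
def fps_after_uplift(baseline_fps: float, uplift_pct: float = 20.0) -> float:
    """Estimate the frame rate after a percentage performance gain."""
    return baseline_fps * (1.0 + uplift_pct / 100.0)


def baseline_needed(target_fps: float, uplift_pct: float = 20.0) -> float:
    """Return the baseline fps required to reach target_fps after the gain."""
    return target_fps / (1.0 + uplift_pct / 100.0)


# Hypothetical baseline of 45 fps at ultra (an assumption, not a chart value).
print(f"{fps_after_uplift(45.0):.1f} fps after a 20% gain")
print(f"{baseline_needed(60.0):.1f} fps needed at ultra to reach 60 fps")
```

In other words, under that assumption a game would need to be running at roughly 50 fps at ultra before a 20% gain gets it to 60 fps.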

There's not too much to say about heavy ray tracing games at 1440p without upscaling. The Arc A580 generally comes up short in such situations. Control, Metro Exodus Enhanced, and Spider-Man: Miles Morales all get 31 fps average performance, but 1% lows are in the low to mid 20s. Of those, only Spider-Man has XeSS upscaling support, while the other two only have DLSS support.

If you want a GPU for 1440p gaming, with ray tracing enabled, you'll need at least an RTX 4060 to give it a real shot, and stepping up to an RTX 4070 or RX 7800 XT would be advisable.

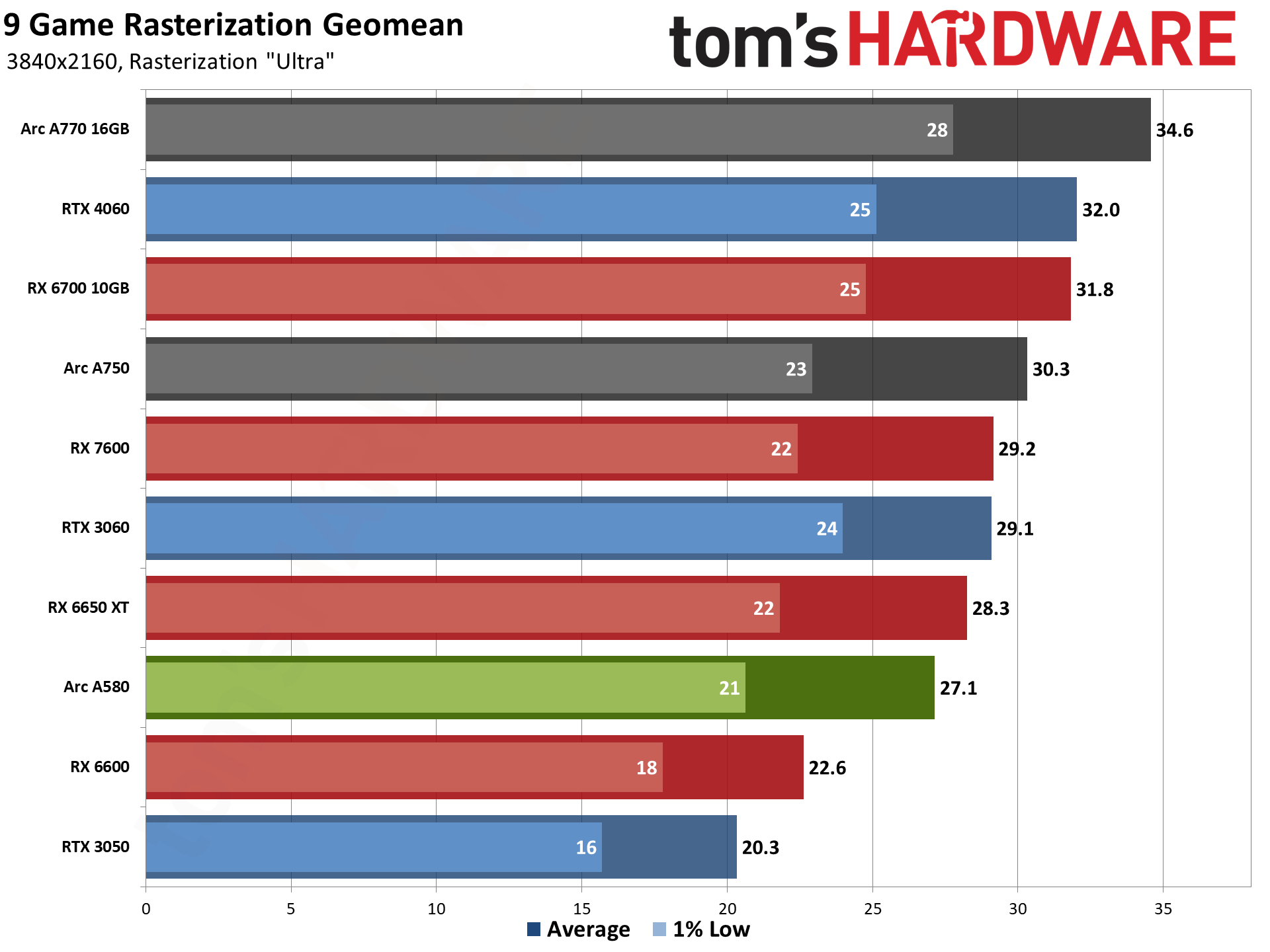

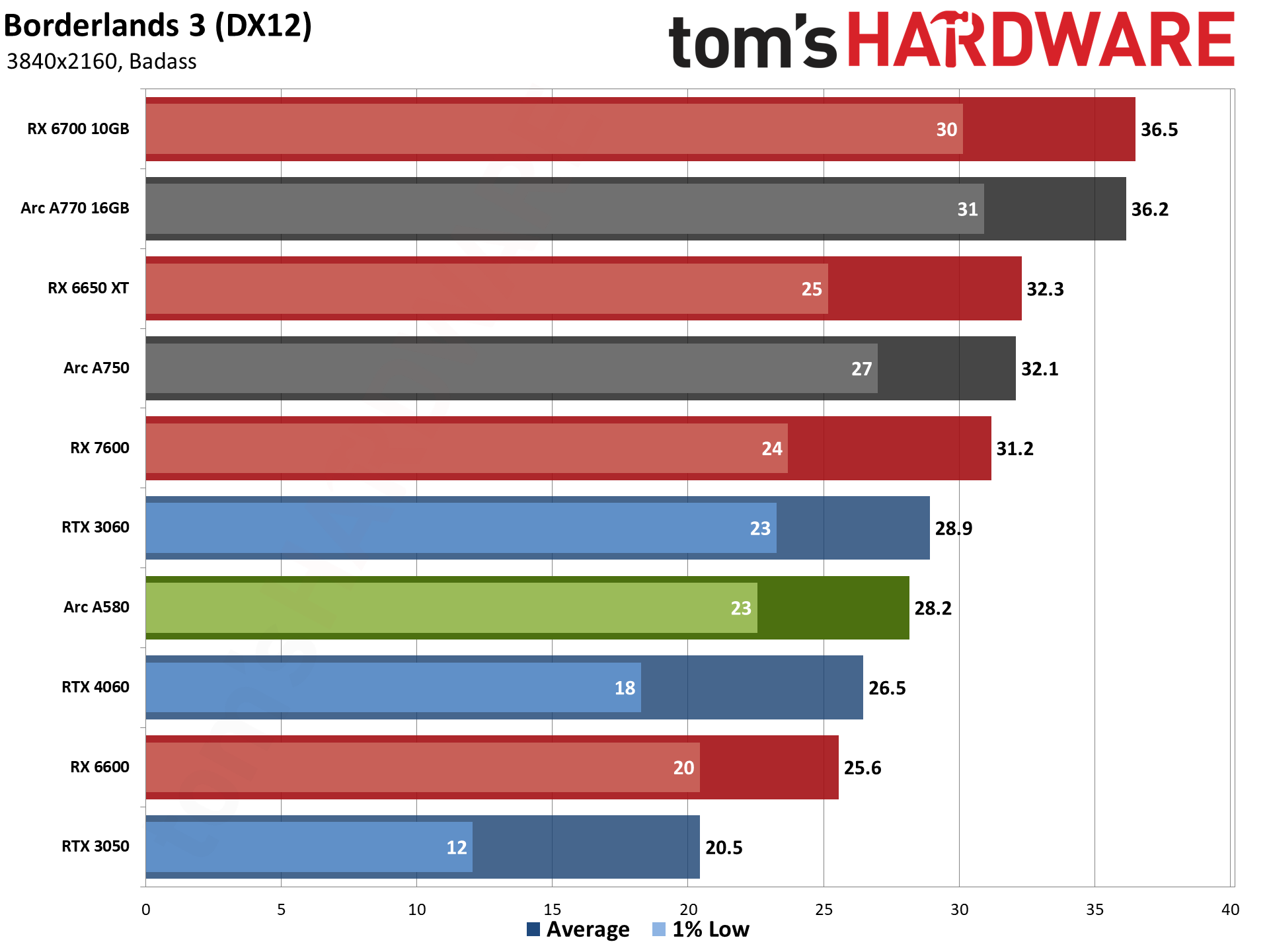

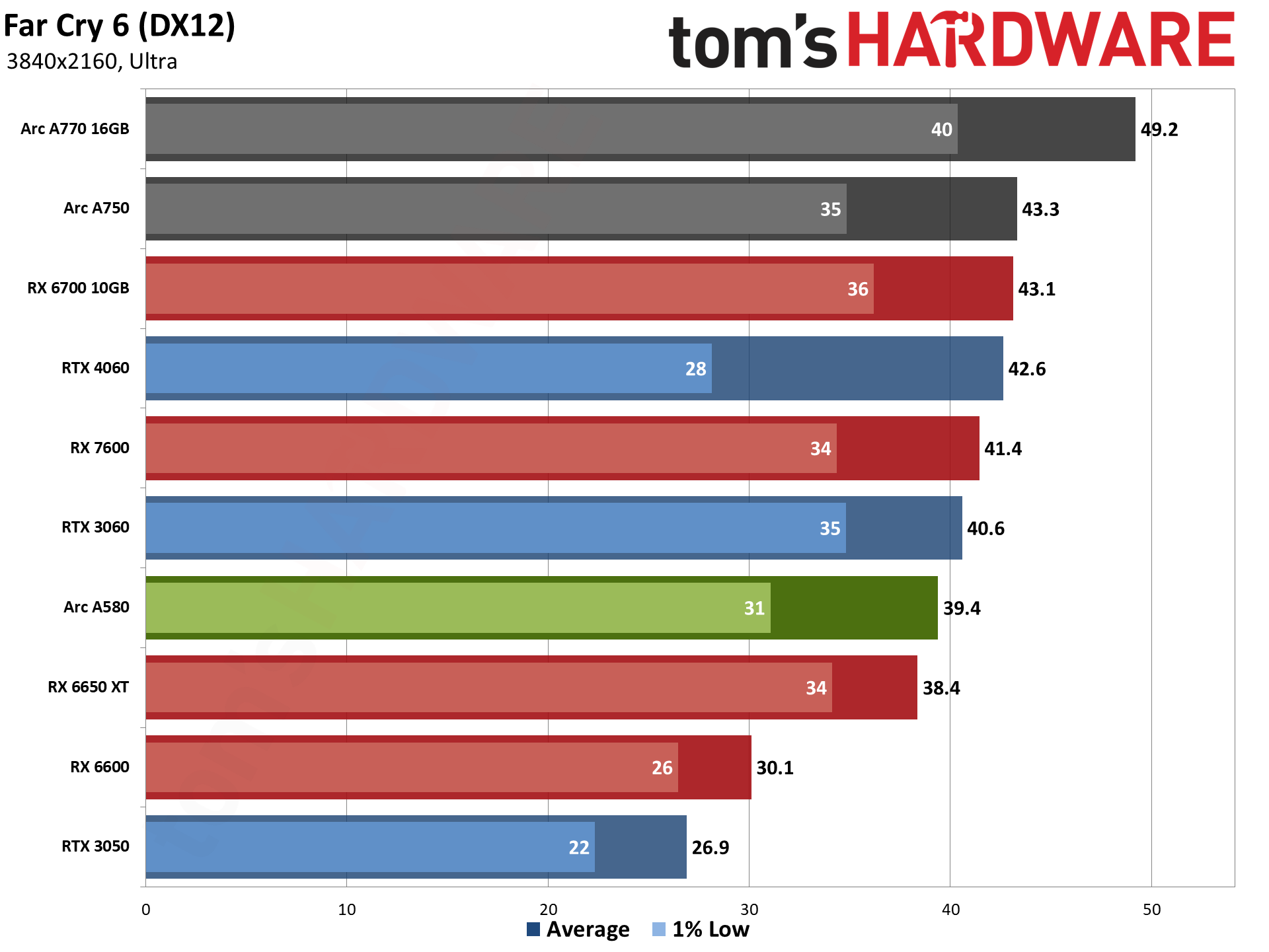

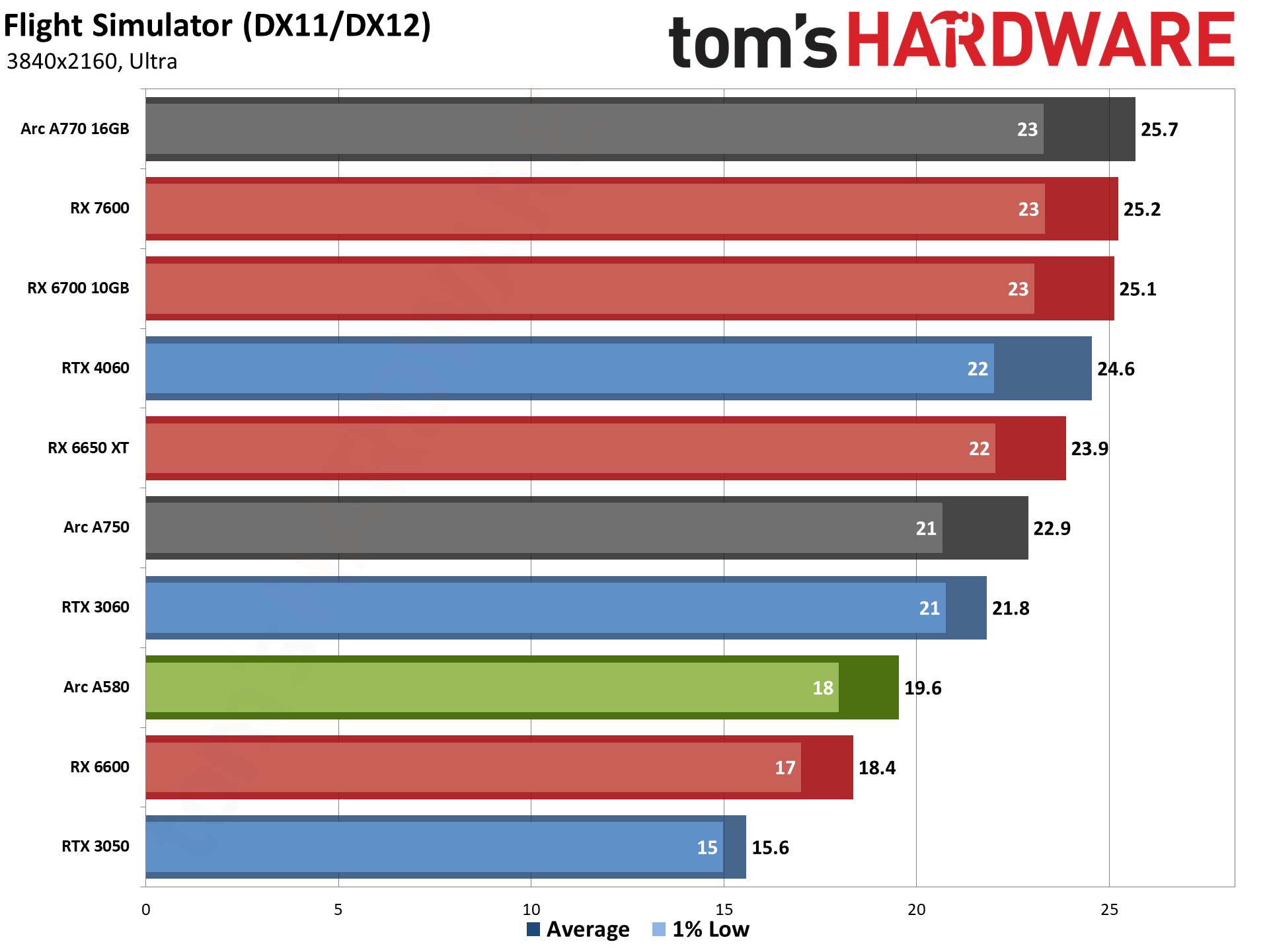

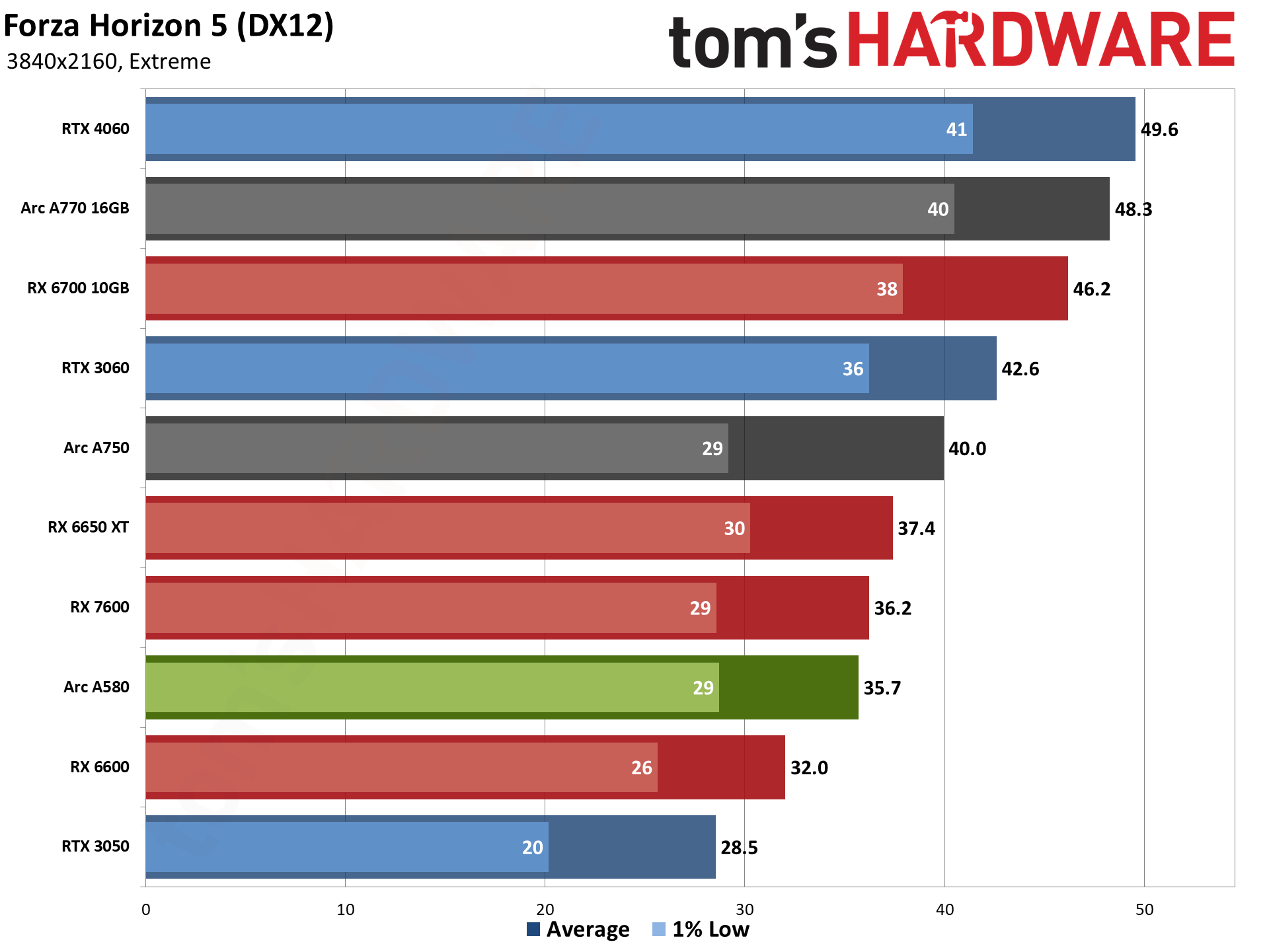

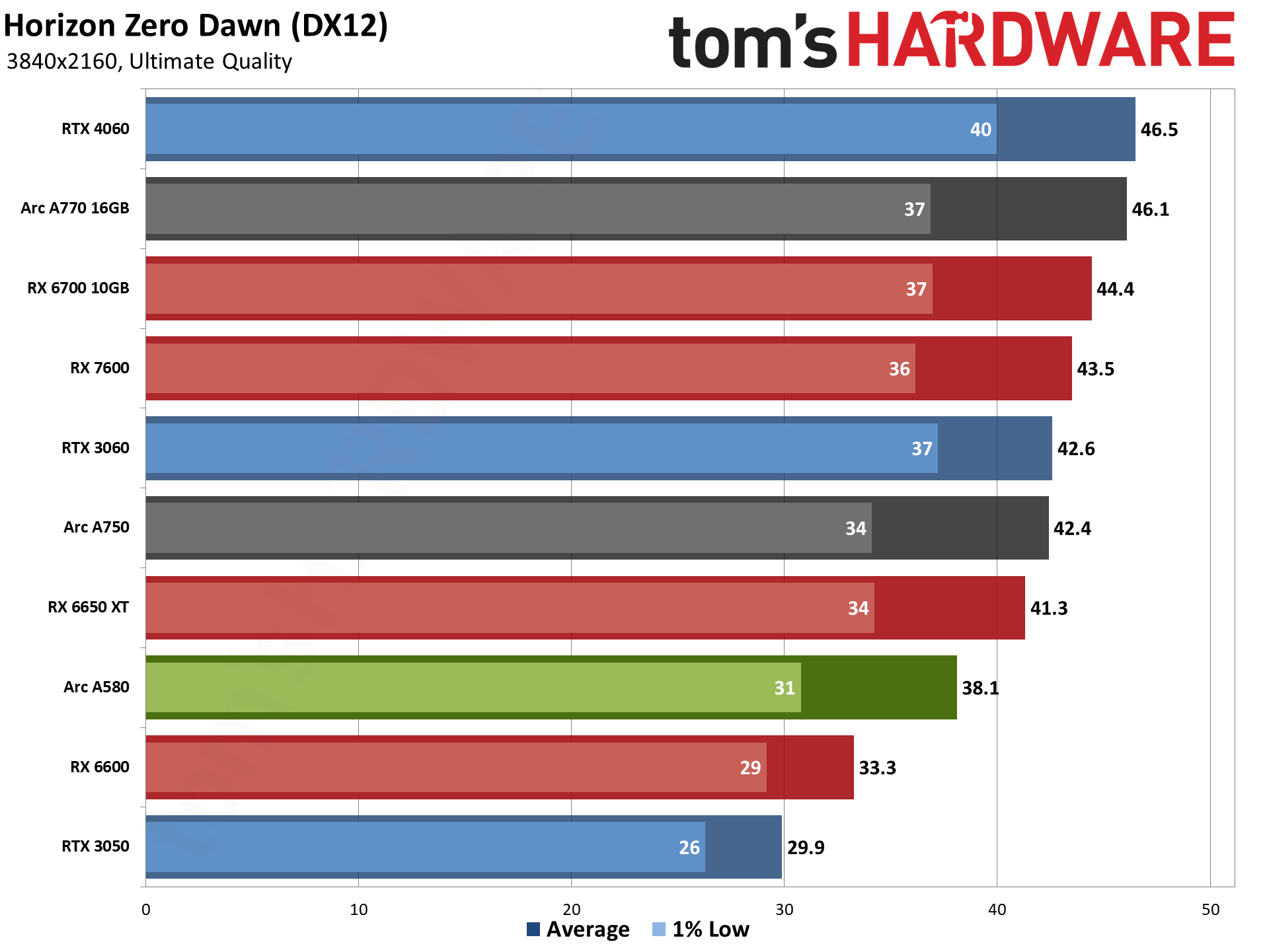

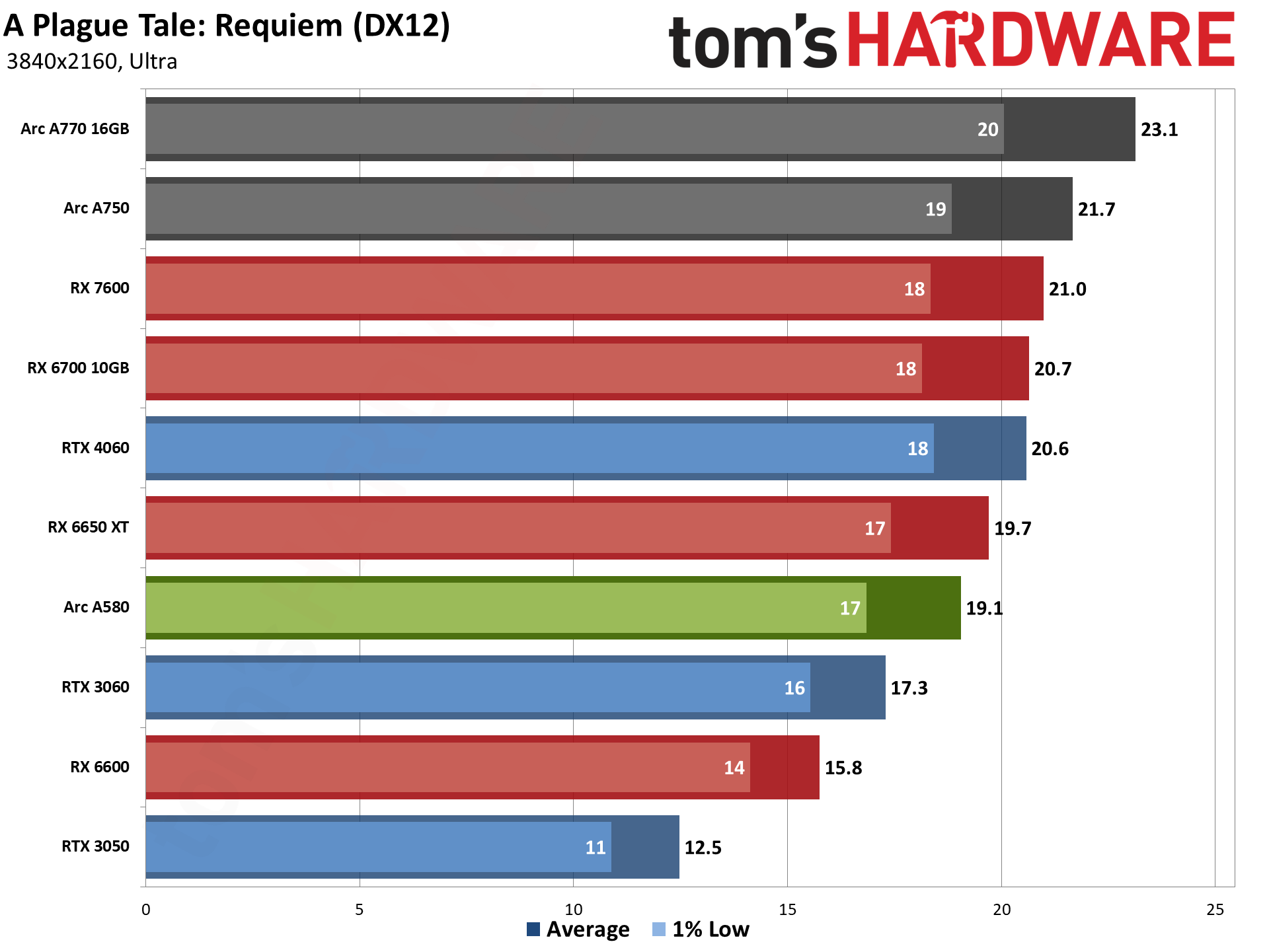

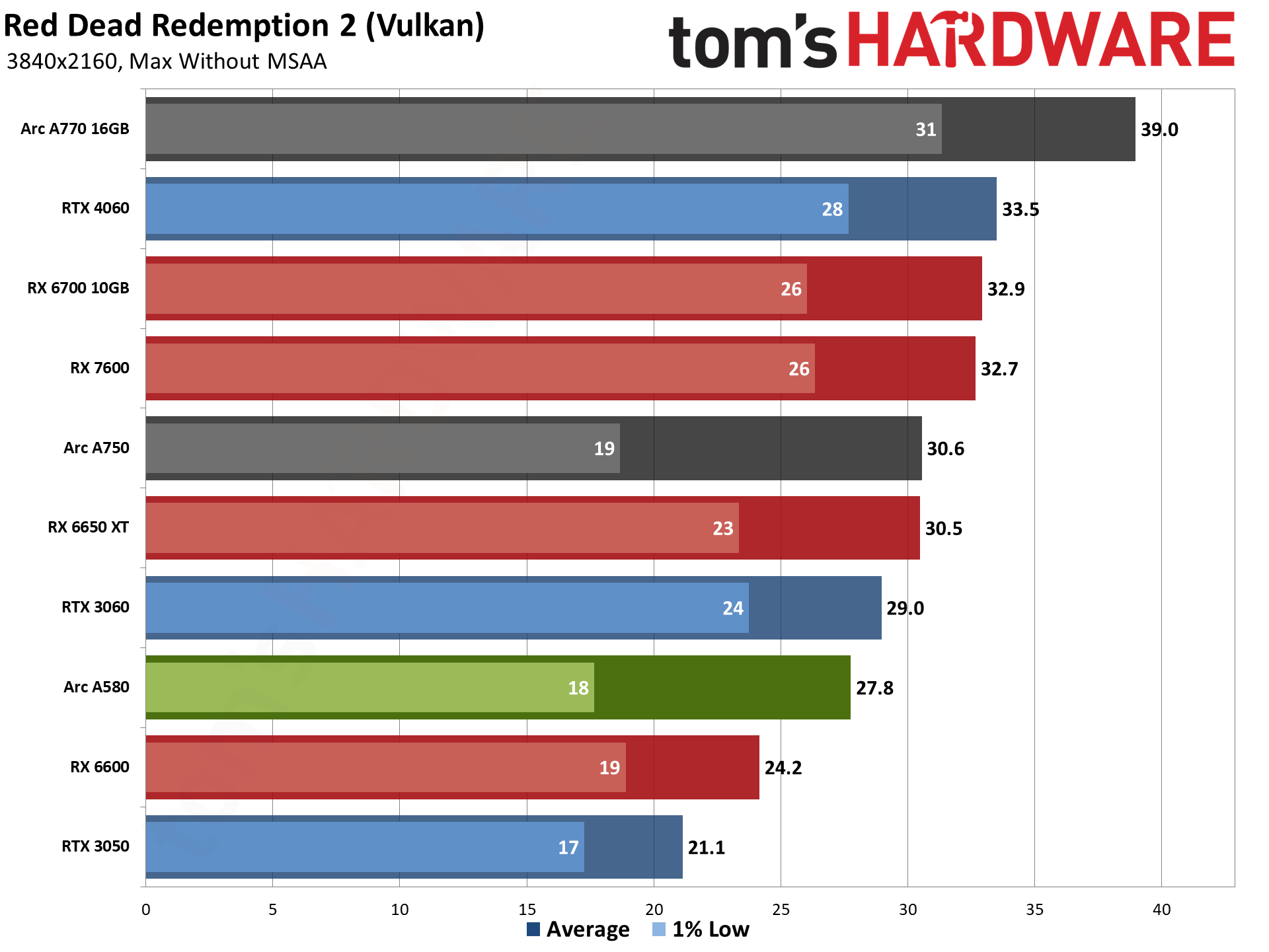

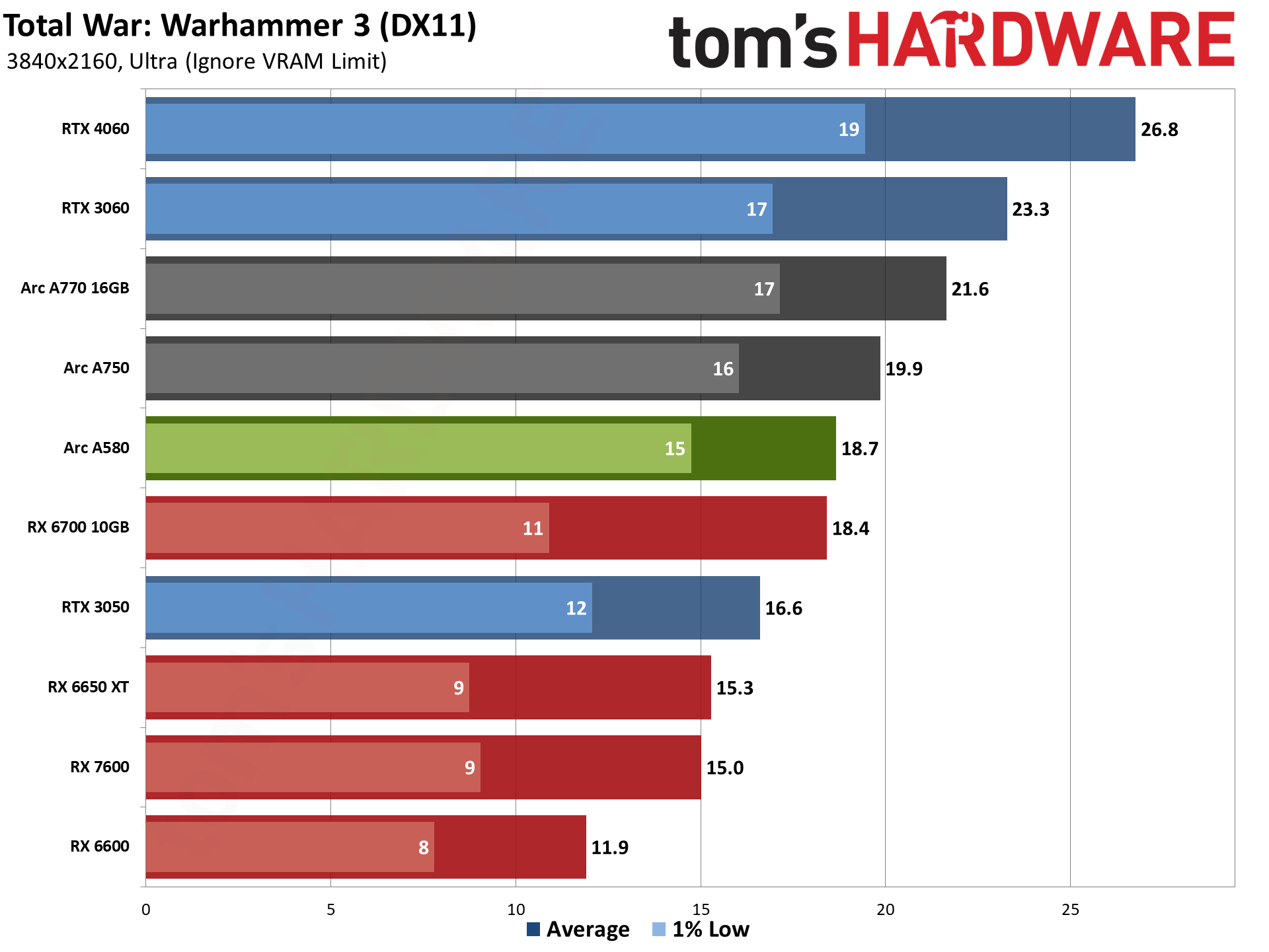

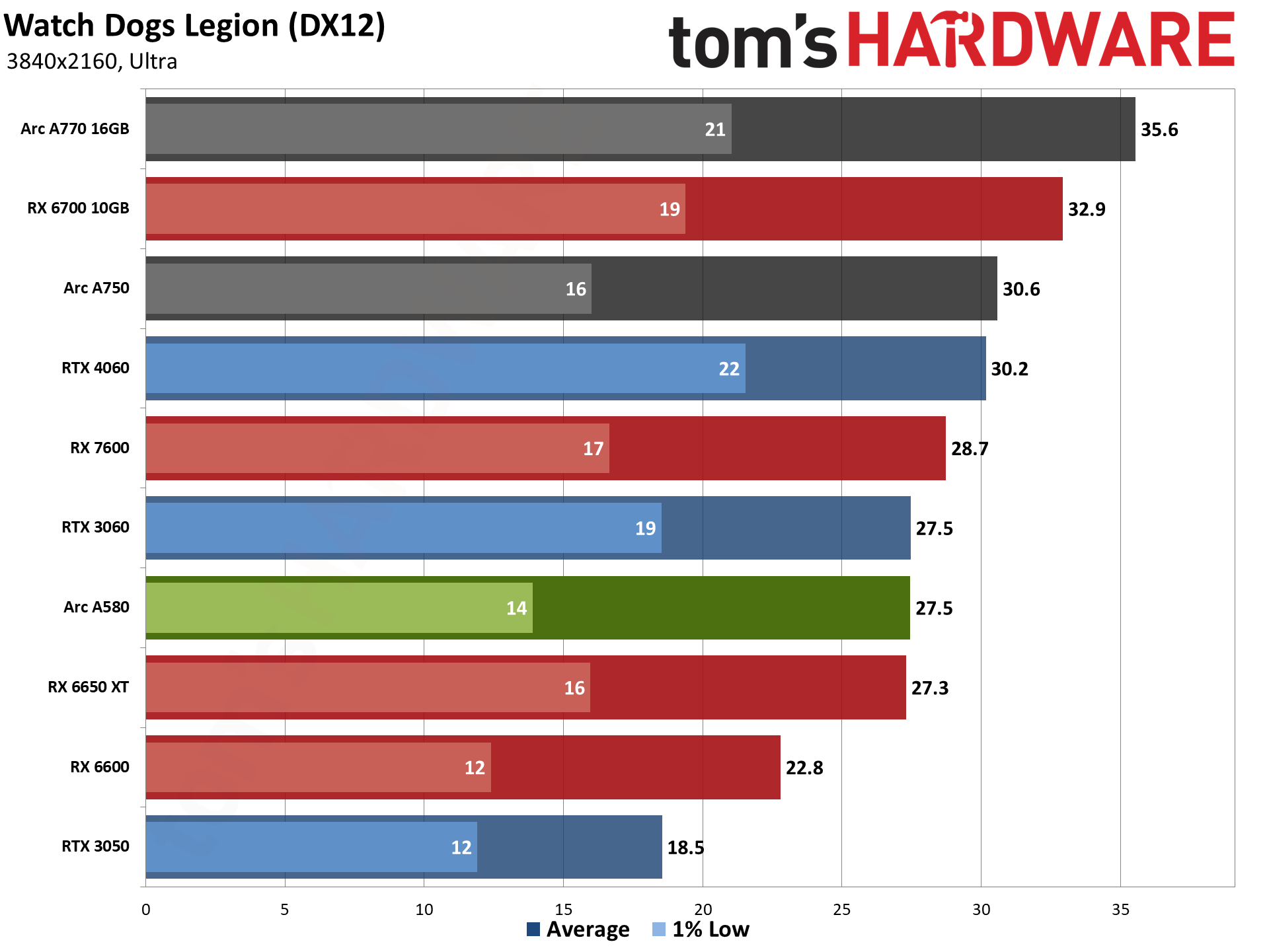

Here's a look at the 4K rasterization performance, which as you'd expect isn't much to write home about. The overall average is just 27 fps, and only three of the nine games we tested (Far Cry 6, Forza Horizon 5, and Horizon Zero Dawn) manage to break 30 fps. These charts are mostly to satisfy people's curiosity rather than a major talking point for the Arc A580.



Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


AgentBirdnest

Awesome review, as always!

Dang, was really hoping this would be more like $150-160. I bet the price will drop before long, though; I can't imagine many people choosing this over the A750 that is so closely priced. Still, it just feels good to see a card that can actually play modern AAA games for under $200.

JarredWaltonGPU

Yeah, the $180 MSRP just feels like wishful thinking right now rather than reality. I don't know what supply of Arc GPUs looks like from the manufacturing side, and I feel like Intel may already be losing money per chip. But losing a few dollars rather than losing $50 or whatever is probably a win. This would feel a ton better at $150 or even $160, and maybe add half a star to the review.

hotaru.hino

Intel does have some cash to burn and if they are selling these cards at a loss, it'd at least put weight that they're serious about staying in the discrete GPU business.

JarredWaltonGPU

That's the assumption I'm going off: Intel is willing to take a short-term / medium-term loss on GPUs in order to bootstrap its data center and overall ambitions. The consumer graphics market is just a side benefit that helps to defray the cost of driver development and all the other stuff that needs to happen.

But when you see the number of people who have left Intel Graphics in the past year, and the way Gelsinger keeps divesting of non-profitable businesses, I can't help but wonder how much longer he'll be willing to let the Arc experiment continue. I hope we can at least get to Celestial and Druid before any final decision is made, but that will probably depend on how Battlemage does.

Intel's GPU has a lot of room to improve, not just on drivers but on power and performance. Basically, look at Ada Lovelace and that's the bare minimum we need from Battlemage if it's really going to be competitive. We already have RDNA 3 as the less efficient, not quite as fast, etc. alternative to Nvidia, and AMD still has better drivers. Matching AMD isn't the end goal; Intel needs to take on Nvidia, at least up to the 4070 Ti level.

mwm2010

If the price of this goes down, then I would be very impressed. But because of the $180 price, it isn't quite at its full potential. You're probably better off with a 6600.

btmedic04

Arc just feels like one of the industry's greatest "what ifs" to me. Had these launched during the great GPU shortage of 2021, Intel would have sold as many as they could produce. Hopefully Intel sticks with it, as consumers desperately need a third vendor in the market.

cyrusfox

JarredWaltonGPU said: I can't help but wonder how much longer he'll be willing to let the Arc experiment continue. I hope we can at least get to Celestial and Druid before any final decision is made, but that will probably depend on how Battlemage does.

What other choice do they have? If they canned their dGPU efforts, they would still need staff to support the iGPU, or are they going to give up on that and license GPU tech? Also, what would they do with their data center GPU (the product that follows Ponte Vecchio)?

The only clear path forward is to continue, and I hope they do bet on themselves, take these licks (financial loss + negative driver feedback), and keep pushing forward. But you are right, Pat has killed a lot of items and spun off some great businesses from Intel. I hope Battlemage fixes a lot of the big issues and also hope we see 3rd and 4th gen Arc play out.

bit_user

Thanks @JarredWaltonGPU for another comprehensive GPU review!

I was rather surprised not to see you reference its relatively strong Raytracing, AI, and GPU Compute performance, in either the intro or the conclusion. For me, those are definitely highlights of Alchemist, just as much as AV1 support.

Looking at that gigantic table, on the first page, I can't help but wonder if you can ask the appropriate party for a "zoom" feature to be added for tables, similar to the way we can expand embedded images. It helps if I make my window too narrow for the sidebar - then, at least the table will grow to the full width of the window, but it's still not wide enough to avoid having the horizontal scroll bar.

Whatever you do, don't skimp on the detail! I love it!

JarredWaltonGPU

The evil CMS overlords won't let us have nice tables. That's basically the way things shake out. It hurts my heart every time I try to put in a bunch of GPUs, because I know I want to see all the specs, and I figure others do as well. Sigh.

As for RT and AI, it's decent for sure, though I guess I just got sidetracked looking at the A750. I can't help but wonder how things could have gone differently for Intel Arc, but then there are still lingering concerns with the drivers. (I didn't get into it as much here, but in testing a few extra games, I noticed some were definitely underperforming on Arc.)