GPUs

No tech has seen more innovation in the last decade than graphics cards, thanks to powerhouse rivals AMD and Nvidia advancing the state of the art with ray tracing, FSR and DLSS, 3D chip stacking, and more. What does it all mean? Tom's Hardware is the industry standard for GPU news, reviews, and insights.

The latest reviews: Need a new GPU? Start here. We benchmark, analyze, and rate the hottest new chips.

Ranked and Rated: The most comprehensive GPU ranking guide in the world -- only on Tom's Hardware.

News and Trends: The latest industry buzz, up-to-the-minute info on technology breakthroughs, and more.

Latest about GPUs

Walmart flooded with RTX 40-series GPUs as 50-series remains out of reach for most gamers

By Zhiye Liu published

Walmart stocks up on Nvidia's GeForce RTX 40-series (codenamed Ada Lovelace) graphics cards amid an AI-driven memory shortage.

Optiscaler team fixes INT8 FSR 4 ghosting on RX 6000 series GPUs

By Aaron Klotz published

Optiscaler has provided a new update that helps remove ghosting on the INT8 version of FSR 4 for RX 6000 series graphics cards.

GPU price tracking 2026: Lowest price on every graphics card from Nvidia, AMD, and Intel today

By Stewart Bendle last updated

Check the best prices on Nvidia RTX and AMD Radeon graphics cards.

Intel's new Precompiled Shader Distribution can improve game loading times by up to 3x on Arc GPUs

By Hassam Nasir published

Support doesn't extend to Alchemist for now.

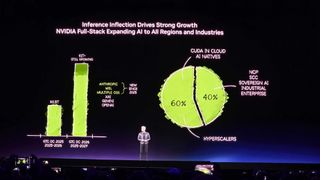

Nvidia updates data center roadmap with Rosa CPU and stacked Feynman GPUs

By Anton Shilov published

Nvidia publishes 2026 – 2028 data center roadmap with Rosa CPU, Feynman GPU, optical NVLinks and Groq LPUs with NVFP4 and NVLink.

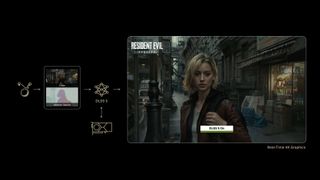

Jensen Huang says gamers are 'completely wrong' about DLSS 5

By Andrew E. Freedman published

Nvidia CEO Jensen Huang responded to backlash against DLSS 5, saying artistic control remained with developers and that the AI works with existing geometry.

We got a first look at Nvidia's DLSS 5 and the future of neural rendering at GTC

By Jeffrey Kampman published

New AI model can dramatically improve the appearance of games, but it's early days for the tech.

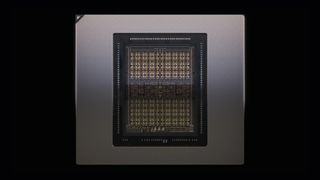

Nvidia demonstrates Rubin Ultra tray, the world's first AI GPU with 1TB of HBM4E memory

By Anton Shilov published

Nvidia shows off its next-generation Kyber rack-scale solution to be powered by Rubin Ultra GPUs with four compute chiplets and 1 TB of HBM4E memory per package.
