Intel Reveals Full Details for Its Arc A-Series Mobile Lineup

Desktop GPUs still coming at a later date

Today marks the day when Intel Arc Alchemist officially enters the dedicated graphics card fray, turning this two-horse race into a three-way battle for supremacy. Or at least, that's the story we'd like to tell. Instead, we're getting the first full description of Intel's Arc A-Series mobile GPU lineup, with the first laptops sporting the new GPUs slated to arrive in April. But desktop users need not worry: Arc graphics cards for desktops are still coming, just not first. If you're not up to speed on Arc, we'll recap some of the details, but our main Arc Alchemist hub contains more low-level details.

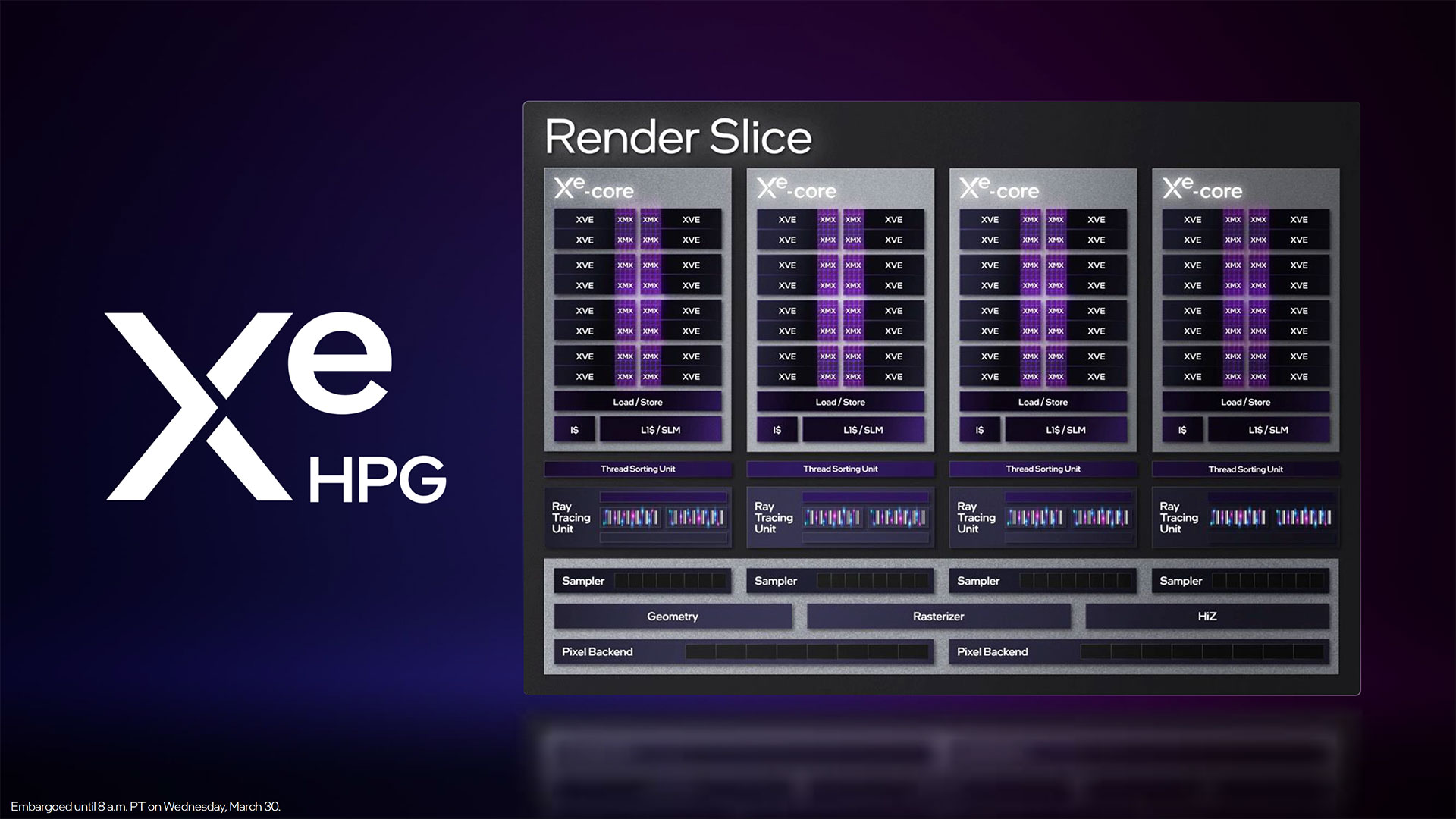

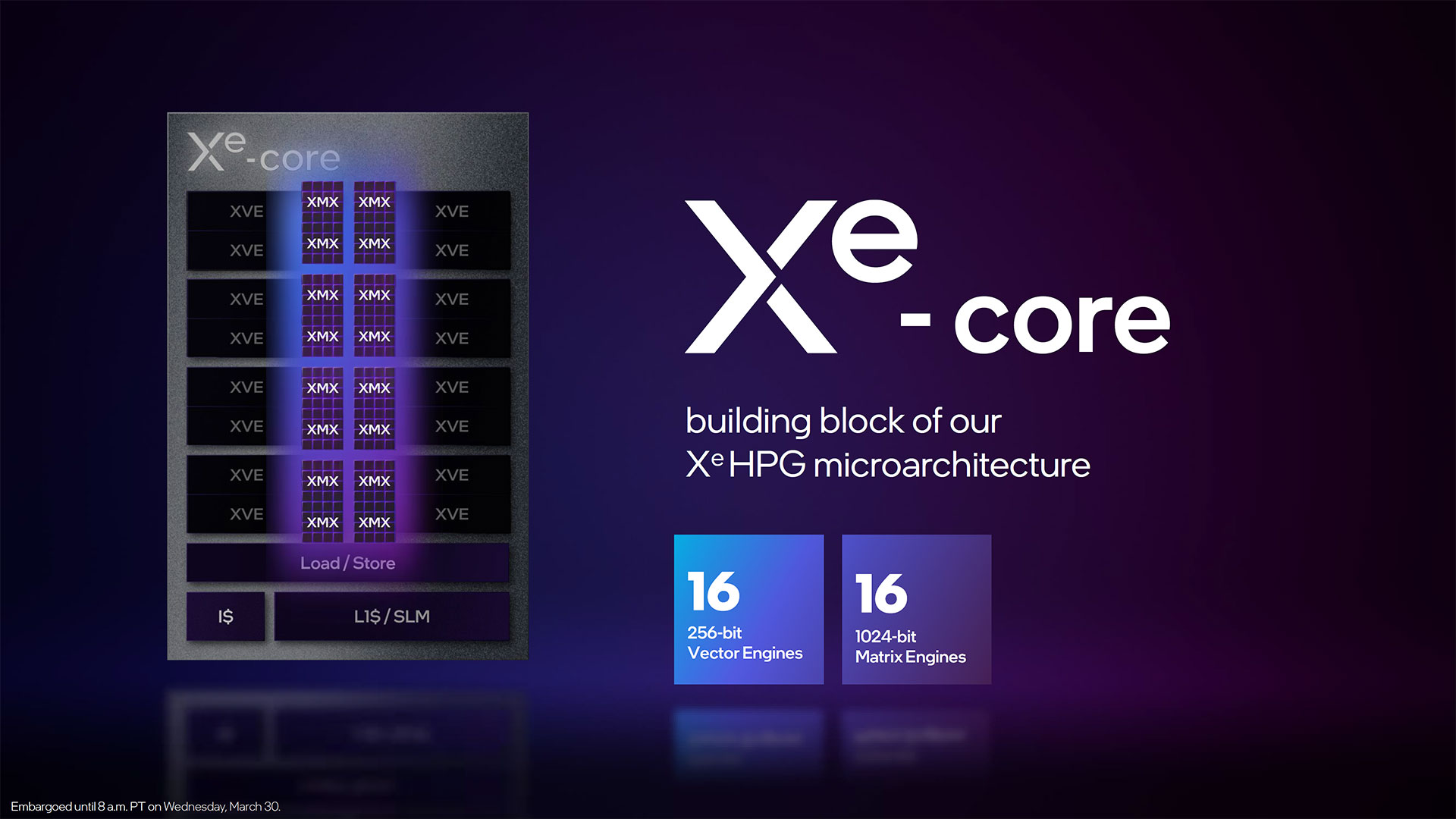

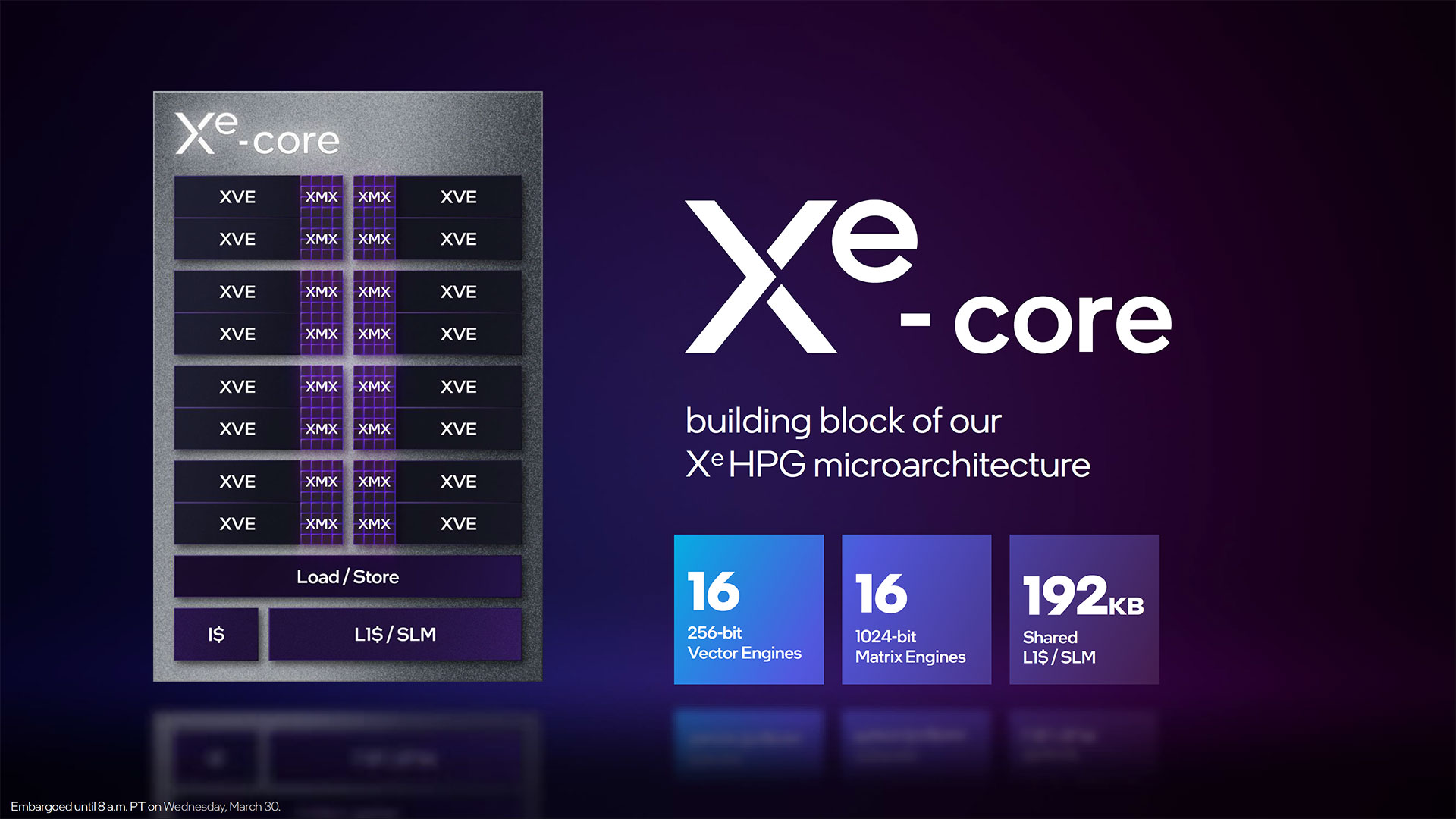

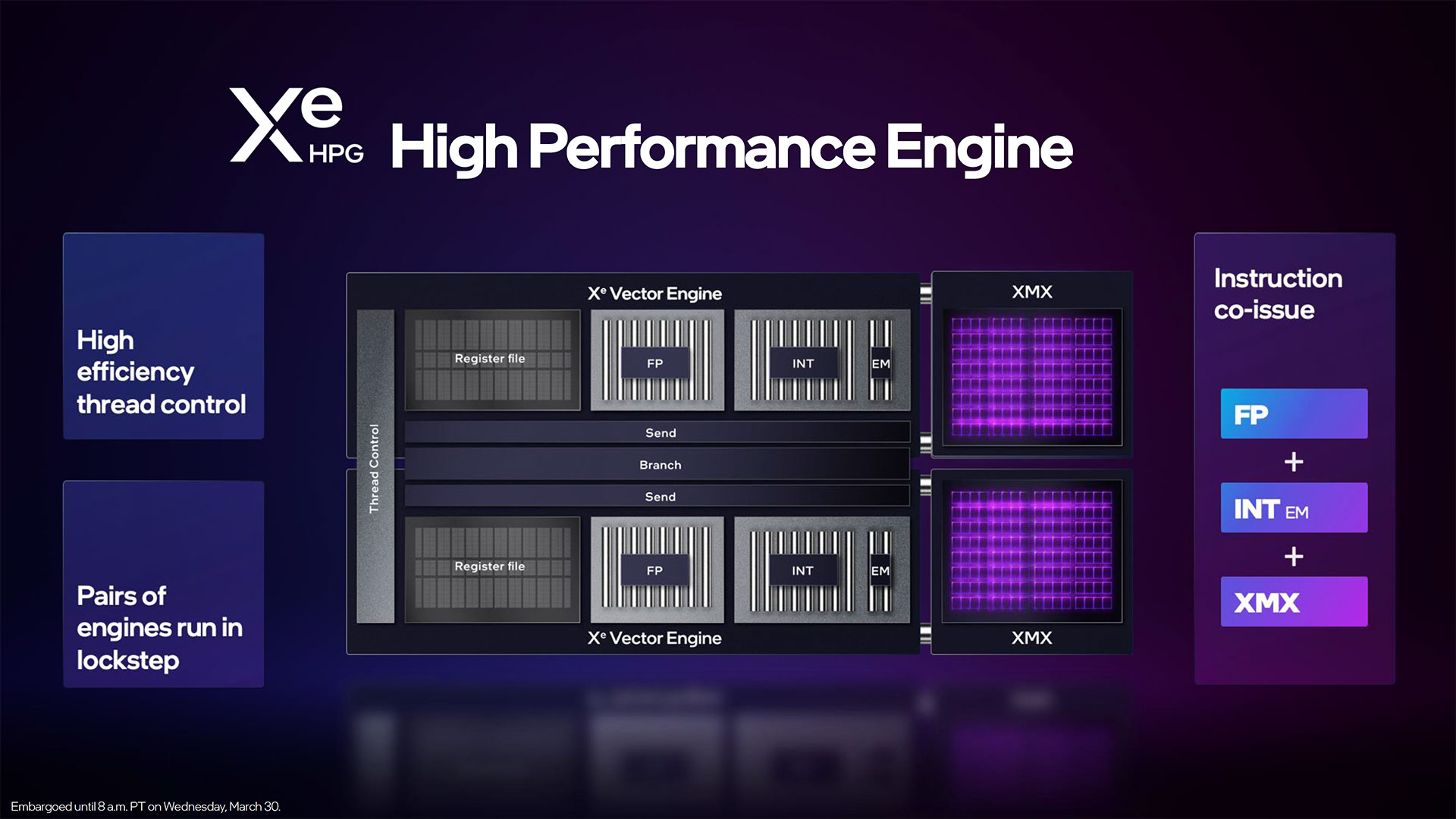

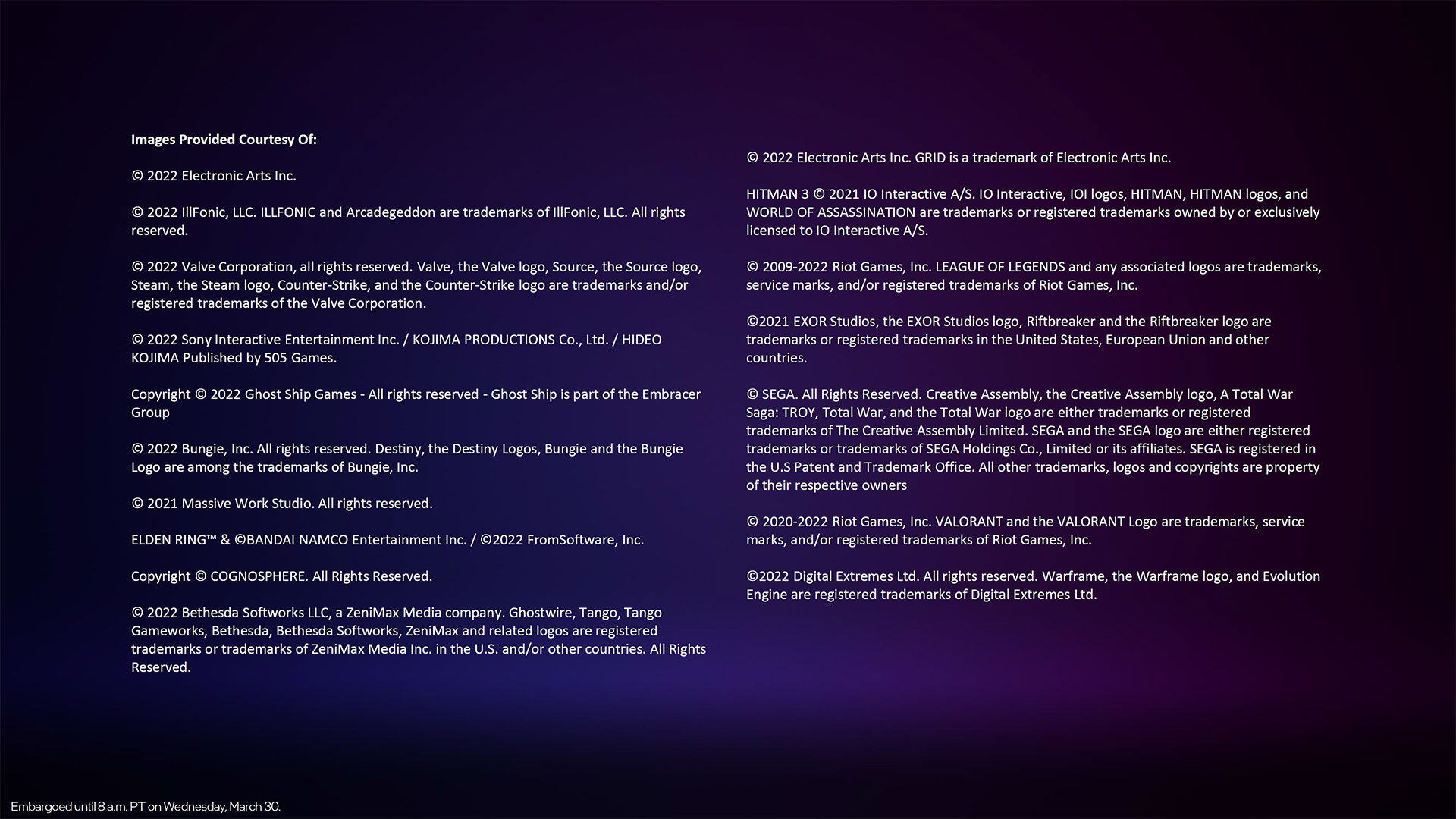

Similar to its CPU stack, Intel's Arc Alchemist will have multiple tiers of performance: Arc 3, Arc 5, and Arc 7. The various models will be based on two chip designs, one with up to 128 Xe Vector Engines (XVE) and the other with up to 512 XVE. Note that XVE is the new name for what was formerly called an EU (Execution Unit), though the XVE changes enough from the old design that the new name is warranted.

Two Physical Arc Chips

The smaller chip is called ACM-G11, and it will launch first, with laptops using the new Arc 3 branded GPUs expected to arrive shortly. ACM-G10 is a significantly larger chip, and Intel expects laptops using the Arc 5 and Arc 7 GPUs built on it to arrive by early summer.

In most respects, ACM-G10 has four times the hardware of ACM-G11: 4X the Xe-cores, ray tracing units, and L2 cache. The memory subsystem, however, is only 2.67X as wide, with a maximum 256-bit bus compared to a maximum 96-bit bus on the smaller chip, and the PCIe interface is twice as wide at x16 vs. x8. The media and display capabilities, meanwhile, are equivalent between the two GPUs, so all Arc graphics solutions will have the same dual Xe Media Engines (MFX) and four display engines.

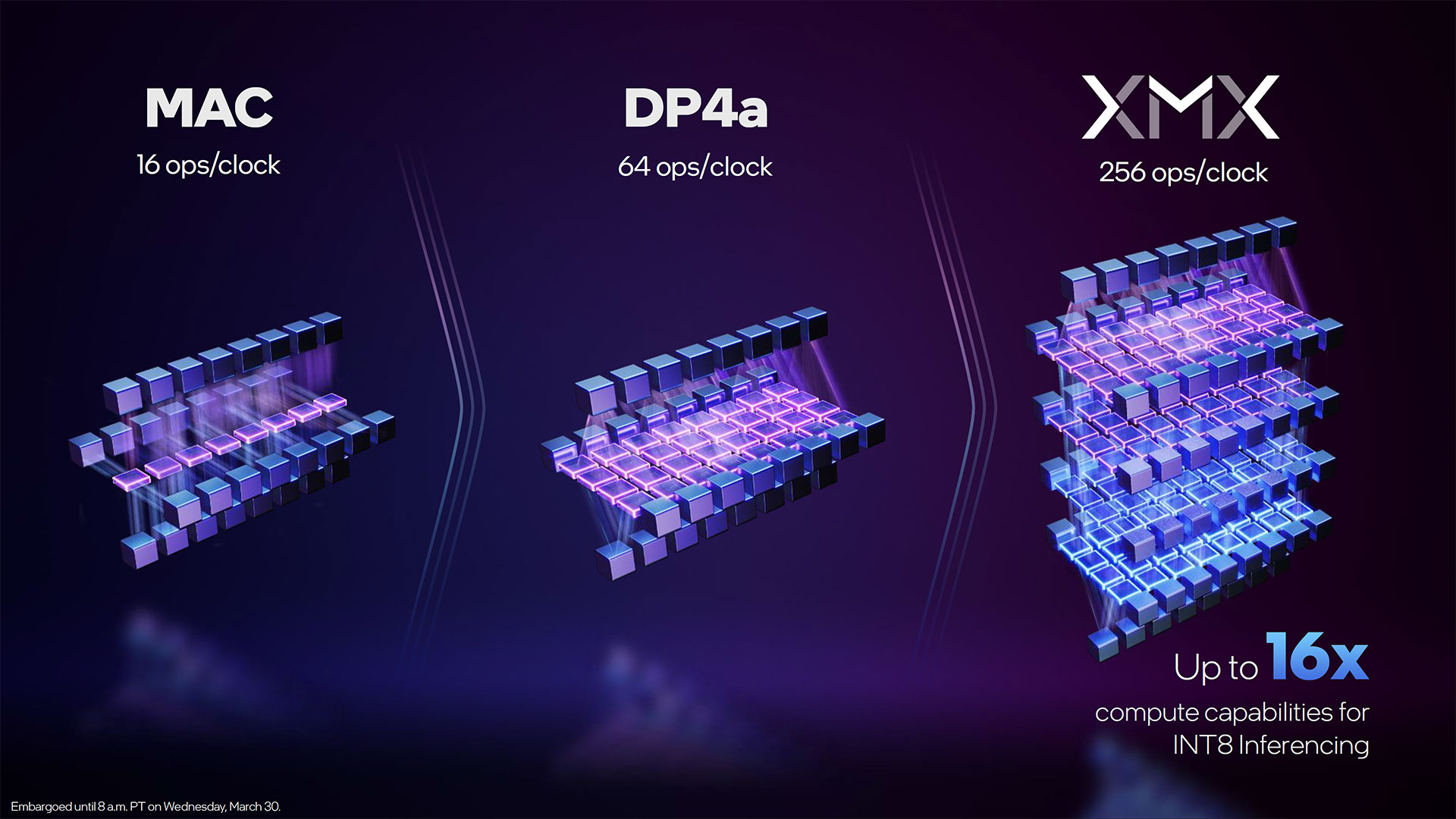

To quickly recap, each Xe-core contains 16 XVEs, and another 16 XMX units. The XVE vector engines can do 16 FP32, 32 FP16, and 64 INT8 operations per clock. The XMX matrix engines meanwhile can do 128 FP16/BF16, 256 INT8, or 512 INT4/INT2 operations per clock.

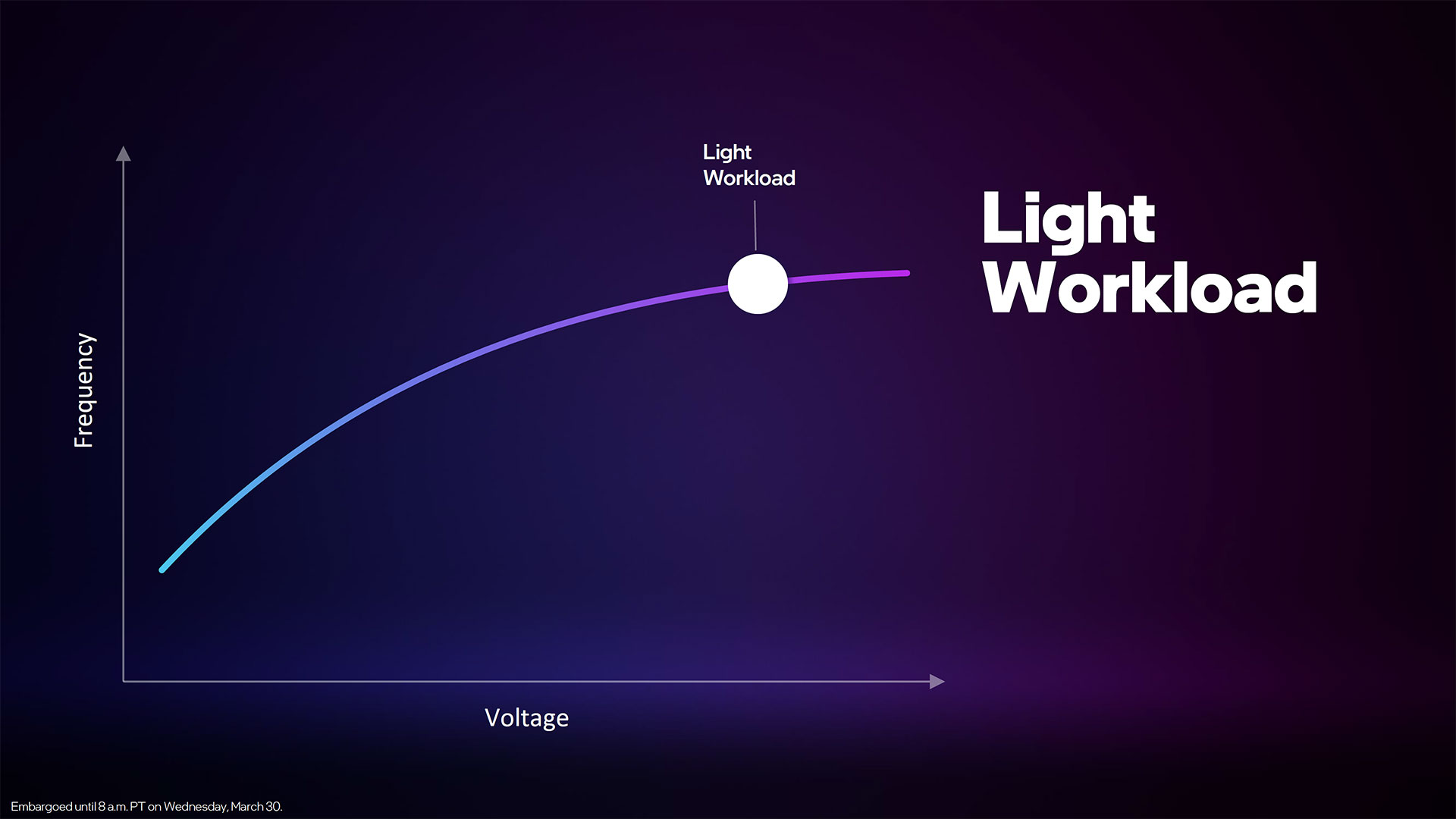

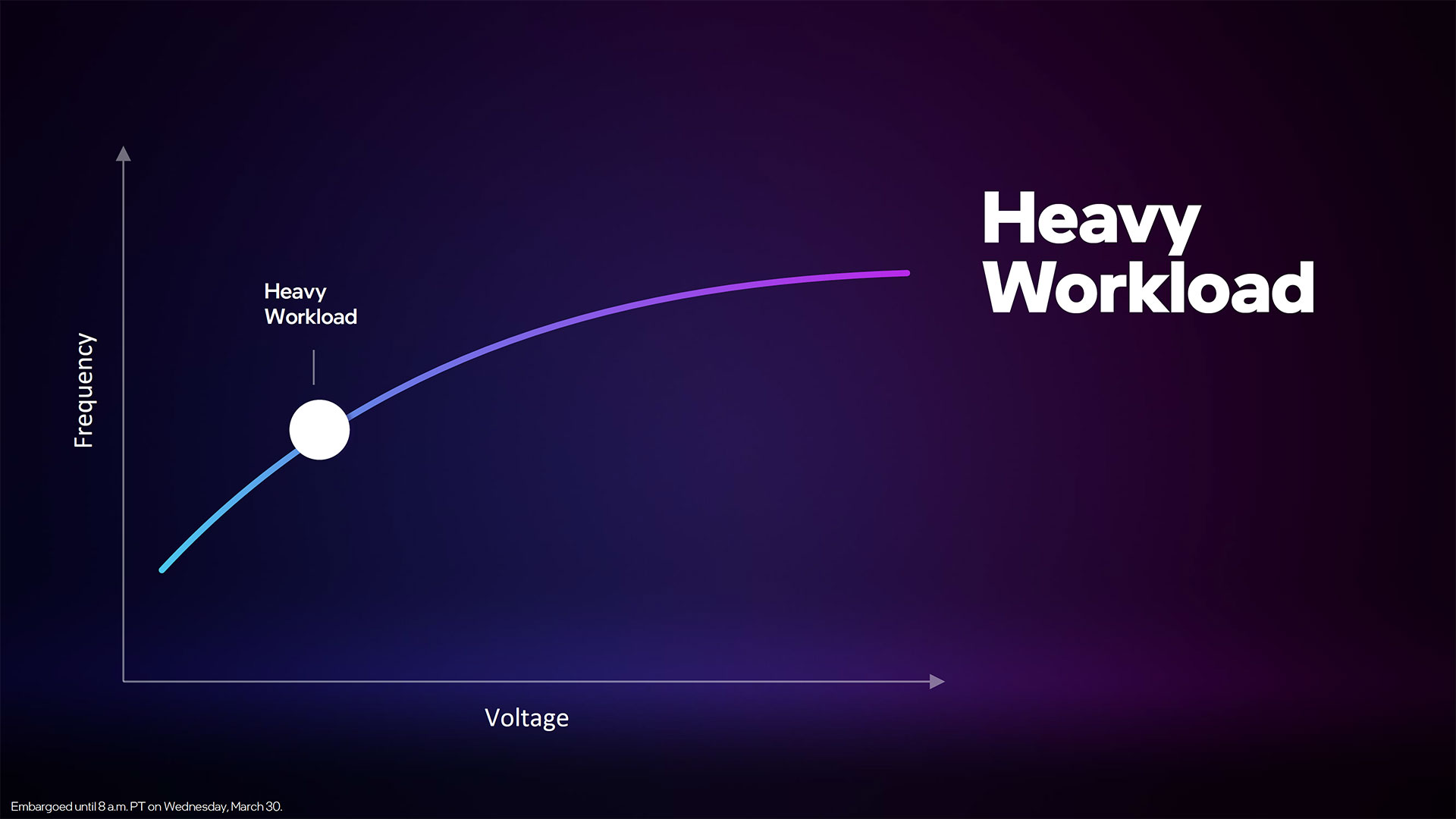

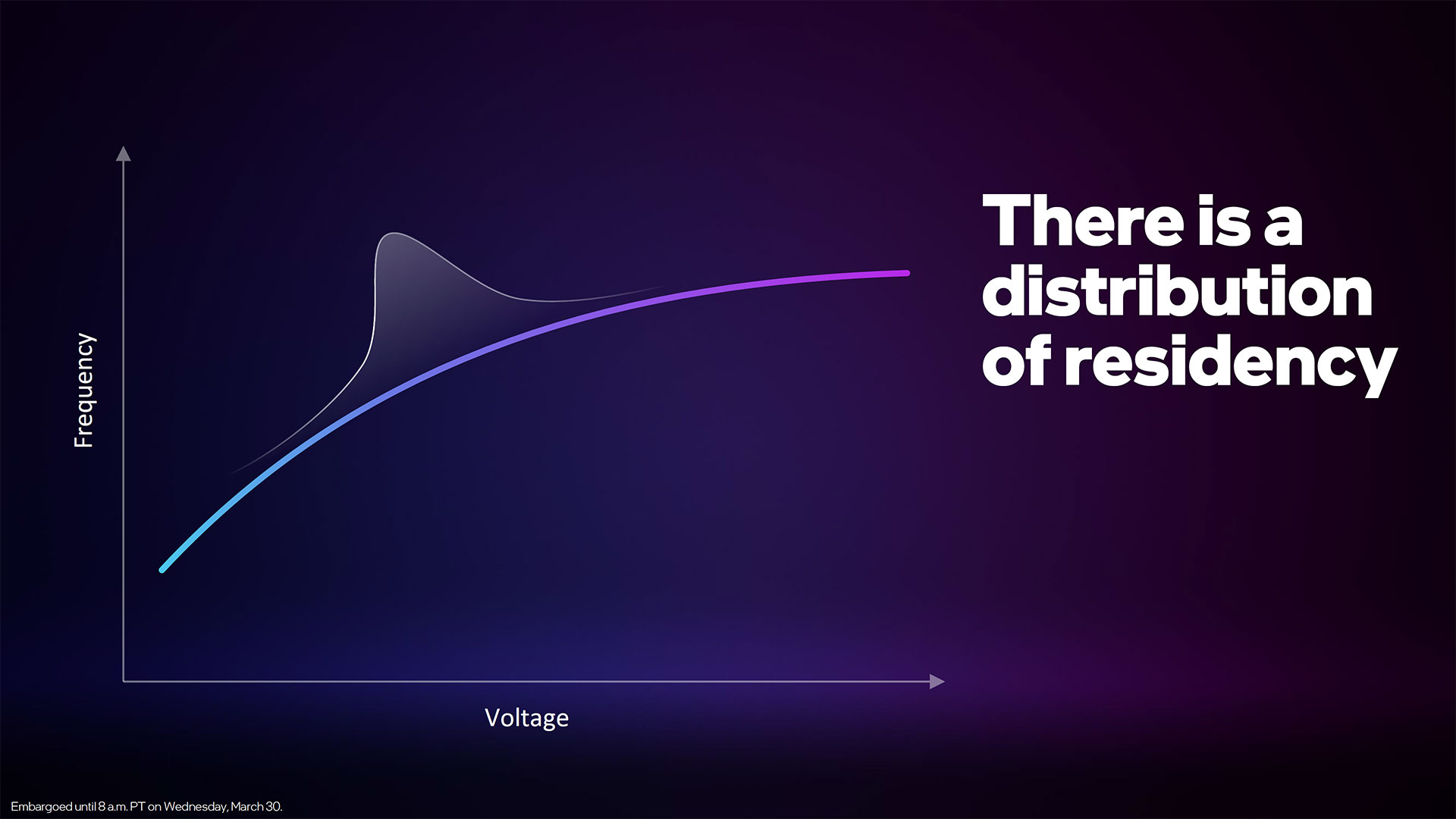

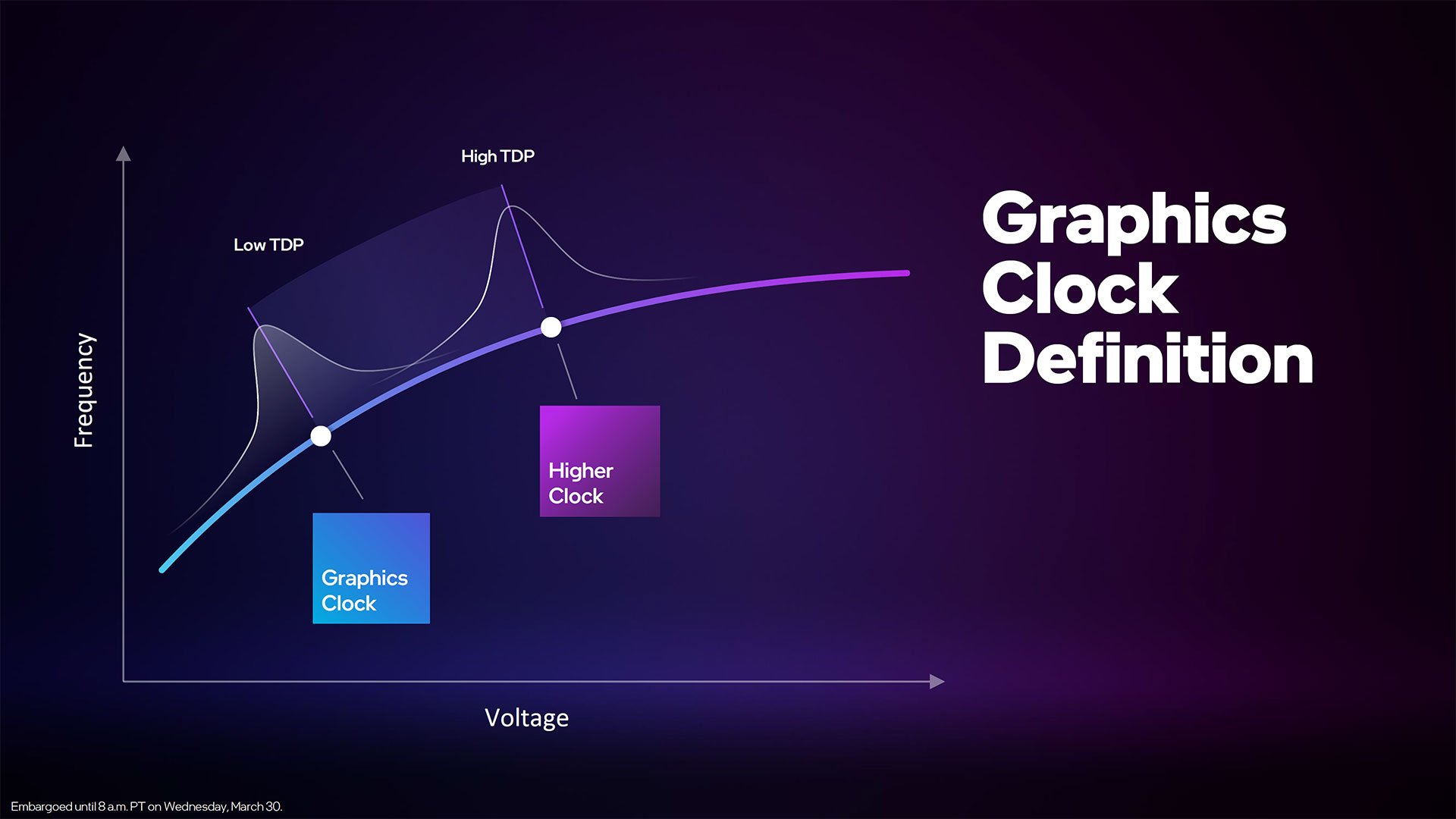

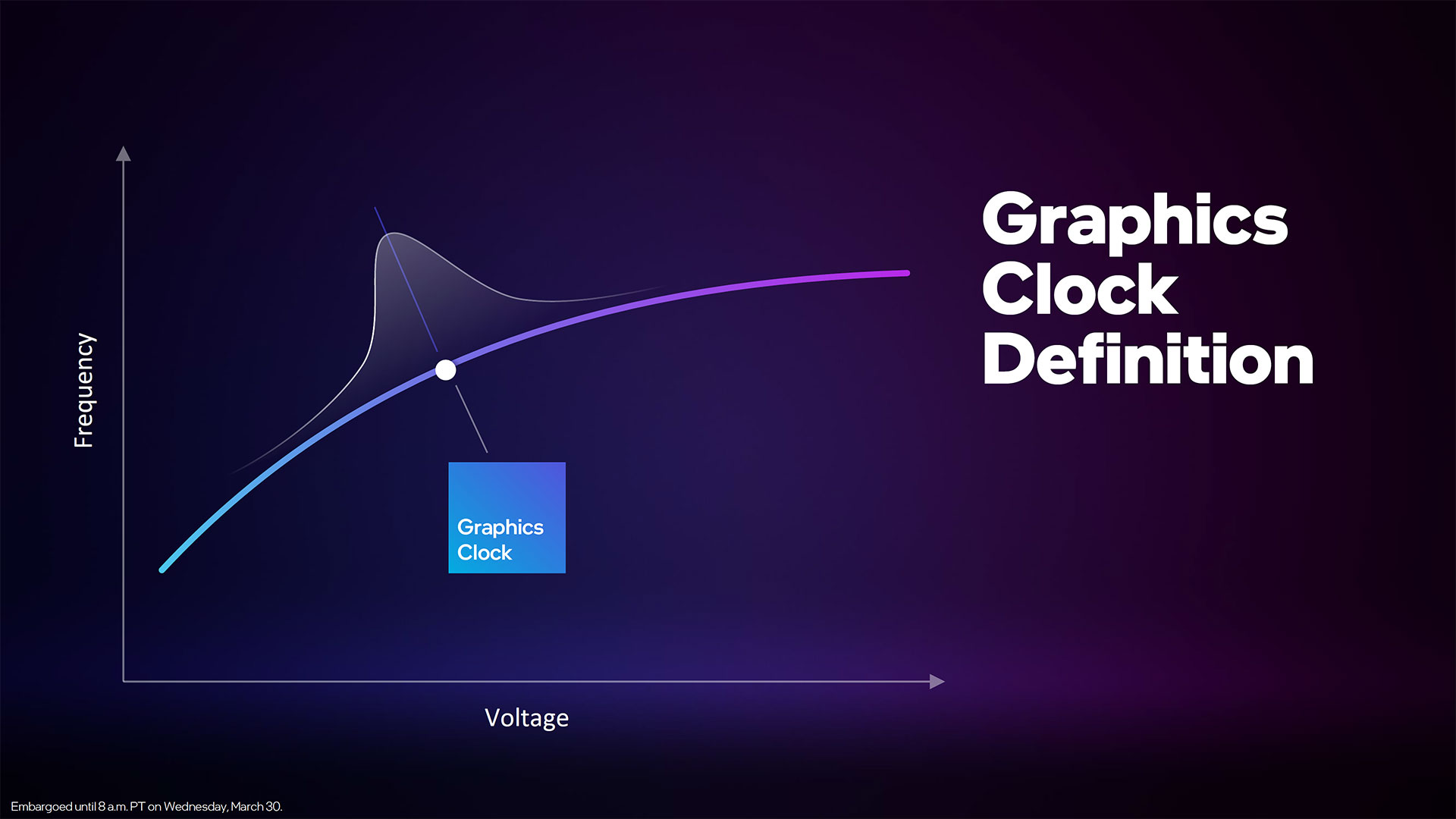

We'll get to clock speeds in a bit, but these can vary quite a lot, particularly in mobile form factors. We suspect Intel will break 2GHz on desktop cards, but the mobile parts appear to top out at around 1.55GHz on the smaller chip and 1.65GHz on the larger chip. Do the math and the smaller ACM-G11 should have a peak throughput of over 3 TFLOPS FP32, with 25 TFLOPS of FP16 deep learning capability. The larger ACM-G10 will more than quadruple those figures, hitting peak throughput of 13.5 TFLOPS FP32 and 108 TFLOPS FP16.
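For readers who want to check the math, here is a minimal Python sketch of the peak-throughput arithmetic. It uses only the per-clock rates and approximate mobile clocks quoted above; the function and variable names are our own, and the XMX counts assume one XMX unit per XVE, as in the Xe-core description.

```python
# Per-clock rates quoted in the article for a single engine.
FP32_OPS_PER_XVE = 16    # vector engine, FP32
FP16_OPS_PER_XMX = 128   # matrix engine, FP16/BF16


def peak_tflops(engines: int, ops_per_clock: int, clock_ghz: float) -> float:
    """Peak throughput in TFLOPS: engines x ops per clock x clock in GHz / 1000."""
    return engines * ops_per_clock * clock_ghz / 1000.0


# (name, XVE count, XMX count, approximate top mobile clock in GHz)
# Assumption: each chip has as many XMX units as XVEs (16 of each per Xe-core).
CHIPS = [
    ("ACM-G11", 128, 128, 1.55),
    ("ACM-G10", 512, 512, 1.65),
]

for name, xve, xmx, ghz in CHIPS:
    fp32 = peak_tflops(xve, FP32_OPS_PER_XVE, ghz)
    fp16 = peak_tflops(xmx, FP16_OPS_PER_XMX, ghz)
    print(f"{name}: {fp32:.1f} TFLOPS FP32, {fp16:.1f} TFLOPS FP16 (XMX)")
```

With those inputs the smaller chip works out to roughly 3.2 TFLOPS FP32 and 25.4 TFLOPS FP16, and the larger chip to roughly 13.5 and 108.1. These are theoretical peaks at the quoted clocks, not measured performance.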

Besides the vector and matrix engines, each Xe-core also contains a single ray tracing unit (RTU). Intel still hasn't provided any details of what sort of ray tracing performance we can expect from Arc, but even the largest chip will top out at 32 RTUs. In contrast, AMD's RX 6900 XT has 80 ray accelerators and Nvidia's GeForce RTX 3090 Ti comes with 84 RT cores that are each about 1.75X as fast as its first-generation RTX 20-series RT cores.

We know from our GPU benchmarks that Nvidia's current RT cores are much faster than AMD's ray accelerators, so unless Intel's RTUs are substantially more capable than the AMD and Nvidia equivalents, we don't expect a lot from Arc in terms of ray tracing performance.

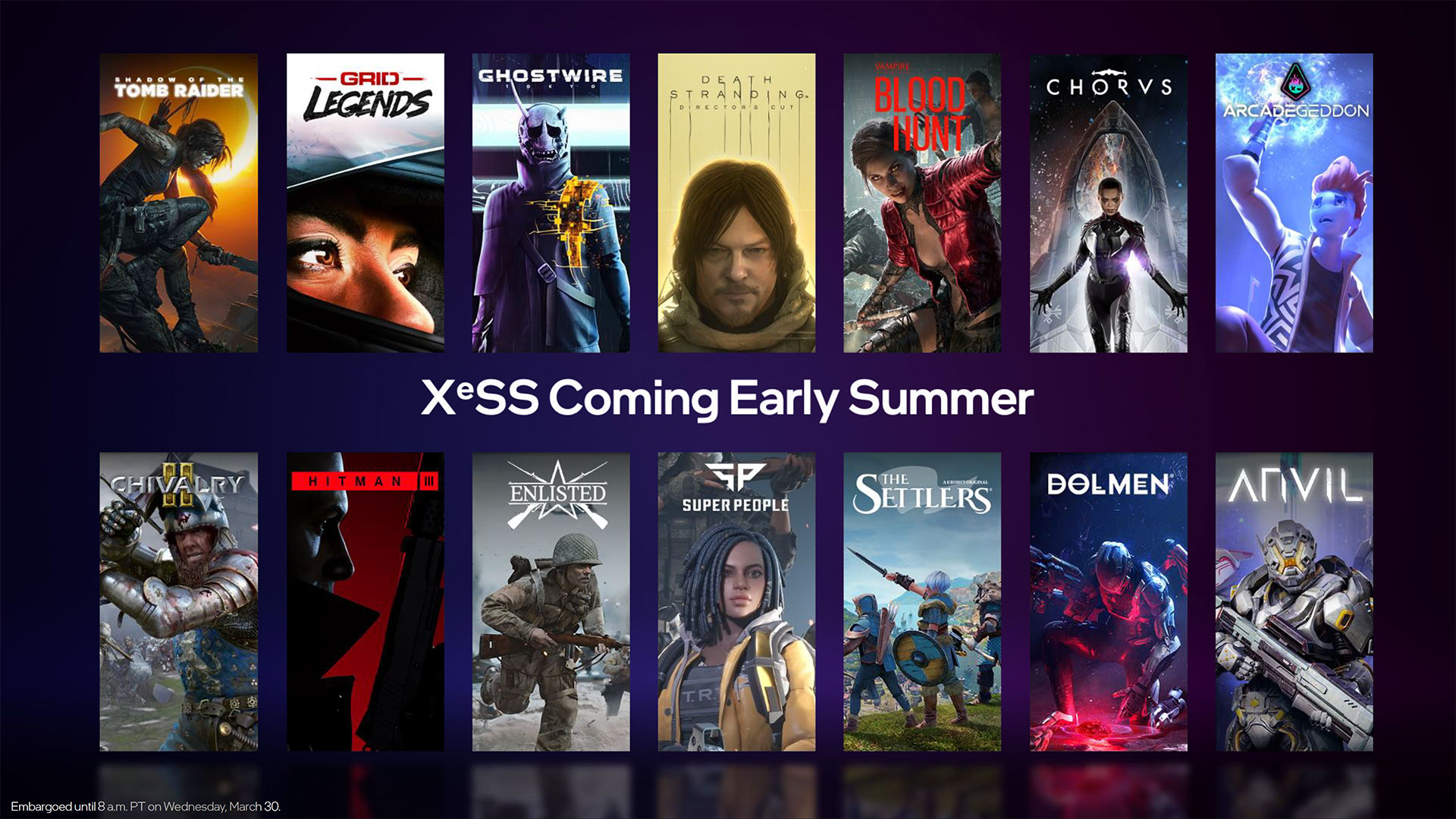

Intel does hope to make up for any lack in RT performance with the matrix cores. Similar to Nvidia's tensor cores, these can dramatically accelerate AI workloads, and Intel has its open-source XeSS (Xe Super Sampling) upscaling algorithm slated to launch this summer. Unlike Nvidia's DLSS, XeSS will also have fallback support for DP4a instructions, which are included on Pascal and later Nvidia GPUs and RDNA and later AMD GPUs.

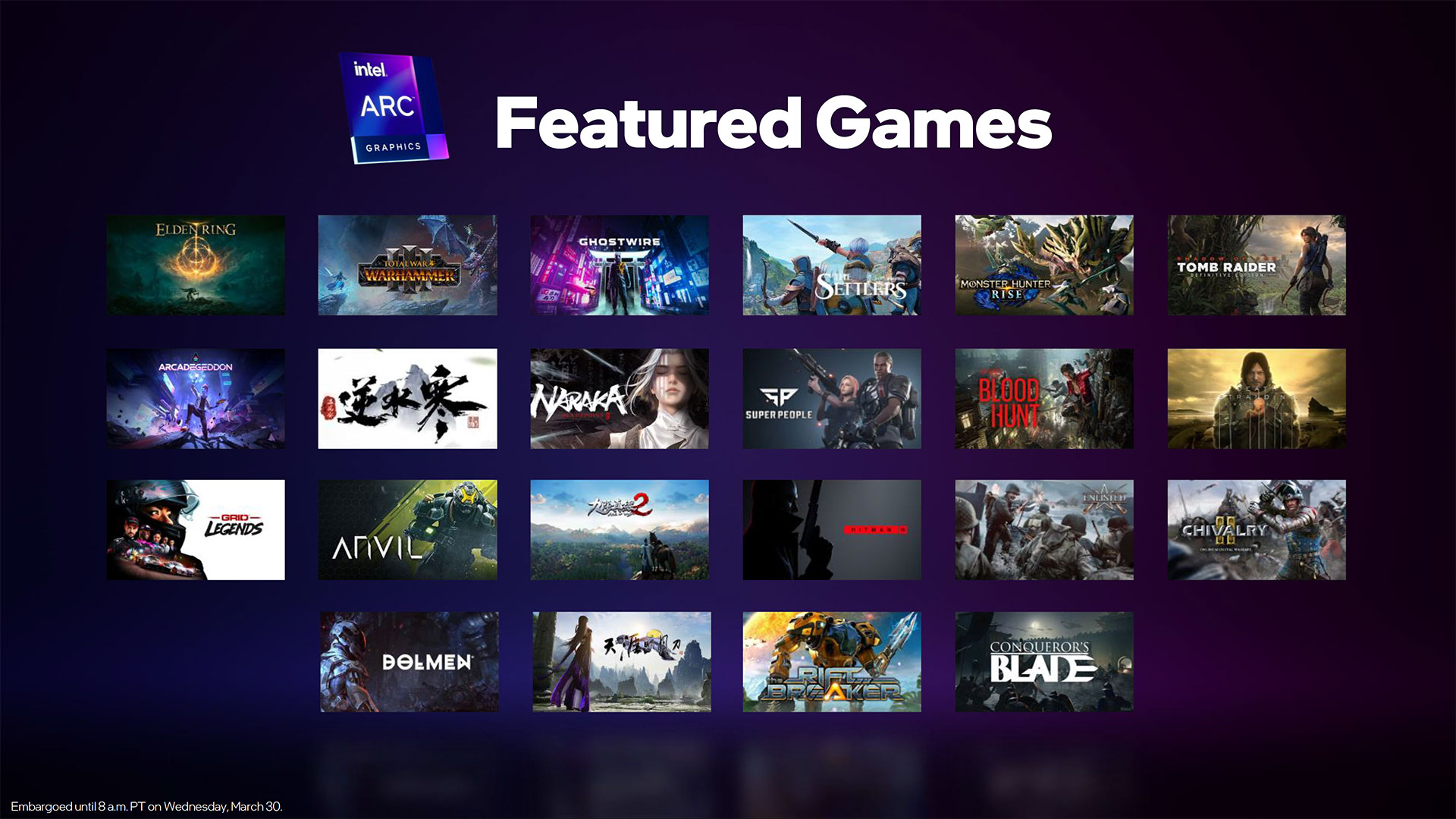

How it will fare in terms of performance and image quality remains to be seen, though Intel has offered up a video showcasing the quality of XeSS 1080p to 4K upscaling vs. native 1080p in the upcoming sci-fi game Dolmen.

Arc A-Series Mobile Graphics Solutions

With the hardware overview out of the way, here are the specific A-Series mobile solutions that Intel will be launching in the coming months. The Arc 3 A350M and A370M are available "now" — laptops with these GPUs should be on sale in early April. The midrange Arc 5 A550M basically doubles most of the hardware features, but with a much lower graphics clock. Finally, the Arc 7 A730M and A770M are two higher-end options, with the latter being the full-fat variant of the ACM-G10 chip.

It's a bit odd to see some of the other specs for the announced mobile chips. Both the Arc 3 solutions only use a 64-bit memory interface, which feels decidedly low-end. The ACM-G11 chip could have easily supported 6GB on a wider 96-bit interface, though that would have increased power consumption a bit. There's also a very large 400MHz gap between the A350M and A370M, which means the A370M should in practice be around 35% faster than the lesser A350M.

If anything, the A550M makes even less sense. With a graphics clock of just 900MHz, it has a theoretical performance level of just 3.7 TFLOPS FP32, only 16% faster than the A370M despite having twice the cores, memory, and bandwidth. The A730M bumps the core and memory aspects up 50%, with a 22% increase in clocks as well, giving it potentially 83% more performance than the Arc 5 chip. Finally, there's another big gap between the A730M and A770M, with the latter delivering up to twice the compute performance.
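The relative gaps between the models follow directly from XVE count times clock speed. The sketch below reproduces those ratios in Python under a few labeled assumptions: the A370M is treated as the full 128-XVE chip at 1.55GHz, and the A730M's 384 XVEs and 1.10GHz clock are inferred from the "50% more" and "22% higher" figures rather than taken from a spec sheet. The names are ours.

```python
FP32_OPS_PER_XVE = 16  # per-clock FP32 rate quoted earlier in the article

# model: (XVE count, graphics clock in GHz)
# Assumptions: A370M = full ACM-G11 at 1.55GHz; A730M values inferred
# from the stated +50% hardware and +22% clock relative to the A550M.
MODELS = {
    "A370M": (128, 1.55),
    "A550M": (256, 0.90),
    "A730M": (384, 1.10),
    "A770M": (512, 1.65),
}


def fp32_tflops(model: str) -> float:
    """Theoretical FP32 throughput in TFLOPS for a named model."""
    xve, clock_ghz = MODELS[model]
    return xve * FP32_OPS_PER_XVE * clock_ghz / 1000.0


def speedup(faster: str, slower: str) -> float:
    """Ratio of theoretical FP32 throughput between two models."""
    return fp32_tflops(faster) / fp32_tflops(slower)


for faster, slower in [("A550M", "A370M"), ("A730M", "A550M"), ("A770M", "A730M")]:
    gain = (speedup(faster, slower) - 1) * 100
    print(f"{faster} vs. {slower}: {gain:.0f}% higher theoretical FP32 throughput")
```

Under those assumptions the three steps come out to about 16%, 83%, and 100%, matching the figures above. Real-world scaling will depend on memory bandwidth, power limits, and drivers.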

We can't say for certain that these are all the mobile Arc solutions we're likely to see, though there's clearly room for other configurations should there be demand for them. We also don't know without testing how Arc will actually stack up against the existing Nvidia and AMD GPU incumbents, so it might be better for Intel to just focus on a few models rather than a wide range of options, some of which are likely to be unpopular.

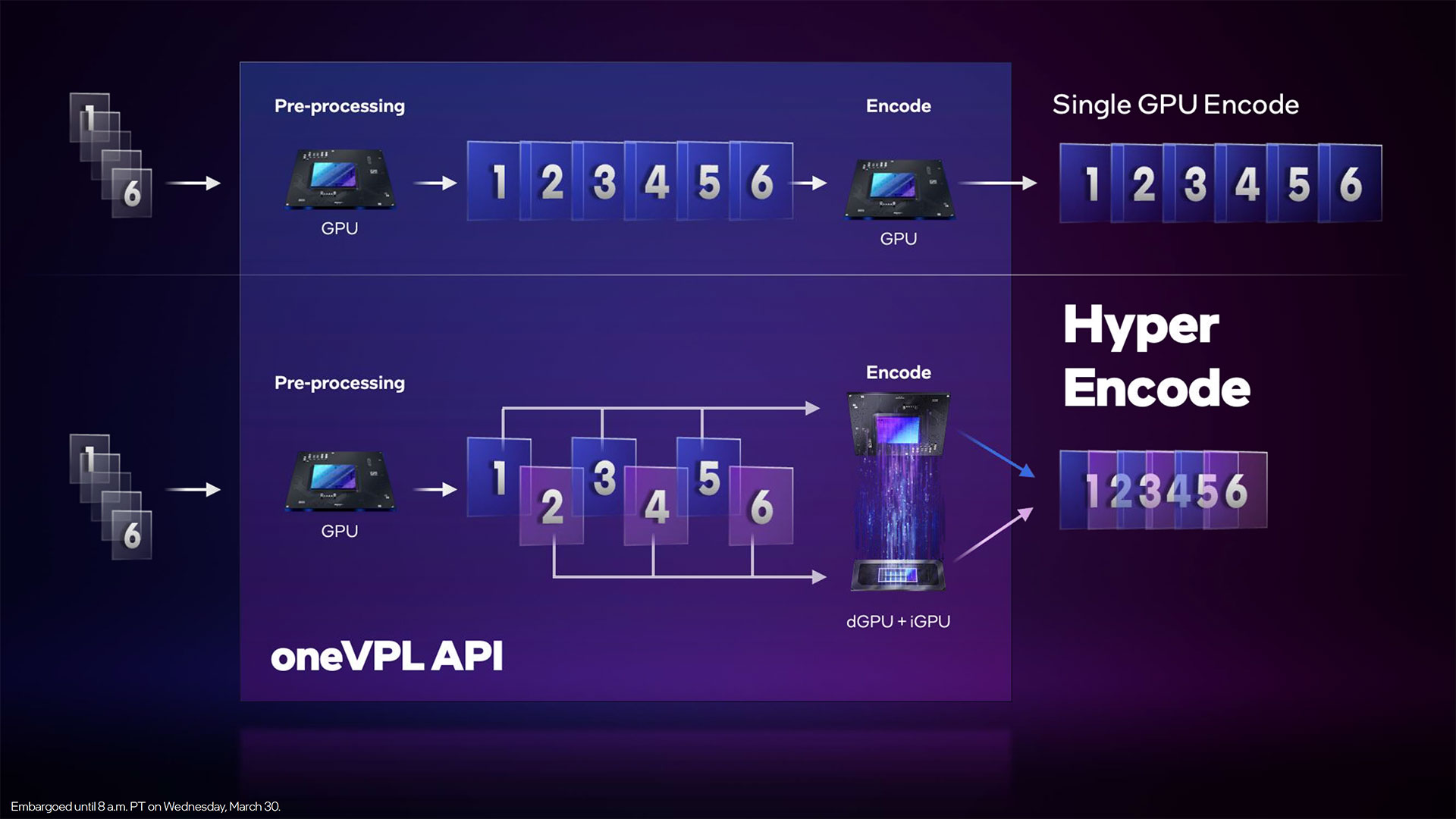

Arc Xe Media Engine

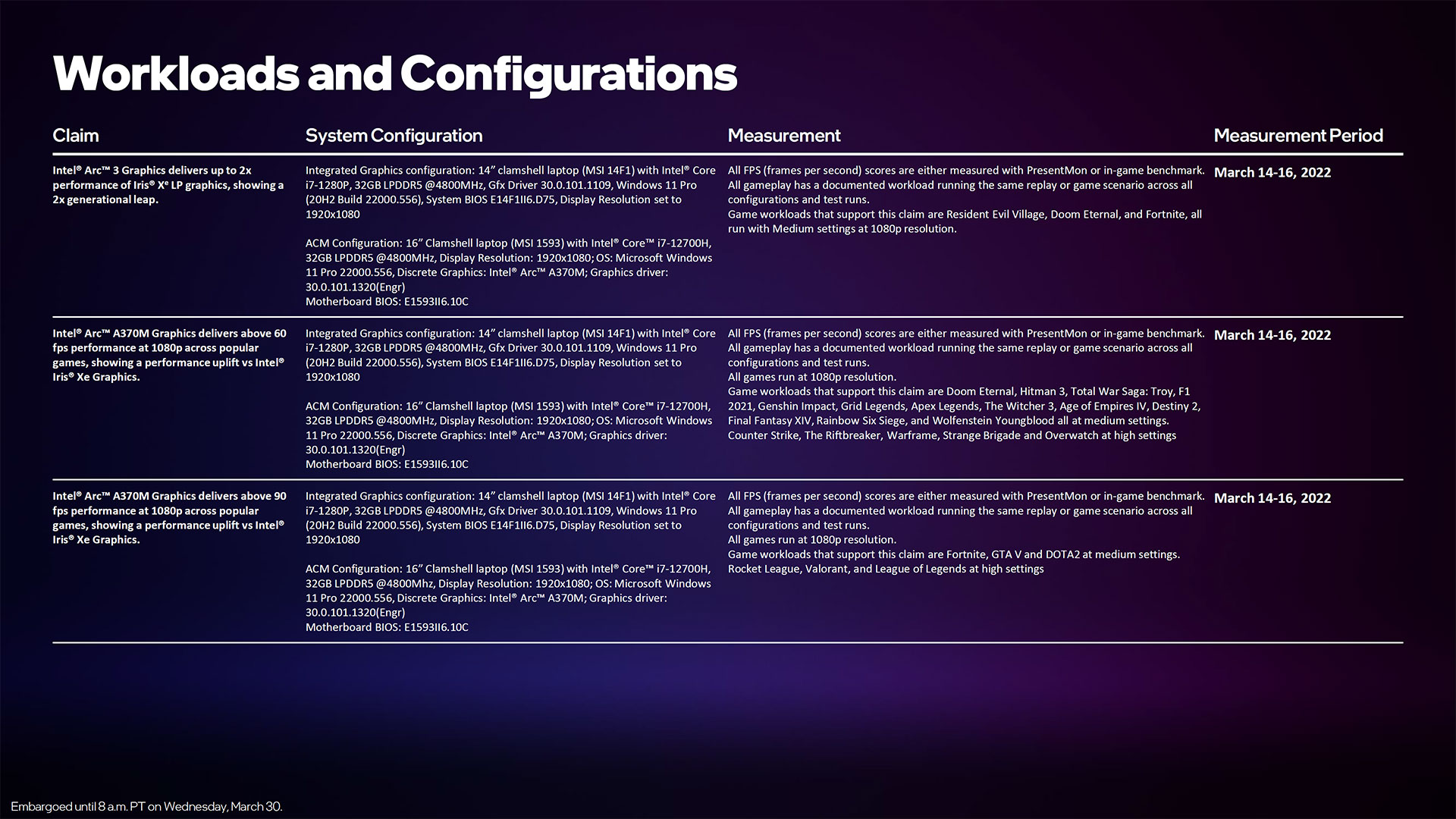

Intel is particularly pleased with Arc's media capabilities, which include industry-first hardware-accelerated AV1 video encoding. The two engines support up to 8K60 10-bit HDR encoding and 8K60 12-bit HDR decoding. Besides AV1, Arc also supports the VP9, AVC (H.264), and HEVC (H.265) video codecs.

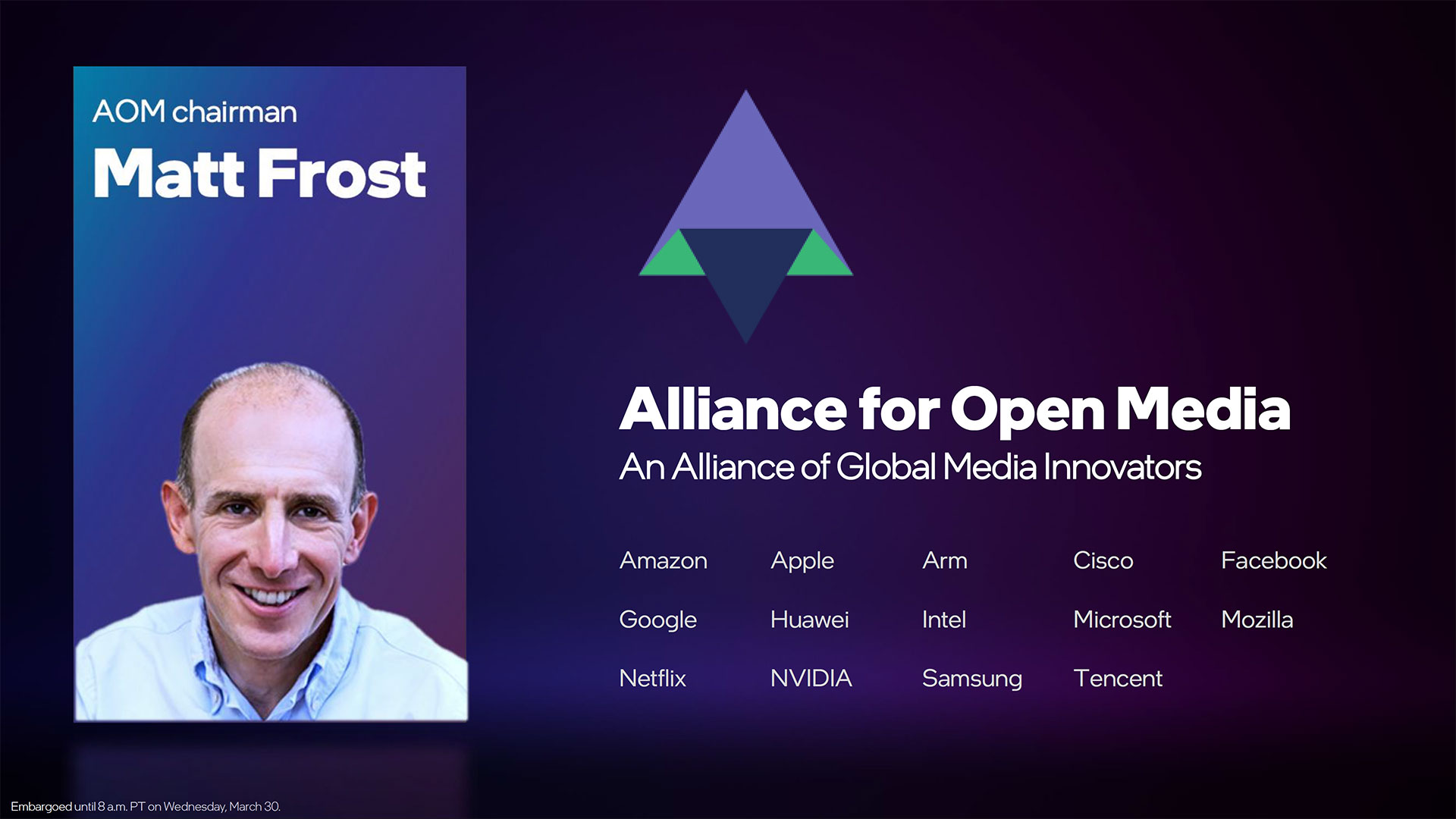

AV1 was created by the Alliance for Open Media, with Intel, Amazon, Apple, Google, Netflix, Nvidia, Samsung, Facebook, Microsoft, and others signing on. AV1 can provide improved video quality within the same bandwidth, or the same quality as H.264 with about half the bandwidth, or 20% less bandwidth than H.265. As a demonstration of what can be done, Intel provided the following Xsplit Gamecast comparison of H.264 vs. AV1, with both using 5Mbps bitrates.

AV1 definitely has some big names pushing it, and hardware support from Intel will prove a big benefit for mobile users that may not have enough CPU power to effectively handle such encodes. Intel says the AV1 hardware acceleration can be up to 50X faster than a software encode. But the video capabilities don't end there.

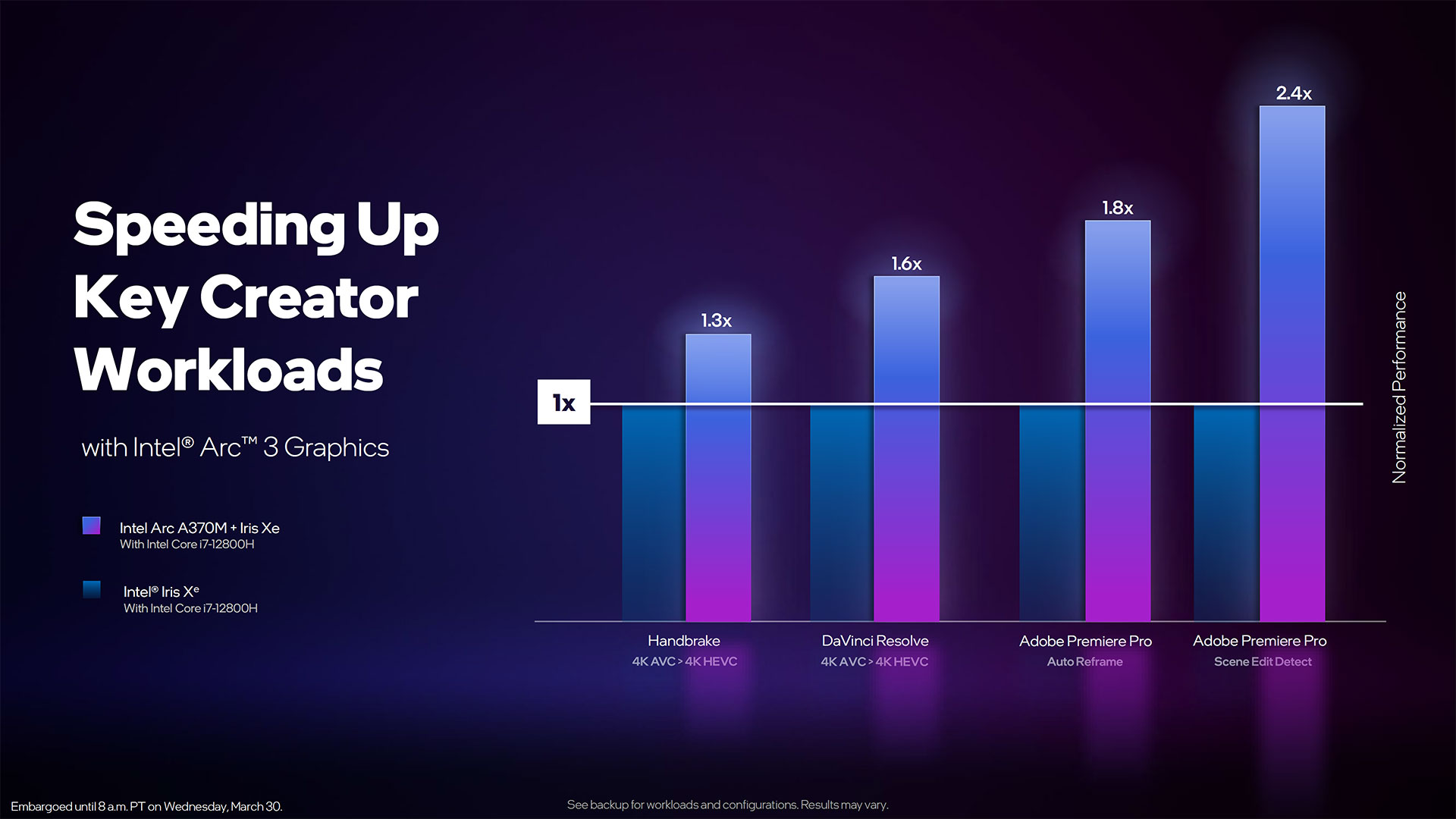

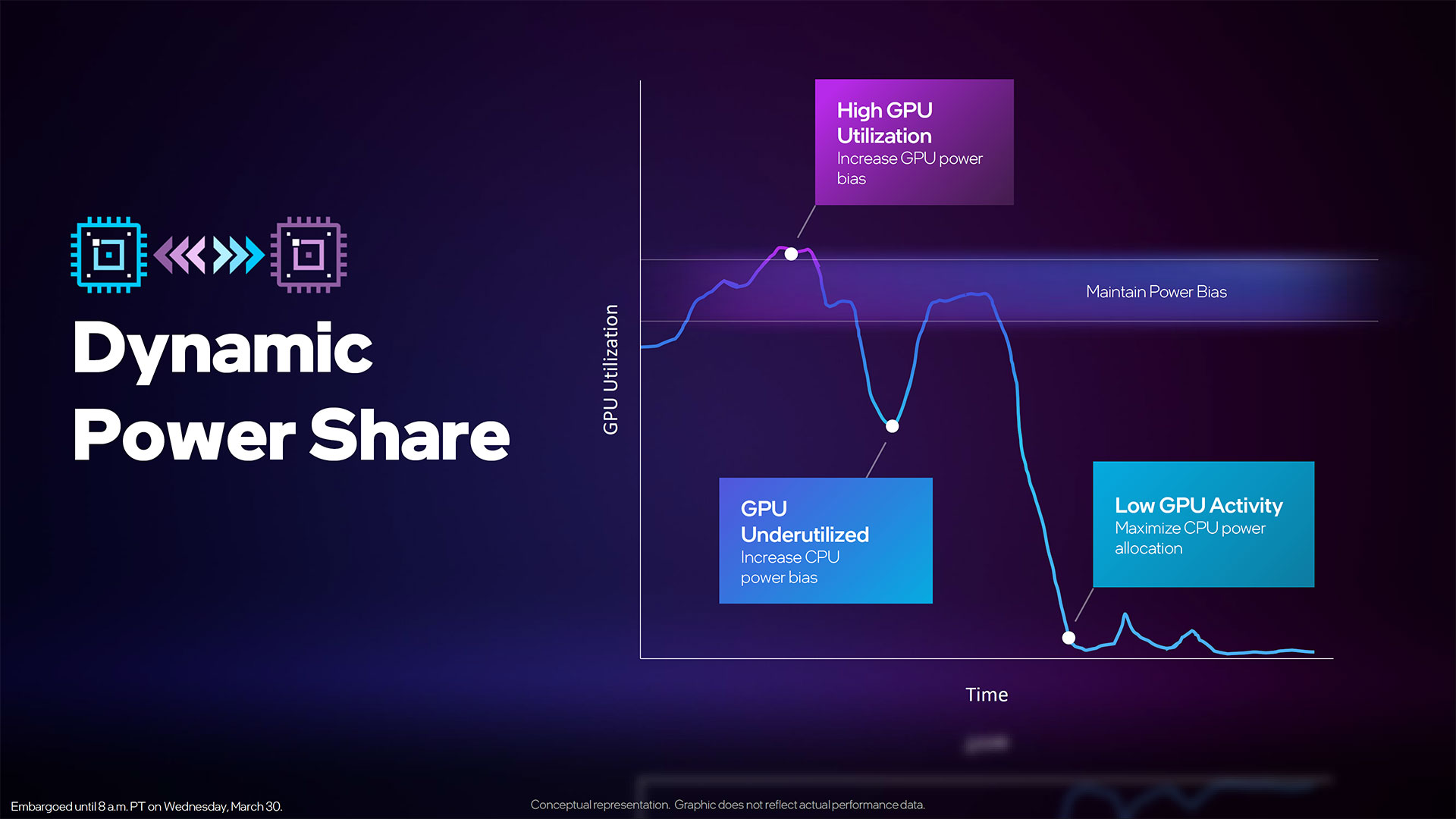

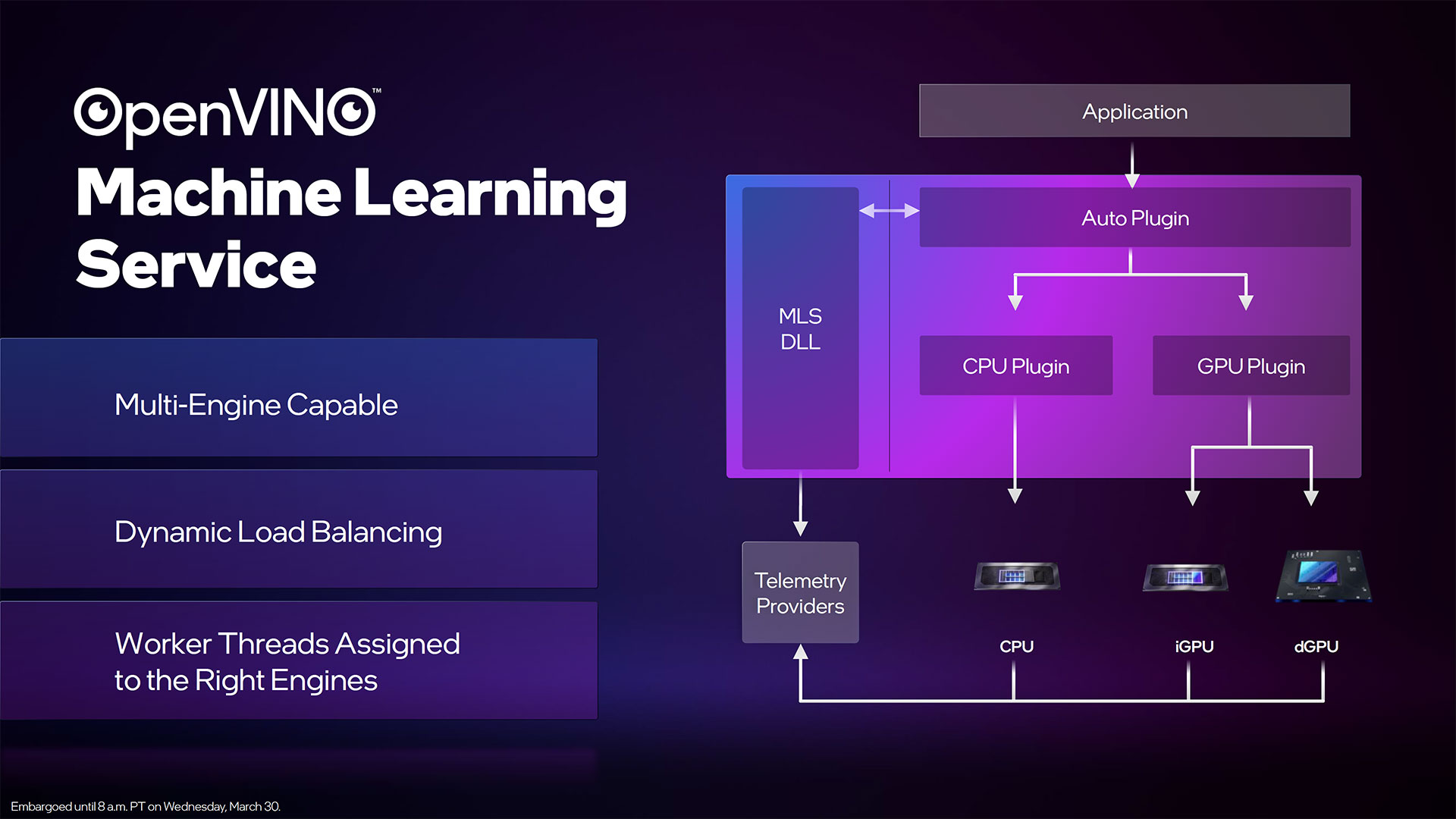

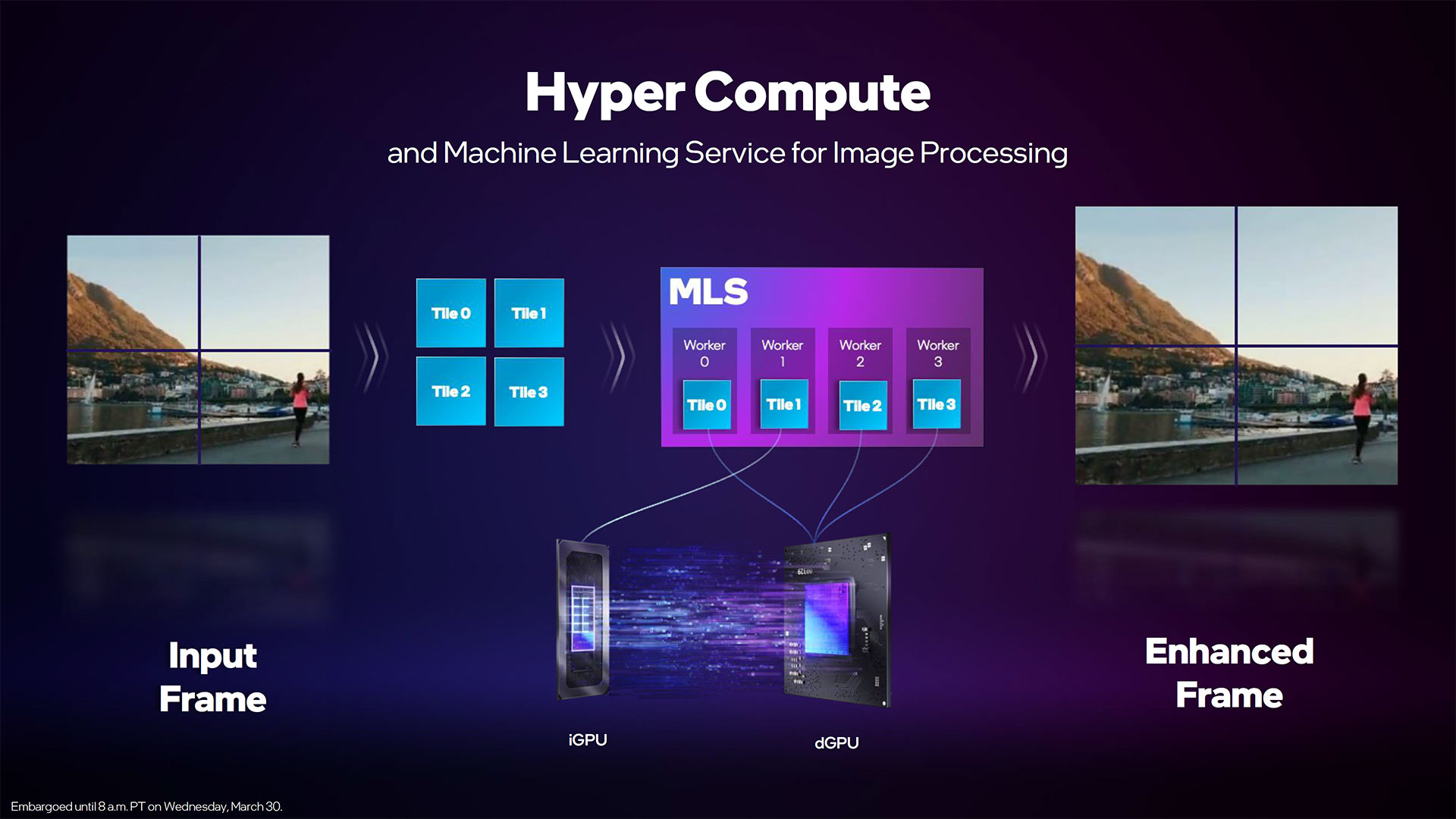

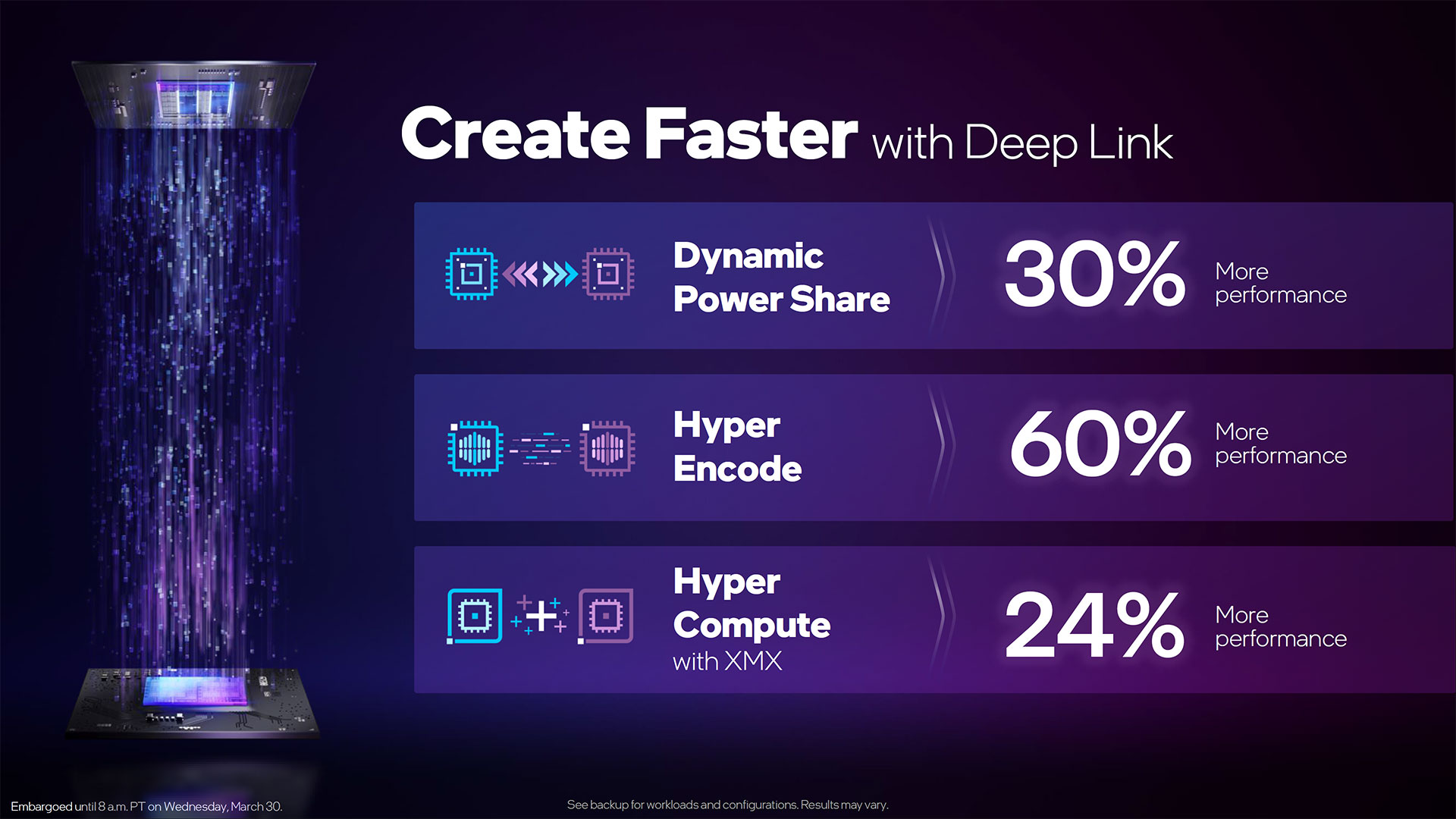

Intel also wants to make enhancing videos a lot better and faster, leveraging the capabilities of the XMX matrix engines. It provided a separate demo of Topaz Labs' Video Enhance AI running on Arc, which was over twice as fast as the previous Xe-LP (Tiger Lake) integrated graphics. Intel also has a new feature called Hyper Compute where both the integrated and dedicated Intel graphics chips can work together, nearly tripling performance for such tasks.

The Xe Display Engine is a bit interesting, in that Intel specifies HDMI 2.0b and DisplayPort 1.4a support, but then also lists it as being "DisplayPort 2.0 10G Ready." AMD and Nvidia have both moved to HDMI 2.1 with their latest GPUs, but remain with DisplayPort 1.4. Perhaps the issue is that no DisplayPort 2.0 monitors exist yet, though if that's the reason, we can only hope the initial monitors to support the new standard won't be plagued with teething pains.

Intel supports adaptive sync displays with Arc, and it also adds a couple of extra options. Speed sync looks to combine low latency with tear-free gaming, even for fixed refresh rate monitors. Basically, the GPU will virtualize the render buffer and then monitor the display, and sync transfers to avoid tearing while still avoiding a hard framerate cap. Smooth sync on the other hand is a new idea that will add a blurring effect between screen tears should a user choose to run with vsync off in order to improve the overall responsiveness. It's a very intriguing idea, and we look forward to testing it in actual games to see how it looks.

Arc 3 A370M Performance Preview

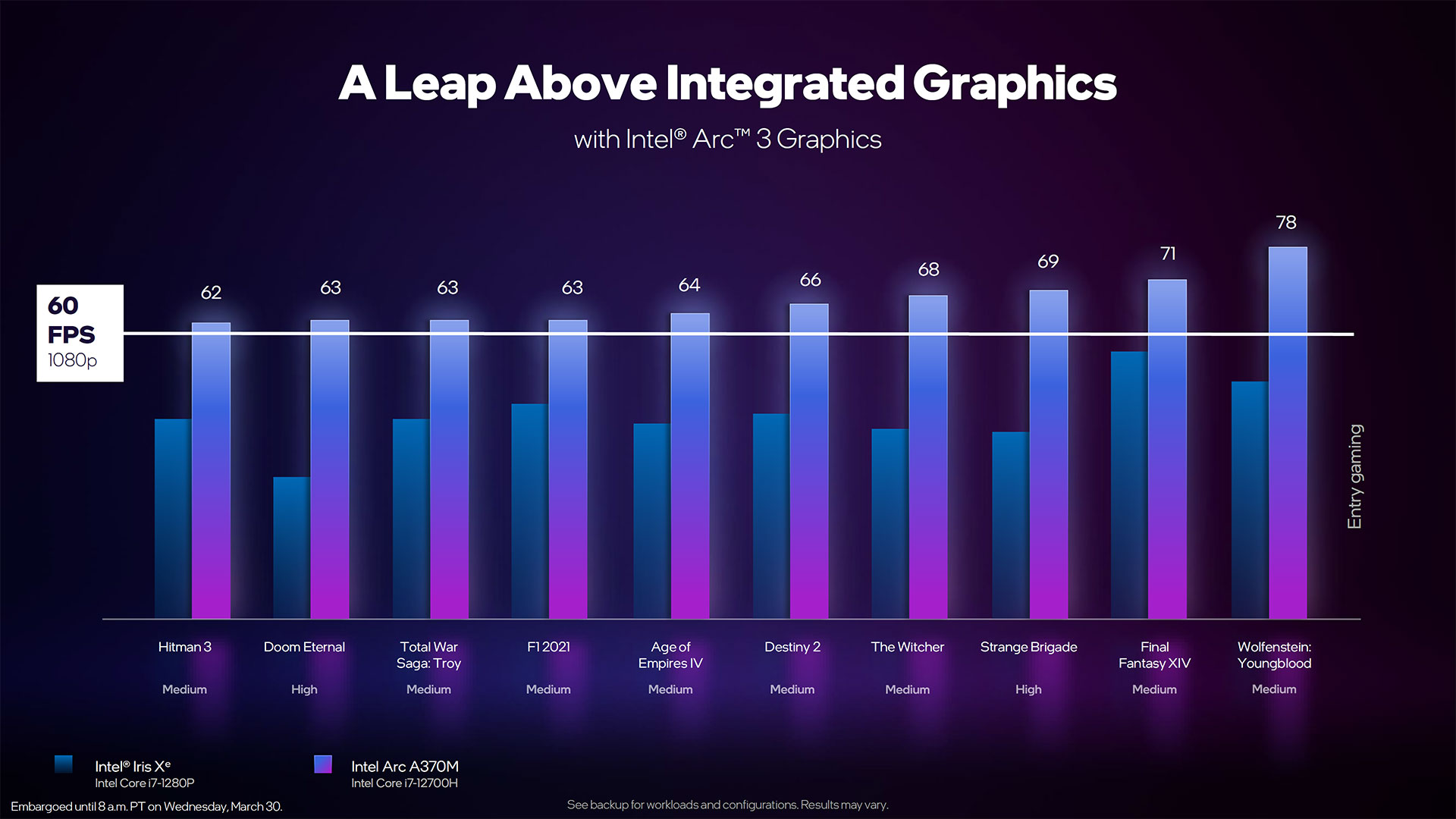

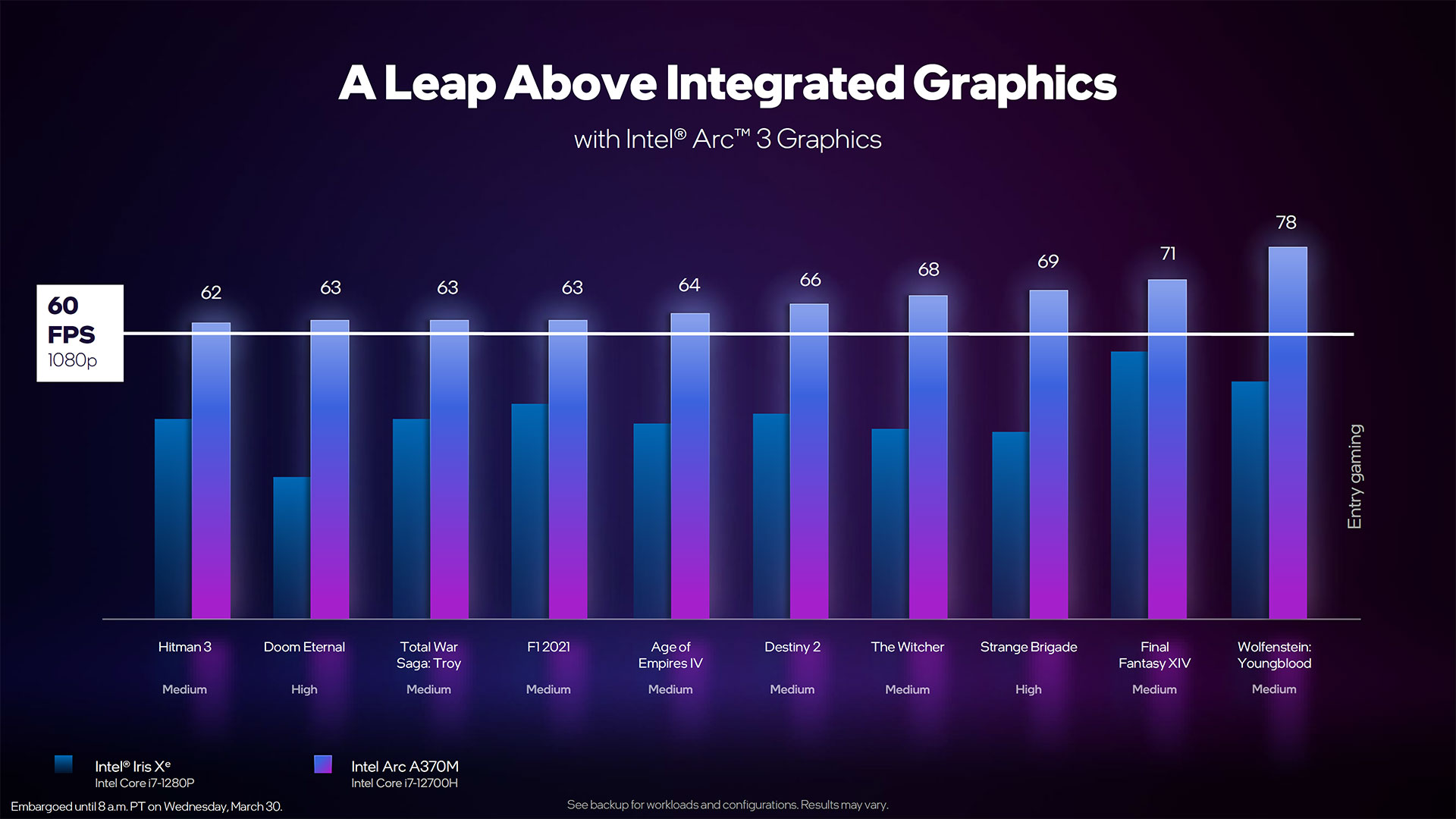

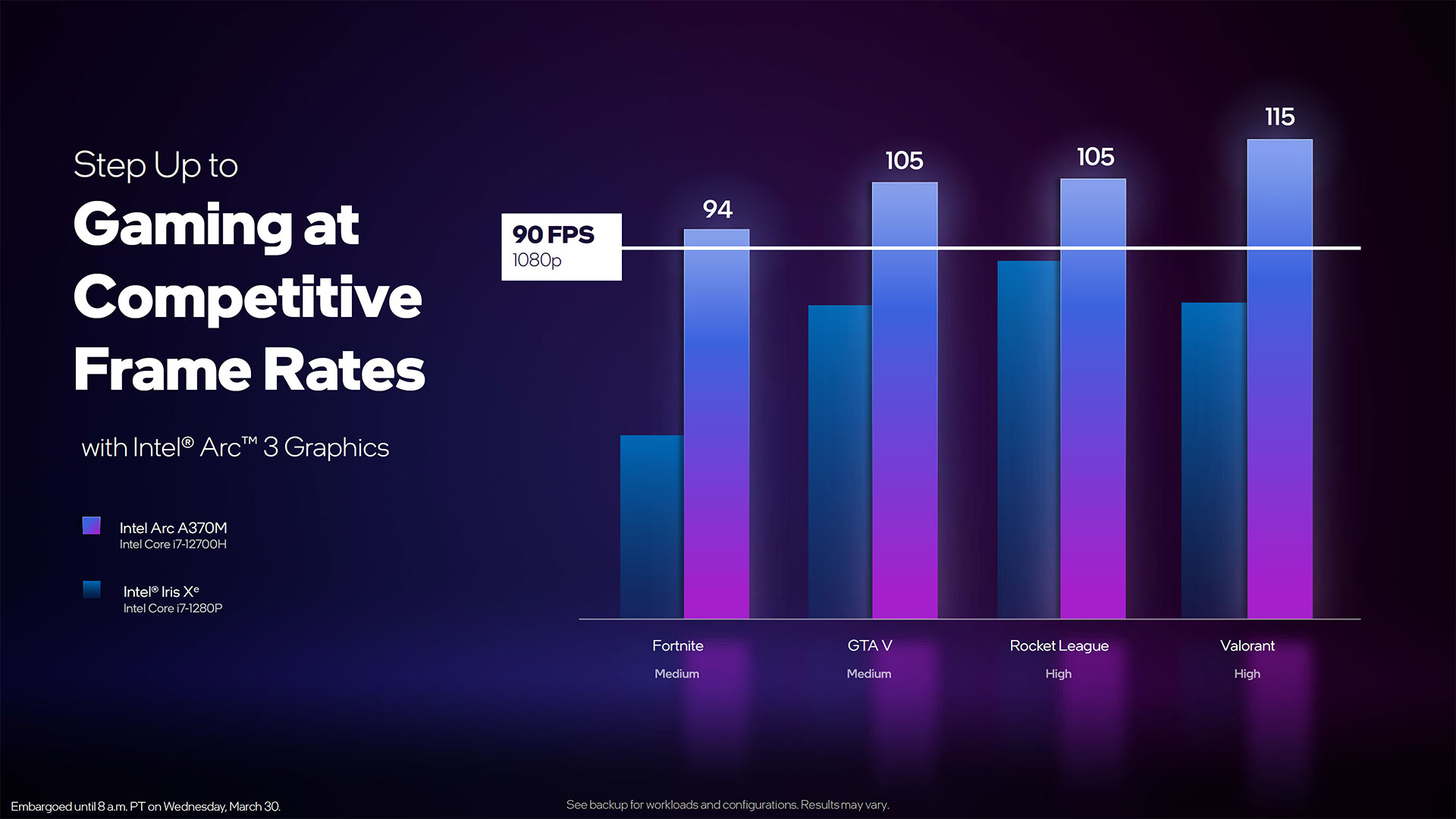

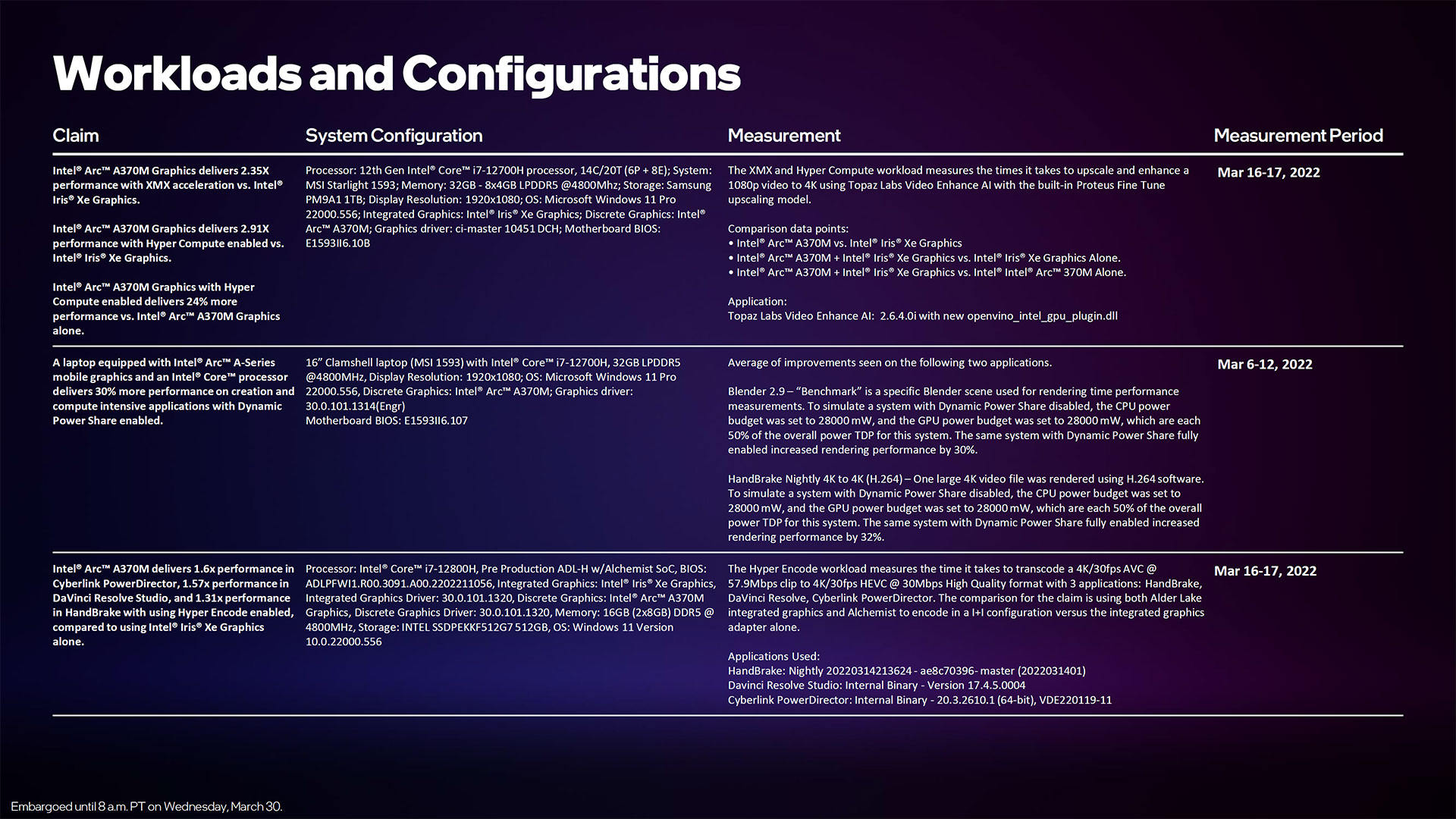

We don't have hardware for testing mobile Arc just yet, but Intel did provide the above tease of performance. Unfortunately, it compares its own A370M to the integrated Xe Graphics in a Core i7-1280P, which isn't exactly a high-performance solution. Still, even the second-lowest mobile Arc chip managed to break 60 fps at 1080p with medium to high settings, which certainly isn't something that was possible with the previous integrated graphics solutions.

Except… these are mostly older and less demanding games. You can tell simply by the fact that the integrated Iris Xe GPU is generally pulling over 30 fps at 1080p. Strange Brigade was in our previous GPU test suite, and high-end GPUs could hit 400+ fps as an example. But then, this is also Intel's lower tier of Arc GPUs, so hopefully the high-end A770M — and the eventual desktop variant — will post far more impressive results in modern games. That's something we're looking forward to testing once we get hardware in hand.

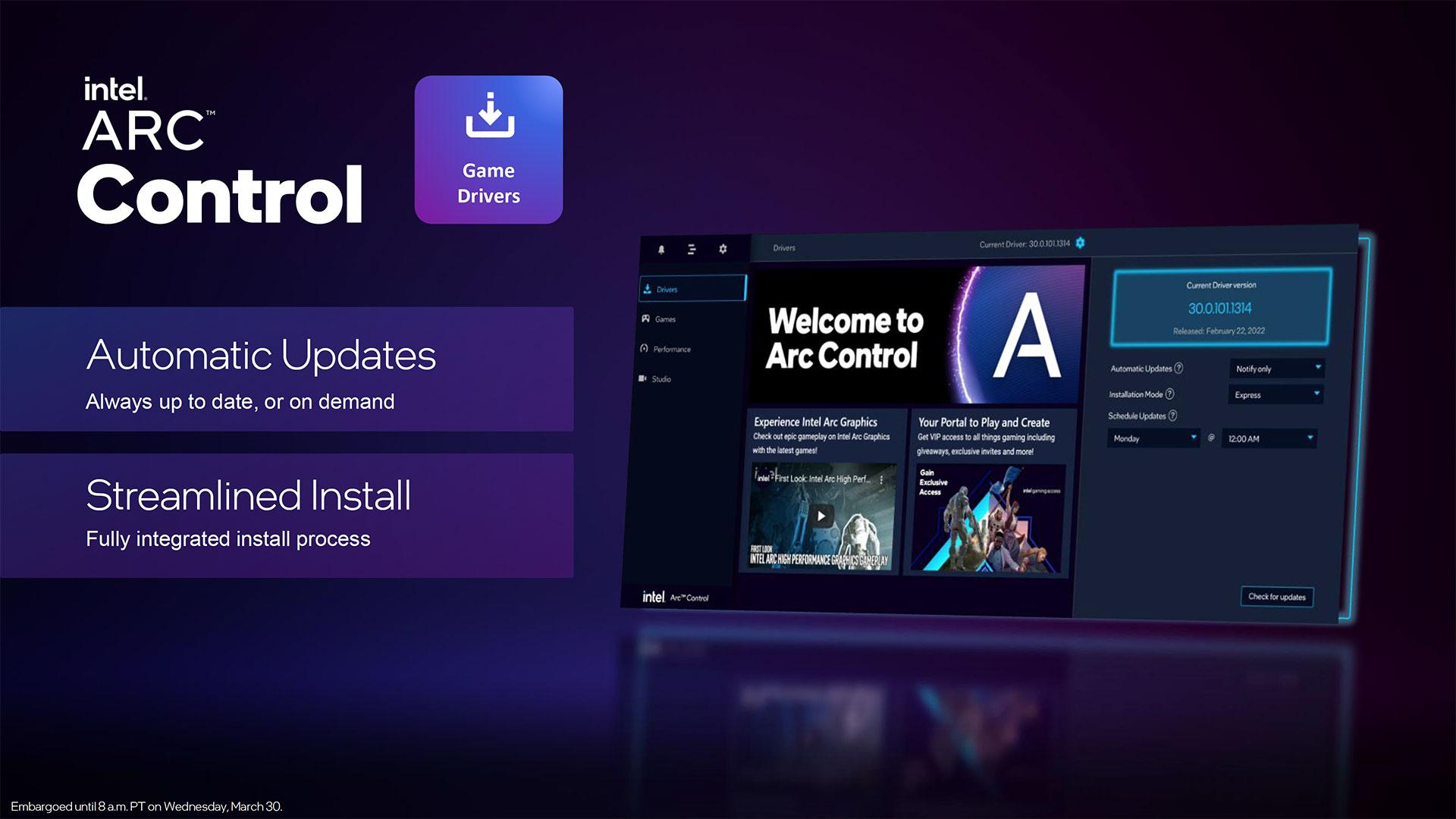

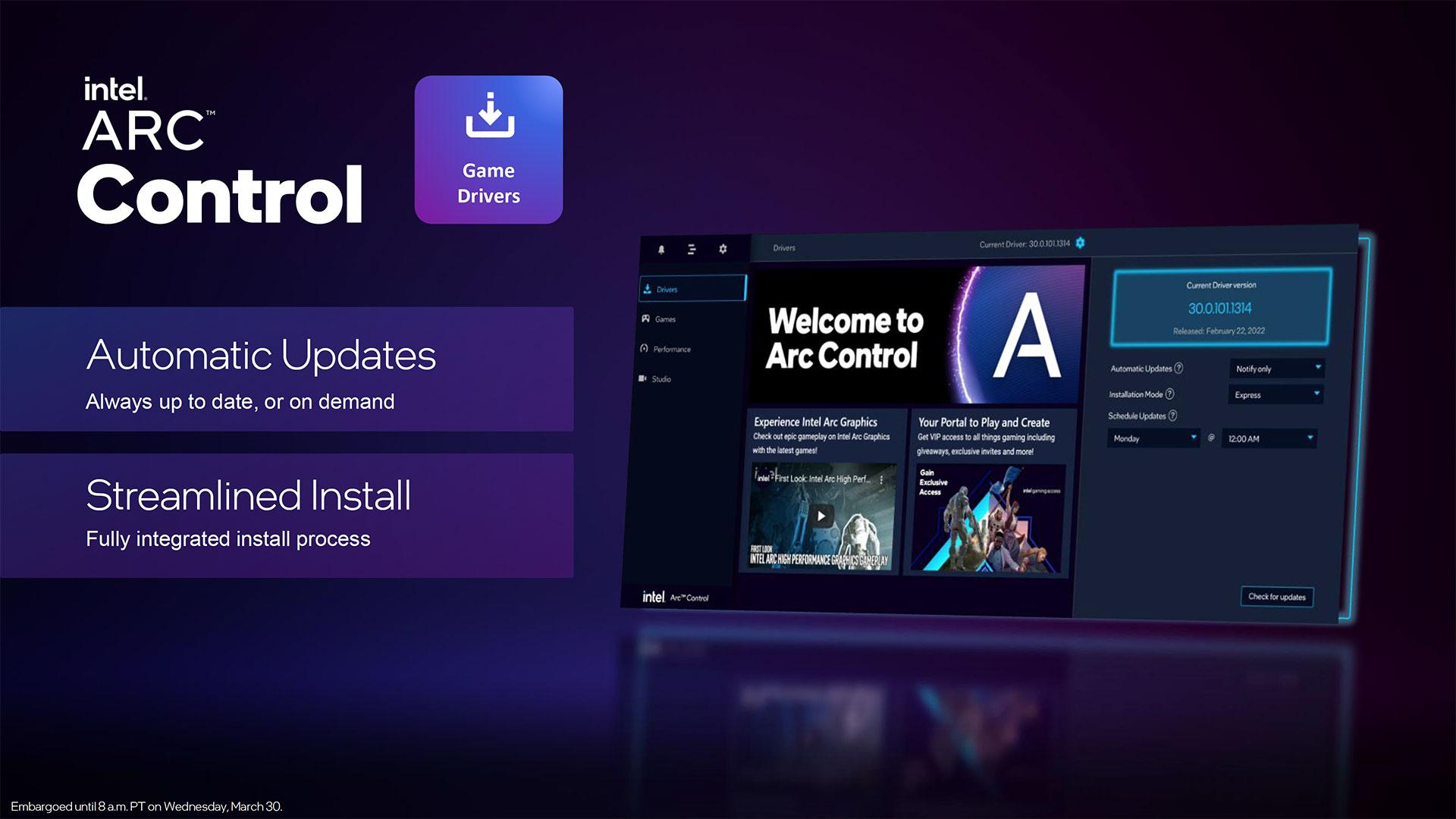

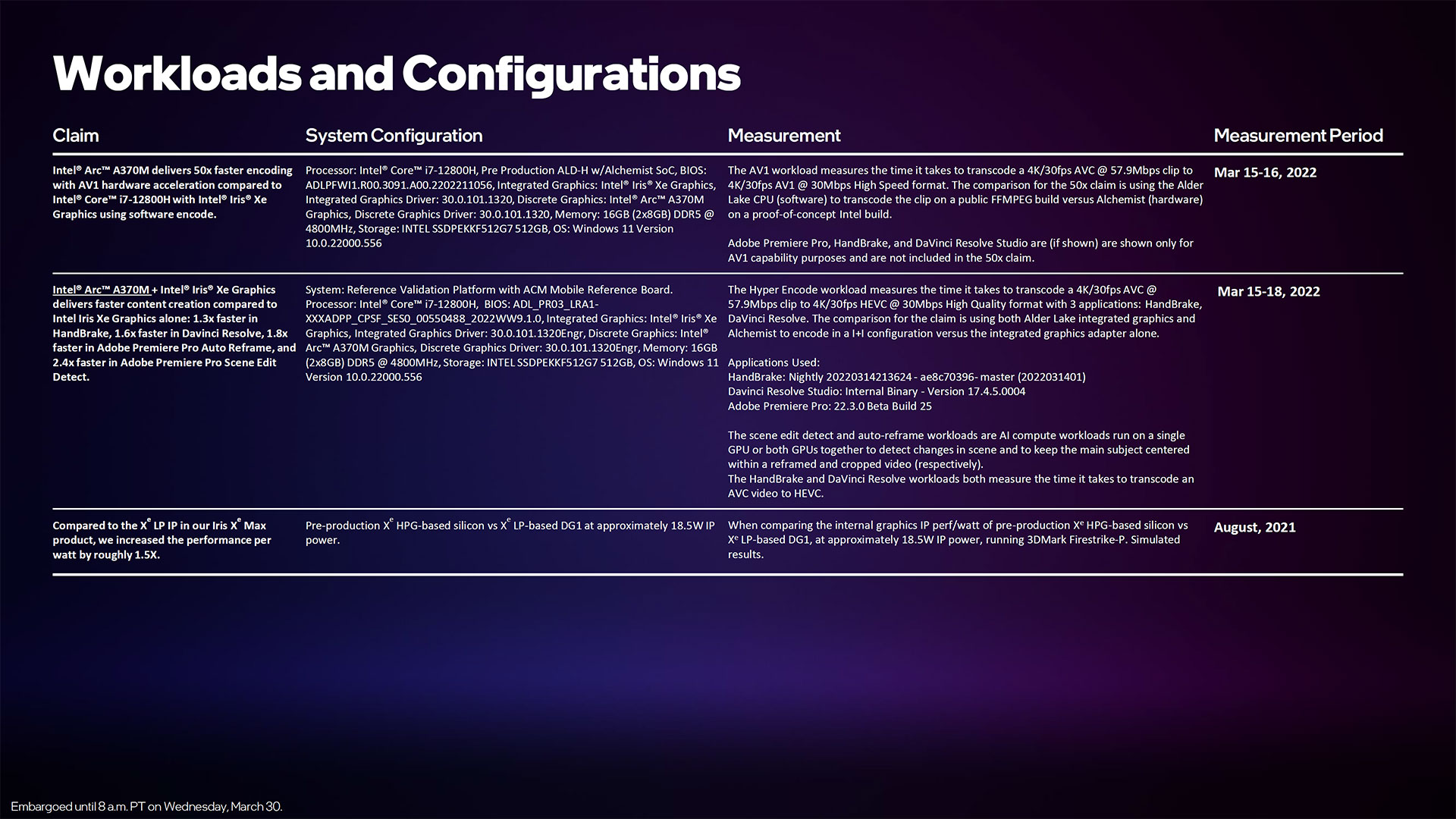

New Arc Control Driver Interface

Wrapping things up, Intel also showed off its new graphics driver front-end, dubbed Arc Control. It's intended to function as a one-stop solution for all your graphics and driver needs. Intel will provide unified drivers — meaning, no specialty drivers from laptop OEMs — with global settings control, auto download and update options for drivers, and other services. The UI looks like a modest update to the current Xe drivers, which were a significant improvement over the previous Intel drivers.

Now that the specs and details for Arc are out, we look forward to actually putting some laptops to the test. It looks like the A350M and A370M will compete with Nvidia's MX350/MX450, and maybe even the newer MX550/MX570. They're certainly not high-performance solutions likely to dethrone Nvidia's RTX 30-series offerings, but they don't need to. If Intel can provide better multimedia function and battery life with acceptable gaming performance, it could win a lot of users from Nvidia and AMD.

We didn't cover all of the details that Intel reiterated today, but if you want to view the full slide deck, we've included it below.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

JamesJones44
Maybe I mis-read the article, but seems odd to me you would name a more capable part G10 and a less capable G11. I guess if consumers don't see those names it won't matter.

hotaru.hino
JamesJones44 said: Maybe I mis-read the article, but seems odd to me you would name a more capable part G10 and a less capable G11. I guess if consumers don't see those names it won't matter.

AMD and NVIDIA both name their GPUs in increasing order for decreasing performance. I would imagine starting with a lower value as a part number is better for the highest end part because you're not going to get any higher and it would be really awkward if you started off with two product lines, say G10 and G11, but then you want a third, lesser product. That would make the G12 worse than the G11 and you've just broken your convention. And having a G10.5 is weird... because what if you make yet another product? Are you going to call it 10.25 or 10.75?

JarredWaltonGPU
JamesJones44 said: Maybe I mis-read the article, but seems odd to me you would name a more capable part G10 and a less capable G11. I guess if consumers don't see those names it won't matter.

Industry standard:

Nvidia GA100 > GA102 > GA103 > GA104 > GA106 > GA107

AMD Navi 21 > Navi 22 > Navi 23 > Navi 24

closs.sebastien
but rtx2080 is better than 2070 which is better than 2060... which is better than a 1060 which is better than a 900 series... which is better than a 600 series...

drivinfast247
closs.sebastien said: but rtx2080 is better than 2070 which is better than 2060... which is better than a 1060 which is better than a 900 series... which is better than a 600 series...

Correct. The model series numbers increase to denote the age (release date) of the product.

The die numbers increase to show the quality of silicon, highest quality is first in series.

Aurizon
I am very wary of Intel's long history of 'monopolistic practices' where they tried incessantly to create an Apple styled 'walled garden', as shown by their underhanded bus practices and chip-set linkage tying. Waaay back I supported AMD through thick and thin and I was greatly pleased to see AMD overcome these barriers and do well on their raw smarts. Maybe I am overly suspicious, but has this leopard changed their spots??? - I doubt it, so I wait for the shoes to fall...

JayNor
"The XMX matrix engines meanwhile can do 128 FP16/BF16, 256 INT8, or 512 INT4/INT2 operations per clock."

I've seen this info for Xe-HPC, but are all these modes also supported on Xe-HPG? What is your source?

JarredWaltonGPU
JayNor said: "The XMX matrix engines meanwhile can do 128 FP16/BF16, 256 INT8, or 512 INT4/INT2 operations per clock." I've seen this info for Xe-HPC, but are all these modes also supported on Xe-HPG? What is your source?

Slide 22, at the bottom of the article. Considering Intel was specifically briefing us on the Arc product line, it would be very odd to include a slide showing Xe-HPC capabilities that don't apply to Xe-HPG.

https://cdn.mos.cms.futurecdn.net/s2F46dgEzhXhXn7CLLtagF.jpg