Curbing Your GPU's Power Use: Is It Worthwhile?

In many cases, the graphics card is the most power-hungry component in a PC. The enthusiast community is no stranger to CPU tweaking, so why hasn't GPU modification caught on? We're going to see just how much you stand to gain (or lose) from tweaking.

Saving Power On AMD Graphics Cards

Introduction

An increasing number of enthusiasts are becoming aware that their GPUs are the primary consumers of power in their PCs. For some, especially our readers outside of the U.S., power consumption is an important factor in choosing a graphics card, along with performance, price, and noise levels. At the same time, as we begin to focus more intently on GPU power consumption, it is disconcerting to see the real cost of owning a high-end graphics card. If you read "What Do High-End Graphics Cards Cost In Terms Of Electricity?", then you already know what we're talking about.
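As a rough way to put a number on that cost, the Python sketch below converts a constant extra power draw into a yearly bill. The wattage, daily hours, and electricity price are hypothetical inputs, not figures from our testing; substitute your own card's measured draw and your local rate (in whatever currency you pay).

```python
def annual_energy_cost(watts: float, hours_per_day: float, price_per_kwh: float) -> float:
    """Return the yearly electricity cost of a constant load.

    energy (kWh) = watts / 1000 * hours_per_day * 365
    """
    kwh_per_year = watts / 1000.0 * hours_per_day * 365
    return kwh_per_year * price_per_kwh


# Hypothetical inputs: a card drawing 200 W more under load than a mainstream
# alternative, used for gaming 4 hours a day, at an assumed 0.20 per kWh.
extra_cost = annual_energy_cost(watts=200, hours_per_day=4, price_per_kwh=0.20)
print(f"Extra cost per year: {extra_cost:.2f}")
```

Dividing the yearly figure by 12 gives a monthly estimate; idle time at lower power can be added as a second call with its own wattage and hours.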

Now, you're probably wondering what you can do to help alleviate the issue. Graphics vendors like AMD and Nvidia build in technologies that help cut power use during idle periods, but the only surefire way to slash consumption is to use a mainstream graphics card instead of a high-end model. At the end of the day, simpler cards based on less-complex GPUs require less power than their high-end siblings.

You end up making sacrifices when you give up the displacement of a big graphics engine, though. Most mainstream cards don't offer enough performance to play the latest games at the highest resolutions using the most realistic detail settings. If you want to play games completely maxed out, a high-end card is the only option.

Is there really no alternative to mainstream graphics cards for the power-conscious? What if there were a way to manually cut down the power consumption of faster graphics cards? These are the questions we ask (and try to answer) today.

A Short Overview of GPU Power Management

Although add-in graphics cards for desktop PCs don't curb power consumption as aggressively as discrete notebook GPUs, they still employ power management. In fact, power management technologies for desktop graphics cards have been available for quite a while. They usually take the form of separate clock settings for 2D (desktop) mode and 3D mode, analogous to the P-states of modern processors. Now that graphics cards accelerate video playback in hardware, vendors have also added a dedicated mode for video playback.
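To make the P-state analogy concrete, here is a minimal Python sketch of a driver picking a clock state per workload. The state names, clock speeds, and voltages are hypothetical placeholders rather than the values of any particular card; on real hardware the vendor defines these states.

```python
from dataclasses import dataclass
from enum import Enum


class Workload(Enum):
    DESKTOP_2D = "2D desktop"
    VIDEO = "video playback"
    GAMING_3D = "3D"


@dataclass(frozen=True)
class ClockState:
    core_mhz: int
    memory_mhz: int
    core_voltage: float


# Hypothetical clock table: each workload maps to its own clock/voltage state.
CLOCK_TABLE = {
    Workload.DESKTOP_2D: ClockState(core_mhz=150, memory_mhz=300, core_voltage=0.95),
    Workload.VIDEO: ClockState(core_mhz=400, memory_mhz=900, core_voltage=1.00),
    Workload.GAMING_3D: ClockState(core_mhz=850, memory_mhz=1200, core_voltage=1.15),
}


def select_state(workload: Workload) -> ClockState:
    """Pick the clock state for the current workload, much like a CPU P-state."""
    return CLOCK_TABLE[workload]


print(select_state(Workload.VIDEO))
```

The point of the table is that the card only runs its highest clocks and voltage when a 3D workload actually needs them.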


What most graphics cards lack is an option to cap power consumption, something akin to the "Power saver" plan in the Windows Control Panel. With such an option enabled, the graphics card would run at lower clocks to keep power consumption down. There are new approaches to this problem: AMD introduced PowerTune for its Radeon HD 6900-series cards. We'll look into the effectiveness of this capability later in this piece.
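PowerTune's internals are not described here, so the following is only a toy Python illustration of the general idea behind a power cap: if estimated power exceeds a limit, step the clock down until it fits. The linear power-per-MHz model, the function name, and all numbers are assumptions for illustration, not AMD's algorithm.

```python
def apply_power_cap(clock_mhz: int, estimated_watts: float, cap_watts: float,
                    min_clock_mhz: int, step_mhz: int = 10) -> int:
    """Lower the clock in step_mhz increments until estimated power fits under
    cap_watts, never going below min_clock_mhz.

    Simplifying assumption: at a fixed voltage, power scales linearly with clock.
    """
    watts_per_mhz = estimated_watts / clock_mhz
    while (clock_mhz - step_mhz >= min_clock_mhz
           and watts_per_mhz * clock_mhz > cap_watts):
        clock_mhz -= step_mhz  # throttle one step at a time
    return clock_mhz


# Hypothetical: an 880 MHz GPU estimated at 250 W, capped at 200 W.
print(apply_power_cap(880, 250.0, 200.0, min_clock_mhz=500))  # 700
```

When the estimate is already under the cap, the clock is returned unchanged; when the cap cannot be met, the clock bottoms out at the floor.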

Finding the Right Combination

Lowering operating clocks is one way to reduce power consumption. This is as true for GPUs as it is for CPUs. However, clock speed (core and memory) is only one part of the equation. As with CPUs, the graphics processor’s operating voltage also plays a role.
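The textbook first-order model for dynamic (switching) power in CMOS logic shows why voltage matters so much. This is a standard approximation rather than a measurement from our cards, and the example values are hypothetical. Here the activity factor $\alpha$ and switched capacitance $C$ are assumed unchanged between the two settings:

```latex
P_{\text{dyn}} \approx \alpha \, C \, V^{2} f
\qquad\Longrightarrow\qquad
\frac{P'_{\text{dyn}}}{P_{\text{dyn}}} = \left(\frac{V'}{V}\right)^{2} \frac{f'}{f}

% Hypothetical example: core clock cut by 10%, core voltage lowered from 1.15 V to 1.05 V
\frac{P'_{\text{dyn}}}{P_{\text{dyn}}} = \left(\frac{1.05}{1.15}\right)^{2} \times 0.90 \approx 0.834 \times 0.90 \approx 0.75
```

Because voltage enters squared, a modest voltage reduction saves more than an equal percentage cut in clock speed. The model ignores static leakage power (which also falls with voltage) and memory power.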

If we want to limit or lower power consumption, couldn't we just set clocks and voltages manually? Changing frequencies is actually easy with vendor-provided utilities and third-party software, and it's worth doing: finding the right combination of clock and voltage can yield significant power savings.
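One common way to find that combination by hand is to hold the clock fixed and lower the voltage one small step at a time until the card fails a stress test, then keep the last passing value. The Python sketch below captures that loop. `is_stable` is a hypothetical stand-in for whatever stress test you run, and actually applying a voltage requires a vendor or third-party utility, which this code does not do.

```python
from typing import Callable, Optional


def find_lowest_stable_voltage(
    start_voltage: float,
    floor_voltage: float,
    step: float,
    is_stable: Callable[[float], bool],
) -> Optional[float]:
    """Step the core voltage down from start_voltage until a stability test fails.

    Returns the last voltage that passed, or None if even start_voltage failed.
    Never tests below floor_voltage.
    """
    if not is_stable(start_voltage):
        return None
    best = start_voltage
    n = 1
    while True:
        # Compute each candidate from the start value to avoid accumulating float error.
        candidate = round(start_voltage - n * step, 4)
        if candidate < floor_voltage or not is_stable(candidate):
            return best
        best = candidate
        n += 1


# Simulated card that stays stable down to 1.05 V (hypothetical).
print(find_lowest_stable_voltage(1.15, 0.90, 0.025, lambda v: v >= 1.05))
```

In practice each `is_stable` call would be a lengthy gaming or stress-test session, and it is prudent to add a small safety margin above the last passing voltage.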

Altering voltages is a different matter. Most graphics cards don't offer an easy way to adjust voltage. In fact, because some users blew up their GeForce GTX 590s with unrealistic voltage settings, Nvidia locks out voltage manipulation on those cards altogether. It's not clear whether that will apply only to the GTX 590 or to a broader portion of the company's portfolio, but it demonstrates the damage that excessive tinkering can cause.

What about the other cards that can still be modified? Unfortunately, voltage adjustments are limited to 3D mode. Most cards do not offer a way to adjust voltages at idle or in any intermediate mode.

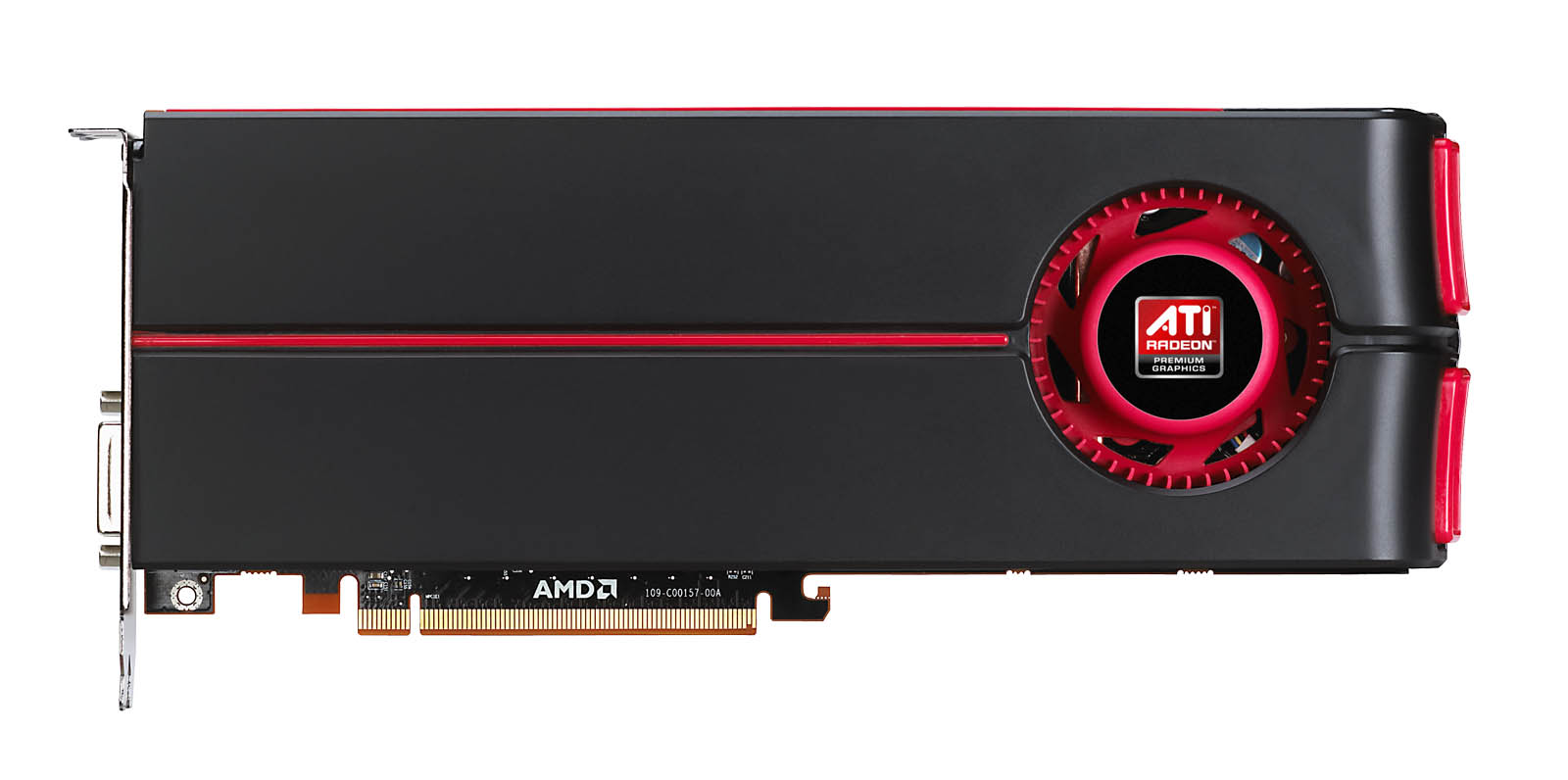

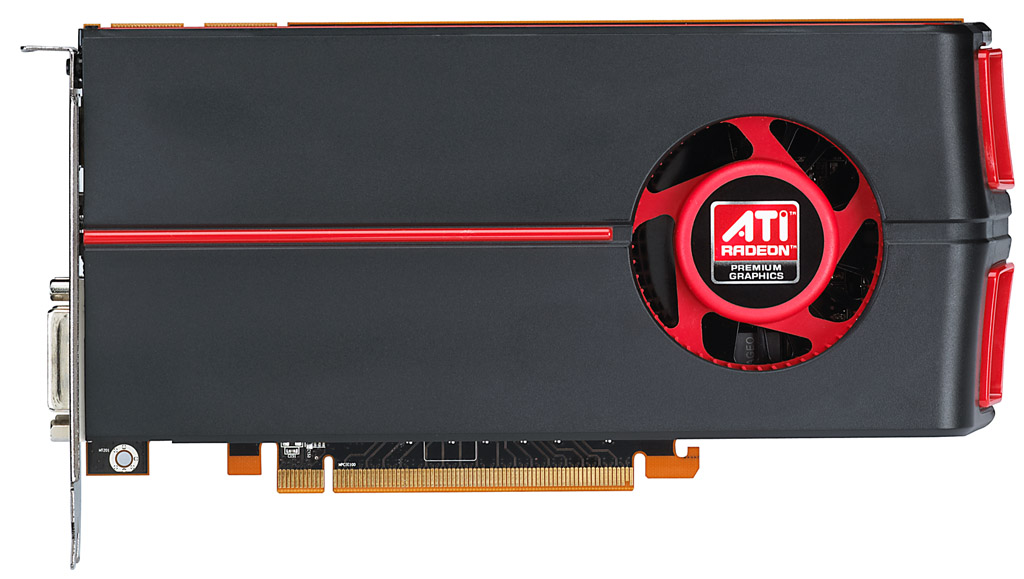

But today we’re going to perform an experiment. We're going to see just how much power we can save by lowering clocks and voltages. In the process, we’re going to measure the associated performance hit to gauge whether those changes are worthwhile. We’ll be using two cards: AMD’s Radeon HD 5870 and 6970, with a 5770 for comparison.
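To judge whether a tuned setting is worthwhile, it helps to express the result as percentage power saved, percentage performance lost, and the change in performance per watt. The Python sketch below does that arithmetic; the function name and the readings in the example are hypothetical, not results from this article.

```python
from typing import NamedTuple


class Tradeoff(NamedTuple):
    power_saved_pct: float
    performance_lost_pct: float
    efficiency_gain_pct: float  # change in frames per second per watt


def evaluate_tuning(base_watts: float, base_fps: float,
                    tuned_watts: float, tuned_fps: float) -> Tradeoff:
    """Compare a tuned (downclocked/undervolted) run against the stock run."""
    power_saved = (base_watts - tuned_watts) / base_watts * 100
    perf_lost = (base_fps - tuned_fps) / base_fps * 100
    base_eff = base_fps / base_watts
    tuned_eff = tuned_fps / tuned_watts
    efficiency_gain = (tuned_eff / base_eff - 1) * 100
    return Tradeoff(power_saved, perf_lost, efficiency_gain)


# Hypothetical readings for illustration only.
result = evaluate_tuning(base_watts=300, base_fps=60, tuned_watts=240, tuned_fps=54)
print(f"{result.power_saved_pct:.1f}% less power, "
      f"{result.performance_lost_pct:.1f}% lower frame rate, "
      f"{result.efficiency_gain_pct:.1f}% better FPS per watt")
```

A tuning step is generally attractive when the power saved clearly outweighs the performance lost, which shows up as a positive efficiency gain.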


I think people using SLI, CrossFire, and higher-end video cards don't really care how much electricity they are using. They can afford to buy two expensive PCBs, so why would they care about an extra 5~10 bucks per month? If people are focused on lower power consumption, they would go for lower-performance components, wouldn't they?

anttonij I guess the most important point of this review is that you can lower the card's voltage while running at stock speed. For example, I'm running my GTX 460 (stock 675/1800@1.012V) at 777/2070@0.975V, or if I wanted to use the stock speeds, I could lower the voltage to 0.875V. I've also lowered the fan speeds to allow the card to run almost silently even at full load.

Khimera2000 @.@ there is no apple @.@

This is neat though :) I wonder if this article might inspire someone to make an application. Come on open source, don't fail me now >.

Could you do a comparison of "the fastest VC" vs. "entry level" and then show us how much money we might end up paying each month or day?

the_krasno Manufacturers should find a way to implement this automatically. Imagine the possibilities!

wrxchris @OvaCer

I have 2 gfx cards pushing 3 displays, but I'm all for saving watts wherever I can. Our society has advanced to the point where sustainability is a very important buzzword that is widely ignored by mainstream media and many corporations, and this ignorance trickles down to the mainstream like Reaganomics. Minuscule reductions such as 30 W savings across hundreds of thousands, if not millions, of users add up to a significant reduction in carcinogenic emissions and save valuable resources for future consumption.

neiroatopelcc So when playing video, you risk your AMD card going into UVD mode? What models does that apply to?

I want to know, because in a raid, for instance, I'd sometimes watch video content on another screen while waiting around for whatever there is to wait for. I already lose the CrossFire performance because of windowed mode. I don't want to lose even more.

Does my ancient 4870 X2 support UVD?

jestersage So... for the dual-BIOS HD 6900s, I can RBE one BIOS with my desired settings and just choose which BIOS to use before I power up my PC? Hmm... interesting.