AMD's Ryzen 3D V-Cache Chips Have Been in Development For Years

Bolt it on and go

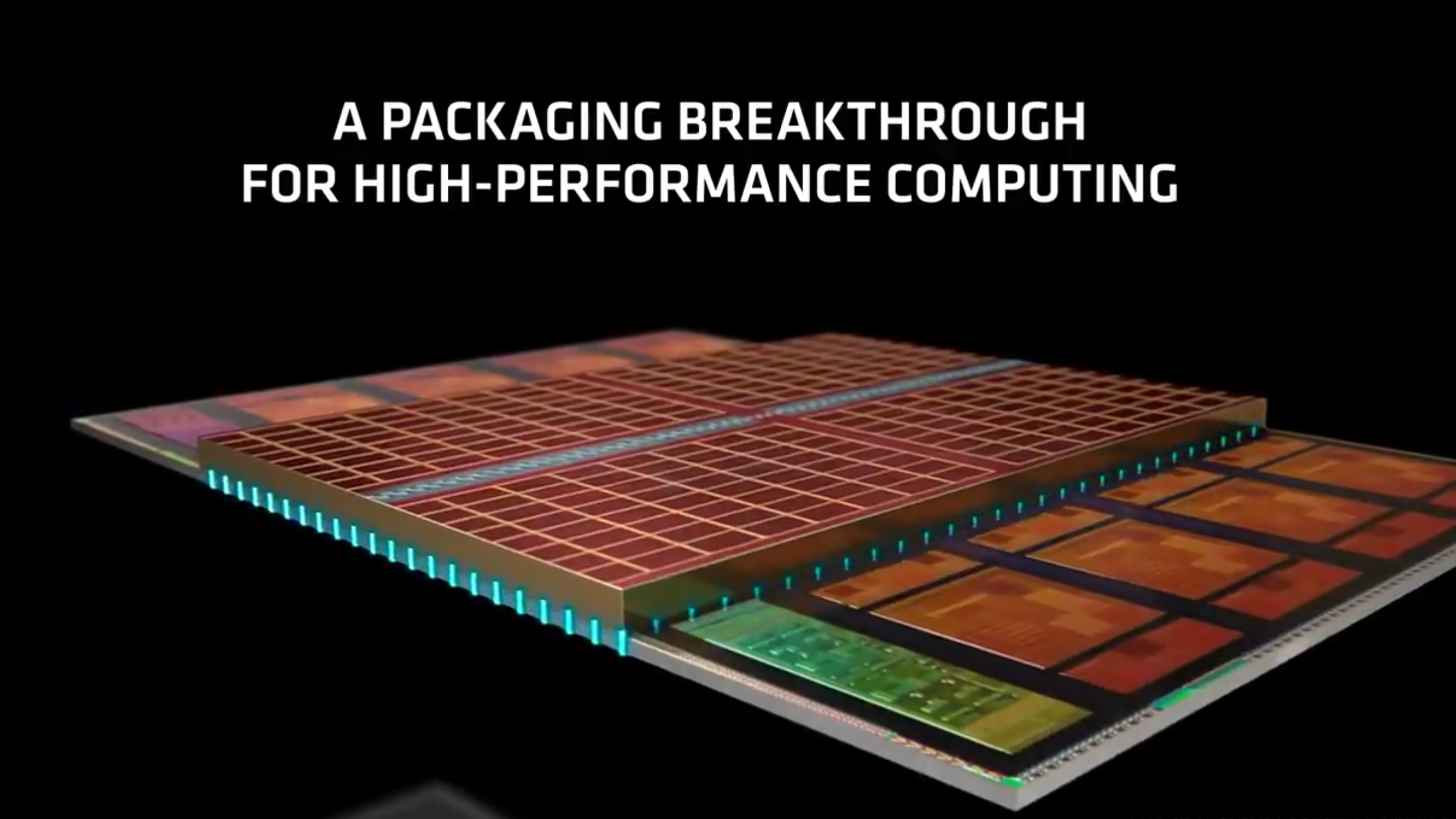

AMD announced its 3D V-Cache technology a few months ago, demonstrating incredible performance gains from simply stacking more L3 cache, up to 64MB, on top of Ryzen CPUs. Now, thanks to Yuzo Fukuzaki from TechInsights, we have more details related to 3D V-Cache. This includes data that shows that modern Zen 3 CPUs were designed to accommodate stacked 3D cache from the start, telling us this technology has been in the works for years.

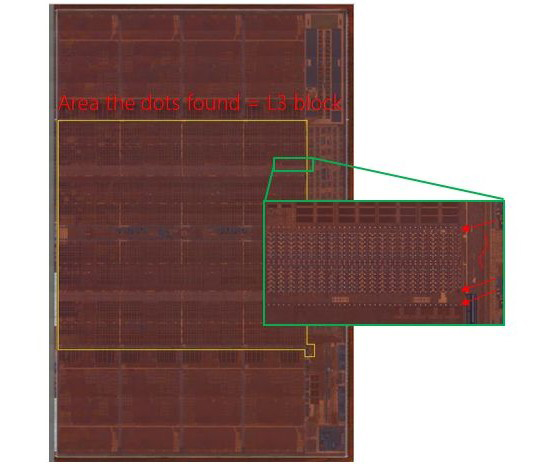

According to Fukuzaki, a standard Ryzen 9 5950X sample already has both the connection points and the space for 3D stacked cache. In the picture below, the dots along the edges of the Zen 3 die mark where the 3D V-Cache could be connected. These are copper connection points for a stack of 3D cache, should one be installed.

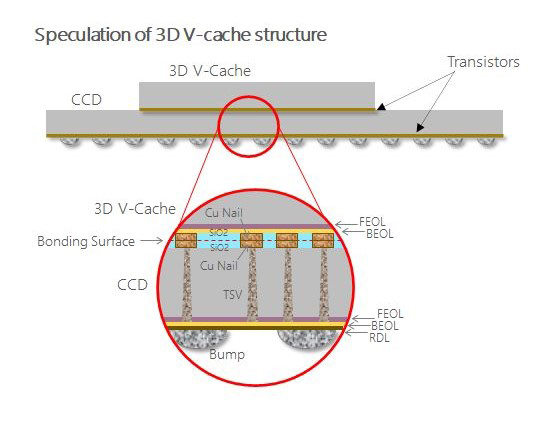

This installation process uses TSVs (through-silicon vias) together with hybrid bonding to attach the second layer of SRAM to the chip. Because the connections use copper instead of solder, the stacked SRAM gets significantly higher bandwidth and better thermal efficiency than it would if the chips were simply soldered together.

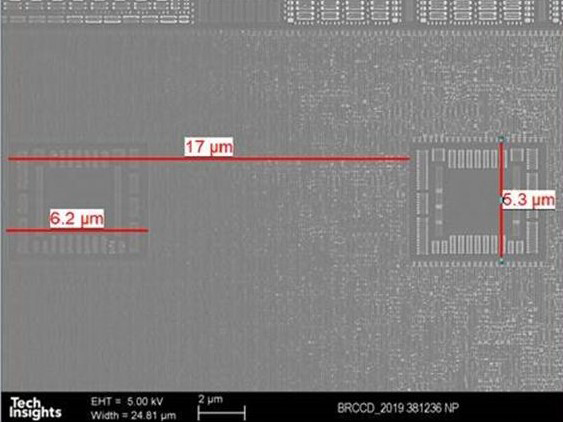

Based on its analysis, TechInsights has reverse-engineered some of the specifics of how the 3D V-Cache will be connected, including the TSV pitch, the empty space inside the CPU for another stack of cache, and more.

- TSV pitch: 17 μm

- KOZ (keep-out zone) size: 6.2 x 5.3 μm

- TSV count (rough estimate): about 23,000

- TSV process position: between M10 and M11 (15 metal layers in total, starting from M0)
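
To put the figures above in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes, purely for illustration, that the TSVs sit on a uniform square grid at the reported pitch. The real layout on the die may well differ, and the function names are hypothetical helpers, not anything from TechInsights or AMD.

```python
# Rough arithmetic on the reported TSV figures.
# ASSUMPTION: TSVs are laid out on a uniform square grid at the reported
# pitch. The actual on-die arrangement is not described in the article.

TSV_PITCH_UM = 17.0    # reported TSV pitch, in micrometers
TSV_COUNT = 23_000     # reported rough estimate of the TSV count
KOZ_UM = (6.2, 5.3)    # reported keep-out zone dimensions, in micrometers


def tsv_density_per_mm2(pitch_um: float) -> float:
    """TSVs per square millimeter if each TSV occupies one pitch x pitch cell."""
    return 1e6 / pitch_um ** 2


def tsv_array_area_mm2(count: int, pitch_um: float) -> float:
    """Total area in mm^2 covered by `count` grid cells of pitch x pitch."""
    return count * pitch_um ** 2 / 1e6


def koz_fraction_of_cell(koz_um: tuple[float, float], pitch_um: float) -> float:
    """Share of one pitch x pitch cell taken up by the keep-out zone."""
    return koz_um[0] * koz_um[1] / pitch_um ** 2


if __name__ == "__main__":
    print(f"Density:  {tsv_density_per_mm2(TSV_PITCH_UM):.0f} TSVs per mm^2")
    print(f"Grid area: {tsv_array_area_mm2(TSV_COUNT, TSV_PITCH_UM):.2f} mm^2")
    print(f"KOZ share: {koz_fraction_of_cell(KOZ_UM, TSV_PITCH_UM):.1%} of a cell")
```

Under that grid assumption, 23,000 TSVs at a 17 μm pitch would cover roughly 6.6 mm² in total, and each keep-out zone would take up a little over a tenth of its grid cell.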

This all indicates that AMD has been planning to implement 3D V-Cache for quite some time, so it makes sense that the company can release updated Zen 3 parts with significantly more cache later this year. The foundation for the technology was evidently laid several years ago.

It's logical to expect AMD to use 3D V-Cache with its future architectures, too, like the new Zen 4 architecture that is around the corner. 3D V-Cache will give AMD CPUs a huge advantage over Intel in terms of raw L3 cache sizes (at least for now), which are becoming more and more important as CPU core counts increase.


Aaron Klotz is a contributing writer for Tom’s Hardware, covering news related to computer hardware such as CPUs and graphics cards.


thisisaname

Krotow said: Want to see how those tiny gnomes with copper nails and hammers look.

I would assume that the GPUs use tiny dwarfs with picks, which is why they are so good at mining? ;)

JayNor

"demonstrating incredible performance gains..."

really? let's see your numbers.

btw, there was an AMD article on Tom's Hardware in March 2019: "Ryzen Up: AMD to 3D Stack DRAM and SRAM on Processors"

Gillerer

JayNor said: "demonstrating incredible performance gains..." really? let's see your numbers.

The sentence was in reference to AMD demonstrating the design in their Computex 2021 keynote in May (with a 5900X + V-Cache). AMD did have some +% numbers.

Howardohyea is it just me or does AMD really likes caches?Reply

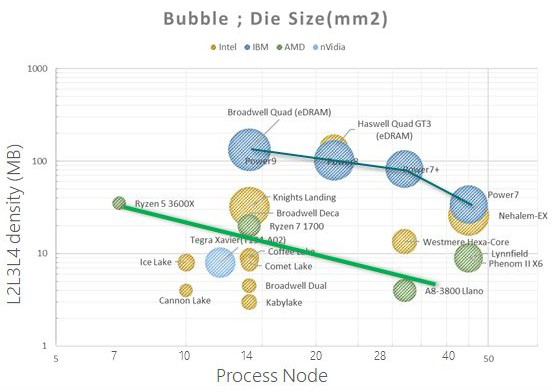

The i9 10980XE only uses 24.75 MB of L3 cache while AMD's 3950X and 5950X is more than double at 64MB. What's the reason behind a smaller cache in Intel or will they see the same jump in performance with more cache? -

MasterMadBones

Howardohyea said: Is it just me or does AMD really like caches? The i9 10980XE only uses 24.75 MB of L3 cache, while AMD's 3950X and 5950X have more than double that at 64MB. What's the reason behind the smaller cache on Intel, or would they see the same jump in performance with more cache?

Both AMD and Intel architectures like to have more cache. Intel has larger cores, which leaves less die space for lots of cache. On top of that, Intel still uses a monolithic design, which means their total die area budget is lower for the same yield.

Architecturally, AMD does theoretically benefit slightly more from more cache due to the higher memory latency, but Intel's L3 cache is a little more sophisticated in the way it works.

Conahl

JayNor said: "demonstrating incredible performance gains..." really? let's see your numbers.

DOTA2 (Vulkan): +18%

Gears 5 (DX12): +12%

Monster Hunter World (DX11): +25%

League of Legends (DX11): +4%

Fortnite (DX12): +17%

From AnandTech. Not sure if those count as incredible performance gains, but still pretty decent.

JayNor

The numbers presented by Anandtech are from AMD's claims for FPS boost while running games... 15% overall, on average while using an unnamed graphics card.

Projecting a node jump 15% overall processor performance update from this is a stretch.

Conahl

JayNor said: Projecting a node jump 15% overall processor performance update from this is a stretch.

Considering AMD's track record with Zen from the start, and the fact that the performance gains they have stated over previous gens turned out to be pretty accurate, it's probably safe to believe them.

BFG-9000

Howardohyea said: Is it just me or does AMD really like caches?

It's the one place they can really stick it to Intel where it hurts.

It used to be that AMD was always a process node behind Intel, so they had to have less cache, because it was more expensive in die area for them. Well, now the shoe is on the other foot and they should take advantage of it while they can. It wasn't that long ago that mainstream Intel quad-cores had 12MB of L2, triple the total of contemporary AMD chips with only 2MB of L2 plus another 2MB of L3.

More cache improves hit rate, which improves performance if latency isn't also much worse. I had expected by now that they would've added a chiplet for a simply huge L4 with only around half the latency of RAM, like how Intel had the Crystal Well eDRAM as a separate chip on the CPU package. Wasn't 128MB of L4 large for 2013? I mean, the chips had 6MB of L3 too.