Apple, AR, And The 2017 iPhone Lineup

The next generation of iPhones (the iPhone 8, iPhone 8 Plus, and iPhone X) features the usual iterative improvements, such as better displays, sleek designs, and fancy materials, including higher-strength glass and surgical-grade steel. More importantly, we finally got a further glimpse into what Apple is trying to do in AR.

Even so, questions linger.

Apple hasn’t yet joined the VR revolution (perhaps it never will), but the company recently jumped into the augmented reality market with a software development kit called ARKit that enables AR experiences on existing iOS devices. With the new iPhones, those experiences should be even stronger, thanks to the new A11 Bionic SoC, which the company designed to handle the workload of advanced AR experiences. Apple also said that it tuned the ARKit software for the new iPhone line.

Apple’s A11 chip includes a six-core CPU with two high-performance cores and four high-efficiency cores, a three-core graphics processor, and an integrated ISP (image signal processor). Apple said the CPU processes world tracking data, while the GPU delivers high-resolution graphics at 60fps. Apple uses the ISP to run real-time lighting estimations, which allow the digital content to blend in with the lighting of the real-world environment. This is a similar approach to the one Qualcomm has taken with the Snapdragon 835--that is, bake all the good stuff into the SoC and leverage the ISP, as opposed to relying on a vision processor or, in the case of Tango phones, another camera/sensor assembly.

Overall, Apple claimed that the A11 Bionic chip offers “70 percent greater performance in multi-threaded workloads” and “30 percent faster graphics performance” than the A10 SoC from the previous-generation iPhone.

The upcoming iPhone lineup also includes new gyros and accelerometers to help provide more accurate movement and orientation calibration than the previous-generation iPhones. Further, Apple said the new iPhones offer a “huge difference in performance for AR” by ensuring that the cameras are calibrated for AR from the factory, although the company didn’t explain what exactly that process entails.

Apple also improved the iPhone’s audio system to better support immersive media. The new iPhones feature stereo speakers--one near the top and one near the bottom--and they support spatial audio, so that sound in augmented experiences adapts to where you are in the environment and the direction you’re facing in relation to the audio source. The spatial audio algorithms even account for sound occlusion from solid objects.
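To make the idea concrete, here is a minimal Python sketch of how a spatial audio system could derive per-speaker loudness from the listener's position and facing direction. It is not Apple's algorithm, which the company did not detail. The function name `stereo_gains`, the inverse-distance rolloff, and the constant-power pan law are all assumptions chosen for illustration, and the sketch ignores occlusion entirely.

```python
import math

def stereo_gains(listener_xy, heading_rad, source_xy, min_distance=1.0):
    """Return (left_gain, right_gain) for a sound source on a 2D plane.

    Hypothetical helper: heading_rad is the direction the listener faces,
    measured counterclockwise from the +x axis.
    """
    dx = source_xy[0] - listener_xy[0]
    dy = source_xy[1] - listener_xy[1]
    distance = math.hypot(dx, dy)

    # Assumed inverse-distance rolloff, clamped so that very close
    # sources do not produce gains above 1.
    attenuation = min_distance / max(distance, min_distance)

    # Angle of the source relative to the facing direction, wrapped to
    # (-pi, pi]. Positive values mean the source is to the listener's left.
    relative = math.atan2(dy, dx) - heading_rad
    relative = math.atan2(math.sin(relative), math.cos(relative))

    # Pan position: -1 is fully left, +1 is fully right.
    pan = -math.sin(relative)

    # Constant-power pan law: left^2 + right^2 stays equal to attenuation^2.
    theta = (pan + 1.0) * math.pi / 4.0
    return attenuation * math.cos(theta), attenuation * math.sin(theta)
```

With the listener at the origin facing along +x, a source two units to the left yields almost all of its (halved) level in the left channel. Turning the listener to face the same source centers it between the two channels. A simple model like this cannot tell front from back, which is one reason real systems are more involved.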

Apple’s new iPhone lineup includes three models with varying features. The iPhone 8 offers a 4.7” Retina display and a single 12MP rear-facing camera. The larger iPhone 8 Plus offers a 5.5” Retina display and dual 12MP rear-facing cameras--one with a wide-angle lens and one with a telephoto lens. The iPhone X also includes dual 12MP rear-facing cameras, along with a 5.8” OLED “Super Retina Display” that offers 2436 x 1125 pixels (458 ppi) and support for HDR color. All three phones offer optical image stabilization.
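The iPhone X pixel density can be sanity-checked from the quoted resolution and diagonal. The short Python sketch below does that arithmetic; the function name `pixels_per_inch` is our own, and the calculation assumes square pixels and a plain rectangular panel.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density from a panel's resolution and its diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in

# Figures quoted for the iPhone X display.
nominal_ppi = pixels_per_inch(2436, 1125, 5.8)

# Working backward: the diagonal that would give exactly 458 ppi.
implied_diagonal = math.hypot(2436, 1125) / 458

print(f"{nominal_ppi:.1f} ppi at a nominal 5.8 inches")
print(f"{implied_diagonal:.2f} inch diagonal implied by 458 ppi")
```

The nominal 5.8” figure works out to roughly 463 ppi, slightly above the quoted 458 ppi. That gap suggests the advertised diagonal is rounded down from something closer to 5.86”, although that reading is our inference and not something Apple stated.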

Apple didn’t explain how the dual camera system benefits its AR technology. The system lets the iPhone 8 Plus and iPhone X separate the subject from the background while taking photos and shooting video, which enables advanced lighting features. We imagine it would also enable superior depth perception, object tracking, and tracking fidelity in AR apps.

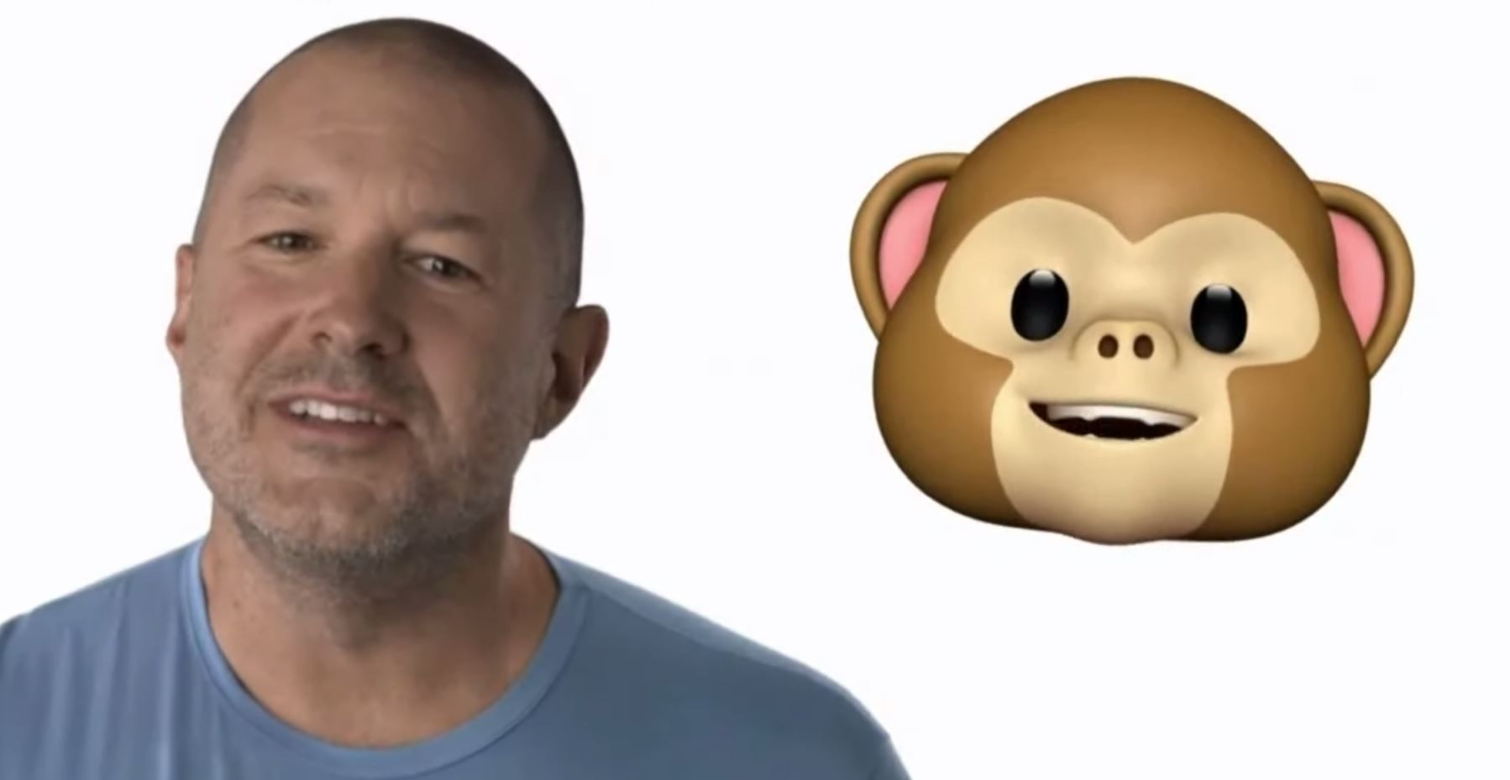

The iPhone X also offers facial recognition technology called Face ID, which lets you unlock your phone with a glance. It relies on the front-facing TrueDepth camera system, which includes a standard front camera, a flood illuminator for low-light situations, a dot projector that shines 30,000 invisible dots on your face, and an infrared camera to detect the dots. The data that the TrueDepth camera collects helps to build a mathematical model of your face that is stored on the device in a “secure enclave.” Apple said it won’t store your “face data” on a server. The A11 Bionic chip also includes a dual-core neural engine that performs up to 600 billion machine learning calculations per second to enable real-time facial identification.
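For a sense of scale, the neural engine's headline figure can be converted into a per-frame budget. The Python sketch below is back-of-the-envelope arithmetic only. Apple did not say at what rate the facial identification pipeline runs, so the 30fps and 60fps rates are assumptions (the latter borrowed from Apple's GPU claim), and `ops_per_frame` is a hypothetical helper.

```python
NEURAL_ENGINE_OPS_PER_SECOND = 600e9  # Apple's stated peak figure

def ops_per_frame(ops_per_second, frames_per_second):
    """Peak operations available per frame at a given frame rate."""
    return ops_per_second / frames_per_second

# Assumed frame rates; Apple did not specify one for face tracking.
for fps in (30, 60):
    budget = ops_per_frame(NEURAL_ENGINE_OPS_PER_SECOND, fps)
    print(f"{fps} fps: up to {budget / 1e9:.0f} billion operations per frame")
```

Under those assumptions, the neural engine would have a peak of 20 billion operations per frame at 30fps, or 10 billion at 60fps.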

That same technology can also be used to bring facial expressions into the virtual world. Apple created a feature called Animoji, which applies your real facial expressions to animated emoji. Apple didn’t say that the expression tracking technology could be used for other AR experiences, but we expect that we’ll see a variation of the tech used in games and apps in the future. We also expect to see eye tracking technology make its way to Apple's devices.

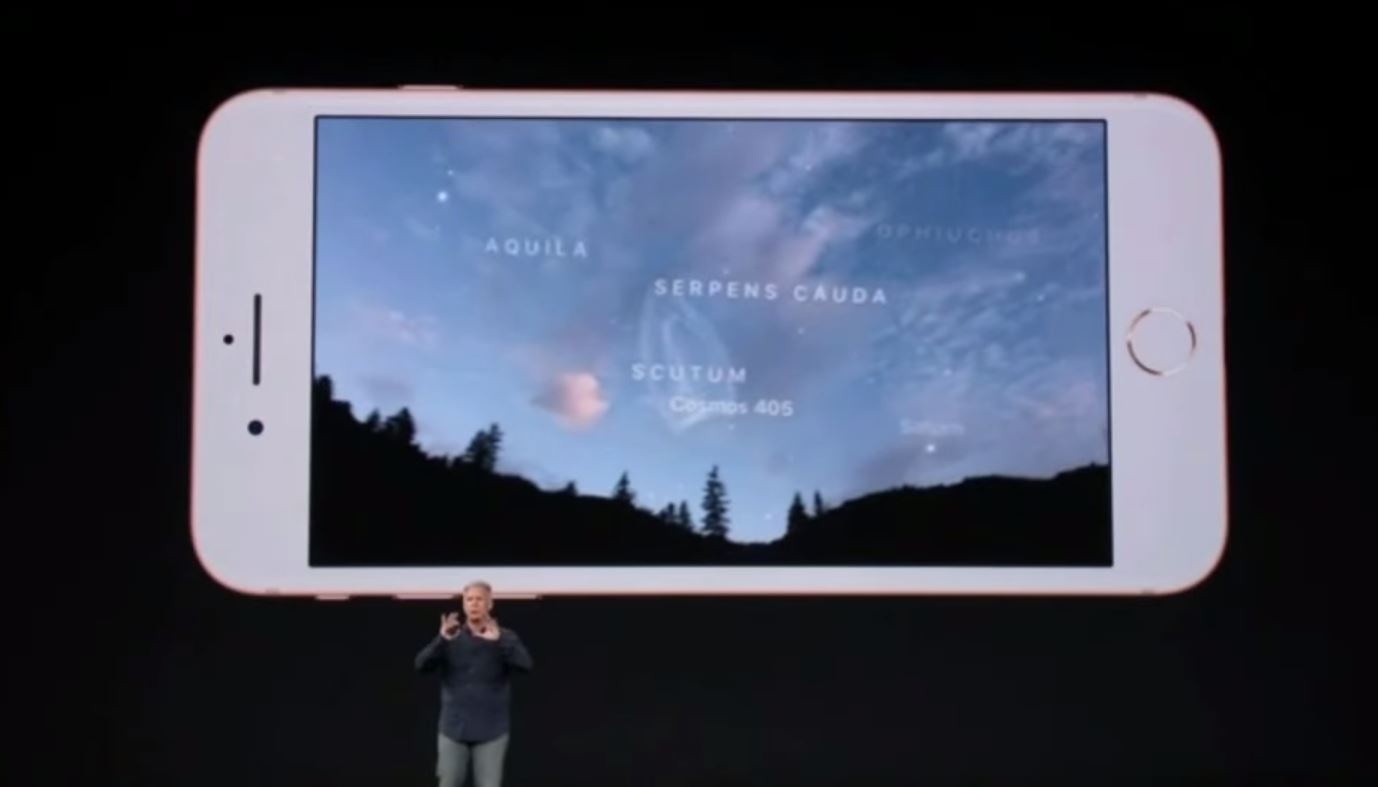

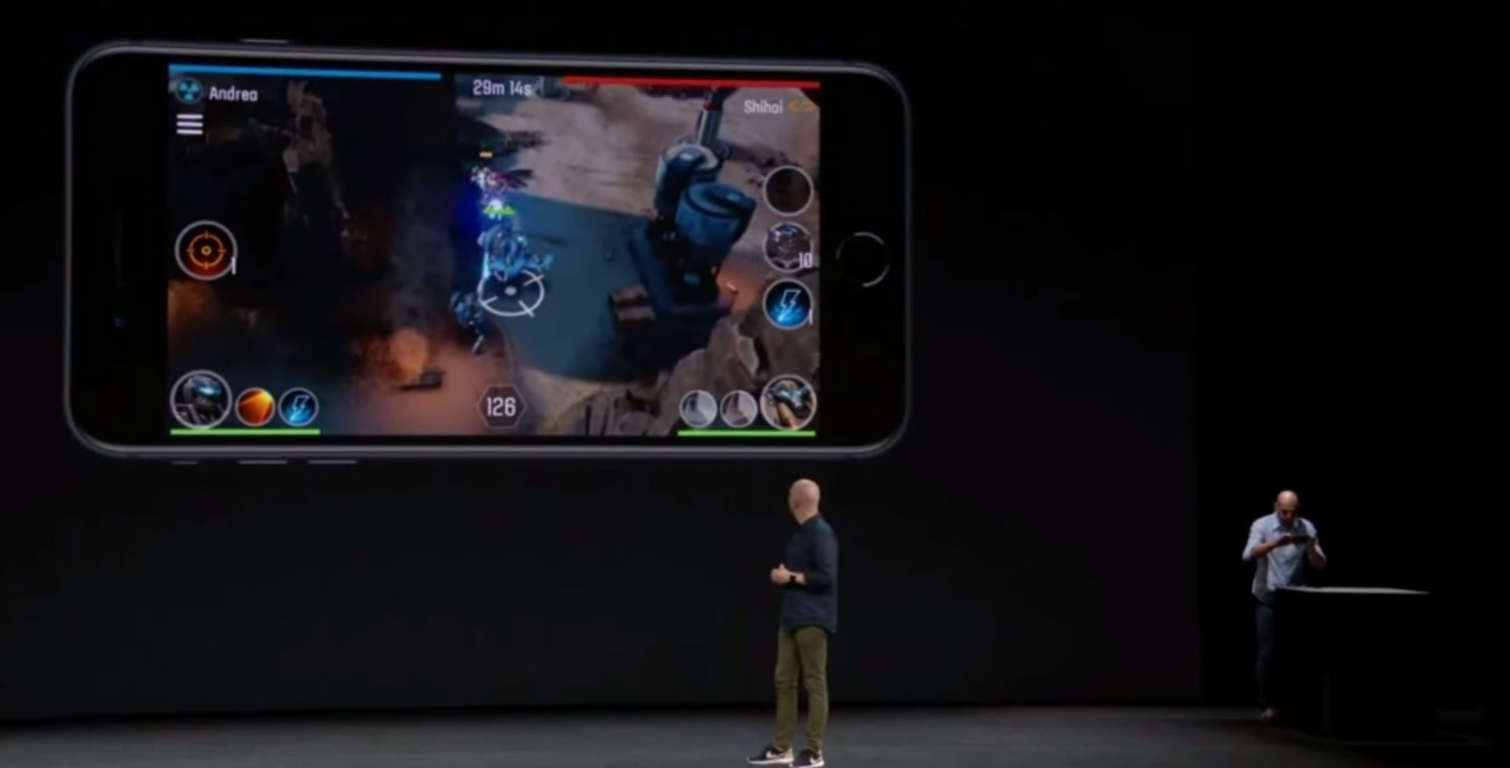

Apple said that a handful of AR apps that take advantage of the new iPhones’ features would be available this month, including a tabletop-style augmented reality strategy game called The Machines that lets you turn any flat surface into a battleground. Apple also demonstrated an app that MLB is developing that would let spectators see the statistics of the players on the field, as well as other pertinent information. And an app called Skyguide places a map of the stars over the sky so you can learn more about the firmament you see above.

Notably absent from Apple’s presentation was anything about smart glasses or an HMD of any kind that one could pair with the new iPhones and make more use of their AR capabilities. In that sense, the new iPhones will suffer the same issues that plague any AR-enabled smartphone that lacks an accompanying HMD: a sharply limited field of view (i.e., the smartphone’s display) and a clunky interface (again, the smartphone’s display and your fingers).

Apple said that the iPhone 8 and iPhone 8 Plus would be available for pre-order on Friday, September 15, and that the new phones would ship the following week. The iPhone X is a little further out--Apple will accept pre-orders on October 27 and ship the device on November 3.

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.

nitrium: Another yawner from Apple. Incremental upgrades to at best level peg with existing Android phones (with the possible exception of its CPU/GPU).

Remember this phone from the same time LAST YEAR? http://www.gsmarena.com/xiaomi_mi_mix-8400.php

kdw75: Apple doesn't need to compete with the hardware Android has. People buy Apple for the OS, the hardware is secondary. If Samsung made a phone that ran iOS then....

hannibal: ... So true, the iOs is the Main reason for big success! And ofcourse very easy communication/co-operation between different iDevices.

And the new phone is not bad at all! Many other devices has some of these features, but not so Many have all of the in one package.

AnimeMania: The perfect phone for cheating boyfriends! When she looks at your phone, she sees the normal texts and phone numbers, but when you look at the phone it unlocks the hidden texts and phone numbers.

youngevans: Did anyone notice that the face unlocking didn't work when the man was demonstrating under the show? He had to put down the phone and pick another to try again. Its a shame for an anniversary phone

sasuke79: iphone X specification is really awesome but the price is shocking. I hope they will lower the price since it's their 10th year anniversary...