Intel Unveils Cascade Lake, In-Silicon Spectre And Meltdown Mitigations

CUPERTINO, CALIF. -- Intel officially announced its Cascade Lake processors at its Data-Centric Summit earlier this month but provided very few details. Intel shared more information about its next data center chip here at Hot Chips 2018, with new in-silicon patches for Spectre and Meltdown and support for Optane Persistent Memory among the key developments. Similar in-silicon fixes will worm their way into processors for the desktop PC later this year, but Intel hasn't provided specific information about which processors will have the mitigations.

Intel isn't releasing further details on the processors, such as actual SKUs, specifications, or pricing, but Cascade Lake is coming to the broader market later this year. We expect a formal launch soon.

The new processors will soon contend with AMD's Rome EPYC processors fabbed on TSMC's 7nm process. Intel also has its Cooper Lake lineup waiting in the wings for 2019, but those models will come with yet another iteration of the 14nm process. The Ice Lake processors will come packing the 10nm process in 2020.

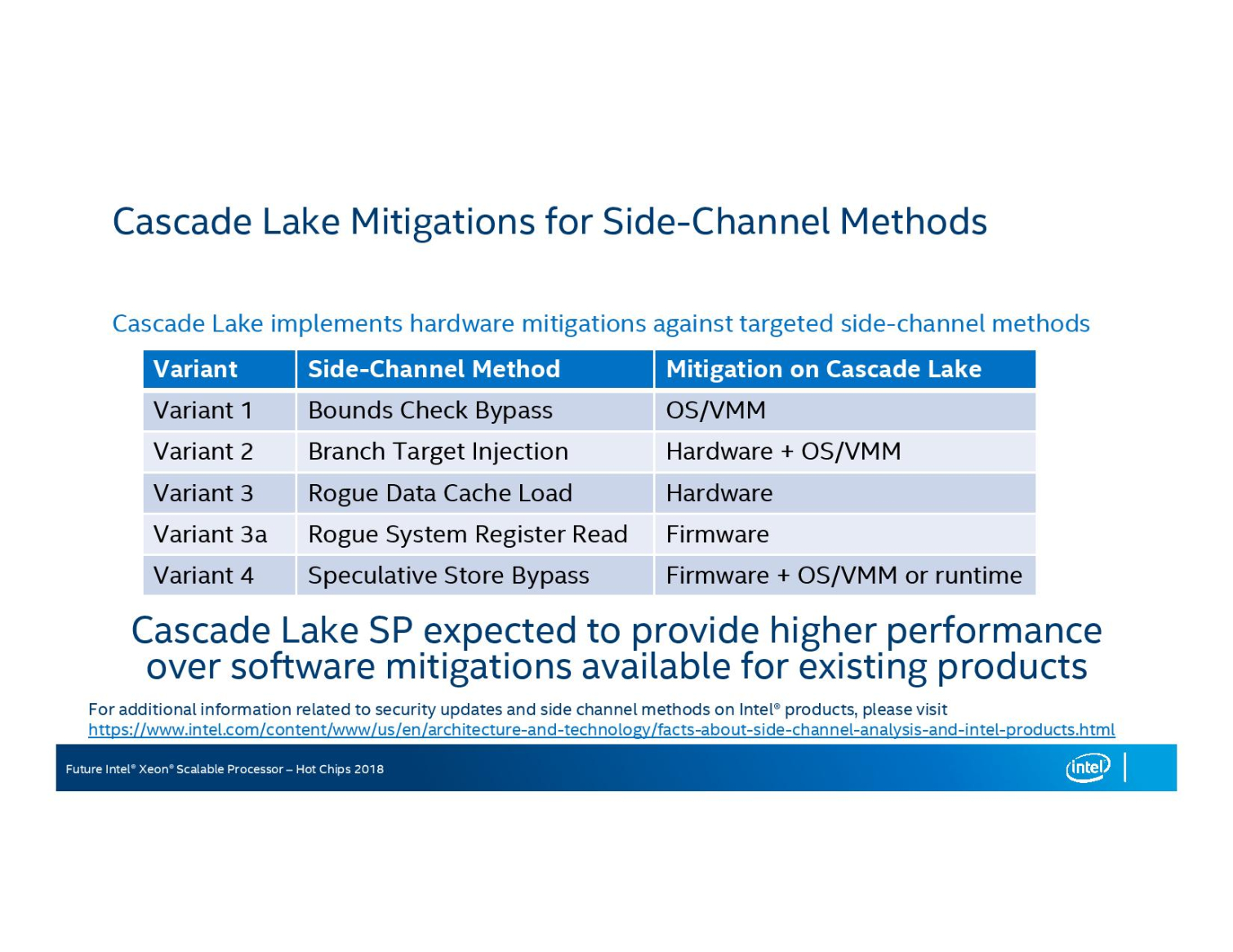

Cascade Lake represents Intel's first processors with in-silicon mitigations for the notorious Meltdown and Spectre vulnerabilities. The existing patches result in reduced performance that varies based on workload, but newer mitigations baked directly into the processors should help reduce the impact. Intel's single slide outlining the mitigations reveals it will still use a combination of firmware and software mitigations for some of the vulnerabilities. Intel will continue to use operating system (OS) and virtual machine manager (VMM) patches to tackle Variant 1, which is one of two flavors of Spectre. Cascade Lake addresses Spectre Variant 2 with a combination of in-silicon fixes and OS/VMM patches. Variant 3a and Variant 4 are newer vulnerabilities that will continue to require firmware and OS/VMM patches.

Variant 3, otherwise known as Meltdown, is the only vulnerability addressed entirely in silicon. The performance overhead of the existing combination of Spectre and Meltdown patches can range as high as 10% for some enterprise workloads, with storage accesses suffering even larger losses under some scenarios. These projections are also based on newer hardware, while older processors can suffer even larger performance penalties.
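The per-variant breakdown above can be condensed into a small lookup table. The following is only an illustrative sketch of what the article describes from Intel's slide: the layer names (`hardware`, `firmware`, `os_vmm`) and the helper functions are our own hypothetical labels, and Variant 3a and Variant 4 are grouped exactly as the text groups them.

```python
# Mitigation layers per variant, as described in the article.
# Layer names are illustrative labels, not Intel terminology.
MITIGATIONS = {
    "Variant 1 (Spectre)": {"os_vmm"},
    "Variant 2 (Spectre)": {"hardware", "os_vmm"},
    "Variant 3 (Meltdown)": {"hardware"},
    # The article groups 3a and 4 together as needing firmware and OS/VMM patches.
    "Variant 3a": {"firmware", "os_vmm"},
    "Variant 4": {"firmware", "os_vmm"},
}


def fully_in_silicon(table):
    """Return the variants whose only listed mitigation layer is hardware."""
    return sorted(name for name, layers in table.items() if layers == {"hardware"})


def needs_patches(table):
    """Return the variants that still rely on firmware or OS/VMM patches."""
    patch_layers = {"firmware", "os_vmm"}
    return sorted(name for name, layers in table.items() if layers & patch_layers)


if __name__ == "__main__":
    print("In silicon only:", fully_in_silicon(MITIGATIONS))
    print("Still patched:  ", needs_patches(MITIGATIONS))
```

Laid out this way, four of the five listed variants still depend on some form of firmware or software patch.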

Intel's continued reliance on OS-based patches, either alone or in combination with hardware fixes, for Variants 1 and 2 means the in-silicon patches might not completely remove the performance penalties. We predicted that Cascade Lake would spur a healthy upgrade cycle, not because users are interested in security (they already have that through firmware and software patches), but due to improved performance from alleviating security-based overhead. The limited scope of the hardware patches may reduce the impetus for data centers to upgrade ahead of a normal refresh cycle.

Regardless, the new in-silicon mitigations may help to address future vulnerabilities, as new variants based on the same techniques used in Spectre and Meltdown continue to pop up on a regular basis. Intel isn't providing any detail on the exact nature of the changes to the microarchitecture, and likely for a good reason. Like the rest of the industry, Intel is playing a game of cat and mouse with security researchers and malicious actors that range from nation-states to garden-variety hackers, so sharing too much information about the fixes wouldn't be wise.


The limited scope of the in-silicon patches reminds us that Intel, like the many other companies impacted by these vulnerabilities, is still in the early stages of addressing the issues.

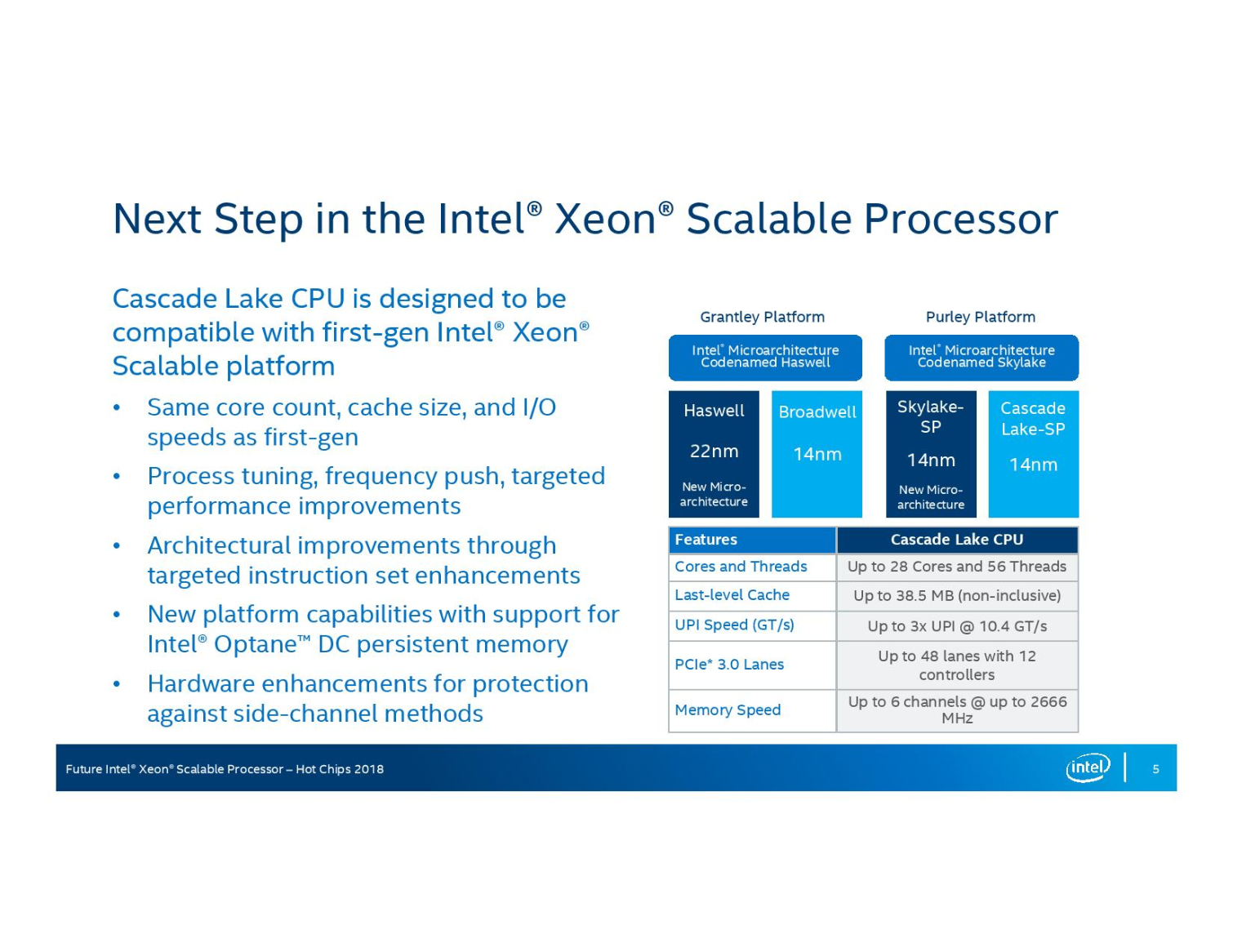

Cascade Lake features many of the same fundamental design elements as the existing Xeon Scalable lineup, like a 28-core ceiling, up to 38.5 MB of L3 cache, the new UPI (Ultra Path Interconnect), up to six memory channels, AVX-512 support, and up to 48 PCIe lanes. And yes, the new processors drop into the same socket as the existing generation.

Intel's most notable advancement comes on the process front. The company has moved forward to the 14nm++ process. Intel says the updated process allowed it to improve frequencies and power consumption and to make targeted improvements to critical speed paths on the die.

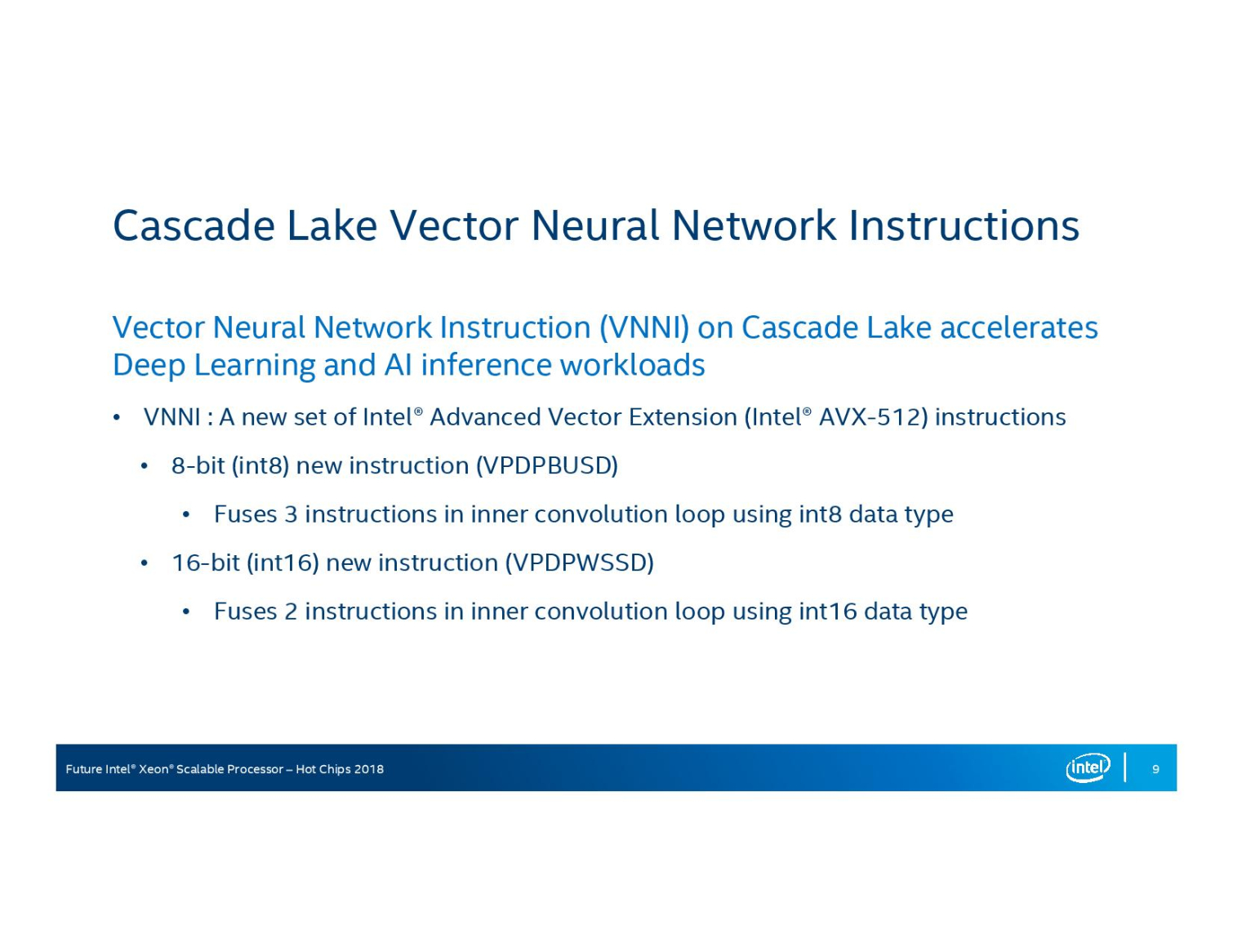

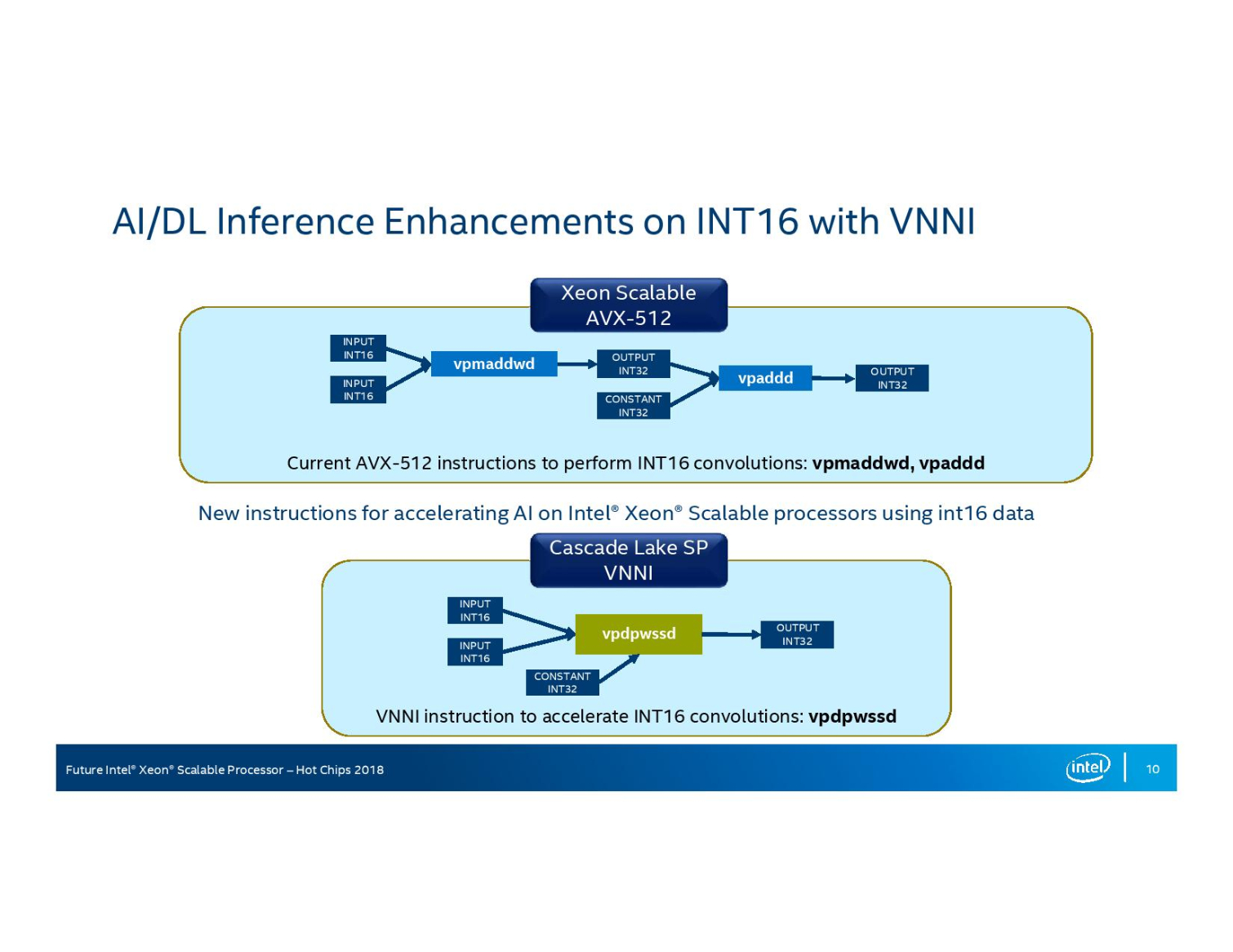

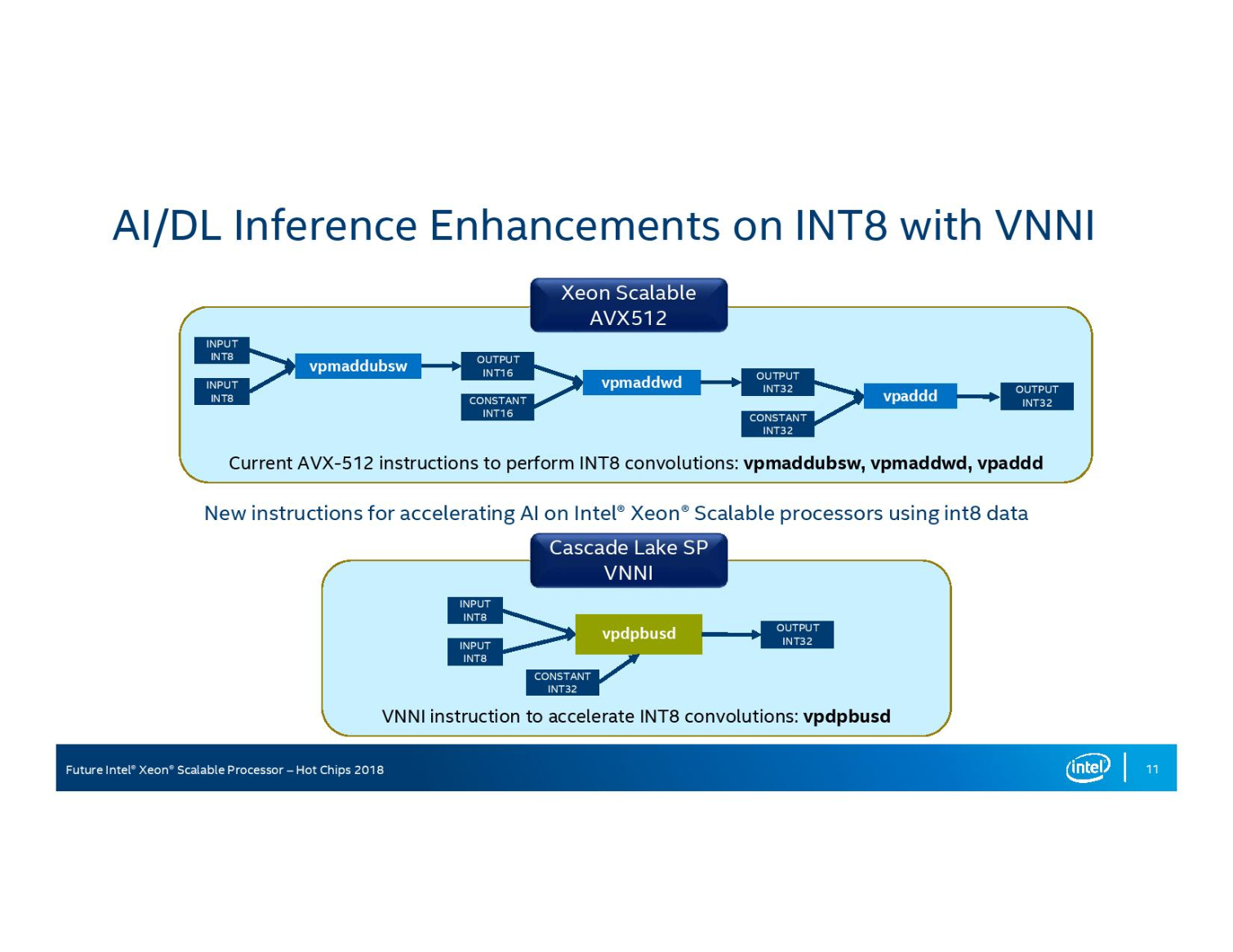

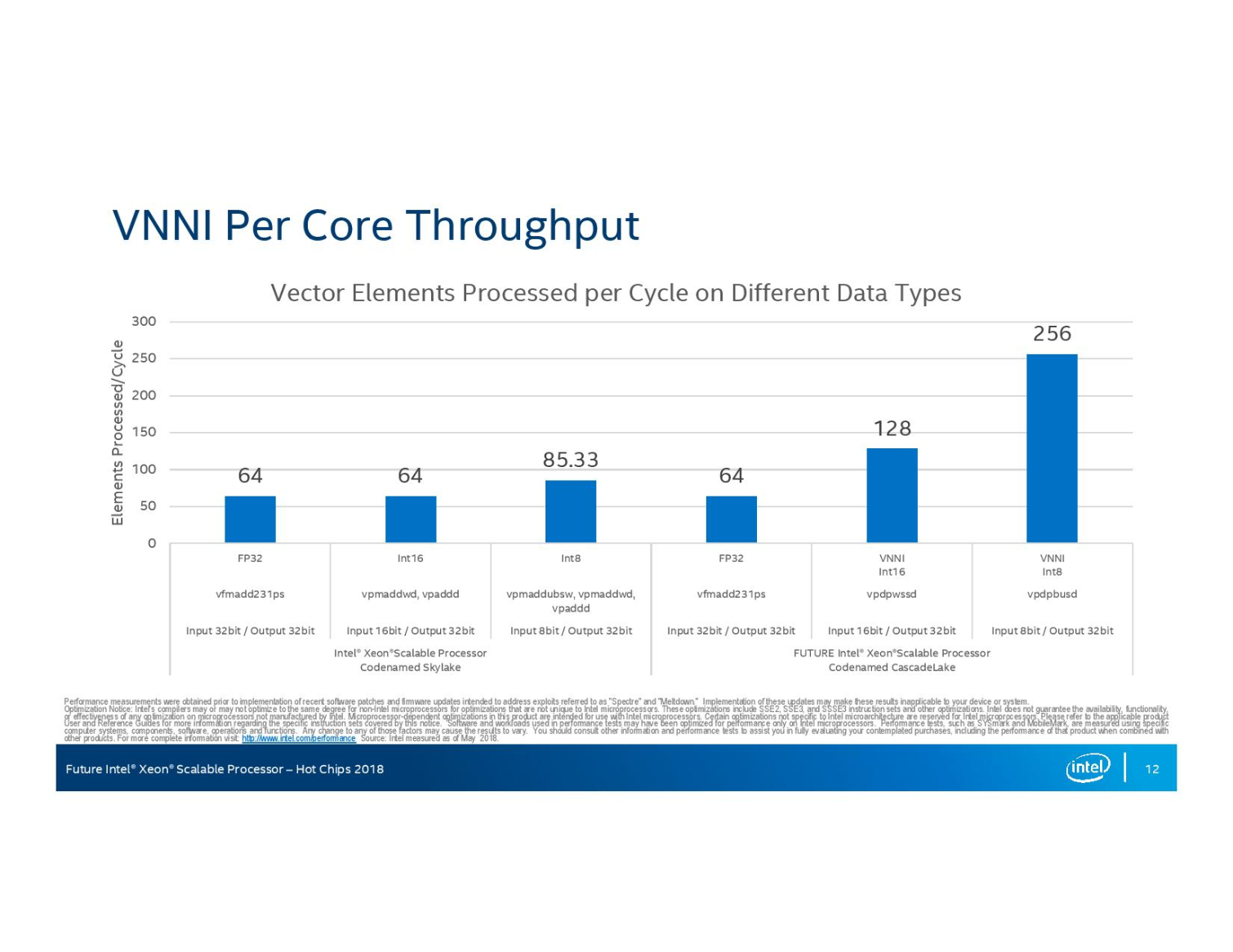

Intel also added support for the new VNNI (Vector Neural Network Instructions) extension, which optimizes operations on the smaller data types commonly used in machine learning and inference. VNNI fuses three instructions together to boost int8 (VPDPBUSD) performance and fuses two instructions to boost int16 (VPDPWSSD) performance. These AVX-512 instructions will still operate within the normal AVX-512 voltage/frequency curve during the operations.
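To make the int8 fusion concrete, here is a minimal Python emulation of what VPDPBUSD computes in each 32-bit lane: four unsigned 8-bit values are multiplied by four signed 8-bit values, and the products are summed into a signed 32-bit accumulator. It models the non-saturating form, which wraps on overflow. The function names are our own, and this is a sketch of the arithmetic only, not of how the hardware implements it. The three-instruction sequence it is generally described as replacing is VPMADDUBSW, VPMADDWD, and VPADDD.

```python
def to_signed32(x):
    """Wrap an arbitrary Python int to a signed 32-bit value."""
    x &= 0xFFFFFFFF
    return x - (1 << 32) if x & 0x80000000 else x


def vpdpbusd_lane(acc, a_u8, b_s8):
    """Emulate one 32-bit lane: acc + sum of four (unsigned byte * signed byte)."""
    assert len(a_u8) == 4 and len(b_s8) == 4
    assert all(0 <= a <= 255 for a in a_u8), "first operand is unsigned 8-bit"
    assert all(-128 <= b <= 127 for b in b_s8), "second operand is signed 8-bit"
    total = acc + sum(a * b for a, b in zip(a_u8, b_s8))
    return to_signed32(total)


def vpdpbusd(acc, a_u8, b_s8):
    """Emulate a full vector: one accumulator lane per group of four bytes."""
    assert len(a_u8) == len(b_s8) == 4 * len(acc)
    return [
        vpdpbusd_lane(acc[i], a_u8[4 * i:4 * i + 4], b_s8[4 * i:4 * i + 4])
        for i in range(len(acc))
    ]


if __name__ == "__main__":
    # 1*5 + 2*(-6) + 3*7 + 4*(-8) = -18, added to an accumulator of 10.
    print(vpdpbusd_lane(10, [1, 2, 3, 4], [5, -6, 7, -8]))
```

A 512-bit register holds 16 such lanes, so a single instruction covers 64 byte-wise multiplies and their accumulation.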

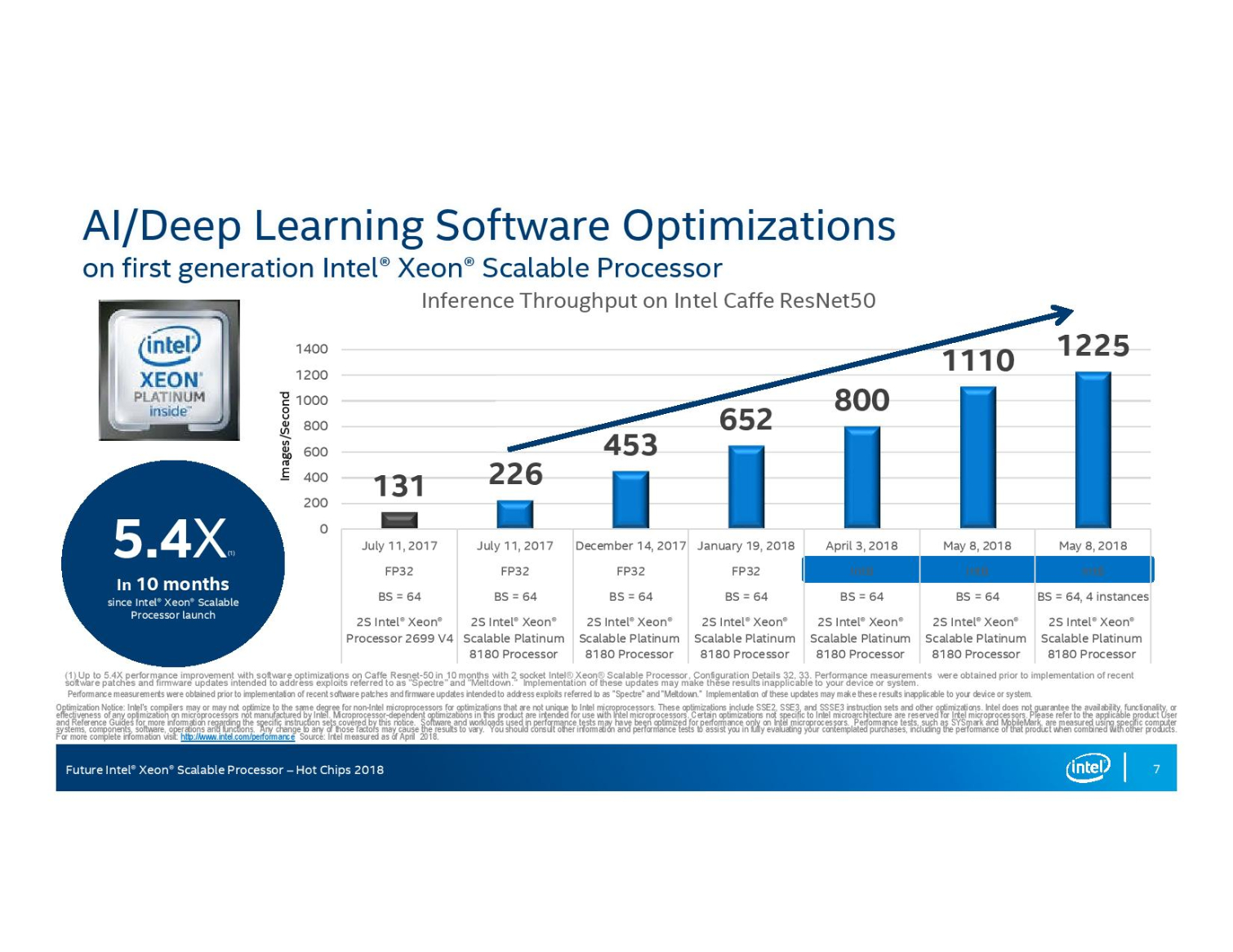

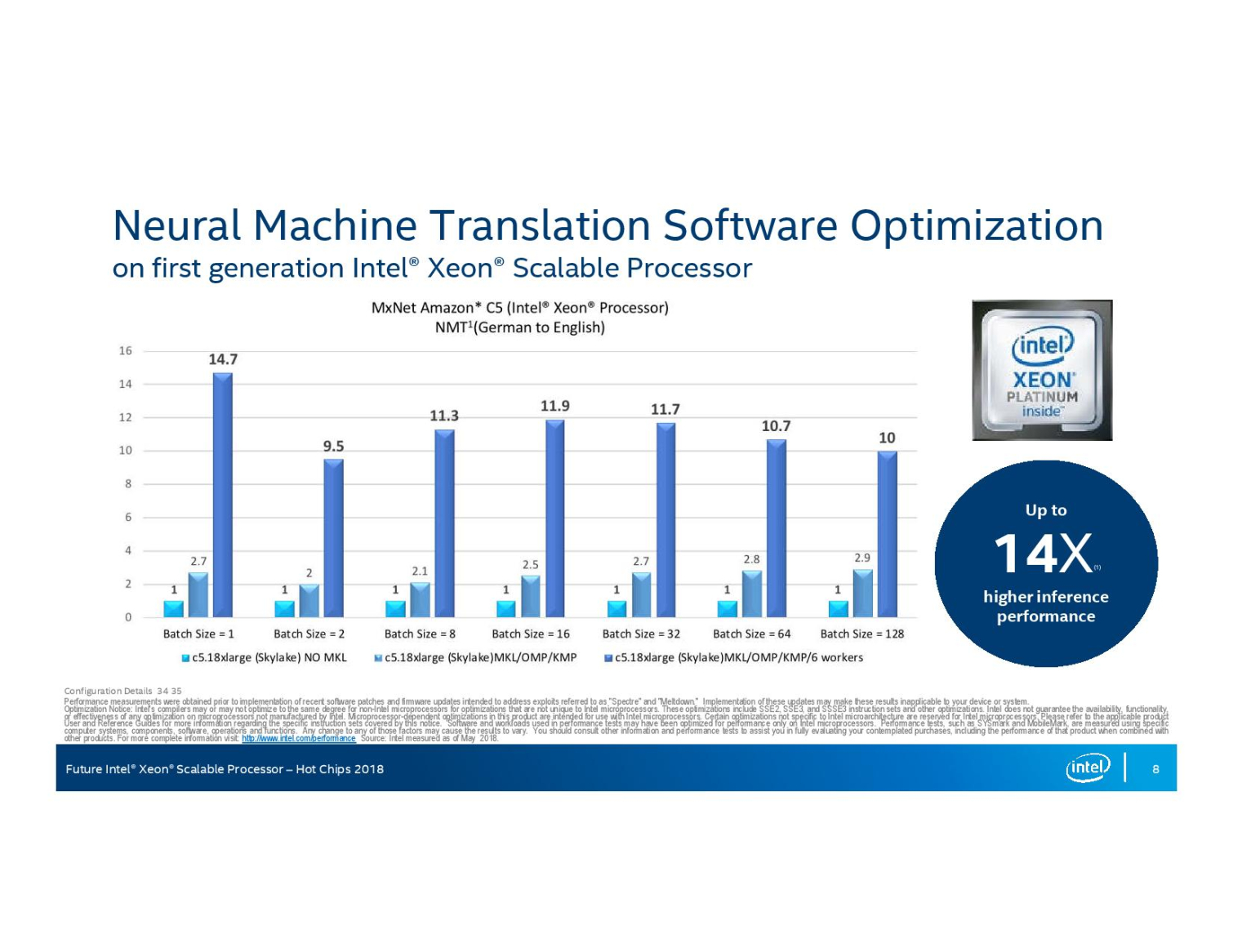

The instructions provide a strong per-core performance boost that exhibits the expected doubling and tripling relative to 32-bit floating point performance. Intel has been on a steady march on the deep learning front, which is important given its claims that more inference workloads run on Xeon than any other type of processor. The company claims it has improved Caffe ResNet50 performance by 5.4X with the existing Xeon Scalable processors through targeted refinements to software libraries and frameworks. The company predicts that the addition of VNNI instructions will boost the net gains to as much as 14X with Cascade Lake.
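As a rough back-of-the-envelope check, the two figures Intel quotes imply how much of the projected gain would come from Cascade Lake itself. This sketch assumes both numbers are measured against the same baseline and that the gains multiply, which Intel's slides may or may not intend. The function name is hypothetical.

```python
def implied_additional_factor(existing_gain, projected_total_gain):
    """Factor still needed on top of existing_gain to reach projected_total_gain."""
    return projected_total_gain / existing_gain


if __name__ == "__main__":
    # 5.4X from software tuning on current Xeon Scalable, up to 14X projected.
    factor = implied_additional_factor(5.4, 14.0)
    print(f"Implied additional gain: about {factor:.2f}x")
```

Under those assumptions, the projection works out to roughly 2.6X on top of the software gains, which sits between the doubling and tripling described above.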

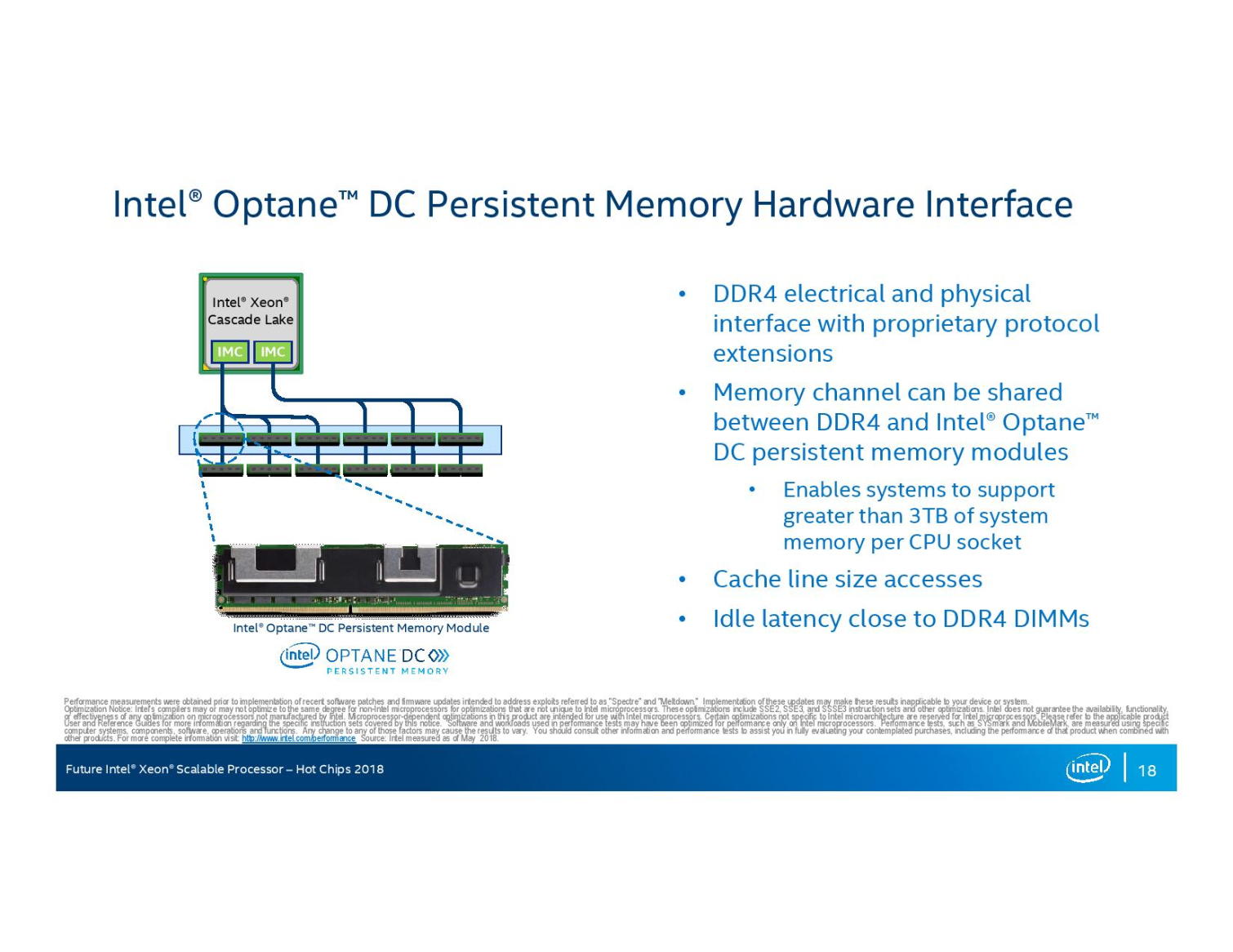

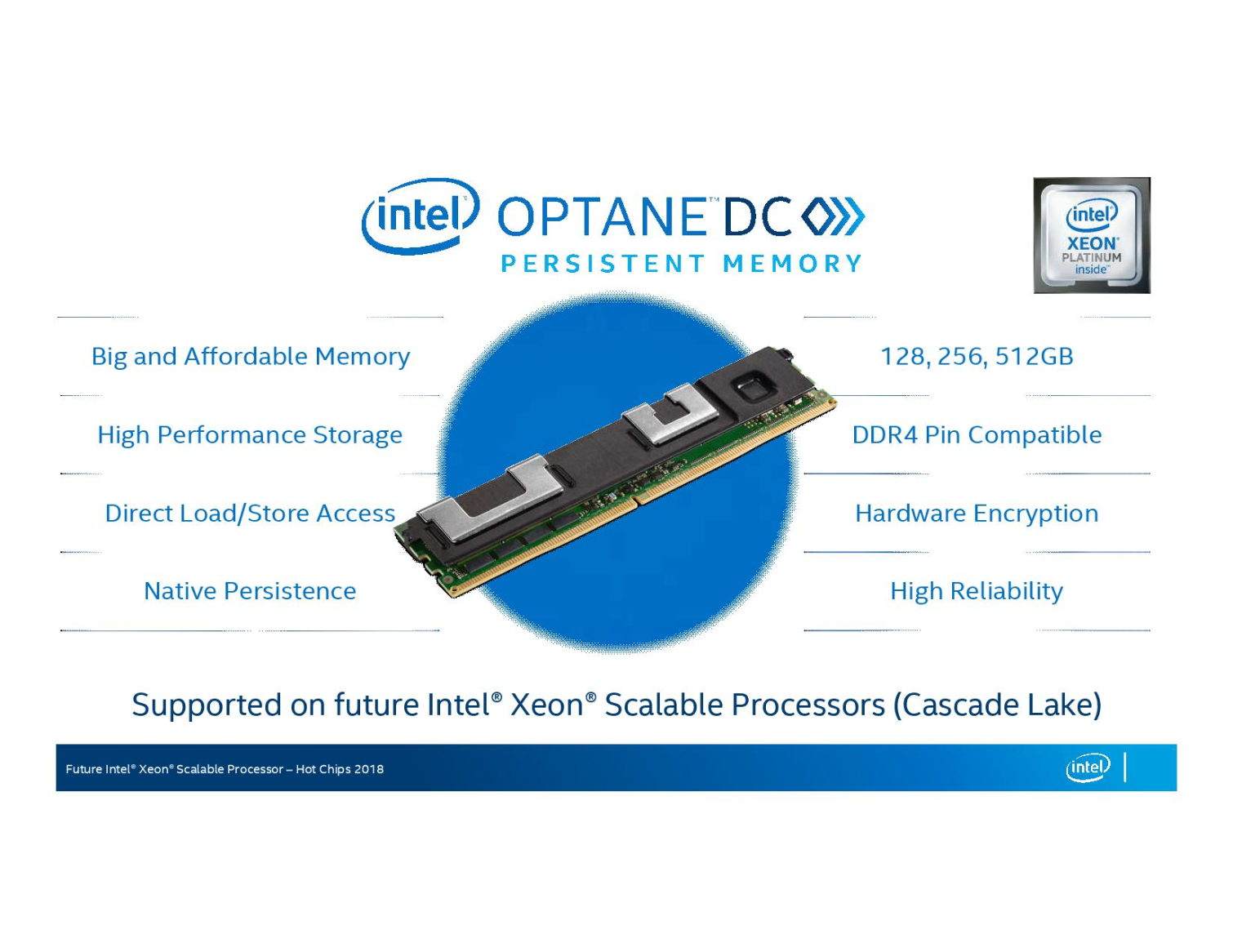

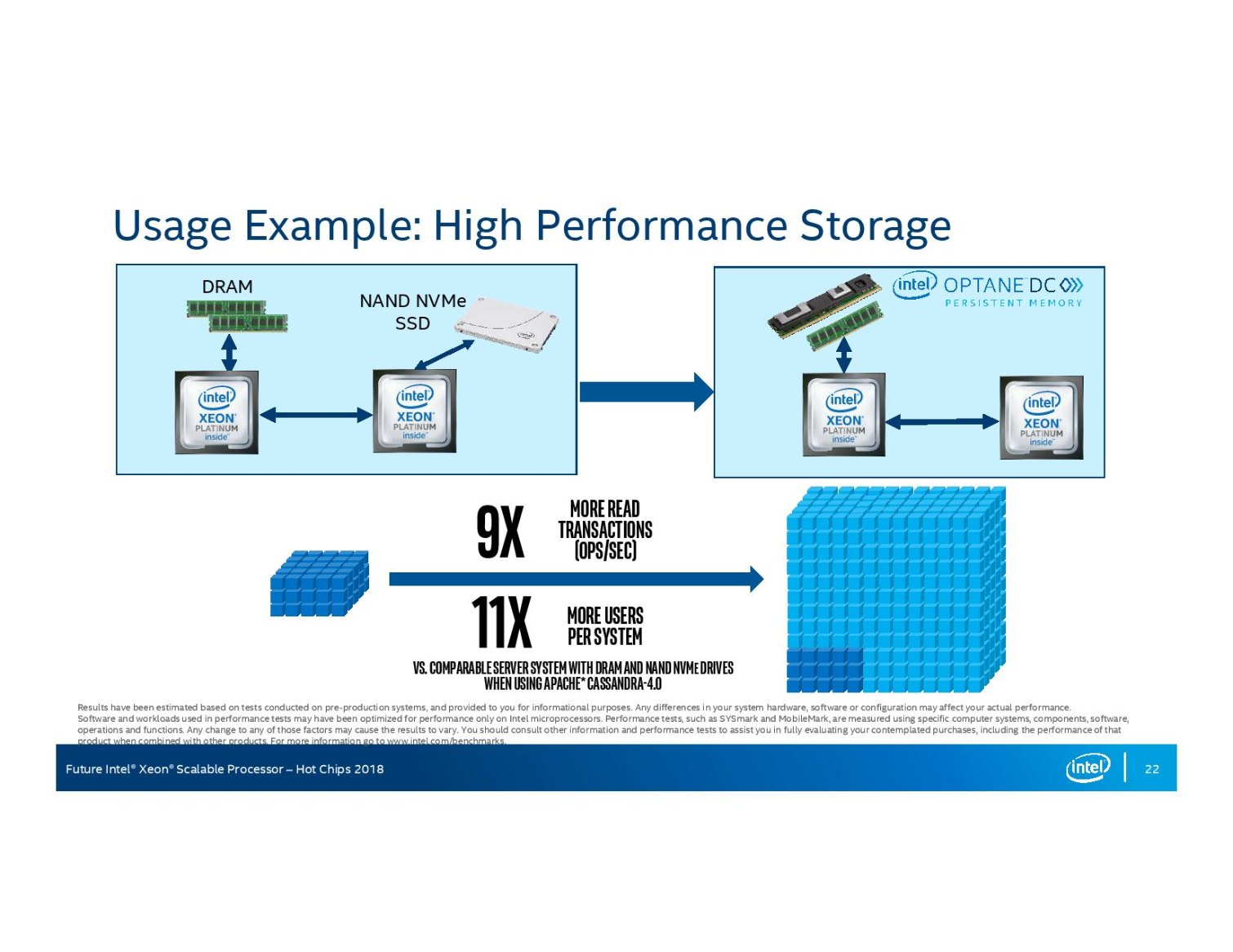

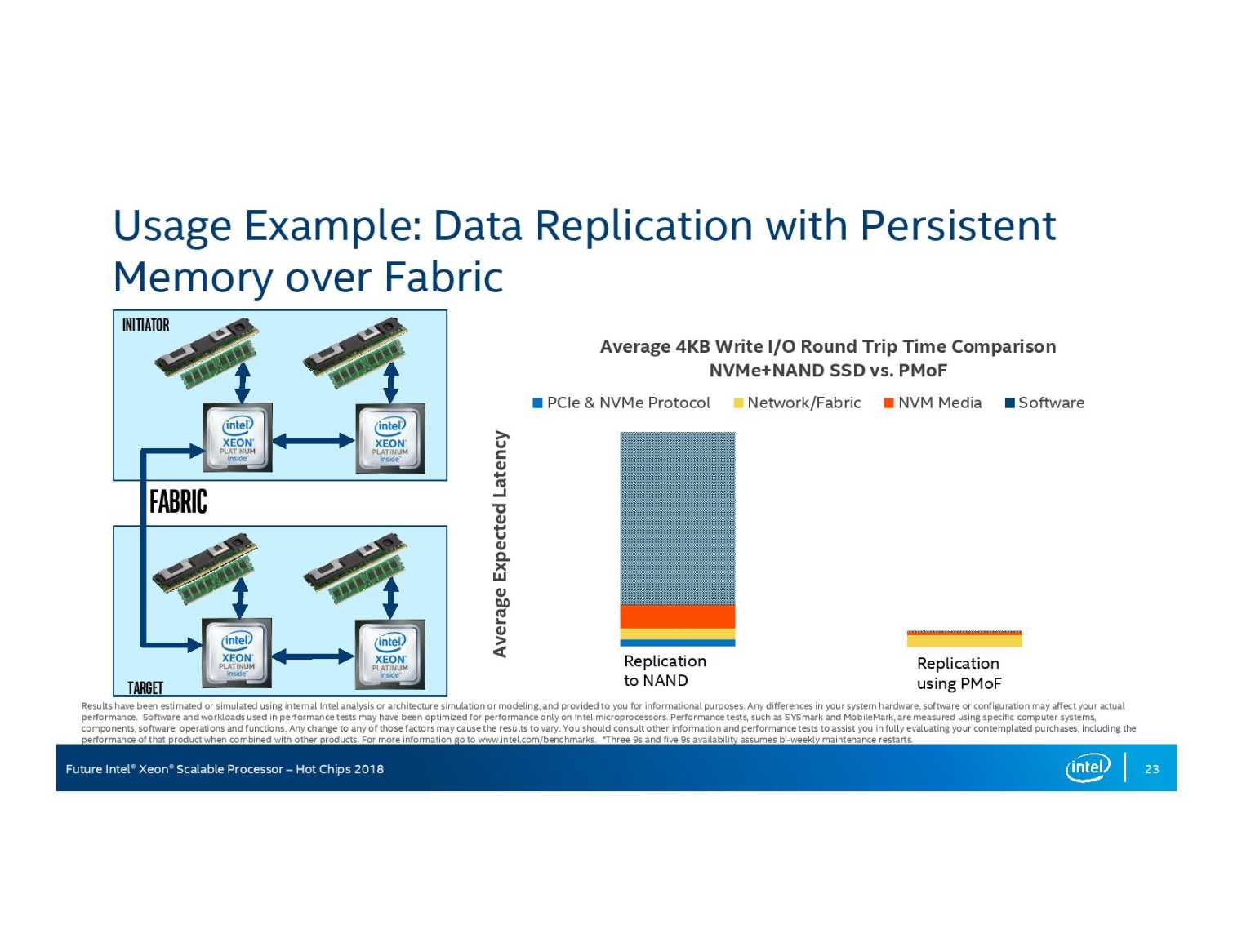

Intel's current Xeon Scalable processors do not support Optane DC Persistent Memory DIMMs. These new DIMMs slot into the DRAM interface, just like a normal stick of RAM, but come in three capacities of 128, 256, and 512GB.

Intel designed a new memory controller to support the DIMMs. The DIMMs are physically and electrically compatible with the JEDEC standard DIMM slot but use an Intel-proprietary protocol, likely to deal with the uneven latency that stems from writing data to persistent memory. Intel confirmed that the Optane DIMMs could share a memory channel with normal DIMMs, but we already knew that from Lenovo's admissions earlier this year.

Intel announced during its Data-Centric event that it is shipping the overdue Optane DIMMs in quantity, presumably to Google, which announced that it is using the modules for SAP HANA workloads. That means Google likely already has the next-gen Cascade Lake processors in its arsenal, which isn't surprising given Intel's habit of selling new server processors to members of the Super Seven (+1) before they are available to the general public. Intel recently disclosed that over 50 percent of its new server processors for cloud service providers are special customized variants, so several other hyperscalers likely already have the new processors as well.

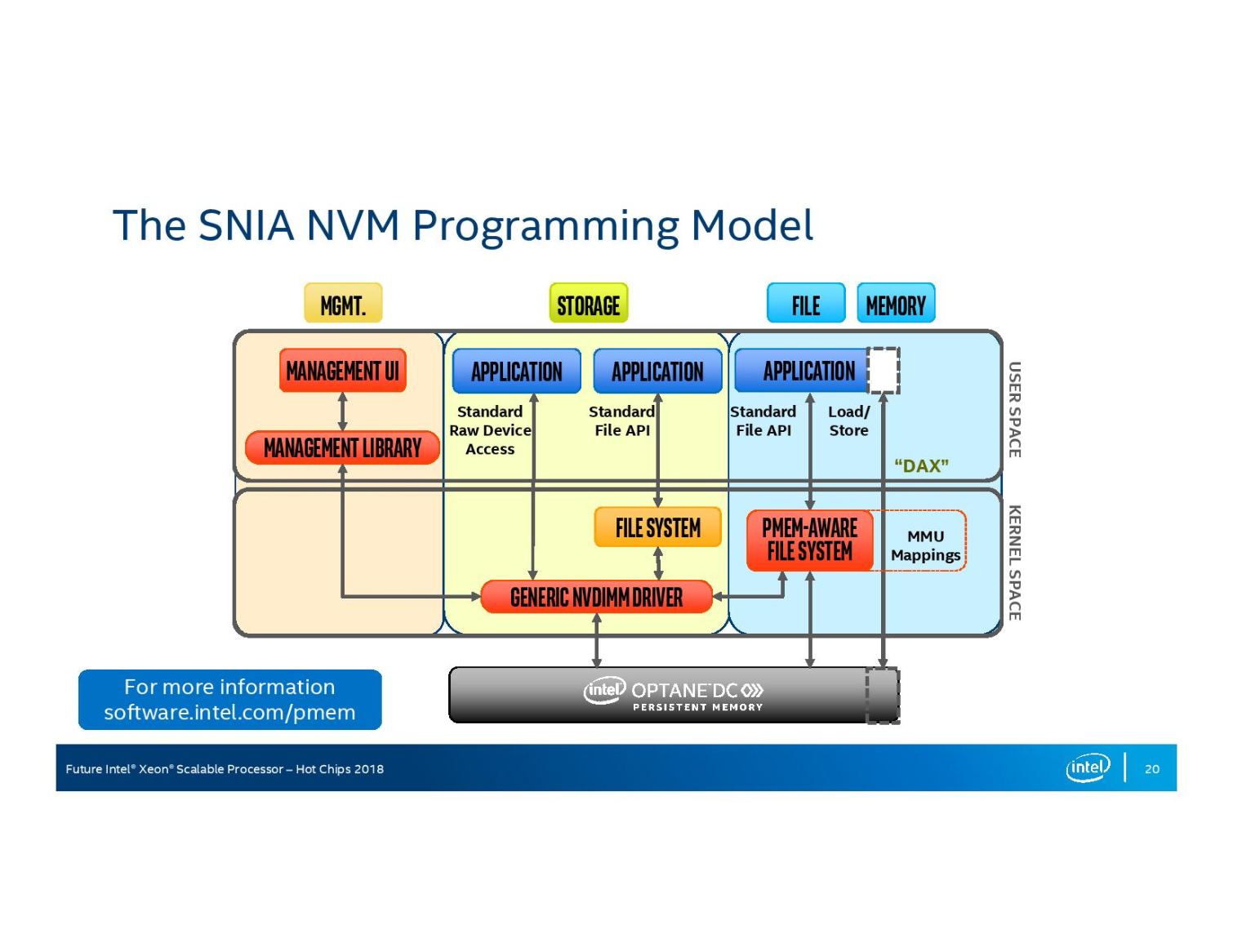

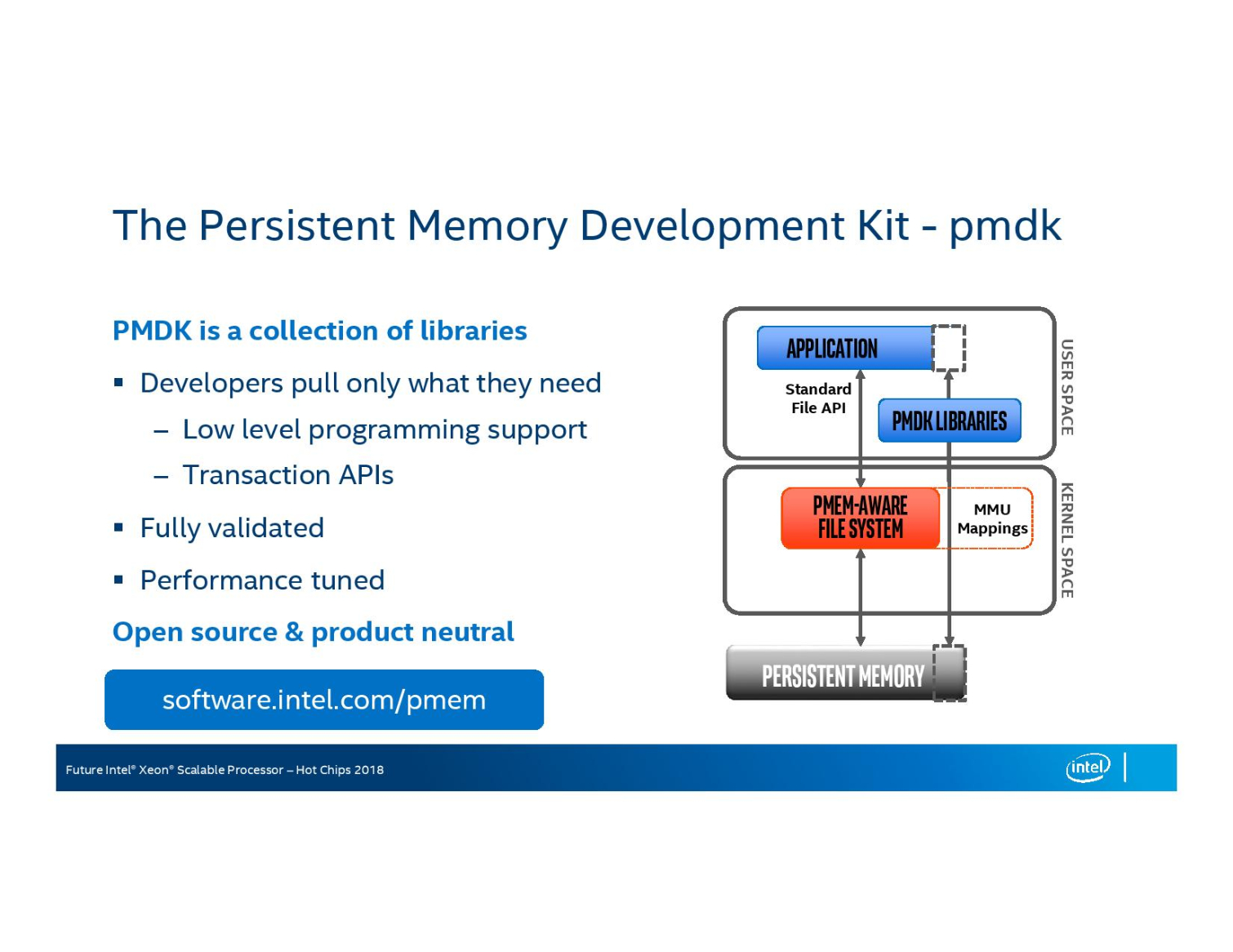

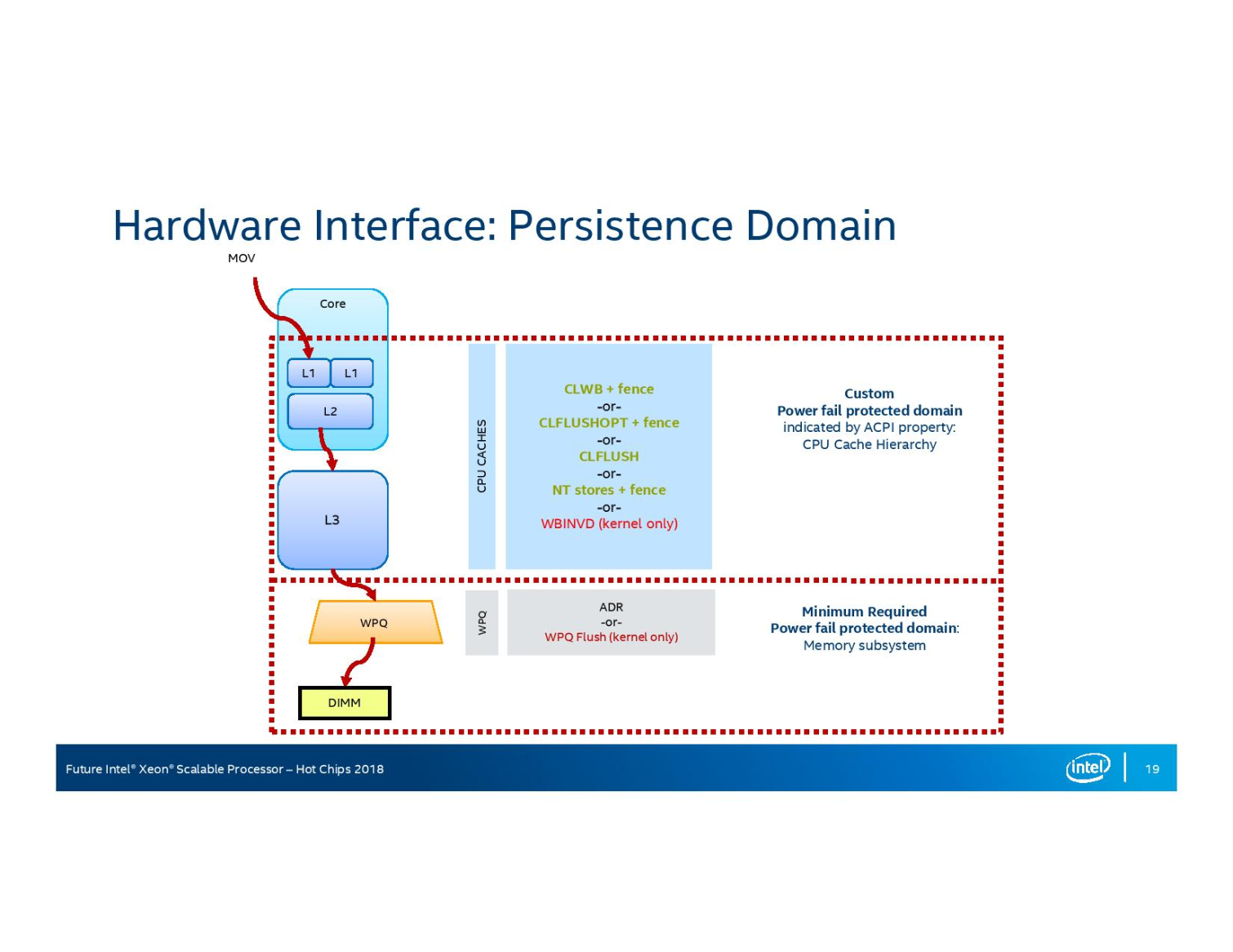

Intel also rehashed much of the information we learned earlier this year about the new software and programming models for persistent memory DIMMs, which largely piggyback on existing refinements for NVDIMMs. Intel is also driving adoption of the open-source Persistent Memory Development Kit (PMDK), which cuts through the standard driver and filesystem layers to allow faster access to the memory.
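The general idea behind this programming model is that an application maps the persistent region into its address space, updates it with ordinary loads and stores, and then explicitly flushes to make the data durable, rather than issuing read and write calls through the block storage stack. The sketch below only approximates that pattern with Python's standard `mmap` module and an ordinary temporary file standing in for a persistent memory region. It does not use PMDK, and the helper names are hypothetical.

```python
import mmap
import os
import struct
import tempfile


def open_region(path, size):
    """Map a file into memory; the file is a stand-in for a persistent memory region."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT)
    try:
        os.ftruncate(fd, size)
        return mmap.mmap(fd, size)
    finally:
        os.close(fd)  # the mapping remains valid after the descriptor is closed


def store_counter(region, value):
    """Write a 64-bit value directly into the mapping, then flush it to the backing file."""
    struct.pack_into("<Q", region, 0, value)
    region.flush()


def load_counter(region):
    """Read the 64-bit value back straight from the mapping."""
    return struct.unpack_from("<Q", region, 0)[0]


def demo():
    """Store a value, drop the mapping, remap the file, and read the value back."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "pmem_standin.bin")
        region = open_region(path, 4096)
        store_counter(region, 42)
        region.close()

        region = open_region(path, 4096)
        value = load_counter(region)
        region.close()
        return value


if __name__ == "__main__":
    print(demo())
```

On real persistent memory the flush step would target CPU caches rather than a page cache, which is the kind of detail a library such as PMDK is meant to handle for the developer.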

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

hannibal: Any news when these updates come to normal cpus, not the workstation ones... after cascade lake?

1_rick: Right there in paragraph one, it says "Similar in-silicon fixes will worm their way into processors for the desktop PC later this year".

1_rick: "Cascade Lake represents Intel's first processors with in-silicon mitigations for the notorious Meltdown and Spectre mitigations."

I think the wrong word was used at one point there--the sentence should end "vulnerabilities."

Paul Alcorn: Oops, thanks!