Do AMD's Radeon HD 7000s Trade Image Quality For Performance?

We discovered blurry textures when we reviewed the Radeon HD 7800s, so now we're performing an in-depth investigation. Why does the Radeon HD 6000 series demonstrate crisper image quality? Is performance affected? Does AMD know about the issue?

The Performance Impact Of Lower-Quality Textures

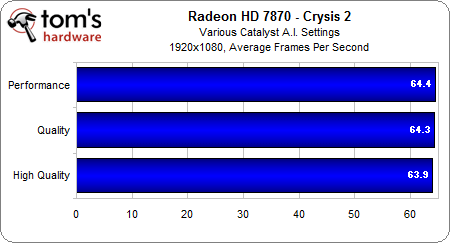

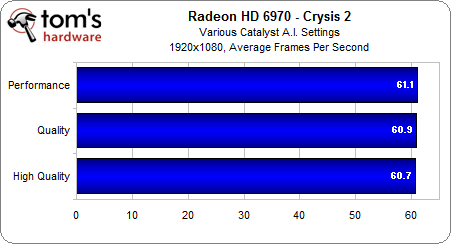

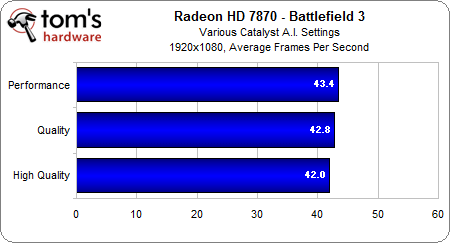

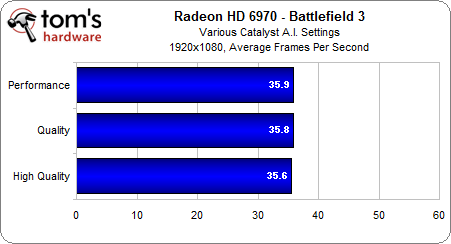

In light of the visual improvement we see when we tune the Catalyst A.I. slider, we want to know how performance gets impacted at each step of the way on AMD's Radeon HD 7870 and 6970 cards.

Crysis 2 definitely yields a quantifiable difference between the Radeon HD 7870 and 6970, as we'd expect. However, the difference between frame rates at the highest and lowest texture detail settings is negligible.

Battlefield 3 gives us an average 1.4 FPS spread on the Radeon HD 7870 and only a 0.3 FPS spread on the Radeon HD 6970, but that's hardly a notable difference on either card.

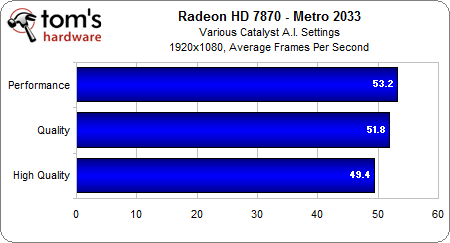

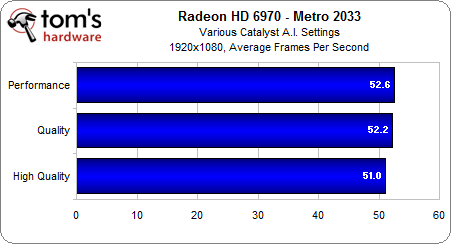

In Metro 2033, there's a more substantial 3.8 FPS difference between the highest and lowest texture quality settings on the Radeon HD 7870, while the Radeon HD 6970 incurs less than half of that.

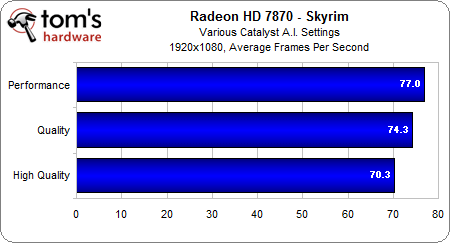

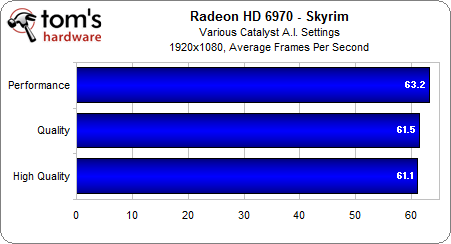

Skyrim is the game with the most obvious texture differences between the 7870 and 6970, and it's also the game that shows the largest spread of frame rates between the highest and lowest texture quality settings on the Radeon HD 7870 (6.7 FPS). The Radeon HD 6970, on the other hand, sees a mere 2.1 FPS separate its Performance and High Quality settings.

To recap, there isn't much to report in either Battlefield 3 or Crysis 2 when it comes to performance delta, regardless of the texture filtering quality setting. But in The Elder Scrolls V: Skyrim and Metro 2033, the High Quality setting appears to force more of a performance hit on the Radeon HD 7870 than on the 6970.

Considering the data we’ve seen up to this point, we have to come to the disturbing conclusion that AMD's Radeon HD 7000-series cards currently post better benchmark results at their default driver settings at the expense of texture quality, which is reduced compared to the Radeon HD 6000s and GeForce GTX 500s. Using the highest Catalyst A.I. setting appears to be the remedy, though it costs some speed.

This is the kind of result that makes us uncomfortable. Is it possible that AMD knowingly sacrificed texture quality to gain marginally better performance in some benchmarks? The company took a couple of weeks to respond to our queries, and we wondered as we waited.

Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

lahawzel These differences are things that no one would ever notice if tech review sites didn't point them out.

Well, not that I mind knowing that it can be fixed with a driver update, but I find it unnecessary for the average gamer to worry about these minor differences with image quality (knowing it's "fixed" is more of a placebo than an actual improvement of gaming experience). Not to mention that the typical gamer plays on 6-bit TN-panel monitors because "HURR 1ms RESPONSE TIME HOLY SHIT BEST SCREEN EVER" and they in turn elect to give up the superior color gamut and viewing angles conferred by IPS panels. They ought to be the last ones who deserve to complain about image quality, at any rate.

buzznut Huh, don't know about all of that but thx for the article. I do think it's important to bring such things to the vendor's attention and follow up to see if they respond appropriately. Good job!

therabiddeer Is it just me or is Tom's heavily biased towards Nvidia? We see tons of articles for the Nvidia 6xx but very few for the 7xxx. Nothing negative for Nvidia, but an article like this for AMD's, which is already being fixed even though it is undetectable... and the fix doesn't even yield a real change in framerates.

the associate "HURR 1ms RESPONSE TIME HOLY SHIT BEST SCREEN EVER"

HAHAHAHAHA

Oh man that made my night. But yea, that's exactly why I just got a panny st30 screen, tn's are just garbage, and lcd just can't do black. As for framerate lag? Doesn't affect my average scoreboard k/d ratios, or lap times, or whatever other "precision" timing actions both online and offline.

Least I got a screen that can do my cards justice, this also makes me glad I got my crossfire setup with the 6780's instead of waiting for the 7000 series...

SteelCity1981 Nothing new, really. Early driver support for new graphics cards always has its bugs, but normally by the 3rd supported driver version a lot of the general bugs are fixed, because by then a lot more people own that card series, giving the GPU company a lot more feedback about the drivers supported for that card.

neon neophyte Do AMD's Radeon HD 7000s Trade Image Quality For Performance?

No, no they do not

airborne11b (quoting the associate) "HURR 1ms RESPONSE TIME HOLY SHIT BEST SCREEN EVER" HAHAHAHAHA Oh man that made my night. But yea, that's exactly why I just got a panny st30 screen, tn's are just garbage, and lcd just can't do black. As for framerate lag? Doesn't affect my average scoreboard k/d ratios, or lap times, or whatever other "precision" timing actions both online and offline. Least I got a screen that can do my cards justice, this also makes me glad I got my crossfire setup with the 6780's instead of waiting for the 7000 series...

Going from a dell u2711 2560 x 1600 to a asus vg278h 120hz 2ms tn panel, there is a clear difference in gaming. The u2711 compared to vg278h feels sluggish. The image quality, sharpness and color is clearly better in u2711, but the lag is terribly noticeable.

Once you get a real gaming monitor, you will see the difference for yourself. TN 120hz monitors are the only true choice for pro gaming, imo.