Nvidia Shield: Hands-On With A Tegra 4-Based Handheld

Our first stop at this year's CES was Nvidia's suite in the Palms, where company representatives showed off pre-production versions of its Shield handheld. Chris Angelini weighs in with some of the specifics, plus his impressions of Nvidia's effort.

Tegra 4 And Shield's Future: The Prestige

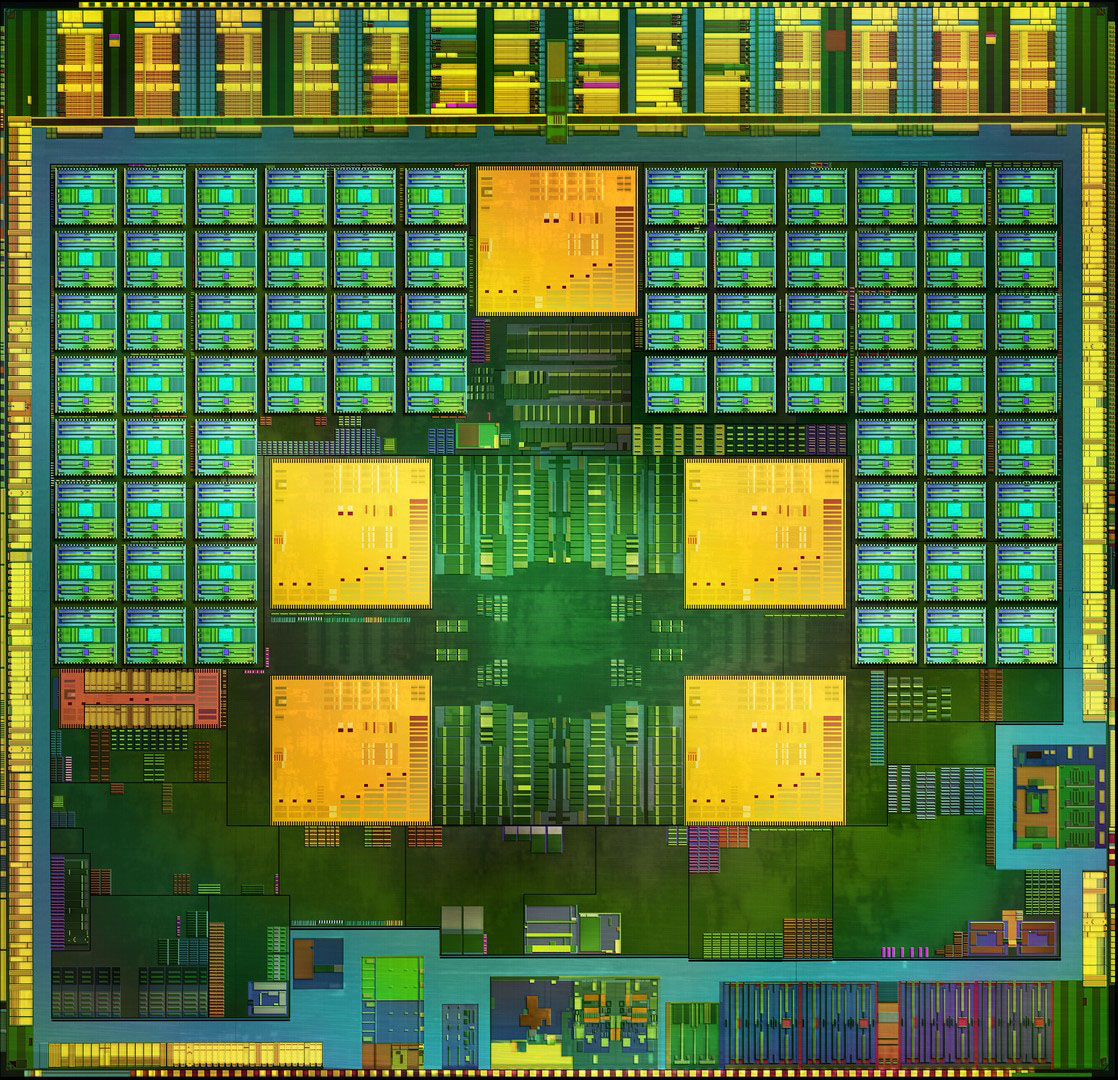

Tegra 4: The Brains

As with Nvidia’s past SoCs, Tegra 4 does not employ a unified shader architecture. Its 72 shader cores are split between 24 vertex and 48 pixel shaders, up from Tegra 3’s four vertex and eight pixel shaders. The company says that, for now, it makes the most sense to keep them separate, since doing so yields better power efficiency than it could achieve with a unified design. Nvidia also points out that its immediate-mode renderer is easier to develop for among ISVs already accustomed to working with its architecture on the desktop.

The webcast introducing Tegra 4 made it clear that Nvidia is using four Cortex-A15 cores, but it wasn’t clarified until later that the fifth, battery-saver core is also a Cortex-A15. With its previous generation, Nvidia stated that the fifth core was transparent to the operating system and applications. However, it isn’t supported in Windows RT. We’ve heard claims that Microsoft doesn’t properly support the asymmetric arrangement of Tegra’s four main cores (ramping up to 1.9 GHz) and single power-saver core running at a lower maximum clock rate.

Nvidia was at least able to get around this in Android, so it won’t be an issue for Shield; the fifth core will play a key role in keeping platform power consumption down around that 1 W estimate at idle.

Though the cores themselves are standard Cortex-A15s, the memory controller is Nvidia’s own design. And whereas Tegra 3 (T30) employed a single-channel 32-bit interface with support for up to DDR3-L, Tegra 4 features a dual-channel interface supporting DDR3-L and LP-DDR3.

We’re hoping to go into greater depth with Nvidia on Tegra 4, specifically, in the weeks to come. As it pertains to Shield, the 28 nm HPL (low-power with high-k metal gates) SoC is balanced to minimize leakage at the expense of maximum performance, which we’re counting on to enable the battery life claims Jen-Hsun made on stage.

Now, Who’s Going To Use It


As I talked to Nvidia’s team at CES, I was very clear that I’m probably not Shield’s ideal customer. I live in a single-story house, I sit in a nice Herman Miller chair in front of three 27” screens driven by a GeForce GTX 680, and when I want to play games, I close the door to my office and I play.

I don’t find myself on the couch wishing I could beam Battlefield onto my television. I don’t travel enough to need a handheld in my bag to pass the time. And I don’t use my phone for gaming.

Yet, as a technologist, I have to admire the amount of Nvidia IP that came together to make Shield possible. Simply nailing the PC gaming component would be amazing. Offloading rendering to a desktop system and enjoying the benefit of accelerated video decode to make 38 Wh of capacity last almost all day is brilliant.

The unanswered question is: how much will it cost? Nvidia preemptively stated that Shield won’t be subsidized, and it does want to make money on the device. But prospective customers are going to compare it to the 3DS, Xperia Play, and Vita rather than to Atom-, Cortex-A15-, and Krait-based tablets.

To that end, I’d like to see Shield selling in the $250 range, though the shot across the bow of console companies suggests this might not be the case. Nvidia also needs to lean heavily on its ISV relationships to bolster the appeal of Android gaming beyond where it sits today, and it absolutely must knock the PC gaming experience out of the park. That’s the capability most appealing to me. Now I just need to find a few titles I can play without embarrassing myself using those joysticks.


mayankleoboy1 Naughty Naughty Chris!

Hogging the better handheld for yourself, and giving the inferior one to Don ;)

jase240 Streaming games to a tablet, it's awesome! Now I wonder if it would be possible to stream over 4G?!?! That would be cool!

esrever Shield is nice but probably not worth the price in the end, judging by how much Nvidia is pushing it as a high-end product.

lradunovic77 This is the end of Windows, because NVIDIA and soon Steam will clearly show the world that you don't need Windows for gaming; Linux is actually a really good platform for it.

wolley74 The question is, why would I want to stream a game from my PC, where I can use a mouse, keyboard, and multiple monitors if I wish, just to play it on a tiny screen with limited buttons? Why?

obsama1 quoting lradunovic77: This is the end of Windows, because NVIDIA and soon Steam will clearly show the world that you don't need Windows for gaming; Linux is actually a really good platform for it.

Linux is great, but you have to remember two things:

1. Devs will have to port their games to Linux, which will take a long time.

2. I don't see how you're going to convince gamers to install Linux in place of Windows on their computers and then download their entire game library again due to Linux/Windows incompatibilities.

dark_knight33 quoting lradunovic77: This is the end of Windows, because NVIDIA and soon Steam will clearly show the world that you don't need Windows for gaming; Linux is actually a really good platform for it.

3 words: Poor....driver....support...

Where's Linus giving Nvidia the finger when you need him? AFAIK, you still need a Windows-based PC to stream Steam titles.

That being said, I look forward to the day when MSFT loses its death grip on those OS license fees.

dragonsqrrl It's an interesting concept, and it's impressive that Nvidia seems to have pulled off PC title streaming so effectively, but I feel like without the ability to stream remotely, Shield will be little more than a niche product. I guess that's okay since Nvidia has openly stated that Shield's target audience is relatively small, but I can't help but feel that the experience could be so much more compelling with the ability to play your PC games from anywhere with a suitably fast internet connection, even if it's potentially at the expense of some image quality and latency. That would certainly get my attention.