Application Performance Test Notes

All systems in this section of tests use our standard test bench setup listed on the second page of the article.

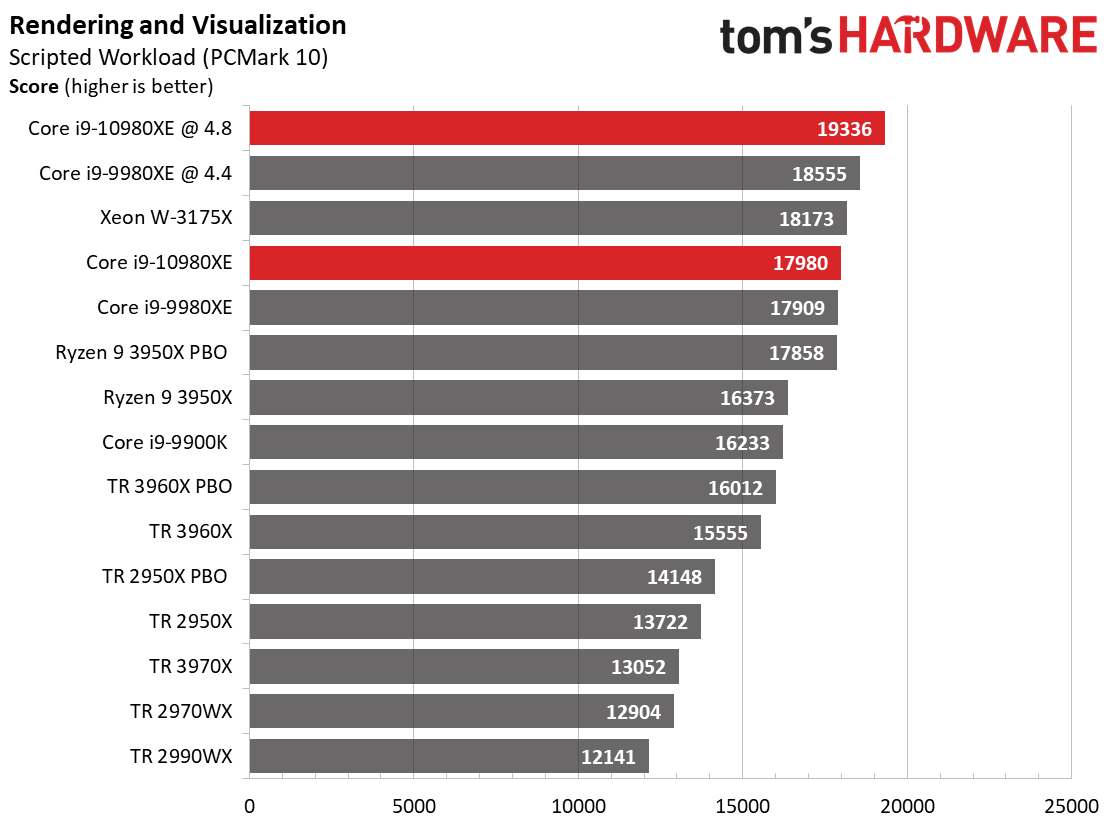

Rendering

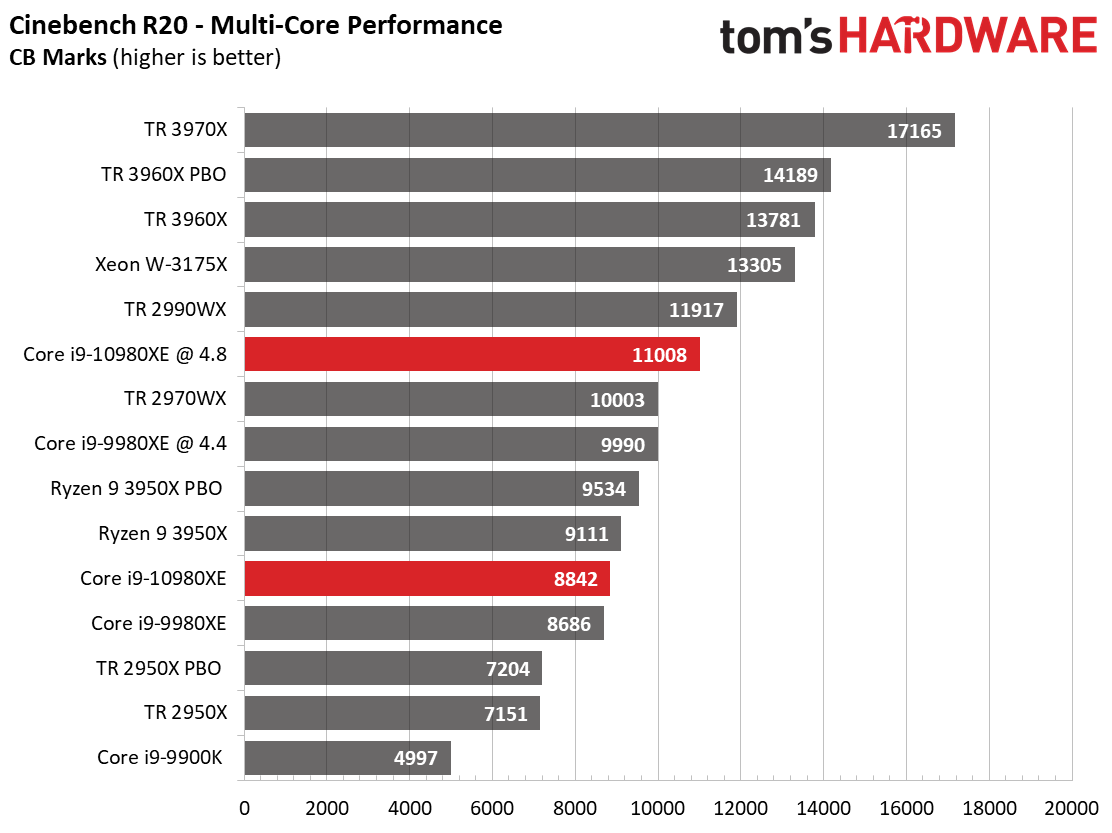

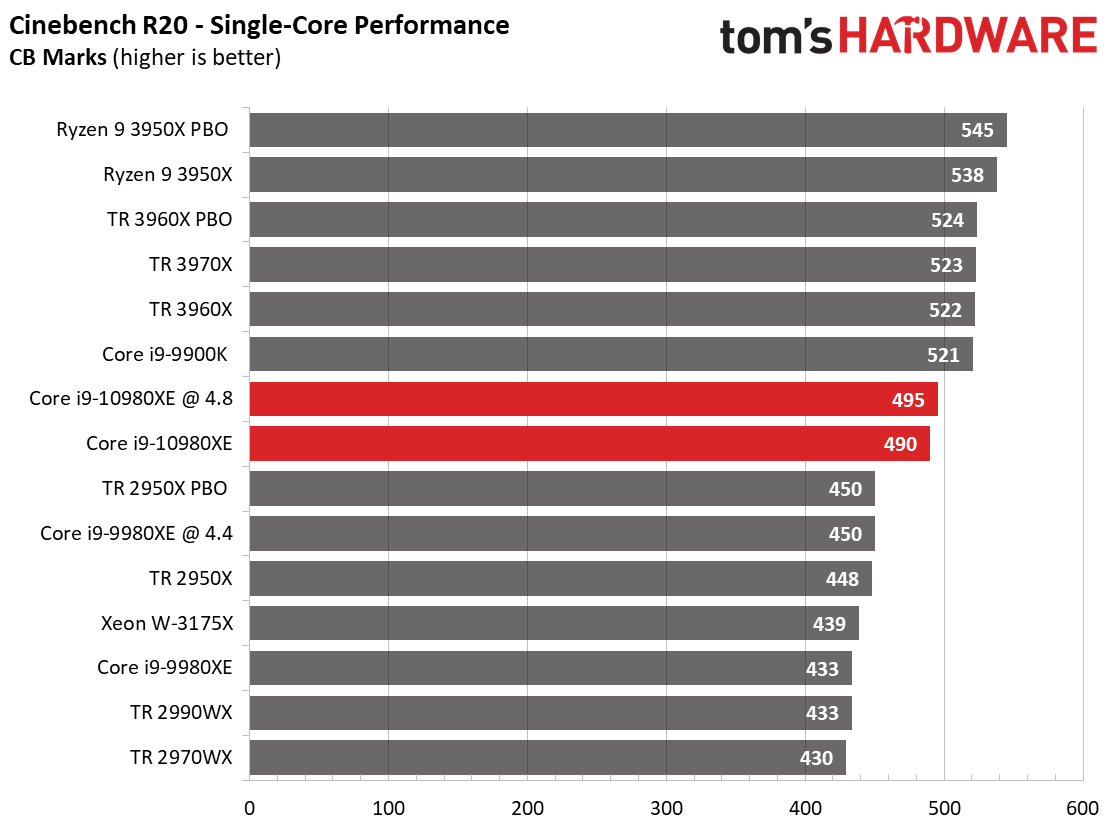

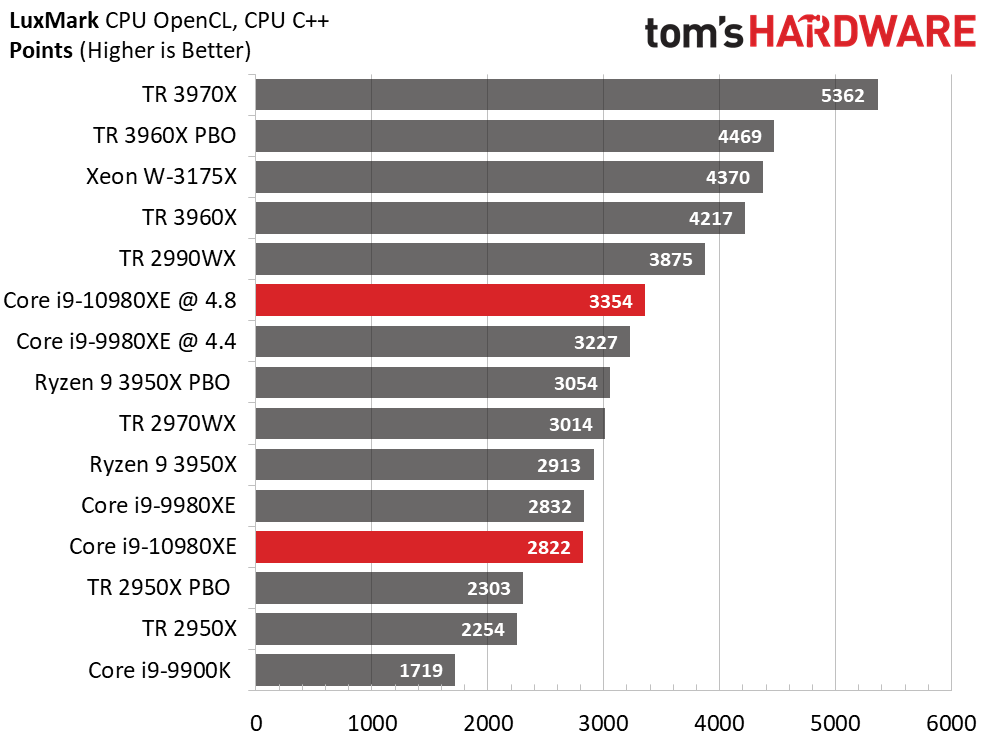

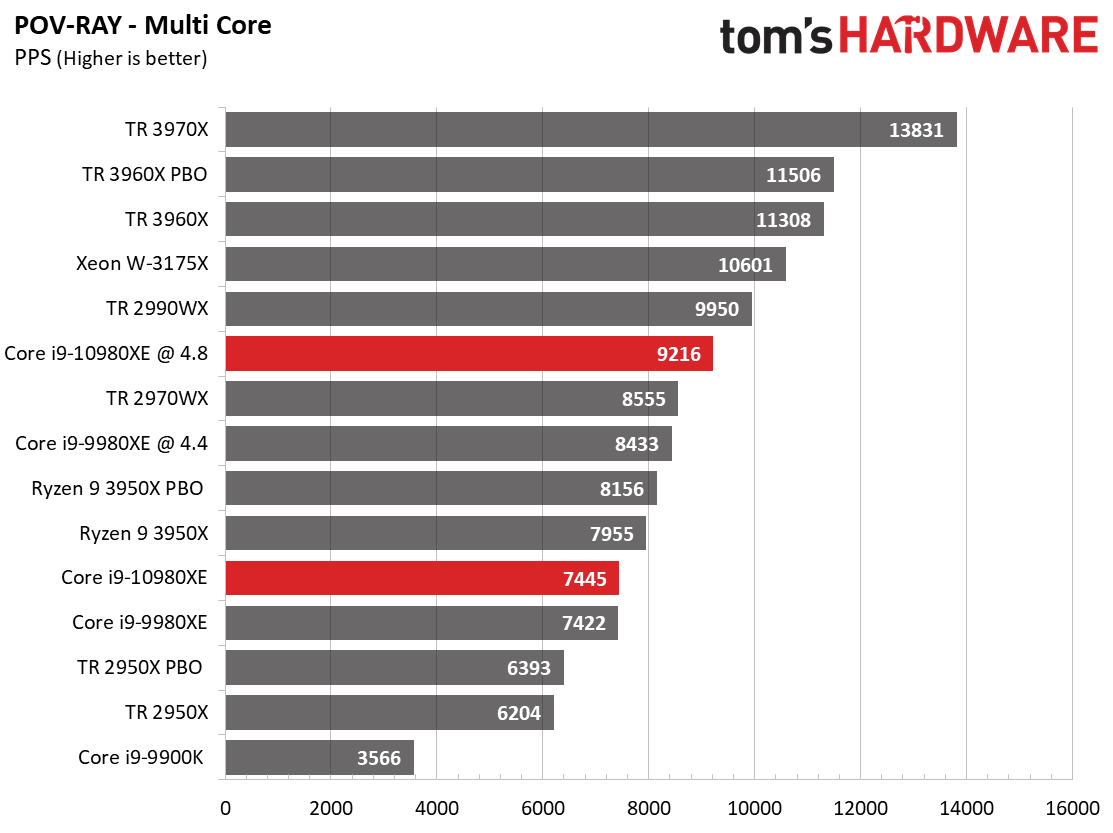

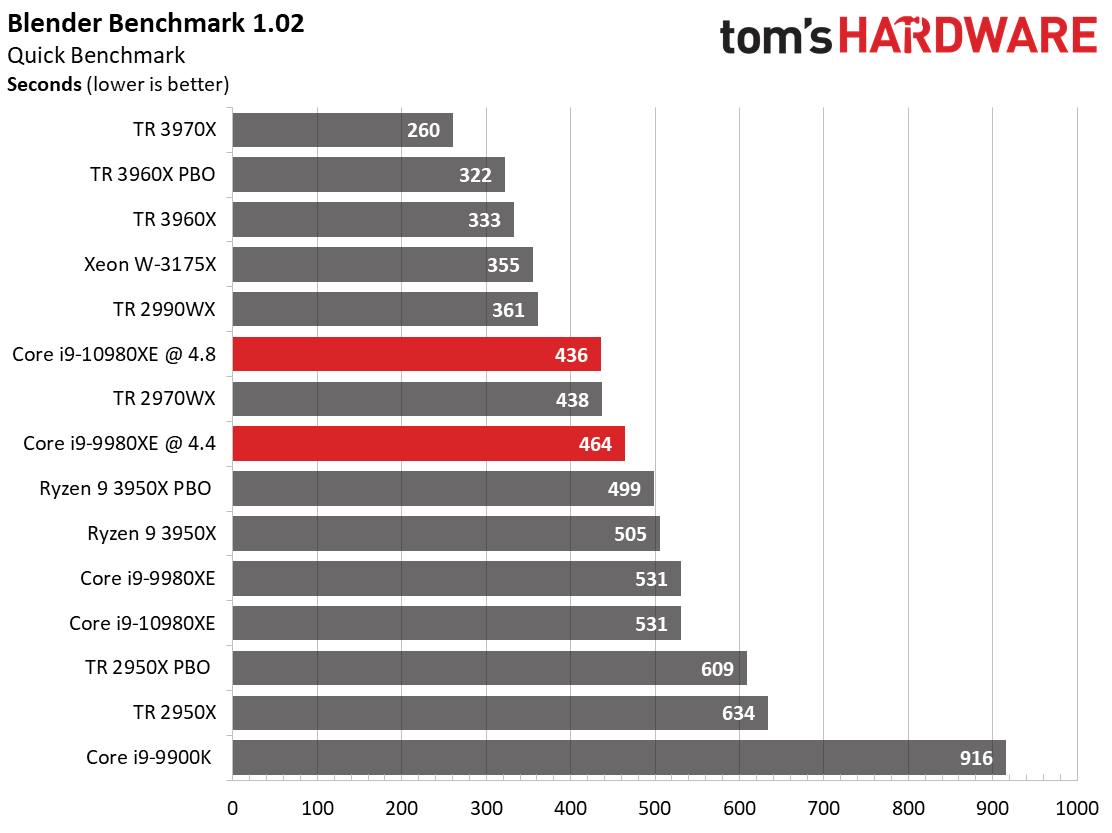

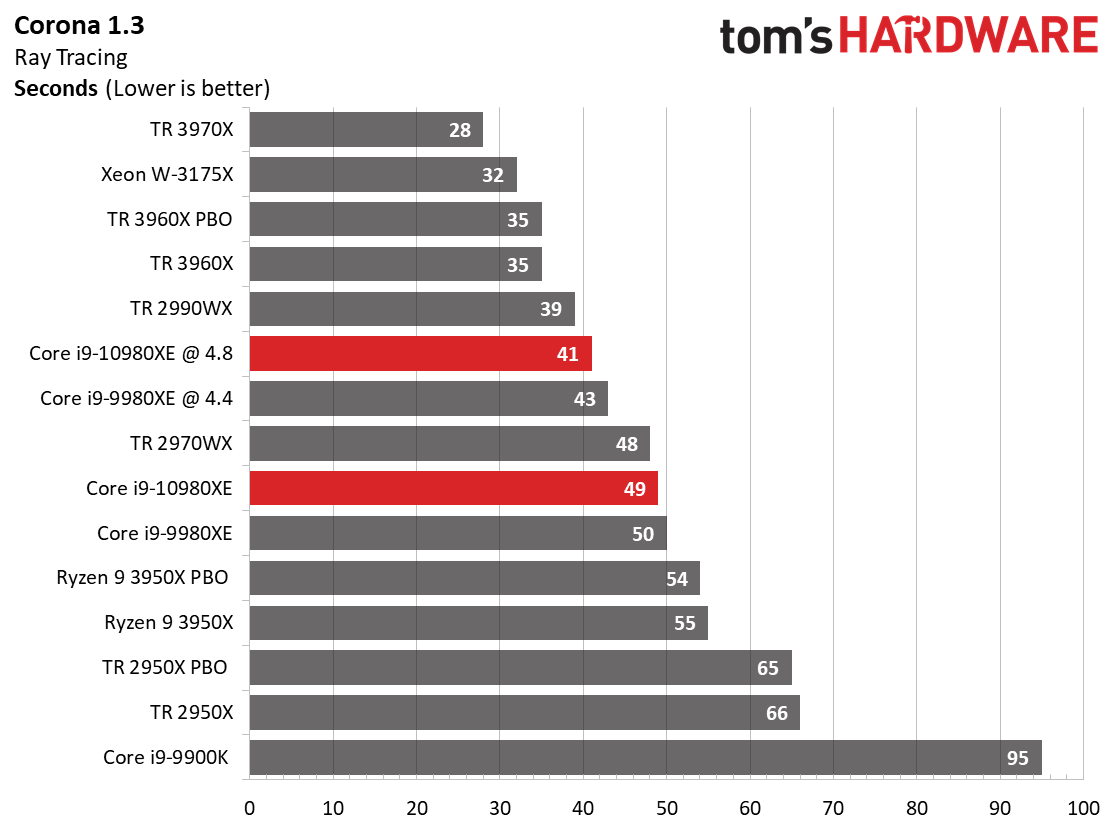

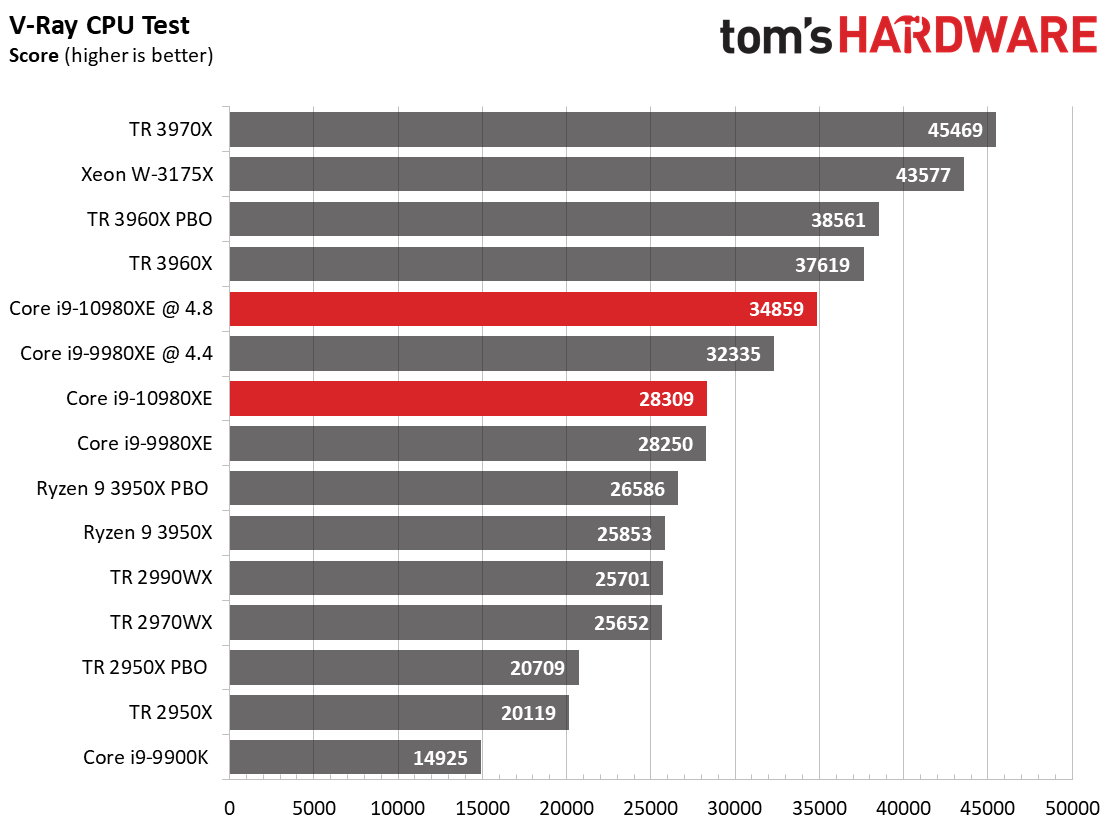

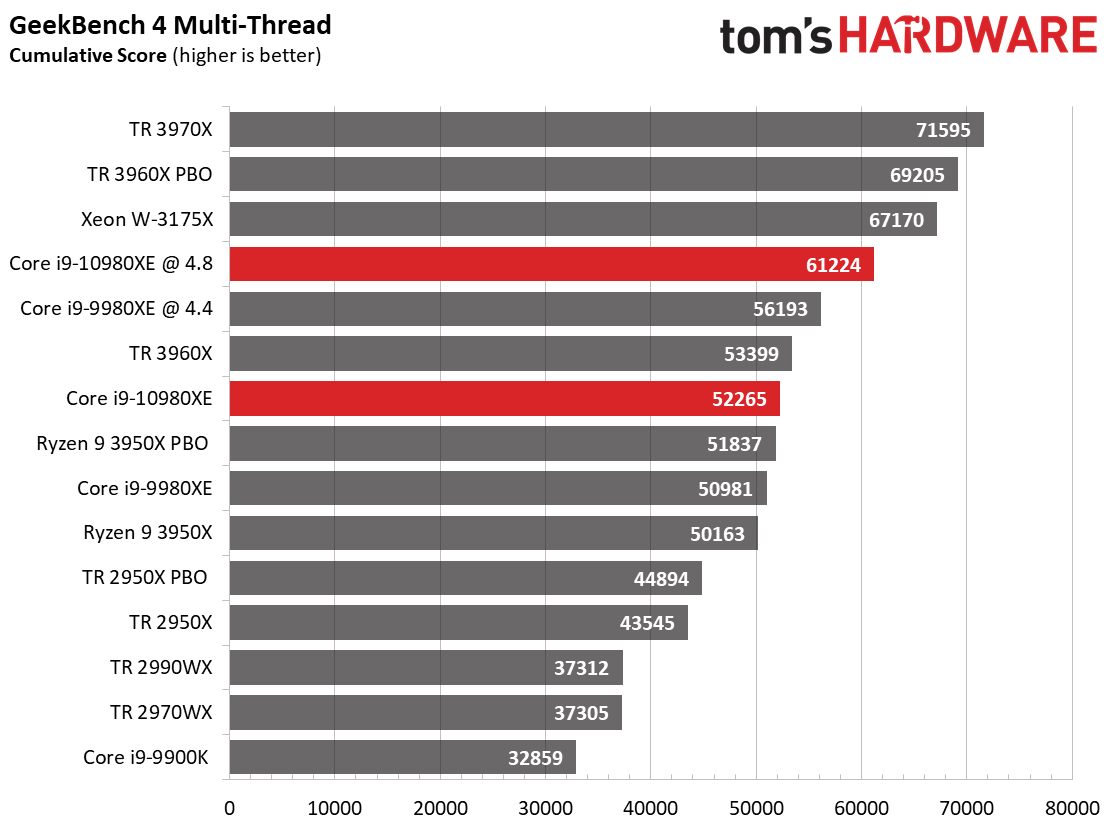

Our rendering test suite finds Intel's processors in hostile territory: Multi-threaded rendering has become the domain of Zen-based architectures and their core-heavy designs. Threadripper 2970WX leverages its 24 cores and 48 threads to upset the stock -10980XE in several of the threaded rendering benchmarks, and tuning would improve its performance further. The Ryzen 9 3950X also impresses in the threaded LuxMark and POV-Ray tests.

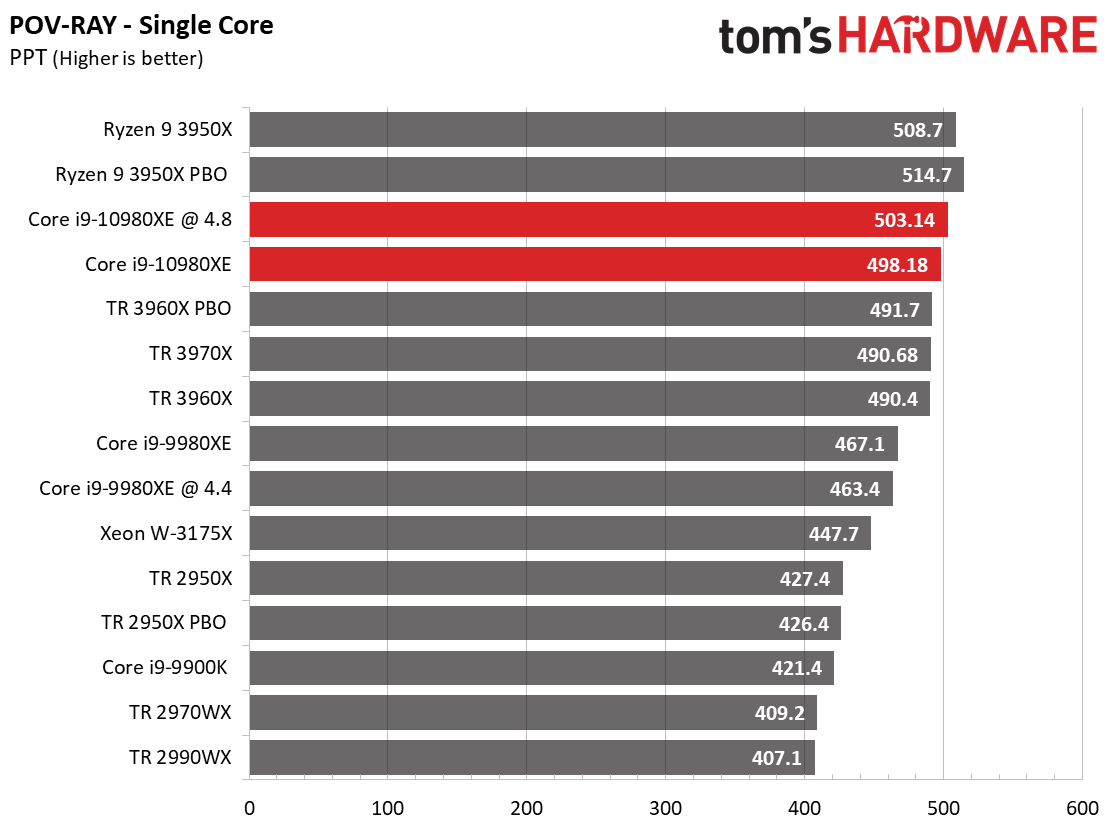

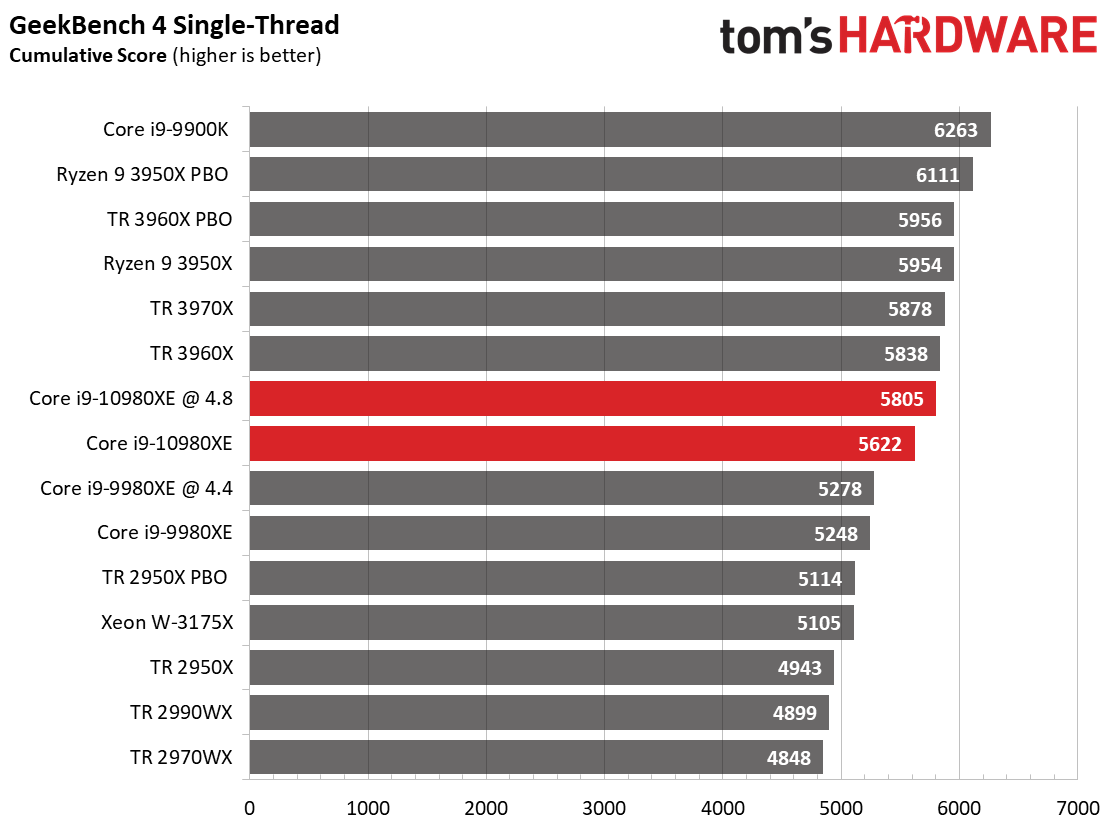

The stock Core i9-10980XE is competitive in the single-threaded POV-Ray benchmark due to its strong performance in AVX workloads, but the 3950X takes the lead in both the single-threaded POV-Ray and Cinebench benchmarks.

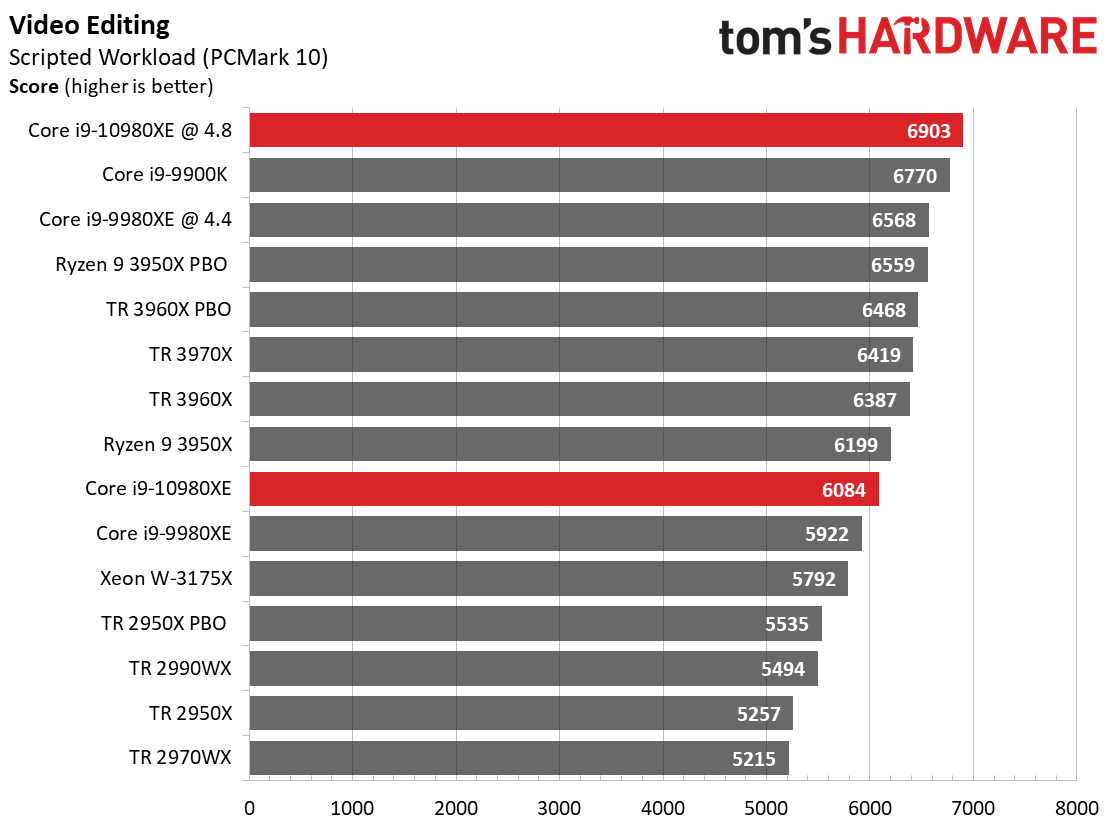

Encoding

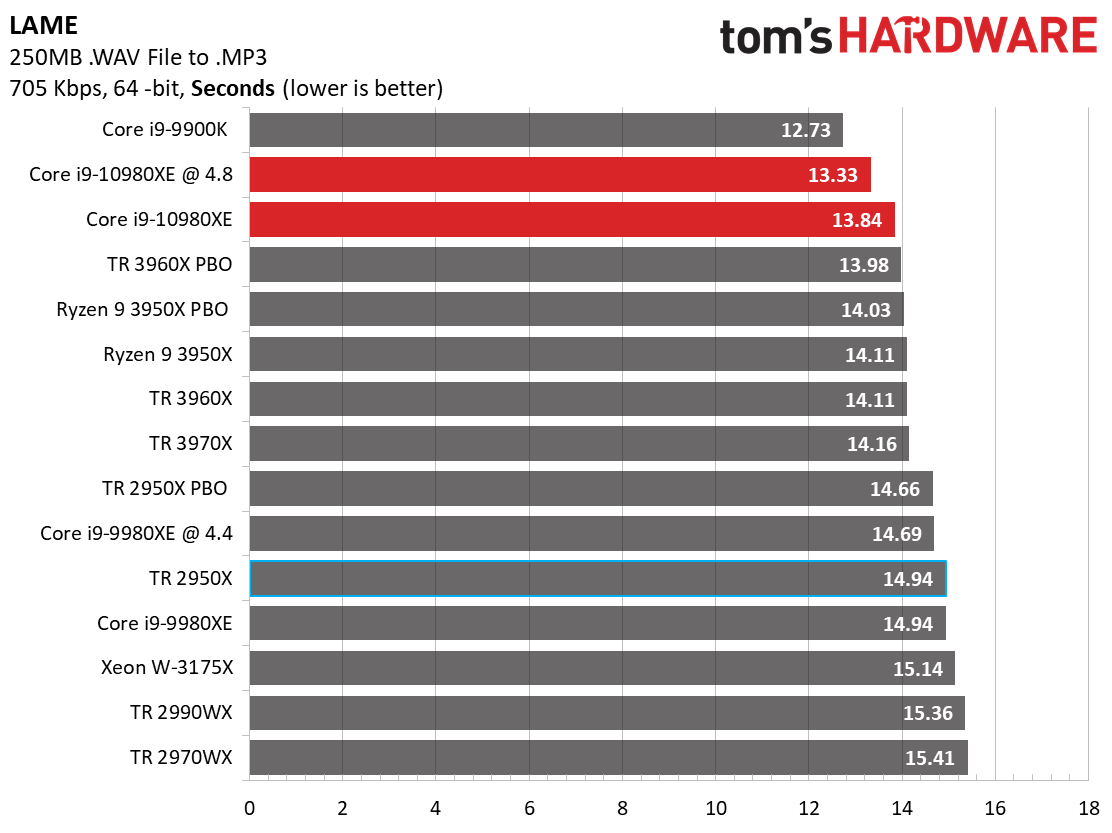

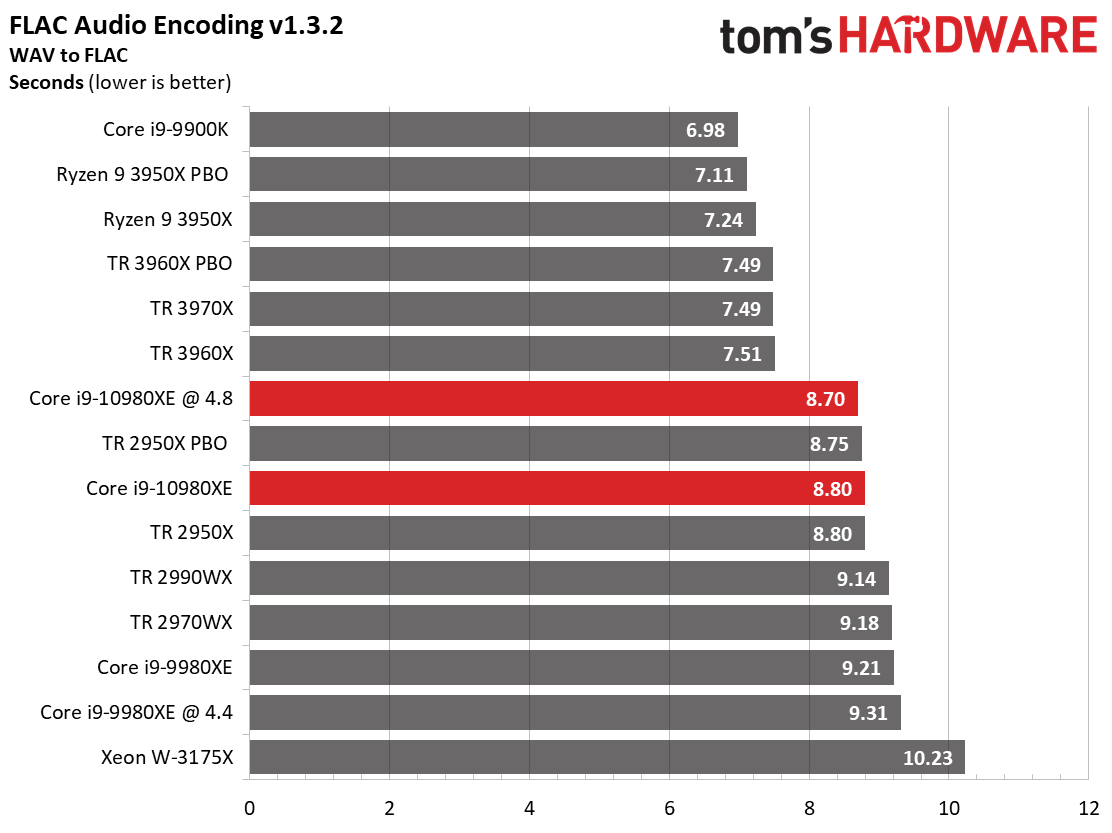

Intel's Core i9-10980XE excels at single-threaded workloads due to its aggressive 4.8 GHz boost clock, so it takes the lead over the AMD processors in the LAME benchmark at stock settings.

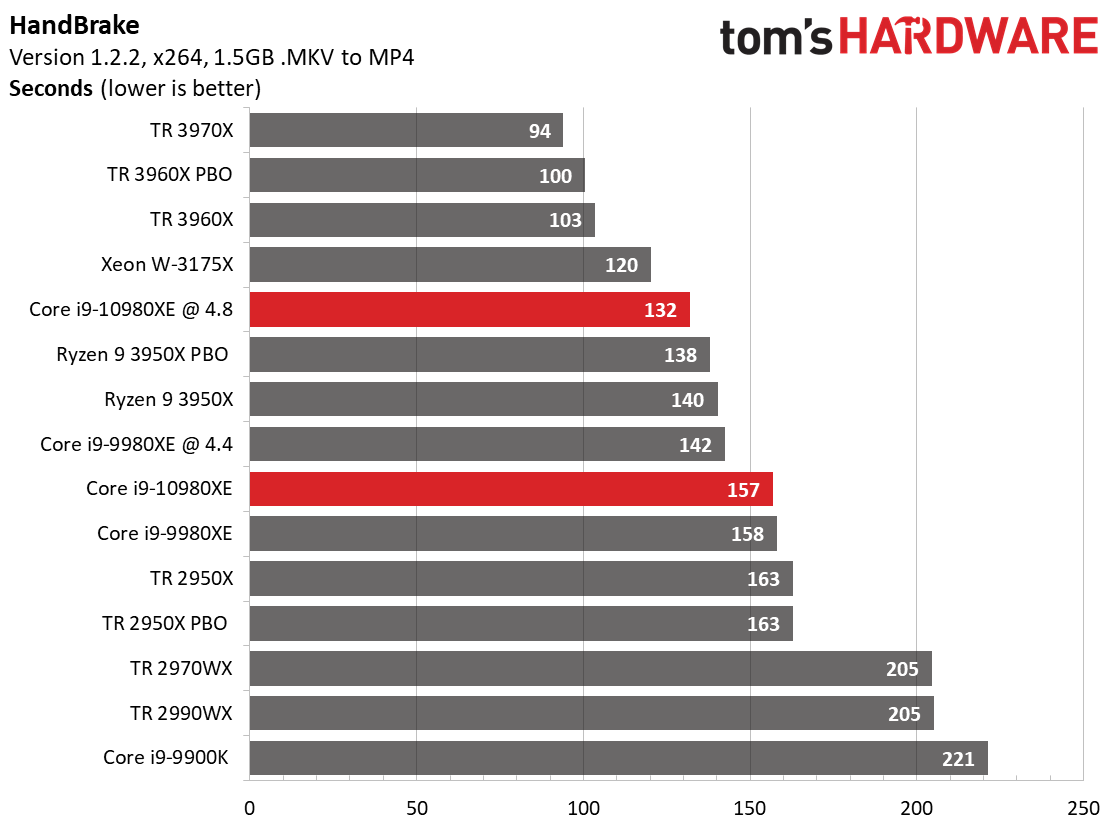

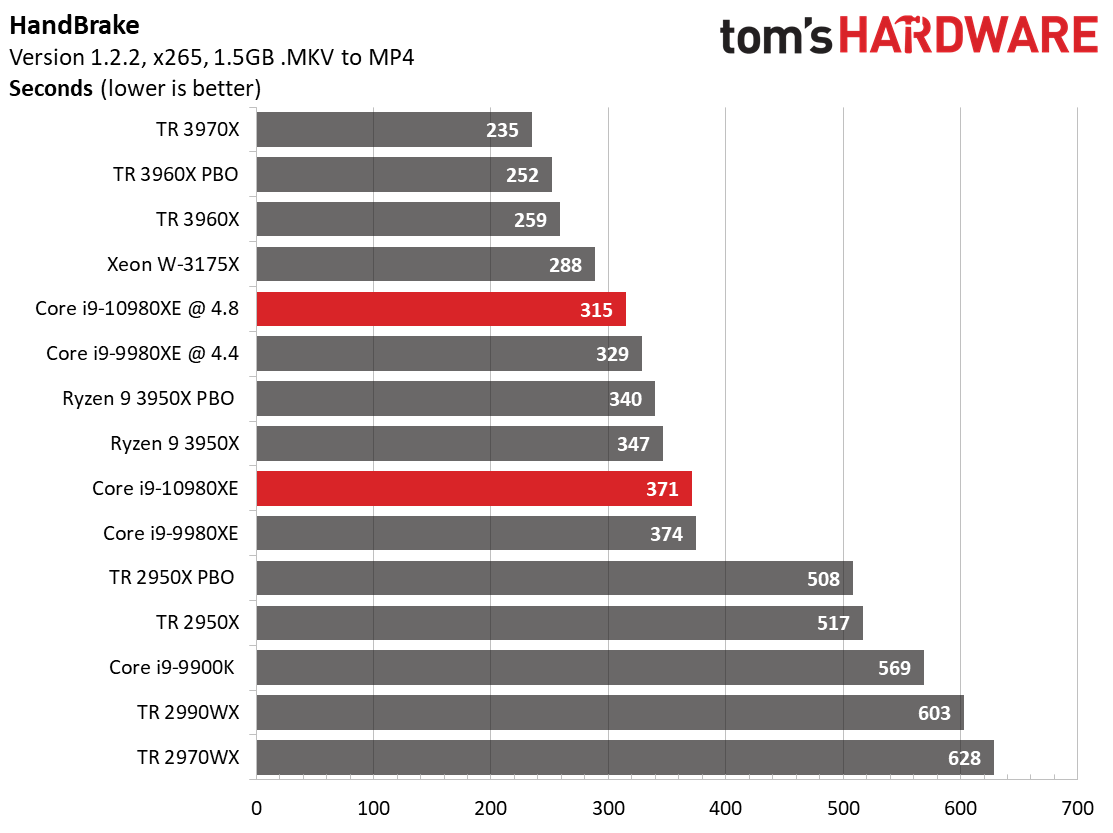

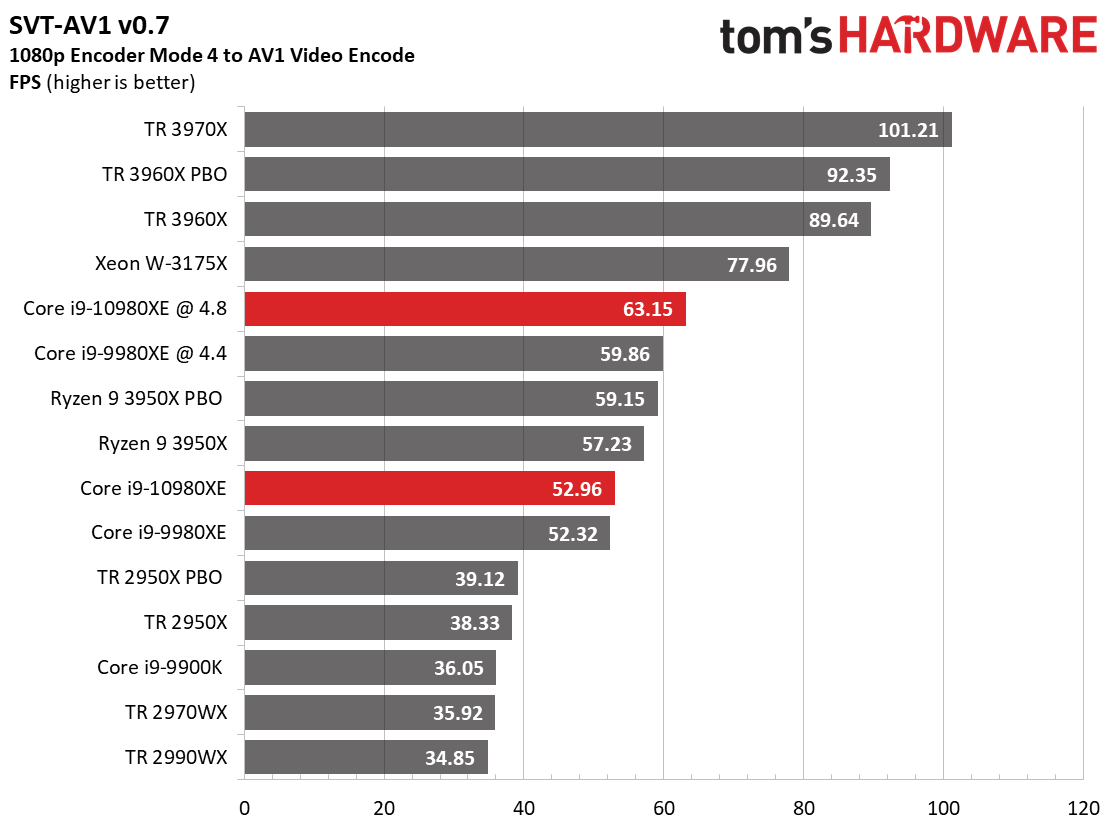

The 3950X beats the stock -10980XE in the HandBrake x264 test, and in the AVX-heavy x265 version of that same benchmark. Flipping through to the SVT-AV1 encoder, which is heavily threaded, paints a similar picture.

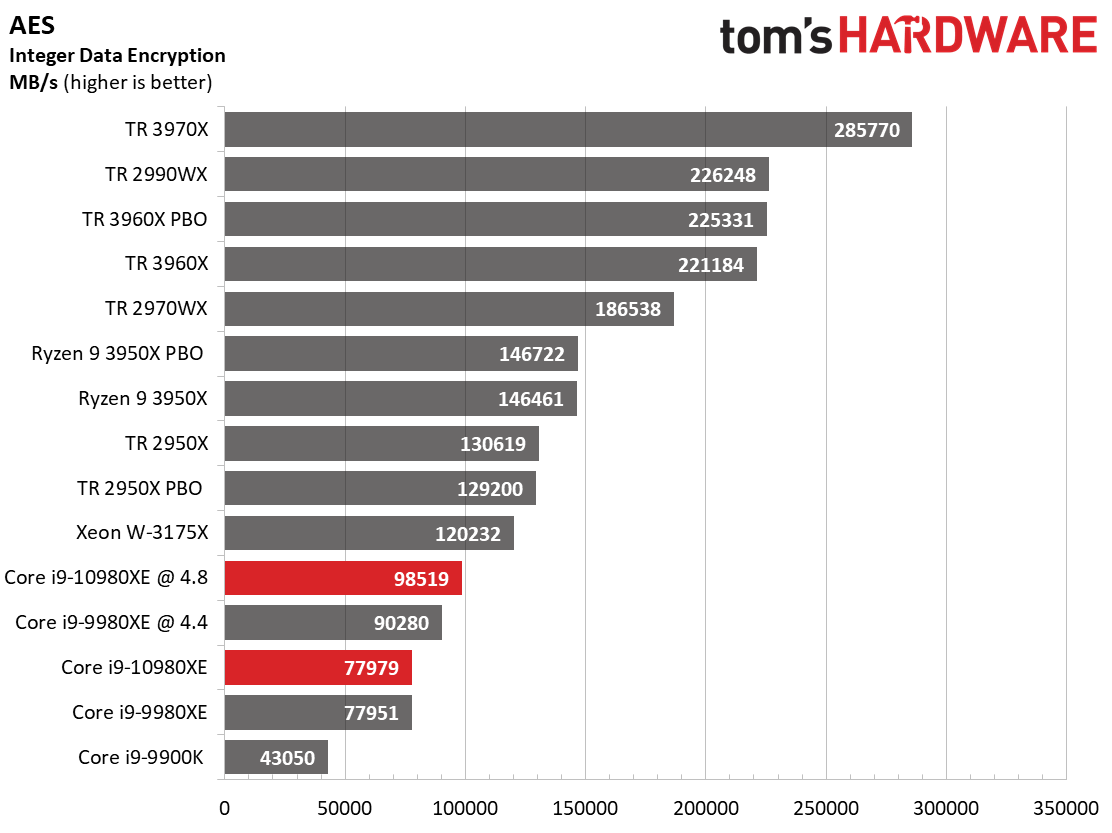

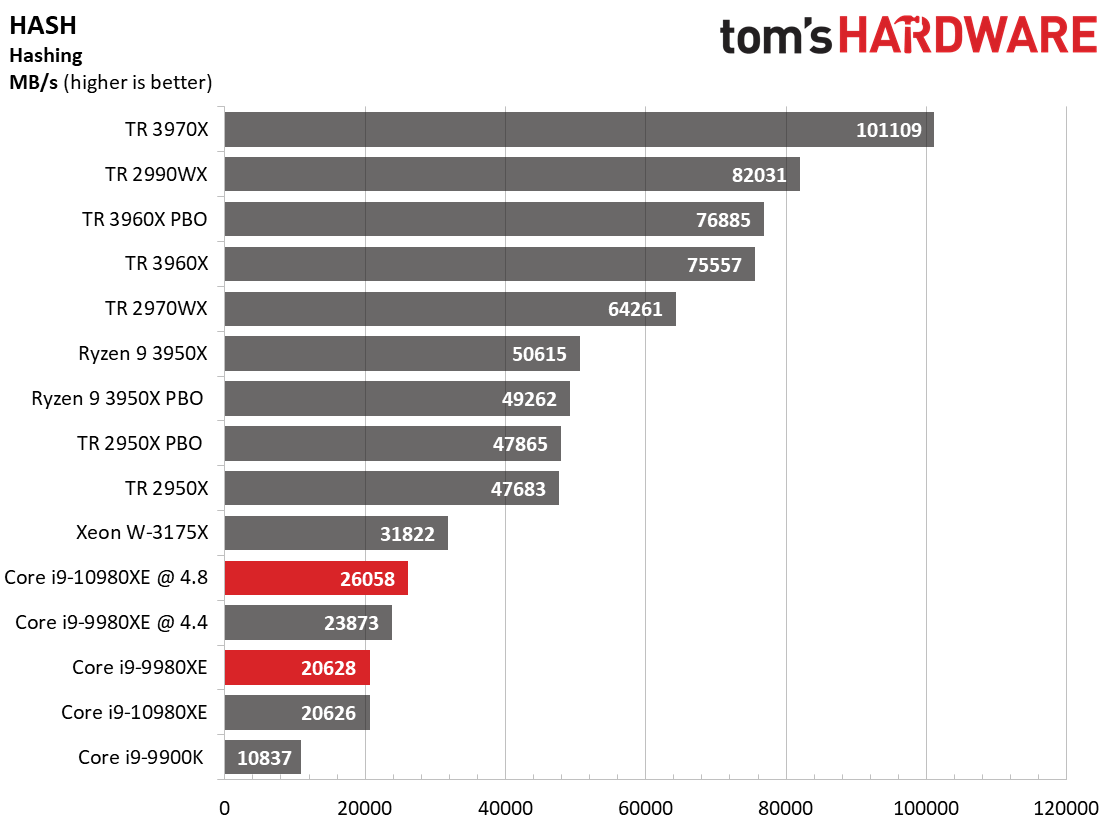

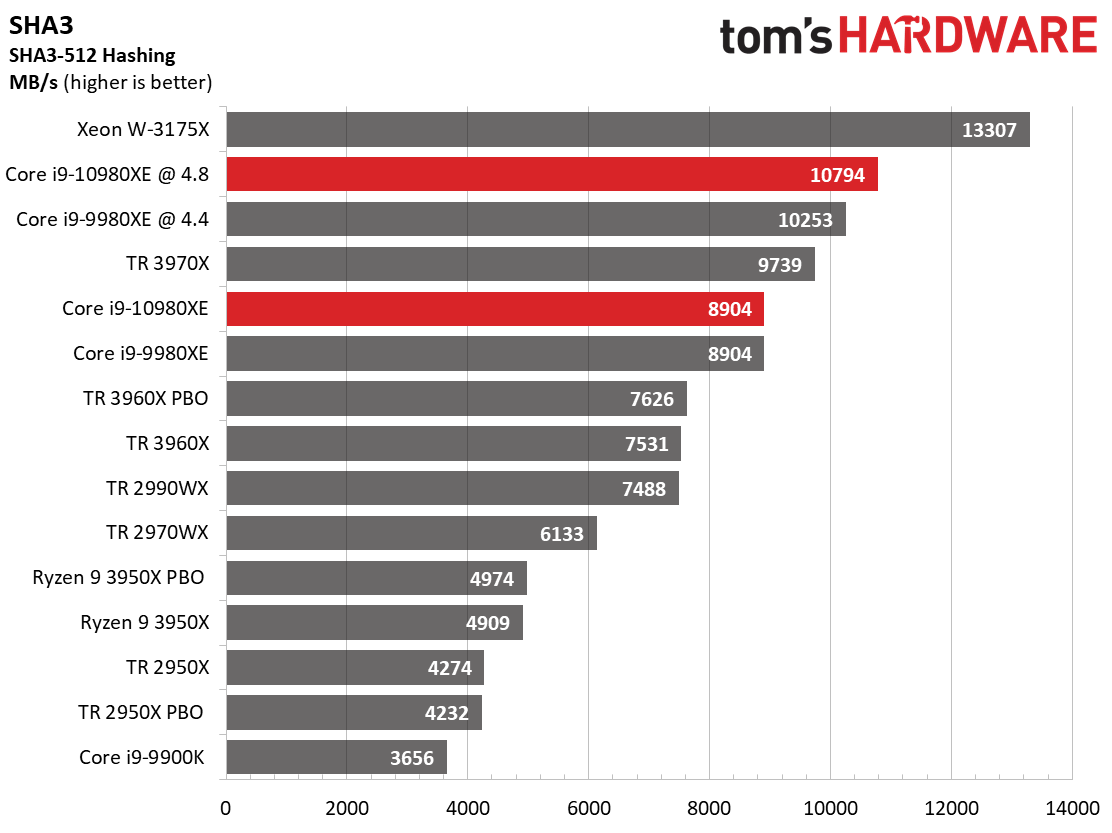

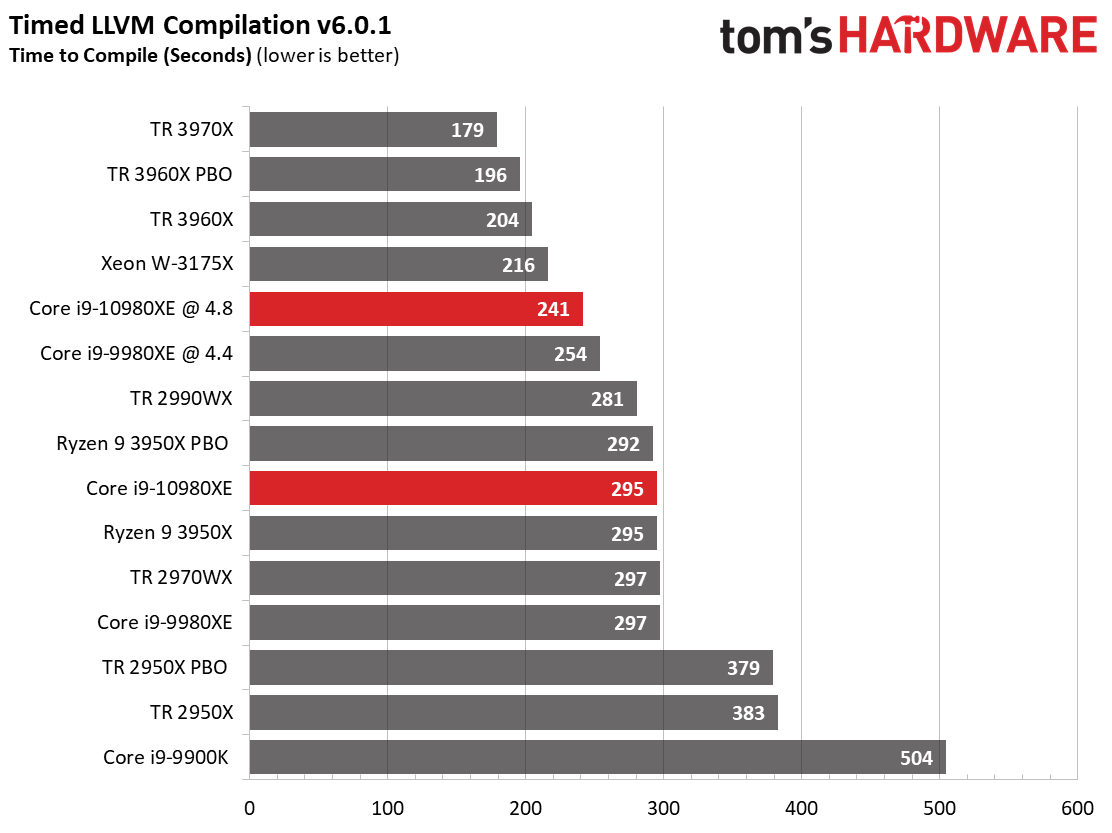

Compression, Decompression, Encryption, AVX

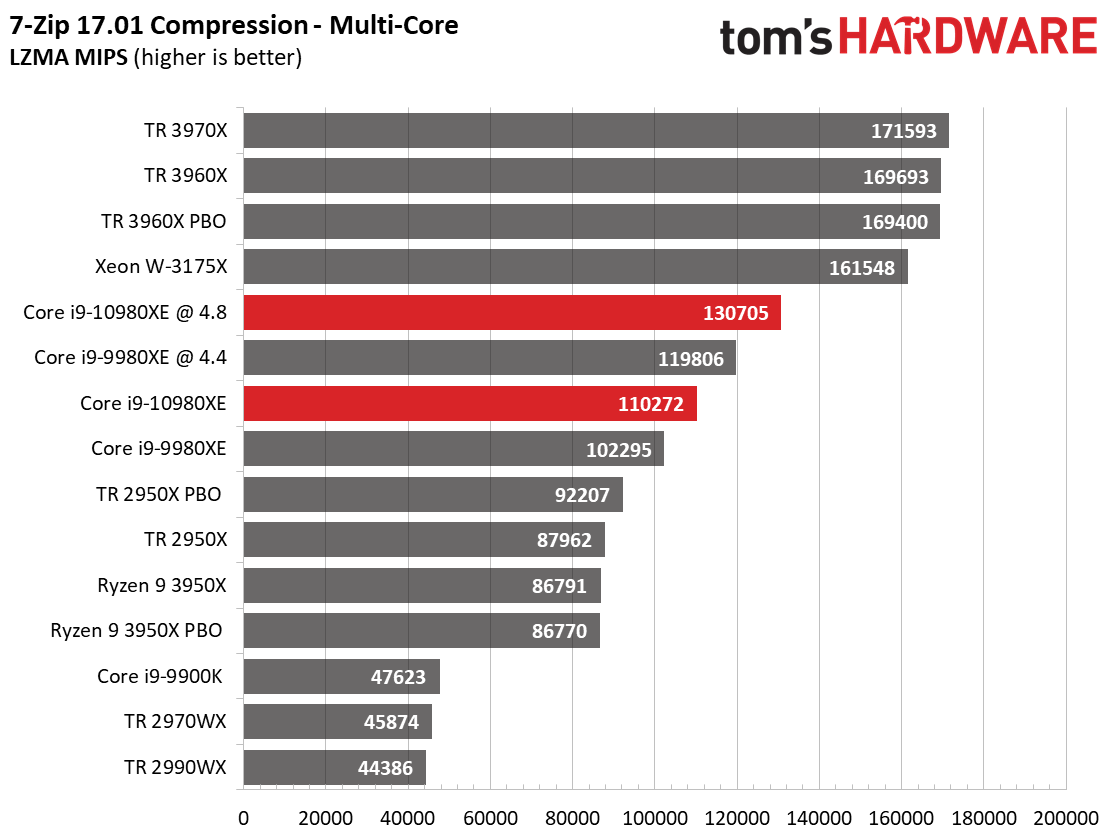

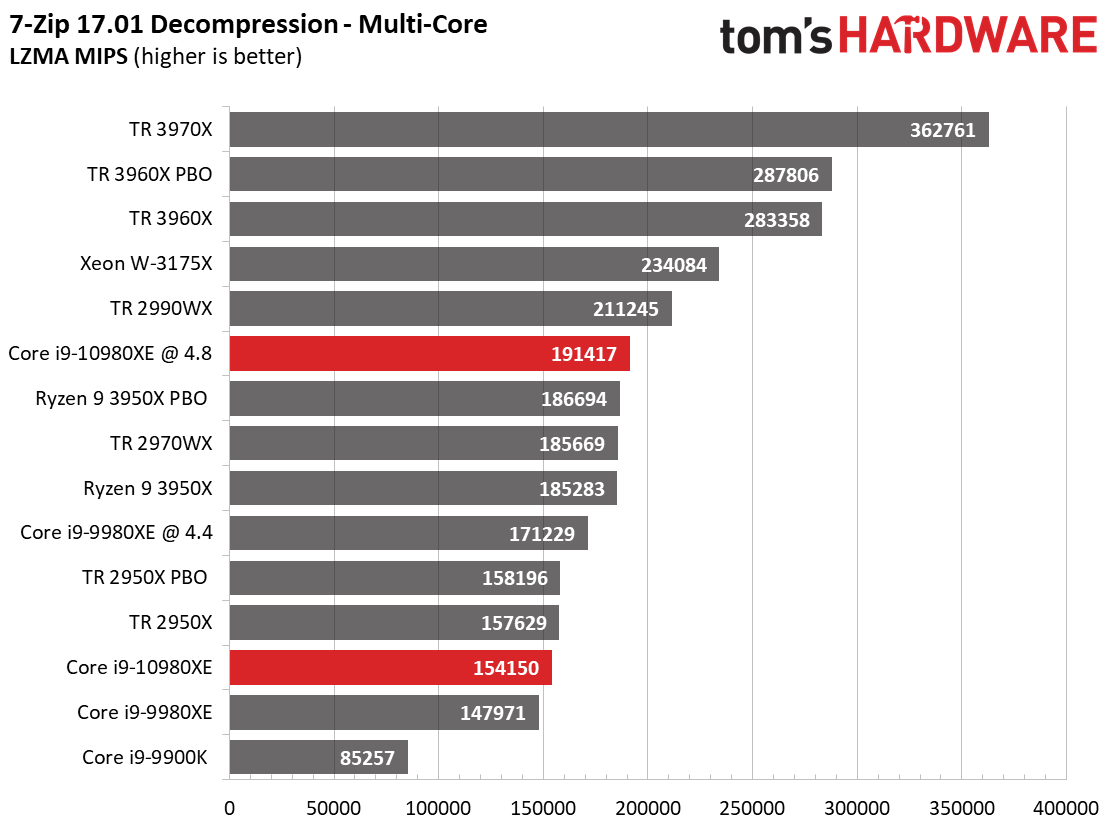

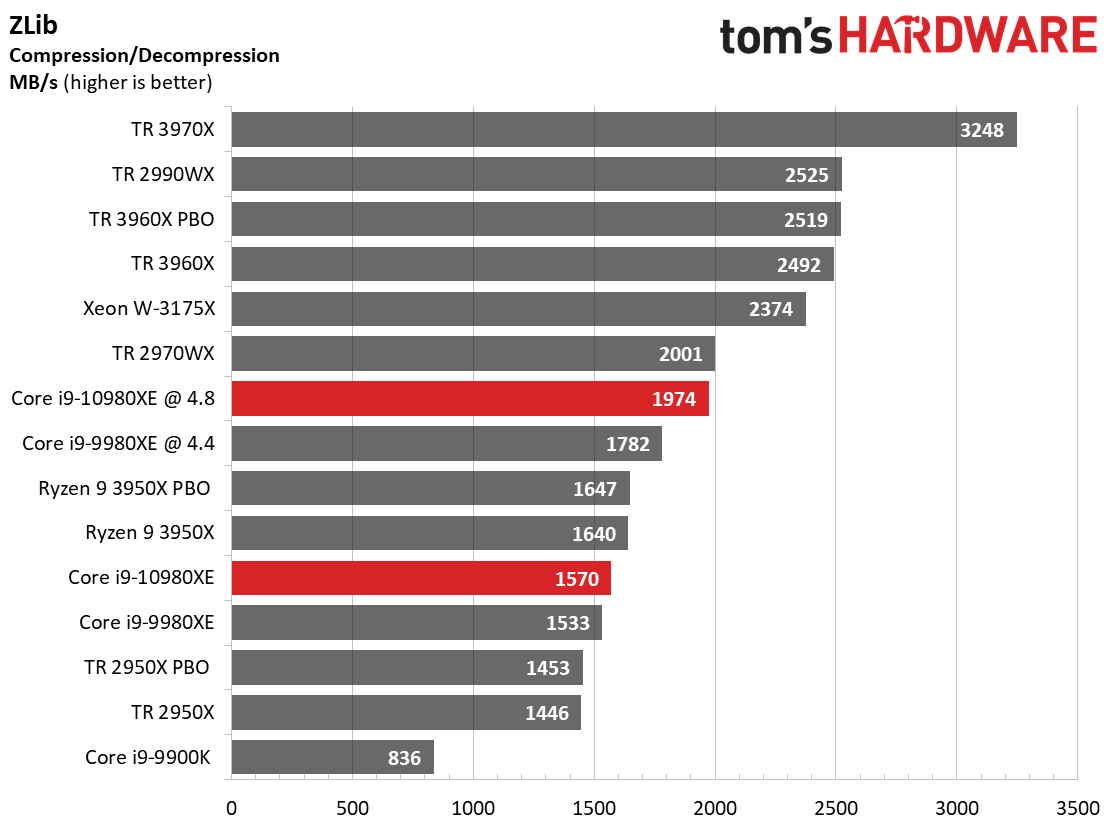

The 7zip and Zlib compression/decompression benchmarks rely heavily upon threading and work directly from system memory, thus avoiding the traditional storage bottleneck in these types of tasks.
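To illustrate what "threaded and working directly from system memory" means in practice, here is a minimal Python sketch. It is not the benchmark used in these charts: the helper names, buffer sizes, and thread count are assumptions chosen for illustration. It relies on CPython's `zlib` module releasing the global interpreter lock while it compresses, so several buffers can be processed in parallel without touching storage.

```python
import os
import time
import zlib
from concurrent.futures import ThreadPoolExecutor
from typing import List


def make_chunks(n_chunks: int, chunk_size: int) -> List[bytes]:
    """Build compressible in-memory buffers (hypothetical test data)."""
    pattern = os.urandom(1024)
    return [pattern * (chunk_size // 1024) for _ in range(n_chunks)]


def compress_all(chunks: List[bytes], workers: int) -> List[bytes]:
    """Compress every buffer in RAM, one task per chunk, across threads."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(zlib.compress, chunks))


def decompress_all(blobs: List[bytes], workers: int) -> List[bytes]:
    """Decompress every blob in RAM across threads."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(zlib.decompress, blobs))


if __name__ == "__main__":
    # Assumed workload: 16 buffers of 1 MiB each, never written to disk.
    chunks = make_chunks(n_chunks=16, chunk_size=1 << 20)
    workers = os.cpu_count() or 1

    start = time.perf_counter()
    blobs = compress_all(chunks, workers)
    elapsed = time.perf_counter() - start

    total_mb = sum(len(c) for c in chunks) / 1e6
    print(f"compressed {total_mb:.1f} MB in {elapsed:.3f} s on {workers} threads")

    # Round-trip check: decompression must reproduce the original buffers.
    assert decompress_all(blobs, workers) == chunks
```

Because the input never leaves RAM, the timing reflects core count, clock speed, and memory behavior rather than drive speed, which is the property the paragraph above describes.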

The first-gen Threadripper processors are notorious for an unexplained deficiency in threaded 7zip compression workloads that finds them trailing even the eight-core Core i9-9900K, but third-gen Threadripper marks a tremendous step forward in compression workloads. Meanwhile, the 3950X doesn't fare quite as well in compression, but proves nimble with decompression as it easily beats the stock -10980XE.

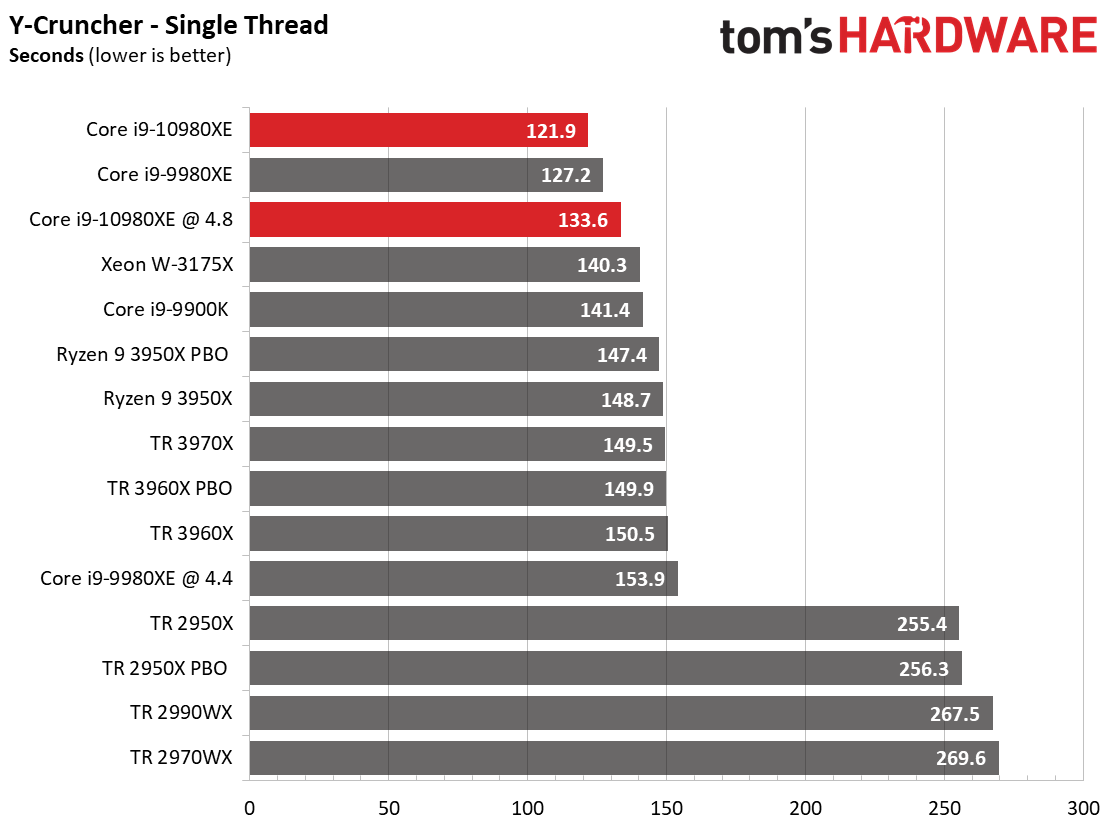

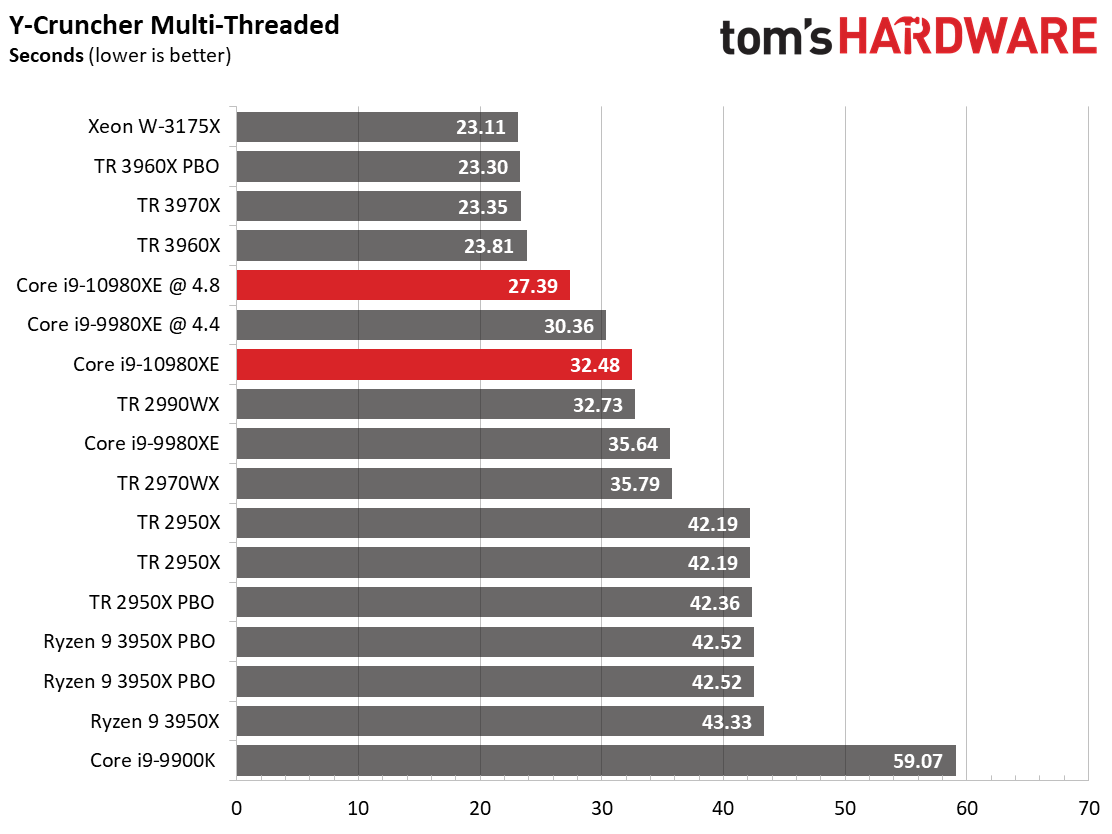

The 2970WX suffers during the single-threaded y-cruncher benchmark, which computes pi using AVX instructions, while the 3950X is far more competitive. That said, the -10980XE caps an all-Intel win.

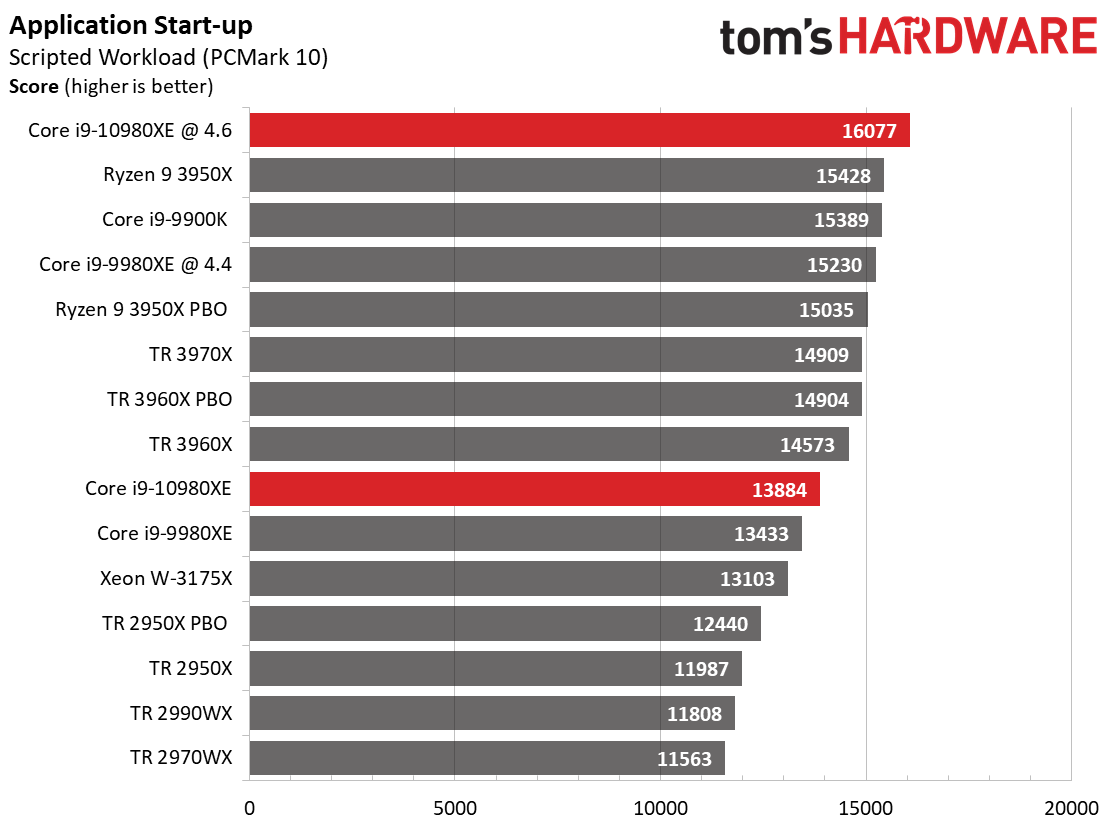

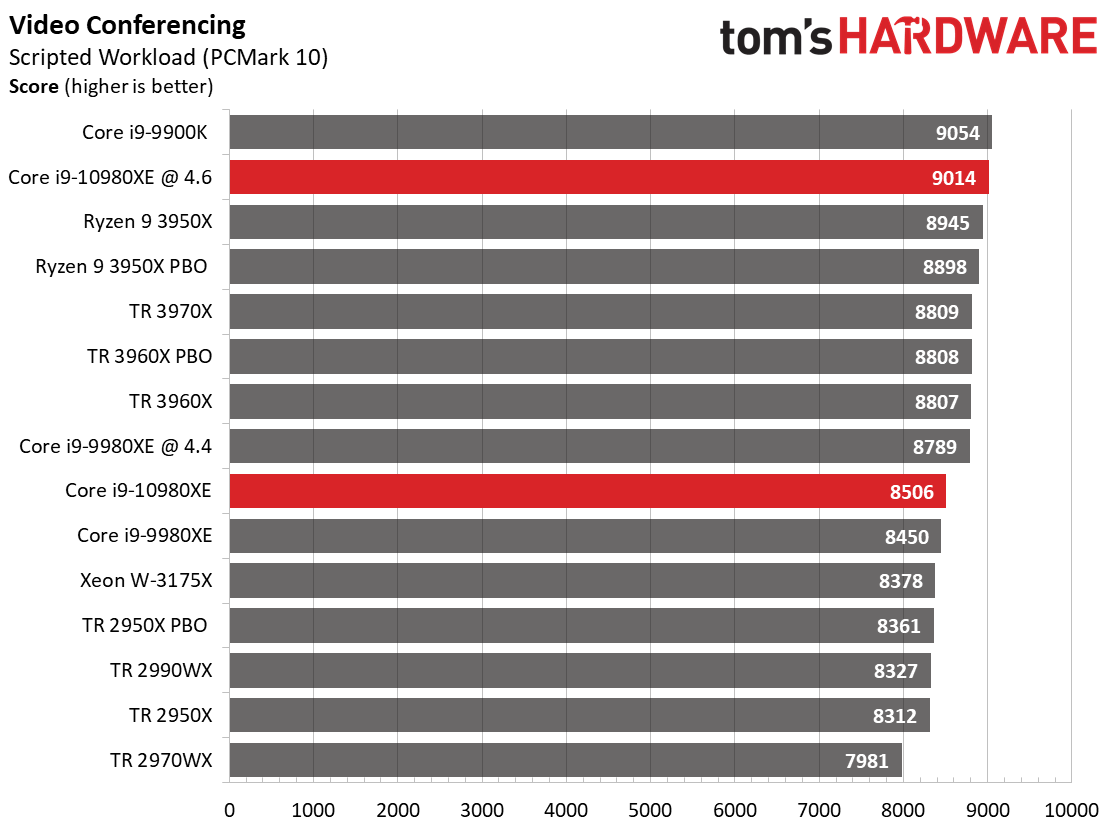

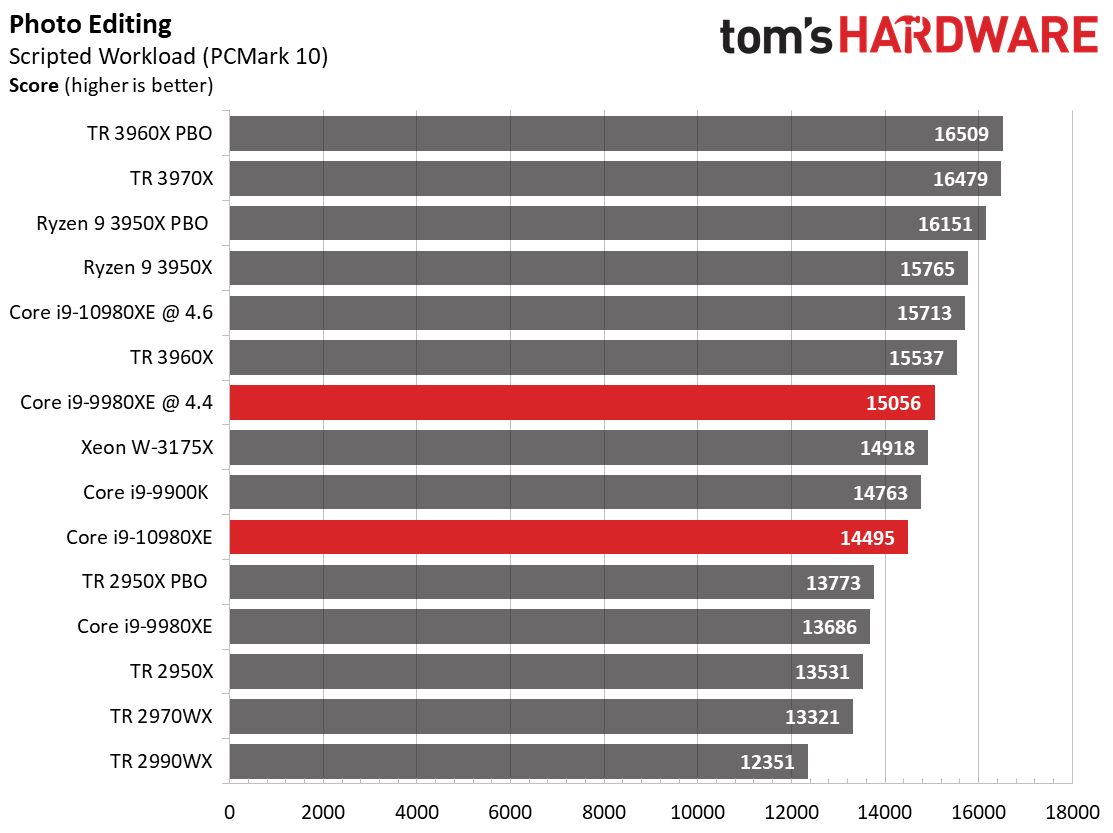

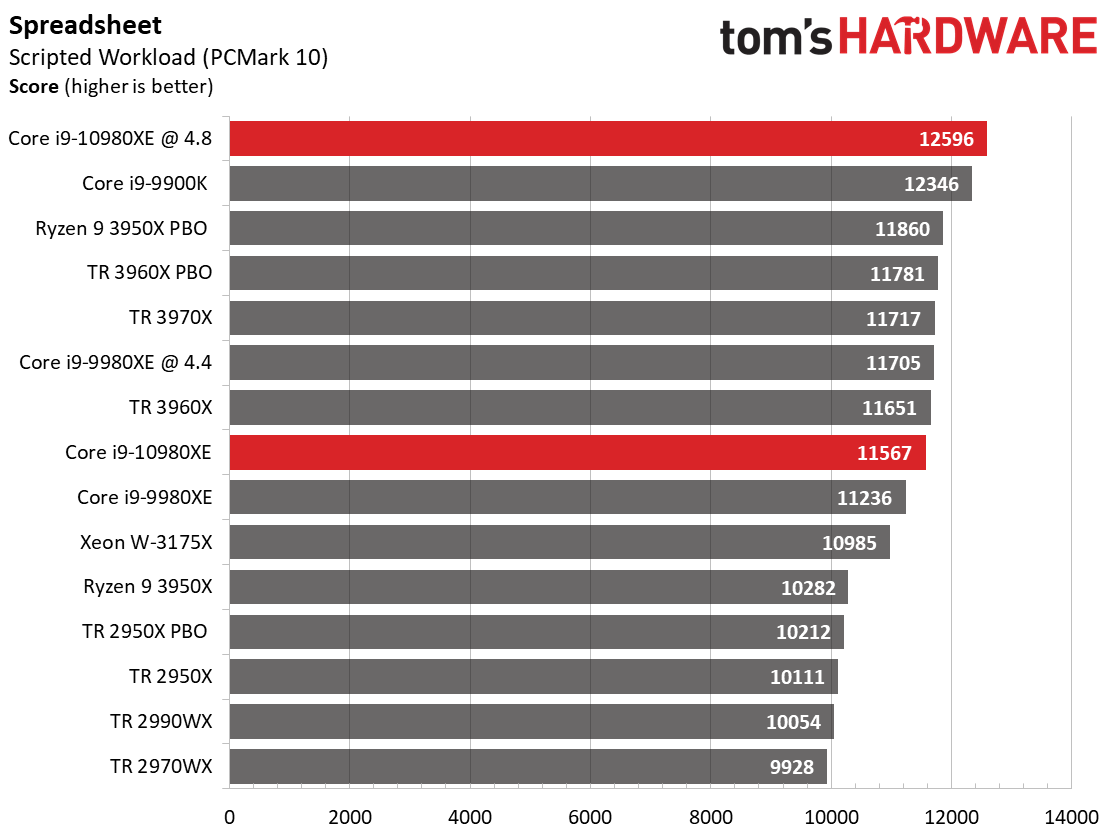

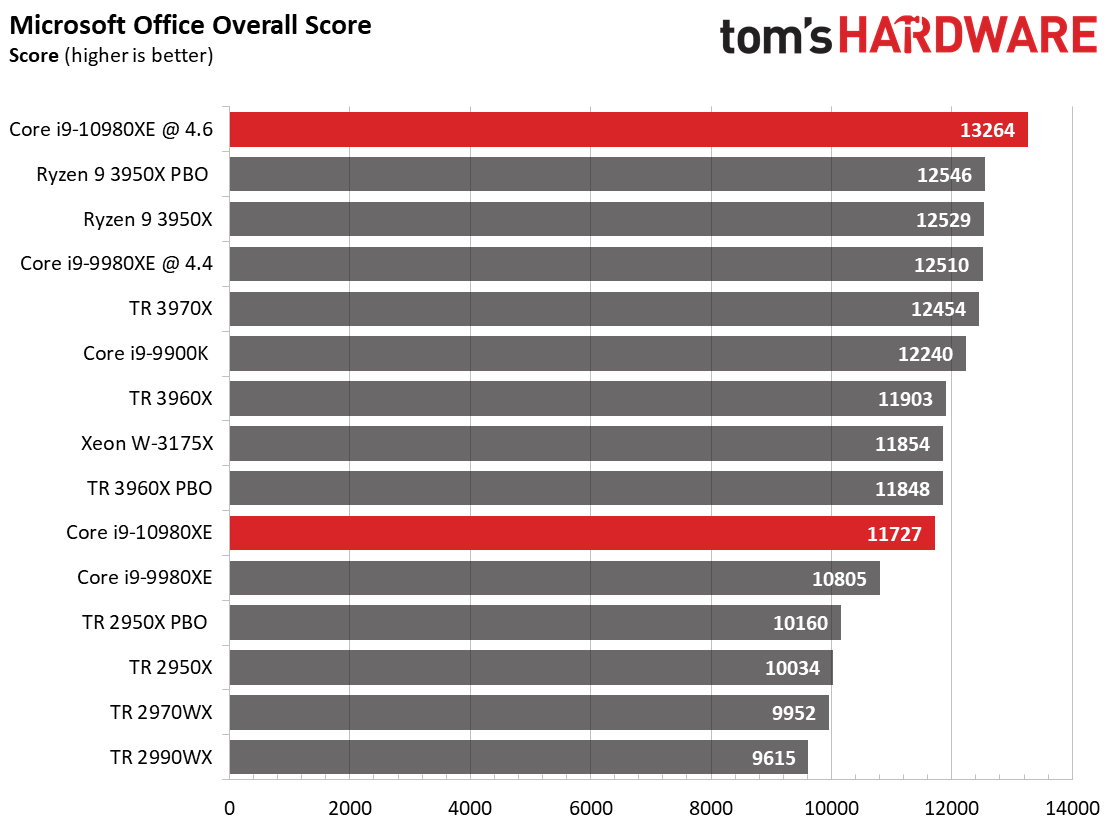

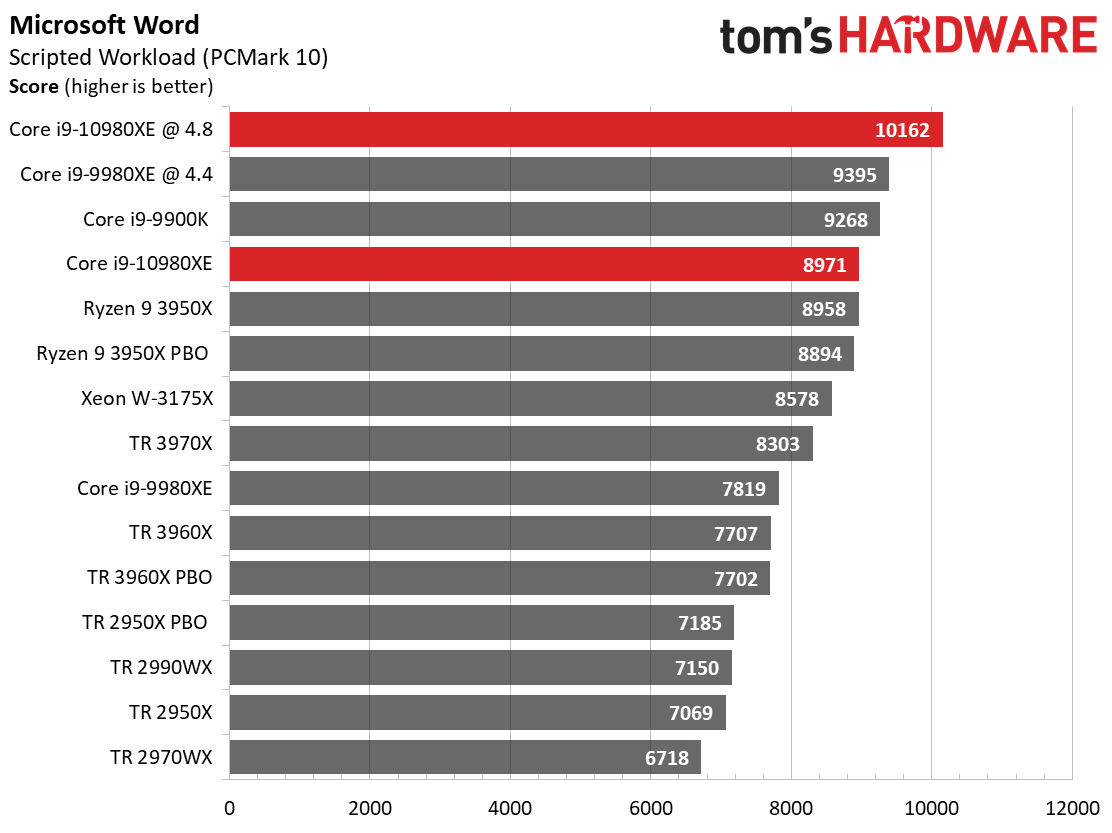

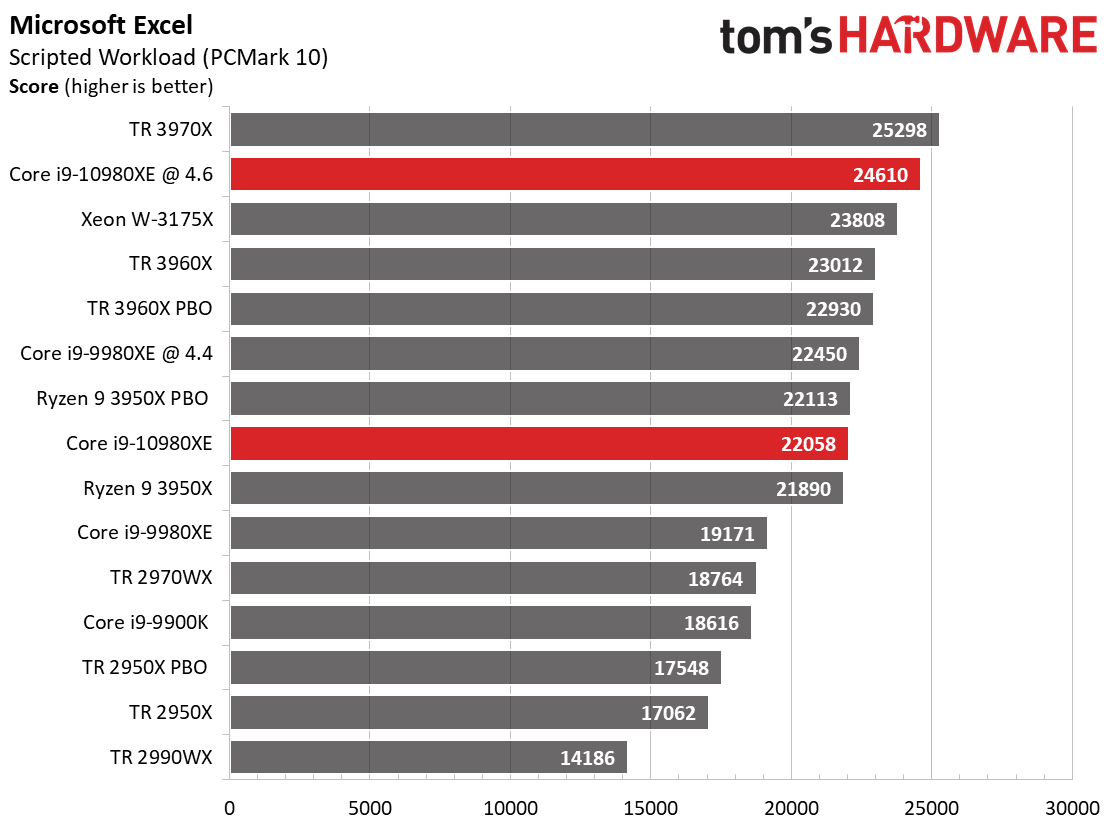

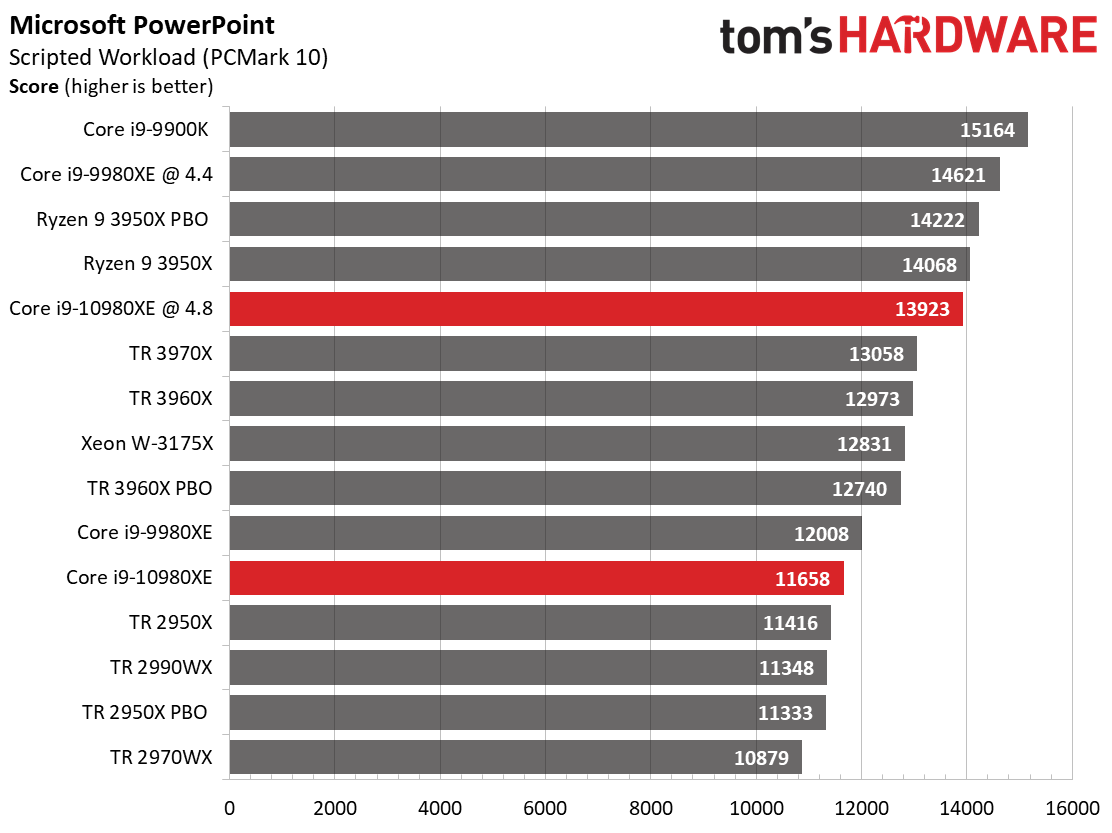

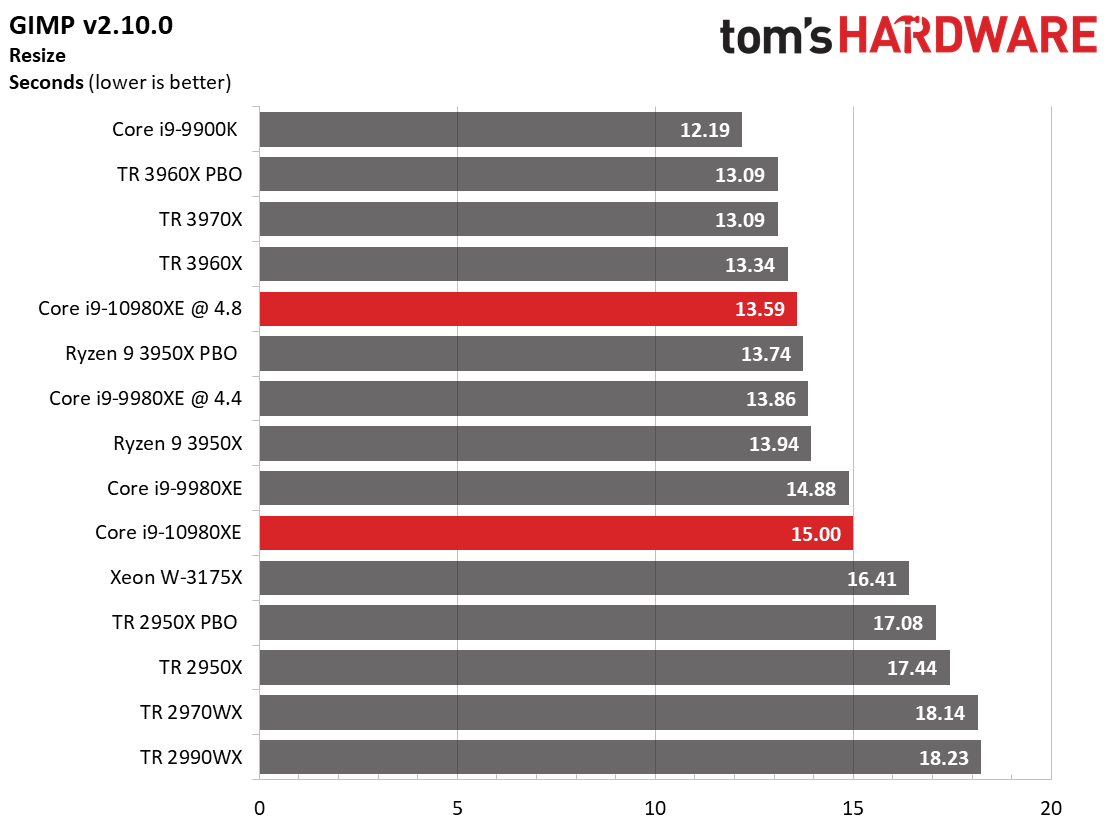

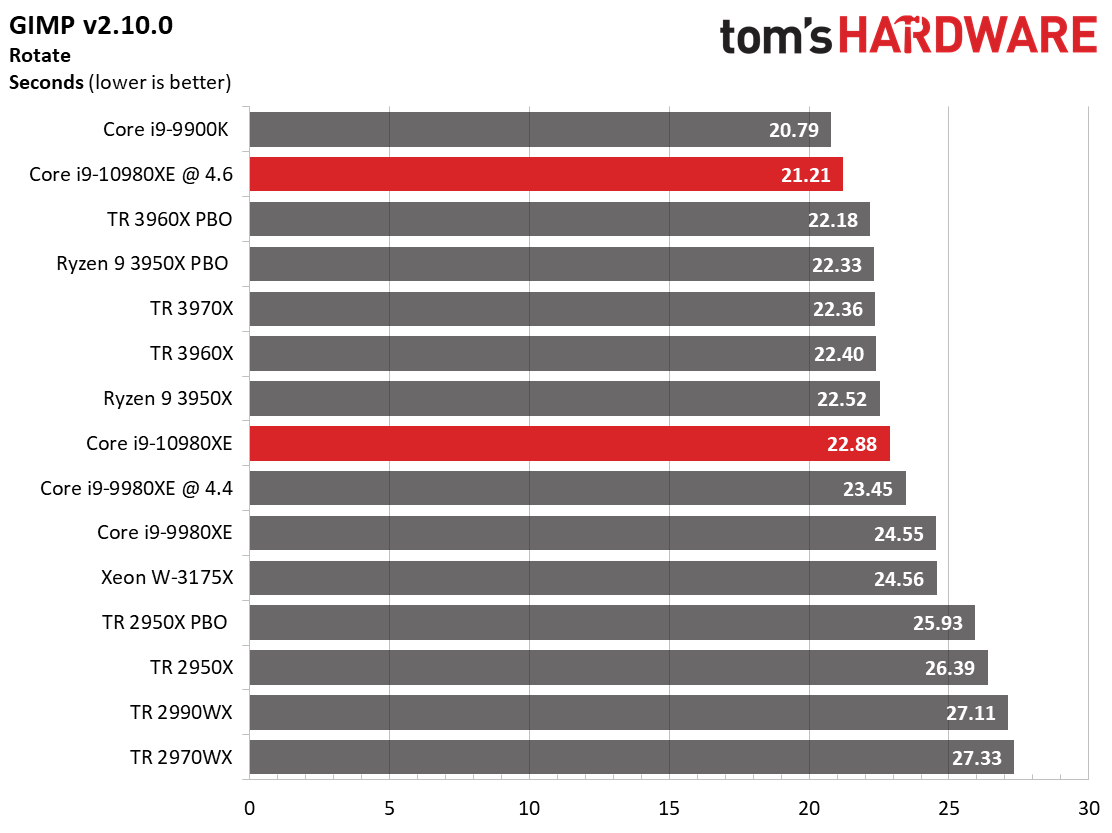

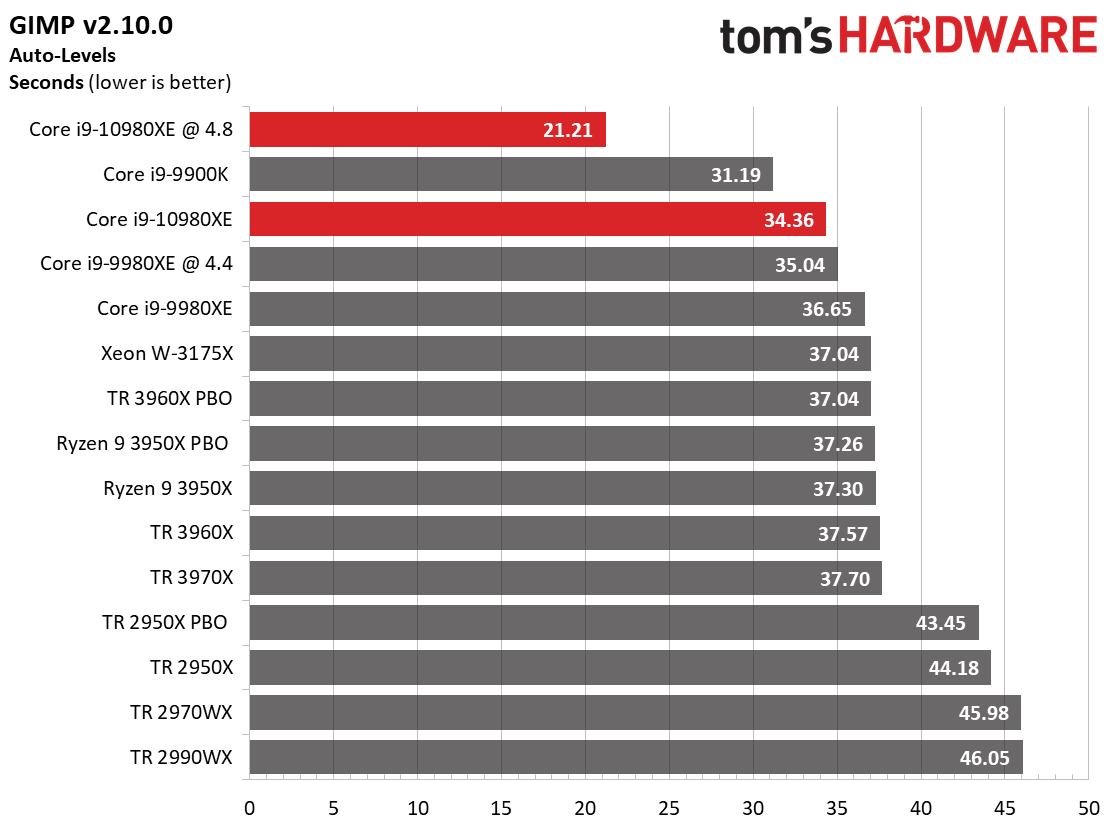

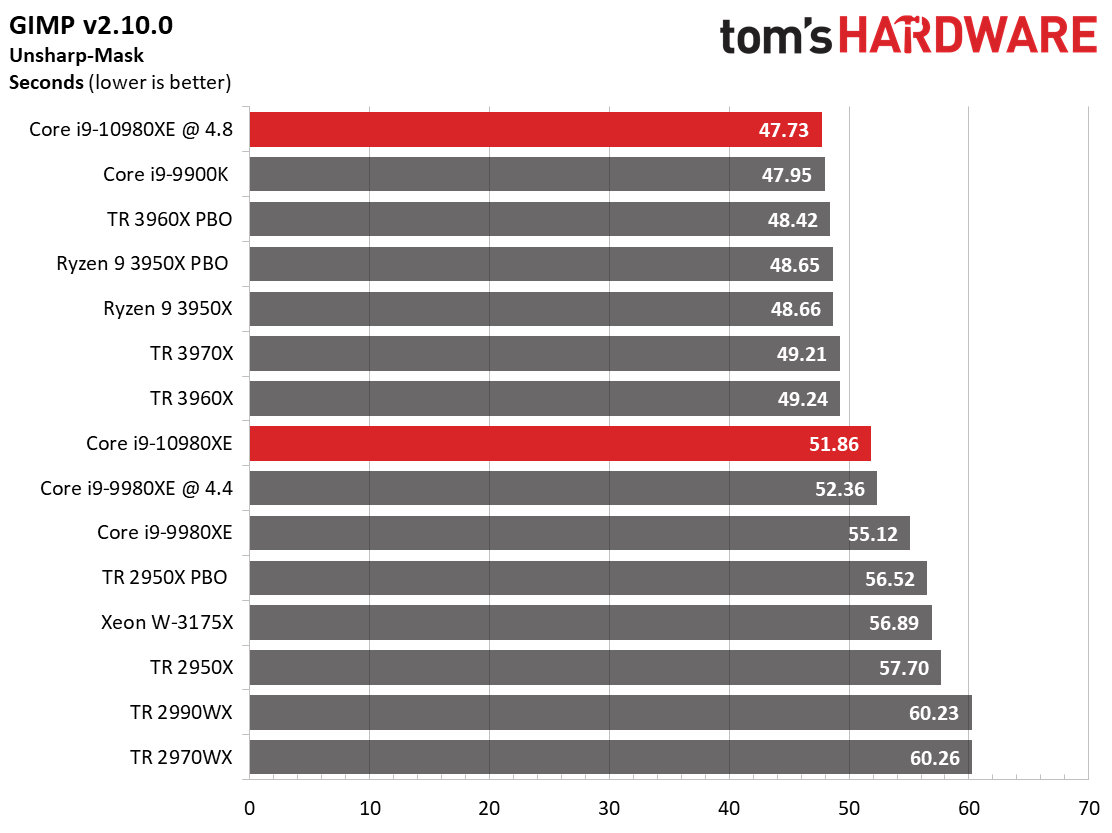

Office and Productivity

Microsoft's office suite runs via PCMark 10's new application test and uses real Microsoft Office applications. It seems like an odd fit to test these fire-breathing processors in such mundane tasks, but Office is ubiquitous. As we've come to expect, the overclocked -10980XE leads the tests, but the Ryzen 9 3950X challenges, and often beats, the stock Core i9-10980XE.

The application start-up metric measures load time snappiness in word processors, GIMP, and Web browsers. Other platform-level considerations affect this test as well, including the storage subsystem.

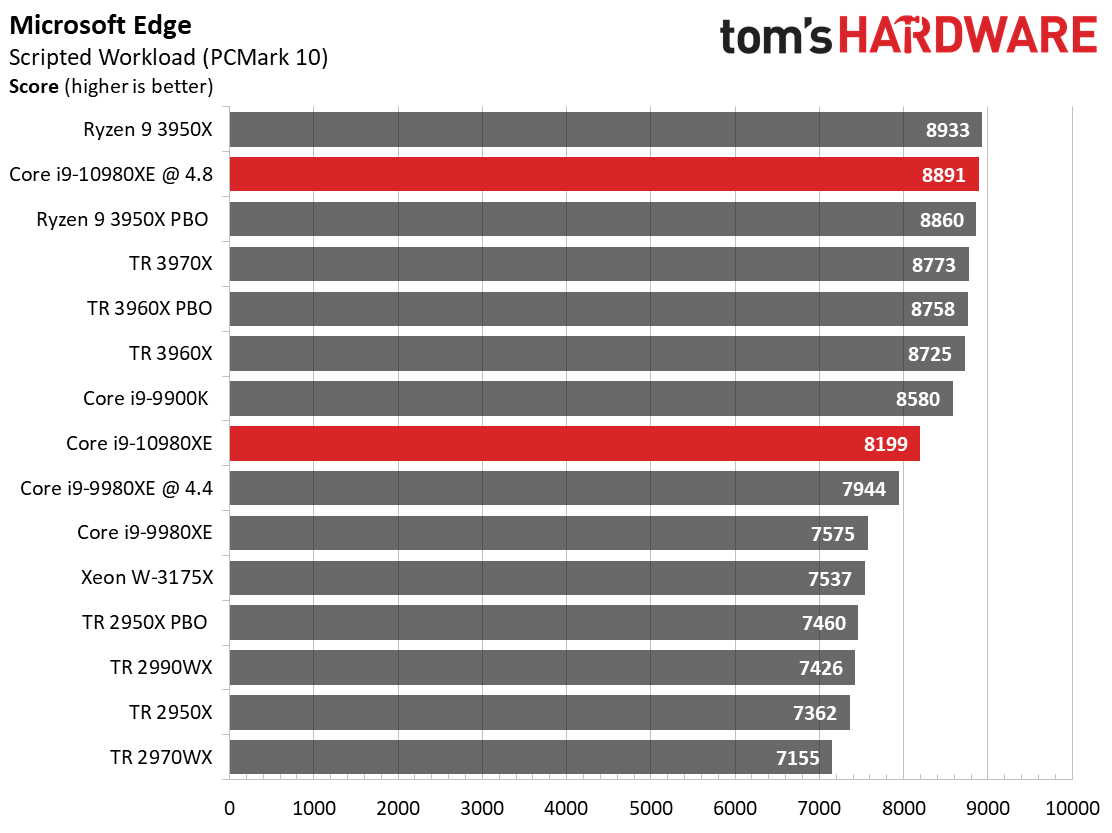

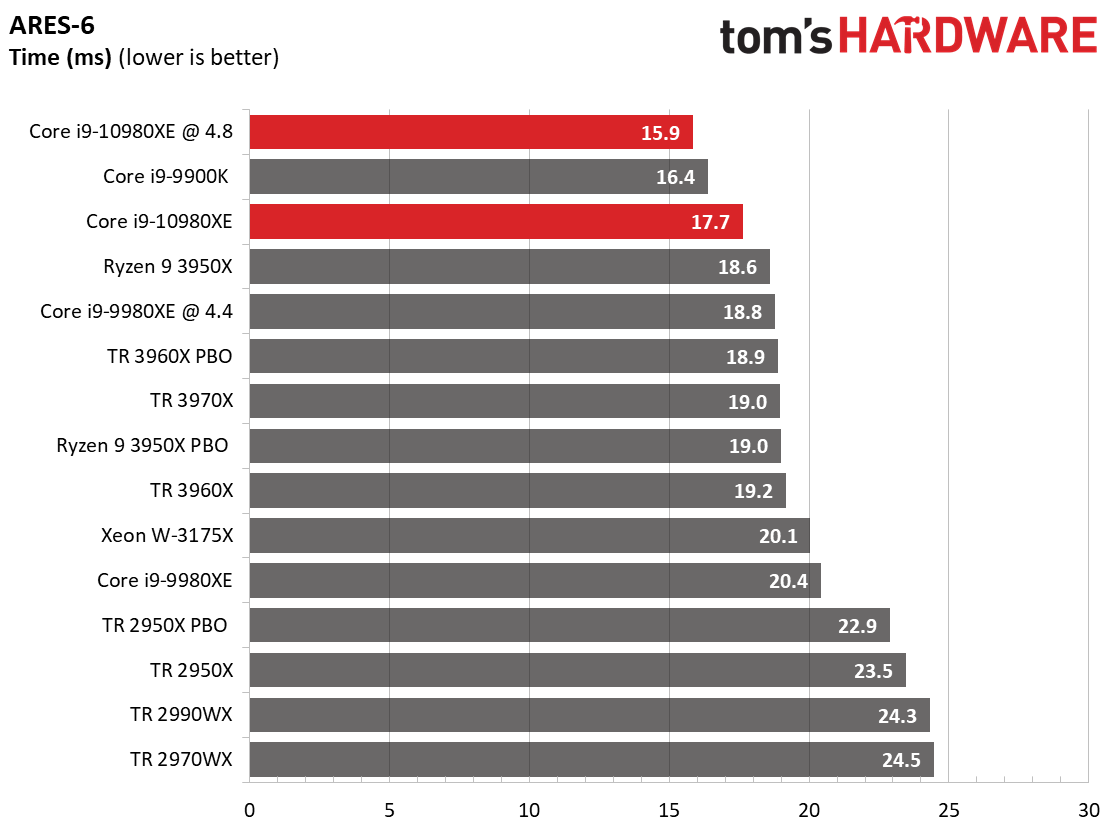

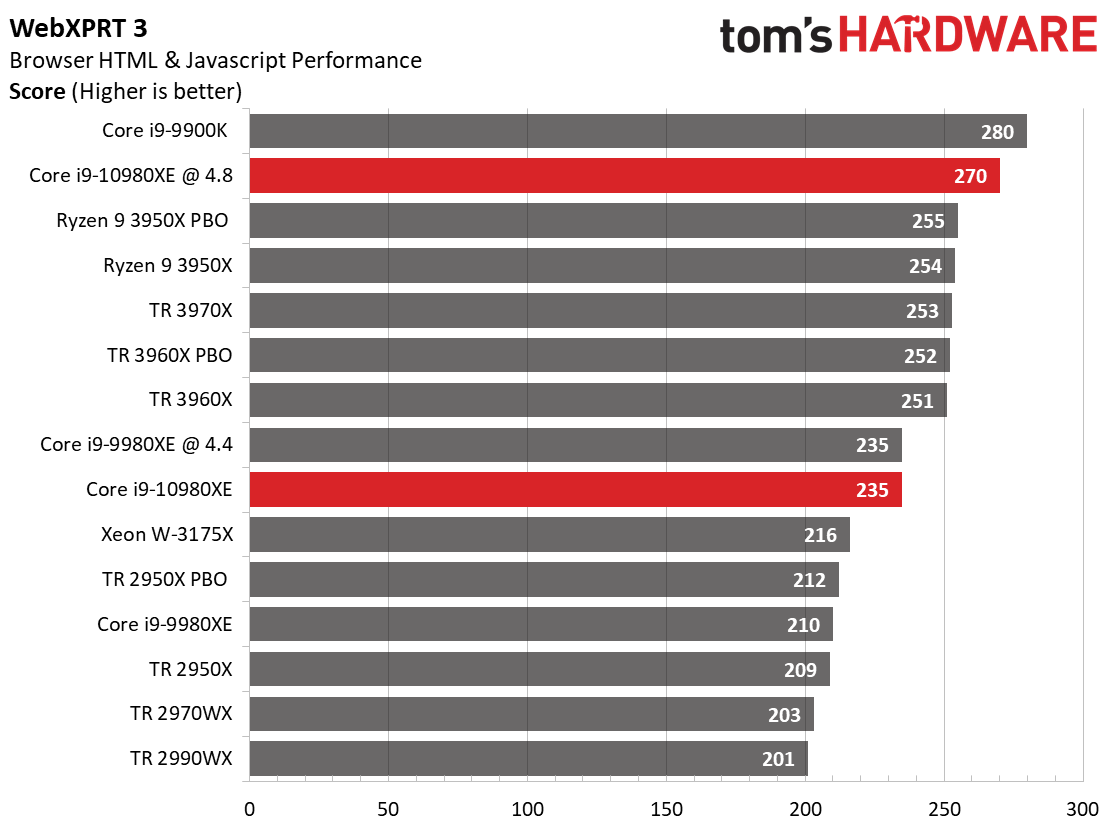

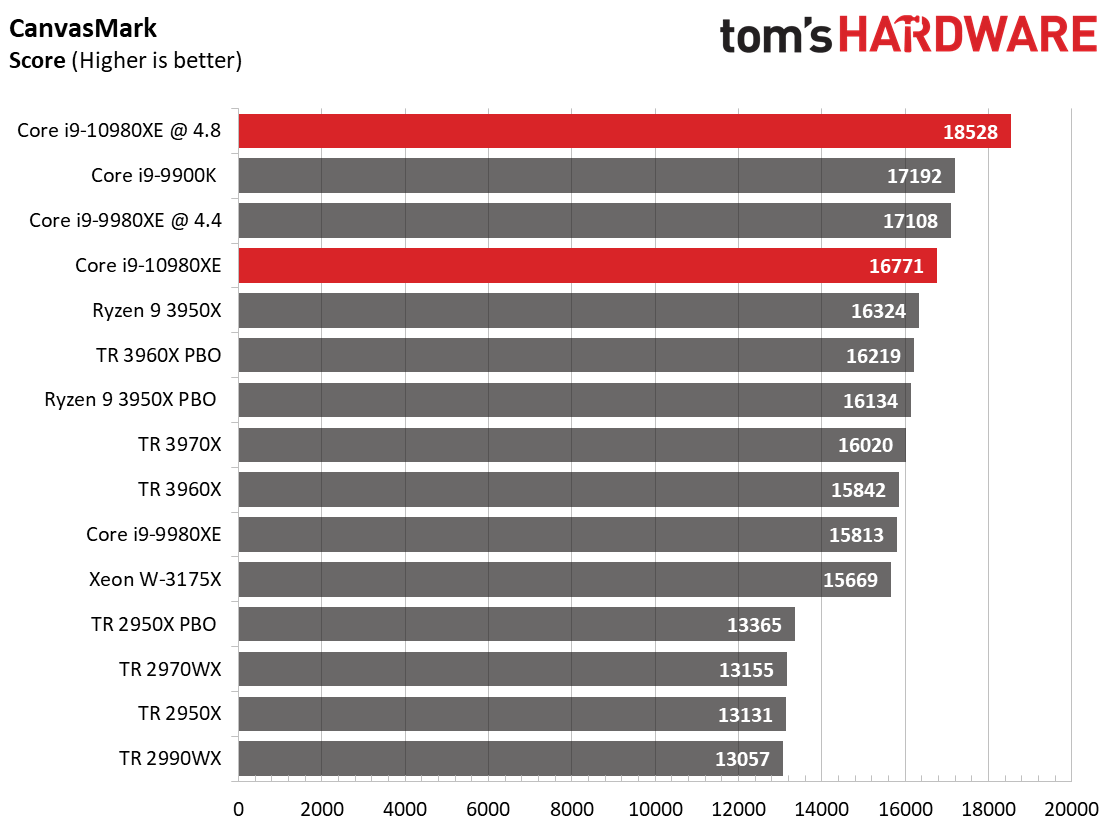

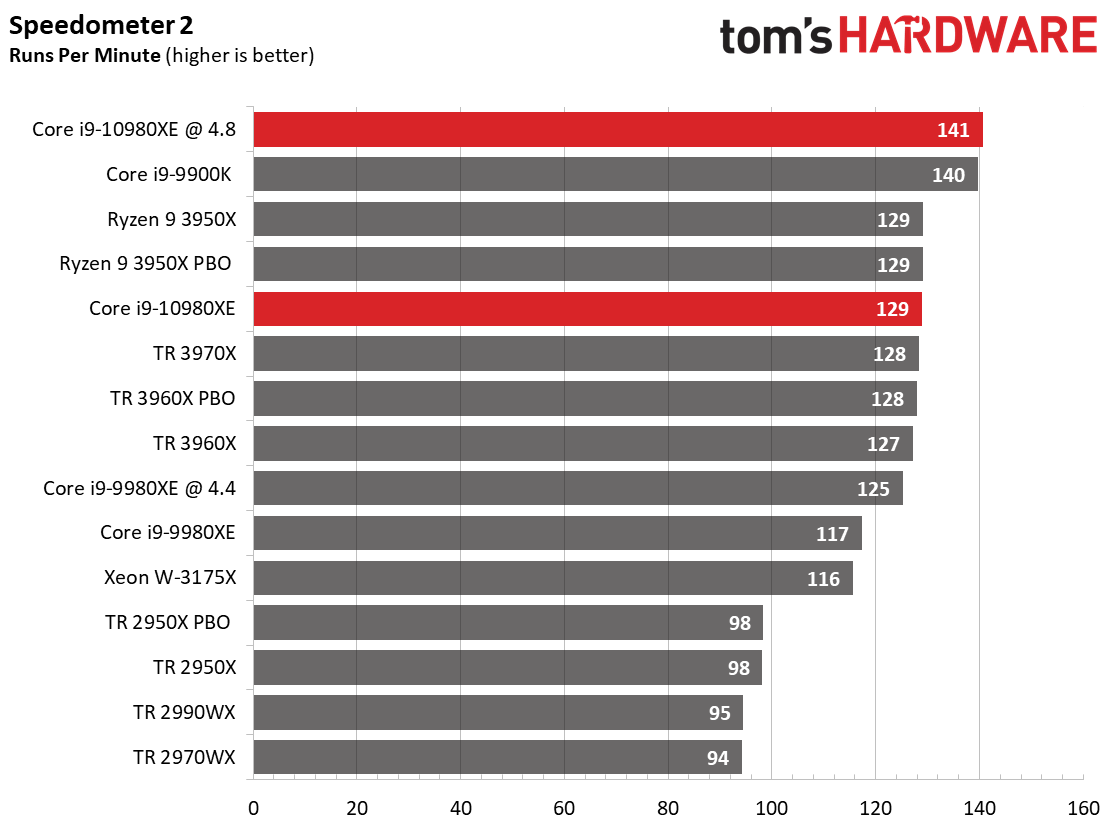

Web Browser

Browsers tend to be impacted more by the recent security mitigations than other types of applications, so Intel has generally taken a haircut in these benchmarks of fully patched systems. Unsurprisingly, the Ryzen 9 3950X and Core i9-9900K are pretty agile in these workloads, but the Core i9-10980XE in stock trim is plenty snappy, too.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

Ful4n1t0c0sme

Games benchmarks on a non gamer CPU. There is no sense. Please do compiling benchmarks and other stuff that make sense.

And please stop using Windows to do that.

Pat Flynn

Ful4n1t0c0sme said: Games benchmarks on a non gamer CPU. There is no sense. Please do compiling benchmarks and other stuff that make sense.

And please stop using Windows to do that.

While I agree that some Linux/Unix benchmarks should be present, the inclusion of gaming benchmarks helps not only pro-sumers, but game developers as well. It'll let them know how the CPU handles certain game engines, and whether or not they should waste tons of money on upgrading their dev teams systems.

Re: I used to build systems for Bioware...

Paul Alcorn

Ful4n1t0c0sme said: Games benchmarks on a non gamer CPU. There is no sense. Please do compiling benchmarks and other stuff that make sense.

And please stop using Windows to do that.

Intel markets these chips at gamers, so we check the goods.

9 game benchmarks

28 workstation-focused benchmarks

40 consumer-class application tests

boost testing

power/thermal testing, including efficiency metrics

overclocking testing/data

I'm happy with that mix.

IceQueen0607

Disclaimer: I badly want to dump Intel and go AMD. But are the conditions right?

The AMD 3950X has 16 PCIe lanes, right? So for those of us who have multiple adapters such as RAID cards, USB or SATA port adapters, 10G NICs, etc, HEDT is the only way to go.

Someone once told me "No one in the world needs more than 16PCIe lanes, that's why mainstream CPUs have never gone over 16 lanes". If that were true the HEDT CPUs would not exist.

So we can say the 3950X destroys the Intel HEDT lineup, but only if you don't have anything other than ONE graphics card. As soon as you add other devices, you're blown.

The 3970X is $3199 where I am. That will drop by $100 by 2021.

The power consumption of 280w will cost me an extra $217 per year per PC. There are 3 HEDT PCs, so an extra $651 per year.

AMD: 1 PC @ 280w for 12 hours per day for 365 days at 43c per kilowatt hour = $527.74

Intel: 1 PC @ 165w for 12 hours per day for 365 days at 43c per kilowatt hour = $310.76

My 7900X is overclocked to 4.5GHZ all cores. Can I do that with any AMD HEDT CPU?

In summer the ambient temp here is 38 - 40 degrees Celsius. With a 280mm cooler and 11 case fans my system runs 10 degrees over ambient on idle, so 50c is not uncommon during the afternoons on idle. Put the system under load it easily sits at 80c and is very loud.

With a 280w CPU, how can I cool that? The article says that "Intel still can't deal with heat". Errr... Isn't 280w going to produce more heat than 165w. And isn't 165w much easier to cool? Am I missing something?

I'm going to have to replace motherboard and RAM too. That's another $2000 - $3000. With Intel my current memory will work and a new motherboard will set me back $900.

Like I said, I really want to go AMD, but I think the heat, energy and changeover costs are going to be prohibitive. PCIe4 is a big draw for AMD as it means I don't have to replace again when Intel finally gets with the program, but the other factors I fear are just too overwhelming to make AMD viable at this stage.

Darn it Intel is way cheaper when looked at from this perspective.
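The electricity-cost arithmetic in the comment above (watts × hours per day × days × tariff) can be checked with a short sketch. The function name is made up for illustration, and it keeps the commenter's own assumption that each CPU draws its full rated TDP for 12 hours every day, which real workloads rarely do.

```python
def annual_energy_cost(watts: float, hours_per_day: float,
                       price_per_kwh: float, days: int = 365) -> float:
    """Yearly electricity cost for a constant power draw.

    Assumes the draw stays at `watts` for the whole period, which is the
    simplification used in the comment, not a measured figure.
    """
    kwh = watts / 1000 * hours_per_day * days
    return kwh * price_per_kwh


# Inputs taken from the comment: 280 W vs 165 W, 12 h/day, $0.43 per kWh.
amd = annual_energy_cost(280, 12, 0.43)
intel = annual_energy_cost(165, 12, 0.43)

print(f"AMD:   ${amd:.2f}")
print(f"Intel: ${intel:.2f}")
print(f"Difference per PC:   ${amd - intel:.2f}")
print(f"Difference for 3 PCs: ${3 * (amd - intel):.2f}")
```

With these inputs the formula gives about $527.35 for the 280 W case (slightly below the $527.74 quoted) and $310.76 for the 165 W case, so the gap of roughly $217 per PC per year holds.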

redgarl

Ful4n1t0c0sme said: Games benchmarks on a non gamer CPU. There is no sense. Please do compiling benchmarks and other stuff that make sense.

And please stop using Windows to do that.

It's over pal... done, there is not even a single way to look at it the bright way, the 3950x is making the whole Intel HEDT offering a joke.

I would have given this chip 2 stars, but we know toms and their double standards. The only time they cannot do it is when the data is just plain dead impossible to contest... like Anandtech described, it is a bloodbath.

I don't believe Intel will get back from this anytime soon.

Paul Alcorn

IceQueen0607 said: <snip>

The AMD 3950X has 16 PCIe lanes, right? So for those of us who have multiple adapters such as RAID cards, USB or SATA port adapters, 10G NICs, etc, HEDT is the only way to go.

<snip>

The article says that "Intel still can't deal with heat".

<snip>

I agree with the first point here, which is why we point out that Intel has an advantage there for users that need the I/O.

On the second point, can you point me to where it says that in the article? I must've missed it. Taken in context, it says that Intel can't deal with the heat of adding more 14nm cores in the same physical package, which is accurate if it wants to maintain a decent clock rate.

ezst036

I'm surprised nobody caught this from the second paragraph of the article.

Intel's price cuts come as a byproduct of AMD's third-gen Ryzen and Threadripper processors, with the former bringing HEDT-class levels of performance to mainstream 400- and 500-series motherboards, while the latter lineup is so powerful that Intel, for once, doesn't even have a response.

For twice? This is a recall of the olden days of the first-gen slot-A Athlon processors. Now I'm not well-versed in TomsHardware articles circa 1999, but this was not hard to find at all:

Coppermine's architecture is still based on the architecture of Pentium Pro. This architecture won't be good enough to catch up with Athlon. It will be very hard for Intel to get Coppermine to clock frequencies of 700 and above and the P6-architecture may not benefit too much from even higher core clocks anymore. Athlon however is already faster than a Pentium III at the same clock speed, which will hardly change with Coppermine, and Athlon is designed to go way higher than 600 MHz. This design screams for higher clock speeds! AMD is probably for the first time in the very situation that Intel used to enjoy for such a long time. AMD might already be able to supply Athlons at even higher clock rates right now (650 MHz is currently the fastest Athlon), but there is no reason to do so.

https://www.tomshardware.com/reviews/athlon-processor,121-16.html

Intel didn't have a response back then either.

bigpinkdragon286

IceQueen0607 said: Disclaimer: I badly want to dump Intel and go AMD. But are the conditions right? <snip>

TDP is the wrong way to directly compare an Intel CPU with an AMD CPU. Neither vendor measures TDP in the same fashion so you should not compare them directly. On the most recent platforms, per watt consumed, you get more work done on the new AMD platform, plus most users don't have their chips running at max power 24/7, so why would you calculate your power usage against TDP even if it were comparable across brands?

Also, your need to have all of your cores clocked to a particular, arbitrarily chosen speed is a less than ideal metric to use if speed is not directly correlated to completed work, which after all is essentially what we want from a CPU.

If you really need to get so much work done that your CPU runs at its highest power usage perpetually, the higher cost of the power consumption is hardly going to be your biggest concern.

How about idle and average power consumption, or completed work per watt, or even overall completed work in a given time-frame, which make a better case about AMD's current level of competitiveness?
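The "completed work per watt" idea in the comment above can be made concrete with a small sketch. Energy per job is average power multiplied by the time the job takes, so a chip that draws more power but finishes sooner can still use less energy. The function name is an assumption, and the run times below are hypothetical placeholders, not measurements from this review; only the 280 W and 165 W figures come from the thread.

```python
def energy_per_task_wh(avg_watts: float, seconds: float) -> float:
    """Energy used to finish one job, in watt-hours (power x time)."""
    return avg_watts * seconds / 3600


# Hypothetical example: the run times are invented for illustration only.
chip_a = energy_per_task_wh(avg_watts=280, seconds=60)   # higher draw, faster
chip_b = energy_per_task_wh(avg_watts=165, seconds=120)  # lower draw, slower

print(f"Chip A: {chip_a:.2f} Wh per job")
print(f"Chip B: {chip_b:.2f} Wh per job")
print("Lower energy per job:", "A" if chip_a < chip_b else "B")
```

Under these made-up run times the 280 W part uses less energy per job than the 165 W part, which is why comparing rated power alone can mislead.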

Crashman

ezst036 said: I'm surprised nobody caught this from the second paragraph of the article. <snip>

Fun times. The Tualatin was based on Coppermine and went to 1.4 GHz, outclassing Willamette at 1.8GHz by a wide margin. Northwood came out and beat it, but at the same time Intel was developing Pentium M based on...guess what? Tualatin.

And then Core came out of Pentium M, etc etc etc and it wasn't long before AMD couldn't keep up.

Ten years we waited for AMD to settle the score, and it's our time to enjoy their time in the sun.

IceQueen0607

PaulAlcorn said: I agree with the first point here, which is why we point out that Intel has an advantage there for users that need the I/O.

On the second point, can you point me to where it says that in the article? I must've missed it. Taken in context, it says that Intel can't deal with the heat of adding more 14nm cores in the same physical package, which is accurate if it wants to maintain a decent clock rate.

yes, sorry, my interpretation was not worded accurately.

Intel simply doesn't have room to add more cores, let alone deal with the increased heat, within the same package.

My point was that Intel is still going to be easier to cool producing only 165w vs AMD's 280w.

How do you calculate the watts, or heat for an overclocked CPU? I'm assuming the Intel is still more over-clockable than the AMD, so given the 10980XE's base clock of 3.00ghz, I wonder if I could still overclock it over 4.00ghz. How much heat would it produce then compared to the AMD?

Not that I can afford to spend $6000 to upgrade to the 3970X or $5000 to upgrade to the 3960X... And the 3950X is out because of PCIe lane limitations.

It looks like I'm stuck with Intel, unless I save my coins to go AMD. Makes me sick to the pit of my stomach :)