Nvidia To Power Japan's 'Fastest AI Supercomputer' This Summer

The Tokyo Institute of Technology announced plans to launch Japan's "fastest AI supercomputer" this summer. The supercomputer, called Tsubame3.0, will use Nvidia’s GPU accelerators to double its performance over the Tsubame2.5 predecessor.

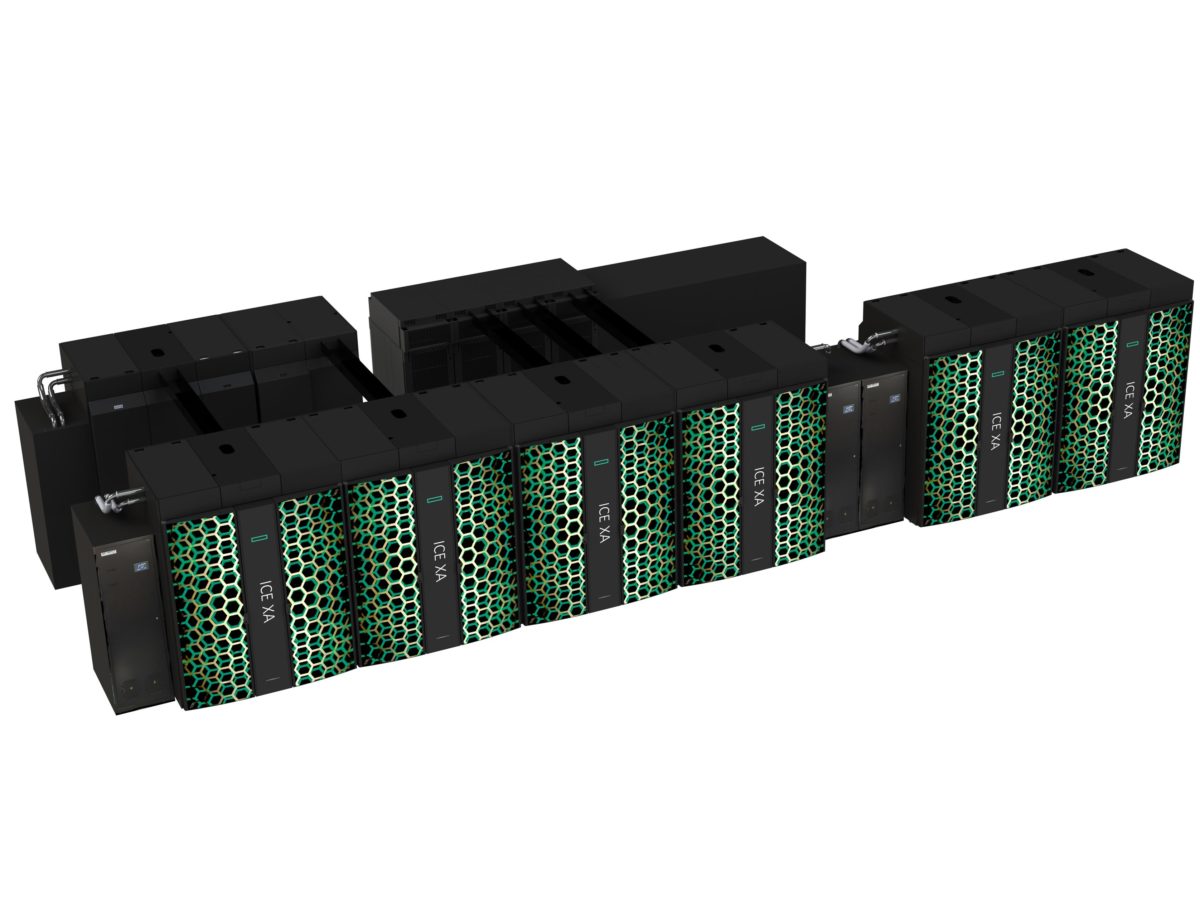

Tsubame3.0

The new Tsubame3.0 will use Nvidia’s latest Pascal-based Tesla P100 GPUs, which the company said are 3x as efficient as their predecessors. The new GPUs will deliver up to 12.2 petaflops of double-precision performance, which would rank Tsubame3.0 among the world’s 10 fastest supercomputers.

The supercomputer will be able to deliver up to 47 petaflops of what Nvidia calls “AI computation.” In practice, that means half-precision computation, which is one of the precision sweet spots for AI workloads (Nvidia has made GPUs with 8-bit precision as well, targeted mainly at systems that need only inference computation).

Nvidia also said that when working together with the Tsubame2.5, the two supercomputers can deliver up to 64.3 petaflops, making the combined system Japan’s highest performing AI supercomputer.
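As a rough sanity check on these figures, the short sketch below works out what they imply. It uses only the numbers quoted in this article and assumes the combined 64.3 petaflops is a simple sum of the two systems' "AI computation" ratings, which Nvidia's statement does not confirm. The function names are ours, chosen for illustration.

```python
# Figures quoted in the article, in petaflops.
TSUBAME3_FP64_PFLOPS = 12.2   # double-precision peak
TSUBAME3_AI_PFLOPS = 47.0     # half-precision "AI computation" peak
COMBINED_AI_PFLOPS = 64.3     # Tsubame3.0 working together with Tsubame2.5


def implied_tsubame25_pflops(combined: float, tsubame3: float) -> float:
    """Share left for Tsubame2.5, ASSUMING the combined figure is a plain sum."""
    return round(combined - tsubame3, 1)


def half_to_double_ratio(half_pflops: float, double_pflops: float) -> float:
    """How many times larger the half-precision peak is than the double-precision peak."""
    return half_pflops / double_pflops


if __name__ == "__main__":
    share = implied_tsubame25_pflops(COMBINED_AI_PFLOPS, TSUBAME3_AI_PFLOPS)
    ratio = half_to_double_ratio(TSUBAME3_AI_PFLOPS, TSUBAME3_FP64_PFLOPS)
    print(f"Implied Tsubame2.5 contribution: {share} petaflops")
    print(f"Half- to double-precision ratio: {ratio:.2f}x")
```

Under that assumption, roughly 17.3 petaflops of the combined figure would come from Tsubame2.5, and Tsubame3.0's half-precision peak is a little under four times its double-precision peak.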

The Tsubame3.0 is expected to be used for education and technology research at Tokyo Tech. However, it will also be accessible to researchers from the private sector and other universities.

Tokyo Tech’s Satoshi Matsuoka, a professor of computer science who is building the system, said: “Nvidia’s broad AI ecosystem, including thousands of deep learning and inference applications, will enable Tokyo Tech to begin training Tsubame3.0 immediately to help us more quickly solve some of the world’s once unsolvable problems.”

Supercomputers’ Adoption Of AI Chips

The high-performance computing (HPC) market has traditionally been quite different from the emerging machine learning/AI market. Supercomputers have mostly been used for applications such as weather prediction, climate modeling, and space and nuclear simulations.

However, the rise of AI-optimized chips is starting to blend the two markets together, as supercomputers start adopting the same kind of chips. As the AI chip market evolves, the chips become more efficient, higher-performance, and more cost-effective. This alone makes them interesting to supercomputer customers.

The applications of both markets are starting to resemble one another as well, as more scientists start to use machine learning for their work. Many of the simulations are heavy on image recognition computation, which again makes AI-optimized chips useful to certain supercomputer customers. As the AI market continues to grow at a rapid pace, we’ll likely continue to see supercomputer-using institutions adopt machine learning chips in their next-generation supercomputers.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.

-

bit_user There's a ton of interesting details missing, not least of which is what interconnect they're using. What's more, you'd think Tom's wouldn't miss the fact that it's water cooled. More at:

https://www.nextplatform.com/2017/02/17/japan-keeps-accelerating-tsubame-3-0-ai-supercomputer/

Sorry, but you guys just dropped the ball on this one.

-

Faux_Grey Nobody ever really mentions the underlying network connecting these nodes.

Article just be like "nvidia powers japan's fastest supercomputer"

Nobody ever goes "several manufacturers build some crazy f***ing tech."

-

bit_user

Faux_Grey said: "Nobody ever really mentions the underlying network connecting these nodes."

It's Intel's Omnipath (100 Gbps). Check the link in my post.

It sounds very much like they prioritized building this with existing, off-the-shelf tech. From Broadwell-E Xeons to P100 GPUs, it sounds like they're trying to keep it (relatively) cheap and simple. Even the ratio of 4x P100's with 2x Xeons is surely about avoiding the need for a PCIe switch (each of those Xeons has 40x PCIe 3.0 lanes, which it can use to host 2x P100s).

-

FaceBob Fairly certain that, actually, electricity will power Japan's 'Fastest AI Supercomputer'.

Sorry. *runs away*

-

Pompompaihn How can this be the fastest and there's no mention of how many case windows or RGB lights it has?