Nvidia's DLSS Technology Analyzed: It All Starts With Upscaling

Could DLSS be the Future of Entry-Level GPUs?

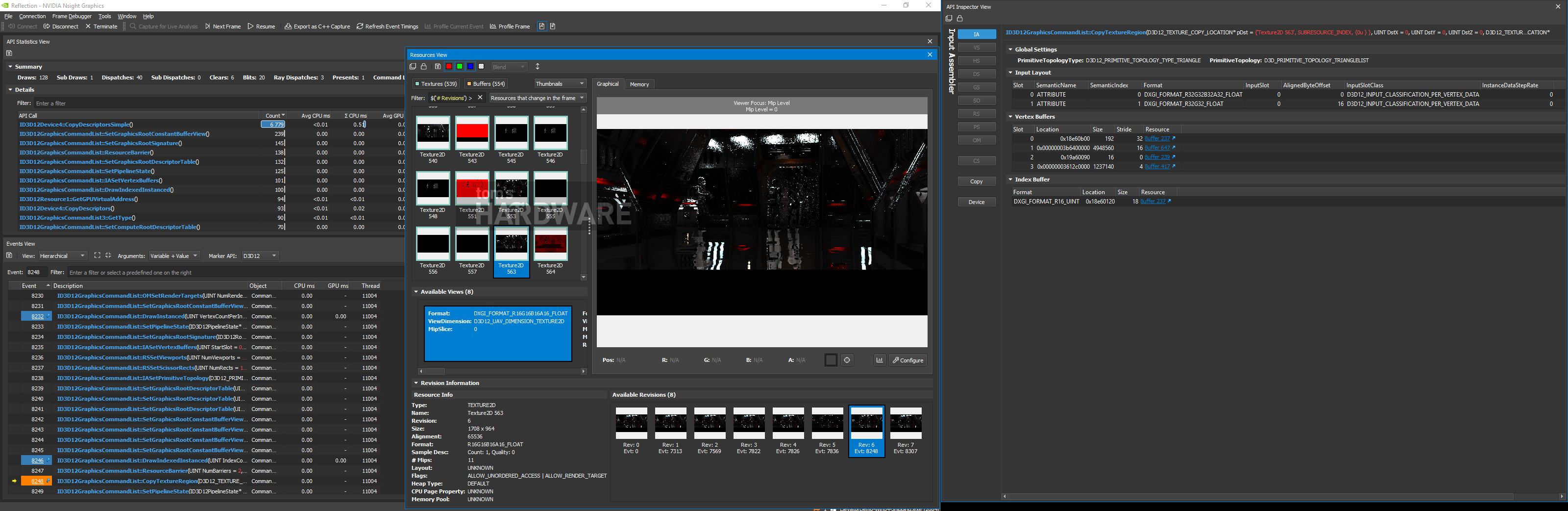

We wanted to dig deep into Nvidia's DLSS technology, beyond what the company was willing to divulge at launch. And although we made progress, the results are far from conclusive. One observation is clear, though: DLSS starts by rendering at a lower resolution and then upscaling by 150% to reach its target output. As far as quality goes, DLSS at 4K looks a lot better than DLSS at 2560 x 1440.
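To put numbers on that upscale step, here is a minimal sketch of the arithmetic. It assumes the 150% figure applies to each axis, which is our reading and not something Nvidia has confirmed; the function names `render_resolution` and `pixel_savings` are hypothetical helpers, not part of any Nvidia API.

```python
from fractions import Fraction

# Assumption: "upscaling by 150%" means a 1.5x factor on each axis.
ASSUMED_SCALE = Fraction(3, 2)


def render_resolution(target_w, target_h, scale=ASSUMED_SCALE):
    """Return the internal render size implied by a per-axis upscale factor."""
    return round(target_w / scale), round(target_h / scale)


def pixel_savings(target_w, target_h, scale=ASSUMED_SCALE):
    """Return how many times fewer pixels are shaded at the render size."""
    w, h = render_resolution(target_w, target_h, scale)
    return (target_w * target_h) / (w * h)


if __name__ == "__main__":
    targets = {"3840 x 2160": (3840, 2160), "1920 x 1080": (1920, 1080)}
    for name, (w, h) in targets.items():
        rw, rh = render_resolution(w, h)
        print(f"{name} target: render at {rw} x {rh}, "
              f"{pixel_savings(w, h):.2f}x fewer pixels shaded")
```

Under that assumption, a 4K target corresponds to a 2560 x 1440 render and a 1920 x 1080 target to a 720p render, with 2.25 times fewer pixels shaded in both cases.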

The steps in between rendering at a lower resolution and upscaling to the target are where the purported "magic" happens, and we have few specifics beyond Nvidia's original description. Thanks to advances in machine learning, there are a number of techniques for cleanly enlarging an image. This area of image processing is especially advanced, as it was one of the first applications of artificial intelligence.

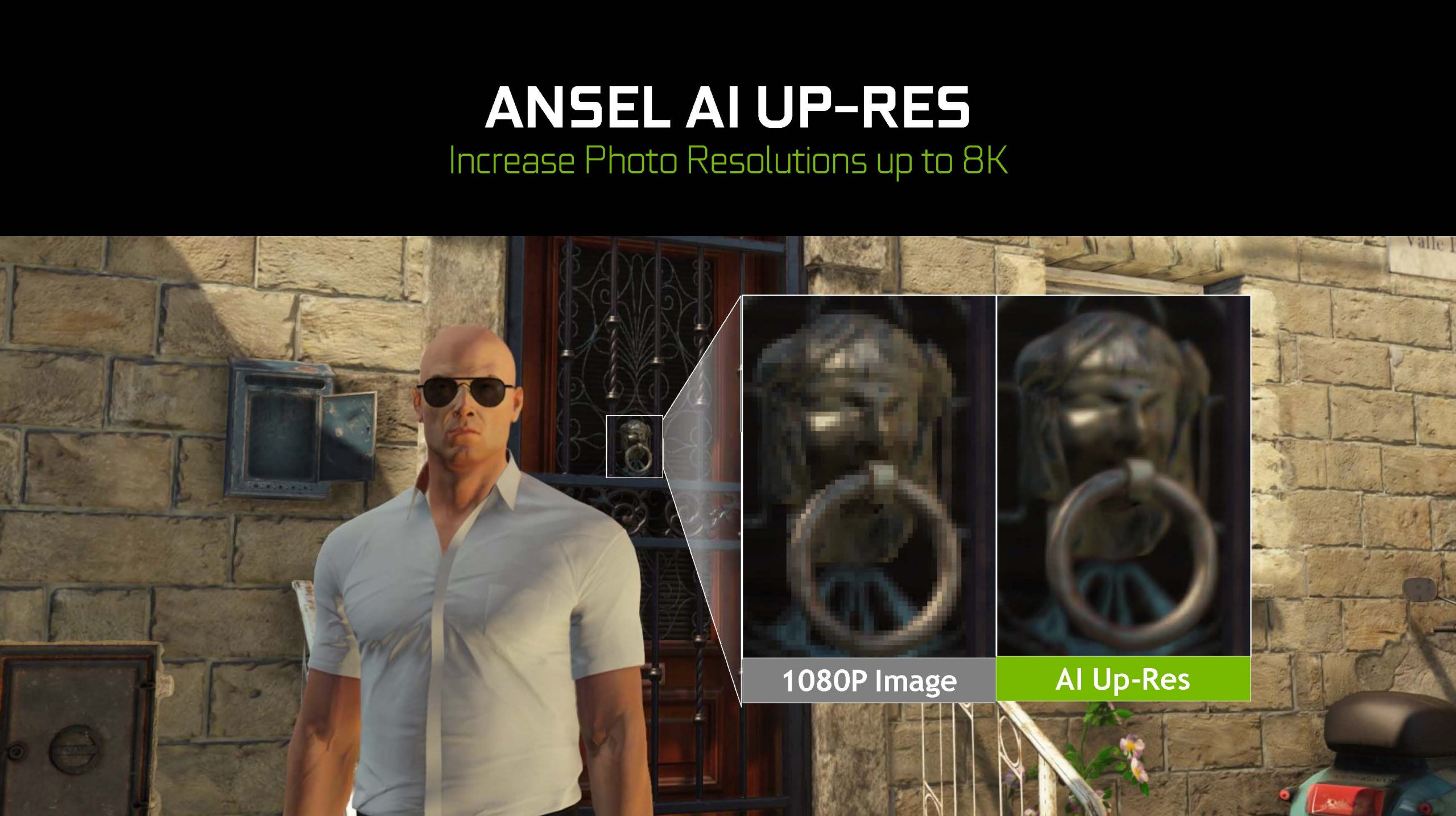

Moreover, we can't help but see similarities between what DLSS does for gaming and Nvidia's Ansel AI Up-Res technology, which allows gamers to take 1080p-based captures and generate massive 8K photos via inference. We suspect that the same inferencing goes on in real time before each frame is upscaled in the DLSS pipeline. Also note the application of an anti-aliasing filter (TAA, DLAA?) just before the upscale step.

Some of this sounds familiar from Nvidia's original description of DLSS. But the company certainly omitted interesting technical details, such as explicitly rendering at a lower resolution, applying anti-aliasing, and upscaling. Those big performance gains certainly make a lot more sense now, though.

DLSS: Still One Of Turing's Most Promising Features

Inner workings aside, DLSS remains one of the Turing architecture's most interesting capabilities, for multiple reasons. First of all, the technology consistently yields excellent image quality. If you watch any of the DLSS-enabled demos in real time, it's difficult to distinguish between native 4K with TAA and the same scene enhanced by DLSS.

Second, we're told that DLSS should only get better as time goes on. According to Nvidia, the model for DLSS is trained on a set of data that eventually reaches a point where the quality of its inferred results flattens out. So, in a sense, the DLSS model does mature. But the company’s supercomputing cluster is constantly training with new data on new games, so improvements may roll out as time goes on.

Finally, this is a technology that might be viable on entry-level Turing-based GPUs (as opposed to ray tracing, which requires a minimum level of performance to be useful), if those graphics processors end up with Tensor cores. We'd love to see a low-end GPU play through AAA games at 1920 x 1080 based on a 720p render.


hixbot OMG this might be a decent article, but I can't tell because of the autoplay video that hovers over the text makes it impossible to read.

richardvday I keep hearing about the autoplay videos yet i never see them ?

I come here on my phone and my pc never have this problem. I use chrome what browser does that

bit_user

21435394 said: Most surprising is that 4K with DLSS enabled runs faster than 4K without any anti-aliasing.

Thank you! I was waiting for someone to try this. It seems I was vindicated, when I previously claimed that it's upsampling.

Now, if I could just remember where I read that...

bit_user

21435394 said: Notice that there is very little difference in GDDR6 usage between the runs with and without DLSS at 4K.

You only compared vs TAA. Please compare against no AA, both in 2.5k and 4k.

bit_user

21435394 said: In the Reflections demo, we have to wonder if DLAA is invoking the Turing architecture's Tensor cores to substitute in a higher-quality ground truth image prior to upscaling?

I understand what you're saying, but it's incorrect to refer to the output of an inference pipeline as "ground truth". A ground truth is only present during training or evaluation.

Anyway, thanks. Good article!

redgarl So, 4k no AA is better... like I noticed a long time ago. No need for AA at 4k, you are killing performances for no gain. At 2160p you don't see jaggies.

coolitic So... just "smart" upscaling. I'd still rather use no AA, or MSAA/SSAA if applicable.

bit_user

21436668 said: So, 4k no AA is better... like I noticed a long time ago.

That's not what I see. Click on the images and look @ full resolution. Jagged lines and texture noise are readily visible.

21436668 said: No need for AA at 4k, you are killing performances for no gain.

If you read the article, DLSS @ 4k is actually faster than no AA @ 4k.

21436668 said: At 2160p you don't see jaggies.

Depending on monitor size, response time, and framerate. Monitors with worse response times will have some motion blurring that helps obscure artifacts. And, for any monitor, running at 144 Hz would blur away more of the artifacts than at 45 or 60 Hz.

s1mon7 Using a 4K monitor on a daily basis, aliasing is much less of an issue than seeing low res textures on 4K content. With that in mind, the DLSS samples immediately gave me the uncomfortable feeling of low res rendering. Sure, it is obvious on the license plate screenshot, but it is also apparent on the character on the first screenshot and foliage. They lack detail and have that "blurriness" of "this was not rendered in 4K" that daily users of 4K screens quickly grow to avoid, as it removes the biggest benefit of 4K screens - the crispness and life-like appearance of characters and objects. It's the perceived resolution of things on the screen that is the most important factor there, and DLSS takes that away.

The way I see it, DLSS does the opposite of what truly matters in 4K after you actually get used to it and its pains, and I would not find it usable outside of really fast paced games where you don't take the time to appreciate the vistas. Those are also the games that aren't usually as demanding in 4K anyway, nor require 4K in the first place.

This technology is much more useful for low resolutions, where aliasing is the far larger problem, and the textures, where rendered natively, don't deliver the same "wow" effect you expect from 4K anyway, thus turning them down a notch is far less noticeable.