OCZ's Vertex 3: Second-Generation SandForce For The Masses

MTTF? MTBF? "My Drive Lasts Longer"

I wanted to cover reliability because I've spent some time on a few forums (including those dedicated to storage), noticing that people are discussing MTBF ratings. This deserves a separate page of discussion.

When you look at the data sheet of any drive, you'll notice reliability is expressed as mean time between failures (MTBF) or mean time to failure (MTTF). These two values share the same units. The only difference is that the former assumes you can repair a failed drive, while the latter assumes you replace it.

| Controller | SF-1222 | SF-2141 | SF-2181 | SF-2281 | SF-2282 | SF-1565 | SF-2582 | SF-2682 |
|---|---|---|---|---|---|---|---|---|
| Target Market | Client | Client | Client | Client | Client | Enterprise | Enterprise | Enterprise |
| MTTF (hours) | 2 million | 2 million | 2 million | 2 million | 2 million | 10 million | 10 million | 10 million |

If you look at the mean time to failure (MTTF) of any enterprise controller from SandForce, such as the SF-2582, you'll notice that it's rated at 10 000 000 hours. Yet, the SF-2281 is rated at 2 000 000 hours.

If you do the math, you realize that 10 000 000 hours roughly equals 1140 years. Does this mean you can bequeath an enterprise drive to your tenth-generation progeny?

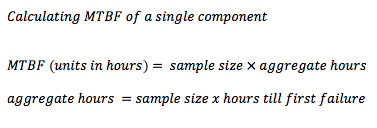

Not at all. This rating is based on probability. There are many ways to calculate MTBF, but here is one example. Let's say SandForce had 2000 drives based on the SF-2582 in its qualification lab. If you were to turn on all of these SSDs at the same time and start the clock, every passing hour would add 2000 drive-hours of running time. For the sake of this example, let's assume the first SSD fails after 2000 hours (~83 days). You would stop the clock the moment the first drive failed, and the MTBF would be calculated based on the number of drives originally set up. Because only one drive out of 2000 failed after 2000 hours of use, the MTBF would be 2000 drives × 2000 hours ÷ 1 failure, or four million hours.
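The arithmetic in this example can be sketched in a few lines of Python. This is only an illustration of the simple drive-hours method described above, not SandForce's actual qualification procedure; the function name `fleet_mtbf` is made up for this sketch.

```python
def fleet_mtbf(num_drives: int, hours_elapsed: float, failures: int) -> float:
    """Accumulated drive-hours divided by the number of failures observed."""
    if failures <= 0:
        raise ValueError("this estimate needs at least one observed failure")
    return num_drives * hours_elapsed / failures


# Numbers from the example: 2000 drives, first failure at 2000 hours.
mtbf_hours = fleet_mtbf(num_drives=2000, hours_elapsed=2000, failures=1)
print(f"MTBF: {mtbf_hours:,.0f} hours")
print(f"Test duration: {2000 / 24:.1f} days")
```

Note that the test itself ran for less than three months, yet the resulting figure is four million hours.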

The problem with this type of math is that it hardly considers advanced statistics or stochastic math. For all we know, the entire batch of 2000 drives could have failed five minutes after we stopped the clock. This is why MTBF can be an inflated number, depending on the math used.
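One common way to read an MTTF figure as a probability rather than a lifespan is to convert it to an annualized failure rate (AFR). The sketch below assumes a constant failure rate (an exponential model), which is an assumption of this example and not something the data sheets state; the helper names are hypothetical.

```python
import math

HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def mttf_in_years(mttf_hours: float) -> float:
    """Naive conversion of an MTTF rating from hours to years."""
    return mttf_hours / HOURS_PER_YEAR


def annualized_failure_rate(mttf_hours: float) -> float:
    """Chance that one drive fails within a year of continuous use,
    assuming a constant failure rate (exponential model)."""
    return 1.0 - math.exp(-HOURS_PER_YEAR / mttf_hours)


# Ratings taken from the SandForce table above.
for label, hours in [("client, 2 million hours", 2_000_000),
                     ("enterprise, 10 million hours", 10_000_000)]:
    years = mttf_in_years(hours)
    afr = annualized_failure_rate(hours)
    print(f"{label}: {years:,.0f} years on paper, AFR {afr:.3%}")
```

Under that assumption, a 2 million hour rating works out to roughly a 0.44% chance of failure per drive per year, and a 10 million hour rating to roughly 0.09%. Neither says anything about how long an individual drive will last.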

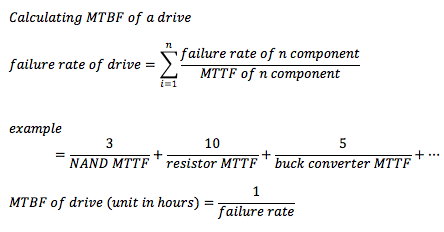

While the math may seem a bit odd, it relates directly to the MTBF rating you see on a drive. For example, Corsair's Force SSDs use the same SF-1222 seen in OCZ's Vertex 2. Yet Force drives are rated at 1.5 million hours, compared to 2 million hours for the Vertex 2. This occurs because the MTBF rating of a drive is based on a sum probability. There are many components that go into making a drive: memory, buck converters, controllers, resistors, and so on. Even though the SF-1222 is rated for 2 million hours, the parts that Corsair is using collectively add up to 1.5 million. Since any system is only as strong as its weakest link, this results in a lower MTBF rating. OCZ claims a higher MTBF because the rating it gives to the parts other than the SandForce controller exceeds 2 million hours. Hence, OCZ publishes the reliability rating of the controller as that of the drive.
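To see how component ratings can combine into a lower drive rating, here is a minimal sketch using the textbook series-system model, in which the drive fails as soon as any component fails and each component has a constant failure rate. Both the model and the 6 million hour figure for "everything else" are assumptions made for illustration; neither Corsair nor OCZ has said this is how it derives its numbers.

```python
def series_mtbf(component_mtbf_hours: list[float]) -> float:
    """MTBF of a system that fails when any one component fails.

    With constant failure rates, the component failure rates (1 / MTBF)
    add up, and the system MTBF is the reciprocal of that sum.
    """
    total_failure_rate = sum(1.0 / mtbf for mtbf in component_mtbf_hours)
    return 1.0 / total_failure_rate


controller = 2_000_000        # SF-1222 rating from the table above
everything_else = 6_000_000   # hypothetical combined rating of the other parts

drive = series_mtbf([controller, everything_else])
print(f"Drive MTBF: {drive:,.0f} hours")
```

With these made-up inputs the drive lands at about 1.5 million hours. Under this model the combined figure is always below the lowest component figure, so it illustrates the Corsair number rather than explaining how a vendor could publish the controller's rating as the drive's.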


We point this out because MTBF is not a meaningful way for us to measure how long a drive will last. At the end of the day, it would be incorrect to compare the durability of two different drives simply using the MTBF rating, even if they use the same controller (like the Force and Vertex 2). If you love math, you've already come to the realization that this number can be easily manipulated. The fastest way is to simply increase the number of drives being tested. Double the drives, double the MTBF. Let's put aside the flaw in this approach to calculating MTBF for a moment. Without knowing how many drives were originally tested, you can't determine how long a single drive might last. Remember this when you are shopping for drives, be it disk or solid state. (If you like academia as much as I do, I recommend reading a paper written by Bianca Schroeder and Garth Gibson for more information. Eduardo Pinheiro, Wolf-Dietrich Weber and Luiz André Barroso at Google Research have also written a great paper that explores reliability of consumer drives.)
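The "double the drives, double the MTBF" effect is easy to demonstrate. The sketch below holds the time to first failure fixed at the 2000 hours used in the earlier example and varies only the size of the test batch; the batch sizes and the function name are hypothetical.

```python
def first_failure_mtbf(num_drives: int, hours_to_first_failure: float) -> float:
    """MTBF when the clock stops at the first failure: total drive-hours / 1."""
    return num_drives * hours_to_first_failure


HOURS_TO_FIRST_FAILURE = 2000  # same observation in every scenario

for batch_size in (1000, 2000, 4000, 8000):
    mtbf = first_failure_mtbf(batch_size, HOURS_TO_FIRST_FAILURE)
    print(f"{batch_size:>5} drives tested -> MTBF {mtbf:>12,.0f} hours")
```

The observed behavior is identical in every row (one drive died at 2000 hours), yet the published number scales directly with how many drives were on the bench.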



lradunovic77 I agree and it is time for HDD to be retired. We don't need them anymore, but Servers.

bildo123 A far cry as far as "the masses" are concerned. Still, over $2/GB is too much. Getting closer however. I'd pay $200 for a 256GB SSD with these speeds.

acku, quoting mayankleoboy1: "the fact that they use ~15% of a quad core SB CPU, is amazing. with the mechanical drives, they were just sitting idle. this more than anything, makes the SSD worthwhile."

Well what I didn't mention in the review is that the benchmark starts as ~20% across all cores during the first 10 seconds, which is from PCMark setting up the disk trace. After that, the IO activity throttles a single core up to 100% for almost all SSDs. For the hard drives, we see ~60-80% utilization of a single core.

Cheers,

Andrew Ku

TomsHardware.com

lradunovic77 I say keep your desktop active all the time. I am running i980x overclocked to 4.0Ghz and there is no way i will put my computer into any type of power saving mode, it is useless and power saving is just mimick. We are talking about very small amount of money over a year. Having Turbo option makes sense from certain point of view but bottom line is that it is just wasted silicon and pretty much useless.

vvhocare5 "The problem is that any price above $2/GB is going to be a hard sell unless you're an early adopter by nature. Our choices in recent System Builder Marathon stories reflect this. Look at our December $1000 PC."

Overall a good article. Anyone into MTBF's will find that one page uninforming and anyone not into it is likely lost.

I would disagree with that statement only in the sense that a $1000 PC is not going to be filled with high-end superior performing parts. So I dont see a reason to apologize for its price. The person who can afford a $3000+ PC isnt going to blink buying the 240G model and will likely see it as entirely reasonable.

Me? I think I have found my next ex-drive.....


acku, quoting an earlier comment: "so as i said, will OC increase the scores a bit? and what about power saving enabled?"

None of our tests were executed in an environment that allowed any idling. Furthermore, we disabled CPU throttling. Power saving was enabled in the sense that the display was allowed to turn off, which is part of the default profile in Windows.

OCing may increase performance, but only to the extent that the bandwidth will support it. As I mentioned, PCMark throttles a single core up to 100%. It isn't a sustained trend.

bto on your 1000 dollar gaming system, I'd rather have a vertex 3 than two 460's hell even an agility 2... and still afford better than a 460.

bto But then that's me, and to quote the great Inigo Montoya, "I hate wait" and most games I play are not bleeding edge, I also work on my computer, play HD movies and copying time makes me angry when I'm moving files. Buy an ssd, and later spend 60 bux on a 1tb drive down the road.