GFXBench 3.0: A Fresh Look At Mobile Benchmarking

Over the past few years, Kishonti has become a leading name in mobile GPU benchmarking. The newly released GFXBench 3.0 consists almost entirely of new tests, including battery life, render quality, and the first serious OpenGL ES 3.0 performance metric.

Special Test Results: Render Quality

The render quality test uses the peak signal-to-noise ratio (computed from the mean-square error), and the result is expressed in millibels (mB).

The formula we use is described here: http://en.wikipedia.org/wiki/Peak_signal-to-noise_ratio

At long last, we have a test that will (hopefully) facilitate a direct comparison between graphics performance and output quality. This benchmark measures visual fidelity in a high-end gaming scenario. It compares a single rendered frame against a reference image. Differences are calculated using a Peak Signal to Noise Ratio (PSNR) based on the Mean Square Error (MSE), and reported in millibels.
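To make the metric concrete, here is a minimal sketch of a PSNR calculation reported in millibels. It follows the standard PSNR definition (10 · log10(MAX² / MSE) in decibels, with 1 dB equal to 100 mB). The function name, the flat list of 8-bit pixel values, and the `max_value` default are assumptions for illustration; this is not GFXBench's actual implementation, and the benchmark's exact color handling isn't documented here.

```python
import math


def psnr_millibels(rendered, reference, max_value=255):
    """Return the PSNR of `rendered` against `reference` in millibels.

    Both inputs are flat sequences of pixel channel values (assumed 8-bit,
    hence max_value=255). This is an illustrative helper, not GFXBench code.
    """
    if len(rendered) != len(reference) or len(rendered) == 0:
        raise ValueError("images must be non-empty and the same size")

    # Mean square error between the rendered frame and the reference image.
    mse = sum((a - b) ** 2 for a, b in zip(rendered, reference)) / len(rendered)

    # Identical images have no noise, so the ratio is unbounded.
    if mse == 0:
        return math.inf

    # Standard PSNR in decibels, then converted: 1 dB = 100 mB.
    psnr_db = 10 * math.log10(max_value ** 2 / mse)
    return psnr_db * 100


if __name__ == "__main__":
    reference = [0, 64, 128, 255]
    rendered = [1, 63, 129, 254]  # every value is off by one, so MSE = 1
    print(round(psnr_millibels(rendered, reference)))  # roughly 4813 mB
```

A higher score means the rendered frame is closer to the reference, so larger millibel values indicate better fidelity.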

As I mentioned on the first page, the idea here is that GPUs can optimize for performance or for quality, and device vendors further tune voltage and clocks to balance frame rates against battery life. You can't improve all three simultaneously, though. So, by quantifying fidelity and battery life right next to frame rates, we get a better sense of how those variables are being balanced.

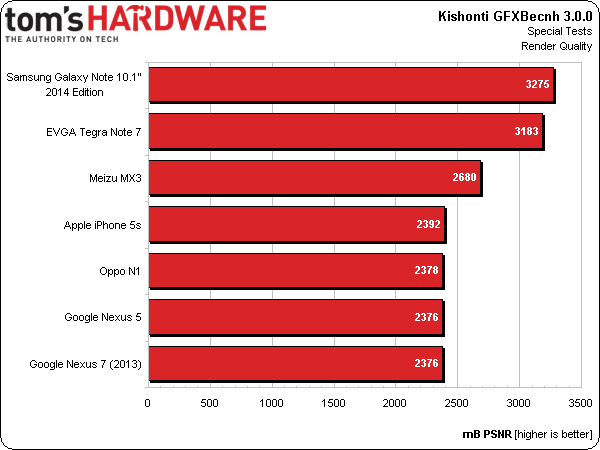

Standard Precision

GFXBench's Standard Precision test shows the same image presented by the device in T-Rex, where high precision is not artificially forced and performance matters most. This is the most common use case for mobile GPUs.

When high precision is needed for a specific task or computation, mobile GPUs typically can't match desktop-class hardware, which isn't power-constrained. When they can, there's a notable performance hit.

For all of the grief our performance benchmarks give Samsung's Galaxy Note 10.1” 2014 Edition, this test exonerates the tablet's Mali-T628MP6 GPU somewhat by demonstrating a bias favoring quality.

EVGA's Tegra Note 7 doesn't finish far behind in second place, noticeably ahead of the rest of the field. The Meizu MX3 takes third place.

Everything else clumps up at the bottom of the chart, exhibiting quality trade-offs in the name of performance. Apple's iPhone 5s (A7), Oppo's N1 (Snapdragon 600), Nexus 5 (Snapdragon 800), and Nexus 7 (S4 Pro) all appear equally guilty of dialing back image quality in favor of higher frame rates.

Let's get back to the first set of results, though. It's particularly interesting that an Android-based device from Samsung tops this list. Now, the company has been caught cheating on benchmarks in the past, detecting specific tests and boosting performance beyond what it delivers in real-world workloads. However, its strong finish here isn't a stroke of benevolence; rather, you should notice the same quality level from any Mali-T628-based SoC.

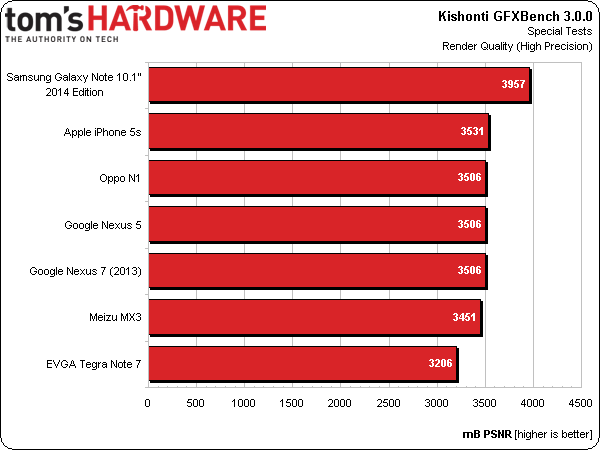

High Precision

Forced to use higher precision, Samsung's Galaxy Note 10.1” 2014 Edition improves even further. Thanks to its Mali-T628MP6 graphics engine, the Note 10.1” seems tuned for fidelity, which has to hold back its potential somewhat in the performance-oriented tests.

EVGA's Tegra Note 7 barely budges, suggesting its shaders were already running at higher precision than the rest of the field. But that also means all of the other devices surpass the Tegra 4-based tablet, leaving it in last place.

Hardware that appeared problematic in the previous test delivers higher precision in this second benchmark, and the rest of the field ends up at a roughly even level of image quality relative to GFXBench's reference.

panzerknacker Its cool u guys put so much effort into this but tbh most of the benchmark results seem to be completely random. Phones with faster SoC's performing slower and vice versa. I think there is no point at all benching a phone because 1. The benchmarking software is a POS and unreliable and 2. The phone OS's and apps are all complete POSs and act completely random in all kinda situations. I'd say just buy the phone with a fast SoC that looks the best to u and when it starts acting like a POS (which they all start doing in the end) buy a new one.

umadbro What kind of bs is this? Force 720p on all devices and you'll see what happens to your precious 5s. Even my Zl murdered it.

jamsbong The only relevant benchmarks are the first two because they are full-fletch 3D graphics, which is won by the most portable device; The iPhone. The rest of the benchies are just primitive 2D graphics which is irrelevant. Android devices won all those in flying colours.

rolli59 Well I have a smart phone but that is so I can receive business emails on the go, I have a tablet because it is great for watching movies on the go. Do I want to find out if there are any faster devices to do those things, not really while what I got is sufficient. I leave all the heavy tasks to the computers.

Durandul (in reply to jamsbong) If those are the only two benchmarks relevant to you, then I wonder why you are using a phone and not a 3DS or something. But seriously, most of the other devices have more than a million more pixels then the iPhone, so this benchmark is not so telling. It was mentioned before, but it would be nice to test at a given resolution, although as suppose applications don't give you an option on the phone.

umadbro (in reply to Durandul) It does give the option to force some specific resolution. Don't know why this "review" didn't do it. That's what I've been trying to say from the start.