Intel SSD 510-Series 250 GB Review: Adopting 6 Gb/s SATA

After defining the high-end SSD market with its X25-M, Intel is finally ready with its first 6 Gb/s solid-state drive, the SSD 510-series. Does the company's latest follow in its predecessor's footsteps, or does OCZ's Vertex 3 lineup go uncontested?


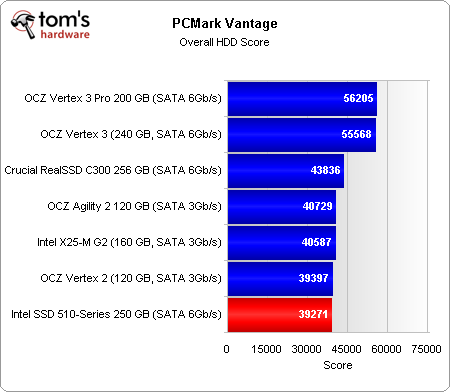

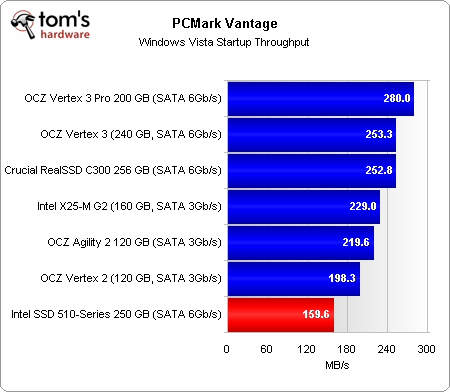

Benchmark Results: PCMark Vantage Storage Test

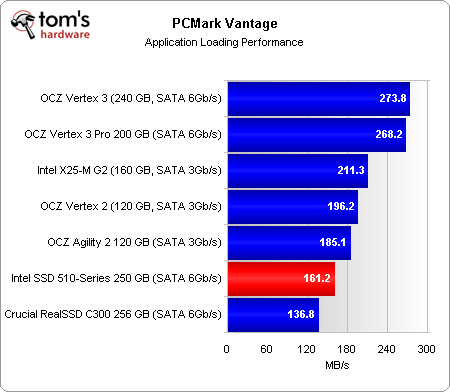

The application loading workload is predominantly read-based. However, given the SSD 510’s performance here, we’d surmise that we’re dealing with small file transfers.

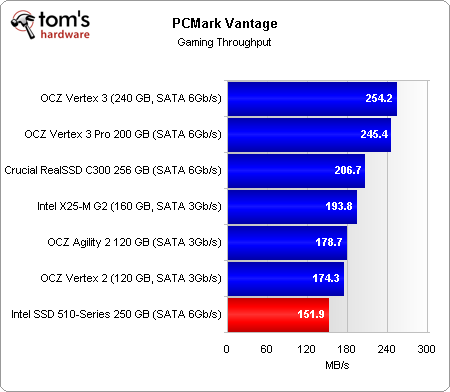

Here’s a shocker. The gaming test is more than 99% reads, and measures streaming performance from the tested drives. Despite the fact that Intel’s SSD 510 performed really well in our synthetic streaming benchmarks, it pulls a last-place finish here. Maybe this isn’t the drive you’d want for quick level loading, after all.

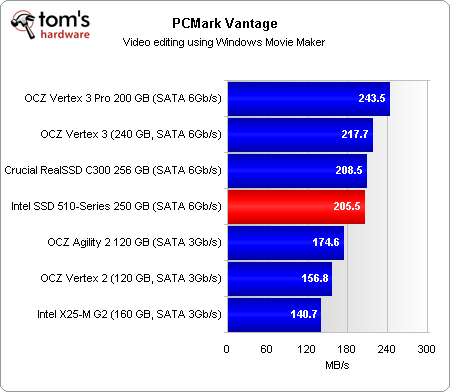

Reading and writing to Windows Movie Maker results in an almost 50/50 split between reads and writes. We’re counting on this test using large transfers, though. And perhaps that’s why the SSD 510 outperforms OCZ’s last-gen SandForce-based drives and Intel’s X25-M. It still succumbs, however, to the RealSSD C300 and both Vertex 3 drives.

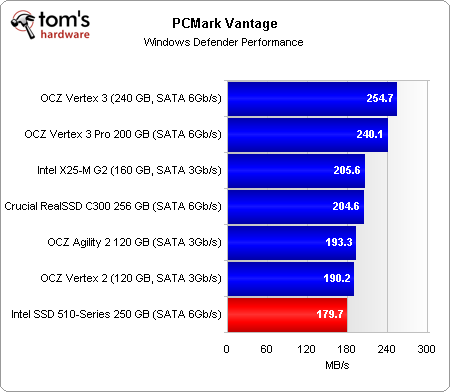

Windows Defender is almost entirely read-based, but the fact that we’re scanning a lot of small files means that the SSD 510 gets bogged down into a last-place finish.

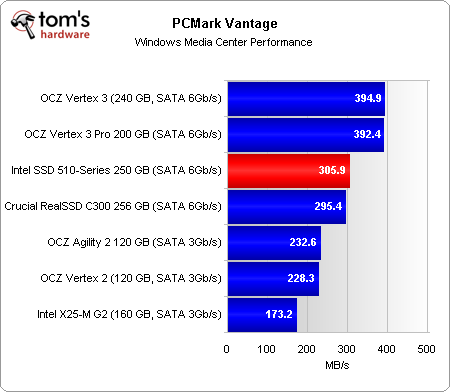

The Media Center workload is split between reads and writes. It involves concurrent video playback, streaming video, and video recording—yay, all usage cases employing large chunks of data. Here, the SSD 510 is able to post respectable numbers, ahead of even the C300.


JohnnyLucky I made the rounds and read other 510 reviews. I also read the comments in a lot of forums. There is quite a bit of disappointment with the performance and price of the Intel 510. Perhaps Intel will redeem itself with the next SSD series.

eklipz330 i love when small companies like sandforce can make massive companies shake in their boots... really puts another meaning on competition

nitrium Something ALL reviewers seem to ignore is what sort of queue depths average users experience. This has been addressed in a thread on www.xtremesystems.org. The facts appear that most disk activity has a queue depth of 1. Yes, ONE. It very rarely spikes above 4. Booting Windows 7 x64 requires 190 IOPS(!) - 20,000+ IOPS on these drives are literally NEVER going to be utilized by most users in anything they do in day to day work. Almost no one buying these SSD drives is using anything remotely near their capability. It looks to me like this is all just technical benchmark BS that are of no use to the end user whatsoever... aside from bragging rights of course.

cangelini, replying to nitrium's comment above:

IMO, don't buy a premium SSD for booting Windows. In fact, I go for weeks at a time without rebooting at all.

Link to the XS thread you're referencing? We're going to be putting more effort into quantifying real-world storage workloads in the next two months, given some new software. This could definitely help mold the work we do. The goal, of course, is real-world relevance.

Cheers,

Chris

nitrium Sorry for not providing a proper link. Here it is:

http://www.xtremesystems.org/forums/showthread.php?t=260956

My beef with this whole synthetic benchmarking is that I think the vast majority of users are unaware that getting this SSD or that SSD will make absolutely no material difference. Why don't reviewers benchmark actual things people are interested in? e.g. booting Windows 7, loading Dragon Age Origins/COD Black Ops, archiving a folder, launching Thunderbird/Firefox/Photoshop, running a virus scan? Is it because there will be no material difference between any performance SSD manufactured in the last 3 years? The thread above also notes that aside from SYNTHETIC benchmarks, raiding SSDs makes absolutely no difference in anything you do in a typical day to day environment.

Yes, absolutely enterprise class users might get something tangible out of these new drives, but I suspect they are not the core audience of Tom's Hardware.

cangelini No worries nitrium, and thank you for the link.

I'd agree that the synthetic measurements are primarily used to draw "worst-case" comparisons. There is a very deliberate reason I wanted to break down most of the results by queue depth this time around--specifically to demonstrate how wildly performance can differ based on QD. And as you mention, at a QD of 1, an SSD is doing a lot less for the average desktop user than it would if you were hammering it with the concurrent requests of a database server.

If you look at the task breakdown of PCMark Vantage, it comes relatively close to real-world usage. My problem with that metric is its consistency. Futuremark is aware that Vantage wasn't written to test SSDs optimally, and I'm expecting the company to come out with something very soon that improves its utility in that regard.

I personally don't see anything *wrong* with running real-world tests, like Windows start-up, level-loading, or launching a sequence of apps. The only challenge there is time. Adding more benchmarks is never a problem--it's what the readers want to see.

I'll go through the XS thread with a couple of staffers and see what we come away with.

Cheers nitrium,

Chris

Nexus52085 Well, I'm sincerely happy to find out that the Crucial C300 I bought yesterday still holds up nicely against the new SSDs from the other heavy hitters. Thanks for the review, Tom's!

nitrium I think some of this heavy focus on benchmarks on SSDs (and really, all hardware sites do it, so I'm not singling this site out specifically) is a bit like measuring, say, the theoretical texture fill rate of the latest and greatest GPU, but forgetting to mention that most people will never actually use half of it cos they're running 1920x1080 XBox360 port-acrosses. Instead GPUs are measured at a variety of screen modes, in a variety of games... i.e. real world benchmarks. There is a reason for this. Frankly I couldn't care less what an SSD scores in CrystalMark or IOMeter at a queue depth of 32 or whatever. From the Xtremesystems thread above it dawned on me that perhaps reviewers HAVE to rate SSDs using synthetic benchmarks, because otherwise most of these drives would be nearly indistinguishable.

Oh, and in all my ranting I forgot to thank you (and your colleagues) for the excellent work you do. It is very much appreciated!

ubercake Seems like a half-a#$ed effort on Intel's part to get to the next gen. Why take away the two-lane advantage? Intel could have improved upon their own product. Other companies have drastically improved their offerings, while some benchmarks show Intel's new drives performing similarly to the X25-M. Disappointing is a good word for it. Seems like the competition in the SSD area has been reduced by removing the Intel controllers from the mix.

If you're going to jump to the next level, it makes it really hard to consider Intel at this point.

tipoo SATA 3 is going to become a bottleneck before it becomes the standard on most shipping PCs.