Micron Confirms RTX 3090, Will Have Over 1TBps GDDR6X Bandwidth

Flagship is almost ready to set sail

At the start of this week, Nvidia announced that it's hosting a special GeForce event on September 1, and it's no secret that we can expect the new Ampere-based graphics cards to be revealed there. It's not yet clear which cards those will be, but Micron apparently didn't get the memo and spilled the beans on at least one of them: it will be supplying the 12 GDDR6X memory modules in the RTX 3090. The information comes straight from Micron, though it was first spotted by VideoCardz.

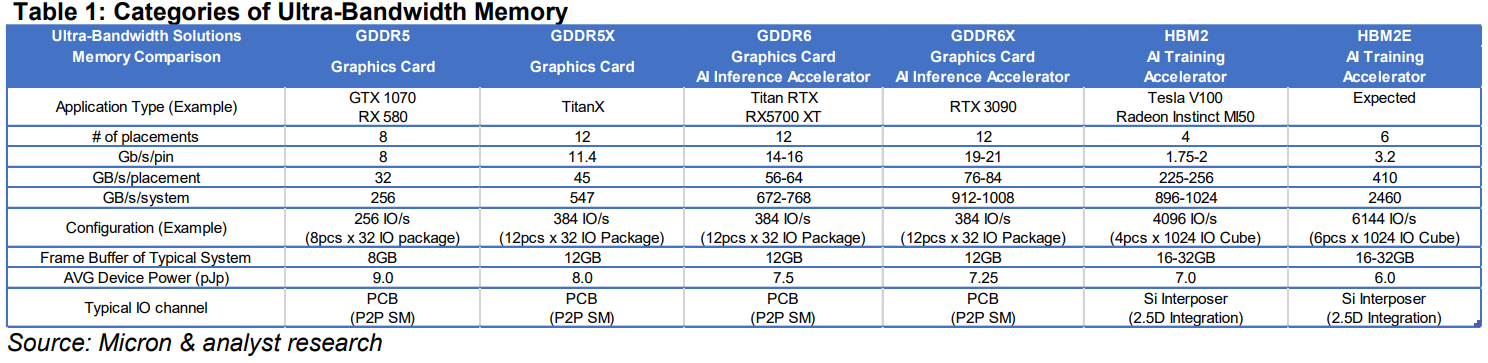

In the brief, Micron unmistakably states that the RTX 3090 will come with 12 GDDR6X modules with a capacity of 8 Gb each, which makes for a total frame buffer of 12 GB. The memory will operate over a 384-bit memory interface at 19-21 Gbps, for a total bandwidth of between 912 and 1008 GBps, which would push the RTX 3090 over the 1 TBps milestone.
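The arithmetic behind those figures is simple enough to check. The sketch below assumes each GDDR6X module occupies one 32-bit placement (no clamshell mode), so the bus width is the module count times 32; bandwidth is then bus width times the per-pin data rate, divided by 8 to convert bits to bytes. The function name `gddr_config` is purely illustrative.

```python
def gddr_config(modules, density_gbit, rate_gbps, bits_per_module=32):
    """Return (capacity in GB, bus width in bits, bandwidth in GB/s).

    Assumes one 32-bit module per placement (no clamshell mode).
    """
    capacity_gb = modules * density_gbit / 8     # gigabits -> gigabytes
    bus_width = modules * bits_per_module        # 12 x 32-bit = 384-bit
    bandwidth_gbs = bus_width * rate_gbps / 8    # Gbit/s across the bus -> GB/s
    return capacity_gb, bus_width, bandwidth_gbs


# RTX 3090 as described in Micron's brief: 12 modules of 8 Gb at 19-21 Gbps
for rate in (19, 21):
    cap, bus, bw = gddr_config(12, 8, rate)
    print(f"{rate} Gbps: {cap:.0f} GB, {bus}-bit, {bw:.0f} GB/s")
# 19 Gbps: 12 GB, 384-bit, 912 GB/s
# 21 Gbps: 12 GB, 384-bit, 1008 GB/s
```

Note that "over 1 TBps" holds in decimal units (1 TB = 1000 GB). As a purely hypothetical example, the same 12 placements filled with Micron's planned 16 Gb, 24 Gb/s parts would work out to 24 GB and 1152 GB/s.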

And let's be clear: it's going to be 21 Gbps GDDR6X. It's the 21st anniversary of the GeForce 256, Nvidia's "world's first GPU." There's a 21-day countdown to the September 1 announcement. Ergo, 21 Gbps.

"In Summer of 2020, Micron announced the next evolution of Ultra-Bandwidth Solutions in GDDR6X," reads Micron's brief. "Working closely with NVIDIA on their Ampere generation of graphics cards, Micron's 8 Gb GDDR6X will deliver up to 21 Gb/s (data rate per pin) in 2020. At 21 GB/s, a graphics card with 12 pcs of GDDR6X will be able to break the 1 TB/s of system bandwidth barrier!"

Micron also noted that in 2021, it will come out with 16 Gb GDDR6X modules that can reach up to 24 Gb/s per IO pin. Does that pave the way for a 24GB Titan RTX refresh? Maybe!

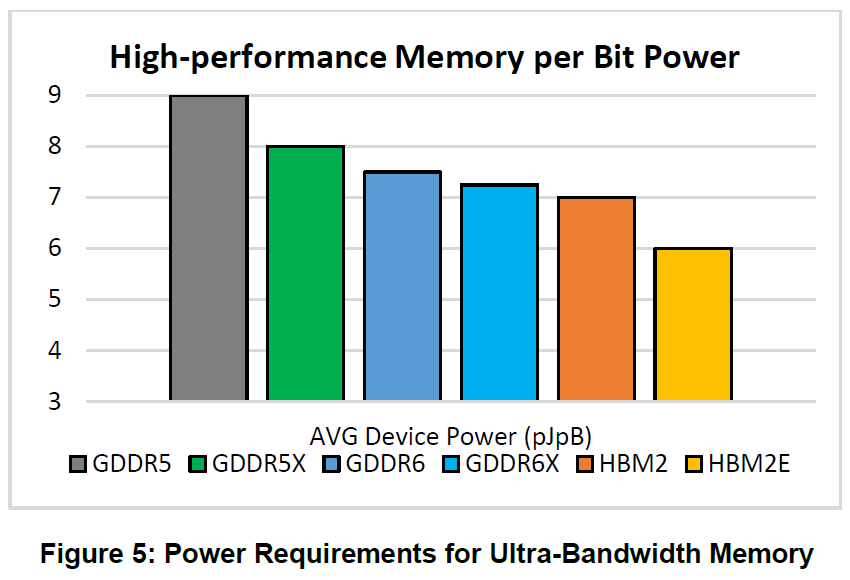

Micron also included the above chart in its PDF, detailing the power-per-bit requirements of high-performance memory. GDDR5 sits at the far left as the 'worst' solution that's still in use. Moving to the right, we get GDDR6, GDDR6X, HBM2, and HBM2e. Given the additional cost of HBM2, Nvidia and AMD apparently deemed its modest power savings unnecessary in the previous generation, and the upcoming Intel Xe HPG gaming GPU will join them.

This confirms the RTX 3090 rumors that have been circulating, and the RTX 3090 appears to be the flagship of the 3000 series. Maybe we'll get a 3090 Super or 3090 Ti next year? Either way, it's been pretty clear that Nvidia's next GPUs would be the RTX 3000 series, though there's been plenty of speculation about model names.


For more information surrounding the RTX 3090 and Ampere rumors, check out our summary of everything we know so far.

Micron also revealed details on its upcoming HBMnext memory today, though that's unlikely to go into consumer parts any time soon.

Niels Broekhuijsen is a Contributing Writer for Tom's Hardware US. He reviews cases, water cooling and PC builds.

-

Friesiansam So how near to ₤2000 is this one going to go this time? Given the ridiculous cost, the likelihood of me ever buying a flagship graphics card from Nvidia is approximately nil.

danlw Flagship graphics cards are like luxury yachts. They aren't meant or expected to be bought by everybody. They are mainly about bragging rights for the manufacturers, people who are well off, those who want to be on the bleeding edge, and those who are very poor (because they make large purchases on credit). For the rest of us, there will be the 3080, 3070, 3060, and probably several other variants for people who can't afford the crème de la crème.

thGe17 The Micron PDF obviously confirms nothing!

Take a closer look at the table; it only contains sample data for advertising purposes. Column three combines specs for the Titan RTX and the RX 5700 XT, and the specs don't match either card. Column six has the same problem: six HBM2E stacks, but a capacity of 16-32 GB. That's simply not possible, so the table data can't be taken seriously, and it shouldn't be taken as a preview of Ampere. More likely, the table contains example configurations and isn't intended to represent exact card configurations. It's only meant to demonstrate Micron's GDDR development.

JarredWaltonGPU

thGe17 said: The Micron PDF obviously confirms nothing!

Sorry, but I disagree. The table says Titan RTX and RX 5700 XT. Under that, it lists the number of placements (32-bit channels) as 12. That's correct for the Titan RTX, and obviously not for the RX 5700 XT. It's far more likely that the RX 5700 XT was mistakenly included than that the RTX 3090 data is wrong.

Ignore the RX 5700 XT and you get the Titan RTX: 12 16Gb chips, a 384-bit memory interface, and 672GBps of bandwidth. That makes for a nice comparison with the RTX 3090, which has the same 12 chips (8Gb this time) and 384-bit memory interface, but now 912-1008GBps of bandwidth depending on the clock speed.

hotaru251

Friesiansam said: So how near to ₤2000 is this one going to go this time? Given the ridiculous cost, the likelihood of me ever buying a flagship graphics card from Nvidia is approximately nil.

Yup. Top-tier gaming is getting to be an upper-class thing instead of a middle-class one.

Hoping AMD (or even Intel) steps up in the next few years with some competition so prices drop ;/

spongiemaster

thGe17 said: The Micron PDF obviously confirms nothing!

I agree with you. This chart isn't intended to show the specific memory configuration of any card. All Micron did was list each memory generation, pick the fastest Nvidia and AMD card for each of those generations, and then show potential specs for each memory type. The only things this chart confirms are that GDDR6X exists and that AMD won't be using it. Also, that the top-of-the-line card won't be a Titan and will be named something else. I think 2190 is more likely to 3090, but that's really inconsequential information.

spongiemaster

hotaru251 said: Top-tier gaming is getting to be an upper-class thing instead of a middle-class one.

Midrange cards of today are far faster than top-of-the-line cards from years ago. Gaming doesn't require the top-of-the-line card. Should companies only produce goods that you can afford?

vinay2070

spongiemaster said: Midrange cards of today are far faster than top-of-the-line cards from years ago. Gaming doesn't require the top-of-the-line card. Should companies only produce goods that you can afford?

Years ago, households had 1080p TVs, and today most households buy 4K TVs. Likewise, if you want to game at 1440p with a high refresh rate, or at 4K with all the bells and whistles, you need a top-end card. Even the 2080 Ti struggles at 4K in certain games. So if a 3080 costs $799+, it's a big blow to the consumer's wallet.

spongiemaster

vinay2070 said: Years ago, households had 1080p TVs, and today most households buy 4K TVs.

The July 2020 Steam hardware survey shows 2.23% of users gaming at 4K. That's a quarter of the number of gamers still using 1366x768 as their primary display, and 65.5% are still gaming at 1080p. What TVs people have in their houses is irrelevant.

vinay2070 said: Likewise, if you want to game at 1440p with a high refresh rate, or at 4K with all the bells and whistles, you need a top-end card. Even the 2080 Ti struggles at 4K in certain games. So if a 3080 costs $799+, it's a big blow to the consumer's wallet.

If you can afford a high-end display, then you can afford a high-end video card to drive it. Don't buy a Porsche and then complain about the cost of the tires. There has never been a time when the top video card could crush every game at maxed-out settings. The Crysis meme is 13 years old now. Back in the day, 60fps was the holy grail. No game ever sold requires 4K at 144fps with every setting maxed to be fun to play.

MasterMadBones AFAIK, JEDEC has never published a spec for GDDR6X (as it did for GDDR5X), which tells me it's more of a marketing name this time around. It's simply faster GDDR6 on a better node.