Samsung 850 Pro SSD Review: 3D Vertical NAND Hits Desktop Storage

After winning an award last year for its 840 EVO, Samsung is ready to follow up with another high-end offering. The company's 850 Pro SSD merges the EVO's familiar MEX controller with 3D V-NAND. Does the combination justify an upgrade, or should you wait?

Results: Tom's Hardware Storage Bench v1.0

Storage Bench v1.0 (Background Info)

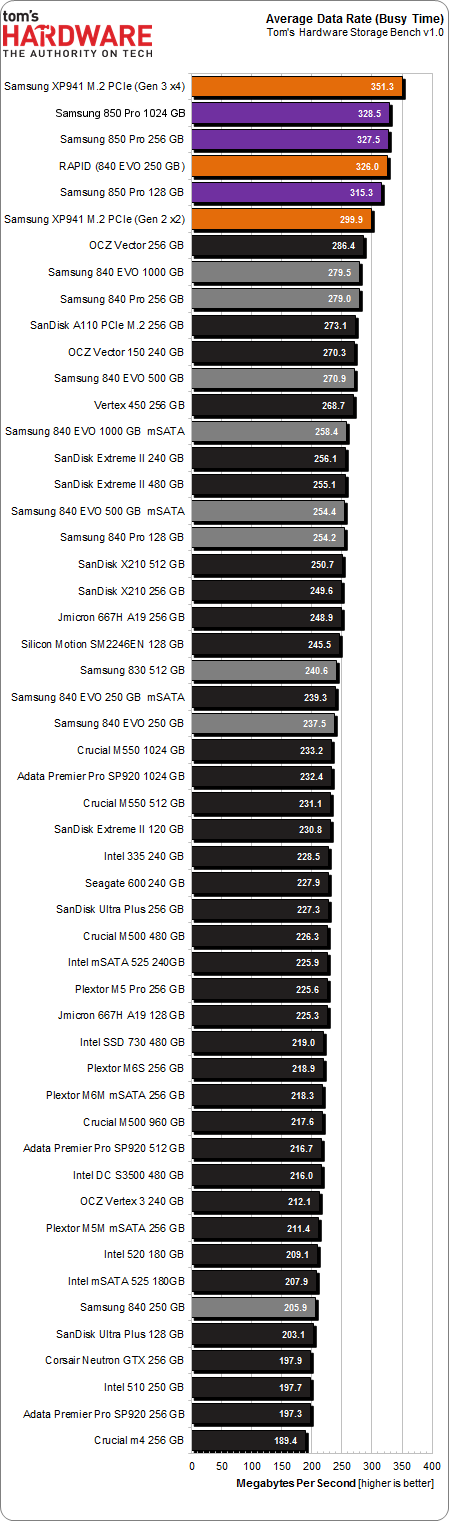

Our Storage Bench incorporates all of the I/O from a trace recorded over two weeks. Replaying this sequence to capture performance gives us a set of numbers that aren't really intuitive at first glance. Most idle time gets expunged, leaving only the time that each benchmarked drive is actually busy working on host commands. So, by dividing the amount of data exchanged during the trace by that busy time, we arrive at an average data rate (in MB/s) that we can use to compare drives.
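As a rough illustration of that calculation, here is a minimal Python sketch. The function name and the sample figures are hypothetical and are not taken from the actual trace; it also assumes 1 MB means 10^6 bytes.

```python
def average_data_rate_mb_s(bytes_transferred: int, busy_seconds: float) -> float:
    """Average data rate in MB/s: data moved divided by drive busy time.

    Assumes 1 MB = 10**6 bytes. Idle time is expected to be excluded
    from busy_seconds already.
    """
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return bytes_transferred / 1e6 / busy_seconds


# Hypothetical example: 200 GB exchanged while the drive was busy for 800 s.
rate = average_data_rate_mb_s(200 * 10**9, 800.0)
print(f"{rate:.1f} MB/s")  # 250.0 MB/s
```

A drive that finishes the same work in less busy time scores a higher average data rate, which is what makes the metric comparable across drives.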

It's not quite a perfect system. The original trace captures the TRIM command in transit, but since the trace is played on a drive without a file system, TRIM wouldn't work even if it were sent during the trace replay (which, sadly, it isn't). Still, trace testing is a great way to capture periods of actual storage activity, a great companion to synthetic testing like Iometer.

Incompressible Data and Storage Bench v1.0

Also worth noting is the fact that our trace testing pushes incompressible data through the system's buffers to the drive getting benchmarked. So, when the trace replay plays back write activity, it's writing largely incompressible data. If we run our storage bench on a SandForce-based SSD, we can monitor the SMART attributes for a bit more insight.

| Mushkin Chronos Deluxe 120 GB SMART Attributes | Raw Value Increase |
|---|---|
| #242 Host Reads (in GB) | 84 GB |
| #241 Host Writes (in GB) | 142 GB |
| #233 Compressed NAND Writes (in GB) | 149 GB |

Host reads are greatly outstripped by host writes to be sure. That's all baked into the trace. But with SandForce's inline deduplication/compression, you'd expect that the amount of information written to flash would be less than the host writes (unless the data is mostly incompressible, of course). For every 1 GB the host asked to be written, Mushkin's drive is forced to write 1.05 GB.

If our trace replay were just writing easy-to-compress zeros out of the buffer, we'd see writes to NAND as a fraction of host writes. Pushing incompressible data instead puts the tested drives on a more equal footing, regardless of the controller's ability to compress data on the fly.
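The 1.05 GB figure follows directly from the SMART deltas in the table above. A minimal Python sketch of the arithmetic (the function name is ours, chosen for illustration):

```python
def nand_writes_per_host_gb(nand_writes_gb: float, host_writes_gb: float) -> float:
    """Ratio of data written to flash versus data the host asked to write."""
    if host_writes_gb <= 0:
        raise ValueError("host_writes_gb must be positive")
    return nand_writes_gb / host_writes_gb


# Raw value increases from the table: attribute #233 (NAND) and #241 (host).
ratio = nand_writes_per_host_gb(149, 142)
print(f"{ratio:.2f} GB written to NAND per 1 GB of host writes")  # 1.05
```

A ratio below 1.0 would indicate the controller was compressing the workload; a ratio just above 1.0, as here, is consistent with largely incompressible data.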

Average Data Rate


The Storage Bench trace generates more than 140 GB worth of writes during testing. Obviously, this tends to penalize drives smaller than 180 GB and reward those with more than 256 GB of capacity.

Again, I added results from Samsung's 512 GB XP941 over two and four PCIe lanes. Also making an appearance is the 250 GB 840 EVO with RAPID enabled. Those results are in orange, helping put the 850 Pro's performance into context.

The 128 GB 850 Pro sweeps past even the PCIe-based Samsung XP941 over two second-gen PCIe lanes. The larger models are faster still. Only Samsung's XP941 communicating across four PCIe lanes bests the 850 Pros.

Service Times

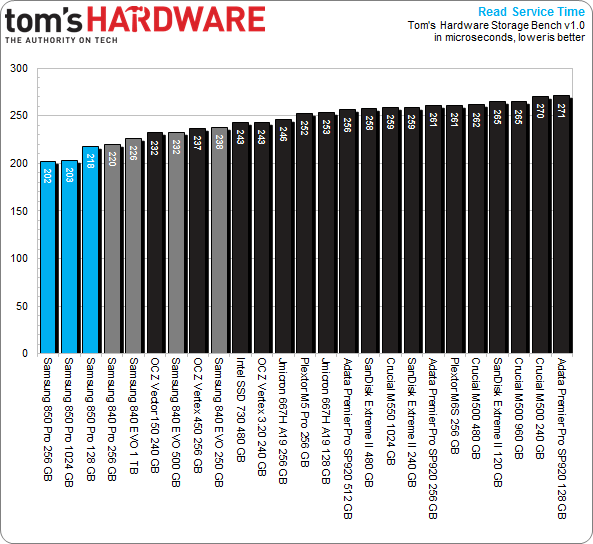

Beyond the average data rate reported on the previous page, there's even more information we can collect from Tom's Hardware's Storage Bench. For instance, mean (average) service times show what responsiveness is like on an average I/O during the trace.

Write service time is simply the total time it takes an input or output operation to be issued by the host operating system, travel to the storage subsystem, commit to the storage device, and have the drive acknowledge the operation. Read service is similar. The operating system asks the storage device for data stored in a certain location, the SSD reads that information, and then it's sent to the host. Modern computers are fast and SSDs are zippy, but there's still a significant amount of latency involved in a storage transaction.
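To make the definition concrete, here is a minimal Python sketch that averages per-operation service times, split by reads and writes. The record layout (operation type, issue timestamp, completion timestamp, both in microseconds) and the sample values are assumptions for illustration, not the format the Storage Bench tooling actually uses.

```python
from collections import defaultdict
from statistics import mean


def mean_service_times_us(records):
    """Return the mean service time in microseconds for each operation type.

    Each record is (op, issued_us, completed_us); the service time of one
    I/O is the gap between the host issuing it and the drive acknowledging it.
    """
    by_op = defaultdict(list)
    for op, issued_us, completed_us in records:
        by_op[op].append(completed_us - issued_us)
    return {op: mean(times) for op, times in by_op.items()}


# Hypothetical trace fragment.
sample = [
    ("read", 0, 90),
    ("write", 10, 160),
    ("read", 200, 310),
    ("write", 400, 470),
]
print(mean_service_times_us(sample))  # {'read': 100, 'write': 110}
```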

Mean Read Service Time

Whatever medieval magic animates the 850 Pros pushes read service times into previously unseen territory. Without more detail on this drive's tweaks, I have to assume the subtle improvement over Samsung's previous-gen offerings comes from V-NAND.

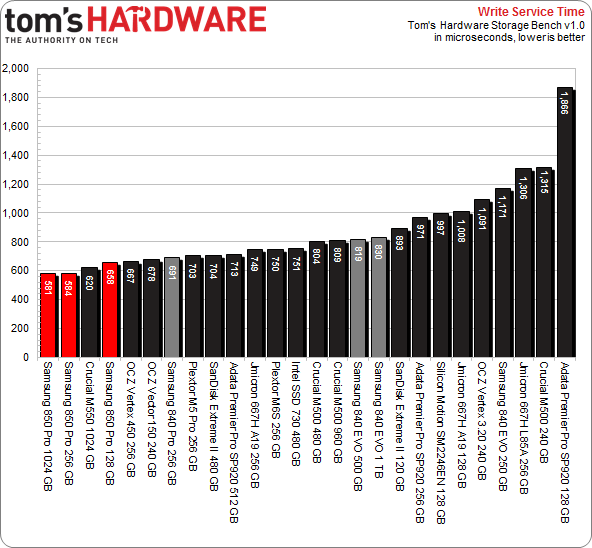

Mean Write Service Time

Crucial's 1 TB M550 splits the smaller 850 Pros. But the two larger Samsung SSDs break new ground otherwise.


MoulaZX: I 'just' ordered 2x Samsung EVO 120GB a few hours ago, then I stumbled onto this article. Damn it! Damn it! Damn it! Every freaking time I run into this, be it Storage, CPU, or GPU.... -_-

cryan:

> I 'just' ordered 2x Samsung EVO 120GB a few hours ago, then I stumbled onto this article. Damn it! Damn it! Damn it! Every freaking time I run into this, be it Storage, CPU, or GPU.... -_-

I don't know if this really changes anything for you. Two EVOs are still going to be better than one 850 Pro in every way. But I understand the sentiment!

Christopher Ryan

lp231:

> I 'just' ordered 2x Samsung EVO 120GB a few hours ago, then I stumbled onto this article. Damn it! Damn it! Damn it! Every freaking time I run into this, be it Storage, CPU, or GPU.... -_-

You just ordered a few hours ago. Just cancel your order if you really want this 850 Pro.

g-unit1111:

> 10yrs warranty, may be finally I have a reason to buy SSD. lol

I can guarantee that in 10 years you won't own that drive anymore. :lol:

10tacle: I still have several 8-10 year old drives laying around between 80GB-150GB. I mostly use them as external drives for backing up USB thumb drives and other files that aren't large volume.

razor512: Will overclocking the bus that the sata controller is on impact the performance?

Can you test on an AMD platform which makes it easier to over clock that bus and some of the connected components?

BestJinjo: Looking forward to future generations of 3D Vertical Nand on M.2 / M.2 Ultra interface. Too bad SATA 3 is all maxed out and the next generation standards are not yet mainstream for the masses which is holding back SSD performance. As far as this drive goes, it's only slightly faster than MX100 but costs double. I don't think it's worth it. MX100 512GB sounds like a perfect stop-gap until M.2/SATAe drives arrive with 1-1.5TB/sec throughput. Perhaps Samsung will give us 95% of the performance for a fraction of the price in the 850 EVO.

MoulaZX:

> > I 'just' ordered 2x Samsung EVO 120GB a few hours ago, then I stumbled onto this article. Damn it! Damn it! Damn it! Every freaking time I run into this, be it Storage, CPU, or GPU.... -_-
>
> I don't know if this really changes anything for you. Two EVOs are still going to be better than one 850 Pro in every way. But I understand the sentiment!
>
> Christopher Ryan

Not quite. One is for my Desktop, the other is for my Father's Desktop.

For my Desktop, I'll be stepping up from 2x OCZ Vertex 2 60GB in RAID 0. Hope it'll be worth it...