Opinion: AMD, Intel, And Nvidia In The Next Ten Years

Nvidia's Ambition

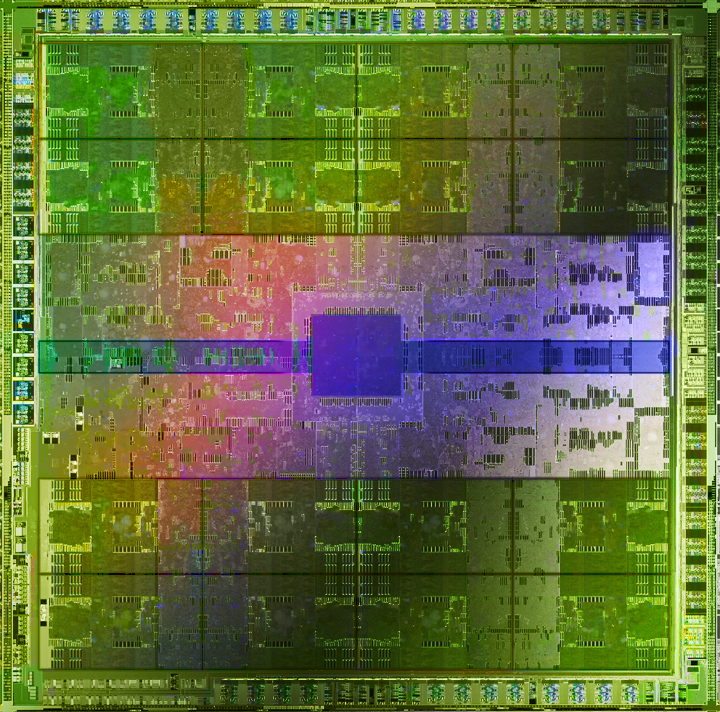

Ambition killed Caesar, and it almost killed Nvidia. Nvidia's next-generation product, GF100, is late, and it remains unclear how much additional performance it will offer over AMD’s Radeon HD 5800-series. With its Fermi architecture, Nvidia was too ambitious with its goals. If it weren’t for a strong G92 core, the company might have followed in the footsteps of S3 or Matrox. The last time this happened was with NV30, and the first time was with NV1, when Nvidia nearly went out of business.

With that said, the aggressive pursuit of high-precision computation in its products, starting with NV30’s 32-bit shaders and now Fermi’s CUDA capabilities, is probably going to pay off. At the core is CUDA.

CUDA is a marketing term that encompasses all of Nvidia’s hardware and software technology allowing non-graphics computing to be performed on the GPU. There is the core CUDA hardware architecture and then an entire ecosystem of technologies enabling developers to work with GPUs using C, Fortran, OpenCL, and DirectCompute.

If you talk to the average tech enthusiast, he’ll see C for CUDA and Fortran for CUDA as an outdated business model. Why would software developers limit themselves to supporting a single manufacturer’s product line when they could be using something such as OpenCL or even DirectCompute? After all, we’re not seeing any proprietary 3D graphics APIs in practice anymore. However, if you talk to software developers, the answer is very different.

The majority of GPU-compute applications today are built around CUDA as a result of Nvidia’s multi-year lead in terms of the software tools available. Not only do those tools support GPU-computing across the widest range of programming languages, but Nvidia has also worked on the integrated development environments required for debugging CUDA applications. One of Nvidia’s strongest wins is the Mercury Playback Engine in Adobe CS5. This is particularly important, as Adobe’s next version of its Creative Suite is expected to be the best-selling version ever due to the first implementation of 64-bit native code.

Whereas previous versions of Adobe’s software utilized OpenGL for acceleration of certain elements, the Mercury Engine is technology built on top of Nvidia CUDA to enable real-time editing of multiple high-resolution clips, including five simultaneous RED 4K clips and support for complex, temporal-based codecs such as H.264 and AVCHD. Then, when it comes to encoding the final output, Nvidia has exclusive CUDA support in Elemental Accelerator. On a dual Quadro FX 3800 setup (equivalent to a first-generation GeForce GTX 260 with 192 cores, but a 256-bit memory interface), encoding an AVCHD 1080p source to H.264 720p can be done at 40 fps. Time is money for this industry. Think about a wedding cinematographer who wants to have a "same day edit" covering the ceremony ready for an evening reception. This isn’t something they can process overnight. The faster the encode, the more time available for editing.

At the time of CS5’s development, AMD’s Stream SDK was not up to the level needed by Adobe. Though Adobe would like to support OpenCL from the philosophical standpoint of being vendor neutral, the development environment is not robust enough for its work. The jury is still out on when OpenCL will reach Adobe’s Creative Suite, and if it will happen before Nvidia can capture iPod-like market share. Additionally, though AMD offers a beta plug-in for Stream-accelerated H.264 encoding, the software requires an AMD CPU and will not work with Intel processors, which represent a majority of the market.


On the scientific computing side, Nvidia enjoys considerable dominance due to its C for CUDA and Fortran for CUDA. Fortran is a dominant programming language used in scientific computing applications. Importantly, while Nvidia inked a deal with PGI to develop a GPU-accelerated Fortran compiler almost a year after AMD did (June 2009 versus November 2008), PGI’s support for CUDA is actually shipping, while AMD’s is yet to be seen. Nvidia's customers have another option in F2C-ACC, a Fortran-to-CUDA compiler developed by the National Oceanic and Atmospheric Administration (NOAA). AMD users have HMPP for Fortran, which incidentally also supports CUDA.

In addition to wider compiler support, Nvidia GPUs have the benefit of optimized math libraries that are in development or already available. These include GPU LAPACK, developed by John Humphrey and his team at EM Photonics, in partnership with NASA Ames Research Center (CULAtools). The team offers a single-precision "CULA Basic" package to everyone who is interested for free, and sells "Premium" and "Commercial" versions with more functions, double precision support, and the option to redistribute it. In addition, Jack Dongarra, who carries the titles of Distinguished Professor of Computer Science at the University of Tennessee, Distinguished Research Staff at Oak Ridge National Laboratory, Adjunct Professor at Rice University, and holds the Turing Fellowship at the University of Manchester, is working on a mixed precision GPU/CPU implementation of these math libraries to extract even more performance. Commercially-available software, such as Jacket for MATLAB, leverages Nvidia's scientific libraries to enable high-performance computation.

Remember when ATI rendered a scaled-down version of Lord of the Rings in real-time at SIGGRAPH 2002? That was an awesome tech demo. What Nvidia has done with CUDA goes to another level. Nvidia and WETA worked together to develop custom software for the movie Avatar, dubbed PantaRay. This pre-computational tool ran 25 times faster than its CPU server and was itself four times more effective than traditional renderers. This allowed the company to work with billions of polygons per frame. Not a tech demo, but a bona fide contribution to visual effects work. We’ll be seeing PantaRay in use in the upcoming Steven Spielberg/Peter Jackson film, Tintin.

The bottom line is that Nvidia has a considerable lead over both Intel and AMD when it comes to high-performance parallel computing. The investments it has made in creating viable commercial tools for GPGPU are already paying off with exclusive Adobe Creative Suite 5 support and broader adoption of CUDA among scientific professionals. If the company continues its momentum and aggressively grows the GF100-based product line, it has a chance to obtain iPod-like dominance in the market. At the very least, I think Nvidia has established itself firmly in the GPGPU world. Third place will have to go to either AMD or Intel.


anamaniac Alan Dang: "And games will look pretty sweet, too. At least, that’s the way I see it." After several pages of technology mumbo jumbo jargon, that was a perfect closing statement. =)

Wicked article Alan. Sounds like you've had an interesting last decade indeed.

I'm hoping we all get to see another decade of constant change and improvement to technology as we know it.

Also interesting is that you almost seemed to be attacking every company, you still managed to remain neutral.

Everyone has benefits and flaws, nice to see you mentioned them both for everybody.

Here's to another 10 years of success everyone!

"Simply put, software development has not been moving as fast as hardware growth. While hardware manufacturers have to make faster and faster products to stay in business, software developers have to sell more and more games"

Hardware is moving so fast and game developers just can't keep pace with it.

Ikke_Niels What I miss in the article is the following (well it's partly told):

I have already suspected for a long time that videocards are gonna surpass the CPUs.

You already see it atm: videocards get cheaper, while CPUs keep getting pricier for the relative performance.

In the past I had the problem that upgrading my videocard pushed my CPU to the limit, so I wasn't using the full potential of the videocard.

In my view we're at that point again: you buy a system, and if you upgrade your videocard after a year or a year and a half, you're most likely pushing your CPU to the limits, at least in the high-end part of the market.

Of course in the lower regions these problems are smaller, but still, it "might" happen sooner than we think, especially if the Nvidia design is as astonishing as they say and at the same time the major development of CPUs slowly breaks up.


lashton One of the most interesting and informative articles from Tom's Hardware. What about another story about the smaller players, like Intel Atom and VLIW chips and so on?

JeanLuc Out of all 3 companies Nvidia is the one that's facing the most threats. It may have a lead in the GPGPU arena but that's rather a niche market compared to consumer entertainment wouldn't you say? Nvidia are also facing problems at the low end of the market with Intel now supplying integrated video on their CPUs, which makes the need for low end video cards practically redundant, and no doubt AMD will be supplying a similar product with Fusion at some point in the near future.

jontseng "This means that we haven’t reached the plateau in "subjective experience" either. Newer and more powerful GPUs will continue to be produced as software titles with more complex graphics are created. Only when this plateau is reached will sales of dedicated graphics chips begin to decline."

I'm surprised that you've completely missed the console factor.

The reason why devs are not coding newer and more powerful games is nothing to do with budgetary constraints or lack thereof. It is because they are coding for an XBox360 / PS3 baseline hardware spec that is stuck somewhere in the GeForce 7800 era. Remember only 13% of COD:MW2 units were PC (and probably less as a % sales given PC ASPs are lower).

So your logic is flawed, or rather you have the wrong end of the stick. Because software titles with more complex graphics are not being created (because of the console baseline), newer and more powerful GPUs will not continue to be produced.

Or to put it in more practical terms, because the most graphically demanding title you can possibly get is now three years old (Crysis), then NVidia has been happy to churn out G92 respins based on a 2006 spec.

Until the next generation of consoles comes through there is zero commercial incentive for a developer to build a AAA title which exploits the 13% of the market that has PCs (or the even smaller bit of that which has a modern graphics card). Which means you don't get phat new GPUs, QED.

And the problem is the console cycle seems to be elongating...

J

Swindez95 I agree with jontseng above ^. I've already made a point of this a couple of times. We will not see an increase in graphics intensity until the next generation of consoles comes out, simply because consoles are where the majority of game sales are. And as stated above, developers are simply coding games and graphics for use on much older and less powerful hardware than the PC has available to it currently, due to these last generation consoles still being the most popular venue for consumers.