Intel Kaby Lake Core i7-7700K, i7-7700, i5-7600K, i5-7600 Review

Inconsistent CPU Quality And Its Consequences

Golden Sample or Potato Chip?

We already mentioned that we used retail CPUs for all of our tests. This means that our results should be representative of what you can expect when you purchase a new Kaby Lake-based processor. In light of our experiences, early adopters should be aware that CPU quality can vary widely, and this is especially true for early production runs. We experienced this very problem with our Core i7-7700K retail sample.

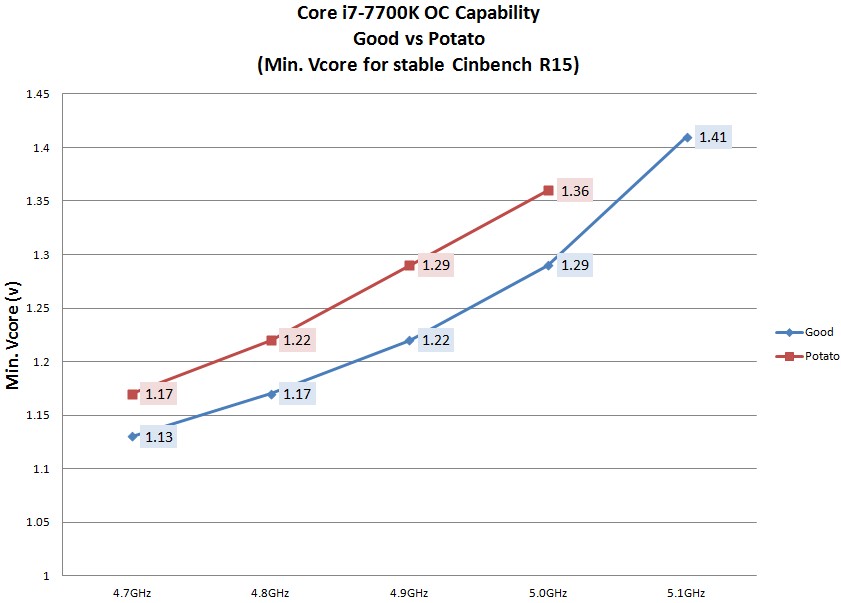

It turns out that MSI has assessed 30 more retail Core i7-7700Ks. For each of them, the company found the minimum voltage necessary for stable operation under a given workload at a specific frequency. Thirty CPUs might not be a huge number, but it still yields a good idea of how much individual samples vary in quality.

The curve tells us that the Core i7-7700K in our German lab falls toward the bottom of the distribution. Both its voltages and its maximum frequency of “only” 5 GHz match those at the bottom of the curve. This explains why that particular processor didn’t do so well under Intel’s Power Thermal Utility: its quality is simply too low, and the voltage increase it needs is too high for the resulting heat to be cooled sufficiently.

Is Undervolting the Solution?

In Germany, a specific right of withdrawal for online purchases allows buyers to send below-par CPUs or graphics cards back to the retailer, and doing so has become very popular of late. This will probably continue with Intel’s new Kaby Lake CPUs, driving those sellers insane once again.

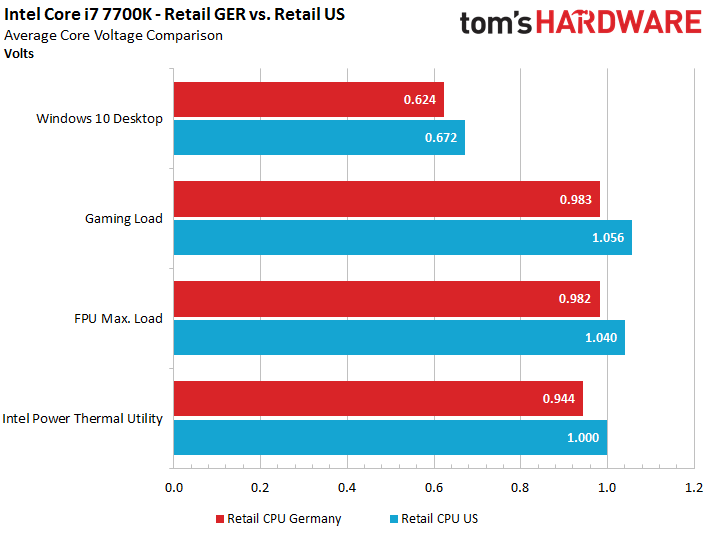

For several CPU generations, Intel has provided each processor with a voltage identification (VID), which allows the motherboard to configure voltages so that the CPU runs stably within its specifications. Unfortunately, the VID increments are relatively large, so there may be room to set lower voltages manually. This is exactly what we test next. The question we want to answer is whether we can get our Core i7-7700K sample's power consumption under control, or whether it's a lost cause.

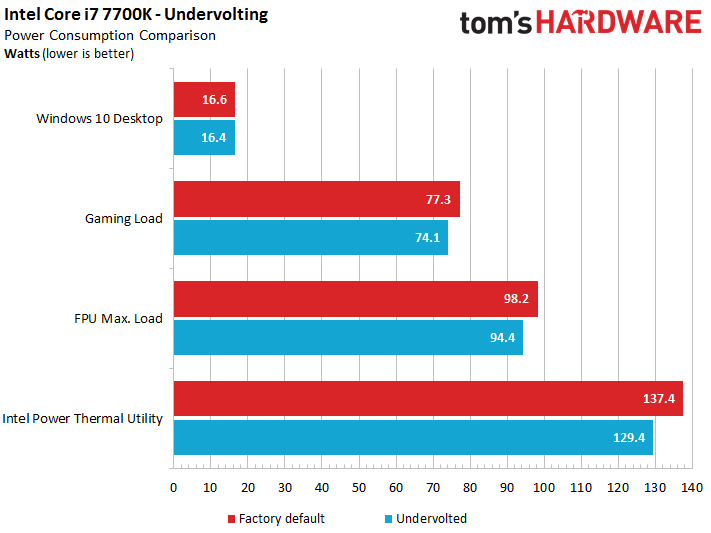

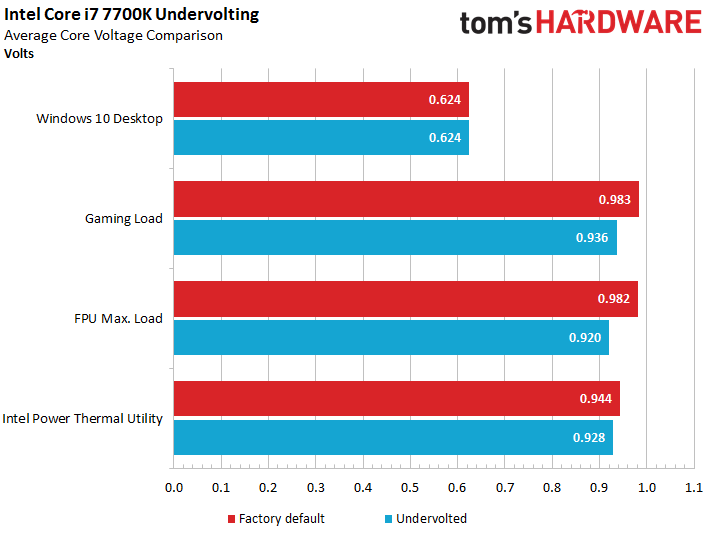

Our efforts did pull power use lower, but ultimately not by enough to make a real difference. The largest saving, 8W, only appeared under Intel’s Power Thermal Utility. Temperatures were a little lower across all of the tests as well, resulting in somewhat lower leakage currents. A better outcome wasn't possible because we could only decrease the voltage by one step in the BIOS; any further and our Core i7 wouldn't run stably.

Voltages decrease a little bit, but there definitely aren’t any substantial improvements to be found.


For enthusiasts who receive one of these potato chips, there are two possible solutions. First, you can cool it as well as possible and hope for a long winter. Or, you can move to Germany and send the processor back to wherever you bought it. Playing the lottery again doesn’t guarantee a winning CPU, though.

An Engineering Sample in a Retail Box?

We were again surprised when our German lab received Intel’s review sample, sent to us by the company directly. It arrived in the same retail box that you'd get after purchasing a Core i7-7700K without a cooler from the store.

After breaking the seals and pulling the processor from its box, we found an actual engineering sample CPU, though. This made us wonder if we received a pre-screened golden sample that'd outperform the CPUs we sourced from elsewhere.

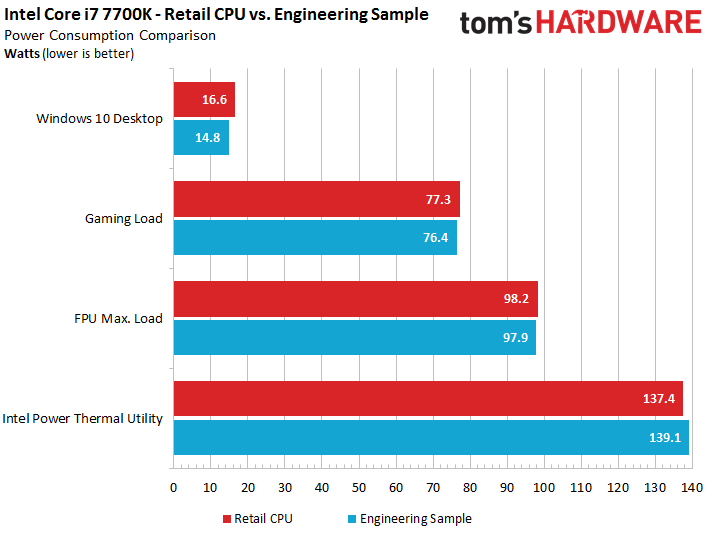

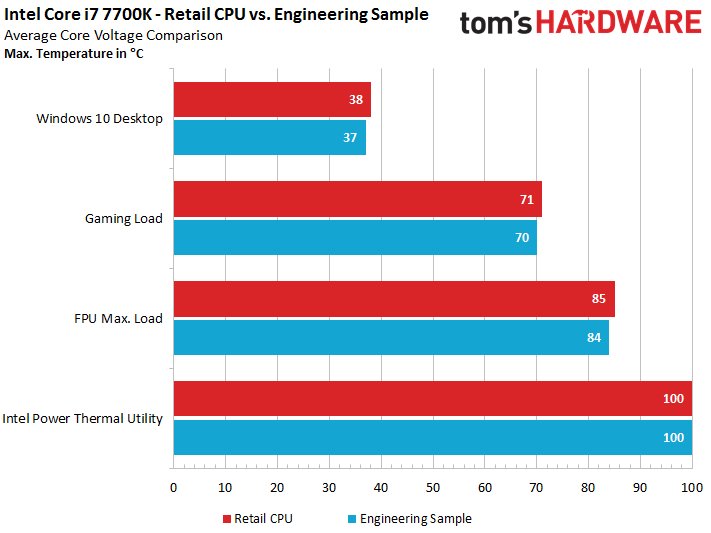

The first benchmark runs put an end to our excitement. Our engineering sample's power consumption and temperatures were almost identical to the retail sample. We're presenting the results below anyway, even though the differences are very small.

Notice that the engineering sample’s power consumption is approximately 1W lower than the retail sample’s both at idle and while gaming, but 2W higher during the Intel Power Thermal Utility benchmark. The voltage curves are almost identical as well, which shows just how similarly these two samples behave. The maximum overclock we achieved on them was also identical: 5 GHz at 1.35V and 1.36V.

We pushed the Core i5-7600K to a Prime95-stable 4.9 GHz overclock at 1.34V, with temperatures in the 80°C range under load using Corsair's H100i v2 cooler. We also ran the AIDA64 stress test at 5.1 GHz for several hours with 1.35V, but couldn't maintain that frequency under Prime95 without employing the AVX offset to reduce clock rates by 200 MHz.

In the end, we could have saved ourselves the three hours we spent running the temperature benchmarks. The two CPU samples’ temperatures are practically identical as well. We decided to use a bar graph to display these results because the curves would have been on top of each other.

There’s Hope!

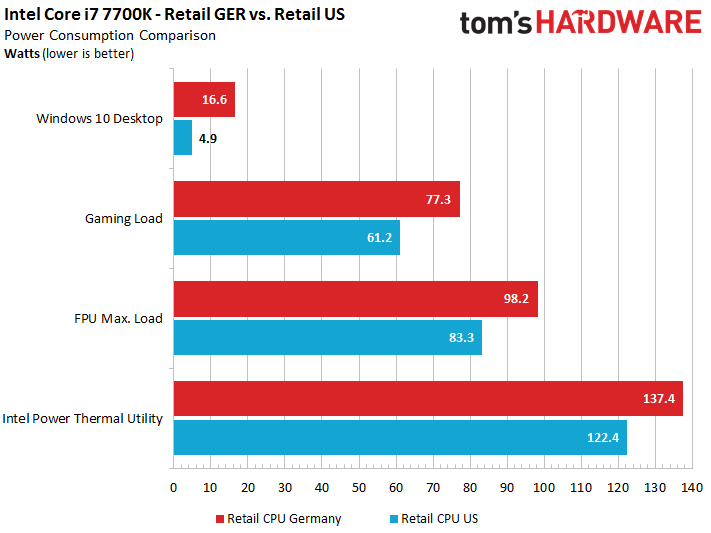

Alright, so Germany’s lab has two sub-par Core i7-7700Ks, and that's the hardware used in our performance, power consumption, and temperature benchmarks. However, the U.S. lab does have its own retail sample that consumes significantly less power. It also runs cooler.

We weren’t able to run all of our measurements in Germany and the U.S. with identical air coolers, so we went with compact closed-loop liquid coolers instead. Unfortunately, we still weren't able to achieve completely comparable cooling performance. The Corsair H100i v2 in our U.S. lab gave us sporadic issues and generally didn’t cool as well. Consequently, we're only comparing power consumption results, which still give us plenty of information.

Whether we look at idle power consumption or the readings under Intel's Power Thermal Utility, the difference between samples is massive. And remember, these are retail processors we're comparing, not CPUs hand-selected by Intel for the press.

The difference between the CPU package and IA core power readings shrinks to a mere 2W. Meanwhile, the extra power lost to leakage currents rises by at most 1W between a cold CPU and its highest temperature. Compared to the four other CPU samples we tested, this one’s really something special.

In this case, higher voltages paired with lower power consumption must mean lower currents, which keep the CPU significantly cooler.
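The argument above rests on the relationship between power, voltage, and current. Here is a minimal worked form, under our own simplifying assumption that the package behaves as a single load; the sample labels A and B are hypothetical placeholders, not measured values:

```latex
P = V \cdot I \quad\Longrightarrow\quad I = \frac{P}{V}

% Sample B runs at a higher voltage (V_B > V_A) but draws less power (P_B < P_A):
I_B = \frac{P_B}{V_B} < \frac{P_A}{V_B} < \frac{P_A}{V_A} = I_A
```

The first inequality follows from P_B < P_A, the second from V_B > V_A; together they show that sample B must draw less current than sample A.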

The CPU Lottery Continues

Once again, the Kaby Lake generation will see enthusiasts receiving great CPUs, mediocre CPUs, and terrible CPUs that still managed to make it through quality control. For the time being, we’ll have to live with this, since the manufacturing process isn’t mature enough to produce consistent high-quality results. We haven’t seen extreme differences like these since the days of Intel’s first quad-core CPU, the Q6600.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.


Based on our initial testing, we can confirm that HD Graphics 630 does not function correctly under Windows 7 and 8.1. Both operating systems install generic drivers for the display adapter, even after applying the latest drivers and updates, so many core features remain unavailable. We also experienced stability issues with Windows 7 that might even negate using an add-in GPU as a workaround.

Not true, I set up Kaby Lake in my lab and everything works fine under Windows 7. Did you guys even try to apply drivers for HD Graphics 630?

adgjlsfhk It would have been kind of nice to see the iGPU vs. a GTX 750 and other low-end discrete graphics cards, but other than that, great review.

cknobman Boooooooooooooooooring.

Bring on the new AMD CPUs!!!

Oh, nice review; the boring is not Tom's fault ;)

Jim90 "Intel’s slow cadence of incremental upgrades hasn’t done much to distance its products from AMD's."

"...Obviously we need a competitive AMD to help reinvigorate the desktop PC space."

Without real competition, the only risk Intel takes with the desktop market is to lose sight of (i.e. actively ignore) the performance jump the consumer expects in a new release. Continual and lengthy minor incremental updates (pretty much what we've seen since the 2000 series) may well lead to consumer apathy. I certainly haven't upgraded 'as much as I could!' recently. Absolutely no justifiable need.

Then again, perhaps we all already have access to as much power as we need? We used to talk about 'killer apps/software' to drive consumers into making a purchase. This certainly did work. Maybe new tech (currently available) isn't killer enough? We need another '3dfx Voodoo' experience?

VR is certainly (definitely?) a potential driver... here's hoping for speedy and significant updates here.

Paul Alcorn, replying to the commenter who reported Kaby Lake working under Windows 7:

Hello, yes, we did test and attempt to install the HD Graphics 630 drivers. We are working with early BIOS revisions, so it is possible that we encountered a platform-specific issue. Can you share which motherboard you used for your testing? Any feedback is welcome.


ohim Why are they even releasing this? It makes absolutely no sense to release such a product...

redgarl, replying to ohim:

Probably to make a statement of some kind.


FormatC, replying to the commenter who reported Kaby Lake working under Windows 7:

You really have drivers for the Z270 chipset with official support for Windows 7 from Intel and Microsoft? I tried it too and was not able to run KL with all features on a W7 installation. It runs, somehow. :)

valeman2012 Just a note: people using these CPUs need Windows 10 to have everything working 100%.